Most articles about llms.txt will tell you it’s the next robots.txt for AI. That’s wrong on both counts. llms.txt isn’t a replacement for anything, and as of 2026, no major AI company has publicly confirmed they use it to crawl, rank, or cite your content. But the file isn’t useless either, and the conversation around it reveals something important about where AI visibility is actually heading. Here’s what llms.txt really is, what it does, and whether it belongs in your stack.

The Short Version

- llms.txt is a proposed Markdown file placed at your site’s root that gives large language models a curated map of your most important content.

- It was proposed by Jeremy Howard (Answer.AI) in September 2024, it’s a community proposal, not an official standard backed by OpenAI, Anthropic, Google, or Perplexity.

- As of 2026, there’s no verified evidence that major AI crawlers read, honor, or prioritize llms.txt content over regular HTML pages.

- It’s different from robots.txt: robots.txt tells crawlers what to avoid. llms.txt tells LLMs what’s worth reading.

- Docs-heavy companies benefit most, Anthropic, Cursor, Vercel, and Cloudflare all publish one. For most marketing sites, the impact is speculative.

- It won’t fix invisibility in ChatGPT or Perplexity. Getting cited by AI requires earned presence in training data, not a file on your server.

What llms.txt Actually Is

llms.txt is a plain-text Markdown file you place at yoursite.com/llms.txt. It contains a short description of your site and a curated list of links to your most important pages, written in a format LLMs can parse easily.

The proposal came from Jeremy Howard of Answer.AI in September 2024. His argument: AI models have small context windows, HTML is noisy, and websites are bloated with navigation, ads, and JavaScript. A single clean Markdown file pointing to your best content would make it easier for LLMs to use your site at inference time.

The file has a simple structure: an H1 with your site name, a blockquote summary, optional background notes, then one or more H2 sections listing links with short descriptions. Many implementations also publish llms-full.txt (the full Markdown content of key pages) and mirror important URLs as .md files so LLMs can fetch clean versions on demand.

That’s the whole concept. No tracking pixels. No schema. No server configuration. Just a file.

How llms.txt Differs From robots.txt and sitemap.xml

The three files get lumped together constantly. They solve completely different problems.

| File | Who It’s For | What It Does | Status |

|---|---|---|---|

| robots.txt | Search engine crawlers | Tells crawlers which pages to avoid | Universally supported since 1994 |

| sitemap.xml | Search engine crawlers | Lists all indexable URLs for discovery | Universally supported |

| llms.txt | Large language models | Provides a curated Markdown summary and links to high-value content | Proposal, no official AI adoption as of 2026 |

robots.txt is exclusionary. sitemap.xml is comprehensive. llms.txt is curatorial. It doesn’t try to list everything, it tries to surface the handful of pages you’d actually want an LLM to read if it could only read a few.

The files complement each other. You can run all three. llms.txt doesn’t override robots.txt (an AI crawler honoring robots.txt will still skip disallowed URLs, even if they appear in llms.txt), and it doesn’t replace your sitemap for search engines.

What a Real llms.txt File Looks Like

Here’s a stripped-down example that follows the proposed spec:

# Acme Analytics

> Acme Analytics is a B2B SaaS platform for product teams who need real-time user behavior data without engineering lift.

Our documentation covers product setup, API reference, and common integration patterns.

## Docs

- [Getting Started](https://acme.com/docs/getting-started.md): Account setup and first event in under 10 minutes.

- [API Reference](https://acme.com/docs/api.md): Full endpoint documentation with code samples.

- [Integrations](https://acme.com/docs/integrations.md): Supported platforms and connection guides.

## Policies

- [Privacy Policy](https://acme.com/privacy.md)

- [Terms of Service](https://acme.com/terms.md)

## Optional

- [Changelog](https://acme.com/changelog.md): Recent product updates.The spec recommends linking to .md versions of each page (clean Markdown mirrors of the HTML). The “Optional” section is a signal to LLMs that they can skip those links if context is tight.

That’s the whole file. Most production llms.txt files are under 100 lines.

Who Actually Publishes an llms.txt File

Adoption skews heavily toward developer tooling and documentation-first companies. Sites with large public docs benefit most because their content is already structured, referenceable, and useful out of context.

Confirmed publishers as of 2026 include:

- Anthropic, docs.anthropic.com/llms.txt

- Cursor, cursor.com/llms.txt

- Cloudflare, developers.cloudflare.com/llms.txt

- Vercel, vercel.com/docs/llms.txt

- Astro, Svelte, Mintlify, framework and docs platforms pushing the standard

Community directories like directory.llmstxt.cloud track adoption. The number of domains publishing the file has grown from a few hundred in early 2025 to several thousand by 2026, but that’s still a rounding error relative to the broader web.

The pattern is clear: if your audience is developers using coding agents, llms.txt is worth publishing. If your audience is marketers, buyers, or consumers, the case is much weaker.

Does llms.txt Actually Do Anything?

The llms.txt mistake we see most often in audits is a team treating the file as a visibility lever instead of a housekeeping item. Publishing it takes an afternoon, removing or correcting it later is trivial, and no credible crawler currently weights it the way robots.txt is weighted. Budget the effort accordingly and keep the real visibility work (editorial coverage, category-prompt testing, monitoring cadence) where it belongs.

This is the question that matters. And the honest answer is: we don’t fully know yet.

Here’s what the evidence shows in 2026:

What’s verified. AI coding agents (Cursor, GitHub Copilot agents, Claude’s agentic workflows) do fetch llms.txt and llms-full.txt files when working inside a project. When you ask Claude or Cursor to “use the Vercel docs,” those agents often pull from the .md mirrors rather than scraping HTML. That’s a real, observable use case.

What’s not verified. There’s no public confirmation from OpenAI, Anthropic, Google, or Perplexity that their consumer-facing products (ChatGPT, Claude.ai, Gemini, Perplexity) weight llms.txt content differently during crawl, training, or retrieval. Server log analyses from multiple SEO publications have shown that major AI bots (GPTBot, ClaudeBot, PerplexityBot, Google-Extended) rarely fetch /llms.txt in the wild.

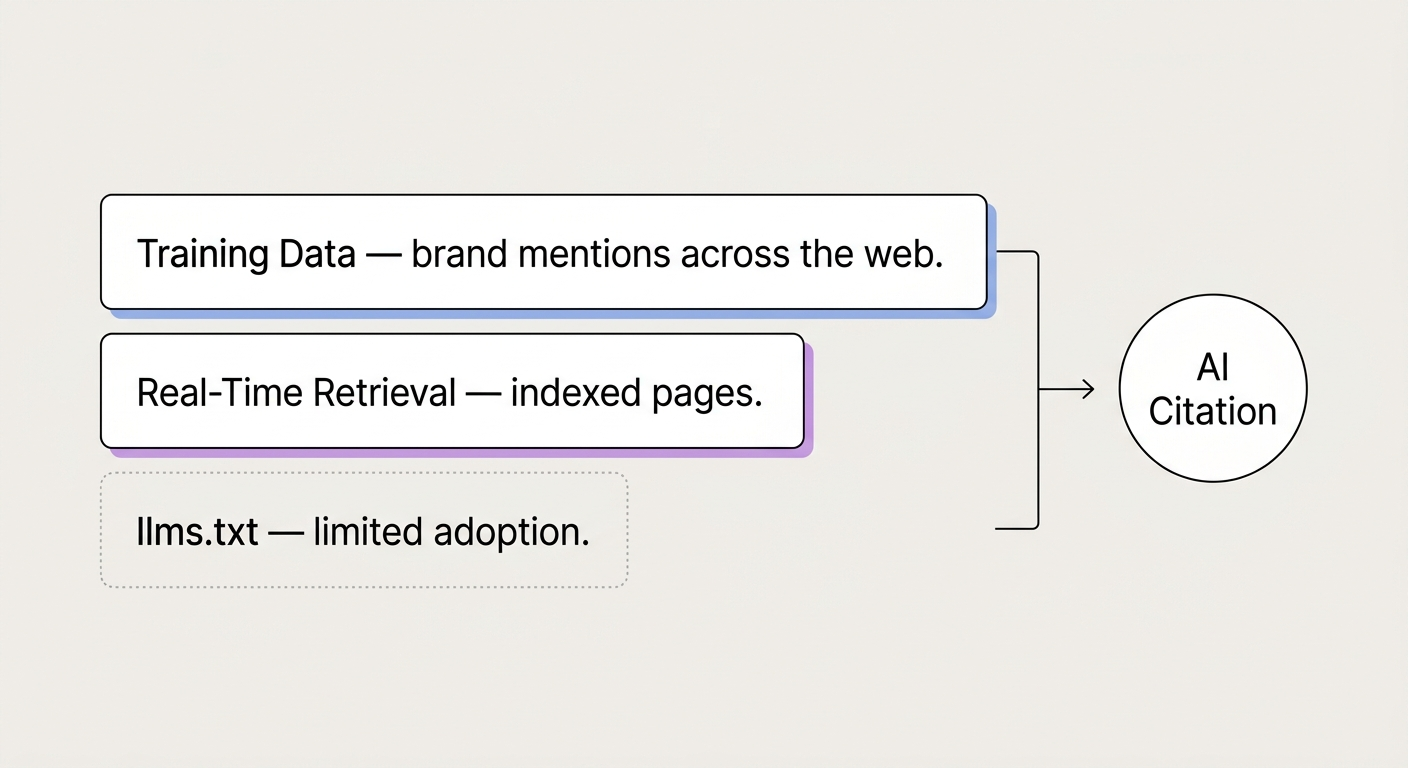

What’s speculative. The claim that llms.txt improves your chances of being cited in ChatGPT or Perplexity answers has no supporting data. Citations in those platforms come from training data, real-time retrieval from indexed web content, and brand signals, not from a file that most of their crawlers aren’t fetching.

Jeremy Howard himself has been measured about this. llms.txt was proposed as infrastructure for AI to use a website at inference time, not as a ranking signal.

So when someone tells you “add llms.txt and watch your AI visibility improve,” they’re selling a story the evidence doesn’t support yet.

Where llms.txt Does Provide Value

For the per-platform walkthroughs that actually move AI visibility regardless of whether llms.txt matures, see how to check brand mentions in ChatGPT and how to track brand mentions in Perplexity, and monitoring brand mentions in LLMs covers the cross-platform cadence the file was, at best, meant to complement.

Even without guaranteed adoption, there are cases where publishing the file makes sense:

Developer documentation. If your product is used by developers who work with coding agents, a well-maintained llms.txt and llms-full.txt makes your docs dramatically easier for those agents to use. We’ve seen developer-tool clients report cleaner, more accurate agent outputs after publishing Markdown mirrors, users get better answers when Claude or Cursor can fetch structured content instead of scraping JavaScript-heavy pages.

Token efficiency for agent workflows. Markdown is dramatically more token-efficient than HTML. Some documentation teams have reported 5–10x token reductions when agents read the .md mirror instead of the rendered page. For users paying per token, that’s a real UX improvement.

Content governance. Building llms.txt forces you to answer a useful question: if an AI could only read 10 pages of my site, which 10? That exercise often surfaces weaknesses, outdated docs, duplicate pages, content nobody maintains, regardless of whether AI models ever read the file.

Future-proofing at low cost. The file takes minutes to create and costs nothing to maintain if your docs are already in Markdown. If adoption accelerates in 2026 or 2027, you’re ready. If it doesn’t, you’ve lost nothing.

What llms.txt won’t do: make your brand appear in ChatGPT answers, boost your rankings in AI Overviews, or substitute for earned citations across high-authority publications. Those outcomes depend on different inputs entirely.

How to Create an llms.txt File

The process is straightforward. Four steps:

- Decide what matters. List your 10–30 most important pages, the ones you’d genuinely want an AI to use if it could only see a handful. Prioritize canonical documentation, core product pages, policies, and reference material. Skip blog posts that age quickly, internal announcements, and low-value pages.

- Write the file in Markdown. Follow the structure from the example above: H1 with your site name, blockquote summary, optional background, H2 sections grouping links by category. Each link needs a short description. Keep it under 100 lines if you can.

- Publish Markdown mirrors (optional but recommended). For each link in your llms.txt, publish a

.mdversion at the same URL path. If your CMS doesn’t support this natively, plugins exist for WordPress, and platforms like Mintlify and GitBook generate them automatically. - Upload to root. Place the file at

yoursite.com/llms.txt. It must be at the root, subfolder locations aren’t part of the spec. Verify it loads publicly and returns a 200 status.

Maintenance matters. Stale llms.txt files pointing to deleted pages or outdated docs actively hurt you, they give AI systems bad information. Treat the file as part of your docs workflow, not a one-time publish-and-forget.

For WordPress sites, plugins like the Hostinger llms.txt plugin and SEOPress handle generation automatically. For documentation platforms (Mintlify, GitBook, Docusaurus), it’s usually a checkbox in settings.

The Bigger Question Most llms.txt Articles Skip

Every guide to llms.txt ends with “publish the file and future-proof your site.” That’s fine advice. It’s also incomplete.

A file sitting on your server doesn’t make AI models recommend your brand. What does: consistent presence across the editorial sources AI models learn from, strong entity definition, and a track record of being cited by publications in your category. Those are the inputs that shape whether ChatGPT mentions you when someone asks for a recommendation in your space.

In our work analyzing AI citation patterns for B2B clients, the brands that show up consistently in AI answers share a common pattern, they’ve built presence across publications their competitors haven’t. A perfectly maintained llms.txt file on a brand with zero editorial presence still produces zero AI citations. The file can clean up how AI uses your content once it finds you. It can’t create visibility that isn’t there.

If AI visibility is your actual goal, llms.txt is a small, optional tactic, not the strategy.

Frequently Asked Questions

Is llms.txt an official standard?

No. It’s a community proposal from Jeremy Howard of Answer.AI, introduced in September 2024. No standards body has ratified it, and no major AI company has publicly committed to supporting it. Adoption is voluntary and still limited.

Where should the llms.txt file live?

At the root of your domain, yoursite.com/llms.txt. The proposal doesn’t support subdirectory placement. It must be publicly accessible and return a 200 status code.

Does llms.txt help SEO?

Not directly. Google Search doesn’t use llms.txt as a ranking factor, and John Mueller has publicly stated that traditional search engines don’t rely on it. Any SEO benefit would be indirect, through cleaner content discovery by AI assistants that feed back into search behavior.

What’s the difference between llms.txt and llms-full.txt?

llms.txt is a curated index of links to your most important content. llms-full.txt contains the actual Markdown content of those pages in one file, useful when an AI wants to ingest everything without making multiple requests. Some teams publish both. Others only publish the index.

Do ChatGPT and Perplexity read llms.txt?

There’s no public confirmation. Server log data from multiple SEO analyses shows that GPTBot, ClaudeBot, PerplexityBot, and Google-Extended rarely fetch /llms.txt in the wild. AI coding agents (Cursor, Claude’s agent workflows) do fetch it, but that’s a different use case than citation in consumer AI answers.

Should small sites create an llms.txt file?

If your site has fewer than 20 pages and your content is already clean, the file adds little value. Small sites tend to be easier for AI to parse already. llms.txt matters most for sites with extensive documentation or large content libraries where curation actually helps.

How often should I update llms.txt?

Whenever your linked pages change, get deleted, or lose relevance. For most teams, a quarterly review works. For fast-moving documentation, monthly. Outdated llms.txt files are worse than none, they actively misinform AI systems.

A Pragmatic llms.txt Decision for the Next 30 Days

llms.txt is a low-cost, low-risk file worth publishing if you run developer docs or a content-heavy site. It’s not a ranking factor, not a citation guarantee, and not a substitute for building real presence in the sources AI models actually learn from. Publish it as housekeeping, not as a visibility strategy.

If you want to understand whether AI actually recommends your brand today, skip the file and test the output. Ask ChatGPT, Perplexity, and Gemini to recommend a solution in your category. Note which competitors appear. That’s your starting point, and it tells you more about your AI visibility than any file ever will. For a deeper read on the signals that shape AI recommendations, see our guide on how brand mentions in AI actually work.