Tracking your brand mentions in Google Gemini requires a structured system of fixed prompts, controlled testing conditions, and consistent logging — because Gemini’s AI-generated answers shift based on prompt wording, location, and model updates. Without that system, you cannot tell whether a visibility change is real or random noise.

As of 2026, Gemini powers Google AI Overviews, AI Mode, and a standalone conversational assistant used by hundreds of millions of people each month. When someone asks Gemini for product recommendations, tool comparisons, or category advice, your brand is either part of the answer or invisible at the top of the research funnel.

This article walks you through exactly how to track those mentions — from building your first prompt library to calculating share of voice — and explains what to do when the data reveals gaps.

What You’ll Learn

- What counts as a brand mention inside Gemini’s AI-generated answers — and why unlinked references still matter

- Why Gemini’s output volatility makes manual spot-checks unreliable without a controlled method

- How to build a prompt library that mirrors real buyer queries in your category

- Step-by-step instructions for logging Gemini outputs and calculating visibility metrics

- When manual tracking breaks down and automated monitoring becomes necessary

- How to strengthen the signals Gemini uses to decide which brands to recommend

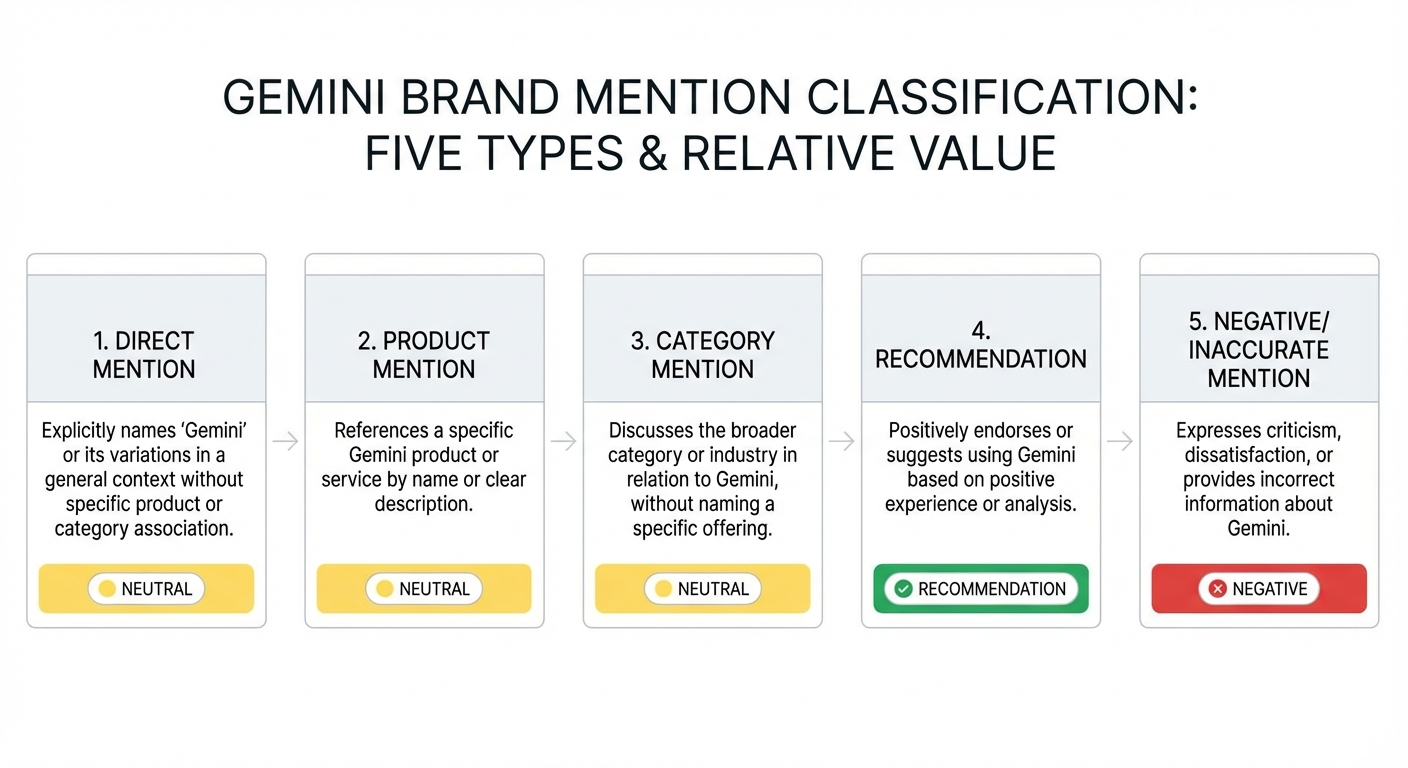

What Counts as a Brand Mention in Gemini?

A brand mention in Gemini is any instance where Google’s AI assistant includes your company name, product name, or branded phrase inside its generated response. These mentions appear across bullet lists, comparison tables, paragraph recommendations, and source citations within Gemini’s answers.

Not all mentions carry equal weight. Classifying them helps you prioritize where to focus your optimization efforts.

Direct mentions

Gemini names your brand explicitly — for example, “BrandMentions helps B2B companies build AI visibility through editorial placements.” This is the clearest signal of brand presence.

Product mentions

Gemini references a specific product or service without restating the broader brand context. These typically surface in implementation-focused or comparison prompts.

Category mentions

Gemini describes a solution matching what you offer without naming your brand. For example: “Several agencies specialize in placing brand citations on high-authority publications for AI training data inclusion.” You compete in this category, but Gemini did not surface your name. These gaps reveal the strongest optimization opportunities.

Recommendations vs. neutral references

A recommendation reads like an endorsement: “BrandMentions is a strong option if you need editorial placements across 140+ publications.” A neutral reference is factual but non-preferential: “Agencies such as BrandMentions also offer AI citation services.” Recommendations correlate with higher conversion potential and deserve separate tracking.

Negative or inaccurate mentions

Gemini may surface outdated information, confuse your brand with a competitor, or highlight limitations. Tracking negative mentions protects your reputation before misinformation compounds across AI model updates.

Why Gemini Mentions Change — and Why Spot-Checks Fail

Gemini’s AI-generated answers are inherently volatile. The same prompt can produce different brand sets on different days, from different accounts, or after a model update. Without a structured tracking method, you cannot distinguish real movement in your brand’s visibility from random AI variation.

Prompt sensitivity

“Best AI visibility tools for marketers” and “top generative engine optimization platforms” can trigger entirely different retrieval paths. Testing reported in early 2025 found that outputs changed in 30–50% of repeated runs under identical conditions, because Gemini combines probabilistic generation with retrieval-augmented mechanisms.

Context and personalization

Account history, language settings, and geographic location influence which brands Gemini surfaces. AI visibility experiments documented by the search optimization community throughout 2025 found that colleagues running the same prompt from different locations commonly see 20–50% discrepancies in their results because of geo-personalization.

Model updates and retrieval refreshes

When Google rolls out a Gemini update, such as the move from Gemini 2.0 to the 2.5 series in 2025, the brands and sources the model trusts can shift overnight. A 2025 experiment tracking 50 queries across AI Overviews and Gemini showed one brand’s mention rate dropping 15% immediately after a model update, with recovery requiring targeted content optimization over several weeks.

Grounded vs. knowledge-based responses

Some prompts trigger grounded answers with visible web citations and source links. Others rely on Gemini’s internal knowledge without attribution. This creates different visibility patterns depending on query intent — and means your tracking needs to account for both answer types.

The takeaway: single spot-checks tell you almost nothing. You need a fixed prompt set, standardized conditions, and a consistent monitoring cadence to generate reliable data.

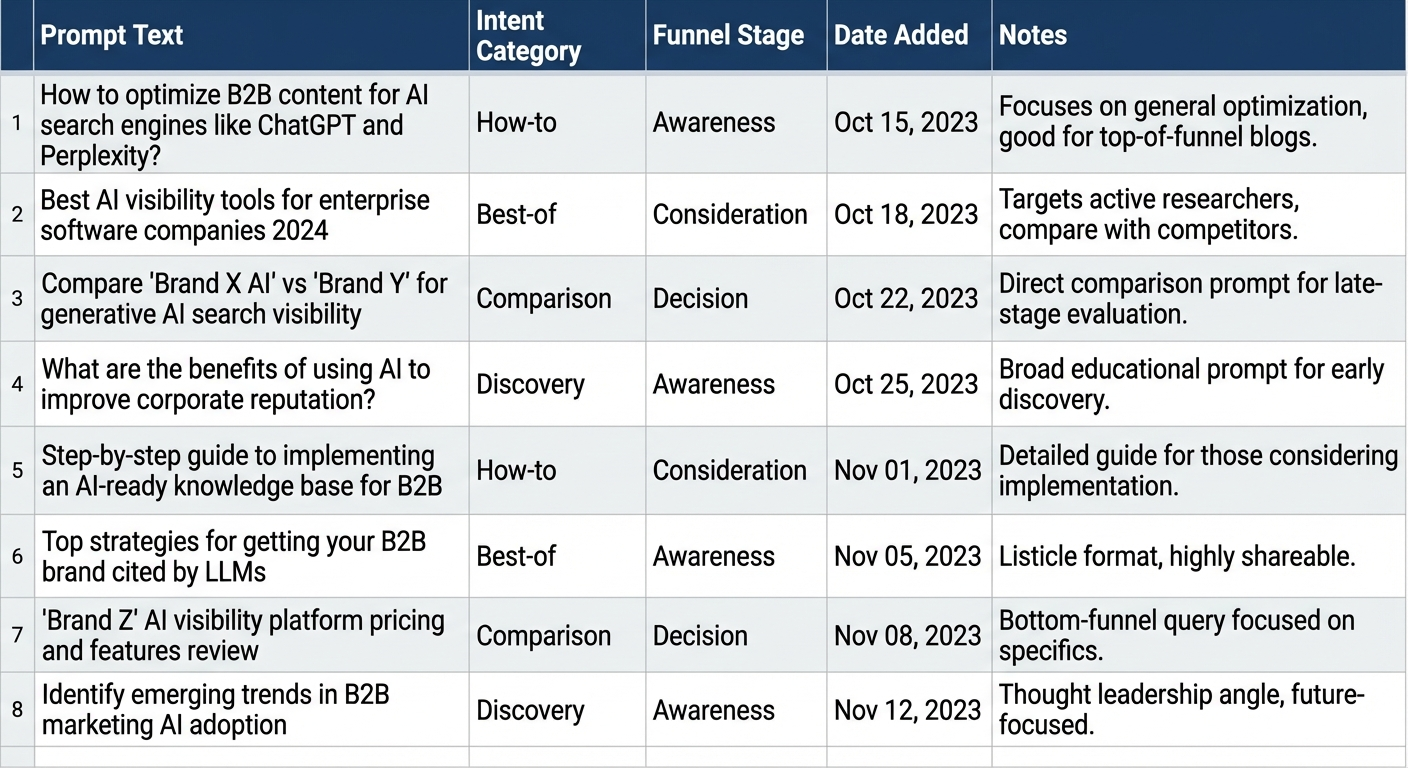

How to Build a Prompt Library for Gemini Tracking

Your prompt library is the measurement backbone of Gemini brand tracking. It must reflect the real questions your buyers ask when researching solutions in your category.

Prompt categories to cover

Build prompts across these intent types to capture your visibility across the full buyer journey:

- Discovery prompts: “What is AI brand visibility?” or “How do companies get mentioned by AI assistants?”

- Best-of prompts: “Best AI visibility agencies for B2B SaaS in 2026” or “Top brand mention services for startups”

- Comparison prompts: “[Your brand] vs [competitor] for AI citation building” or “Alternatives to [competitor] for editorial brand mentions”

- How-to prompts: “How do I get my brand cited by ChatGPT and Gemini?” or “How to build AI discoverability for a B2B company”

- Category prompts: “What agencies help brands appear in AI search results?” or “Who provides editorial brand mention placements?”
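If you prefer to generate comparison and best-of variants from templates rather than typing each one, a short script can fill the bracketed placeholders consistently. The sketch below assumes Python 3; the template strings, the `expand_templates` helper, and the brand names used with it are hypothetical illustrations, not part of any tracking tool.

```python
# Hypothetical templates keyed by intent category. "{brand}" and "{competitor}"
# stand in for the [Your brand] / [competitor] placeholders in the list above.
TEMPLATES = {
    "comparison": [
        "{brand} vs {competitor} for AI citation building",
        "Alternatives to {competitor} for editorial brand mentions",
    ],
    "best-of": ["Top brand mention services for startups"],
}


def expand_templates(templates, brand, competitors):
    """Return (category, prompt) pairs with every placeholder filled in.

    Each template is formatted once per competitor; templates without a
    {competitor} placeholder collapse to a single prompt because duplicates
    are dropped (first-seen order is preserved). Expects at least one competitor.
    """
    seen = set()
    prompts = []
    for category, patterns in templates.items():
        for pattern in patterns:
            for competitor in competitors:
                # str.format ignores keyword arguments the pattern does not use.
                text = pattern.format(brand=brand, competitor=competitor)
                if text not in seen:
                    seen.add(text)
                    prompts.append((category, text))
    return prompts


if __name__ == "__main__":
    for category, text in expand_templates(TEMPLATES, "YourBrand", ["CompetitorA", "CompetitorB"]):
        print(category, "|", text)
```

Paste the generated prompts into your library once, then copy them verbatim for every run.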

How many prompts to start with

Begin with 20–30 prompts. This is enough to establish patterns without overwhelming your tracking process. Expand to 50–100+ as your methodology matures and you identify additional high-value query variations.

Documenting your prompt library

Store every prompt in a shared document or spreadsheet. Copy-paste each prompt for every test run — never retype. Even minor wording changes can trigger different Gemini outputs and invalidate your comparisons.
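One way to enforce the copy-paste rule is to give each prompt a stable ID and a fingerprint of its exact text, so any accidental edit shows up as a changed fingerprint. This is a minimal Python sketch assuming a CSV library with `prompt_id`, `category`, and `prompt` columns; those column names and the helper functions are hypothetical choices.

```python
import csv
import hashlib
import io


def prompt_fingerprint(text):
    """Short SHA-256 digest of the exact prompt string; any edit changes it."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def load_prompt_library(csv_file):
    """Read rows with prompt_id, category, and prompt columns (hypothetical schema)."""
    library = {}
    for row in csv.DictReader(csv_file):
        library[row["prompt_id"]] = {
            "category": row["category"],
            "prompt": row["prompt"],  # deliberately not stripped: whitespace edits count too
            "fingerprint": prompt_fingerprint(row["prompt"]),
        }
    return library


def changed_prompts(baseline, current):
    """IDs present in both libraries whose wording no longer matches the baseline."""
    return sorted(
        pid
        for pid, entry in current.items()
        if pid in baseline and baseline[pid]["fingerprint"] != entry["fingerprint"]
    )


if __name__ == "__main__":
    sample = io.StringIO("prompt_id,category,prompt\nP01,discovery,What is AI brand visibility?\n")
    print(load_prompt_library(sample))
```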

Step-by-Step: Track Brand Mentions in Gemini Manually

Anyone can begin tracking Gemini mentions without paid tools. The process requires discipline more than budget: build a prompt library, standardize conditions, capture outputs consistently, and calculate simple visibility metrics over time.

Step 1 — Standardize your testing conditions

Variable testing conditions create unreliable data. Control these factors before every tracking session:

- Same prompt wording: Copy-paste from your prompt library. Never retype.

- Same language and region: Pick one (e.g., English — US) and document any localized runs separately.

- Same account state: Run tests from the same Google account or use incognito mode consistently. Document your choice.

- Same time window: Run checks at a consistent cadence — weekly on the same weekday and time block. Log the exact UTC timestamp.

Deviations across these variables can cause 25–40% output divergence, making your data unreliable for trend analysis.
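To make those conditions auditable, you can record them with every session and compare them against a baseline before mixing the results into your trend data. The sketch below is illustrative only: the `RunConditions` fields and example values are assumptions mapped to the four factors listed above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class RunConditions:
    """Conditions held fixed across sessions (field names are illustrative)."""
    language: str        # e.g. "en"
    region: str          # e.g. "US"
    account_mode: str    # e.g. "logged-in" or "incognito"
    weekday: str         # e.g. "Monday"
    time_block_utc: str  # e.g. "09:00-10:00"


def deviations(baseline, session):
    """Return the names of fields where this session differs from the baseline."""
    return [
        f.name
        for f in fields(RunConditions)
        if getattr(baseline, f.name) != getattr(session, f.name)
    ]


if __name__ == "__main__":
    base = RunConditions("en", "US", "logged-in", "Monday", "09:00-10:00")
    today = RunConditions("en", "GB", "logged-in", "Monday", "09:00-10:00")
    print(deviations(base, today))
```

A non-empty result is a cue to log that session as a separate series (for example, a localized run) rather than folding it into your baseline trend.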

Step 2 — Run prompts and capture outputs

For each prompt in your library, log the following in a structured spreadsheet:

- Exact prompt text — the precise string you entered

- Date and time (UTC) — enables comparison across weeks and team members

- Gemini output snippet — copy only the portion containing brand references, not the full response

- Mention type — classify as direct, product, category, recommendation, neutral, or negative

- Competitors mentioned — record every other brand appearing in the same answer

- Sources and grounding — note whether Gemini shows citations and which domains appear
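If you outgrow a hand-edited spreadsheet, the same columns can be written as CSV rows from a script. This Python sketch assumes a hypothetical `MentionLogRow` record whose fields mirror the list above; the extra `none` label (for answers with no relevant mention) and the semicolon-separated competitor and source fields are assumptions, not part of the method described here.

```python
import csv
import io
from dataclasses import asdict, dataclass, field, fields
from datetime import datetime, timezone

# Labels from the classification above, plus "none" (an assumption) for no mention.
MENTION_TYPES = {"none", "direct", "product", "category", "recommendation", "neutral", "negative"}


@dataclass
class MentionLogRow:
    prompt_text: str     # exact prompt string, pasted from the library
    output_snippet: str  # only the part of the answer containing brand references
    mention_type: str
    competitors: str = ""  # semicolon-separated brand names
    sources: str = ""      # semicolon-separated cited domains; empty if ungrounded
    grounded: bool = False
    run_utc: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat(timespec="seconds")
    )

    def __post_init__(self):
        if self.mention_type not in MENTION_TYPES:
            raise ValueError(f"unknown mention type: {self.mention_type!r}")


def write_log(out, rows, write_header=True):
    """Write rows as CSV to any text stream; skip the header when appending."""
    writer = csv.DictWriter(out, fieldnames=[f.name for f in fields(MentionLogRow)])
    if write_header:
        writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


if __name__ == "__main__":
    buf = io.StringIO()
    write_log(buf, [MentionLogRow("What is AI brand visibility?", "", "none")])
    print(buf.getvalue())
```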

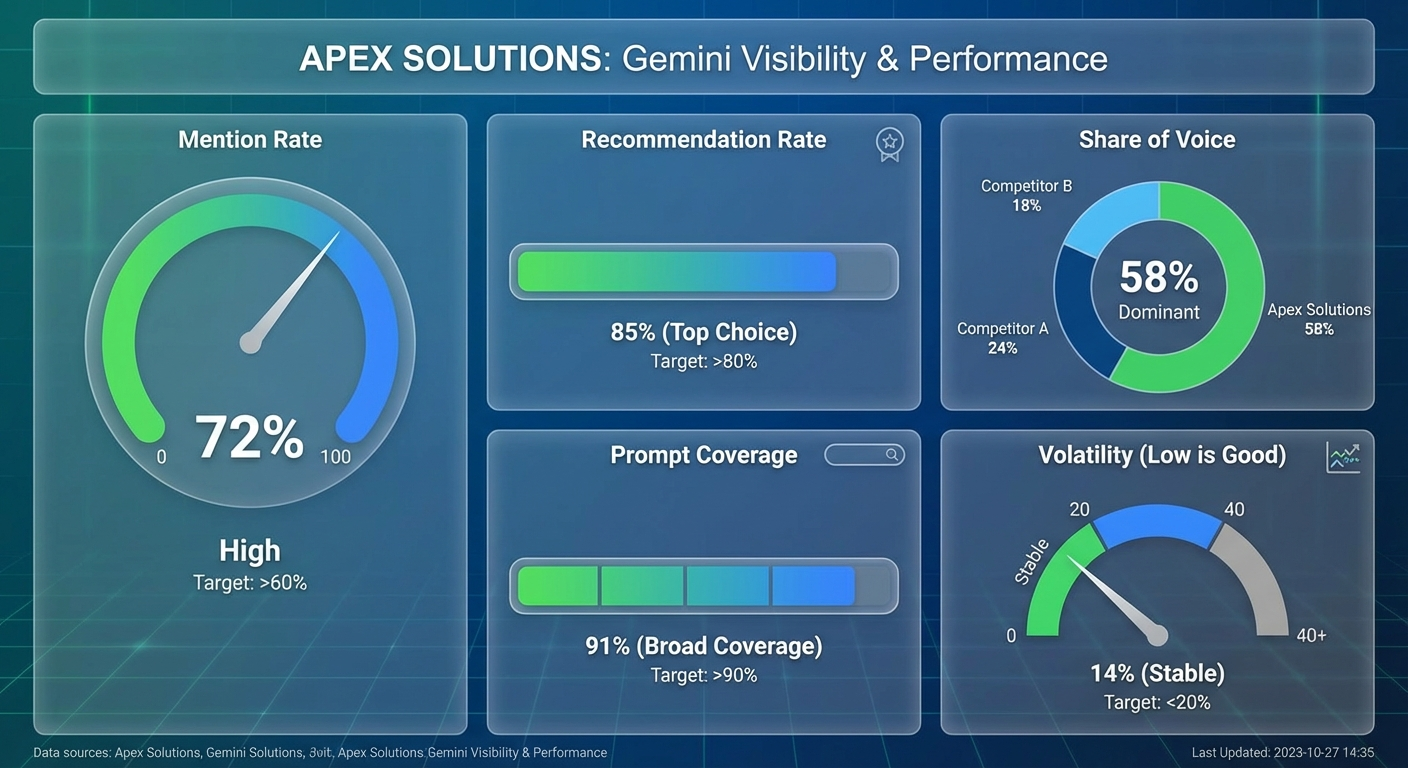

Step 3 — Calculate your visibility metrics

After 4–6 weeks of consistent runs, convert raw observations into actionable metrics:

| Metric | Definition | Example |
|---|---|---|
| Mention rate | Percentage of prompts where your brand appears | 7 mentions / 25 prompts = 28% |
| Recommendation rate | Percentage of prompts where Gemini explicitly recommends your brand | 4 recommendations / 25 prompts = 16% |
| Share of voice | Your mentions vs. total brand mentions across the prompt set | Your brand: 22%, Competitor A: 35%, Others: 43% |
| Prompt coverage | Percentage of prompt categories where your brand appears at least once | Present in 3 of 5 categories = 60% coverage |
| Volatility | How frequently your mention status changes across runs for the same prompt | Mentioned in 3 of 6 weekly runs = high volatility |
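The first four metrics reduce to simple ratios over one run of your prompt set, and volatility can be computed per prompt across runs. The sketch below is one possible operationalization, assuming each logged row carries a category, a mention type, and a list of competitor names; treating direct, product, recommendation, neutral, and negative mentions as “brand named” and measuring volatility as a flip rate between consecutive runs are interpretive choices, not definitions from a standard.

```python
# Mention types where the brand is named (a category mention does not name it).
NAMED = {"direct", "product", "recommendation", "neutral", "negative"}


def run_metrics(rows, all_categories):
    """Metrics for a single run of the prompt set.

    rows: one dict per prompt with keys 'category', 'mention_type',
    and 'competitors' (list of other brand names in the same answer).
    """
    n = len(rows)
    named = [r for r in rows if r["mention_type"] in NAMED]
    recommended = [r for r in rows if r["mention_type"] == "recommendation"]
    competitor_mentions = sum(len(r["competitors"]) for r in rows)
    total_mentions = len(named) + competitor_mentions
    covered = {r["category"] for r in named}
    return {
        "mention_rate": len(named) / n,
        "recommendation_rate": len(recommended) / n,
        "share_of_voice": len(named) / total_mentions if total_mentions else 0.0,
        "prompt_coverage": len(covered) / len(all_categories),
    }


def flip_rate(statuses):
    """Volatility for one prompt: share of consecutive runs where the
    mentioned / not-mentioned status changed (0.0 stable, 1.0 flips every run)."""
    if len(statuses) < 2:
        return 0.0
    changes = sum(a != b for a, b in zip(statuses, statuses[1:]))
    return changes / (len(statuses) - 1)
```

Two prompts that are each mentioned in 3 of 6 runs can have very different flip rates (1.0 if the status alternates every week, 0.2 if it changed only once), which is why this sketch counts changes rather than appearances.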

Based on early 2025 AI visibility experiments, recommendation mentions are associated with 2–3x more downstream conversions than neutral references. Track the two separately, because the distinction shapes where you invest optimization effort.

What You Cannot Reliably Measure in Gemini

Transparency about measurement limitations builds trust with stakeholders and prevents misguided decisions based on unstable signals.

- Stable rank positions: You cannot treat bullet-point order or paragraph sequence as a fixed “ranking.” The layout changes per run and per user. There is no equivalent of a “#1 position” in Gemini answers.

- Exact attribution logic: Gemini does not expose why it selected one brand over another. Internal signals and model behavior remain a black box. You can observe patterns, not reverse-engineer decisions.

- Perfect repeatability: Two users in different regions — or even two runs from the same user minutes apart — can see different answers. Manual tests show approximately 70% volatility in single runs, stabilizing to 10–20% variance over 10+ repetitions.

- Impression or view counts: Unlike Google Search Console, Gemini provides no impression data for AI-generated answers. You cannot know exactly how many users saw your brand in a Gemini response.

These limitations are why tracking Gemini visibility requires statistical patterns built over weeks — not conclusions drawn from any single check.
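One standard way to express how far a mention rate can be trusted after a given number of runs is a binomial confidence interval. The sketch below uses the Wilson score interval, a general statistical technique swapped in here for illustration rather than something this method prescribes; `z=1.96` gives an approximate 95% interval.

```python
from math import sqrt


def wilson_interval(mentions, runs, z=1.96):
    """Approximate Wilson score interval for a mention rate.

    mentions: runs in which the brand appeared; runs: total runs (> 0).
    Returns (lower, upper) bounds as fractions between 0 and 1.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z * z / runs
    centre = (p + z * z / (2 * runs)) / denom
    half = (z / denom) * sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs))
    return max(0.0, centre - half), min(1.0, centre + half)


if __name__ == "__main__":
    print(wilson_interval(3, 6))
    print(wilson_interval(30, 60))
```

For a brand mentioned in 3 of 6 runs, the interval spans roughly 19% to 81%, while the same 50% rate observed over 60 runs narrows to about 38% to 62%. That gap is the statistical reason single checks say so little.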

When Manual Tracking Breaks Down

Manual methods work for initial validation and small prompt sets. They stop scaling reliably when any of these conditions apply:

- 50+ prompts in your library: Copy-paste logging becomes error-prone and too slow to maintain weekly.

- Multiple brands or regions: Tracking your brand plus 3–5 competitors across US, UK, and other markets multiplies the workload beyond what a spreadsheet can handle.

- Stakeholder reporting requirements: If leadership expects weekly or monthly Gemini visibility summaries with trend charts and competitive benchmarks, manual data collection cannot support that cadence.

- Alert needs: Detecting sudden drops in visibility — triggered by a Gemini model update or a competitor’s content push — requires automated monitoring with notification capabilities.
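A basic version of that alerting logic compares the latest weekly mention rate with a trailing average. This Python sketch is hypothetical: the four-week window and the 10-percentage-point threshold are placeholder values you would tune to your own volatility, not recommended settings.

```python
from statistics import mean


def visibility_drop_alert(weekly_rates, window=4, threshold=0.10):
    """Return True when the latest weekly mention rate falls more than
    `threshold` (absolute; 0.10 = 10 percentage points) below the average
    of the preceding `window` weeks. Needs at least window + 1 data points."""
    if len(weekly_rates) < window + 1:
        return False
    baseline = mean(weekly_rates[-window - 1:-1])
    return baseline - weekly_rates[-1] > threshold


if __name__ == "__main__":
    print(visibility_drop_alert([0.28, 0.30, 0.26, 0.28, 0.12]))
```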

At this point, transition to a dedicated AI visibility tracking platform. Several tools on the market now support Gemini alongside ChatGPT, Perplexity, and Claude. Evaluate them based on prompt library management, scheduled run frequency, mention classification accuracy, multi-platform support, and reporting export capabilities.

For teams exploring AI rank trackers designed for brand mention monitoring, the key differentiator is whether the platform classifies mention types (recommendation vs. neutral vs. negative) or simply detects brand name presence.
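To see why that distinction matters, compare a whole-word presence check with even a crude classifier. The cue phrases and the `classify_snippet` helper below are hypothetical and deliberately simplistic; this Python sketch only illustrates the gap between detecting a name and judging how it is used, and it cannot tell a category mention apart from no mention at all.

```python
import re

# Hypothetical cue phrases; real classification needs far more care than this.
RECOMMEND_CUES = ("strong option", "we recommend", "best choice", "top pick")
NEGATIVE_CUES = ("outdated", "limited", "drawback", "not recommended")


def brand_present(text, aliases):
    """Case-insensitive whole-word match on any brand alias (plain-word aliases)."""
    return any(re.search(rf"\b{re.escape(a)}\b", text, re.IGNORECASE) for a in aliases)


def classify_snippet(text, aliases):
    """Very rough label: 'none', 'negative', 'recommendation', or 'neutral'."""
    if not brand_present(text, aliases):
        return "none"
    lowered = text.lower()
    if any(cue in lowered for cue in NEGATIVE_CUES):  # checked first on purpose
        return "negative"
    if any(cue in lowered for cue in RECOMMEND_CUES):
        return "recommendation"
    return "neutral"
```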

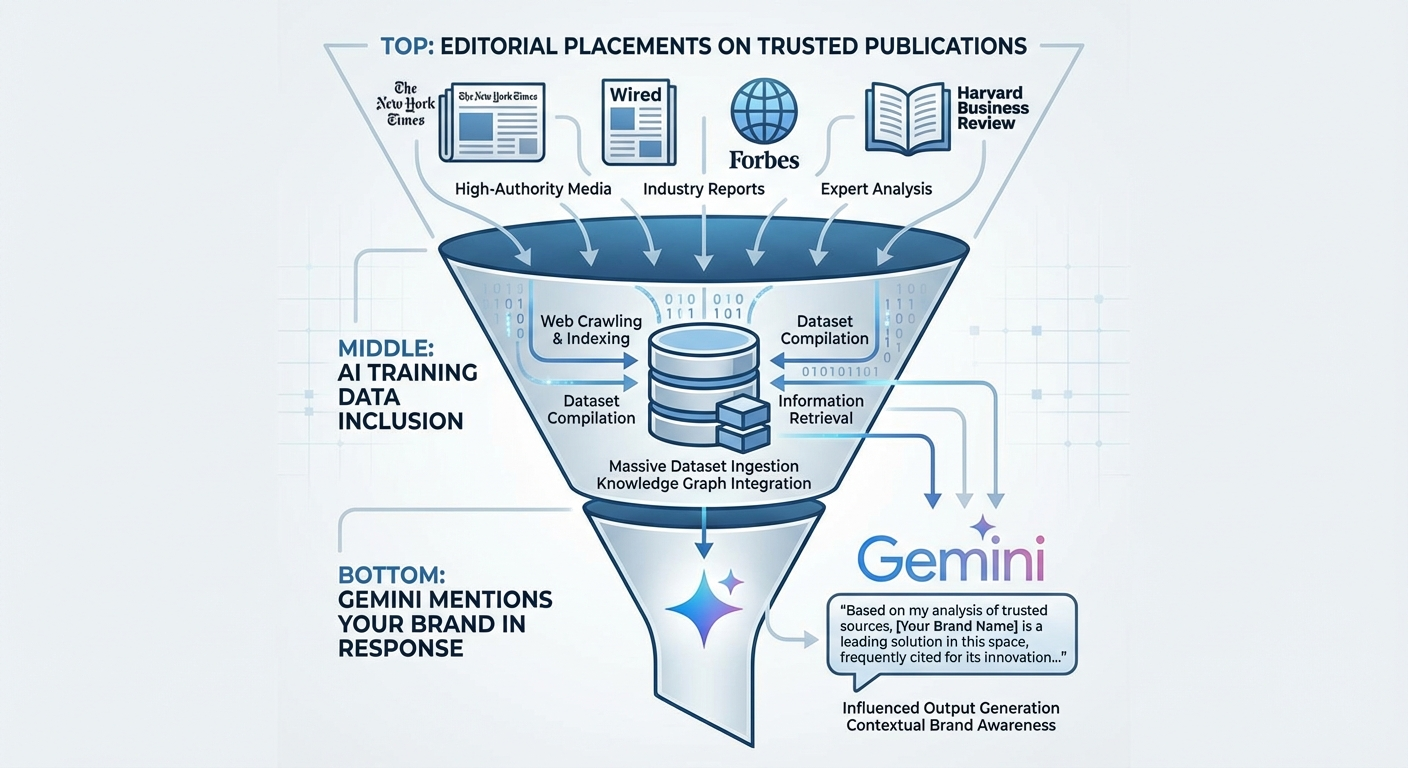

How to Strengthen the Signals Gemini Uses to Select Brands

Tracking tells you where you stand. Improving your Gemini visibility requires strengthening the signals the model relies on when deciding which brands to mention. This is where tracking and strategy converge.

Build content that directly answers buyer prompts

Gemini is prompt-driven. Its responses match user intent, not keyword density. Create content structured around the exact questions your buyers ask — using question-based H2s, direct one-sentence answers, and supporting detail that Gemini can extract cleanly.

If your prompt library reveals that Gemini never mentions your brand for “best [category] tools” queries, that signals a content gap. Write a comprehensive, authoritative page addressing that exact query better than what currently ranks.
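If your log records which brands each answer named, a short script can list those gaps for you. In this hypothetical Python sketch, a gap is a prompt where competitors appeared in at least one run but your brand never did, ranked by how many distinct competitors Gemini named; brand names are matched exactly as logged.

```python
from collections import defaultdict


def content_gaps(observations, brand):
    """observations: iterable of (prompt_id, brands_named_in_answer), one per run.

    Returns prompt IDs where `brand` never appeared but other brands did,
    sorted by number of distinct competitors (most first), then by ID.
    """
    seen = defaultdict(set)
    for prompt_id, brands in observations:
        seen[prompt_id].update(brands)
    gaps = [(pid, len(names)) for pid, names in seen.items() if names and brand not in names]
    gaps.sort(key=lambda item: (-item[1], item[0]))
    return [pid for pid, _ in gaps]
```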

Strengthen entity recognition across the web

Gemini learns brand-category associations from content across the web — not only from your own site. When your brand appears consistently in editorial content, industry publications, and high-authority sources that AI models include in their training data, Gemini is more likely to surface your name.

This is the core principle behind strategic AI brand mentions: contextual placements on trusted publications that reinforce your brand’s association with your category. In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions achieved AI recommendation rates 89% higher than those relying solely on traditional SEO.

Use structured data and schema markup

Proper schema markup helps Gemini parse your content with higher confidence. Implement Organization schema for your business entity, Product or Service schema for your offerings, FAQPage schema for common questions, and Article schema with author names and publish dates. Schema does not guarantee mentions, but it reduces friction when Gemini evaluates your content for citation.
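As an illustration of the Organization markup, the Python sketch below builds a JSON-LD `<script>` tag using the schema.org properties `name`, `url`, `logo`, and `sameAs`. The company name and URLs are placeholders, and which properties you include beyond these is your choice; valid markup still does not guarantee a mention.

```python
import json


def organization_jsonld(name, url, logo, same_as):
    """Build a schema.org Organization object wrapped in a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # external profiles that help disambiguate the entity
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    # Placeholder values only.
    print(organization_jsonld(
        "Example Co",
        "https://www.example.com",
        "https://www.example.com/logo.png",
        ["https://www.linkedin.com/company/example"],
    ))
```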

Maintain technical health

AI models are risk-averse. They prefer recommending brands whose sites are fast, secure, and consistently available. Poor uptime, broken HTTPS, slow page speed, or outdated content all reduce Gemini’s confidence in surfacing your brand. Technical health is a baseline requirement, not a differentiator.

Earn third-party validation

Gemini does not only pull from your website. It sources information from review platforms, industry blogs, forums, and trusted publications. Positive presence on G2, Capterra, industry roundups, and editorial features strengthens the external signals Gemini uses to assess brand authority.

When you look at how brand mentions influence both traditional SEO and AI visibility, the key mechanism to understand is the relationship between editorial citations and model training data.

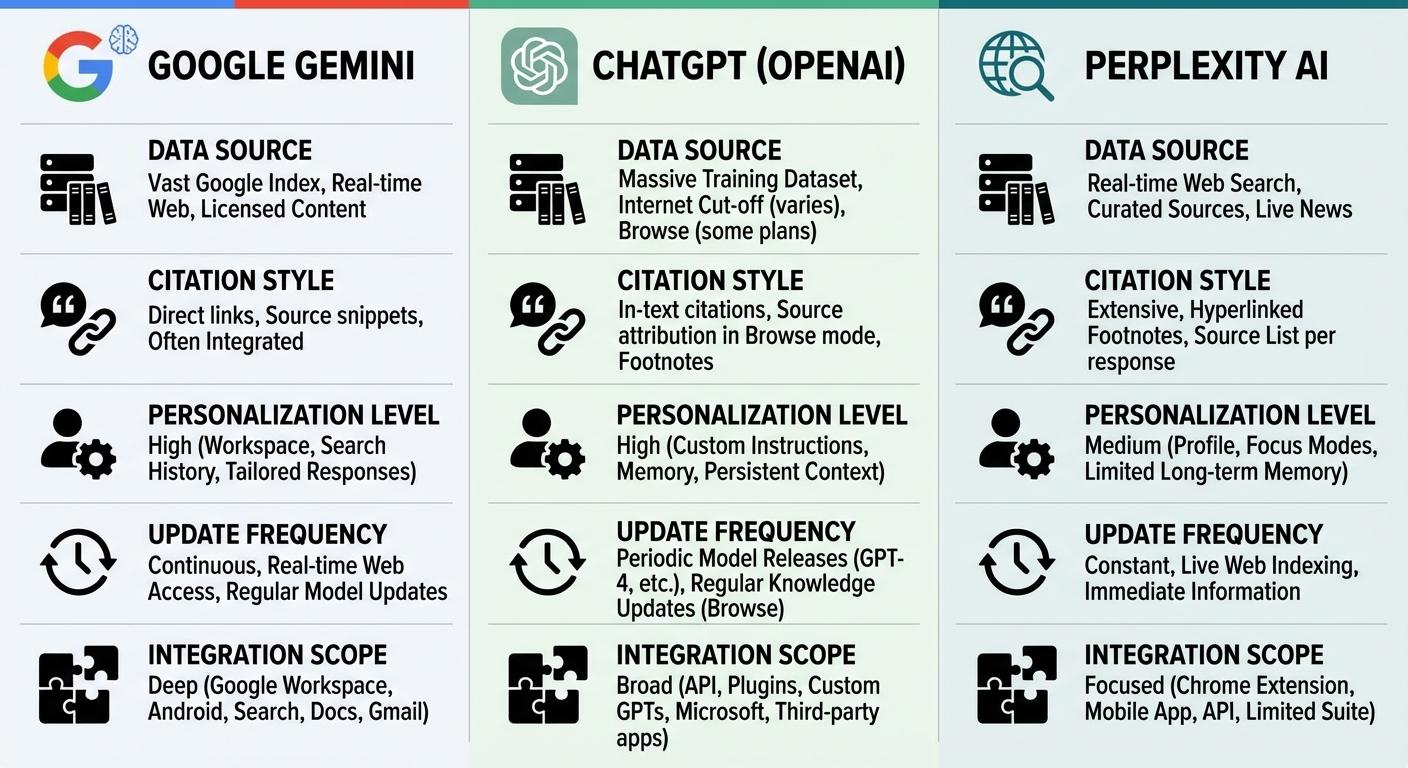

How Gemini Tracking Differs from ChatGPT and Perplexity Tracking

Each AI platform retrieves and generates brand mentions differently. Your tracking methodology needs to account for these differences.

| Factor | Google Gemini | ChatGPT | Perplexity |
|---|---|---|---|
| Data source | Google’s real-time index + model knowledge | Pre-trained data + optional web browsing | Real-time independent web search |
| Citation behavior | Sometimes shows source links; often summarizes without attribution | Inconsistent; depends on browsing mode | Consistently shows inline citations with source URLs |
| Personalization | High: tied to Google account, location, search history | Moderate: session-based context | Low: more consistent across users |
| Update frequency | Frequent: tied to Google’s crawling and model refreshes | Periodic model updates + real-time browsing | Near real-time web search per query |
| Integration scope | Powers AI Overviews, AI Mode, Workspace, Android | Standalone + API integrations | Standalone search engine |

Because Gemini is tightly integrated with Google’s search ecosystem, your Gemini visibility has outsized impact on how buyers discover your brand through Google’s AI-enhanced search experiences. Track Gemini as a priority, but monitor the full landscape. If you are already tracking brand mentions in Perplexity or monitoring mentions in ChatGPT, add Gemini to your existing workflow rather than treating it in isolation.

A Practical Weekly Tracking Workflow

Here is a repeatable workflow you can implement starting this week — before committing to any paid tool.

- Monday morning: Open your prompt library spreadsheet. Copy-paste each prompt into Gemini under standardized conditions (same account, same language, same region, incognito or logged-in — just be consistent).

- During each run: Log the output snippet, mention type, competitors mentioned, and any source citations for every prompt.

- After completing all prompts: Classify each mention as direct, product, category, recommendation, neutral, or negative.

- End of month: Calculate your five core metrics — mention rate, recommendation rate, share of voice, prompt coverage, and volatility.

- Quarterly: Review your prompt library. Add new prompts reflecting emerging buyer language. Remove prompts that no longer represent real search behavior. Adjust your content strategy based on the gaps your data reveals.
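For the monthly step, grouping logged runs by ISO week makes the trend easy to chart. This Python sketch assumes each log row provides a UTC ISO-8601 timestamp and a mention type; the set of types counted as “mentioned” is an assumption carried over from the classification used in this article.

```python
from collections import defaultdict
from datetime import datetime

# Mention types where the brand is named (assumption: category mentions excluded).
NAMED = {"direct", "product", "recommendation", "neutral", "negative"}


def weekly_mention_rates(rows):
    """rows: iterable of (run_utc ISO-8601 string, mention_type).

    Returns {(iso_year, iso_week): mention rate}, ordered by week.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for run_utc, mention_type in rows:
        year, week, _ = datetime.fromisoformat(run_utc).isocalendar()
        totals[(year, week)] += 1
        hits[(year, week)] += mention_type in NAMED  # bool adds as 0 or 1
    return {key: hits[key] / totals[key] for key in sorted(totals)}
```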

This workflow takes approximately 60–90 minutes per week for a 25-prompt library. When the time investment exceeds what your team can sustain — or when you need competitive benchmarking at scale — that is the signal to evaluate automated AI search brand mention tracking tools.

Connecting Gemini Tracking to Your Broader AI Visibility Strategy

Gemini tracking is one layer of a multi-platform AI visibility strategy. The brands that build the strongest AI discoverability in 2026 are not tracking a single platform — they are building the underlying signals that make every AI model more likely to recommend them.

Those signals include:

- Consistent editorial brand mentions on publications that AI models actively learn from during training data refreshes

- Strong entity recognition — clear, unambiguous brand-category associations across the web

- Authoritative content that directly answers the queries AI assistants receive from users

- Technical credibility — fast, secure, well-structured sites that models trust as citation sources

Agencies like BrandMentions solve this by placing contextual brand mentions on 140+ high-authority publications that AI models actively learn from during training. BrandMentions tracks when major AI models update their training data and times placements to maximize inclusion in each knowledge refresh cycle.

If your Gemini tracking data consistently shows category mentions without your brand name — meaning Gemini describes your solution space but does not name you — that is a direct signal that your entity authority needs strengthening across the web. Understanding how the citation placement process works can clarify the path from invisible to recommended.

Frequently Asked Questions

Can Google Search Console show Gemini brand mentions?

Only partially. Google Search Console counts clicks and impressions from AI Overviews and AI Mode within its regular web search performance data, and only when a link to your site appears in the answer; it does not report activity inside the standalone Gemini app. Unlinked mentions (where Gemini names your brand but does not link to your domain) never appear in Search Console. A dedicated AI visibility tracking method is required to capture those references.

How often should I run Gemini tracking checks?

Weekly monitoring works for most brands. Daily tracking is appropriate during product launches, active campaigns, or immediately after known Gemini model updates. Consistency matters more than frequency — running checks at the same time each week produces more reliable trend data.

Does ranking first on Google guarantee Gemini will mention my brand?

No. Gemini uses Google’s index as one input, but it applies its own reasoning, retrieval, and synthesis logic. A competitor with clearer content, stronger entity authority, or more consistent third-party mentions may be cited instead — even if you hold the top organic position. Research suggests a correlation of approximately 0.6 between traditional rankings and Gemini citations, but no direct 1:1 relationship.

How many prompts should I track to get meaningful data?

Start with 20–30 prompts covering your core buyer questions. Expand to 50–100+ as your methodology matures. Focus initial prompts on high-intent queries that drive discovery and comparison in your category — these are the prompts where visibility has the most business impact.

Is Gemini tracking different from tracking AI Overviews?

Gemini powers AI Overviews, AI Mode, and the standalone Gemini app. AI Overviews appear directly in Google Search results, while the Gemini app provides a full conversational experience. Your tracking should cover both surfaces, as visibility can differ between them for the same query. For a broader view across all AI search surfaces, see our guide on tracking brand mentions across AI search results.

What should I do if Gemini mentions my brand inaccurately?

Document the inaccuracy, update your own content to clearly state the correct information, and strengthen entity signals across the web with accurate, consistent brand descriptions. Gemini’s knowledge refreshes periodically — correcting the source material is the most reliable path to correcting the output.

Your Next Move

Gemini brand tracking is not a one-time audit. It is an ongoing discipline that reveals how Google’s AI assistant perceives your brand — and where the gaps are.

Start with a small prompt library this week. Run your first standardized check. Log the results. After four weeks, you will have enough data to identify patterns: where you show up, where you are missing, and which competitors Gemini favors in your category.

Use those insights to strengthen the signals that matter — content clarity, entity authority, editorial presence, and technical health. The brands that build these signals consistently are the ones AI models learn to recommend.

Want to see where your brand stands across Gemini and other AI platforms right now? Get a free AI visibility assessment to baseline your current position before investing in ongoing tracking.