Quick answer: Tracking brand mentions in Perplexity requires a different approach than traditional SEO monitoring, because Perplexity doesn’t rank URLs; it cites sources inside AI-generated answers. If your brand appears in those answers, you’re influencing buyer research at the exact moment decisions form. If it doesn’t, you’re invisible in a search channel that’s reshaping how B2B buyers shortlist vendors.

As of 2026, Perplexity handles over 500 million queries per month and cites only a handful of sources per response. That selectivity makes each mention disproportionately valuable, and each absence a measurable gap in your pipeline. This article breaks down the practical methods, metrics, and workflows you need to track brand mentions in Perplexity accurately, interpret what the data means, and act on it.

What You’ll Learn

- How Perplexity selects and cites sources differently from ChatGPT, Gemini, and Google

- The three distinct outcomes to measure: mentions, citations, and links

- A manual tracking workflow you can start today with zero budget

- How to build a repeatable prompt library anchored in real buyer queries

- Five KPIs that separate useful Perplexity data from vanity metrics

- When manual tracking breaks down, and what to automate first

- How to turn tracking data into content and visibility improvements

Why Perplexity Mentions Deserve a Separate Tracking Workflow

A brand mention in Perplexity is any instance where your company, product, or service name appears in the AI-generated answer text, whether or not Perplexity links to your website. This differs from a citation, which is a numbered reference to a specific URL in the response’s source panel, and a link, which is a clickable URL a user can follow to your domain.

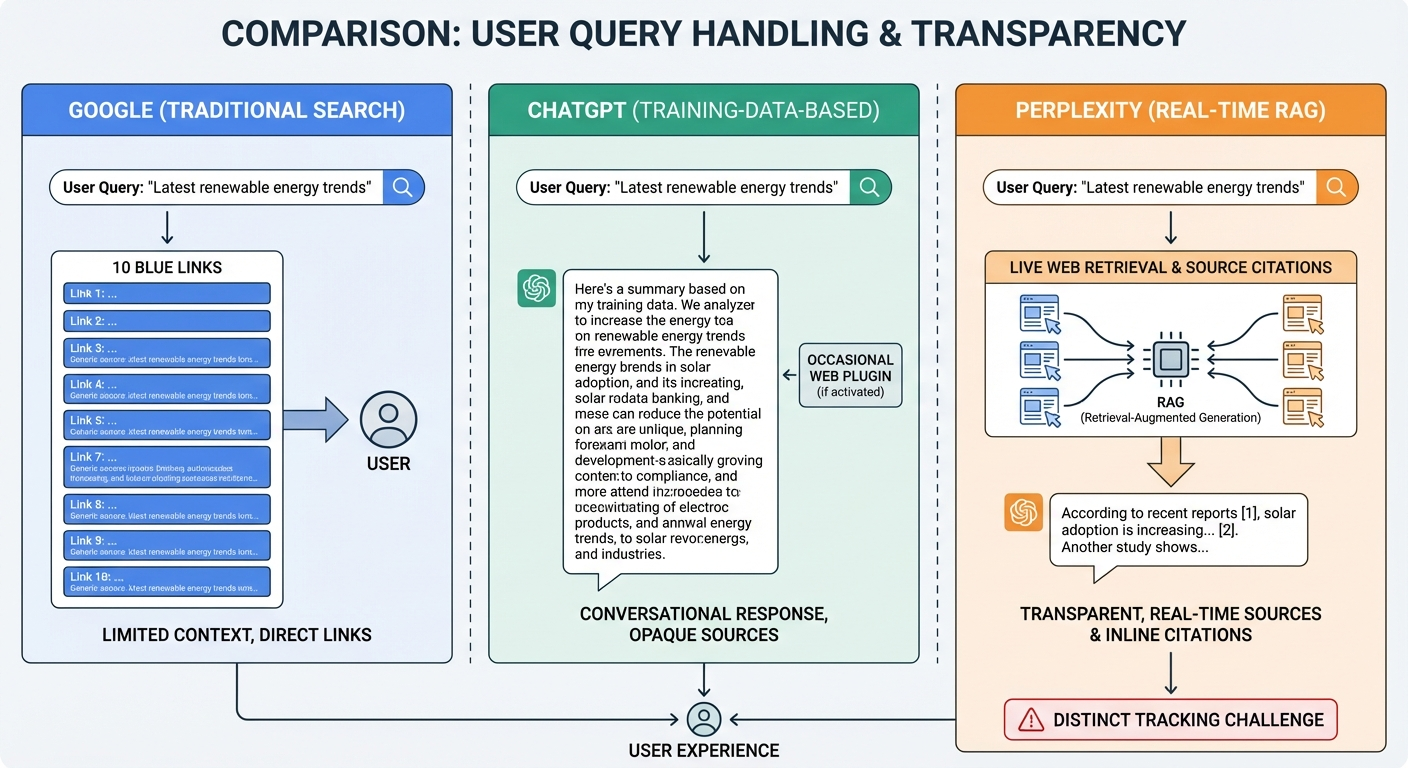

Perplexity operates on a Retrieval-Augmented Generation (RAG) architecture, meaning it performs a live web search for every query rather than relying solely on pre-trained knowledge. This has three practical consequences for tracking:

- Freshness matters immediately. New content can surface in Perplexity answers within hours of publication, a cycle dramatically faster than ChatGPT’s training data updates, which can take months.

- Source selection is transparent. Perplexity shows numbered citations for nearly every claim, so you can see exactly which domains it trusts for a given topic.

- Results shift frequently. Because Perplexity runs a fresh web search for each query, your visibility can change day to day based on content updates, competitor actions, or source trust signals.

Traditional rank trackers don’t capture this. Google Search Console doesn’t log Perplexity traffic. Standard brand monitoring tools designed for social media and news coverage miss AI-generated answers entirely. That’s why Perplexity tracking requires its own dedicated workflow, whether manual or automated.

Three Outcomes to Measure, and Why Mixing Them Distorts Your Data

Many teams make the mistake of tracking “Perplexity visibility” as a single binary metric. In practice, there are three distinct outcomes that tell you very different things about your brand’s position:

| Outcome | Definition | What It Tells You |
|---|---|---|
| Mention | Your brand name appears in the answer text | Perplexity recognizes your brand as relevant to the topic |
| Citation | Your domain appears as a numbered source reference | Perplexity treats your content as trustworthy enough to cite |
| Link | A clickable URL to your site appears in the response | You can capture referral traffic from the answer |

Wondering how Perplexity stacks up against ChatGPT for buyer research? Our breakdown of Perplexity vs ChatGPT covers citation patterns, source quality, and which model your buyers actually use.

Frequently Asked Questions

How do I track brand mentions in Perplexity?

Perplexity mention tracking starts with a simple workflow: run 5 to 8 prompts about your category in Perplexity every week, log which sources Perplexity cites, and check whether your brand appears in either the answer text or the source list. The fastest setup uses a free Perplexity account plus a Google Sheet. Paid tools like BrandMentions automate this across ChatGPT, Gemini, and Claude in one dashboard, but the weekly manual cadence works fine for solo founders or small marketing teams getting baseline data.

A brand that gets mentioned but never cited has a source-authority problem: Perplexity knows who you are but doesn’t trust your content as a primary source. A brand that gets cited but not mentioned has the opposite issue: your pages serve as background evidence, but Perplexity names a competitor in the answer. Tracking all three outcomes separately lets you diagnose the specific gap and apply the right fix.
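As a rough illustration of keeping the three outcomes separate, the sketch below classifies one captured response. The function names, the `acme.example` domain, and the idea of passing in the cited and clickable URLs you recorded by hand are all assumptions made for this example, not part of any Perplexity tooling.

```python
import re
from urllib.parse import urlparse


def on_domain(url, domain):
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def classify_outcomes(answer_text, cited_urls, clickable_urls, brand, domain):
    """Record mention, citation, and link as three separate booleans."""
    # Mention: the brand name appears as a standalone term in the answer text.
    pattern = rf"(?<!\w){re.escape(brand)}(?!\w)"
    return {
        "mention": re.search(pattern, answer_text, re.IGNORECASE) is not None,
        "citation": any(on_domain(u, domain) for u in cited_urls),
        "link": any(on_domain(u, domain) for u in clickable_urls),
    }


def diagnose_gap(outcomes):
    """Map the mention/citation combination to the gap it suggests."""
    if outcomes["mention"] and not outcomes["citation"]:
        return "recognized, but content is not trusted as a primary source"
    if outcomes["citation"] and not outcomes["mention"]:
        return "content used as background evidence, brand not named"
    if not outcomes["mention"] and not outcomes["citation"]:
        return "absent from the response"
    return "mentioned and cited"


# Hypothetical captured response: brand named, but only a review site cited.
outcomes = classify_outcomes(
    "Popular options include Acme and Globex.",
    ["https://reviews.example.org/acme"],
    [],
    brand="Acme",
    domain="acme.example",
)
print(outcomes, "->", diagnose_gap(outcomes))
```

Simple name matching like this will miss misspellings and product-only references, so treat the result as a first pass that still needs a human check.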

For a broader view of how these metrics apply across ChatGPT, Gemini, and other AI platforms, see how to track brand mentions across AI search results.

How to Set Up Manual Perplexity Tracking (Zero Budget)

Manual tracking works well for teams monitoring fewer than 40 queries who want to understand baseline visibility before investing in automation. Here’s a repeatable process.

Step 1: Create a Controlled Testing Environment

Open Perplexity in a fresh incognito browser window. If possible, use a dedicated testing account rather than one with search history. This reduces personalization noise that can skew results between sessions.

Document your testing conditions in a single header row of your tracking spreadsheet:

- Browser and device

- Logged-in or logged-out state

- Region and any VPN endpoint

- Perplexity model setting (Sonar, Sonar Pro, or Sonar Reasoning)

Keep these conditions identical across every tracking run. If Perplexity changes its default model, which has happened multiple times since 2024, note it explicitly. Model changes can shift which sources get cited even when nothing else changes.
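One way to make that header row enforceable is to store the conditions as a small record and compare it against the previous run. This is a minimal sketch; the `RunConditions` fields mirror the list above, while the class and function names are invented for illustration.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class RunConditions:
    browser: str
    device: str
    logged_in: bool
    region: str
    model: str  # e.g. "Sonar", "Sonar Pro", "Sonar Reasoning"


def changed_variables(previous, current):
    """List the condition names that differ between two tracking runs."""
    before, after = asdict(previous), asdict(current)
    return [name for name in before if before[name] != after[name]]


baseline = RunConditions("Chrome", "laptop", False, "US", "Sonar")
this_week = replace(baseline, model="Sonar Pro")

# Anything in this list should be noted next to the week's results.
print(changed_variables(baseline, this_week))
```

If the list ever contains more than one name, the run mixes variables and its results are hard to attribute to a single cause.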

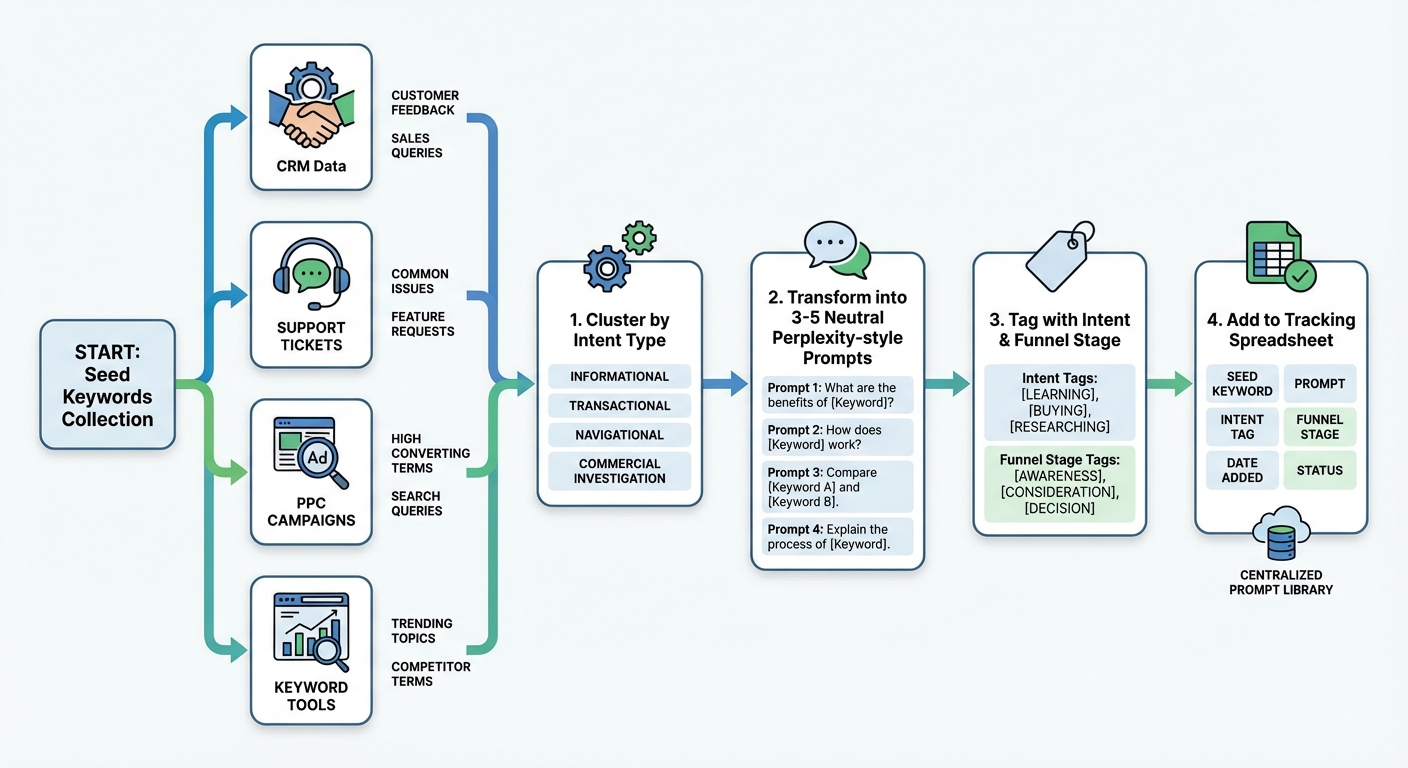

Step 2: Build a Prompt Library From Real Buyer Queries

Your prompt library should reflect how your actual buyers search, not how your marketing team talks about your product. Pull seed queries from:

- Sales call transcripts and CRM notes

- Customer support tickets and on-site search logs

- PPC search term reports

- Keyword research tools (filter for question-format and comparison queries)

Transform each seed into 3–5 Perplexity-style prompts. Keep them neutral: never stuff your brand name into the prompt. You want to test whether Perplexity surfaces your brand organically.

Prompt construction examples:

| Seed Keyword | Perplexity Prompt Variant | Intent Type |
|---|---|---|
| AI brand visibility service | “What companies help brands get mentioned by AI assistants?” | Commercial |
| track AI search mentions | “How do I monitor when ChatGPT or Perplexity mentions my brand?” | Informational |
| [competitor] alternative | “What are the best alternatives to [competitor] for AI visibility?” | Evaluative |
| best B2B brand mention tools | “Which tools track brand mentions across AI search engines?” | Commercial |

Start with 25–50 prompts. Categorize each by intent type (informational, commercial, evaluative) and funnel stage. This structure matters later when you analyze which categories drive or lose visibility.
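A prompt library like this can live in a spreadsheet, but a small structure makes the neutrality and categorization rules checkable. The sketch below is one possible shape; the `Prompt` fields, the funnel-stage labels, and the brand name "Acme" are assumptions for the example.

```python
from collections import Counter
from dataclasses import dataclass

VALID_INTENTS = {"informational", "commercial", "evaluative"}


@dataclass
class Prompt:
    text: str
    intent: str
    funnel_stage: str  # hypothetical labels such as "awareness" or "decision"
    seed: str


def validate_library(prompts, brand):
    """Return human-readable problems: unknown intents or non-neutral prompts."""
    problems = []
    for p in prompts:
        if p.intent not in VALID_INTENTS:
            problems.append(f"unknown intent '{p.intent}': {p.text}")
        if brand.lower() in p.text.lower():
            problems.append(f"brand name in prompt: {p.text}")
    return problems


def intent_breakdown(prompts):
    """Count prompts per intent type to spot an unbalanced library."""
    return Counter(p.intent for p in prompts)


library = [
    Prompt("Which tools track brand mentions across AI search engines?",
           "commercial", "consideration", "best B2B brand mention tools"),
    Prompt("How do I monitor when ChatGPT or Perplexity mentions my brand?",
           "informational", "awareness", "track AI search mentions"),
]
print(validate_library(library, brand="Acme"))
print(intent_breakdown(library))
```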

Step 3: Run Queries and Record Structured Data

For each prompt, capture these fields in your tracking spreadsheet:

- Date and time of the query

- Exact prompt text (copy-paste, don’t paraphrase)

- Perplexity model used

- Mention status (Yes/No, did your brand name appear in the answer text?)

- Mention position (first paragraph, middle, or final section)

- Citation status (Yes/No, did your domain appear in the numbered sources?)

- Cited URL (the specific page on your domain Perplexity referenced)

- Link status (Yes/No, is the citation clickable?)

- Competitors mentioned (list every brand name in the response)

- Source domains (list all domains in Perplexity’s reference panel)

- Accuracy score (1–5 scale, does the description match your actual positioning?)

- Notes (flag anything unusual: outdated info, inaccurate claims, missing product details)

Save a screenshot or full text snapshot of each response. You’ll need these to compare across runs and to brief your content team on specific improvements.
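If you prefer a CSV file over a hand-built sheet, the twelve fields above map to a fixed header. The column names below are illustrative choices, and the sample row is made-up data; only the set of fields comes from the list above.

```python
import csv
import io

# One column per field in the list above (names are illustrative).
FIELDS = [
    "queried_at", "prompt", "model", "mention", "mention_position",
    "citation", "cited_url", "link", "competitors", "source_domains",
    "accuracy_score", "notes",
]


def make_row(**values):
    """Build one tracking row, rejecting columns that are not in FIELDS."""
    unknown = set(values) - set(FIELDS)
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")
    row = dict.fromkeys(FIELDS, "")
    row.update(values)
    # Store multi-value fields as a single delimited cell.
    for key in ("competitors", "source_domains"):
        if isinstance(row[key], (list, tuple)):
            row[key] = "; ".join(row[key])
    return row


def write_rows(rows, out):
    """Write the header and rows to any file-like object."""
    writer = csv.DictWriter(out, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)


buffer = io.StringIO()
write_rows([make_row(
    queried_at="2026-01-05T09:00",
    prompt="Which tools track brand mentions across AI search engines?",
    model="Sonar",
    mention="Yes",
    competitors=["Globex", "Initech"],
)], buffer)
print(buffer.getvalue())
```

Rejecting unknown columns keeps every run on the same schema, which is what makes week-over-week comparison possible.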

Step 4: Set a Tracking Cadence

Run your full prompt library weekly for the first four to six weeks. This gives you enough data points to spot patterns rather than reacting to single-session noise.

After establishing a baseline, shift to:

- Weekly: 10–15 highest-priority prompts (your revenue-driving queries)

- Bi-weekly or monthly: Full library of 25–50+ prompts

- Event-triggered: After publishing major content, earning press coverage, launching products, or making significant website changes
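The cadence can be written down as a small scheduling rule. The sketch below assumes a six-week baseline and a full-library run every fourth week afterward; both numbers are parameters you would adjust, and event-triggered runs are left out because they depend on your own calendar.

```python
def prompts_for_week(week, library, priority, baseline_weeks=6, full_every=4):
    """Return the prompts to run in a given week (week 1 is the first run)."""
    if week <= baseline_weeks:
        return list(library)  # baseline phase: full library every week
    if (week - baseline_weeks) % full_every == 0:
        return list(library)  # periodic full-library run
    # Otherwise run only the highest-priority prompts.
    return [p for p in library if p in priority]


library = ["prompt A", "prompt B", "prompt C"]
priority = {"prompt A"}
for week in (3, 7, 10):
    print(week, prompts_for_week(week, library, priority))
```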

Pro Insight: A common mistake is changing prompt wording, model settings, and location in the same tracking run. If you change multiple variables simultaneously, you can’t isolate what caused a visibility shift. Change one variable at a time.

Five KPIs That Make Perplexity Data Actionable

Raw data becomes useful only when converted into metrics you can compare across time periods and act on. These five KPIs cover the full picture:

1. Mention Rate

Formula: (Prompts where your brand is mentioned ÷ Total prompts) × 100

This is your clearest signal of whether Perplexity associates your brand with your category. Track it separately for branded prompts (where your name appears in the query) and non-branded prompts (category and problem queries). Non-branded mention rate is the harder, and more valuable, metric to improve.

2. Citation Rate

Formula: (Mentions with a domain citation ÷ Total mentions) × 100

If your mention rate is 40% but your citation rate is only 15%, Perplexity recognizes your brand but doesn’t trust your content enough to cite it. That’s a content quality and authority problem, not a brand awareness problem.
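To make the two formulas concrete, here is a minimal sketch that reproduces the 40% and 15% example. It assumes each tracked prompt is a dict with boolean `mention` and `citation` keys, which is an illustrative layout rather than a required one.

```python
def mention_rate(rows):
    """(Prompts where the brand is mentioned / total prompts) x 100."""
    if not rows:
        return 0.0
    return 100 * sum(r["mention"] for r in rows) / len(rows)


def citation_rate(rows):
    """(Mentions with a domain citation / total mentions) x 100."""
    mentions = [r for r in rows if r["mention"]]
    if not mentions:
        return 0.0
    return 100 * sum(r["citation"] for r in mentions) / len(mentions)


# Made-up run of 50 prompts: 20 mentions, 3 of which also cite the domain.
rows = (
    [{"mention": True, "citation": True}] * 3
    + [{"mention": True, "citation": False}] * 17
    + [{"mention": False, "citation": False}] * 30
)
print(mention_rate(rows))   # 40.0
print(citation_rate(rows))  # 15.0
```

Note that the citation rate is computed over mentions only, so responses that cite your domain without naming the brand do not enter this metric.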

3. Share of Voice

Formula: (Your brand mentions ÷ Total brand mentions across all tracked brands, including yours) × 100

Share of voice shows your relative position in the category. A 50%+ share indicates category leadership in Perplexity’s view. Below 20% means competitors dominate the conversation for your key queries.
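A short sketch of the share-of-voice calculation follows. It assumes the denominator counts every tracked brand, yours included, and that a brand counts once per response no matter how often it is repeated; both are choices made for this example. The brand names are placeholders.

```python
from collections import Counter


def share_of_voice(brands_per_response, brand):
    """Percentage of all brand mentions that belong to the given brand."""
    # Count each brand at most once per response.
    counts = Counter(b for brands in brands_per_response for b in set(brands))
    total = sum(counts.values())
    return 100 * counts[brand] / total if total else 0.0


# Made-up data: the brands named in each of five responses.
responses = [
    ["Acme", "Globex"],
    ["Globex"],
    ["Acme", "Initech", "Globex"],
    ["Globex"],
    ["Initech"],
]
print(share_of_voice(responses, "Acme"))    # 25.0
print(share_of_voice(responses, "Globex"))  # 50.0
```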

4. Position in Response

Track whether your brand appears first, second, or third in the answer, or only in the closing section. First-mentioned brands receive disproportionate attention because users scan the opening paragraphs most carefully. This isn’t a precise ranking system, but it’s a consistent directional signal when measured the same way each run.

5. Accuracy Score

Rate each mention on a 1–5 scale for how accurately Perplexity describes your brand, product, or positioning. Scores below 4 indicate entity clarity problems: inconsistent information across the web, outdated content, or missing structured data. These issues are often easier to fix than visibility gaps and can improve multiple KPIs at once.
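Position and accuracy can be summarized from the same tracking rows. The sketch below assumes each row carries `mention`, `mention_position`, `accuracy_score`, and `prompt` keys (illustrative names) and flags any prompt scoring under the threshold of 4 described above.

```python
from collections import Counter
from statistics import mean


def position_distribution(rows):
    """Count where the brand appears, across responses that mention it."""
    return Counter(r["mention_position"] for r in rows if r["mention"])


def accuracy_summary(rows, threshold=4):
    """Return (average score, prompts scoring below the threshold)."""
    scored = [r for r in rows if r["mention"] and r.get("accuracy_score") is not None]
    if not scored:
        return None, []
    average = mean(r["accuracy_score"] for r in scored)
    flagged = [r["prompt"] for r in scored if r["accuracy_score"] < threshold]
    return average, flagged


# Made-up rows for illustration.
rows = [
    {"prompt": "p1", "mention": True, "mention_position": "first paragraph", "accuracy_score": 5},
    {"prompt": "p2", "mention": True, "mention_position": "middle", "accuracy_score": 3},
    {"prompt": "p3", "mention": True, "mention_position": "first paragraph", "accuracy_score": 4},
    {"prompt": "p4", "mention": False, "mention_position": "", "accuracy_score": None},
    {"prompt": "p5", "mention": True, "mention_position": "final section", "accuracy_score": 2},
]
print(position_distribution(rows))
print(accuracy_summary(rows))  # (3.5, ['p2', 'p5'])
```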

For additional metrics and frameworks that apply across multiple AI platforms, explore best ways to track brand mentions in AI search.

When Manual Tracking Breaks Down, and What to Automate First

Manual tracking stops being practical in three situations:

- Scale: You’re tracking more than 40–50 prompts, monitoring multiple product lines, or covering different geographic markets.

- Consistency: Team members run prompts at different times, in different browser states, or forget to log results, creating data gaps that undermine trend analysis.

- Reporting: Stakeholders need recurring dashboards and alerts, not ad-hoc screenshots.

Several dedicated AI rank trackers for brand mentions have emerged to handle Perplexity-specific monitoring. When evaluating automation tools, prioritize these capabilities:

- Perplexity model selection: The tool should let you specify which Perplexity model (Sonar, Sonar Pro, Sonar Reasoning) you’re tracking, since outputs differ across models.

- Separate mention, citation, and link tracking: Tools that collapse these into a single “visibility score” hide the diagnostic detail you need.

- Competitor co-mention tracking: You need to see which other brands appear in the same responses, not just whether your brand appears.

- Historical data storage: Trend analysis requires weeks or months of stored responses, not just the latest snapshot.

- Multi-platform coverage: If you’re also tracking ChatGPT, Gemini, or Claude, a unified dashboard reduces overhead. See how to monitor brand mentions in ChatGPT for platform-specific considerations.

Key Definition: An AI visibility tracker is software that automatically queries AI search engines on a schedule, captures the full response and citation panel, extracts brand mentions, and stores the data for trend analysis over time.
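The definition above can be pictured as a short loop. In this sketch, `fetch_response` is a hypothetical callable you would supply (it is not a real Perplexity API call) that returns the answer text and cited URLs for a prompt; scheduling and persistent storage are left out.

```python
import re
from datetime import datetime, timezone


def extract_brands(answer_text, brands):
    """Return the tracked brands that appear as standalone terms in the text."""
    found = []
    for brand in brands:
        pattern = rf"(?<!\w){re.escape(brand)}(?!\w)"
        if re.search(pattern, answer_text, re.IGNORECASE):
            found.append(brand)
    return found


def run_tracking(prompts, fetch_response, brands, store):
    """Query each prompt, capture the full response, and append a snapshot."""
    for prompt in prompts:
        answer_text, cited_urls = fetch_response(prompt)  # hypothetical fetcher
        store.append({
            "queried_at": datetime.now(timezone.utc).isoformat(),
            "prompt": prompt,
            "answer_text": answer_text,
            "cited_urls": list(cited_urls),
            "brands_mentioned": extract_brands(answer_text, brands),
        })
    return store


# Stand-in fetcher with canned data, so the sketch runs without any network.
def fake_fetch(prompt):
    return "Acme and Globex are common choices.", ["https://reviews.example.org/top"]


history = run_tracking(["Which tools track AI mentions?"], fake_fetch,
                       ["Acme", "Globex", "Initech"], store=[])
print(history[0]["brands_mentioned"])  # ['Acme', 'Globex']
```

Keeping the full answer text in every snapshot is what lets you re-score old responses later if your brand list or scoring rules change.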

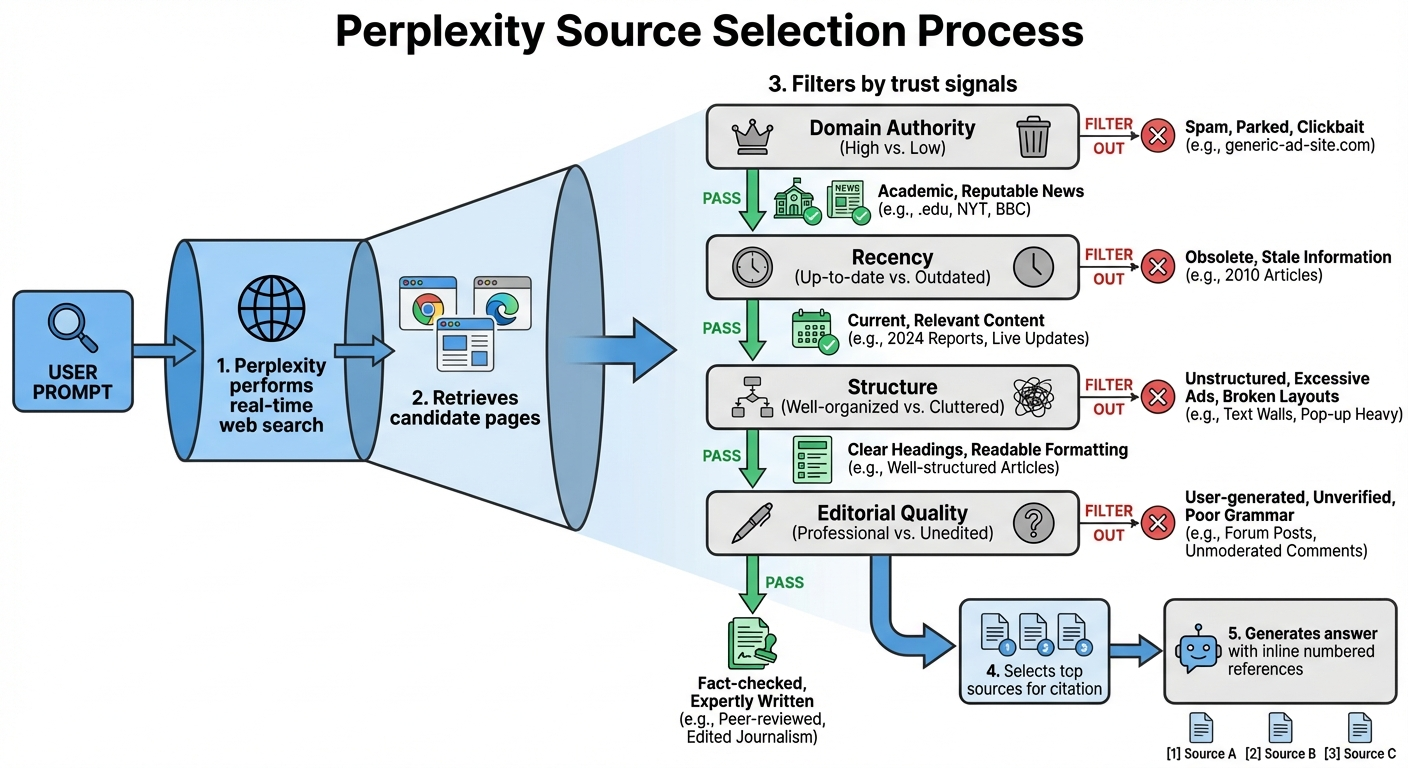

How Perplexity Selects Sources, and What That Means for Your Content

Understanding Perplexity’s source selection logic helps you interpret tracking data and prioritize the right improvements. Based on patterns we see repeatedly in Perplexity citation audits across category-relevant publication sets, Perplexity tends to prioritize:

- Topical depth and specificity: Pages that comprehensively answer a specific question outperform general overview content.

- Structured, parseable formatting: Clear H2/H3 headings, bullet points, tables, and direct answers in opening paragraphs make content easier for RAG systems to extract and cite.

- Source trust signals: Domain authority, editorial quality, HTTPS, clean technical infrastructure, and consistent author bylines all contribute to whether Perplexity treats a page as citable.

- Recency: Because Perplexity retrieves from the live web, recently updated content has an advantage, especially for queries where freshness matters (product comparisons, pricing, feature lists).

- Third-party validation: Perplexity frequently cites authoritative third-party sources (industry publications, review sites, news outlets) rather than the brand’s own website. This means your off-site presence (press coverage, editorial mentions, directory listings) directly influences Perplexity citations.

This last point is critical. If your tracking data shows that Perplexity mentions your brand but cites a third-party review site rather than your own domain, that third-party page is your “citation gatekeeper.” Improving your presence on that site (more reviews, updated profile, richer content) can shift Perplexity’s citation behavior faster than publishing new pages on your own domain.
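Finding those gatekeeper pages is a counting exercise over your tracking rows. The sketch below assumes each row has a boolean `mention` and a `cited_urls` list (illustrative names) and tallies the hosts cited in responses that name your brand without citing your own domain.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_gatekeepers(rows, own_domain, top_n=3):
    """Most-cited hosts in responses that mention the brand but not its domain."""
    counts = Counter()
    for row in rows:
        hosts = {(urlparse(u).hostname or "").lower() for u in row["cited_urls"]}
        own_cited = any(h == own_domain or h.endswith("." + own_domain) for h in hosts)
        if row["mention"] and not own_cited:
            counts.update(h for h in hosts if h)
    return counts.most_common(top_n)


# Made-up rows: the review site is cited twice where the brand is only mentioned.
rows = [
    {"mention": True, "cited_urls": ["https://reviews.example.org/a", "https://news.example.net/x"]},
    {"mention": True, "cited_urls": ["https://reviews.example.org/b"]},
    {"mention": True, "cited_urls": ["https://www.acme.example/guide"]},
    {"mention": False, "cited_urls": ["https://blog.example.com/post"]},
]
print(citation_gatekeepers(rows, "acme.example"))
```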

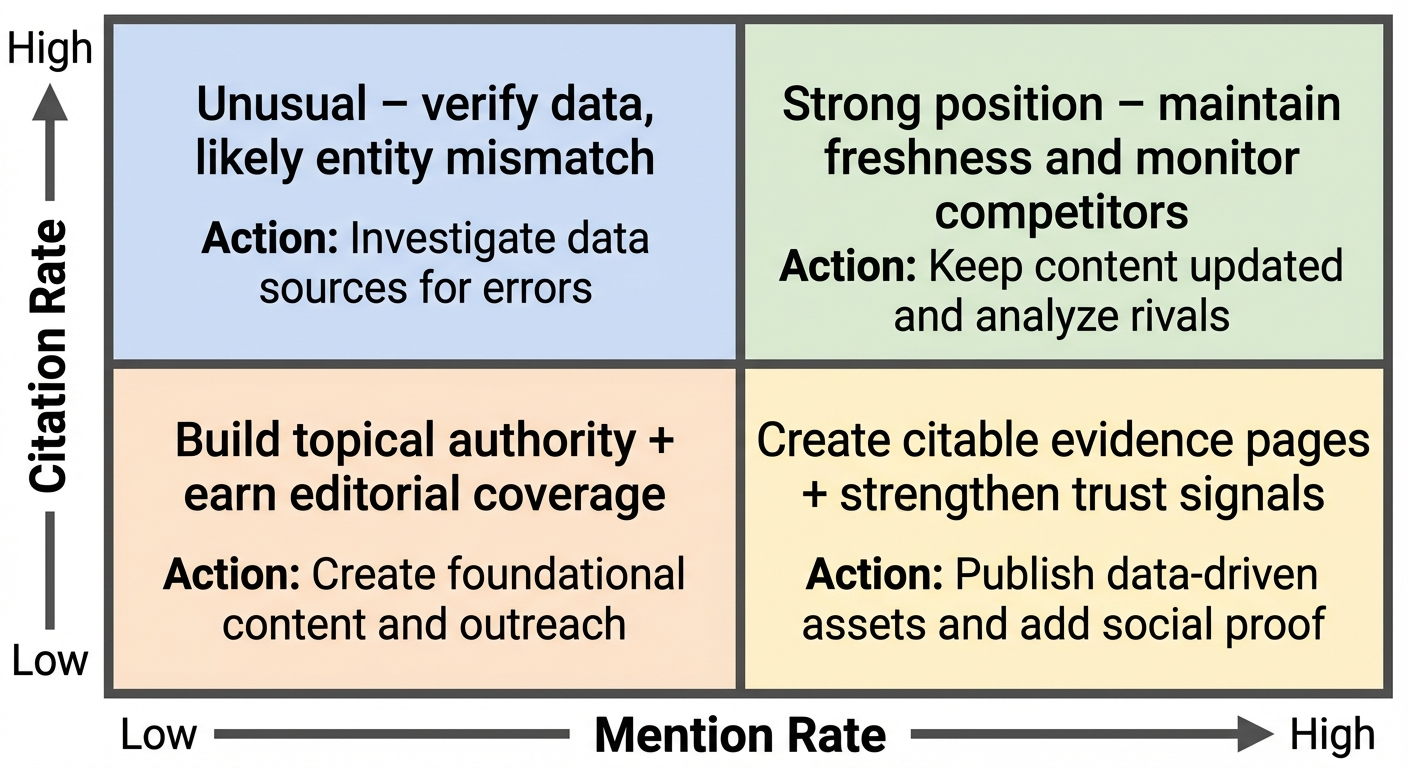

Turning Tracking Data Into Visibility Improvements

Tracking without action is just bookkeeping. Here’s how to convert each data pattern into a specific next step:

High Mention Rate, Low Citation Rate

Diagnosis: Perplexity knows your brand but doesn’t consider your content authoritative enough to cite directly.

Actions:

- Audit the pages Perplexity does cite for your category queries. What do they have that your pages lack? (Usually: original data, clearer structure, stronger trust signals.)

- Create “evidence pages”: content built specifically to be citable, with original research, structured comparisons, and verifiable claims.

- Strengthen on-page trust signals: author bylines with credentials, publish and update dates, links to primary sources.

Low Mention Rate Across Non-Branded Queries

Diagnosis: Perplexity doesn’t associate your brand with the category. You have a topical authority gap.

Actions:

- Build content clusters around the topic areas where you’re invisible. Cover both beginner and advanced angles.

- Earn editorial coverage on publications that Perplexity already cites for your category. Check your tracking data: the “source domains” field shows exactly which sites Perplexity trusts.

- Strengthen entity signals: consistent brand naming across the web, Organization schema markup, and a comprehensive “About” page that clearly defines what your company does.
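For the Organization schema markup mentioned in the last item, a minimal JSON-LD payload might be generated as shown below. The property names (`name`, `url`, `description`, `sameAs`) are standard schema.org Organization properties; the company details are placeholders, and which properties you include is a judgment call for your own site.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a minimal schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # Profiles on other sites that describe the same entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


markup = organization_jsonld(
    name="Acme",
    url="https://www.acme.example",
    description="Acme builds AI visibility tracking software for B2B teams.",
    same_as=["https://www.linkedin.com/company/acme-example"],
)
print(markup)
```

The output would typically be embedded in a `<script type="application/ld+json">` element on the page, with the same name and description you use everywhere else.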

A specialist approaches this systematically by placing contextual brand mentions across a network of category-relevant publications AI retrievers frequently surface during web retrieval. The pattern we see in Perplexity audits is that brands with sustained editorial coverage on those publications achieve materially higher recommendation rates than brands leaning on owned content alone.

Mentioned but With Inaccurate Descriptions

Diagnosis: Perplexity has conflicting or outdated information about your brand. This is an entity consistency problem.

Actions:

- Audit every public-facing source of brand information: your website, directory profiles, press releases, social media bios, and third-party listings. Standardize naming, descriptions, and key claims.

- Publish a definitive “What We Do” or product page with structured data (Organization, Product, or FAQPage schema) that gives Perplexity a canonical source for accurate information.

- If a specific third-party page is spreading outdated information that Perplexity cites, contact the publisher to request an update.

Competitors Dominate Your Category Queries

Diagnosis: Competitors have stronger content, more citations, or better third-party coverage for the queries that matter to your business.

Actions:

- Analyze which competitor pages Perplexity cites. Reverse-engineer their content format, depth, and source quality.

- Create content that adds original value competitors lack: proprietary data, unique methodology, more current information.

- Prioritize the queries with highest revenue impact rather than trying to win every category prompt at once.
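The four patterns above can be turned into a rough triage function over your KPI numbers. The share-of-voice and accuracy thresholds follow the figures used earlier in this article (20% and 4); the mention-rate and citation-rate cutoffs are illustrative assumptions that you should tune to your own baseline.

```python
def diagnose(kpis, low_mention=20.0, low_citation=25.0, low_share=20.0, min_accuracy=4.0):
    """Return the data patterns that apply to one set of KPI values."""
    findings = []
    if kpis["non_branded_mention_rate"] < low_mention:
        findings.append("low mention rate across non-branded queries")
    elif kpis["citation_rate"] < low_citation:
        findings.append("high mention rate, low citation rate")
    if kpis["average_accuracy"] < min_accuracy:
        findings.append("mentioned but with inaccurate descriptions")
    if kpis["share_of_voice"] < low_share:
        findings.append("competitors dominate category queries")
    return findings


# Made-up KPI values matching the 40% / 15% example used earlier.
print(diagnose({
    "non_branded_mention_rate": 40.0,
    "citation_rate": 15.0,
    "share_of_voice": 30.0,
    "average_accuracy": 4.5,
}))
```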

For a deeper look at the service and process behind building AI-level brand authority, see how the placement process works at BrandMentions.

How Perplexity Tracking Differs From ChatGPT and Gemini

For companion per-platform walkthroughs, see how to check brand mentions in ChatGPT and the detailed Perplexity tracking guide. Monitoring brand mentions in LLMs covers the cross-platform cadence that the Perplexity workflow described here plugs into.

If you’re monitoring multiple AI platforms, understanding Perplexity’s unique characteristics prevents you from applying the wrong playbook.

| Dimension | Perplexity | ChatGPT | Google Gemini |
|---|---|---|---|
| Data source | Real-time web retrieval (RAG) | Training data + optional web browsing | Hybrid: training data + Google Search integration |
| Citation transparency | Always shows numbered source references | Conversational mentions; citations less consistent | Links and snippets integrated into response |
| Content freshness window | Hours to days | Months (training cycle dependent) | Days to weeks |
| Response consistency | Moderate, shifts with web changes | Higher for training-data queries; variable for browsing | Moderate, influenced by Google’s index |
| Best tracking approach | Prompt-based with citation panel analysis | Prompt-based with mention extraction | Prompt-based with link and snippet tracking |

The biggest practical difference: Perplexity’s real-time retrieval means your content improvements can show results within days, not months. But it also means competitors can displace you just as quickly. This is why a regular tracking cadence matters more for Perplexity than for any other AI platform.

For platform-specific monitoring approaches, see how to track your brand in ChatGPT and the broader guide to AI search brand monitoring.

Common Mistakes That Distort Perplexity Tracking Data

The Perplexity-tracking mistake we see most often in audits is a team running the same prompt three times and averaging the results, without controlling for session history or signed-in state. The variance across those three runs is often wider than the week-over-week signal people are trying to measure. Standardize the environment first, log the run conditions, and the numbers stop bouncing.
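A quick way to check whether a weekly change is larger than your own run-to-run noise is to compare it against the spread of repeated runs. The sketch below uses the simple max-minus-min spread as the noise estimate, which is an assumption chosen for readability rather than a formal statistical test; the numbers are made up.

```python
def signal_exceeds_noise(repeat_rates, last_week, this_week):
    """Compare the week-over-week change with the spread of repeated runs."""
    spread = max(repeat_rates) - min(repeat_rates)  # noise within one period
    change = abs(this_week - last_week)             # the signal being measured
    return change > spread, spread, change


# Mention rates (%) from three repeat runs, then two weekly figures.
print(signal_exceeds_noise([30.0, 45.0, 36.0], last_week=35.0, this_week=40.0))
# (False, 15.0, 5.0): the 5-point change is smaller than the 15-point spread
```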

After reviewing tracking setups across dozens of B2B teams, these are the errors that most frequently lead to misleading conclusions:

- Changing multiple variables at once. If you update prompt wording, switch Perplexity models, and change your VPN location in the same session, you can’t isolate what caused a visibility shift. Change one variable per tracking run.

- Only tracking “best of” queries. Commercial-intent queries (“best AI visibility tool”) get all the attention, but informational queries (“how does AI search work”) often determine which sources Perplexity trusts when it later answers commercial queries. Track both.

- Treating screenshots as a dataset. One-off screenshots don’t support trend analysis. Use a structured spreadsheet with consistent fields so you can compare week over week without interpretation drift.

- Ignoring the citation source URLs. The domains Perplexity cites tell you exactly where to focus your off-site content and PR efforts. Skipping this field is like running Google Analytics but never looking at traffic sources.

- Not separating mentions from citations from links. Bundling these into a single “visibility” score hides the specific problem you need to fix.

Warning: Perplexity results can vary based on model selection, time of day, and recent web crawl activity. A single data point is a snapshot, not a trend. Wait for 4–6 weeks of consistent data before drawing strategic conclusions.

Practical Checklist: Launch Perplexity Tracking This Week

Use this checklist to go from zero to structured Perplexity monitoring in under two hours:

- Create a dedicated tracking spreadsheet with the 12 fields listed in the manual tracking section above.

- Document your baseline testing conditions (browser, device, login state, region, model) in the spreadsheet header.

- Build an initial prompt library of 25–30 queries from CRM data, support tickets, and keyword research. Tag each by intent type and funnel stage.

- Run your first full tracking session in incognito mode. Record every field for every prompt.

- Calculate your five baseline KPIs: mention rate, citation rate, share of voice, position distribution, and average accuracy score.

- Identify your top three gaps: which high-value queries does Perplexity answer without mentioning your brand? Which competitors dominate?

- Set a weekly tracking calendar reminder for your 10–15 highest-priority prompts.

- Brief your content team on the specific pages and topics where Perplexity cites competitors instead of your brand.

If you’re managing multiple brands, running 50+ prompts, or need stakeholder-ready dashboards, evaluate whether an AI rank tracker built for brand mentions will save enough time to justify the investment.

FAQ

Does Perplexity always show citations in its answers?

Perplexity includes numbered source citations in nearly every response, which is what makes it uniquely trackable among AI platforms. However, the number and specificity of citations can vary by query complexity and model selection. Sonar Reasoning Pro responses tend to include more detailed citations than standard Sonar responses.

Can I see Perplexity referral traffic in Google Analytics?

Yes, if Perplexity links to your domain (not just mentions your brand), you’ll see referral traffic from perplexity.ai in your analytics. However, mentions without links won’t generate measurable referral traffic, which is why tracking mentions separately from citations and links matters. Google Search Console doesn’t track Perplexity data.
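If you export raw referrer URLs from your analytics or server logs, a small host check can separate Perplexity referrals from everything else. This is a sketch that matches on the perplexity.ai hostname mentioned above; the function name is invented, and how referrers appear in your own export is something to verify.

```python
from urllib.parse import urlparse


def is_perplexity_referral(referrer_url):
    """True if the referrer host is perplexity.ai or one of its subdomains."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return host == "perplexity.ai" or host.endswith(".perplexity.ai")


for ref in ("https://www.perplexity.ai/search/abc", "https://notperplexity.ai/", ""):
    print(repr(ref), is_perplexity_referral(ref))
```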

How often should I run Perplexity tracking?

For initial baseline building, run your full prompt library weekly for 4–6 weeks. After that, track your 10–15 highest-priority queries weekly and run the full library monthly. Add event-triggered runs after major content publishes, product launches, or press coverage.

What’s the difference between Perplexity tracking and traditional brand monitoring?

Traditional brand monitoring (tools like Mention or Google Alerts) tracks when your brand appears on websites, social media, and news outlets. Perplexity tracking measures whether AI-generated answers mention, cite, and link to your brand. That is a fundamentally different surface, one that reflects how AI synthesizes and recommends information, not just where your name appears online.

Can I influence what Perplexity says about my brand?

You can’t directly control Perplexity’s outputs, but you can improve the inputs. Perplexity generates answers from live web sources, so improving your on-site content quality, earning editorial mentions on authoritative publications, and maintaining consistent entity information across the web all influence what Perplexity retrieves and cites. This is a core principle behind how AI brand mention strategies work.

How many prompts do I need to track for reliable data?

Start with 25–30 prompts to establish a meaningful baseline. Expand to 50–100+ once you want stable trendlines, better category coverage, and statistically meaningful share-of-voice comparisons. Prioritize prompts with clear revenue intent over vanity queries.

A 30-Day Perplexity Visibility Improvement Plan

Perplexity tracking is the diagnostic layer: it gives you a baseline for how the retriever describes your brand and where the gaps are. The strategic layer is acting on that data: building citable content, earning editorial mentions on the publications Perplexity already trusts, and strengthening entity signals so AI systems consistently associate your brand with your category.

The brands that compound AI visibility over time don’t just track; they build a systematic presence across the sources that AI platforms retrieve from. If your tracking data reveals persistent gaps in Perplexity citations, the problem is usually upstream: your brand lacks the editorial footprint that gives AI systems confidence to recommend you.

If you want a baseline before committing to a tool or process, request a quick AI visibility audit. We’ll run 25 category-relevant prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews so you can see exactly which sources each platform trusts for your category, and which competitors are capturing citations you’re not.