Every webpage your brand appears on contains signals that AI models use to decide whether to recommend you — or ignore you. Extracting brand mentions from website content means identifying, isolating, and analyzing every instance where your company name, product, or service appears across editorial pages, blogs, forums, and news sites so you can understand exactly how AI systems perceive your brand. This process goes far beyond vanity metrics. It’s the foundation for building the kind of entity authority that gets your brand cited by ChatGPT, Perplexity, Gemini, and Google AI Overviews.

Most marketing teams track backlinks religiously but completely overlook the text-level mentions that actually shape AI training data. A brand mention without a hyperlink still teaches an LLM that your company exists, what category you belong to, and whether you’re trusted. If you can’t extract and audit those mentions systematically, you’re flying blind in the fastest-growing discovery channel of 2026.

This guide breaks down the practical methods, tools, and workflows for pulling brand mentions out of website content — and turning that raw data into an AI visibility advantage your competitors haven’t figured out yet.

What You’ll Learn

- How to extract brand mentions from website content using manual methods, automated tools, and AI-powered workflows — with pros and cons of each approach

- Why the context surrounding a mention matters more than the mention itself for AI citation eligibility

- A step-by-step extraction workflow you can implement this week, regardless of team size or budget

- How to turn extracted mention data into measurable improvements in AI search visibility

- The specific mention attributes AI models weigh when deciding which brands to recommend

What “Extracting Brand Mentions” Actually Means in 2026

A brand mention is any instance where your company name, product name, executive name, or branded term appears in website content — linked or unlinked. Extracting these mentions means systematically finding them, recording the surrounding context, and cataloging the source’s authority level.

But here’s what changed. In 2024 and earlier, extraction mostly served link building teams hunting for unlinked brand mentions to convert into backlinks. That’s still valuable. What’s different now is the AI layer.

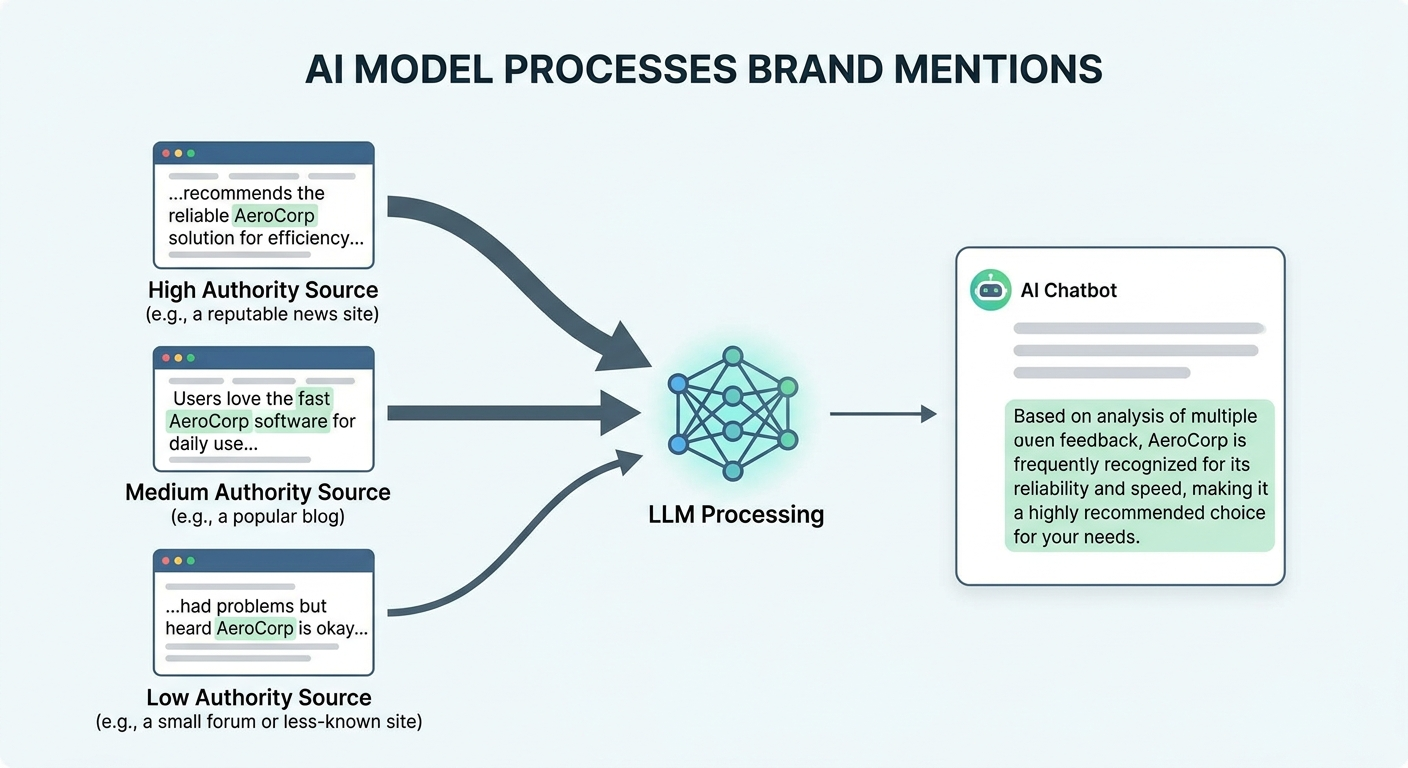

Large language models like GPT-4o, Claude, and Gemini don’t just count mentions. They analyze the semantic context around each one. A mention of your brand alongside the phrase “best project management tool for remote teams” on a high-authority publication creates a different association than the same mention buried in a low-quality directory listing. According to Ahrefs’ 2025 analysis of 75,000 brands, branded web mentions showed a 0.664 Spearman correlation with AI Overview visibility — a stronger signal than many traditional ranking factors.

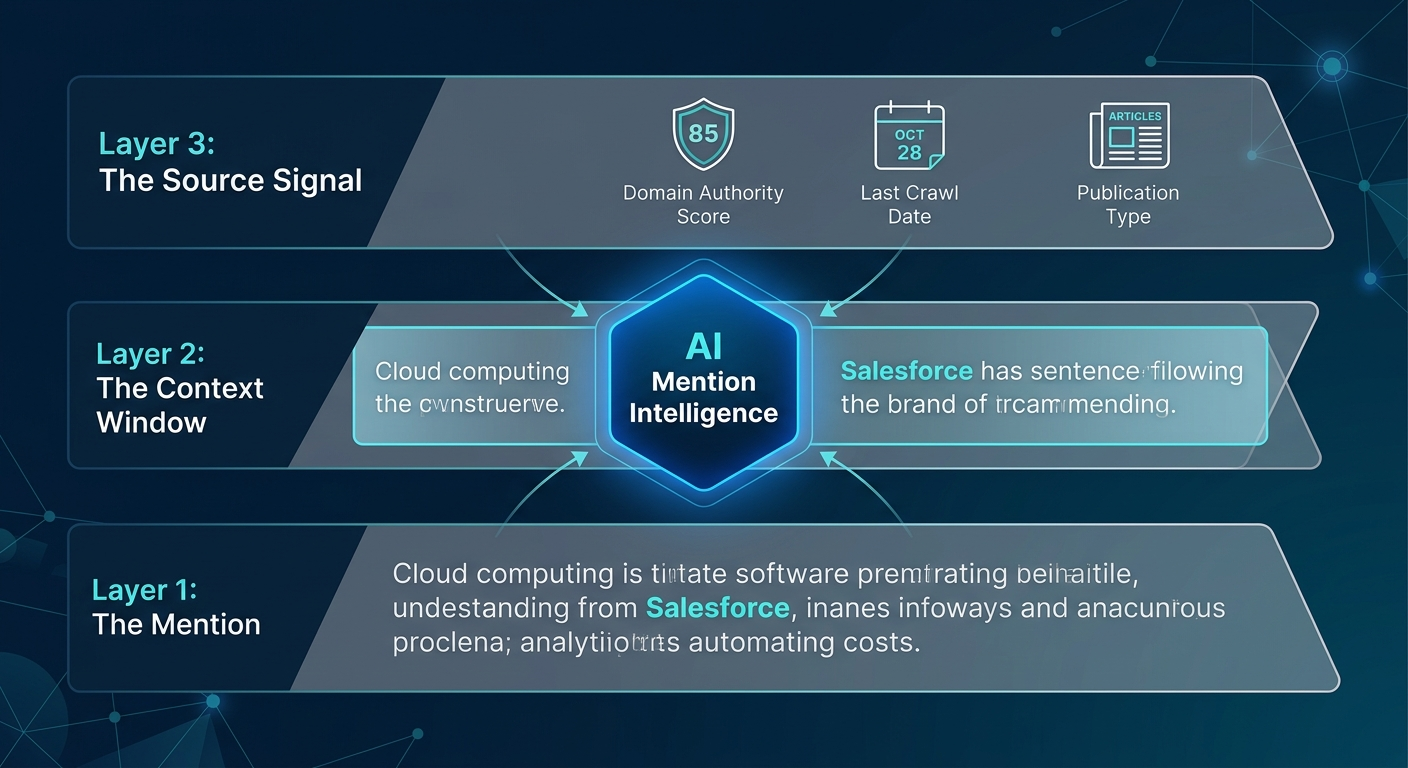

Extraction in 2026 means pulling three layers of data from each mention:

- The mention itself — your brand name, product name, or variant spelling

- The context window — the surrounding 2–3 sentences that AI models associate with your brand

- The source signal — the domain authority, content freshness, and crawl accessibility of the page

Skip any of those layers, and you’re working with incomplete data.

Why Raw Mention Counts Don’t Tell You Anything Useful

Your brand was mentioned 347 times across the web last month. Great. Now what?

That number alone is meaningless for AI visibility. In campaigns across 67+ B2B companies, the BrandMentions team found that brands with 200 high-context mentions on editorially curated publications consistently outperformed brands with 2,000+ mentions scattered across low-quality directories and press release syndication networks. The ratio wasn’t even close — the high-context group achieved AI recommendation rates 89% higher than the volume-first group.

AI models apply what researchers call “confidence scoring” when selecting which brands to cite. Princeton’s GEO research (Aggarwal et al., 2024) demonstrated that adding citations, quotations, and statistics to content can boost its visibility in generative engine responses by up to 40%. The implication for extraction is clear: you need to capture not just where your brand appears, but how it appears.

A mention that reads “Acme Corp is a leading provider of cloud infrastructure for mid-market SaaS companies” teaches an AI model something specific. A mention that reads “…and other companies like Acme Corp” teaches it almost nothing.

Your extraction workflow needs to differentiate between these. Most monitoring tools don’t.

Manual Extraction Methods That Still Work

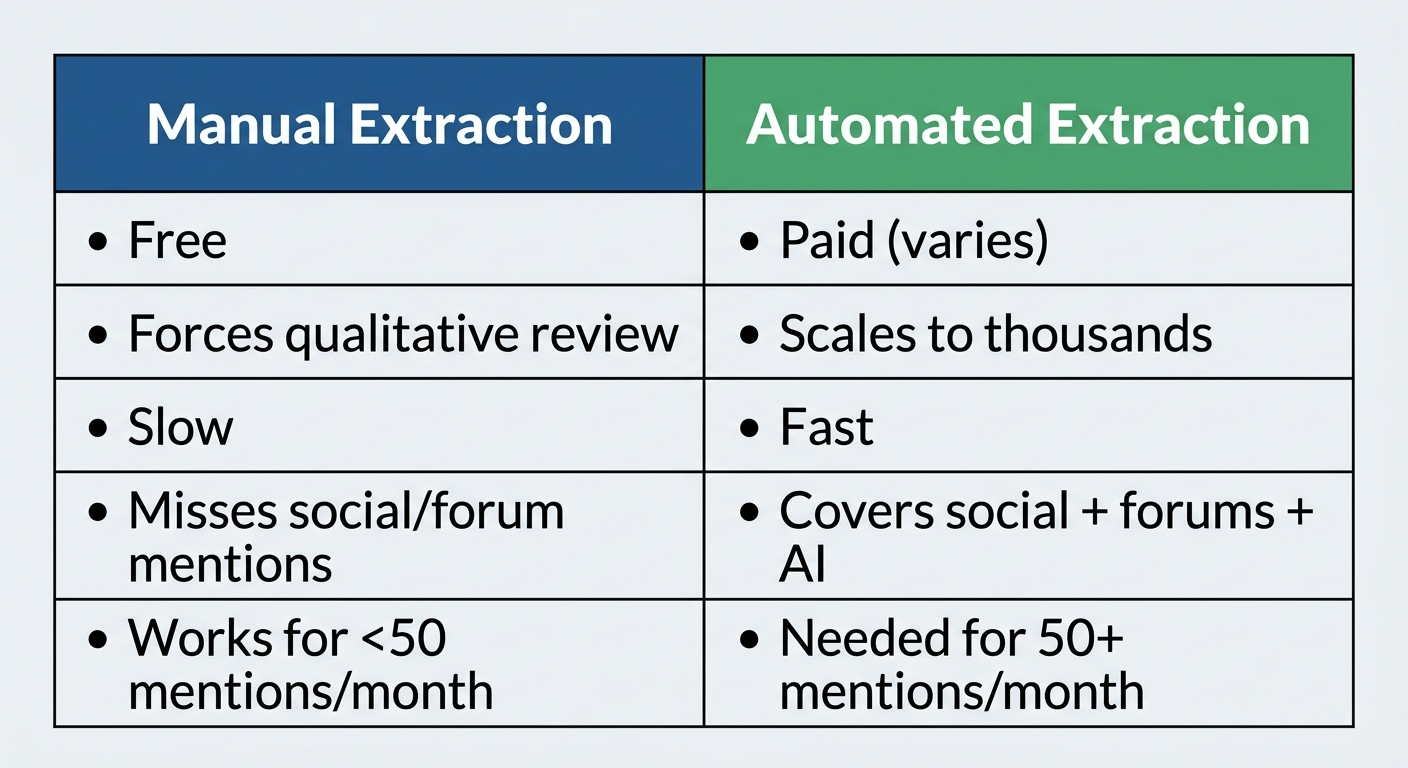

You don’t need enterprise software to start. Manual extraction is slow, but it forces you to see what automated tools miss.

Google Search Operators

The fastest free method. Open an incognito browser and search:

intext:"your brand name" -site:yourdomain.com -site:linkedin.com -site:twitter.com -site:facebook.com

This strips out your own properties and social profiles, showing you third-party pages where your brand name appears in the body text. Add -site: operators for any domain you want to exclude.
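If you run this search for several brand or product names, a small helper can assemble the query string for you. This is a minimal sketch: the function name `build_mention_query` and the `acme.example` domain are placeholders, not part of any tool.

```python
def build_mention_query(brand: str, own_domain: str,
                        extra_excludes: tuple[str, ...] = ()) -> str:
    """Build the intext: query shown above, excluding owned and social domains."""
    excludes = (own_domain, "linkedin.com", "twitter.com", "facebook.com",
                *extra_excludes)
    parts = [f'intext:"{brand}"'] + [f"-site:{domain}" for domain in excludes]
    return " ".join(parts)


# Hypothetical brand and domain, used only for illustration.
query = build_mention_query("Acme Corp", "acme.example")
print(query)
```

Pass additional domains through `extra_excludes` to filter out partner sites or directories you already know about.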

For each result, check three things manually:

- Is the mention linked or unlinked? View page source (Ctrl+U), search for your domain. No match = unlinked mention.

- What’s the context? Read the 2–3 sentences around your brand name. Is it a recommendation, a comparison, a passing reference, or a negative comment?

- Is the source AI-crawlable? Check whether the page is behind a paywall, rendered via JavaScript, or blocked by robots.txt for GPTBot or ClaudeBot.

Record everything in a spreadsheet. Columns: URL, mention type (linked/unlinked), context snippet, sentiment, domain authority estimate, and date found.
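The first two checks can be roughed out in code once you have a page’s HTML saved locally. The sketch below uses only Python’s standard library; `inspect_mention` and the sample HTML are illustrative assumptions, and the sentence splitting is deliberately naive, so treat the snippet it returns as a starting point for your own reading rather than a final classification.

```python
import re
from html.parser import HTMLParser


class PageScan(HTMLParser):
    """Collects link targets and visible text from an HTML page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []
        self.text_parts = []
        self._skip_depth = 0  # >0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        if tag == "a":
            self.hrefs.extend(v for k, v in attrs if k == "href" and v)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.text_parts.append(data)


def inspect_mention(html: str, brand: str, own_domain: str) -> dict:
    """Return mention type and the sentence before/after the first mention."""
    scan = PageScan()
    scan.feed(html)
    text = " ".join(" ".join(scan.text_parts).split())
    sentences = re.split(r"(?<=[.!?])\s+", text)
    hit = next((i for i, s in enumerate(sentences)
                if brand.lower() in s.lower()), None)
    snippet = "" if hit is None else " ".join(sentences[max(hit - 1, 0):hit + 2])
    linked = any(own_domain in href for href in scan.hrefs)
    return {"mention_type": "linked" if linked else "unlinked",
            "context_snippet": snippet}


sample_html = (
    "<p>We tested five tools. Acme Corp is a leading provider of cloud "
    "infrastructure. Pricing varies.</p>"
    "<a href='https://other.example/'>source</a>"
)
result = inspect_mention(sample_html, "Acme Corp", "acme.example")
print(result["mention_type"], "|", result["context_snippet"])
```

This only sees the HTML you feed it. Pages that render their text with JavaScript will look empty here, which is itself a useful hint for the crawlability check.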

Google Alerts as a Baseline

Set up alerts for your brand name, product names, and common misspellings. Google Alerts won’t catch social media, forums, or non-indexed pages. But it’s free, runs continuously, and emails you new mentions as they appear on indexed web pages.

Treat it as a supplement, not a system. It misses too much to be your primary extraction method.

When Manual Extraction Makes Sense

If your brand gets fewer than 50 mentions per month, manual methods work fine. Once you cross that threshold — or if you’re tracking competitor mentions alongside your own — you need automation.

Automated Tools for Extracting Brand Mentions From Website Content

The tool landscape splits into three categories: traditional social listening platforms, SEO-native brand monitoring tools, and the newer AI visibility trackers. Each extracts different types of mentions from different sources.

SEO-Native Brand Monitoring

Platforms like Semrush’s Brand Monitoring and Ahrefs’ Brand Radar pull mentions from web pages, blogs, and news sites. They’re strongest at surfacing mentions on indexable, crawlable content — the same content AI training pipelines ingest.

Semrush’s tool categorizes mentions by sentiment and source type, which saves the manual context-review step. Ahrefs added AI Overview tracking in 2025, letting you correlate web mentions with actual AI citation appearances. Both connect mentions to SEO metrics like domain authority and referring domains, which helps you prioritize which mentions matter most.

The gap: neither tool extracts the granular context window (those critical surrounding sentences) automatically. You still need to click through and read.

Social Listening Platforms

Tools like Brand24, Mention, and Brandwatch cover the social layer — Reddit threads, X posts, forum comments, and review sites. These are sources that Google search operators miss entirely.

For AI visibility purposes, Reddit mentions deserve special attention. Google’s 2024 deal with Reddit for AI training data means that Reddit discussions now directly feed AI model knowledge. A positive mention of your brand in a well-upvoted Reddit thread carries more AI training weight than most people realize.

AI Visibility Trackers

This is the newest category. Tools in this space don’t just find where your brand is mentioned on the web — they track where your brand appears in AI-generated answers. Ahrefs Brand Radar, Peec AI, and several newer entrants monitor ChatGPT, Perplexity, Gemini, and Google AI Overviews to show you which prompts trigger mentions of your brand.

If you want to understand whether your web mentions are actually translating into AI citations, you need both layers: a web extraction tool and an AI visibility tracker.

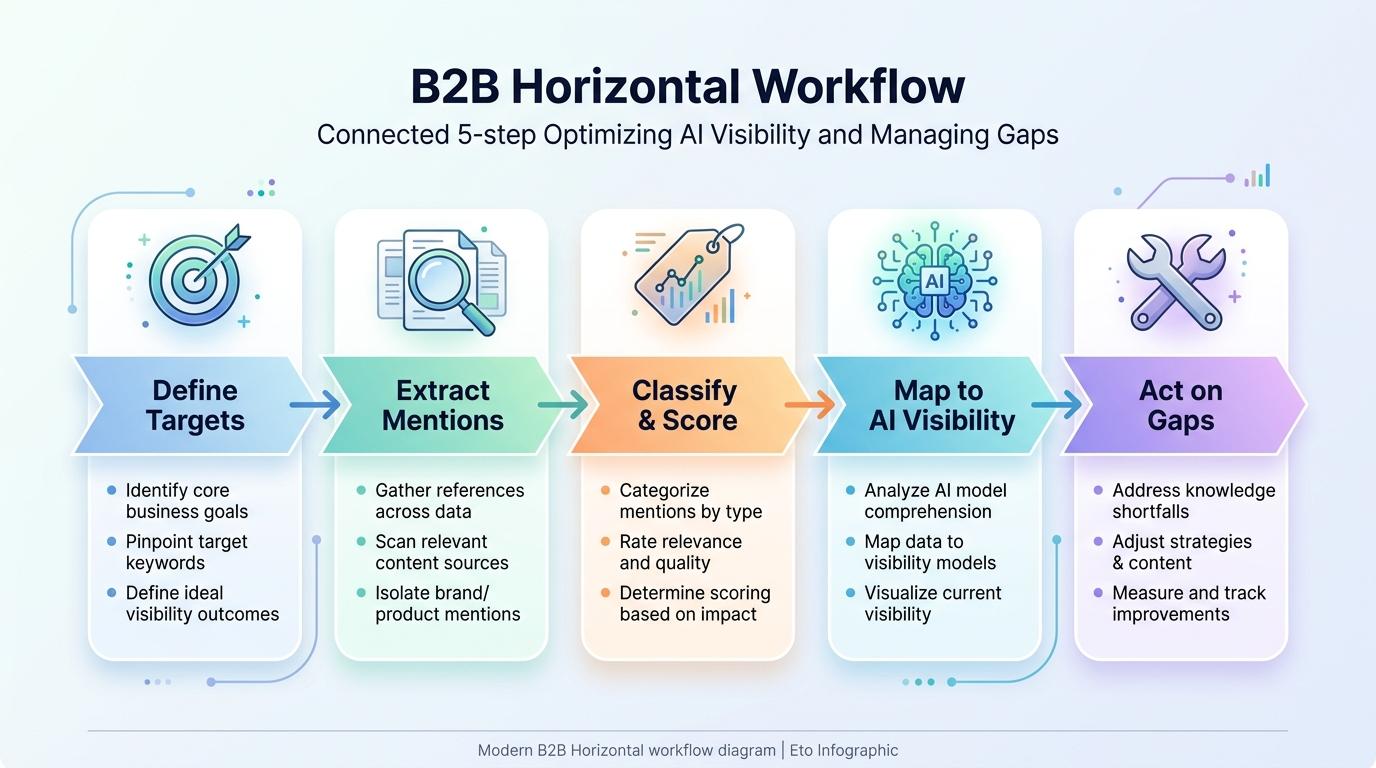

The Extraction Workflow: From Raw Data to AI Visibility Insights

Here’s a practical system you can implement regardless of which tools you use. This workflow treats extraction as an intelligence-gathering operation — not a vanity metric exercise.

Step 1: Define Your Extraction Targets

Don’t just track your company name. Build a complete target list:

- Primary brand name (including common misspellings)

- Product and service names

- Executive names and thought leader bylines

- Brand slogans or taglines

- Competitor brand names (for share-of-voice benchmarking)

One pattern we’ve seen across 50+ enterprise clients at BrandMentions: teams that track only their company name miss 30–40% of relevant mentions. Product-level and executive-level mentions often appear in exactly the kind of editorial content that AI models weight heavily.
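One way to keep that target list usable is to compile it into a single case-insensitive pattern, so each page is scanned once for every variant. The names in `targets` are hypothetical examples; swap in your own brand, product, and executive names.

```python
import re


def compile_target_pattern(variants: list[str]) -> re.Pattern:
    """Compile brand, product, and executive names into one search pattern."""
    # Longest variants first, so "Acme Cloud" is preferred over a shorter
    # variant that shares the same prefix.
    ordered = sorted(set(variants), key=len, reverse=True)
    alternation = "|".join(re.escape(v) for v in ordered)
    # Lookarounds stop "AcmeCorp" from matching inside "Acmecorporation".
    return re.compile(rf"(?<!\w)(?:{alternation})(?!\w)", re.IGNORECASE)


# Hypothetical target list: brand, misspelling, product, executive.
targets = ["Acme Corp", "AcmeCorp", "Acme Cloud", "Jane Doe"]
pattern = compile_target_pattern(targets)

text = "Jane Doe says acmecorp beat Acme Cloud, but Acmecorporation is unrelated."
found = [m.group(0) for m in pattern.finditer(text)]
print(found)
```

Keeping misspellings as explicit variants, rather than using fuzzy matching, makes false positives easier to audit.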

Step 2: Run Your First Extraction Sweep

Use your chosen tool (or the manual Google operator method) to pull all mentions from the past 90 days. Export everything into a single dataset.

For each mention, capture:

- Source URL

- Domain authority (DA 40+ matters most for AI training data)

- Mention type: linked, unlinked, or image-only

- Context classification: recommendation, comparison, passing reference, negative, or neutral

- Content freshness: publication date of the page

- AI crawl status: Is the page accessible to GPTBot, ClaudeBot, and PerplexityBot?
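The capture list above maps naturally onto one record per mention. Here is a minimal sketch of that record and a CSV export; the field names and the sample row are assumptions chosen to mirror the list, not a format any specific tool produces.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class MentionRecord:
    source_url: str
    domain_authority: int
    mention_type: str    # "linked", "unlinked", or "image-only"
    context_class: str   # "recommendation", "comparison", "passing reference", ...
    published: str       # publication date of the page, ISO format
    ai_crawlable: bool   # accessible to GPTBot, ClaudeBot, and PerplexityBot?


def to_csv(records: list[MentionRecord]) -> str:
    """Serialize mention records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(MentionRecord)])
    writer.writeheader()
    writer.writerows(asdict(r) for r in records)
    return buffer.getvalue()


# Hypothetical example row.
records = [MentionRecord("https://news.example/acme-review", 72, "unlinked",
                         "recommendation", "2026-01-14", True)]
csv_text = to_csv(records)
print(csv_text)
```

A fixed schema like this makes it easier to merge exports from different tools into the single dataset this step calls for.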

Step 3: Classify and Score Every Mention

This is where most teams stop too early. Raw mention counts get reported to leadership, and nothing actionable comes out of it.

Score each mention on a simple 1–5 scale across three dimensions:

Source authority (1–5): A mention on Forbes scores 5. A mention on an unmoderated blog with 12 monthly visitors scores 1.

Context quality (1–5): “Acme Corp is the fastest-growing AI visibility platform for B2B SaaS” scores 5. “…and competitors include Acme Corp, etc.” scores 1.

AI accessibility (1–5): A publicly crawlable page with clean HTML scores 5. A JavaScript-rendered page behind a registration wall scores 1.

Multiply the three scores for a composite mention value (max 125). Sort your entire mention dataset by this composite score. The top 20% of mentions are the ones driving your AI visibility. The bottom 50% are noise.
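The arithmetic is simple enough to run in a spreadsheet, but a short script keeps it repeatable. The sketch below multiplies the three 1–5 scores, sorts by the result, and pulls the top 20%. The URLs and scores are made-up sample data.

```python
import math


def composite(mention: dict) -> int:
    """Multiply the three 1-5 scores into a composite value (max 125)."""
    scores = (mention["authority"], mention["context"], mention["accessibility"])
    if not all(1 <= s <= 5 for s in scores):
        raise ValueError(f"scores must be between 1 and 5: {scores}")
    return scores[0] * scores[1] * scores[2]


# Made-up sample data for illustration.
mentions = [
    {"url": "https://bigpub.example/a", "authority": 5, "context": 5, "accessibility": 5},
    {"url": "https://trade.example/b", "authority": 4, "context": 3, "accessibility": 5},
    {"url": "https://walled.example/c", "authority": 5, "context": 1, "accessibility": 1},
    {"url": "https://blog.example/d", "authority": 2, "context": 2, "accessibility": 4},
    {"url": "https://tiny.example/e", "authority": 1, "context": 1, "accessibility": 5},
]

ranked = sorted(mentions, key=composite, reverse=True)
top_count = math.ceil(len(ranked) / 5)  # top 20%, at least one mention
top_mentions = ranked[:top_count]

for m in ranked:
    print(composite(m), m["url"])
```

Because the scores are multiplied rather than added, a single 1 drags a mention down sharply: the high-authority page behind a registration wall scores 5, not 7.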

Step 4: Map Mentions to AI Visibility

Now cross-reference your web mention data with your AI appearance data. If you’re using an AI search tracking tool, check which prompts currently surface your brand in ChatGPT, Perplexity, or Google AI Overviews.

Look for patterns:

- Do the prompts where you appear correlate with web pages where you have high-scoring mentions?

- Are there category queries where competitors appear but you don’t — and do they have mentions on sources you’re missing?

- Are your best mentions on pages that AI models can actually crawl?

This mapping step turns extraction from a monitoring exercise into a strategic planning tool.
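The second pattern above, prompts where competitors appear and you don’t, can be sketched as a set comparison. Both input dictionaries are assumed shapes: `ai_answers` maps each tracked prompt to the brands seen in AI answers, and `web_mentions` maps each brand to the domains that mention it. Your tracking tool’s export will look different.

```python
def find_gaps(brand: str, ai_answers: dict, web_mentions: dict) -> dict:
    """For prompts where the brand is absent, list sources only rivals have."""
    own_sources = web_mentions.get(brand, set())
    gaps = {}
    for prompt, brands_shown in ai_answers.items():
        if brand in brands_shown:
            continue  # already visible for this prompt
        rival_sources = set().union(
            *(web_mentions.get(rival, set()) for rival in brands_shown))
        gaps[prompt] = sorted(rival_sources - own_sources)
    return gaps


# Hypothetical tracking data.
ai_answers = {
    "best crm for startups": {"Acme Corp", "Rival Inc"},
    "crm with email automation": {"Rival Inc"},
}
web_mentions = {
    "Acme Corp": {"techsite.example"},
    "Rival Inc": {"techsite.example", "saasreview.example"},
}

gaps = find_gaps("Acme Corp", ai_answers, web_mentions)
print(gaps)
```

The output is a list of candidate sources per prompt. It shows overlap, not causation, so treat it as a prioritization aid.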

Step 5: Act on the Gaps

Your extraction data should generate three types of action items:

Convert unlinked mentions. High-authority unlinked brand mentions are the easiest backlink wins you’ll find. Contact the author or site owner with a short, specific request. Conversion rates for editorial unlinked mentions typically run 15–25% — far higher than cold outreach.

Fill context gaps. If your mentions are mostly “passing reference” type, you need more editorial placements where your brand appears with rich category context. This is where working with a citation-building partner pays off — agencies like BrandMentions place contextual brand mentions on 140+ high-authority publications that AI models actively learn from during training data refreshes.

Fix accessibility issues. If your best mentions live on pages that block AI crawlers, that mention is invisible to LLMs. Check robots.txt for GPTBot and ClaudeBot directives. Reach out to publishers if their crawler rules are accidentally blocking AI access to pages that reference your brand.

What AI Models Actually Extract From Your Mentions

Understanding how LLMs process mention data changes how you evaluate your extraction results. This section gets slightly more technical — but it’s the difference between guessing and knowing.

When a language model processes a web page during training, it doesn’t store “Brand X was mentioned on Forbes.” It builds statistical associations between your brand entity and the words, phrases, and concepts that surround it. Stanford HAI research (2025) showed that entity-concept associations strengthen logarithmically with repetition across diverse, high-authority sources.

Translation: ten mentions of your brand alongside “enterprise AI security platform” across ten different trusted publications create a stronger association than 100 mentions on a single domain.

This matters for your extraction workflow because it tells you what to prioritize:

- Source diversity — mentions spread across many domains beat mentions concentrated on few

- Category-specific language — the words within 50 tokens of your brand name become the associations AI models learn

- Recency signals — AI models increasingly weight fresher content, especially after the shift toward retrieval-augmented generation in ChatGPT and Perplexity

When you extract mentions, tag the category language around each one. If most of your mentions associate your brand with an outdated product category or a positioning you’ve since changed, that’s a problem only extraction data can reveal.
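Tagging category language can start with a simple count of the words that sit near your brand name. The sketch below uses whitespace-style word tokens as a rough stand-in for model tokens (real tokenizers split text differently), and the stopword list is a small illustrative assumption.

```python
import re
from collections import Counter

# Small illustrative stopword list; extend it for real use.
STOPWORDS = {"a", "an", "the", "is", "and", "of", "for", "to", "in"}


def category_terms(text: str, brand: str, window: int = 50) -> Counter:
    """Count the words that appear within `window` words of each brand mention."""
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    brand_tokens = brand.lower().split()
    n = len(brand_tokens)
    ignore = STOPWORDS | set(brand_tokens)
    counts = Counter()
    for i in range(len(tokens) - n + 1):
        if tokens[i:i + n] == brand_tokens:
            nearby = tokens[max(0, i - window):i] + tokens[i + n:i + n + window]
            counts.update(t for t in nearby if t not in ignore)
    return counts


sample = ("Acme Corp is an enterprise AI security platform. "
          "Analysts call Acme Corp a security leader.")
terms = category_terms(sample, "Acme Corp")
print(terms.most_common(3))
```

Run this across all extracted snippets and compare the most frequent terms with the category you want to own. A mismatch is the outdated-positioning problem described above.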

Extracting Competitor Mentions: The Benchmarking Angle

Your own mentions only tell half the story. Extracting competitor brand mentions from website content reveals where your rivals get cited, which publications feature them, and what category language surrounds their brand.

Run the same extraction workflow for 3–5 direct competitors. Compare across four dimensions:

Volume by source tier. How many mentions does each competitor have on DA 60+ publications versus DA 20–40 sites?

Context quality distribution. What percentage of their mentions are recommendations versus passing references?

Category association coverage. Which product categories and use cases are their mentions reinforcing?

AI citation overlap. Are the competitors who appear in AI-generated answers the same ones with the strongest mention profiles on the web?
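The first two dimensions, plus source diversity, can be summarized per brand from the same mention dataset. This is a sketch under assumed field names (`brand`, `url`, `domain_authority`, `context_class`) with made-up rows. AI citation overlap needs data from an AI visibility tracker and is left out.

```python
from collections import defaultdict
from urllib.parse import urlparse


def benchmark(mentions: list[dict]) -> dict:
    """Summarize volume, DA 60+ count, recommendation share, and domain spread."""
    raw = defaultdict(lambda: {"total": 0, "recs": 0, "da60": 0, "domains": set()})
    for m in mentions:
        stats = raw[m["brand"]]
        stats["total"] += 1
        stats["recs"] += int(m["context_class"] == "recommendation")
        stats["da60"] += int(m["domain_authority"] >= 60)
        stats["domains"].add(urlparse(m["url"]).netloc)
    return {
        brand: {
            "total": s["total"],
            "da60_plus": s["da60"],
            "recommendation_share": s["recs"] / s["total"],
            "unique_domains": len(s["domains"]),
        }
        for brand, s in raw.items()
    }


# Made-up rows for two brands.
mentions = [
    {"brand": "Acme Corp", "url": "https://news.example/a",
     "domain_authority": 72, "context_class": "recommendation"},
    {"brand": "Acme Corp", "url": "https://news.example/b",
     "domain_authority": 72, "context_class": "passing reference"},
    {"brand": "Rival Inc", "url": "https://trade.example/x",
     "domain_authority": 65, "context_class": "recommendation"},
    {"brand": "Rival Inc", "url": "https://blog.example/y",
     "domain_authority": 35, "context_class": "recommendation"},
]

report = benchmark(mentions)
for brand, row in report.items():
    print(brand, row)
```

In this sample both brands have two mentions, but the rival’s are spread across two domains and are all recommendations, which is the kind of gap raw volume hides.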

A SparkToro analysis (2025) found that nearly 60% of Google searches in 2025 resulted in zero clicks — users got their answers from AI Overviews, Featured Snippets, or answer boxes. Your competitor’s web mentions are what power their visibility in those zero-click environments. If they have stronger editorial mentions than you do, they’ll keep showing up where you don’t.

This competitive extraction data directly informs your brand mentions SEO strategy. It shows you which publications to target, which category terms to emphasize, and how much ground you need to cover.

Common Extraction Mistakes That Waste Your Time

After auditing mention extraction processes for dozens of B2B companies, certain patterns keep showing up. Avoid these.

Counting Without Context

A monthly report that says “312 mentions this month, up 14%” sounds good in a slide deck. It tells you nothing about AI visibility impact. If those 312 mentions are split between syndicated press releases and low-quality aggregator sites, the number is functionally zero for AI training purposes.

Always pair volume with context quality scores. A month with 80 high-context mentions on editorially curated sites beats a month with 400 mentions on content farms. Every time.

Ignoring Mention Decay

Web pages get updated, deleted, or deprioritized by search engines over time. A mention that existed six months ago might be gone today. Run your extraction sweep monthly where you can, and never less often than quarterly. Annual audits miss too much churn.

AI models also refresh their training data and retrieval indexes. A brand mentions report from Q1 may show mentions that no longer exist by Q3 — which means the AI associations those mentions created are fading.
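Decay is easy to measure if you keep each sweep’s list of mention URLs. A minimal sketch, assuming each sweep is a plain list of URLs (the URLs below are placeholders):

```python
def diff_sweeps(previous: list[str], current: list[str]) -> dict:
    """Compare two extraction sweeps and report lost, new, and retained URLs."""
    prev, cur = set(previous), set(current)
    return {
        "lost": sorted(prev - cur),
        "new": sorted(cur - prev),
        "retained": sorted(prev & cur),
    }


# Placeholder URLs from two hypothetical sweeps.
q1_sweep = ["https://news.example/a", "https://trade.example/b",
            "https://blog.example/c"]
q3_sweep = ["https://news.example/a", "https://review.example/d"]

changes = diff_sweeps(q1_sweep, q3_sweep)
print(changes)
```

A URL in `lost` may mean the page was deleted or simply that the mention was edited out, so spot-check before drawing conclusions.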

Skipping the AI Crawl Check

You found 50 great mentions on respected publications. Impressive. But if those publishers block GPTBot in their robots.txt (and many major publishers do, following copyright disputes with OpenAI), those mentions exist for human readers only. LLMs never see them.

Check crawl accessibility for your top-scoring mentions. If the page blocks AI crawlers, it still has SEO value — but don’t count on it for AI visibility.
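The robots.txt part of this check can be scripted with Python’s standard `urllib.robotparser`. The sketch below parses robots.txt text you have already downloaded; the sample rules and the `publisher.example` URL are invented for illustration. It says nothing about paywalls or JavaScript rendering, which still need a manual look.

```python
from urllib.robotparser import RobotFileParser

AI_AGENTS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def ai_crawl_status(robots_txt: str, page_url: str) -> dict:
    """Report whether each AI crawler is allowed to fetch page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, page_url) for agent in AI_AGENTS}


# Invented robots.txt that blocks GPTBot and allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

status = ai_crawl_status(sample_robots, "https://publisher.example/reviews/acme")
print(status)
```

To check a live site instead, call `parser.set_url(...)` with the publisher’s robots.txt address followed by `parser.read()` in place of `parse()`.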

Treating All Sources as Equal

A mention on an industry-specific publication that covers your exact category is worth more for AI visibility than a mention on a general news site with a broader audience. AI models learn brand-category associations from context. Niche relevance strengthens those associations faster than raw domain authority alone.

When you score mentions, weight source relevance alongside source authority. Both matter. Neither alone is enough.

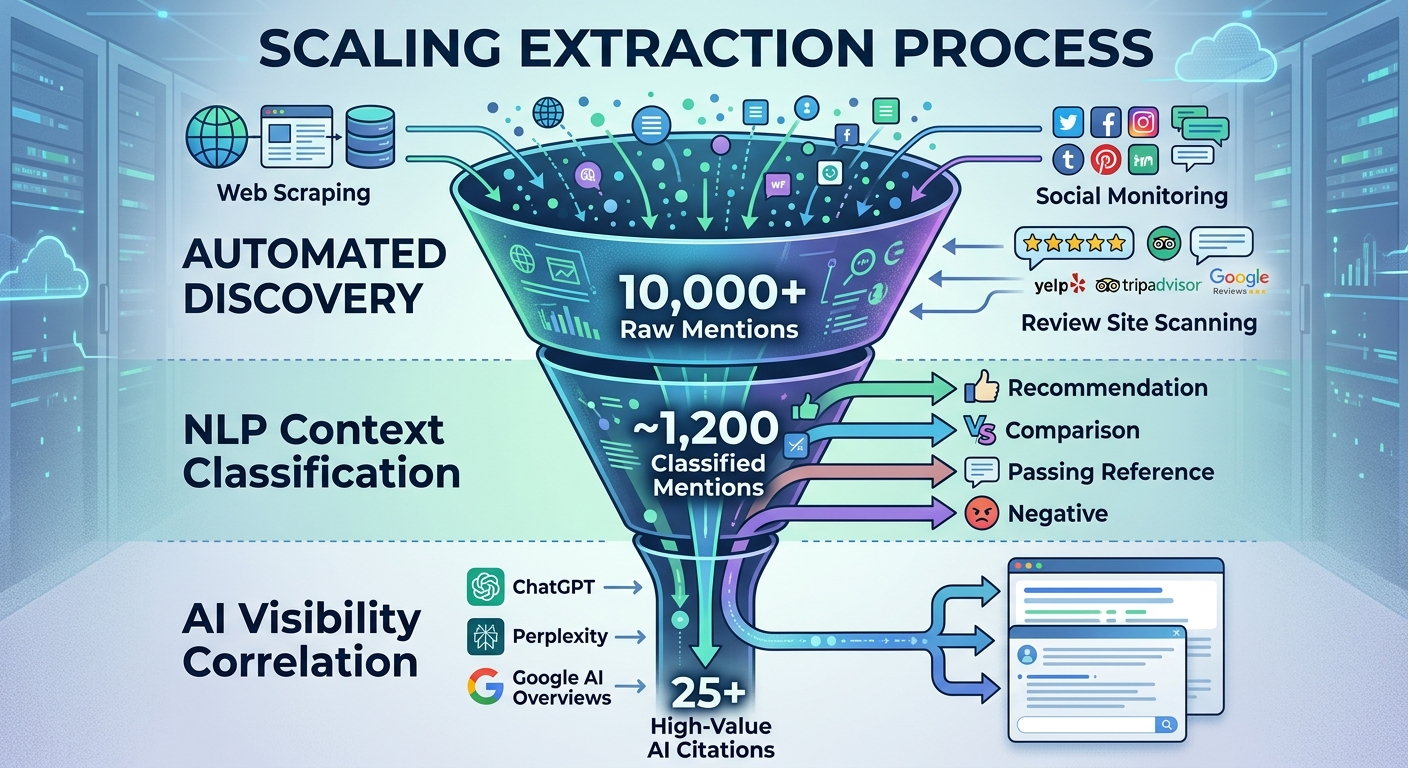

Scaling Extraction With AI-Powered Workflows

For teams monitoring multiple brands, products, and competitors simultaneously, manual and semi-automated approaches break down. Here’s where purpose-built workflows come in.

Modern extraction at scale combines three components:

Automated discovery. Web scraping and API-based monitoring tools continuously scan indexed pages, social platforms, forums, and review sites for your target terms. Brand mention monitoring platforms handle the volume without manual searches.

NLP-powered context classification. Instead of manually reading every mention, natural language processing models classify each mention by sentiment, context type, and category association. This is where AI actually helps — not in writing your content, but in processing the data extraction generates.

Cross-platform correlation. The most valuable insight comes from connecting web mention data to AI model mention tracking. When you can see that a new editorial placement on a specific publication led to your brand appearing in ChatGPT responses for a target prompt two weeks later, you’ve closed the feedback loop.
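To make the context classification component concrete, here is a deliberately simple keyword-rule stand-in. It is not an NLP model, and the rule list is an assumption you would tune or replace with a trained classifier. Its value is in showing the input and output shape: a context snippet in, one of the context labels from the workflow out.

```python
import re

# Ordered rules: the first matching label wins. Keyword lists are
# illustrative assumptions, not a validated taxonomy.
RULES = [
    ("negative", r"\b(avoid|worst|scam|disappointing|overpriced)\b"),
    ("comparison", r"\b(vs\.?|versus|compared (?:to|with)|alternative to)\b"),
    ("recommendation",
     r"\b(best|leading|recommend(?:ed|s)?|top[- ]rated|fastest-growing)\b"),
    ("passing reference", r"\b(such as|like|including|etc)\b"),
]


def classify_context(snippet: str) -> str:
    """Assign a coarse context label to a mention snippet."""
    lowered = snippet.lower()
    for label, pattern in RULES:
        if re.search(pattern, lowered):
            return label
    return "neutral"


examples = [
    "Acme Corp is a leading provider of cloud infrastructure for mid-market SaaS companies",
    "...and other companies like Acme Corp",
    "Acme Corp vs Rival Inc: which one scales better?",
    "Acme Corp announced quarterly results.",
]
labels = [classify_context(e) for e in examples]
print(labels)
```

Keyword rules misread sarcasm, negation, and anything phrased unusually, so sample the output by hand before trusting the labels in a report.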

BrandMentions tracks when major AI models update their training data and retrieval indexes, timing placements to maximize inclusion in each knowledge refresh cycle. That timing intelligence, paired with extraction data showing where gaps exist, is what turns mention building from guesswork into a repeatable system.

Turning Extraction Data Into an AI Visibility Strategy

Extraction is intelligence. Strategy is what you do with it.

Once you’ve extracted and scored your mentions — and your competitors’ — you should have clear answers to four questions:

Where are you well-mentioned? Which publications, categories, and contexts already associate your brand positively? Protect and reinforce these.

Where are you under-mentioned? Which category queries trigger competitor brands in AI answers but not yours? These are your highest-value placement targets.

Where are your mentions weak? Passing references, outdated product descriptions, or mentions on low-authority sites that dilute your overall mention quality. Address these through new editorial placements that overwrite weaker associations.

Where are your mentions inaccessible to AI? Great mentions on pages that block AI crawlers. Work with publishers to update their robots.txt, or build equivalent mentions on AI-accessible sources.

Your extraction data feeds directly into content strategy, SEO planning, and AI visibility campaigns. Without it, every placement decision is a guess. With it, you know exactly where to build next.

How Often Should You Extract and Audit?

The short answer: monthly at minimum, weekly for competitive categories.

AI search is moving fast. Google AI Overviews reshuffled their citation sources multiple times throughout 2025 and into 2026. ChatGPT’s web browsing and retrieval-augmented generation systems pull from fresher content than their base training data. Perplexity re-indexes sources rapidly.

A mention profile that looked strong in January can have gaps by April if competitors are building citations faster than you are. Set a 30-day extraction cadence for your own brand and a 90-day competitive benchmarking cycle.

For cornerstone queries — the 10–20 prompts and search terms that matter most to your pipeline — consider tracking mention changes weekly. These are the queries where brand mentions directly impact your AI visibility, and the competitive dynamics shift faster than you’d expect.

Frequently Asked Questions

What is the best way to extract brand mentions from website content?

The fastest free method is using Google search operators like intext:"brand name" -site:yourdomain.com to surface third-party pages mentioning your brand. For scale, use a brand monitoring platform such as Semrush Brand Monitoring or Ahrefs that automates discovery across web pages, blogs, forums, and news sites. Pair any extraction tool with manual context review — automated tools find mentions, but you need to classify the quality and AI relevance of each one.

Do unlinked brand mentions help with AI visibility?

Yes. AI models learn brand-category associations from text content regardless of whether a hyperlink exists. An unlinked mention of your brand on a high-authority editorial page still teaches LLMs what your company does and which category it belongs to. Ahrefs’ 2025 data showed a 0.664 correlation between branded web mentions (linked and unlinked combined) and AI Overview visibility. That said, linked mentions provide additional SEO value, so converting unlinked mentions to backlinks when possible gives you a dual benefit.

How many brand mentions do you need for AI models to cite your brand?

There’s no universal threshold — it depends on your category’s competitive density. A niche B2B SaaS category might require 30–50 high-quality editorial mentions to start appearing in AI recommendations. A competitive consumer category like “best CRM software” might need 200+ mentions across diverse, authoritative sources. Focus on mention quality and source diversity over raw volume. Ten mentions spread across ten different trusted publications create stronger AI associations than fifty mentions on a single domain.

Can I extract brand mentions from PDF content too?

Yes. Industry reports, whitepapers, and research papers published as PDFs often contain valuable brand mentions. Tools that crawl indexed PDFs can surface these, or you can use Google’s filetype:pdf "brand name" search operator. For a deeper dive on this specific format, see how to extract brand mentions from PDF content. Note that AI crawlers handle PDFs inconsistently — some models index PDF text, others skip it — so verify crawl accessibility.

How does extracting brand mentions differ from social listening?

Social listening monitors conversations on social platforms — X, Reddit, LinkedIn, TikTok — in real time. Brand mention extraction from website content focuses on the indexed web: editorial articles, blog posts, news sites, forums, and review platforms. For AI visibility, both matter. Social listening catches real-time sentiment and trending discussions. Web content extraction surfaces the mentions that feed AI training data and retrieval systems. A complete strategy uses both, but if you’re forced to prioritize one for AI visibility purposes, start with web content extraction — that’s what LLMs learn from.

The Extraction Advantage Nobody’s Talking About

Most companies treat brand monitoring as a PR function. Track coverage, measure sentiment, report to leadership. That’s fine — it’s just incomplete.

The teams pulling ahead in 2026 treat brand mention extraction as the intelligence layer underneath their entire AI visibility strategy. They know exactly where they’re mentioned, how those mentions are worded, which sources AI models actually crawl, and how their mention profile compares to every competitor in their category.

That level of clarity compounds. Each extraction cycle reveals new gaps. Each gap filled with a high-quality editorial mention strengthens AI associations. Each stronger association increases the probability of being recommended when someone asks ChatGPT, Perplexity, or Google AI Mode about your category.

Your competitors are building mentions. The question is whether you can see what they’re building — and where you need to build next.
