Your buyers stopped Googling. They’re asking ChatGPT which vendor to shortlist, asking Perplexity to compare your category, asking Claude to draft an RFP. If your SaaS doesn’t appear in those answers, you’re not in the deal. You’re not even in the conversation. AI visibility for B2B SaaS is the practice of getting your brand cited, recommended, and named inside AI-generated answers across ChatGPT, Perplexity, Claude, Gemini, and Google AI Overviews by building the entity authority and citation profile those models actually pull from. It’s not SEO with a new label. It’s a different distribution problem with a different solution.

Most B2B SaaS teams are still publishing for Google’s index while their buyers are getting answers from a different set of sources entirely. That gap is why a DerivateX 2026 benchmark found 44% of B2B SaaS companies score below 50 on AI presence. The gap closes, but only when you stop optimizing for the wrong surface.

What You’ll Learn

- Why traditional SEO metrics don’t tell you whether AI assistants recommend your SaaS

- The five citation sources that move ChatGPT, Perplexity, Claude, and Gemini for B2B SaaS queries

- A 90-day program that builds visibility without gaming the system

- The three measurement layers every SaaS marketing team needs in place by Q2

- How to spot the moment your category is “locked in”, and what to do if you missed the window

Why B2B SaaS Has the Worst AI Visibility Problem

B2B SaaS is uniquely exposed. Your buyers are technical, research-heavy, and early adopters of AI tools. G2’s 2025 buyer research found that half of B2B software buyers now start their evaluation inside an AI chat interface, not a search engine. Demo bookings, free trials, and RFPs all start downstream of an AI conversation you weren’t part of.

And SaaS sites have a self-inflicted problem on top of it. An analysis of nearly 3,000 sites found B2B SaaS had the highest rate of blocking LLM crawlers such as GPTBot, ClaudeBot, and PerplexityBot. Teams worried about content scraping locked themselves out of the recommendation engine that now drives shortlist creation. That decision made sense in 2023. It’s a strategic disaster in 2026.

Three structural realities make the problem worse for SaaS specifically:

- Long sales cycles, AI-influenced top of funnel. The first AI conversation happens months before a salesperson hears about the deal. By the time you’re in pipeline, the shortlist is already locked.

- Comparison-heavy buying behavior. Queries like “Best CRM for mid-market,” “Stripe alternatives,” and “Notion vs Asana” get answered by AI assistants pulling from a small handful of comparison sources. If you’re not in those sources, you’re not in the comparison.

- Category proliferation. New SaaS categories form fast. AI models lock in early authority signals during training. The brands establishing entity authority now will be the default recommendations for the next 18–24 months.

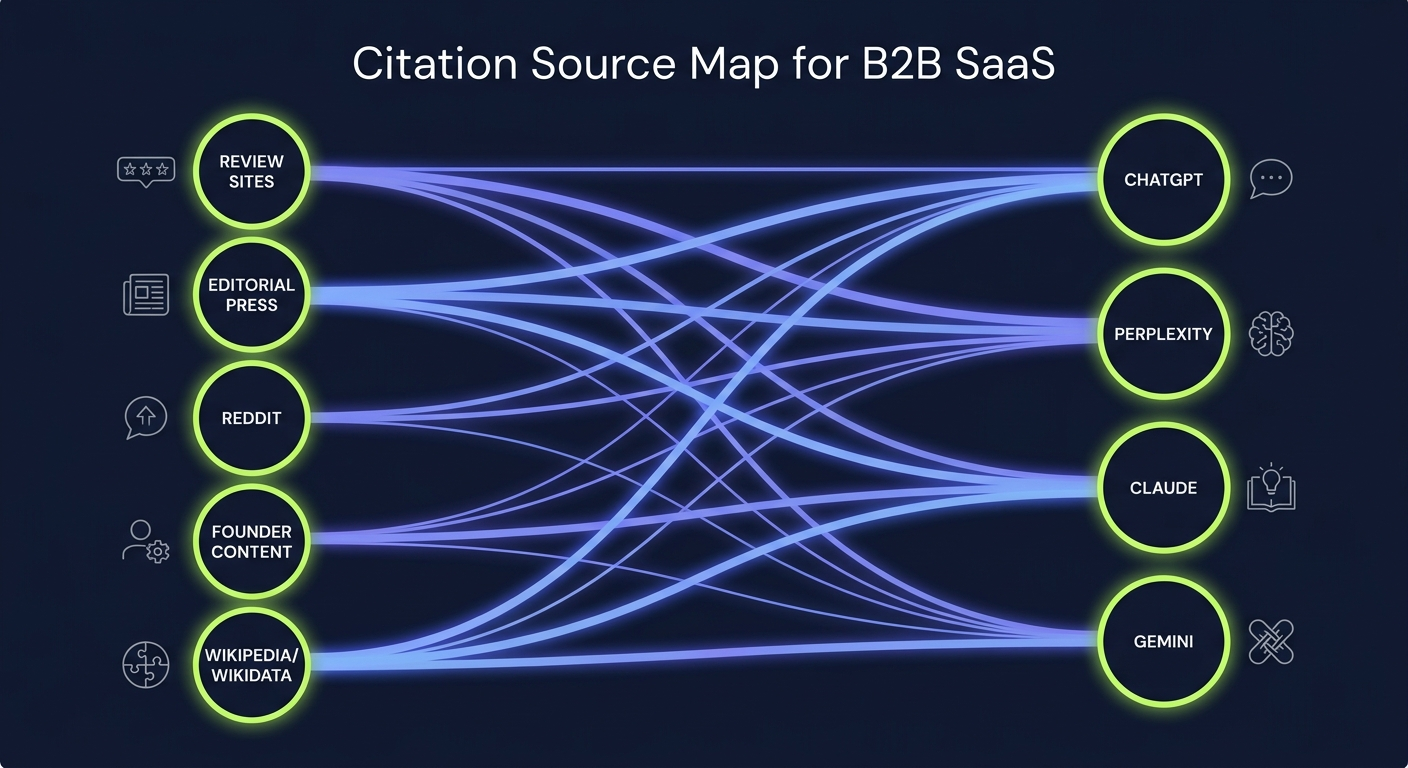

The Five Sources AI Models Actually Pull From for SaaS Queries

Stop guessing where to invest editorial energy. After auditing AI citations across hundreds of B2B SaaS queries, the same five source types appear over and over. Different platforms weight them differently, but if you’re absent from all five, you’re invisible everywhere.

| Source Type | Why AI Models Cite It | Highest Weight On |
|---|---|---|
| Independent comparison and review sites (G2, Capterra, TrustRadius, Software Advice) | Structured product data, verified user reviews, category taxonomy | ChatGPT, Gemini |
| Editorial publications with topical authority (TechCrunch, The Verge, vertical-specific trade press) | Editorial vetting, named authors, original reporting | Perplexity, Claude |
| Reddit threads and Stack Exchange answers ranking on Google | Authentic user perspective, problem-specific context | ChatGPT, Perplexity, Google AI Overviews |
| Founder and operator content on LinkedIn, Substack, and personal blogs | First-person experience, recency, named expertise | Perplexity, Claude |
| Wikipedia, Wikidata, and entity-graph references | Disambiguation, entity confirmation, sameAs relationships | Gemini, Google AI Overviews |

The pattern that matters: none of these are your owned blog. You can publish a thousand posts on your own domain and still be invisible if you’re not present across these five source types. SaaS marketing teams that figure this out early stop measuring blog traffic and start measuring presence on the surfaces that feed the models.

How to Measure Whether You’re Actually Visible

You can’t fix what you don’t measure. The mistake most SaaS teams make is treating AI visibility as a single metric. It isn’t. It’s three layers, and each one tells you something different.

Layer 1: Presence. Are You Cited at All?

Build a query library of 50–100 prompts your buyers would actually ask. Skip “what is [your category]”; that’s a definitional query. The ones that matter are evaluation prompts: “best [category] for [ICP segment],” “[competitor] alternatives,” “tools for [specific job-to-be-done],” “compare [you] vs [competitor].”

Run each prompt across ChatGPT, Perplexity, Claude, and Gemini once a week. Log whether your brand appears, where in the response, and what’s said about you. This is manual at first, and that’s fine: startups don’t need enterprise tooling for this. A spreadsheet and a recurring Friday block work.
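If the spreadsheet starts to feel loose, a small script can keep the weekly log consistent. The sketch below is one possible shape for that log, not a prescribed schema: the `PromptResult` fields, the `position` convention, and both helper functions are assumptions made for illustration.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields


@dataclass
class PromptResult:
    """One prompt run on one platform (field names are illustrative)."""
    run_date: str      # ISO date of the weekly run
    platform: str      # e.g. "ChatGPT", "Perplexity"
    prompt: str        # the evaluation prompt as typed
    brand_cited: bool  # did the brand appear anywhere in the answer?
    position: int      # 1 = first brand named; 0 = not cited
    notes: str         # what the answer said about the brand


def to_csv(results):
    """Serialize results to CSV text that pastes into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(PromptResult)])
    writer.writeheader()
    for result in results:
        writer.writerow(asdict(result))
    return buffer.getvalue()


def presence_rate(results):
    """Fraction of logged prompt runs in which the brand was cited."""
    if not results:
        return 0.0
    return sum(r.brand_cited for r in results) / len(results)
```

Each Friday run appends one `PromptResult` per prompt per platform. `presence_rate` over a week's rows gives the Layer 1 number.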

Layer 2: Share of Voice. How Often Versus Competitors?

Presence tells you if you’re there. Share of voice tells you if you’re winning. Across your prompt library, count mentions per brand and divide by total prompts. If you appear in 12 of 100 prompts and your top competitor appears in 47, you have a share-of-voice problem, not a content problem.

This metric exposes category dominance. In most B2B SaaS verticals, two or three brands eat 60%+ of AI mentions. The path to growth is taking share from those leaders, which means earning citations on the same sources they appear on.
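The share-of-voice arithmetic is simple enough to sketch in a few lines. This assumes you have already recorded, for each prompt, which brands the answer named; the function name and input shape are illustrative, not a standard.

```python
from collections import Counter


def share_of_voice(mentions_by_prompt):
    """Map each brand to the fraction of prompts whose answer named it.

    mentions_by_prompt maps a prompt string to the brands named in its answer.
    A brand counts once per prompt, however many times it is repeated.
    """
    total = len(mentions_by_prompt)
    if total == 0:
        return {}
    counts = Counter()
    for brands in mentions_by_prompt.values():
        counts.update(set(brands))
    return {brand: n / total for brand, n in counts.items()}
```

With the numbers from the example above, a brand named in 12 of 100 prompts gets 0.12 and a competitor named in 47 gets 0.47. Because several brands can appear in the same answer, the values do not need to sum to 1.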

Layer 3: Sentiment and Context. What’s Being Said?

Being mentioned is not the same as being recommended. AI models can cite you as “the expensive option,” “the legacy player,” or “complicated for small teams”, and that citation actively hurts pipeline. Track the qualifying language around every mention. Patterns reveal where your positioning is leaking into the training data through reviews, comparison articles, and Reddit threads you’ve never read.
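One rough way to track qualifying language is to count how often a watch list of phrases shows up in the text around your mentions. The sketch below assumes you have copied those snippets out of the AI answers by hand. The watch list is hypothetical, seeded with the examples above, and plain substring matching is crude: it will miss paraphrases and cannot tell "not expensive" from "expensive".

```python
from collections import Counter

# Hypothetical watch list; replace with the qualifiers that matter in your category.
QUALIFIERS = ["expensive", "legacy", "complicated", "hard to implement"]


def qualifier_counts(mention_snippets, qualifiers=QUALIFIERS):
    """Count how many snippets contain each qualifier (case-insensitive)."""
    counts = Counter()
    for snippet in mention_snippets:
        text = snippet.lower()
        for qualifier in qualifiers:
            if qualifier in text:
                counts[qualifier] += 1
    return counts
```

Run it over each week's snippets and watch which counts rise. A qualifier that keeps growing is a cue to go find the review or thread it is coming from.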

The 90-Day AI Visibility Program for B2B SaaS

Visibility compounds slowly, then suddenly. The brands cited consistently in 2026 started this work well before 2026. Here’s the program that actually moves the metric in 90 days: not the theoretical version, the operational one.

Days 1–14: Stop the Bleeding

- Unblock the crawlers. Audit your robots.txt. If GPTBot, ClaudeBot, PerplexityBot, Google-Extended, or Applebot-Extended are blocked, unblock them today. This is the single highest-leverage 30-minute task in the program.

- Publish an llms.txt file. Point AI crawlers at your highest-authority pages: pricing, docs, comparison pages, and customer stories. A well-structured llms.txt reduces ambiguity for the models trying to learn your category.

- Build the prompt library. 50–100 evaluation prompts across awareness, consideration, and decision stages. Run baseline measurements. This is your starting line.

- Audit your presence on the five sources. Are you on G2 with verified reviews? Capterra? Are you mentioned in any editorial publication this year? Do you have an active Reddit presence in your category subreddits? Is your Wikipedia entity properly disambiguated? Score yourself out of 5.
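The robots.txt audit in the first step can be scripted with Python's standard-library `urllib.robotparser`. This is a minimal sketch that checks a robots.txt body you have already downloaded. The `blocked_crawlers` helper and the `example.com` URL are placeholders, and the agent list is simply the one named above.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the checklist above.
AI_CRAWLERS = [
    "GPTBot",
    "ClaudeBot",
    "PerplexityBot",
    "Google-Extended",
    "Applebot-Extended",
]


def blocked_crawlers(robots_txt, url="https://example.com/", agents=AI_CRAWLERS):
    """Return the agents that this robots.txt body forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]
```

Pass in the text of your live robots.txt and a URL you care about, such as your pricing page. An empty list means none of the listed agents is blocked for that URL. The check covers robots.txt rules only, so blocks applied at the CDN or firewall level would need a separate look.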

Days 15–45: Fix Your Foundation

- Strengthen your entity graph. Wikidata entry, Crunchbase profile, LinkedIn company page, GitHub organization (if applicable), structured data on your homepage and product pages. Entity SEO work here pays back across every AI surface.

- Get reviewed on G2 and Capterra. Aim for 25+ verified reviews on your primary category page. Review sites are the single most-cited source type for ChatGPT and Gemini on “best [category]” queries.

- Publish two anchor comparison pages. “[You] vs [top competitor]” and “[Top competitor] alternatives”, written honestly, with real tradeoffs, not as marketing puffery. AI models can detect promotional framing and skip it.

- Restructure your content for extraction. Direct-answer paragraphs of 40–80 words after every H2. Comparison tables. Defined entities on first mention. This is the structural work that turns a published page into a citable source.
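For the structured-data part of the entity work, one common approach is a schema.org `Organization` block in JSON-LD with `sameAs` links to your other profiles. The sketch below only builds the JSON; the helper name is an assumption, and the profile URLs you pass in should be your real ones.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization JSON-LD string.

    same_as is a list of profile URLs (Wikidata, Crunchbase, LinkedIn, GitHub)
    that refer to the same company.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)
```

The output goes inside a `<script type="application/ld+json">` tag on the homepage. Keeping the `sameAs` list in one place also makes it easier to confirm that every profile in the entity graph points back to the same name and URL.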

Days 46–75: Build the Authority Layer

- Editorial placements on publications AI models trust. Two or three founder bylines or expert quotes in trade publications relevant to your category. Not press release distribution, real editorial. Editorial link building is where AI authority compounds.

- Activate Reddit and community presence. Find the 5–10 threads ranking on Google for your category’s evaluation queries. Show up as a real practitioner, answer questions, disclose affiliation, add value. AI models pull these threads heavily, especially Perplexity and ChatGPT.

- Operator content from your team. Your founder, your VP Product, and your head of CX all need a presence on LinkedIn or Substack with content tied to your category. First-person operator content is disproportionately weighted by Perplexity and Claude.

Days 76–90: Measure, Iterate, Systematize

- Re-run the prompt library. Compare against the Day 1 baseline. Look for movement on presence rate first, share of voice second, and sentiment third; they shift in that order.

- Identify the wins and double down. If a specific Reddit thread, review site presence, or editorial placement is producing citations, study what made it work and replicate.

- Hand off to operations. Who owns prompt monitoring weekly? Who owns review velocity? Who owns editorial outreach quarterly? AI visibility dies the moment it stops being a recurring operational rhythm.
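The Day 1 versus Day 90 comparison can be reduced to a per-platform delta. This sketch assumes you kept raw counts (prompts where you were cited, prompts run) for each platform at both points; the function name and input shape are illustrative.

```python
def presence_deltas(baseline, current):
    """Percentage-point change in presence rate per platform.

    Both arguments map platform name -> (prompts_cited, prompts_run).
    Platforms missing from either side, or with zero runs, are skipped.
    """
    deltas = {}
    for platform, (base_cited, base_run) in baseline.items():
        if platform not in current or base_run == 0:
            continue
        cur_cited, cur_run = current[platform]
        if cur_run == 0:
            continue
        base_rate = 100 * base_cited / base_run
        cur_rate = 100 * cur_cited / cur_run
        deltas[platform] = round(cur_rate - base_rate, 1)
    return deltas
```

Storing counts rather than percentages keeps the comparison honest if the prompt library grows between runs.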

Realistic Benchmarks by Funding Stage

The right target depends on where you are. A pre-seed startup expecting Stripe-level AI presence is going to burn out in week three. A Series C company tolerating 5% share of voice in their own category is leaving the market wide open for the next entrant.

| Stage | Realistic Presence Rate (90 days) | Share of Voice Target (12 months) | Primary Focus |
|---|---|---|---|
| Pre-seed / Seed | 5–15% | 3–8% | Founder content, Reddit, entity foundation |
| Series A | 15–30% | 8–15% | Review sites, comparison pages, first editorial wins |
| Series B | 30–50% | 15–25% | Editorial authority, sentiment management, category narrative |
| Series C+ | 50–70% | 25–40% | Defending share, owning category-defining queries, international expansion |

These ranges come from observed patterns across B2B SaaS verticals. They’ll vary by category maturity: a brand-new category has fewer competitors and faster movement; a saturated one (CRM, project management, marketing automation) compounds more slowly.

The Mistakes That Kill SaaS Visibility Programs

After watching dozens of these programs, the failures cluster around the same five mistakes. Most of them aren’t strategic; they’re operational.

Treating It as a Content Project Instead of a Distribution Problem

Publishing a hundred blog posts on your own domain doesn’t move AI visibility. The models aren’t drawing primarily on your blog; they’re drawing on the five source types above. If your investment is 90% owned content and 10% distribution, you’ve inverted the ratio. The compound returns live in distribution.

Measuring What’s Easy Instead of What Matters

Organic traffic is easy to measure. AI citations are harder. Teams default to the easy metric, then wonder why traffic is up but pipeline is flat. Share of voice across AI surfaces is the metric that correlates with shortlist appearance. Track it even if it’s manual.

Quitting at Month Two

The hardest weeks are 8 through 16. You’ve done the foundational work, citations are starting, but the curve hasn’t bent yet. Most teams kill the program here. The ones who push through see the inflection: citations compound because each new citation makes the next one easier for the models to surface.

Ignoring Sentiment

You’ll start getting cited and stop checking what’s being said. Then a sales call comes back with “they said you’re hard to implement” and you realize a Reddit thread from 2024 is now the dominant context AI models pull. Sentiment management is part of the program, not a separate function.

One Strategy Across All Platforms

ChatGPT, Perplexity, Claude, and Gemini cite differently. Perplexity weights recency and named experts. ChatGPT weights structured review data and high-traffic comparison content. Gemini integrates Google’s knowledge graph heavily. A single strategy serving all four equally serves none of them well. Pick your two priority platforms based on where your buyers actually are, then optimize for those first.

What’s Different About 2026

The window for establishing default-recommendation status is closing in mature B2B SaaS categories. Project management, CRM, email marketing, and design tools already have AI-native incumbents. The brands cited consistently in those answers today will be hard to dislodge for the next training cycle.

The categories still wide open: vertical AI tooling, AI infrastructure, developer experience, regulated industries (healthcare, fintech, legal tech), and any category that didn’t exist 24 months ago. If you’re in one of those, the work you do in 2026 sets the citation defaults for 2027 and 2028. If you’re in a mature category, the work is harder but the playbook is the same. You’re just taking share from incumbents instead of claiming uncontested ground.

One thing has clearly changed since 2024: AI assistants now show their citations. Buyers click through, evaluate the source, and form judgments about it. Being cited isn’t the end of the funnel; it’s the new top of it. Treat each citation as a first impression that needs to convert.

Frequently Asked Questions

How long does it take to see B2B SaaS brand mentions in AI search results?

Most B2B SaaS programs see first AI citations within 6–10 weeks of executing the foundational work: unblocking crawlers, fixing entity data, and publishing the first comparison pages. Consistent, measurable share of voice growth typically takes 6–9 months. Perplexity moves fastest because it weights recency; ChatGPT and Claude move slower because their citations come through training cycles and indexed comparison sources.

Should we still invest in traditional SEO if we’re focused on AI visibility?

Yes, but not for the reason you’d think. Traditional SEO matters because the sources AI models cite (review sites, Reddit threads, editorial publications) are themselves ranked by Google. If your G2 page or comparison article doesn’t rank, AI models are less likely to surface it. SEO is now an input to AI visibility, not a competing channel.

What’s the difference between AI visibility and generative engine optimization?

AI visibility is the outcome: your brand appearing in AI-generated answers. Generative engine optimization (GEO) is one set of practices that contributes to that outcome, focused mostly on structuring your owned content for AI extraction. AI visibility is broader: it includes GEO plus distribution, entity authority, review presence, and editorial citations across the sources AI models pull from.

Do we need an enterprise AI visibility tool to track this?

Not at Series A or earlier. A spreadsheet, a 50-prompt library, and a recurring Friday hour get you 80% of the value of enterprise tools. Move to dedicated tooling when prompt monitoring becomes too time-consuming to do manually, usually around Series B when the program scales across multiple categories or geographies.

What if our category doesn’t exist yet in AI training data?

This is actually an opportunity, not a problem. Categories that haven’t been “claimed” by incumbents in AI answers are the easiest places to establish default-recommendation status. Define the category clearly, publish category-defining content, get cited in editorial coverage, and seed the entity graph. The first three to five brands AI models learn in a new category usually become the long-term defaults.

How do we know which AI platform to prioritize?

Ask your last 20 closed-won and closed-lost prospects which AI tools they used during evaluation. The answer is usually concentrated: most B2B buyers default to one or two assistants. Optimize for those first. For most B2B SaaS in the US in 2026, ChatGPT and Perplexity are the two highest-leverage starting points; Claude and Gemini are usually phase two.

Can we just buy our way into AI citations?

No. Sponsored content, paid placements, and affiliate-style mentions are visible to AI models and weighted lower than editorial citations. The brands winning in AI search built genuine editorial authority through earned reviews, real expert content, and organic Reddit presence, not paid placements. The shortcut isn’t a shortcut.

Building the Function That Owns This

The teams seeing real AI visibility growth in 2026 aren’t running this as a side project. Someone owns it, usually a senior content lead, a head of growth, or a category marketing manager, and the program has a quarterly rhythm built into the marketing calendar. AI visibility isn’t a campaign. It’s a function. Treat it like one and the compounding starts. Treat it like a project and you’ll be back to invisible by Q3.

If you want to see where your B2B SaaS brand stands across ChatGPT, Perplexity, Claude, and Gemini today, and what would actually move the metric over the next 90 days, request a free AI visibility audit. We’ll run your prompt library, benchmark you against your top three competitors, and show you the specific source gaps that are keeping you out of the answer.