Tracking Google AI mentions means monitoring when and how AI-generated search results — particularly Google AI Overviews, AI Mode, and Gemini — name, cite, or recommend your brand in response to user queries. As of 2026, this is a distinct discipline from traditional rank tracking, and it requires different tools, metrics, and workflows.

Google AI Overviews now appear in roughly 47% of U.S. searches, according to a 2025 Botify and DemandSphere study reported by Search Engine Journal. AI Mode — Google’s fully conversational search experience — is expanding rapidly. Meanwhile, Google Search Console still doesn’t separate AI Overview clicks from standard organic clicks. If you’re relying on legacy SEO dashboards alone, you’re missing how the largest search engine on earth actually presents your brand to users in 2026.

This article walks through the specific metrics, tools, and processes you need to track your brand’s presence across Google’s AI surfaces — and what to do when the data shows gaps.

Key Takeaways

- Google AI mentions span three distinct surfaces — AI Overviews, AI Mode, and Gemini — each with different retrieval mechanisms and tracking requirements

- Mentions (brand named in AI text) and citations (domain linked as a source) are separate KPIs that measure different outcomes

- Google Search Console cannot isolate AI-generated clicks, making third-party tracking tools essential

- AI Overview content changes approximately 70% of the time between identical searches, so weekly tracking for directional trends beats one-time snapshots

- Manual tracking covers 15–25 queries per session — automated tools scale to hundreds or thousands daily

- Content structure, E-E-A-T signals, recency, and topical authority drive citation eligibility more than keyword density or backlink volume alone

What “Google AI Mentions” Actually Covers in 2026

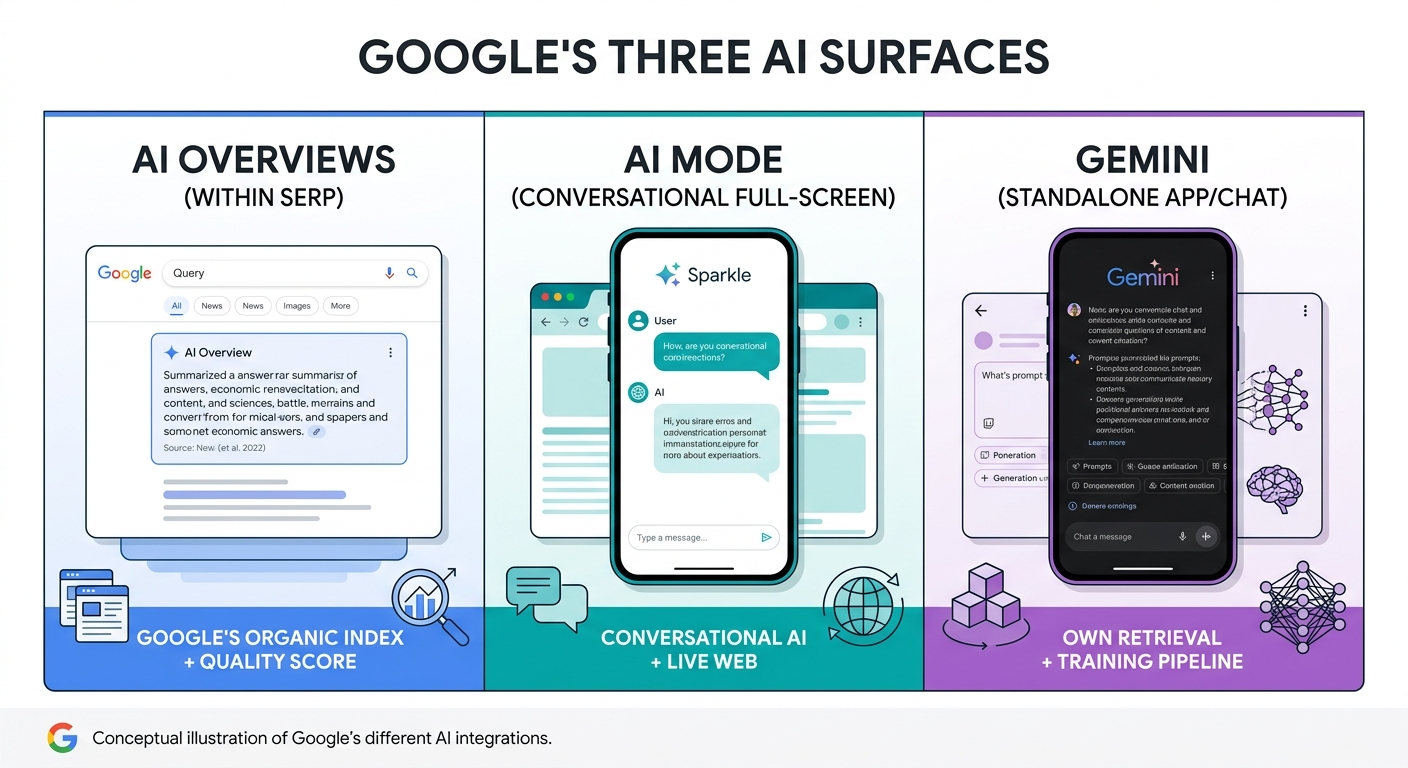

A Google AI mention is any instance where Google’s AI systems name or reference your brand within an AI-generated response. This happens across three separate surfaces, each powered by different retrieval logic.

AI Overviews

AI Overviews are AI-generated summaries that appear at the top of standard Google search results. They pull sources from Google’s organic search index and evaluate them using quality signals, including E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). An AI Overview synthesizes information from multiple web pages and cites them inline with source links.

AI Mode

AI Mode is a fully conversational search experience within Google Search. Users interact in a chat-like format, ask follow-up questions, and explore topics in depth. AI Mode generates responses that can reference brands and cite source URLs, but the conversational context means recommendations shift based on the dialogue flow.

Gemini (Standalone)

Google Gemini operates as a standalone AI assistant — separate from Google Search. It uses its own retrieval and generation pipeline, drawing from broader training data rather than Google’s search index exclusively. A brand cited in AI Overviews is not guaranteed to appear in Gemini, and the reverse is equally true.

Tracking Google AI mentions effectively means covering all three surfaces, because visibility in Gemini doesn’t predict visibility in AI Overviews, and neither predicts what AI Mode will recommend.

Why Google Search Console Can’t Show You This Data

Google does not separate AI Overview clicks or AI Mode interactions from standard organic clicks in Search Console or Google Analytics 4. Any click originating from an AI-generated citation appears as google / organic in GA4 — indistinguishable from a traditional blue-link click.

This creates a significant blind spot. You can rank first organically for a keyword, but if the AI Overview for that query cites a competitor instead of you, your effective visibility has dropped — and Search Console won’t flag it.

According to a 2025 DataSlayer analysis, organic click-through rates drop by 34.5% on average when AI Overviews answer a query. Some categories reported declines up to 79%. Without tracking your presence inside those AI-generated answers directly, you’re measuring traditional SERP performance while ignoring the surface that’s absorbing a growing share of user attention.

This limitation makes dedicated brand mention monitoring across Google’s AI surfaces a separate, essential workflow — not an optional add-on to your existing SEO dashboard.

Mentions vs. Citations: Two Metrics, Two Business Outcomes

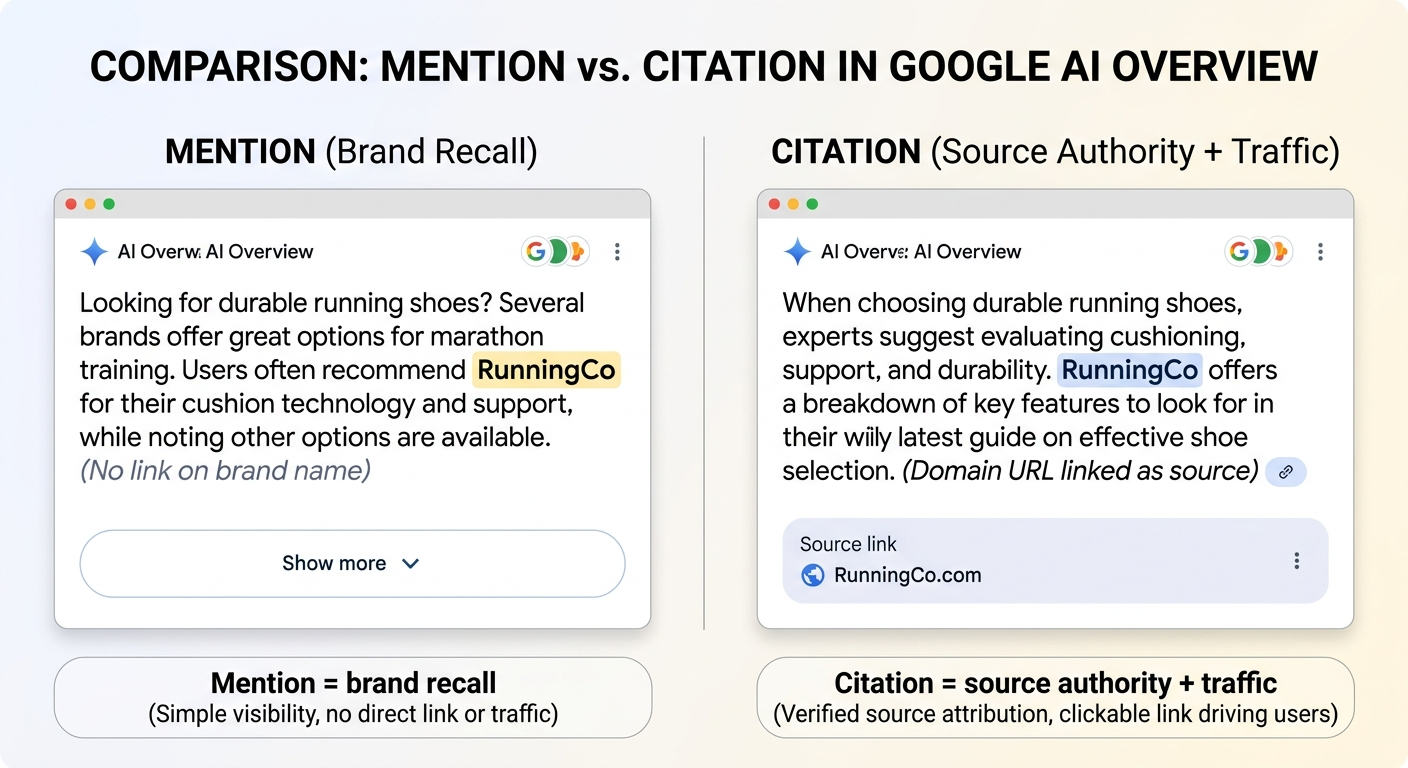

When tracking Google AI mentions, the distinction between a mention and a citation determines what you’re actually measuring.

A mention occurs when Google’s AI names your brand in the generated text — without linking to your domain. This indicates brand recall: the AI considers your brand relevant to the topic.

A citation occurs when the AI links to your domain as a source. This indicates source authority: the AI trusts your content enough to point users to it as evidence.

| Metric | Definition | What It Measures | Business Impact |
|---|---|---|---|
| Mention | Brand named in AI-generated text (no link) | Brand recall and category relevance | Awareness, preference, mindshare |
| Citation | Domain linked as an AI source | Source authority and content trust | Referral traffic, trust signals, conversions |

A brand can be mentioned without being cited, and cited without being prominently mentioned. Track both as separate KPIs. Mention rate tells you whether you’re in the conversation. Citation rate tells you whether you’re driving outcomes from it.
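
One way to keep the two KPIs separate when processing captured answers is to check the generated text and the source links independently. The sketch below assumes you already have an answer's text and its list of source URLs from a manual capture or a tracking tool. The function name `classify_appearance`, the brand "Acme", and the `example` domains are hypothetical placeholders.

```python
import re
from urllib.parse import urlparse


def classify_appearance(answer_text, source_urls, brand, domain):
    """Return (mentioned, cited) for one captured AI answer.

    Assumption: a "mention" is a whole-word, case-insensitive match of the
    brand name in the text, and a "citation" is any source URL whose host
    is the brand's domain or one of its subdomains.
    """
    pattern = r"\b" + re.escape(brand) + r"\b"
    mentioned = re.search(pattern, answer_text, flags=re.IGNORECASE) is not None

    domain = domain.lower()
    cited = False
    for url in source_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            cited = True
            break
    return mentioned, cited
```

Simple string matching like this will miss misspellings and product-line names, so treat it as a starting point and spot-check the results by hand.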

BrightEdge’s 2025 AI search study found that Google AI Overviews mention brands by name only about 6% of the time, compared to 99% in relevant ChatGPT queries. That selectivity means earning a Google AI mention carries disproportionate weight — and losing one matters more than most teams realize.

The Five Metrics That Define Your Google AI Visibility

Raw mention counts are a starting point, not a strategy. To make tracking actionable, measure these five metrics across each Google AI surface:

1. Inclusion Rate

The percentage of your tracked queries where your brand appears in the AI-generated response — whether as a mention or citation. This is your baseline visibility metric. Segment it by AI surface (Overviews, AI Mode, Gemini) and by intent type (informational, commercial, comparison).

2. Citation Coverage

The percentage of your appearances that include a clickable link to your domain. A high inclusion rate with low citation coverage means the AI knows your brand but doesn’t trust your content enough to send users to it. That’s a content quality signal, not a brand awareness problem.

3. Share of Voice

Your brand’s mention and citation share relative to competitors across the same set of tracked queries. Share of voice reveals competitive positioning — whether you’re the primary recommendation, one of several alternatives, or absent entirely.

4. Answer Placement

Where your brand appears within the AI response matters. Being named first in an AI Overview or recommended at the top of an AI Mode response carries more influence than a passing reference at the end. Weight earlier placements more heavily in your scoring.
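
As a rough illustration of weighting earlier placements more heavily, the sketch below scores each appearance as the reciprocal of its position (first = 1.0, second = 0.5, and so on) and averages over all tracked queries. The reciprocal weighting and the name `placement_score` are assumptions chosen for simplicity, not an established scoring standard.

```python
def placement_score(positions, total_queries):
    """Average reciprocal-position score across a tracked query set.

    positions: 1-based position of the brand in each answer where it
    appeared. Queries where the brand was absent contribute zero.
    total_queries: number of tracked queries, including the absences.
    """
    if total_queries <= 0:
        raise ValueError("total_queries must be positive")
    if any(p < 1 for p in positions):
        raise ValueError("positions are 1-based")
    return sum(1.0 / p for p in positions) / total_queries
```

A brand named first in one answer, second in another, and fourth in a third, out of four tracked queries, scores (1 + 0.5 + 0.25) / 4.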

5. Volatility

AI Overview content changes roughly 70% of the time between identical searches, according to 2026 Ahrefs data. Citations change 46% of the time. Track week-over-week shifts in which brands appear for each query to separate stable visibility from noise. High-volatility queries need more frequent monitoring.
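
Week-over-week shifts can be quantified in several ways. The sketch below uses Jaccard distance between the sets of brands seen in consecutive weeks for a single query. That choice of measure, and the function names, are assumptions made for illustration rather than the method behind the figures cited above.

```python
def jaccard_change(prev_brands, curr_brands):
    """Fraction of change between two brand sets: 0.0 = identical, 1.0 = disjoint."""
    prev, curr = set(prev_brands), set(curr_brands)
    union = prev | curr
    if not union:
        return 0.0  # no brands in either week, so nothing changed
    return 1.0 - len(prev & curr) / len(union)


def volatility_index(weekly_brand_sets):
    """Mean week-over-week change for one query, given brand sets in week order."""
    pairs = zip(weekly_brand_sets, weekly_brand_sets[1:])
    changes = [jaccard_change(a, b) for a, b in pairs]
    return sum(changes) / len(changes) if changes else 0.0
```

Queries with a consistently high index are candidates for more frequent monitoring.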

Together, these metrics give you a comprehensive brand mentions report that connects AI visibility to business outcomes — not just presence counts.
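
The first three metrics can be computed from a simple log of observations. The sketch below assumes each observation is a dict holding the set of brands mentioned and the set of brands cited for one query. The dict keys, the function name, and the share-of-voice definition used here (your appearances divided by all brand appearances in the set) are assumptions; commercial tools may define these differently.

```python
def visibility_metrics(observations, brand):
    """Compute inclusion rate, citation coverage, and share of voice.

    observations: list of dicts with keys "mentioned" and "cited",
    each a set of brand names seen in one AI answer.
    """
    total = len(observations)
    appearances = [
        o for o in observations
        if brand in o["mentioned"] or brand in o["cited"]
    ]
    cited = [o for o in appearances if brand in o["cited"]]
    # Count every brand appearance (mention or citation) across the set.
    all_appearances = sum(len(o["mentioned"] | o["cited"]) for o in observations)
    return {
        "inclusion_rate": len(appearances) / total if total else 0.0,
        "citation_coverage": len(cited) / len(appearances) if appearances else 0.0,
        "share_of_voice": len(appearances) / all_appearances if all_appearances else 0.0,
    }
```

Run it once per surface and per intent type to get the segmented view described under Inclusion Rate.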

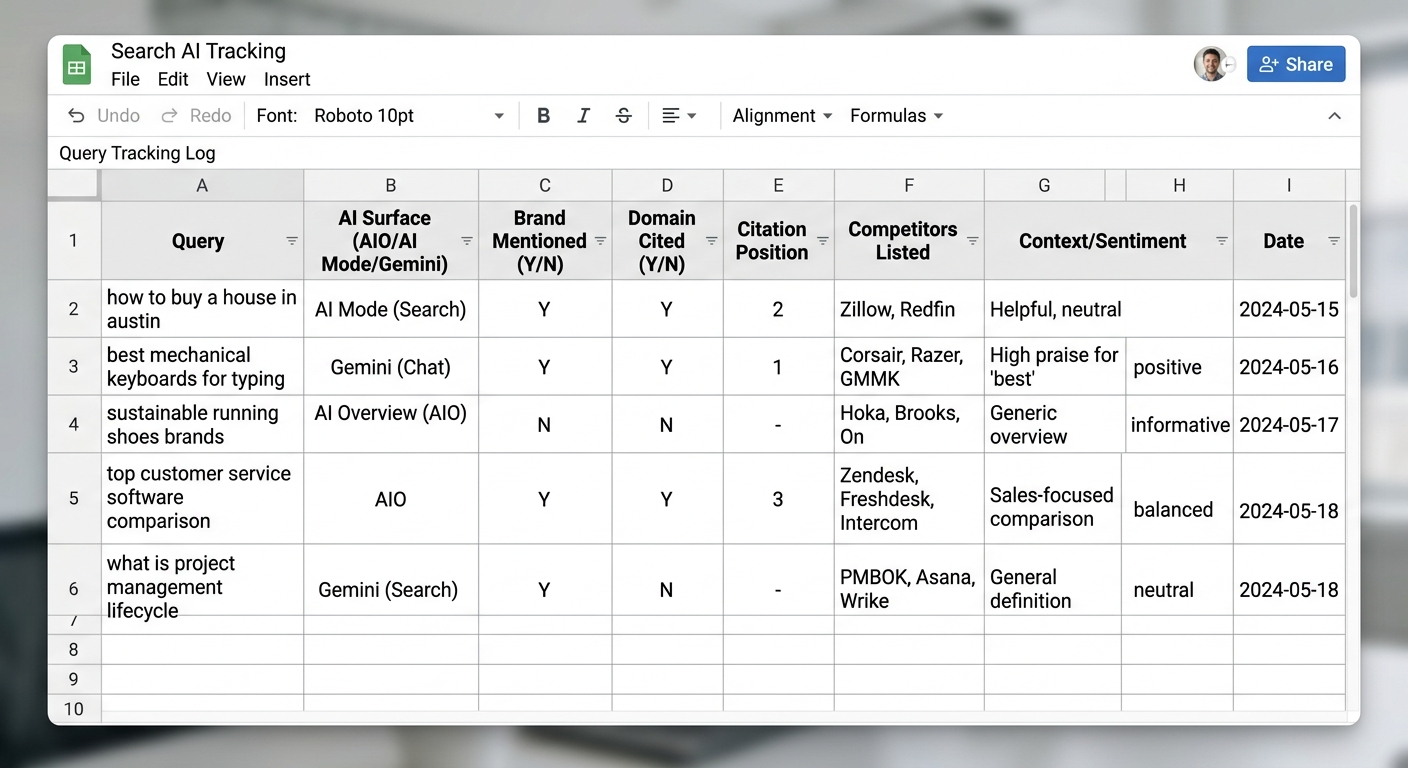

How to Manually Track Google AI Mentions

Manual tracking is free and useful for initial audits or small query sets. It does not scale beyond 15–25 queries per session, but it gives you direct observation of what users see.

Step 1: Build Your Query List

Compile 15–25 queries across four categories:

- Branded queries: “[your brand] review,” “[your brand] pricing,” “[your brand] alternatives”

- Category queries: “best [product type] for [use case],” “top [product category] 2026”

- How-to queries: “how to [solve problem your product addresses]”

- Comparison queries: “[your brand] vs [competitor],” “compare [product category] tools”

Pull phrasing from actual customer language — sales calls, support tickets, community forums, and “People Also Ask” boxes. Queries with 8+ words are seven times more likely to trigger an AI Overview, according to 2025 BrightEdge research.

Step 2: Search in Incognito Mode Across Devices

Open Google in an incognito or private browser window. Search each query and check whether an AI Overview or AI Mode response appears. Test from both mobile and desktop — AI Overview appearance rates differ by device. Test from 2–3 geographic locations if your audience is distributed.

Step 3: Document Your Findings

For each query where an AI response appears, record:

- Whether your brand is mentioned in the AI text

- Whether your domain is cited (linked) as a source

- Your citation position (first, second, third source)

- Which competitors appear alongside you

- The context in which your brand is described (positive, neutral, caveat-laden)

Run each query 2–3 times per session. AI Overview appearance can vary — log the frequency of your brand’s presence, not just a single observation.
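
If you log each run as its own row, the presence frequency falls out of a simple count. The sketch below is one possible record layout; the `Observation` fields and the function name are hypothetical and can be trimmed or extended to match the spreadsheet columns you use.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Observation:
    query: str
    device: str            # e.g. "desktop" or "mobile"
    ai_answer_shown: bool  # did an AI Overview or AI Mode response appear?
    brand_mentioned: bool
    domain_cited: bool


def presence_frequency(observations):
    """Per query, the share of runs with an AI answer in which the brand appeared."""
    runs = defaultdict(int)
    hits = defaultdict(int)
    for o in observations:
        if not o.ai_answer_shown:
            continue  # no AI response on this run, nothing to score
        runs[o.query] += 1
        if o.brand_mentioned or o.domain_cited:
            hits[o.query] += 1
    return {q: hits[q] / runs[q] for q in runs}
```

Queries that never triggered an AI response are left out of the result instead of being scored as zero, which keeps "no AI answer" separate from "AI answer without your brand".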

Step 4: Repeat Weekly

Enter results in a shared spreadsheet and repeat weekly. Single snapshots are unreliable given AI answer volatility. Weekly tracking over 4–6 weeks reveals directional trends — whether your visibility is improving, declining, or stable for each query cluster.
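
To turn the weekly spreadsheet into a directional read, one option is a least-squares slope over the weekly inclusion rates. The sketch below refuses to label a trend with fewer than four weeks of data. The function name and the `tolerance` of 0.05 per week are arbitrary assumptions you should tune to your own data.

```python
def trend_direction(weekly_rates, min_weeks=4, tolerance=0.05):
    """Classify a series of weekly rates (0.0 to 1.0) as improving, declining, or stable."""
    n = len(weekly_rates)
    if n < max(min_weeks, 2):
        return "insufficient data"
    mean_x = (n - 1) / 2
    mean_y = sum(weekly_rates) / n
    # Ordinary least-squares slope of rate against week index.
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(weekly_rates))
    den = sum((i - mean_x) ** 2 for i in range(n))
    slope = num / den
    if slope > tolerance:
        return "improving"
    if slope < -tolerance:
        return "declining"
    return "stable"
```

Apply it per query cluster so that one noisy query does not hide the direction of the group.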

Pro Insight: Manual tracking is most valuable during the first two weeks of any new AI visibility initiative. It builds intuition for how Google’s AI surfaces treat your brand and competitors before you invest in automated tooling. Once you’ve established baseline patterns, transition to automated tracking for scale.

Automated Tracking: Tools Built for Google AI Monitoring

Manual tracking covers a fraction of your keyword universe. Automated tools monitor hundreds or thousands of queries daily, capturing front-end AI responses as users actually see them — not API approximations.

What to Look for in a Google AI Tracking Tool

Not all AI visibility tools track the same surfaces or metrics. When evaluating options, check whether the tool covers:

- AI Overviews, AI Mode, and Gemini — not just one surface

- Mentions and citations separately — many tools blend them into a single score

- Front-end capture — API outputs can differ from what users see in the browser

- Citation position and source URLs — not just a binary “present/absent” flag

- Competitor benchmarking — share of voice requires tracking the same prompts for rival brands

- Historical data — trend analysis needs weekly snapshots stored over time

Several platforms now offer some combination of these features. SE Ranking tracks AI Overview presence alongside traditional keyword rankings. Otterly.AI monitors mentions across multiple AI platforms including Google AI Overviews. Rankability tracks AI Mode and AI Overviews with source citation data. Each tool has different strengths depending on your monitoring scope.

For a broader comparison across AI platforms beyond Google — including ChatGPT and Perplexity — see the best ways to track brand mentions in AI search.

Key Automated Metrics to Monitor

| Metric | What It Shows | Review Cadence |
|---|---|---|
| Inclusion rate | % of tracked queries where your brand appears | Weekly |
| Citation coverage | % of appearances with a domain link | Weekly |
| Share of voice | Your visibility vs. competitors per query set | Bi-weekly |
| Citation position | First, second, or third source in AI response | Weekly |
| Co-citation patterns | Which brands appear alongside yours | Monthly |
| Volatility index | Week-over-week change in brands per query | Weekly (high-volatility queries) |

Agencies like BrandMentions approach this from the content side — placing contextual brand mentions on 140+ high-authority publications that AI models actively index. This creates the editorial footprint that Google’s AI evaluates when deciding which brands to cite. Tracking those placements and their downstream effect on AI mention rates closes the loop between action and measurement.

How Google’s AI Selects Which Brands to Mention

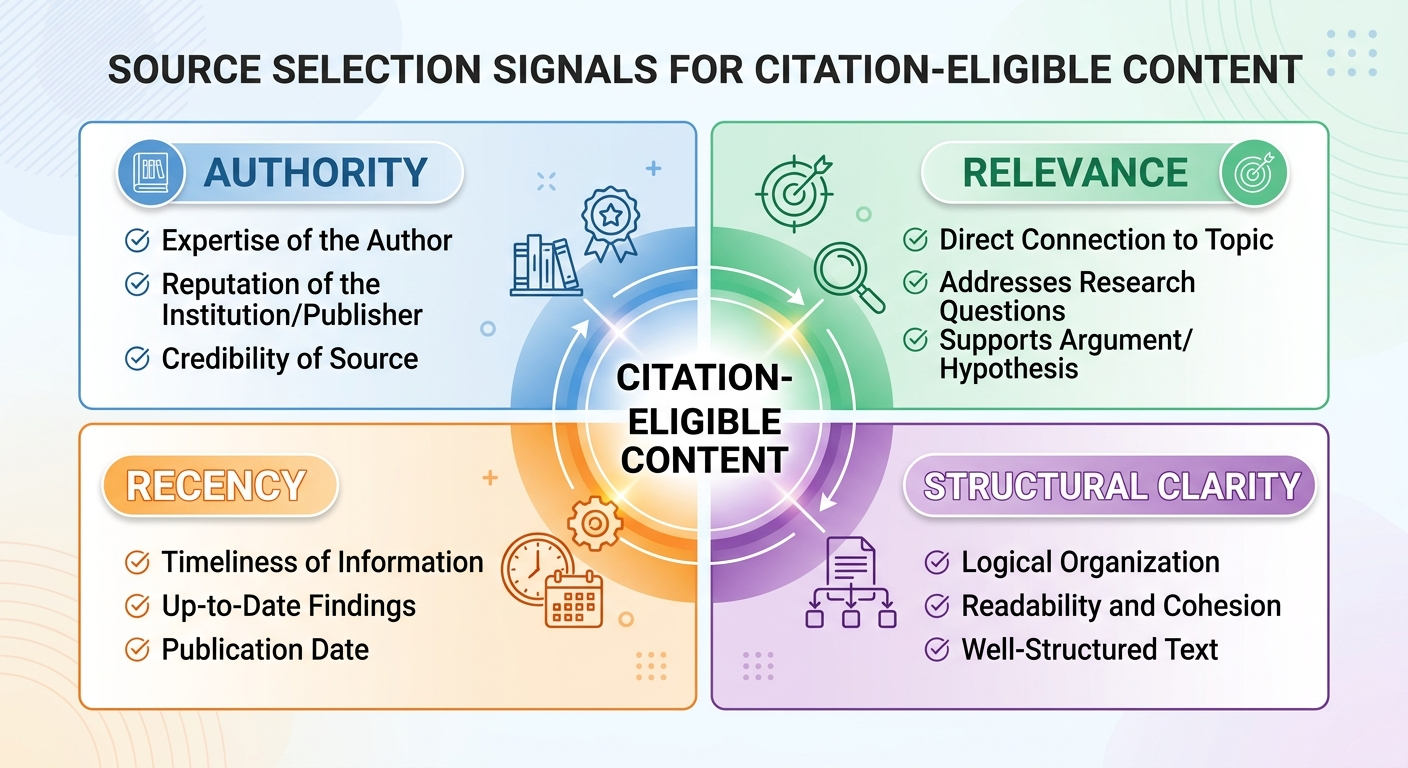

Understanding what drives source selection helps you prioritize the right fixes when your tracking data reveals gaps.

Google AI Overviews evaluates sources using four primary signals, as observed across industry research from BrightEdge, Ahrefs, and Google’s own documentation:

Authority

Domain authority and E-E-A-T signals weigh heavily. Google’s quality evaluation considers whether the content demonstrates real experience, subject-matter expertise, recognized authoritativeness, and trustworthiness. Brands with verified author bylines, transparent sourcing, and consistent editorial depth outperform anonymous or thin content.

Relevance

Topical authority matters more than single-page optimization. AI Overviews favors content from domains with comprehensive coverage of a topic across multiple related pages. A single blog post is less likely to earn a citation than a content cluster with a pillar page and supporting articles covering subtopics, comparisons, and FAQs.

Recency

Recently published or updated content with current data receives higher citation probability. Publish dates, last-updated timestamps, and references to 2026 data signal freshness. Stale content — especially in fast-moving categories — gets deprioritized.

Structural Clarity

Answer-shaped paragraphs, clear headings, structured data markup (Article, FAQPage, HowTo), and concise direct answers help Google’s AI extract and cite content accurately. Content that hedges with excessive qualifiers (“may,” “might,” “could”) receives fewer citations than content stating facts directly with evidence.

In campaigns across 67+ B2B companies, the BrandMentions team found that brands satisfying all four signals achieved AI citation rates significantly higher than those optimizing for only one or two. The compounding effect means partial optimization rarely produces consistent citations.

For a deeper look at how AI platforms across the board select which brands to reference, see how brand mentions impact visibility in AI search.

Building a Prompt Set That Mirrors Real User Behavior

Your tracking is only as good as the queries you monitor. A prompt set built around internal assumptions misses how your actual buyers phrase questions to AI.

Start from the Buyer Journey

Structure your prompt set around intent stages:

- Problem-aware prompts: “how to [solve problem your product addresses]” — these target users who know the problem but haven’t identified solutions yet

- Solution-aware prompts: “best [product category] for [specific use case]” — users evaluating options in your category

- Decision-stage prompts: “[your brand] vs [competitor],” “[your brand] reviews,” “is [your brand] worth it for [industry]” — users comparing specific solutions

Pull Language from Real Sources

Mine actual customer phrasing from:

- Sales call transcripts and demo recordings

- Support tickets and onboarding questions

- Reddit, Quora, and industry forums

- Google’s “People Also Ask” boxes for your target keywords

- Review sites where customers describe what they were looking for

Add Prompt Variants

AI responses shift based on phrasing. For each core prompt, create 2–3 synonym variations:

- “best project management software for remote teams”

- “top project management tools remote work 2026”

- “recommended project management platforms for distributed teams”

A working prompt set for most B2B brands runs 50–200 queries per market. Start with 30–50 core prompts and expand as your tracking workflow matures. Revisit quarterly to prune low-value queries and add new phrasing that surfaces in customer conversations or competitor content.
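
A prompt set organized by intent stage can be generated from templates so that variants stay consistent as the list grows. The sketch below is a minimal example; the stage names, template strings, and placeholder values are hypothetical and should be replaced with phrasing mined from your own customers.

```python
from itertools import product
from string import Formatter


def expand(template, values):
    """Fill every {placeholder} in a template with each combination of values."""
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    fields = list(dict.fromkeys(fields))  # unique placeholders, original order
    combos = product(*(values[f] for f in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]


def build_prompt_set(templates_by_stage, values):
    """Return de-duplicated (stage, prompt) pairs in a stable order."""
    prompts, seen = [], set()
    for stage, templates in templates_by_stage.items():
        for template in templates:
            for prompt in expand(template, values):
                if prompt not in seen:
                    seen.add(prompt)
                    prompts.append((stage, prompt))
    return prompts
```

Keeping the stage label next to each prompt makes it easier to segment inclusion rate by intent later.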

Tracking Cadence: How Often to Check and What to Prioritize

Not every query needs daily monitoring. Match your cadence to query importance and answer volatility:

| Query Type | Recommended Cadence | Rationale |
|---|---|---|
| Core commercial queries (top 20) | Weekly | Directly tied to pipeline; need fast feedback on gains or losses |
| Extended prompt set (50–100) | Bi-weekly | Broad coverage without excessive data noise |
| Long-tail and experimental | Monthly | Trend analysis and opportunity discovery |
| Post-campaign or content update | 3–5 days after launch | Measures time-to-inclusion and immediate impact |

Track your core prompt set weekly, review extended sets bi-weekly, and use monthly reviews for strategic planning. When you publish a major content update or earn a significant editorial placement, check relevant queries within 3–5 days to measure time-to-inclusion — how quickly Google’s AI surfaces reflect the change.
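
The cadence above can be expressed as a small scheduling check that tells you which queries are due on a given day. In this sketch the tier names and the `due_queries` function are hypothetical, and "monthly" is approximated as 30 days.

```python
from datetime import date, timedelta

# Assumed mapping of query tier to days between checks.
CADENCE_DAYS = {"core": 7, "extended": 14, "long_tail": 30}


def due_queries(queries, today):
    """Return the text of each query whose cadence interval has elapsed.

    queries: list of dicts with keys "text", "tier", and "last_checked"
    (a datetime.date, or None if the query has never been checked).
    """
    due = []
    for q in queries:
        last = q["last_checked"]
        interval = timedelta(days=CADENCE_DAYS[q["tier"]])
        if last is None or today - last >= interval:
            due.append(q["text"])
    return due
```

Post-campaign checks do not fit a fixed interval, so it is simpler to add those queries by hand 3–5 days after launch.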

Warning: Do not treat any single tracking snapshot as ground truth. AI Overview content volatility means a query that shows your brand today may not show it tomorrow — and vice versa. Decisions should be based on 4+ weeks of directional trend data, not individual observations.

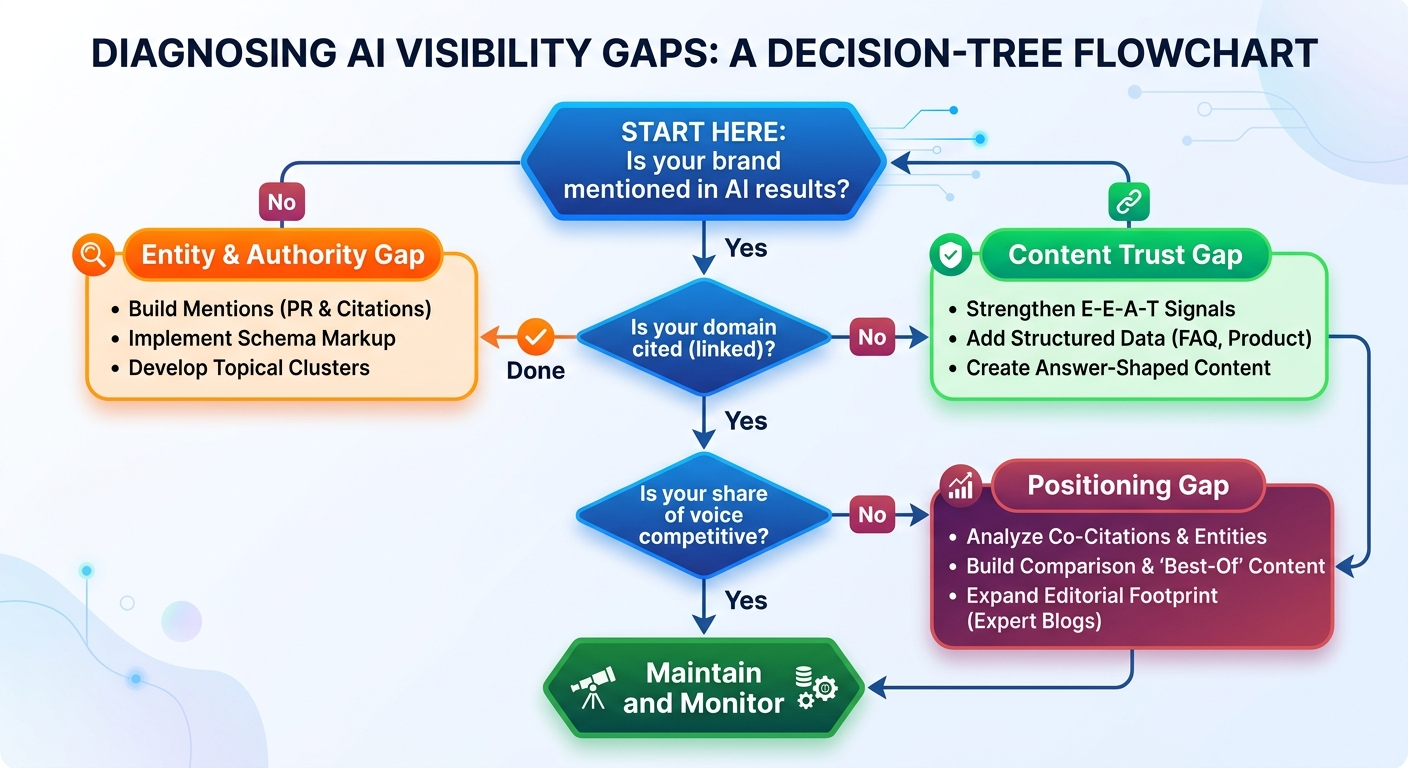

What to Do When Your Brand Is Missing from Google AI Results

Tracking data becomes valuable when it drives action. If your monitoring reveals low inclusion rates or absent citations, the fix depends on which gap the data exposes.

If Your Brand Isn’t Mentioned at All

This is an entity recognition and authority gap. Google’s AI doesn’t associate your brand strongly enough with the topic to include it.

Actions:

- Audit your brand mentions for SEO — are you referenced on the types of publications Google’s AI trusts?

- Build topical content clusters around the queries where you’re absent — pillar pages with supporting articles covering related subtopics

- Implement Organization and Product schema markup to reinforce entity identity

- Earn editorial mentions on high-authority publications in your category — not for links alone, but for the entity associations AI models build from those references

If You’re Mentioned but Not Cited

This is a content trust gap. The AI recognizes your brand as relevant but doesn’t trust your pages enough to link to them as evidence.

Actions:

- Strengthen E-E-A-T signals on target pages — named authors with credentials, transparent sourcing, original data

- Add structured data (FAQPage, HowTo, Article schema) to improve machine-readable clarity

- Write answer-shaped paragraphs that directly address the query within the first 200 words of each relevant page

- Update publish dates and content freshness signals — include 2026 data where possible

If You’re Cited but Competitors Dominate Share of Voice

This is a competitive positioning gap. You’re in the conversation but not winning it.

Actions:

- Analyze co-citation patterns — which competitors appear alongside you? What content do they publish that earns primary citation position?

- Build comparison content and definitive category guides that directly address the queries where competitors rank higher

- Expand your editorial brand mention footprint on domains that Google’s AI already cites as sources for your target queries
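
The three gap types above map onto a simple decision order: check inclusion first, then citation coverage, then competitive share. The sketch below encodes that order. The function name and the use of zero as the cutoff for "not mentioned" and "not cited" are assumptions, and you may prefer small non-zero thresholds in practice.

```python
def diagnose_gap(inclusion_rate, citation_coverage, share_of_voice, top_competitor_sov):
    """Name the first gap that applies, checked in the order the section describes."""
    if inclusion_rate <= 0.0:
        # Brand never appears: entity recognition and authority gap.
        return "entity recognition and authority gap"
    if citation_coverage <= 0.0:
        # Brand appears but is never linked: content trust gap.
        return "content trust gap"
    if share_of_voice < top_competitor_sov:
        # Brand is cited but a competitor holds more of the conversation.
        return "competitive positioning gap"
    return "no gap flagged"
```

Run the check per query cluster, since a brand can have a trust gap in one topic and a positioning gap in another.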

Tracking Across All Three Google AI Surfaces: A Unified Workflow

Most brands make the mistake of tracking only one Google AI surface — usually AI Overviews — and assuming it represents their overall Google AI visibility. In practice, each surface requires separate monitoring because they use different retrieval logic and can produce conflicting results for the same query.

AI Overviews

Track weekly using your core prompt set. Focus on queries that trigger AI Overviews (roughly 47% of U.S. searches as of 2025 data). Monitor both mention presence and citation position. Use the AI Overviews mentions tool comparison to choose the right platform for your needs.

AI Mode

AI Mode is expanding rapidly in 2026. Because it’s conversational, the same base query can produce different brand recommendations depending on follow-up questions. Track your inclusion in initial responses and — where tools support it — in multi-turn conversation flows.

Gemini (Standalone)

Gemini uses its own retrieval pipeline. Track it separately from AI Overviews and AI Mode. Brands that dominate in AI Overviews can have little or no presence in Gemini responses. For detailed Gemini tracking methods, see how to track brand mentions in Gemini.

Unifying the Data

Create a single dashboard or report that shows inclusion rate, citation coverage, and share of voice broken out by surface. This prevents the common error of celebrating an AI Overview citation while being completely invisible in AI Mode for the same query — a gap that grows more costly as AI Mode adoption increases.
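
A quick way to surface the cross-surface blind spots described here is to list every query where the brand is visible on at least one surface but missing from another. In the sketch below the surface keys and the `surface_gaps` function are hypothetical names, and `presence` is assumed to come from your own tracking data.

```python
SURFACES = ("ai_overviews", "ai_mode", "gemini")


def surface_gaps(presence):
    """Find queries with uneven visibility across surfaces.

    presence: {query: {surface: bool}}, where True means the brand was
    mentioned or cited on that surface. A missing surface counts as absent.
    Returns {query: [surfaces where the brand is missing]}.
    """
    gaps = {}
    for query, by_surface in presence.items():
        visible = [s for s in SURFACES if by_surface.get(s, False)]
        missing = [s for s in SURFACES if not by_surface.get(s, False)]
        if visible and missing:
            gaps[query] = missing
    return gaps
```

Queries where the brand is absent everywhere are excluded on purpose, because they point to a different problem than uneven coverage.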

For tracking beyond Google’s ecosystem — including ChatGPT, Perplexity, and Claude — see how to track brand mentions across AI search platforms.

Common Mistakes That Undermine Google AI Tracking

After reviewing how dozens of B2B marketing teams approach AI mention tracking, these are the errors that most frequently produce misleading data or wasted effort:

- Tracking only branded queries. Your most valuable AI mentions come from category and problem-solution queries — where unaware buyers discover brands for the first time. If you only track “[your brand] reviews,” you miss the queries that actually drive new pipeline.

- Treating all AI surfaces as one system. AI Overviews, AI Mode, and Gemini use different retrieval mechanisms. Appearing in one doesn’t mean appearing in the others. Track each separately.

- Relying on API outputs instead of front-end capture. API responses from AI platforms can differ from what users see in the browser. Tools that capture front-end rendered responses produce more reliable data.

- Checking once and drawing conclusions. AI answer volatility means a single check is a snapshot, not a trend. Minimum viable tracking requires 4+ weeks of weekly data before making strategic decisions.

- Ignoring unlinked mentions. A brand mentioned without a citation link still shapes user perception and purchasing decisions. Track unlinked brand mentions alongside citations.

- Not tracking competitors. Your inclusion rate means nothing without competitive context. If you appear in 40% of queries but your primary competitor appears in 75%, your visibility position is weak — regardless of the absolute number.

What Has Changed Since 2024–2025

Google AI tracking in 2026 looks materially different from where it stood even 12 months ago:

- AI Overview expansion: Trigger rates have grown from roughly 13% of queries in early 2025 (BrightEdge data) to approximately 47% by late 2025 (Botify/DemandSphere). As of 2026, AI-generated results are the norm for informational and commercial queries, not the exception.

- AI Mode rollout: Google’s conversational search experience has moved from limited testing to broad availability in 2026, creating a second major AI surface that requires dedicated tracking.

- Tool maturation: In 2024, most teams tracked AI mentions manually. In 2026, multiple platforms offer automated Google AI tracking with historical data, competitive benchmarking, and front-end answer capture.

- Mention selectivity: Google AI Overviews has remained highly selective about naming brands — the 6% brand mention rate reported by BrightEdge in 2025 underscores that earning a Google AI mention requires genuine authority, not just SEO tactics. This selectivity has made AI mention tracking a leading indicator of category authority rather than a vanity metric.

Frequently Asked Questions

Can I track Google AI mentions for free?

You can manually track 15–25 queries per session using incognito browsing at no cost. This works for initial audits and small query sets. Automated tracking tools that monitor hundreds of queries daily typically require paid subscriptions, though several offer free trials — including tools like Morningscore, Keyword.com, and Rank Prompt.

How often do Google AI Overviews change their cited sources?

AI Overview citations change approximately 46% of the time between identical searches, according to 2026 Ahrefs data. The underlying meaning of responses remains semantically stable (0.95 cosine similarity), but the specific brands and sources referenced shift frequently. This is why weekly tracking for directional trends produces more reliable insights than single observations.

Does ranking first on Google guarantee an AI Overview citation?

No. Ranking first organically increases citation probability, but Google AI Overviews evaluates sources on its own quality criteria, including structural clarity, E-E-A-T signals, and topical authority. Research indicates approximately 75% of cited sources rank within the top 12 organic positions, but ranking alone is not sufficient. A competitor with clearer, more structured content on the same topic can earn the citation instead.

Is tracking Google AI mentions different from tracking ChatGPT or Perplexity mentions?

Yes. Google AI Overviews pulls from Google’s organic search index. ChatGPT relies primarily on pre-trained data plus optional web browsing. Perplexity runs its own real-time search engine. Each platform uses different retrieval logic, which means your brand may appear in one and be absent from another. Track each platform separately using tools designed for that purpose. For ChatGPT-specific tracking, see the best tools for monitoring ChatGPT mentions.

What schema markup helps with Google AI citation eligibility?

Implement Organization, Product, FAQPage, HowTo, and Article structured data (JSON-LD format). Include critical properties: name, description, brand, author, dateModified, and mainEntityOfPage. Add sameAs links to Wikipedia, LinkedIn, and Crunchbase to help AI models disambiguate your entity. Schema markup doesn’t guarantee citations, but it improves the machine-readable clarity that Google’s AI evaluates during source selection.
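
As an illustration, Organization markup with sameAs links can be generated as JSON-LD and placed in a `<script type="application/ld+json">` tag on the page. The sketch below uses only schema.org properties named above plus `url`; the function name and all example values are hypothetical placeholders.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # Profile URLs that help disambiguate the entity.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(organization_jsonld(
        "Example Co",
        "https://www.example.com",
        "Example Co makes project management software.",
        ["https://www.linkedin.com/company/example-co"],
    ))
```

Validate the output with a structured data testing tool before deploying it, since a syntactically valid object can still miss properties a given rich result type expects.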

Your Next Step: Start Tracking This Week

Google AI mentions are a leading indicator of how your brand will perform as AI-generated search continues absorbing user attention from traditional blue links. The tracking workflow is straightforward — build a prompt set, choose your tools, establish a cadence, and act on the gaps the data reveals.

Start with 15–25 core queries this week, the range a single manual session can cover. Search them manually in incognito mode. Document whether your brand is mentioned, cited, or absent. Note which competitors appear. Do this for two consecutive weeks to establish a baseline. Then scale to automated tools for ongoing monitoring.

The brands that track AI visibility now are building the historical data and competitive intelligence that will compound as these surfaces grow. The brands that wait are making strategic decisions without seeing half the picture.

If you want to see where your brand stands across Google AI Overviews, AI Mode, and Gemini — alongside ChatGPT, Perplexity, and Claude — request a free AI visibility audit from BrandMentions.

Researched and drafted with AI assistance. Reviewed and edited by the BrandMentions editorial team.