Tracking brand mentions across AI search platforms means monitoring how ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews reference your brand, then measuring inclusion rate, citation coverage, and share of voice on a weekly cadence. Conventional rank trackers cannot capture this data because AI platforms synthesize answers instead of returning ranked links.

As of 2026, AI-powered search tools process billions of queries monthly. A significant share of B2B buyers now consult AI assistants before evaluating vendors. If your brand doesn’t appear in those AI-generated answers, you’re invisible during the most critical moments of the buying journey — regardless of how well you rank on Google.

This article walks you through a practical system for tracking brand mentions across every major AI search platform, from building your prompt set to choosing the right tools to interpreting what the data means for your pipeline.

Key Takeaways

- AI brand tracking measures mentions (brand named in the answer) and citations (your domain linked as a source) — both matter, but they indicate different things

- You need to monitor at least five platforms: ChatGPT, Google AI Overviews, Perplexity, Gemini, and Claude — each surfaces different brands for the same query

- Front-end capture (what users actually see) is more reliable than API responses, which can diverge from the live experience

- A weekly tracking cadence catches shifts fast enough to act before visibility erodes

- Share of voice across AI platforms is now a leading indicator of brand consideration — not a vanity metric

- Schema markup, editorial brand mentions on high-authority sites, and structured evidence pages are the three levers that move AI citations most

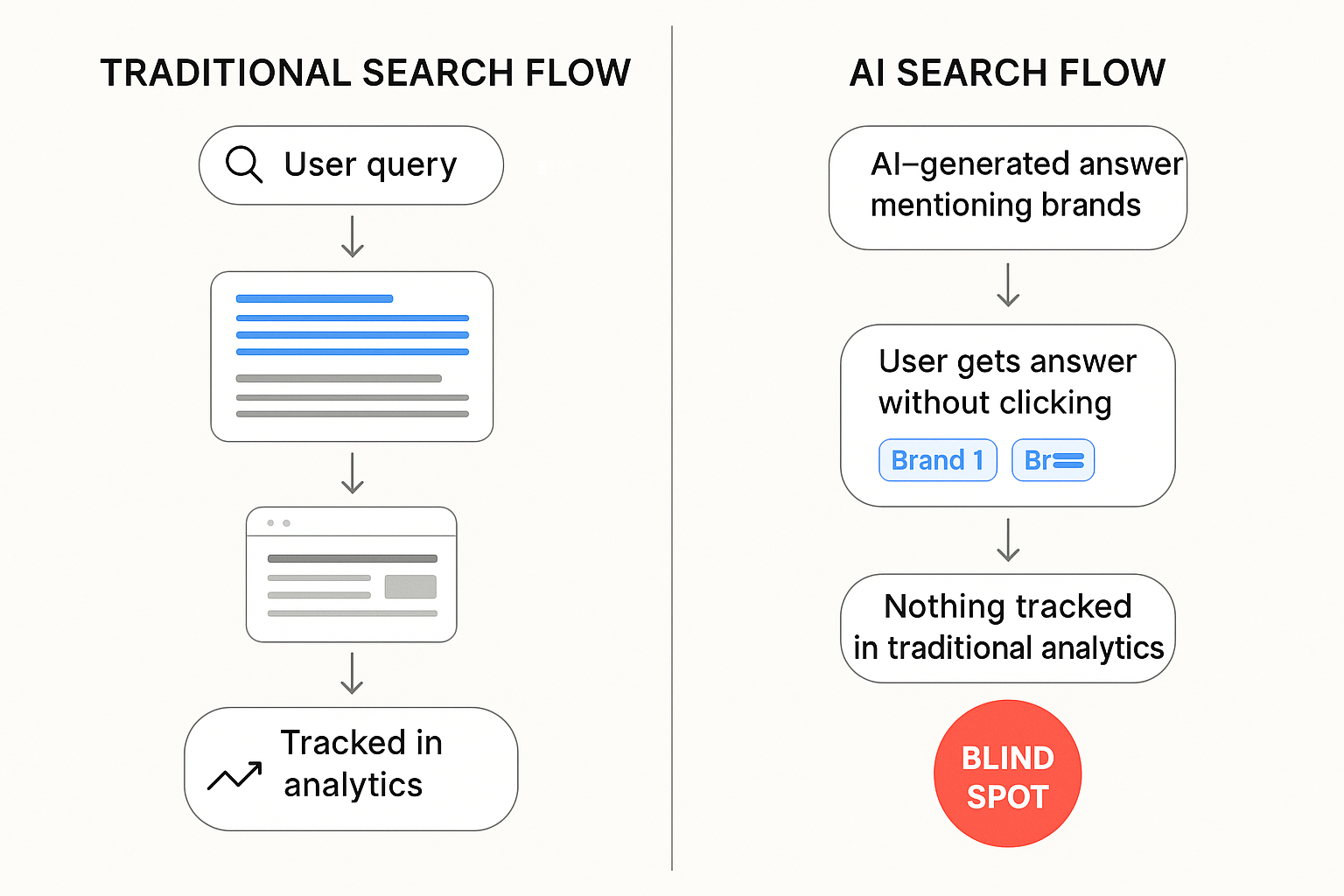

Why Traditional Analytics Miss AI Search Entirely

Google Analytics, Search Console, and conventional rank trackers were built for a click-based ecosystem. A user types a query, clicks a blue link, and lands on your site. You measure impressions, clicks, and position.

AI search doesn’t work that way.

When someone asks ChatGPT “What’s the best project management tool for remote teams?” the model generates a synthesized answer. It might name five brands, link to two sources, and never send the user to any website. Your analytics dashboard shows nothing — zero impressions, zero clicks — even if your brand was recommended to thousands of people that day.

According to a 2025 Gartner forecast, traditional organic search traffic is expected to decline by 50% by 2028 as AI-generated answers reduce click-through behavior. Meanwhile, research from BrightEdge published in 2025 found that Google’s AI Overviews now appear on over 13% of search results pages, with that percentage climbing steadily.

The gap is clear: if you only track what happens on your website, you’re measuring a shrinking fraction of your actual brand visibility.

What “Tracking” Actually Means in AI Search

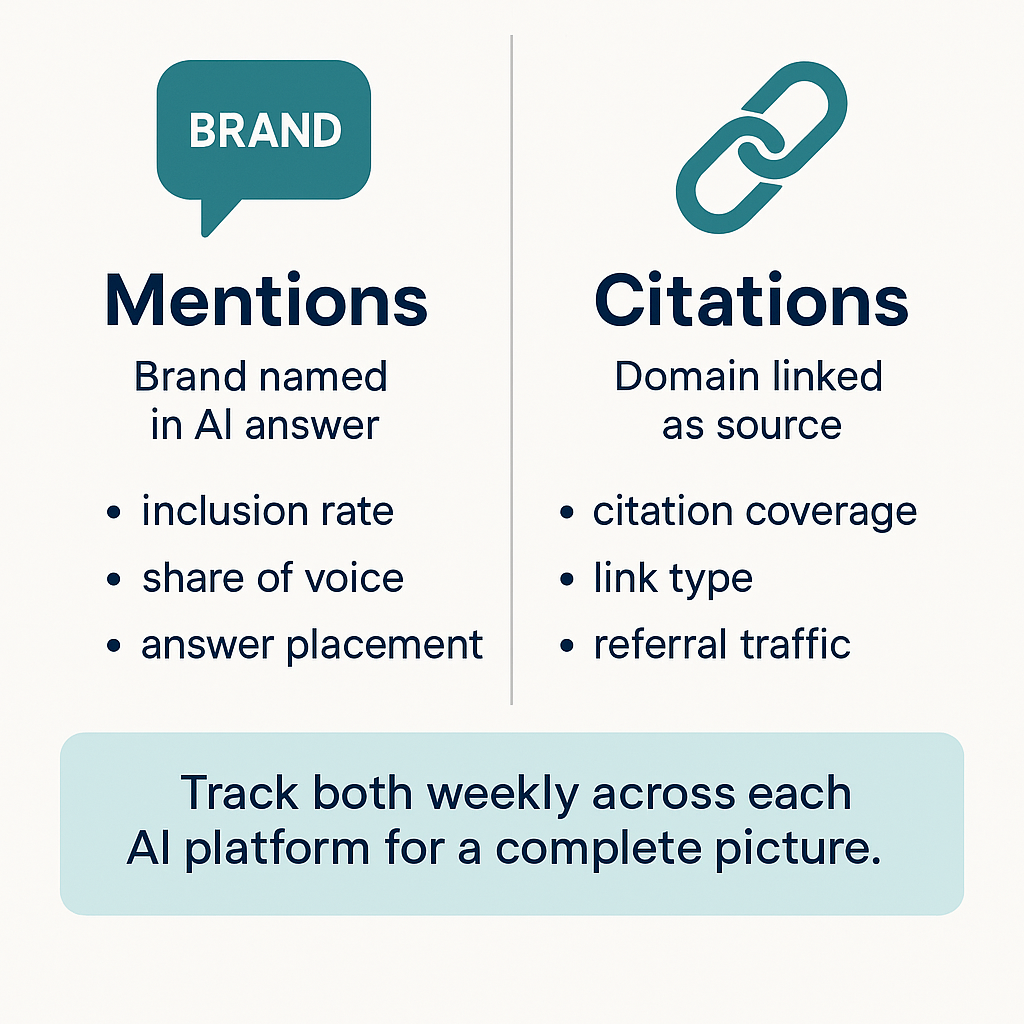

Tracking brand mentions across AI search platforms involves two distinct measurements that serve different purposes.

Brand mentions vs. citations

A brand mention occurs when an AI model names your company in its response — with or without linking to your site. A citation occurs when the AI model links to your domain as a source or reference within its answer.

Both matter, but they signal different things:

- Mentions indicate brand awareness and recommendation likelihood. They influence how users perceive your category authority.

- Citations drive referral traffic and build trust. They prove the AI model considers your content authoritative enough to source.

Research published in 2025 by BrightEdge found that citation behavior varies dramatically across platforms. In ChatGPT, only about 2 in 10 brand mentions include a clickable link. Perplexity averages over 5 citations per answer but mentions brands less frequently — roughly 1 in 5 answers include brand references. Google AI Overviews sit in the middle, blending brand recall with source attribution.

This means a brand could have strong mention rates on ChatGPT but almost no citation traffic — or strong citations on Perplexity but low mention frequency. You need to measure both metrics, per platform, to understand your actual AI visibility.

The five core metrics for AI brand tracking

Once you understand the mention/citation distinction, build your measurement framework around these five metrics:

- Inclusion Rate (IR): The percentage of relevant prompts where your brand is named or cited, segmented by AI platform and query intent

- Citation Coverage (CC): The percentage of your mentions that include a clickable link to your domain — broken down by link type (homepage, product page, blog post, third-party source)

- AI Share of Voice (SOV): Your brand’s mention and citation frequency compared to competitors across the same prompt set

- Answer Placement Score (APS): Where your brand appears within the AI response — first mentioned, middle of a list, or buried at the end. Earlier placement correlates with stronger recommendation signals.

- Volatility Index: Week-over-week change in which brands appear for a given prompt. High volatility means the AI model’s recommendations are unstable, and your position could shift quickly.

These five metrics give you a complete operational picture: Are we present? Are we attributed? Are we winning against competitors? How strongly are we recommended? How stable is our position?
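To make these definitions concrete, here is a minimal Python sketch of the first four metrics. It assumes each tracked answer has been logged as a record listing the brands named (in order of appearance) and the brands whose domains were linked. The `AnswerRecord` type, the brand names, and scoring placement as the reciprocal of the brand's position are illustrative assumptions, not a standard formula. The Volatility Index needs several weeks of data and is left out here.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One AI answer for one prompt on one platform (hypothetical log schema)."""
    prompt: str
    platform: str
    brands: list[str]  # brands named in the answer, in order of appearance
    cited_brands: set[str] = field(default_factory=set)  # brands whose domain was linked


def inclusion_rate(records: list[AnswerRecord], brand: str) -> float:
    """IR: share of answers that name or cite the brand."""
    if not records:
        return 0.0
    hits = sum(1 for r in records if brand in r.brands or brand in r.cited_brands)
    return hits / len(records)


def citation_coverage(records: list[AnswerRecord], brand: str) -> float:
    """CC: share of answers naming the brand that also link to its domain."""
    named = [r for r in records if brand in r.brands]
    if not named:
        return 0.0
    return sum(1 for r in named if brand in r.cited_brands) / len(named)


def share_of_voice(records: list[AnswerRecord], brand: str, competitors: list[str]) -> float:
    """SOV: brand mentions divided by mentions of the brand plus tracked competitors."""
    counts = {b: sum(1 for r in records if b in r.brands) for b in [brand, *competitors]}
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def answer_placement_score(records: list[AnswerRecord], brand: str) -> float:
    """APS (assumed formula): mean of 1/position where named; 1.0 means always first."""
    scores = [1 / (r.brands.index(brand) + 1) for r in records if brand in r.brands]
    return sum(scores) / len(scores) if scores else 0.0


# Hypothetical week of data for one brand and two competitors.
week = [
    AnswerRecord("best ai visibility tools", "chatgpt", ["RivalA", "OurBrand"], {"RivalA"}),
    AnswerRecord("best ai visibility tools", "perplexity", ["OurBrand"], {"OurBrand"}),
    AnswerRecord("top ai tracking platform", "chatgpt", ["RivalA", "RivalB"]),
    AnswerRecord("top ai tracking platform", "perplexity", []),
]
print(inclusion_rate(week, "OurBrand"))                        # 0.5
print(citation_coverage(week, "OurBrand"))                     # 0.5
print(share_of_voice(week, "OurBrand", ["RivalA", "RivalB"]))  # 0.4
print(answer_placement_score(week, "OurBrand"))                # 0.75
```

To segment by platform or intent cluster, filter the records first, for example `[r for r in week if r.platform == "chatgpt"]`, and pass the subset to the same functions.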

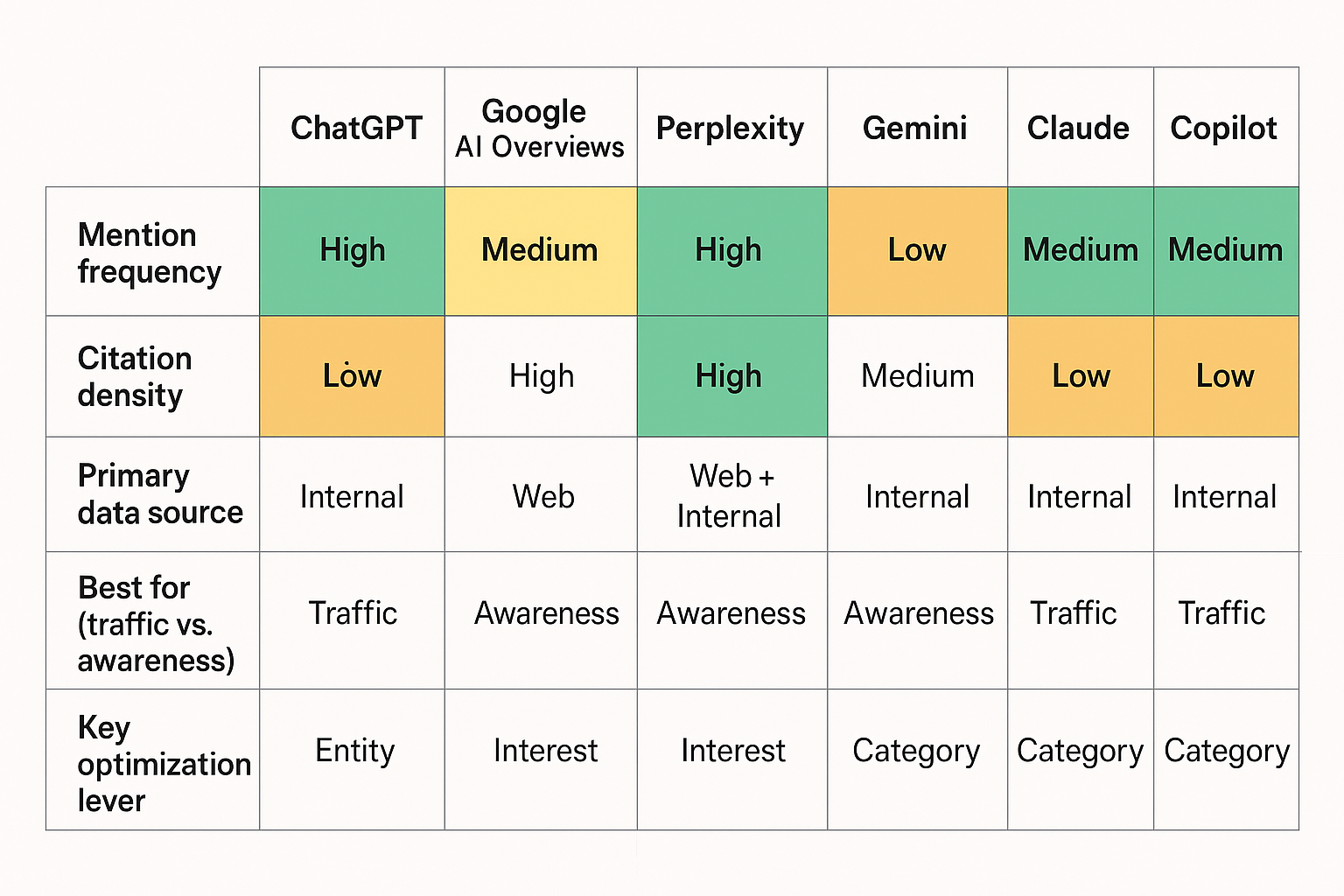

Which AI Platforms You Need to Monitor

AI search is fragmented. Each platform pulls from different data sources, uses different retrieval methods, and produces different brand recommendations for identical queries. Monitoring only one platform gives you an incomplete — and potentially misleading — view of your visibility.

As of 2026, these are the platforms that matter most for B2B brand tracking:

ChatGPT

ChatGPT remains the dominant AI assistant, with over 800 million weekly active users reported by OpenAI in late 2025. It processes a massive volume of commercial and research queries. Its browsing mode pulls real-time information, but its base model also draws on training data — meaning your brand needs both recent web presence and historical editorial footprint to appear consistently.

Google AI Overviews

AI Overviews appear on billions of Google searches and sit above traditional organic results. They heavily favor domains with strong traditional SEO authority — news sites, .edu and .gov domains, and well-established industry publications. If you’re already investing in brand mentions for SEO, those same signals often influence AI Overview inclusion.

Perplexity

Perplexity has grown rapidly as a research-oriented AI search engine, reaching approximately 22 million monthly active users by early 2026. It provides more citations per answer than any other platform, making it valuable for referral traffic. However, it names brands in its answers less often, so your visibility there depends heavily on whether your pages are among the sources it cites. Learn more about building brand mentions in Perplexity specifically.

Google Gemini

Google’s standalone AI assistant is growing its user base rapidly, particularly through integration with Android devices and Google Workspace. Brand mentions in Gemini tend to reflect Google’s Knowledge Graph, meaning structured data and entity clarity have outsized influence on whether your brand appears.

Claude

Anthropic’s Claude has gained traction among enterprise users and research-focused audiences. Claude tends to favor content with high informational density and clear expert sourcing.

Microsoft Copilot

Copilot surfaces AI answers across Bing, Microsoft Edge, and the Windows operating system. It draws heavily from Bing’s index, making Bing SEO signals more relevant here than on other AI platforms.

Pro Insight: Research from Fractl, cited by Search Engine Land in 2025, found that only 7.2% of domains get cited in both LLMs and Google’s AI Overviews. Most brands appear in one ecosystem or the other. This means your tracking — and your optimization strategy — must be platform-specific.

How to Build Your AI Tracking Prompt Set

The quality of your tracking depends entirely on the prompts you monitor. AI responses are query-specific: the same brand might appear for one prompt and be absent from a slight variation. A structured prompt set reduces guesswork and gives you consistent, comparable data over time.

Step 1: Map prompts to buyer intent stages

Start from your buyer journey and work backward. Group prompts into three intent clusters:

- Category prompts reach problem-aware users. Examples: “best tools to track AI brand mentions,” “top AI visibility platforms for B2B.” These tell you whether your brand is being recommended when buyers first explore the category.

- Comparison prompts reach solution-aware users. Examples: “BrandX vs. BrandY for AI monitoring,” “which AI visibility tool has the best Perplexity tracking.” These reveal whether you appear in head-to-head evaluations.

- Solution/How-to prompts reach users actively evaluating. Examples: “how to track brand mentions across AI search platforms,” “how to get cited by ChatGPT.” These show whether your content is considered authoritative enough to cite as guidance.

Step 2: Build 50–200 core prompts per market

Pull phrasing from real sources: sales call transcripts, support tickets, community forums, and “People Also Ask” boxes on Google. For each core prompt, create 2–3 synonym variations (“best” vs. “top” vs. “recommended”) and add geo or language variants if you serve multiple markets.

Assign each prompt a business value score based on revenue potential, funnel stage, and competitive intensity. This helps you prioritize which prompts to track weekly versus biweekly.
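A minimal Python sketch of this step: expand each core prompt into synonym and market variants, then attach a business value score. The weights, the per-stage values, and the 0–1 ratings for revenue potential and competitive intensity are hypothetical placeholders to replace with your own judgment. The `market` field here is only a tag, so translated phrasing for other languages would still need to be written by hand.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical weights; tune them against your own pipeline data.
WEIGHTS = {"revenue": 0.5, "funnel": 0.3, "competition": 0.2}
# Hypothetical value per intent cluster (comparison prompts sit closest to a decision).
FUNNEL_VALUE = {"category": 0.6, "comparison": 1.0, "solution": 0.8}


@dataclass
class Prompt:
    text: str
    intent: str  # "category", "comparison", or "solution"
    market: str  # locale tag, e.g. "en-US"
    value: float = 0.0


def expand(template: str, intent: str, synonyms: list[str], markets: list[str]) -> list[Prompt]:
    """Create one prompt per synonym/market pair from a template with an {adj} slot."""
    return [Prompt(template.format(adj=word), intent, market)
            for word, market in product(synonyms, markets)]


def business_value(revenue: float, intent: str, competition: float) -> float:
    """Weighted 0-1 score; revenue and competition are 0-1 ratings you assign."""
    score = (WEIGHTS["revenue"] * revenue
             + WEIGHTS["funnel"] * FUNNEL_VALUE[intent]
             + WEIGHTS["competition"] * competition)
    return round(score, 3)


prompts = expand("{adj} tools to track AI brand mentions", "category",
                 ["best", "top", "recommended"], ["en-US", "en-GB"])
for p in prompts:
    p.value = business_value(revenue=0.8, intent=p.intent, competition=0.9)

print(len(prompts))                        # 6
print(prompts[0].text, prompts[0].market)  # best tools to track AI brand mentions en-US
print(prompts[0].value)                    # 0.76
```

Sorting the full set by `value` then gives you a defensible order for splitting prompts into weekly and biweekly tiers.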

Step 3: Set your tracking cadence

- Weekly: Core prompts (your highest-value 50–100 queries). Fast feedback on gains, losses, and competitor movement.

- Biweekly: Extended prompt set (additional variations and long-tail queries).

- Monthly: Experimental and emerging prompts. Revisit the full prompt set quarterly to prune low-value prompts and add new phrasing you discover.

Store everything in a single source of truth — a spreadsheet, database, or tracking platform — with owners, cadence, and last-run dates clearly documented.
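If that single source of truth is a spreadsheet export or database table, checking which prompts are due can be as simple as the Python sketch below. The field names, the owner values, and treating "monthly" as 30 days are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Cadences from the plan above, in days ("monthly" approximated as 30 days).
CADENCE_DAYS = {"weekly": 7, "biweekly": 14, "monthly": 30}


@dataclass
class TrackedPrompt:
    text: str
    cadence: str               # "weekly", "biweekly", or "monthly"
    owner: str
    last_run: Optional[date]   # None means the prompt has never been run

    def is_due(self, today: date) -> bool:
        if self.last_run is None:
            return True
        return today - self.last_run >= timedelta(days=CADENCE_DAYS[self.cadence])


def due_prompts(registry: list[TrackedPrompt], today: date) -> list[TrackedPrompt]:
    """Return the prompts whose cadence says they should run today."""
    return [p for p in registry if p.is_due(today)]


registry = [
    TrackedPrompt("best tools to track AI brand mentions", "weekly", "seo-team", date(2026, 3, 2)),
    TrackedPrompt("how to get cited by ChatGPT", "biweekly", "content-team", date(2026, 3, 2)),
    TrackedPrompt("AI visibility tools for fintech", "monthly", "content-team", None),
]
print([p.text for p in due_prompts(registry, date(2026, 3, 9))])
# ['best tools to track AI brand mentions', 'AI visibility tools for fintech']
```

After each run, update `last_run` so the next check reflects what was actually executed.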

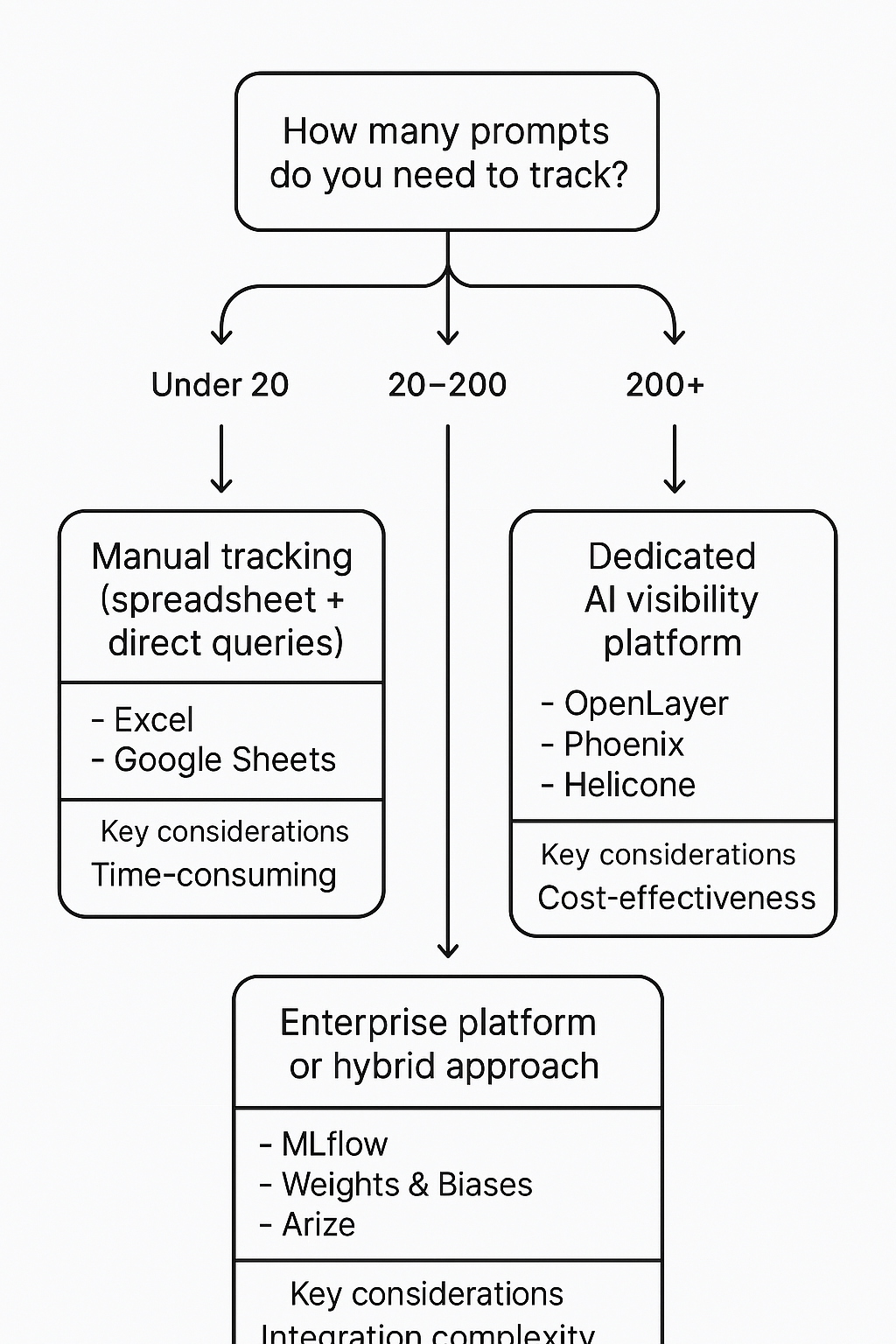

Choosing the Right Tracking Approach

You have three options for tracking brand mentions across AI platforms, and the right choice depends on your budget, team size, and how many prompts you need to monitor.

Manual tracking

Type your prompts directly into each AI platform, record the response, and log which brands are mentioned and cited. This works for small prompt sets (under 20 queries) and gives you the most accurate view of what users actually see.

The downside: it’s time-intensive, doesn’t scale, and AI responses can vary between sessions — making it hard to establish reliable baselines.

Best for: Initial exploration, sanity-checking tool data, and teams just starting with AI visibility tracking.

Dedicated AI visibility platforms

Specialized tools like SE Ranking’s AI Search Toolkit, Nightwatch, and others run structured prompts across multiple AI platforms and record mentions, citations, placement, and sentiment automatically. They provide dashboards for inclusion rate, share of voice, and competitive benchmarking.

Key evaluation criteria when choosing a platform:

- Platform coverage: Does it track all the AI engines your audience uses?

- Front-end capture: Does it capture what users actually see, or does it rely on API responses that can diverge from the live interface?

- Competitive benchmarking: Can you compare your visibility against named competitors on the same prompts?

- Historical data: Does it store past responses so you can measure trends and volatility?

- Citation detail: Does it differentiate between mentions and linked citations, and show which specific URLs are cited?

For a deeper comparison of available tools, see our breakdown of AI rank trackers for brand mentions.

Hybrid approach with traditional SEO tools

Platforms like Semrush and Ahrefs have added AI visibility modules that connect traditional SEO data with AI search performance. This approach works well if your team already uses these tools and wants to layer AI tracking onto existing workflows without adopting a completely new platform.

The tradeoff: AI-specific features are typically less granular than dedicated AI tracking platforms, but the convenience of a unified dashboard can be worth it for smaller teams.

Setting Up Your Tracking Dashboard

Raw data means nothing without a structured way to interpret it. Your dashboard should answer four questions at a glance:

- Are we present? (Inclusion Rate by platform and intent cluster)

- Are we attributed? (Citation Coverage by link type)

- Are we winning? (Share of Voice vs. top 3 competitors)

- How stable is our position? (Volatility Index, week over week)

Structure the executive view

Keep the top-level view on a single screen. Show IR, CC, SOV, and APS by platform, with trend arrows indicating weekly movement. Below that, list your top three gains, top three losses, and any active optimization initiatives.

This gives leadership a clear read on AI visibility performance without requiring them to interpret raw prompt-level data.

Build the operational layer

Below the executive view, create drill-down views by:

- Platform: Compare your performance on ChatGPT vs. Gemini vs. Perplexity — you’ll often find significant differences

- Intent cluster: Separate category, comparison, and solution prompts to see where you’re strong and where you have gaps

- Competitor: Track which competitors appear for prompts where you don’t — these are your immediate optimization targets

Log the evidence

For every tracking run, store the full answer text, visible links, brand names mentioned, placement order, model version, locale, and timestamp. This evidence layer makes your data auditable and lets you explain trend shifts when AI platforms update their models or retrieval methods.

Without stored evidence, you can’t distinguish between a real visibility loss and normal AI response variability.
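One lightweight way to keep this evidence layer is an append-only JSON Lines file with one record per answer. The Python sketch below assumes the fields listed above; the `EvidenceRecord` name, the file path, and the field names are hypothetical, and `model_version` is whatever the platform displays, if anything.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class EvidenceRecord:
    prompt: str
    platform: str
    model_version: str           # as shown by the platform; "unknown" if not shown
    locale: str
    answer_text: str             # full answer, verbatim
    visible_links: list[str]
    brands_in_order: list[str]   # list order doubles as placement order
    captured_at: str = field(    # UTC timestamp in ISO 8601
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def append_evidence(path: str, record: EvidenceRecord) -> None:
    """Append one tracking result as a single JSON line; never overwrite history."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record), ensure_ascii=False) + "\n")


def load_evidence(path: str) -> list[EvidenceRecord]:
    """Read every stored record back, e.g. to audit a trend shift."""
    with open(path, encoding="utf-8") as f:
        return [EvidenceRecord(**json.loads(line)) for line in f if line.strip()]
```

Call `append_evidence("evidence.jsonl", record)` once per answer. Because the file is only ever appended to, you can later reload every run and check whether a drop coincided with a new `model_version`.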

Interpreting Your Results: What the Data Tells You

Data without interpretation is just noise. Here’s how to read the most common patterns in your AI tracking dashboard.

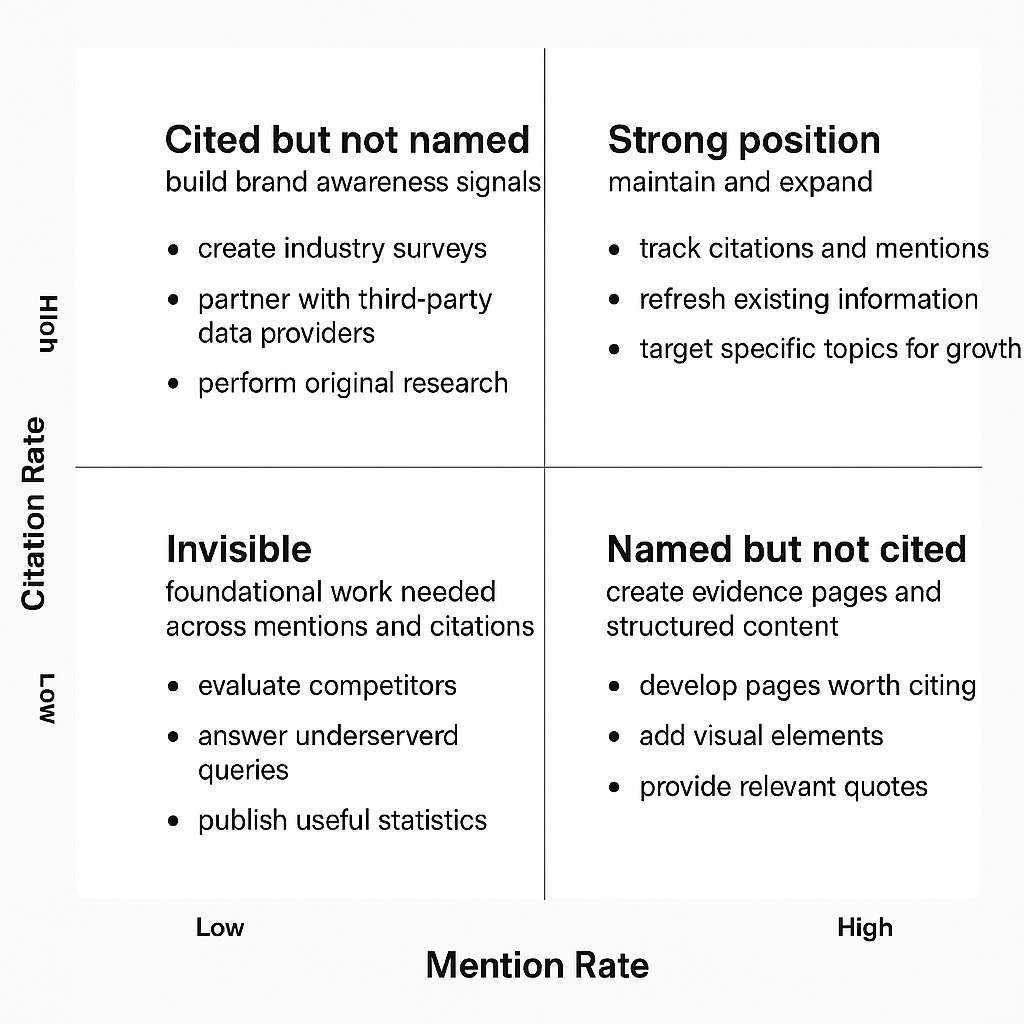

High mentions, low citations

AI platforms name your brand frequently but rarely link to your site. This typically means your brand has strong awareness signals (media coverage, social discussion) but your owned content isn’t structured for citation. Fix this by creating evidence pages — comprehensive guides, comparison matrices, and data-rich resources with clear headings, FAQ schema, and transparent sourcing that AI models can easily reference and link to.

Strong on one platform, invisible on others

Each AI platform draws from different data sources and applies different retrieval logic. If you’re visible in ChatGPT but absent in Perplexity, investigate which sources Perplexity cites for those queries — then create or earn content on those specific domains. Platform-specific optimization is essential because a strategy that works for one AI engine may not transfer to another.

Declining visibility over time

AI platforms refresh their retrieval indexes continuously and retrain their underlying models periodically. If your visibility drops, competitors may be publishing more citation-worthy material, or your content may be aging out. Check your volatility index: if it’s high, the AI’s recommendations are shifting frequently, and you need more frequent content updates. If volatility is low but your position has dropped, a competitor likely displaced you with a stronger resource.
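The Volatility Index and the two diagnoses described here can be sketched in Python as follows. Measuring week-over-week change as the Jaccard distance between the sets of brands named for a prompt, and the 0.3 cutoff for "high" volatility, are illustrative assumptions rather than industry standards.

```python
def jaccard_distance(a: set[str], b: set[str]) -> float:
    """0.0 when both weeks name the same brands, 1.0 when they share none."""
    if not a and not b:
        return 0.0
    return 1 - len(a & b) / len(a | b)


def volatility_index(weekly_brands: list[set[str]]) -> float:
    """Mean week-over-week Jaccard distance for one prompt."""
    pairs = list(zip(weekly_brands, weekly_brands[1:]))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)


def diagnose(volatility: float, visibility_change: float, high: float = 0.3) -> str:
    """Map a visibility drop to the likely cause (the cutoff is a hypothetical default)."""
    if visibility_change >= 0:
        return "no drop"
    if volatility >= high:
        return "unstable recommendations: update content more often"
    return "likely displaced: audit the competitor resource now being cited"


weeks = [{"OurBrand", "RivalA"}, {"OurBrand", "RivalA"}, {"RivalA", "RivalB"}]
v = volatility_index(weeks)
print(round(v, 2))                          # 0.33
print(diagnose(v, visibility_change=-0.1))  # unstable recommendations: update content more often
```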

Competitors appear where you don’t

This is your highest-value optimization signal. When a competitor is cited for a prompt where you’re absent, analyze what makes their content citation-worthy. Is it more comprehensive? More recently updated? Published on a higher-authority domain? Use these gaps to prioritize your content and brand mention strategy for generative AI.

Key Definition: Competitive displacement rate (CDR) is the percentage of tracked prompts where your brand replaced a competitor’s mention over a given time period. A rising CDR means your AI visibility strategy is actively winning share from competitors.
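A minimal Python sketch of CDR, assuming you keep a snapshot of the brands named for each tracked prompt at the start and end of the period. Counting a prompt as displaced only when your brand appears and at least one tracked competitor disappears, and dividing by the prompts present in both snapshots, are interpretive choices of this example.

```python
def competitive_displacement_rate(
    before: dict[str, set[str]],
    after: dict[str, set[str]],
    brand: str,
    competitors: list[str],
) -> float:
    """Share of tracked prompts where the brand entered and a competitor dropped out."""
    prompts = before.keys() & after.keys()
    if not prompts:
        return 0.0
    displaced = 0
    for p in prompts:
        brand_gained = brand not in before[p] and brand in after[p]
        rival_lost = any(c in before[p] and c not in after[p] for c in competitors)
        if brand_gained and rival_lost:
            displaced += 1
    return displaced / len(prompts)


before = {"p1": {"RivalA"}, "p2": {"RivalA", "OurBrand"}, "p3": {"RivalB"}, "p4": set()}
after = {"p1": {"OurBrand"}, "p2": {"RivalA", "OurBrand"}, "p3": {"RivalB", "OurBrand"}, "p4": set()}
print(competitive_displacement_rate(before, after, "OurBrand", ["RivalA", "RivalB"]))  # 0.25
```

Only `p1` counts here: on `p3` the brand was added alongside `RivalB`, which is a gain in inclusion but not a displacement.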

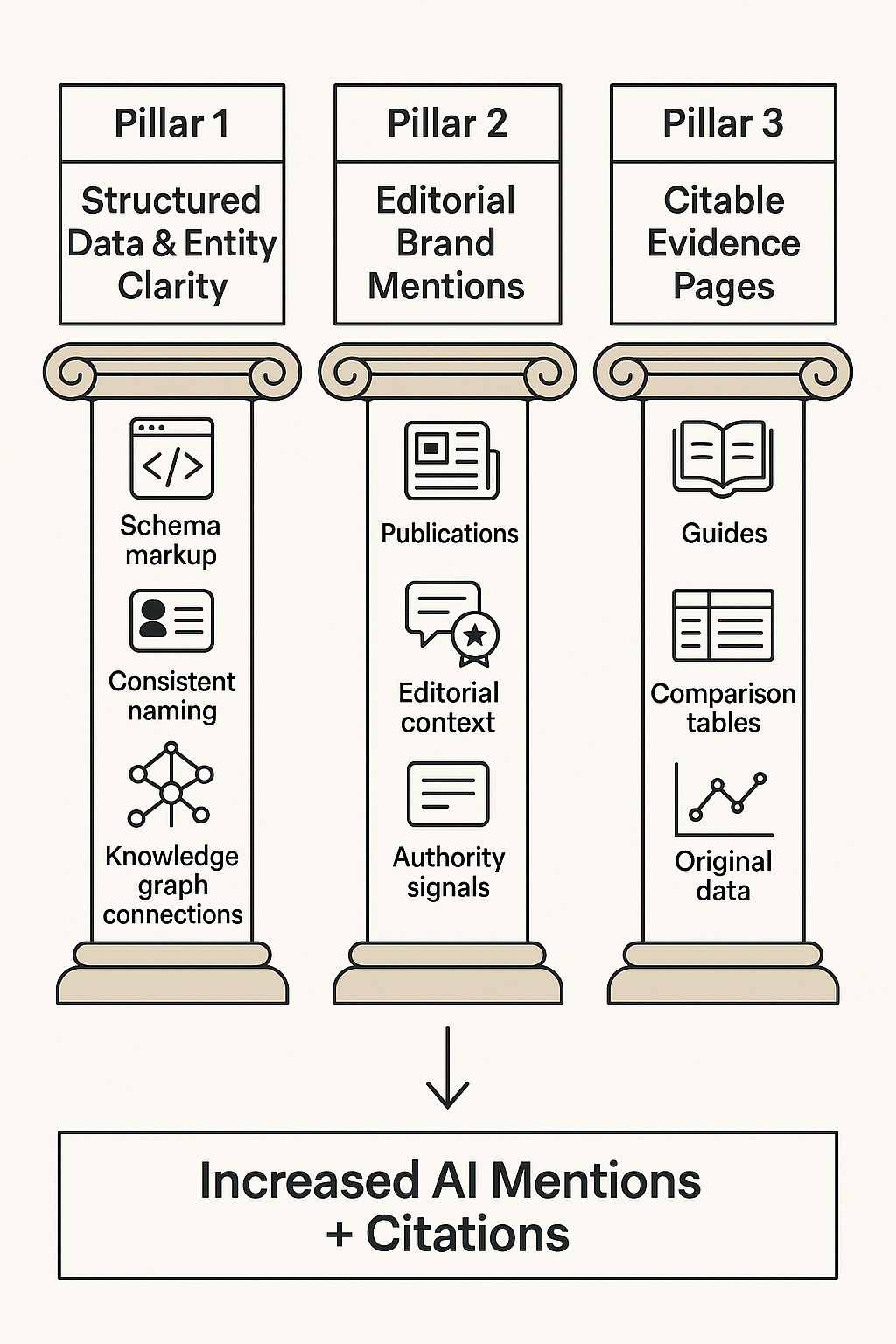

Three Levers That Move AI Mentions and Citations

Tracking reveals the gaps. Closing those gaps requires action across three interconnected levers.

Lever 1: Structured data and entity clarity

AI models need to understand what your brand is and what category it belongs to before they can recommend it. Structured data — specifically schema markup — provides that clarity.

Implement these schema types on your site:

- Organization: Name, description, URL, logo, sameAs (linking to Wikipedia, LinkedIn, Crunchbase)

- Product or Service: Name, description, brand, category, offers

- FAQPage: Question-and-answer pairs that AI models can extract directly

- HowTo: Step-by-step processes with clearly defined steps

- AggregateRating and Review: Social proof signals that build model confidence

Keep entity names consistent across every platform — your website, Google Business Profile, Crunchbase, LinkedIn, and industry directories. Inconsistency confuses AI models and reduces your chances of being surfaced.
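As an illustration of the Organization markup, the Python sketch below builds a schema.org JSON-LD object and prints the script tag to place in your page's HTML. The brand name, URLs, and profile links are hypothetical placeholders; keeping the name in one constant is a simple way to reuse the exact same entity name everywhere.

```python
import json

BRAND_NAME = "Example Co"  # hypothetical; reuse this exact string on every profile


def organization_jsonld(url: str, logo: str, description: str, same_as: list) -> dict:
    """Return a schema.org Organization object for an application/ld+json script tag."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": BRAND_NAME,
        "url": url,
        "logo": logo,
        "description": description,
        "sameAs": same_as,  # official profiles that confirm the entity's identity
    }


org = organization_jsonld(
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    description=f"{BRAND_NAME} makes project management software for remote teams.",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
)
print('<script type="application/ld+json">')
print(json.dumps(org, indent=2))
print("</script>")
```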

Lever 2: Editorial brand mentions on high-authority publications

AI models learn brand-category associations from the content they’re trained on and the sources they retrieve in real time. When your brand is mentioned contextually in high-authority editorial content — industry publications, respected blogs, news outlets, and research reports — AI platforms are more likely to recognize and recommend you.

This is where strategic AI brand mentions become critical. A mention in a well-regarded industry publication carries significantly more weight than hundreds of mentions on low-authority sites.

In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions on high-authority publications achieved AI recommendation rates 89% higher than those relying solely on traditional SEO. The key was placement on publications that AI models actively learn from during training and retrieval cycles.

Lever 3: Citable evidence pages

AI models cite content that directly, clearly, and authoritatively answers a user’s question. Create dedicated evidence pages for your most important tracked prompts:

- Comprehensive how-to guides with clear H2/H3 structure, numbered steps, and FAQ sections

- Comparison matrices with factual, balanced analysis (not sales pages disguised as comparisons)

- Original research or data with methodology, specific numbers, and transparent sourcing

- Decision frameworks that help users evaluate options in your category

Every evidence page should include a dateModified timestamp, author byline with credentials, and internal links to related resources. Keep them updated — freshness is a measurable factor in citation selection across ChatGPT, Perplexity, and Gemini.
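For the dateModified and byline requirements, a Python sketch like this one can generate schema.org Article markup for an evidence page and flag pages that have gone too long without an update. The 90-day freshness threshold, the author details, and the page URL are hypothetical; pick a refresh interval that matches how fast your category moves.

```python
import json
from datetime import date


def evidence_page_jsonld(headline: str, url: str, author: str, job_title: str,
                         published: date, modified: date) -> dict:
    """schema.org Article markup with a byline and publication/modification dates."""
    if modified < published:
        raise ValueError("dateModified cannot precede datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {"@type": "Person", "name": author, "jobTitle": job_title},
    }


def is_stale(modified: date, today: date, max_age_days: int = 90) -> bool:
    """True when the page has not been updated within max_age_days."""
    return (today - modified).days > max_age_days


page = evidence_page_jsonld(
    headline="How to Track Brand Mentions Across AI Search Platforms",
    url="https://www.example.com/guides/ai-brand-tracking",
    author="Jane Doe", job_title="Head of Search Research",
    published=date(2025, 6, 1), modified=date(2025, 11, 1),
)
print(json.dumps(page, indent=2))
print(is_stale(date(2025, 11, 1), today=date(2026, 3, 1)))  # True (120 days old)
```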

Research from Princeton University, Georgia Tech, and the Allen Institute for AI, published in 2024, found that adding citations, statistics, and expert quotes to content boosted visibility in generative engine responses by up to 40%. Structure and evidence density influence whether your content gets cited.

A Weekly Tracking Workflow You Can Start This Week

You don’t need an enterprise platform to begin tracking. Here’s a practical workflow that works for teams of any size.

Monday: Run your core prompt set

Query your top 20–50 prompts across ChatGPT, Perplexity, and Gemini (or use your tracking tool’s automated run). If you’re tracking manually, start at the low end and expand toward a core set of 50 or more as the process settles. Log: brand mentioned (Y/N), citation link (Y/N), placement (first/middle/end), competitor names, and the full answer text.

Tuesday: Score and compare

Calculate your inclusion rate, citation coverage, and share of voice for the week. Compare against the previous week. Flag any prompts where your visibility dropped or where a new competitor appeared.
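The Tuesday comparison can be automated with a short Python sketch, assuming each week’s results are stored as a mapping from prompt to the set of brands named in the answer. The function names and sample data are hypothetical.

```python
def weekly_inclusion_rate(week: dict[str, set[str]], brand: str) -> float:
    """Share of prompts whose answer names the brand this week."""
    return sum(brand in brands for brands in week.values()) / len(week) if week else 0.0


def weekly_flags(last_week: dict[str, set[str]], this_week: dict[str, set[str]], brand: str):
    """Return prompts where the brand dropped out and prompts with newly appearing rivals."""
    dropped, new_rivals = [], {}
    for prompt, brands_now in this_week.items():
        if prompt not in last_week:
            continue  # new prompt: no baseline to compare against yet
        brands_before = last_week[prompt]
        if brand in brands_before and brand not in brands_now:
            dropped.append(prompt)
        newcomers = brands_now - brands_before - {brand}
        if newcomers:
            new_rivals[prompt] = sorted(newcomers)
    return dropped, new_rivals


last = {"best ai visibility tools": {"OurBrand", "RivalA"},
        "how to get cited by chatgpt": {"OurBrand"}}
now = {"best ai visibility tools": {"RivalA"},
       "how to get cited by chatgpt": {"OurBrand", "RivalC"}}

print(weekly_inclusion_rate(last, "OurBrand"), weekly_inclusion_rate(now, "OurBrand"))  # 1.0 0.5
print(weekly_flags(last, now, "OurBrand"))
# (['best ai visibility tools'], {'how to get cited by chatgpt': ['RivalC']})
```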

Wednesday–Thursday: Investigate gaps

For prompts where competitors appear and you don’t, analyze the cited sources. What content are AI platforms pulling from? Is it a specific blog post, a third-party review, or a comparison page? Document the gap and the content or mention needed to close it.

Friday: Prioritize and assign actions

Pick the two to three highest-impact gaps from the week. Assign specific actions: create an evidence page, update an existing resource, pursue an editorial mention on a cited publication, or refine your tracking approach based on what you’ve learned.

This weekly cadence turns tracking from a passive reporting exercise into an active optimization loop. Within 4–6 weeks, you’ll have enough trend data to identify which actions move your metrics and where to concentrate resources.

Common Mistakes That Undermine AI Tracking

Avoid these pitfalls that waste time and produce misleading data:

- Tracking only one AI platform. ChatGPT visibility doesn’t predict Perplexity visibility. Monitor all platforms your audience uses.

- Relying on API responses instead of front-end capture. API outputs can differ from what users actually see. Always validate against the live interface.

- Using too few prompts. With only 10 prompts, a single changed answer moves your inclusion rate by 10 percentage points, which makes real trends hard to separate from noise. Build a prompt set of at least 50 core queries for reliable data.

- Ignoring prompt variations. “Best AI tracking tool” and “top AI tracking platform” can produce completely different brand recommendations. Test synonym variations.

- Counting redirected or UTM-tagged links as separate citations. Normalize URLs before counting to avoid inflating citation metrics.

- Skipping version and timestamp logging. Without metadata, you can’t explain trend shifts when AI platforms update their models.

- Treating AI tracking as a one-time audit. AI recommendations change weekly. Continuous monitoring is the only approach that produces actionable insights.
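For the URL-normalization point above, here is a minimal Python sketch using only the standard library’s `urllib.parse`. It strips `utm_*` parameters and a few other common click identifiers, drops fragments and trailing slashes, lowercases the host, and treats `www.` and `http` variants as the same page; those equivalence rules are assumptions to check against your own site. Resolving redirects would require fetching each URL and is not attempted here.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Non-UTM click identifiers to drop (illustrative, not exhaustive).
TRACKING_PARAMS = {"gclid", "fbclid"}


def normalize_url(url: str) -> str:
    """Collapse tracking and formatting variants of a cited URL into one key."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]  # assumption: www and bare domain serve the same content
    query = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
             if not k.lower().startswith("utm_") and k.lower() not in TRACKING_PARAMS]
    path = parts.path.rstrip("/") or "/"
    # Force https, sort the remaining parameters, and drop the #fragment.
    return urlunsplit(("https", host, path, urlencode(sorted(query)), ""))


cited = [
    "https://www.Example.com/guide/?utm_source=chatgpt.com",
    "http://example.com/guide",
    "https://example.com/guide#faq",
]
print({normalize_url(u) for u in cited})  # {'https://example.com/guide'}
```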

How AI Visibility Connects to Pipeline

AI brand tracking isn’t an academic exercise — it drives measurable business outcomes when connected to your broader marketing measurement.

According to Adyen’s 2025 retail report, 37% of consumers use AI to assist with shopping decisions, and 56% have used AI specifically to discover brands they wouldn’t have found through traditional search. For B2B brands, the pattern is similar: buyers ask AI assistants to shortlist vendors, compare solutions, and validate purchasing decisions.

The connection to pipeline works through several mechanisms:

- Brand recall: Better.com reported a 41% improvement in brand recall after optimizing content for AI search, according to case study data published in 2025. Users who see your brand recommended by AI develop stronger familiarity before they ever visit your site.

- Direct brand searches: Samsung attributed 28% of its direct brand searches to increased zero-click exposure in AI Overviews, as reported by industry analysts in 2025. AI visibility creates downstream search behavior that traditional analytics can capture.

- Shorter sales cycles: When buyers arrive at your site already pre-sold by an AI recommendation, they convert faster. Agencies like BrandMentions track when major AI models update their training data and time placements to maximize inclusion — a process that directly impacts how quickly AI-influenced prospects move through the funnel. Explore how the placement process works.

To measure this connection, correlate changes in your AI share of voice with branded search volume, direct traffic, and inbound demo requests over 30–60 day windows. The attribution won’t be pixel-perfect, but the directional signal is clear and actionable.

What’s Changed Since 2024–2025

AI search tracking was barely a defined discipline in 2024. Here’s what has shifted:

- Tool maturity: In 2024, most AI visibility tracking was manual or cobbled together from beta features in SEO platforms. As of 2026, dedicated AI tracking platforms offer automated prompt monitoring, cross-platform dashboards, historical trend analysis, and competitive benchmarking as standard features.

- Platform fragmentation has increased: Google AI Overviews, Google AI Mode, ChatGPT with browsing, Perplexity, Gemini, Claude, and Copilot all serve different audiences and cite different sources. The tracking surface area is larger than it was even 12 months ago.

- Citation behavior has become more measurable: Early 2025 research established baseline citation rates per platform (Perplexity’s high citation density vs. ChatGPT’s lower linking rate). As of 2026, teams can benchmark against established norms rather than guessing.

- AI visibility is now tied to business outcomes: The connection between brand mentions in AI and broader marketing outcomes is no longer theoretical. Multiple published case studies now tie AI visibility to brand search volume, pipeline velocity, and revenue attribution.

Frequently Asked Questions

How often should I track brand mentions across AI search platforms?

Track your core prompt set weekly. AI-generated responses shift frequently as models retrain and retrieval systems update. A weekly cadence catches meaningful changes fast enough to respond before visibility erodes. For extended prompt sets, biweekly monitoring is sufficient. Increase frequency immediately after publishing major content updates or earning new editorial mentions to measure time-to-inclusion.

Can I track AI brand mentions for free?

Yes, through manual testing. Query your target prompts directly in ChatGPT, Perplexity, Gemini, and other platforms, then log the results in a spreadsheet. This works for small prompt sets (under 20 queries) but doesn’t scale. Google Alerts can supplement by tracking web mentions that influence AI training data, though it doesn’t monitor AI-generated answers directly. For ongoing tracking at scale, dedicated tools are more practical.

Why does my brand appear in ChatGPT but not Perplexity?

Each AI platform uses different data sources and retrieval methods. ChatGPT draws on its training data plus real-time browsing results. Perplexity pulls heavily from its own web index with a citation-dense format. A brand strong in one ecosystem may have gaps in the other. Analyze which sources Perplexity cites for your target queries, then focus on earning presence on those specific domains. For platform-specific strategies, see our guides on tracking brand mentions in Perplexity and monitoring brand mentions in ChatGPT.

What’s the difference between AI visibility tracking and traditional SEO rank tracking?

Traditional rank tracking measures your website’s position in a list of search results for specific keywords. AI visibility tracking measures whether your brand is named or cited in AI-generated answers — a fundamentally different discovery mechanism. You can rank #1 on Google for a keyword and still be absent from ChatGPT’s answer for the same query. Both types of tracking are necessary as of 2026, because users increasingly consult both traditional search and AI assistants during their research process.

Does tracking AI mentions actually improve my visibility?

Tracking alone doesn’t improve visibility — but it identifies the specific gaps you need to close. Without tracking, you’re optimizing blind. With it, you know exactly which prompts to target, which competitors to displace, and which content to create or update. Teams that implement a weekly tracking-to-action loop typically see measurable improvements in inclusion rate within 8–12 weeks, depending on the competitive intensity of their category.

Researched and drafted with AI assistance, reviewed and edited by the BrandMentions editorial team.

Want to see where your brand currently stands across AI search platforms? Get a free AI visibility audit and find out which prompts mention your competitors — but not you.