Perplexity AI answers millions of research queries every day — and every response includes citations. Monitoring your brand mentions in Perplexity requires a combination of structured prompt testing, systematic tracking of mentions versus citations versus links, and consistent measurement over time. Unlike traditional search monitoring, Perplexity’s real-time web retrieval means your visibility can shift within hours, not months.

If you sell B2B software, professional services, or any product where buyers research before purchasing, Perplexity is shaping their shortlists. The question is whether your brand appears — and how it’s framed when it does.

This guide covers exactly how to monitor Perplexity brand mentions in 2026, from manual workflows you can start today to automated approaches that scale across hundreds of queries. You’ll also learn which metrics matter, how to interpret results, and what actions actually improve your citation rate.

What You’ll Learn

- Why Perplexity brand monitoring differs from traditional search tracking — and what that means for your workflow

- Three distinct metrics to track: mentions, citations, and links (and why mixing them leads to wrong conclusions)

- How to build a repeatable prompt library anchored in real buyer queries

- A step-by-step manual tracking method with a standardized spreadsheet structure

- When to shift from manual monitoring to automated tools — and what to look for

- Specific actions that improve Perplexity citation rates based on how its retrieval system works

- Common mistakes that waste monitoring effort and how to avoid them

Why Does Perplexity Brand Monitoring Matter in 2026?

Perplexity processes over 400 million queries monthly as of early 2026, according to DemandSage’s usage data. Its user base skews toward researchers, analysts, and professionals making purchase decisions — people who want sourced answers, not ten blue links.

Every Perplexity response includes numbered citations. That transparency creates a measurable opportunity: you can see exactly which sources Perplexity trusts for your category and whether your brand is among them.

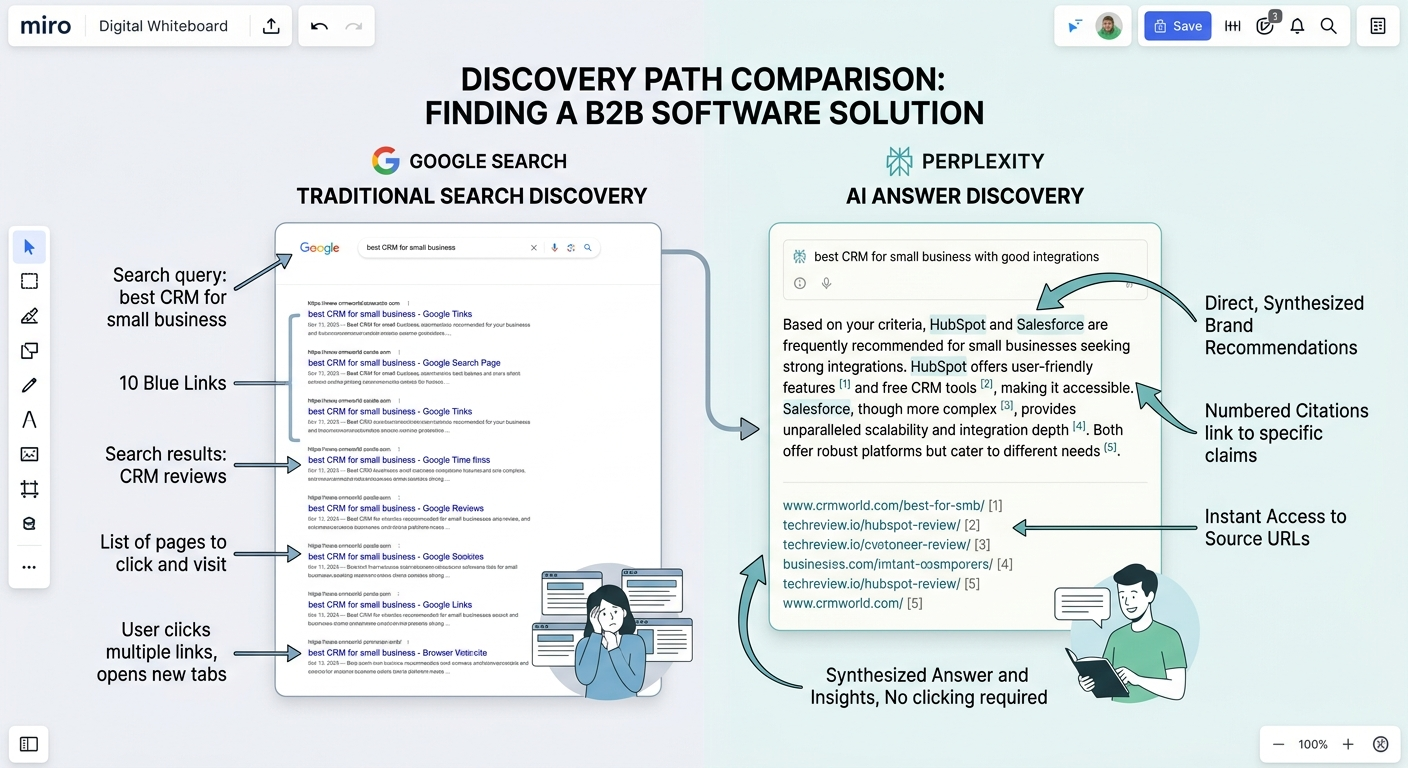

Traditional SEO tools track keyword rankings and backlinks on Google. They don’t capture what happens when a procurement manager asks Perplexity “best project management tools for remote teams” and your competitor gets cited while you don’t. That gap between Google rankings and AI answer visibility is where brands lose deals they never knew existed.

Perplexity Uses Real-Time Retrieval, Not Training Data

Perplexity works differently from ChatGPT or Claude answering from their training data. It uses Retrieval-Augmented Generation (RAG), a process in which the system searches the live web for each query and the model then synthesizes what it finds into a cited answer. This means fresh content can surface within hours or days, not months.

That real-time retrieval has two implications for monitoring. First, your visibility can change quickly when you publish new content or earn new coverage. Second, a single tracking snapshot tells you very little. You need repeated measurement on a consistent cadence to separate real trends from noise.

How This Differs From Monitoring ChatGPT or Gemini

If you already monitor brand mentions in ChatGPT, Perplexity tracking requires a different approach. ChatGPT relies primarily on training data plus occasional web access. Perplexity searches the web for every response. That means:

- Citation transparency — Perplexity shows its sources with numbered references. ChatGPT often doesn’t.

- Faster content reflection — new pages can appear in Perplexity answers within days. ChatGPT training data updates take months.

- Less output variation — identical queries in Perplexity tend to produce more consistent results than in ChatGPT, which makes structured tracking more reliable.

For a broader view across all major AI platforms, see how to track brand mentions across AI search platforms.

Separate Mentions, Citations, and Links — They Mean Different Things

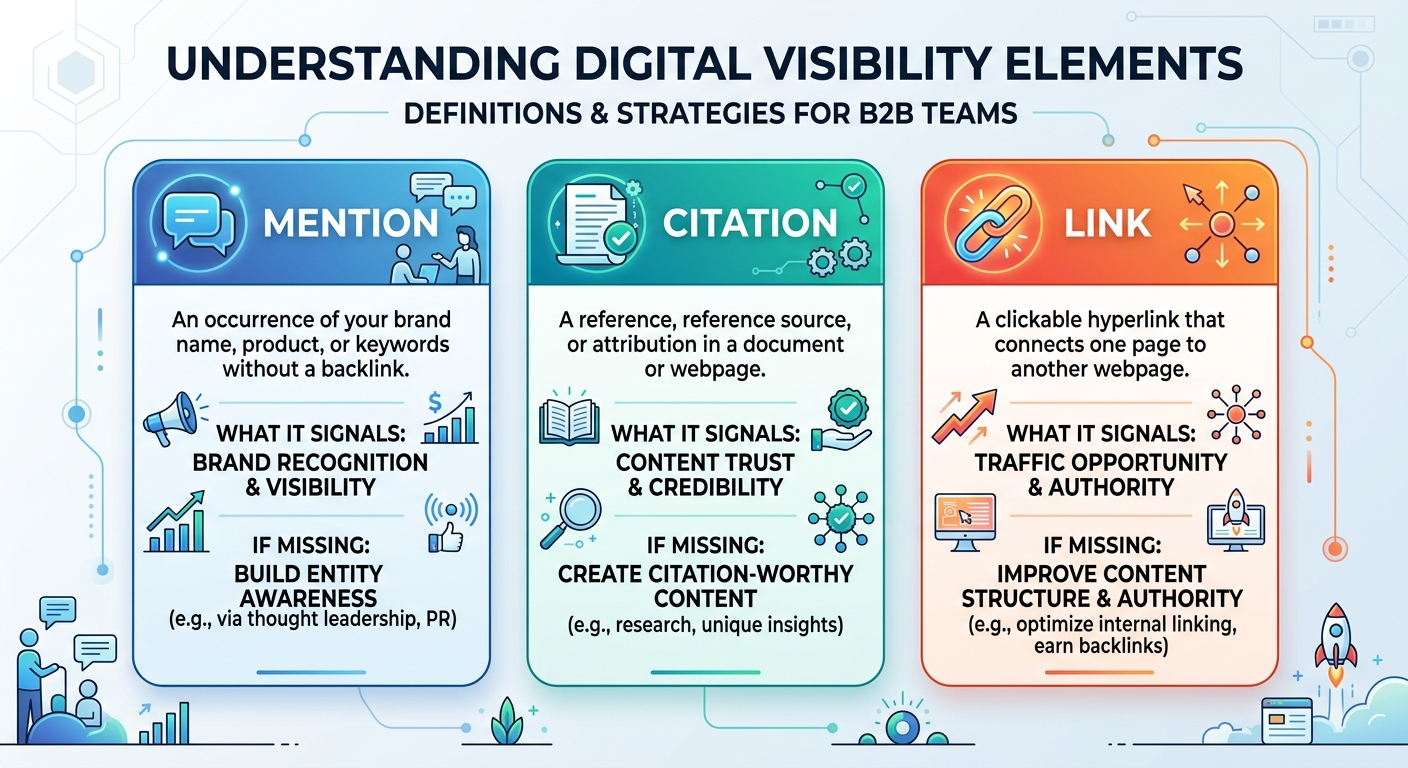

One of the most common monitoring mistakes is treating all Perplexity appearances as the same metric. They’re not. Each reflects a different level of visibility and requires a different response if it’s missing.

A brand mention is when your brand name appears in Perplexity’s answer text. It means the model recognizes your brand as relevant to the query — but it doesn’t necessarily link to your content.

A citation is when your domain appears in Perplexity’s numbered reference list. This means Perplexity used your content as a source to build its answer. Citations carry more weight because they signal content trust.

A link is a clickable URL to your site that users can follow directly from the Perplexity response. Links drive referral traffic and are the most commercially valuable outcome.

Track all three with separate columns in your monitoring system. If you’re getting mentioned but not cited, Perplexity recognizes your brand but doesn’t trust your content as a source — a different problem than being invisible entirely. If you’re cited but not linked, your content serves as background research without driving clicks.

Key Definition: A brand mention in Perplexity is any instance where your company name appears in the AI-generated answer text — distinct from a citation (your domain in the reference list) or a link (a clickable URL users can follow).

How to Build a Prompt Library for Perplexity Monitoring

Monitoring starts with knowing what to test. A prompt library is a standardized set of queries you run through Perplexity on a regular schedule. Without one, tracking becomes inconsistent and the data is unreliable.

Start With Real Buyer Queries, Not Random Questions

Pull seed queries from sources that reflect actual demand:

- PPC search terms — queries people already use to find products like yours

- Sales call transcripts and CRM notes — questions prospects ask before buying

- Support tickets and on-site search logs — problems your audience needs solved

- Keyword research tools — category terms and long-tail questions in your space

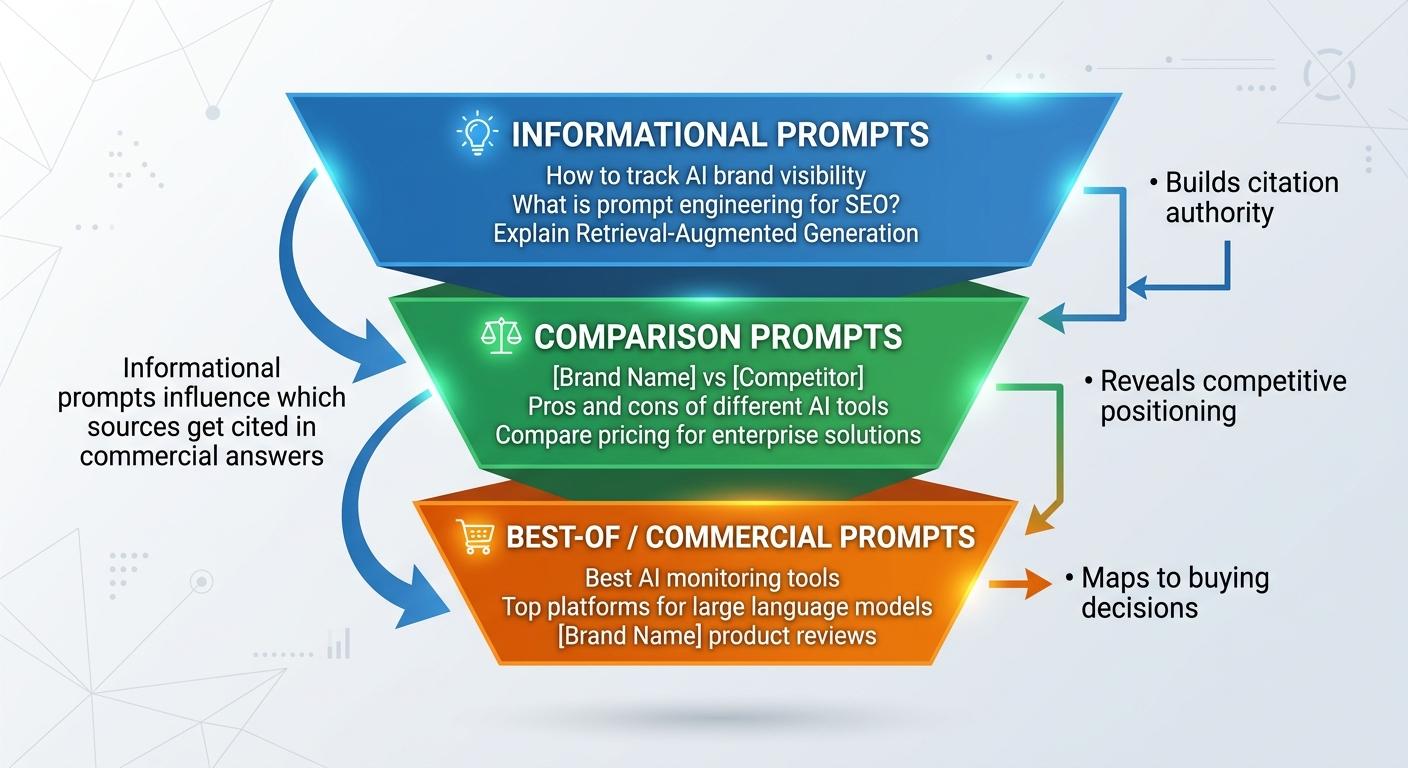

Avoid the temptation to only test “best of” queries. Informational prompts — the ones where buyers gather context before comparing options — often determine which sources Perplexity trusts when it later answers commercial-intent questions.

Transform Seeds Into Synthetic Prompts

Each seed keyword should generate three to five prompts that mirror how people actually ask Perplexity. Keep prompts neutral. Don’t include your brand name — you want to test whether Perplexity surfaces your brand organically.

Seed keyword: “AI visibility monitoring tools”

Synthetic prompts:

- “What are the best tools for monitoring brand visibility in AI search?”

- “How do I track whether AI platforms mention my brand?”

- “Which tools monitor Perplexity and ChatGPT brand mentions?”

- “What should I look for in an AI brand monitoring platform?”

Cover Three Prompt Categories

Your library should include a mix of query types for complete coverage:

- Best-of queries — “best [category] for [audience]” or “top [solution] providers” — these map to shortlist behavior

- Comparison queries — “[brand] vs [competitor]” or “alternatives to [product]” — these reveal competitive positioning

- How-to and informational queries — “how to [solve problem]” or “what is [concept]” — these build the citation graph that feeds commercial answers
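If you want a programmatic first draft of the library, the three categories can be expressed as templates. This is a minimal sketch: the template wording, the `Prompt` type, and the `build_prompt_library` helper are hypothetical, and the output still needs a human pass so each prompt sounds like a real buyer question. Your own brand is never substituted into a template, so every prompt tests organic visibility.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    category: str  # "best_of", "comparison", or "informational"
    text: str


# Hypothetical templates modeled on the three categories; adjust the wording
# to match how your buyers actually phrase questions.
TEMPLATES = {
    "best_of": ["best {category} for {audience}", "top {category} providers"],
    "comparison": ["alternatives to {competitor}", "{competitor} vs other {category}"],
    "informational": ["how to {problem}", "what is {concept}"],
}


def build_prompt_library(category, audience, competitors, problem, concept):
    """Expand the templates into neutral prompts (your own brand is never inserted)."""
    library = []
    for kind, templates in TEMPLATES.items():
        for template in templates:
            # Templates that name a competitor are expanded once per competitor.
            targets = competitors if "{competitor}" in template else [None]
            for competitor in targets:
                text = template.format(
                    category=category, audience=audience, competitor=competitor,
                    problem=problem, concept=concept,
                )
                library.append(Prompt(kind, text))
    return library
```

For example, `build_prompt_library("project management tools", "remote teams", ["Competitor A", "Competitor B"], "track tasks across time zones", "resource leveling")` returns eight prompts: two best-of, four comparison (two templates times two competitors), and two informational.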

Start with 25–50 prompts for an initial baseline. Expand to 100–200 once you need stable trendlines across product lines, locations, or audience segments.

Manual Tracking: A Step-by-Step Workflow

Manual monitoring works well when you’re building your first baseline, tracking under 50 prompts, or managing a single brand. Here’s how to set it up properly.

Step 1: Create a Dedicated Testing Environment

Open Perplexity in an incognito or private browser window. Use a separate browser profile if possible. This reduces personalization noise and keeps results comparable between sessions.

Before you start, document your baseline environment:

- Device and browser

- Logged-in or logged-out state

- Region or VPN endpoint

- Perplexity model selection (if applicable)

Keep these variables constant across every tracking run. Changing your browser, location, and model selection in the same week makes it impossible to attribute any visibility change to a specific cause.
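One lightweight way to enforce this is to store the baseline environment as a structured record and compare every run against it. A sketch, assuming you log these fields yourself; the `RunEnvironment` type and the field names are hypothetical.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RunEnvironment:
    device: str
    browser: str
    logged_in: bool
    region: str  # region or VPN endpoint
    model: str   # Perplexity model selection, if applicable


def changed_variables(baseline, current):
    """Return the names of environment fields that differ from the baseline."""
    base, now = asdict(baseline), asdict(current)
    return [name for name in base if base[name] != now[name]]
```

A run whose list of changed variables is non-empty is worth flagging in your notes; a run with two or more changes can’t be attributed to any single cause.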

Step 2: Run Your Prompt Library and Record Results

For each prompt, capture these data points in a spreadsheet (Google Sheets or Airtable both work):

- Date and time

- Exact prompt text

- Perplexity model used

- Mentioned? (Yes/No — did your brand name appear in the answer text?)

- Cited? (Yes/No — did your domain appear in the numbered references?)

- Linked? (Yes/No — was a clickable URL to your site included?)

- Cited source URLs (the full list of domains Perplexity referenced)

- Competitors mentioned (every brand name in the response)

- Position (first paragraph, middle, or end of the response)

- Accuracy score (1–5 scale — does Perplexity describe your brand correctly?)

- Notes (anything unusual: outdated info, wrong pricing, confusing brand name variants)
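If you collect results with a script, the same columns can be defined once as a typed record and exported as CSV for import into a spreadsheet. This is a sketch under assumed names (`TrackingRow`, `rows_to_csv`); list-valued columns are joined with " | " because spreadsheet cells hold plain text.

```python
import csv
import io
from dataclasses import asdict, dataclass, field, fields
from typing import List, Optional


@dataclass
class TrackingRow:
    run_at: str                  # date and time of the run, e.g. "2026-01-12 09:30"
    prompt: str                  # exact prompt text
    model: str                   # Perplexity model used
    mentioned: bool              # brand name appears in the answer text
    cited: bool                  # domain appears in the numbered references
    linked: bool                 # clickable URL to your site included
    cited_urls: List[str] = field(default_factory=list)   # every reference URL
    competitors: List[str] = field(default_factory=list)  # every other brand named
    position: str = ""           # "first", "middle", "end", or "" if not mentioned
    accuracy: Optional[int] = None  # 1-5 score, None when not mentioned
    notes: str = ""

    def __post_init__(self):
        if self.accuracy is not None and not 1 <= self.accuracy <= 5:
            raise ValueError("accuracy must be between 1 and 5")


def rows_to_csv(rows):
    """Serialize rows to CSV text for import into Google Sheets or Airtable."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(TrackingRow)])
    writer.writeheader()
    for row in rows:
        record = asdict(row)
        # Spreadsheet cells hold plain text, so list fields are joined with " | ".
        record["cited_urls"] = " | ".join(row.cited_urls)
        record["competitors"] = " | ".join(row.competitors)
        writer.writerow(record)
    return buffer.getvalue()
```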

Pro Insight: Always save the cited source URLs. These tell you exactly which domains Perplexity trusts for your category. If a specific review site or industry publication appears repeatedly as a reference, that’s where you should focus your earned media and content distribution efforts.
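Counting those domains across runs is easy to automate once the cited URLs are in your sheet. The sketch below counts each domain at most once per response, so a single answer that cites three pages from the same site doesn’t inflate that site’s score; stripping a leading `www.` to group hosts is an assumption you may want to change.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domain_counts(cited_url_lists):
    """Count how many responses cite each domain, once per response.

    `cited_url_lists` holds one list of reference URLs per tracked response.
    """
    counts = Counter()
    for urls in cited_url_lists:
        domains = set()
        for url in urls:
            host = (urlparse(url).hostname or "").lower()
            if host.startswith("www."):
                host = host[len("www."):]  # group www.example.com with example.com
            if host:
                domains.add(host)
        counts.update(domains)
    return counts
```

Calling `.most_common(10)` on the result gives a ranked shortlist of the domains Perplexity leans on most for your prompt set.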

Step 3: Set a Consistent Tracking Cadence

Run your full prompt library on a fixed schedule:

- Weekly: Test your 10–15 highest-priority prompts (commercial intent, revenue-driving queries)

- Monthly: Full audit of all prompts in your library

- After major events: New content published, product launches, PR coverage, or Perplexity model updates
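If you script your runs, the cadence can be encoded as a simple rule. The schedule here (priority prompts every Monday, the full library on the first Monday of each month or after a major event) and the `prompts_due` helper are illustrative assumptions, not a Perplexity recommendation.

```python
import datetime as dt


def prompts_due(today: dt.date, priority_prompts, all_prompts, major_event=False):
    """Return the prompts to run on `today` under an illustrative schedule.

    - Full library on the first Monday of each month, or after a major event
      (new content, product launch, PR coverage, Perplexity model update).
    - Priority prompts on every other Monday.
    - Nothing on other days.
    """
    is_monday = today.weekday() == 0
    first_monday_of_month = is_monday and today.day <= 7
    if first_monday_of_month or major_event:
        return list(all_prompts)
    if is_monday:
        return list(priority_prompts)
    return []
```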

One snapshot shows a single moment. Four to six weeks of data reveal patterns. Track trends — don’t react to isolated results.
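To see patterns rather than snapshots, aggregate results by week. This sketch groups `(run_date, mentioned)` pairs by ISO week and only treats the series as a trend once at least four weeks are logged, matching the four-to-six-week guidance; the function names and the default minimum are assumptions.

```python
import datetime as dt
from collections import defaultdict
from typing import Iterable, Tuple


def weekly_mention_rates(results: Iterable[Tuple[dt.date, bool]]):
    """Group (run_date, mentioned) pairs by ISO week; return {week: rate %}.

    Keys are (iso_year, iso_week) tuples, returned in chronological order.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for run_date, mentioned in results:
        iso = run_date.isocalendar()
        week = (iso[0], iso[1])
        totals[week] += 1
        hits[week] += int(mentioned)
    return {week: 100.0 * hits[week] / totals[week] for week in sorted(totals)}


def is_trend_ready(rates, min_weeks=4):
    """Treat movement as a trend only once `min_weeks` weeks are logged."""
    return len(rates) >= min_weeks
```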

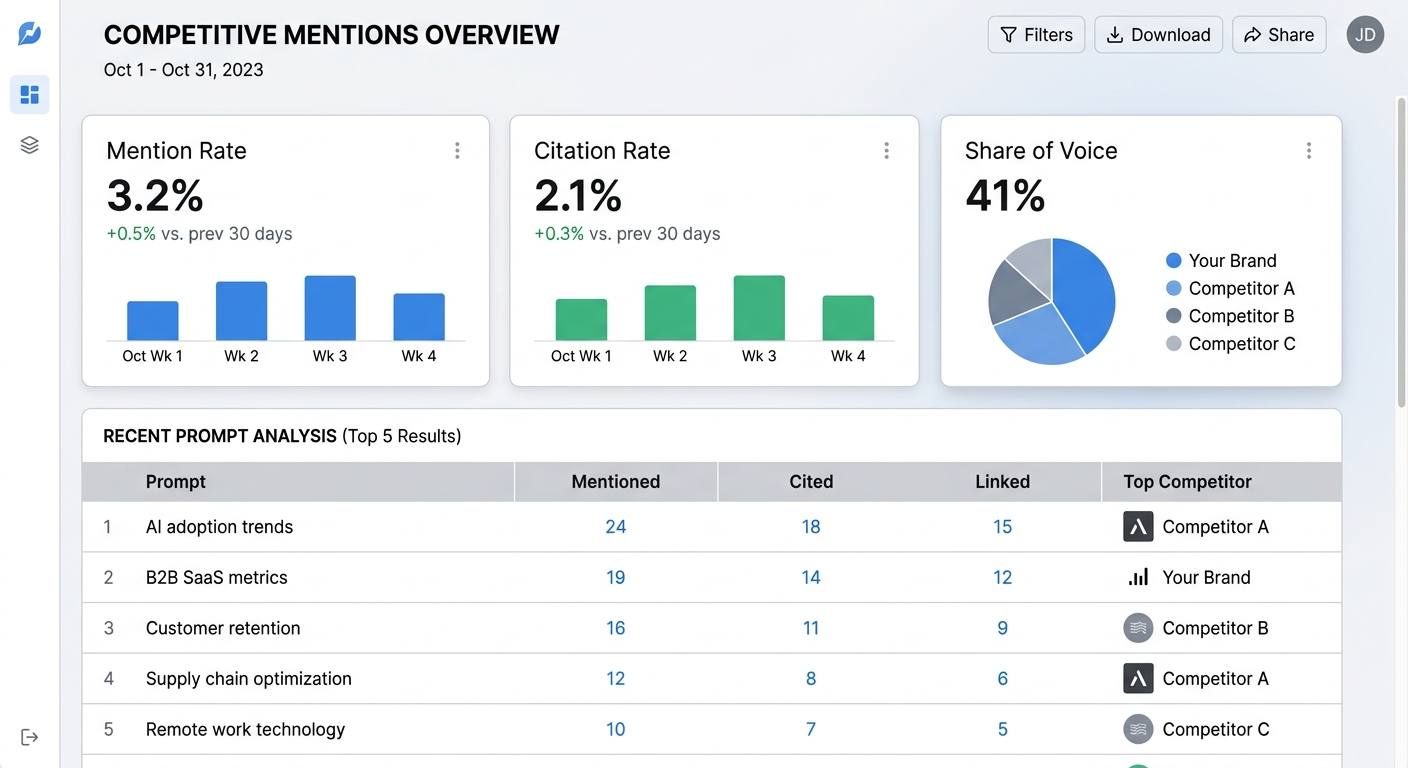

Step 4: Calculate Your Core KPIs

From your tracking data, calculate three metrics that tell you where you stand:

- Mention rate — (Prompts where your brand is mentioned ÷ Total prompts) × 100. Target: 30%+ for branded queries, 10%+ for category queries.

- Citation rate — (Mentions that include a citation to your domain ÷ Total mentions) × 100. Target: above 50%. A lower rate suggests Perplexity knows your brand but doesn’t trust your content enough to cite it.

- Share of voice — (Your brand mentions ÷ Total brand mentions, yours plus every competitor’s, across all tracked responses) × 100. This shows your relative position in the category.
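The three formulas translate directly into code. This sketch assumes each row is a dict with `mentioned` and `cited` booleans and a `competitors` list, mirroring the spreadsheet columns from Step 2. It counts each brand at most once per response when computing share of voice, which is one reasonable reading of the formula rather than a standard definition.

```python
def core_kpis(rows):
    """Compute mention rate, citation rate, and share of voice (all in %).

    Each row is a dict with boolean "mentioned" and "cited" keys and a
    "competitors" list of other brands named in that response.
    """
    total = len(rows)
    mentioned_rows = [r for r in rows if r["mentioned"]]
    own_mentions = len(mentioned_rows)
    # Count each competitor at most once per response.
    competitor_mentions = sum(len(set(r["competitors"])) for r in rows)
    all_mentions = own_mentions + competitor_mentions

    mention_rate = 100.0 * own_mentions / total if total else 0.0
    citation_rate = (
        100.0 * sum(1 for r in mentioned_rows if r["cited"]) / own_mentions
        if own_mentions else 0.0
    )
    share_of_voice = 100.0 * own_mentions / all_mentions if all_mentions else 0.0
    return {
        "mention_rate": mention_rate,
        "citation_rate": citation_rate,
        "share_of_voice": share_of_voice,
    }
```

As a worked example: with four tracked responses where you are mentioned twice (one of them cited) and competitors appear six times in total, this returns a 50% mention rate, a 50% citation rate, and a 25% share of voice (2 ÷ 8).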

When Manual Tracking Isn’t Enough: Scaling With Automated Tools

Manual monitoring breaks down once you cross 50 prompts, manage multiple brands or locations, or need consistent reporting for stakeholders. At that point, you need automated AI visibility analytics tools that handle prompt execution, response capture, and trend analysis.

What to Look for in an Automated Perplexity Monitoring Tool

Not every AI monitoring platform covers Perplexity specifically. When evaluating options, check for these capabilities:

- Perplexity-specific tracking — the tool must run queries through Perplexity’s actual engine, not simulate it

- Separate mention, citation, and link metrics — tools that lump these together hide critical diagnostic information

- Historical data storage — you need trend lines, not just snapshots

- Competitor tracking — share of voice requires seeing who else appears in the same responses

- Multi-platform support — tracking Perplexity alongside ChatGPT, Gemini, and Claude in one dashboard saves time

- Scheduling and alerts — automated runs on your chosen cadence with notifications for significant changes
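What counts as a "significant change" is something you define. A minimal sketch of one rule: compare the latest weekly mention rate with the average of the preceding four weeks and alert when it moves by at least 10 percentage points. The `visibility_alert` name and both thresholds are placeholders to tune against your own noise level.

```python
from statistics import mean


def visibility_alert(weekly_rates, threshold_points=10.0, baseline_weeks=4):
    """Return an alert message if the latest weekly rate moved by at least
    `threshold_points` percentage points versus the average of the preceding
    `baseline_weeks` weeks; otherwise return None."""
    if len(weekly_rates) < baseline_weeks + 1:
        return None  # not enough history to judge
    *history, latest = weekly_rates
    baseline = mean(history[-baseline_weeks:])
    change = latest - baseline
    if abs(change) >= threshold_points:
        direction = "up" if change > 0 else "down"
        return (f"Mention rate {direction} {abs(change):.1f} points "
                f"vs. {baseline_weeks}-week average")
    return None
```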

For a broader comparison of monitoring platforms across all AI engines, see the full breakdown of AI rank trackers for brand mentions.

Manual vs. Automated: When to Switch

Stay manual when you’re establishing your first baseline, refining which prompt categories matter, or tracking fewer than 50 prompts for a single brand. The hands-on process teaches you how Perplexity’s responses work, which builds intuition that automated dashboards can’t replace.

Move to automation when you need daily or weekly monitoring at scale, track multiple competitors or locations, or report AI visibility metrics to leadership. Automation reduces human error, ensures consistent testing conditions, and frees your team to focus on acting on the data instead of collecting it.

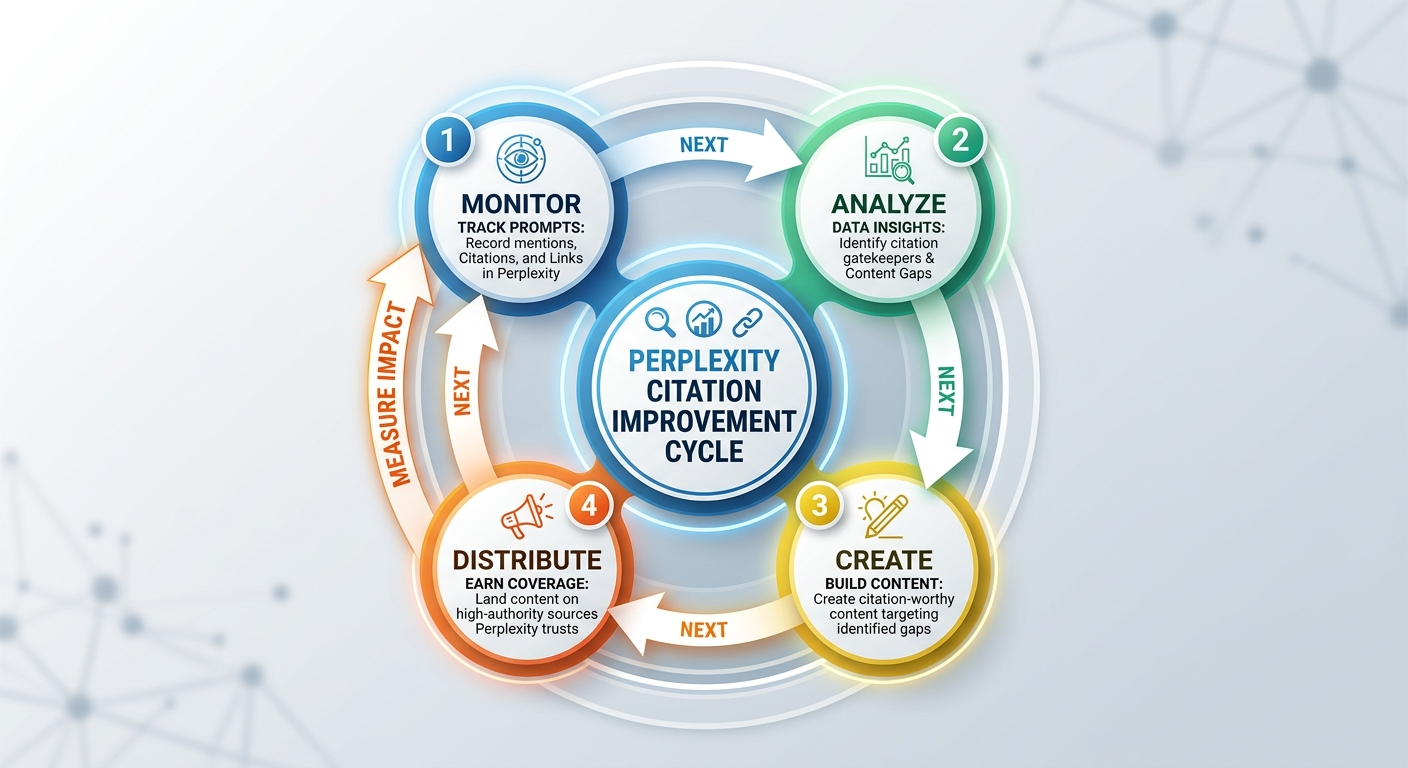

How to Improve Your Perplexity Citation Rate

Tracking is diagnostic. The real value comes from using monitoring data to improve how often Perplexity cites your brand. Since Perplexity retrieves from the live web, your content strategy directly influences what it finds and chooses to reference.

Create Citation-Worthy Content

Perplexity’s retrieval system favors content that reads like a reliable source — clear claims, structured formatting, and verifiable details. The pages most frequently cited across B2B categories share common traits:

- Direct answers to specific questions — lead with the answer, then explain. Don’t bury the key claim in paragraph four.

- Structured formatting — use clear H2/H3 headings, bullet points, tables, and definitions that AI can extract cleanly

- Original data or research — proprietary statistics, survey results, or analysis not available elsewhere. If a fact exists only on your site, Perplexity has to cite you to use it.

- Recency signals — publish dates, “updated for 2026” timestamps, and fresh statistics signal that your content reflects current reality

Pages that perform well as Perplexity sources often look like evidence pages: methodology explainers, pricing breakdowns, product comparisons with structured data, and research reports with named findings.

Strengthen Your Entity Consistency

A brand entity in AI search is the collection of facts, associations, and attributes that models connect to your brand name. If your company name, product descriptions, or core positioning are inconsistent across the web, Perplexity struggles to resolve which entity you are — and defaults to competitors with clearer signals.

Audit for consistency across:

- Your website’s About page, product pages, and schema markup

- Third-party profiles (G2, Capterra, Crunchbase, LinkedIn)

- Press coverage and guest posts

- Directory listings and industry databases

Standardize your brand name, product naming, core claims, and category language everywhere your brand appears online. This isn’t just about Perplexity — it strengthens visibility across all generative AI platforms.
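For the schema markup item, one way to keep your site aligned with your third-party profiles is to generate the markup from the same canonical record you reuse everywhere else. This sketch emits schema.org `Organization` JSON-LD with `sameAs` links to external profiles; the `organization_jsonld` helper is hypothetical, and any company name, description, or profile URLs you pass in should be your real, standardized values.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Render schema.org Organization markup from one canonical brand record.

    Reusing the same `name` and `description` strings on G2, Crunchbase,
    LinkedIn, and your About page keeps the entity signals consistent.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),  # your external profile URLs
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )
```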

Earn Coverage on Perplexity’s Trusted Sources

Your monitoring data reveals which domains Perplexity cites most often for your category. These are your “citation gatekeepers.” If Perplexity consistently references a specific industry publication, review platform, or news outlet when answering queries in your space, earning mentions on those sources directly improves your Perplexity visibility.

Agencies like BrandMentions approach this systematically — placing contextual brand mentions on high-authority publications that AI models actively pull from during retrieval. In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions on AI-trusted sources achieved citation rates significantly higher than brands relying on owned content alone.

For deeper context on how editorial mentions influence AI recommendations, see how brand mentions work for SEO and AI visibility together.

Common Mistakes That Waste Your Monitoring Effort

Perplexity monitoring seems straightforward, but several common errors undermine the data quality and lead to wrong conclusions.

Changing Too Many Variables at Once

If you change your prompt phrasing, switch your VPN location, and update your testing browser in the same week, you can’t attribute any visibility shift to a specific cause. Change one variable at a time. Document everything.

Tracking Only Commercial-Intent Prompts

Monitoring only “best [product]” queries misses the informational prompts that build Perplexity’s trust in your content. Informational queries — “how to,” “what is,” “why does” — often determine which sources Perplexity uses when it later answers commercial questions. Include both in your library.

Treating One Snapshot as a Trend

Perplexity answers can vary based on model updates, new content indexed, and source freshness. A single check tells you what happened at one moment. You need four to six weeks of consistent data before drawing conclusions about your visibility trajectory.

Ignoring Accuracy Alongside Visibility

Being mentioned is not always positive. If Perplexity describes your product with outdated features, wrong pricing, or incorrect positioning, that mention works against you. Always score accuracy alongside presence. An inaccurate mention may need faster correction than a missing one.
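If your tracking sheet includes the 1–5 accuracy score, a correction queue can put inaccurate mentions ahead of missing ones. A sketch, assuming rows are dicts with `prompt`, `mentioned`, and `accuracy` keys and treating a score of 2 or lower as inaccurate (an arbitrary cutoff); `correction_queue` is a hypothetical name.

```python
def correction_queue(rows, max_accuracy=2):
    """Order prompts for follow-up: inaccurate mentions first, then misses.

    Rows are dicts with "prompt", "mentioned", and "accuracy" (1-5 or None).
    """
    inaccurate = sorted(
        (r for r in rows
         if r["mentioned"] and r["accuracy"] is not None
         and r["accuracy"] <= max_accuracy),
        key=lambda r: r["accuracy"],  # worst scores first
    )
    missing = [r for r in rows if not r["mentioned"]]
    return [r["prompt"] for r in inaccurate] + [r["prompt"] for r in missing]
```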

How Perplexity Monitoring Fits Into Your Broader AI Visibility Strategy

Perplexity is one surface in a multi-platform AI search landscape. Your buyers use ChatGPT, Google Gemini, Claude, and Perplexity in different contexts and at different stages of their research. A complete monitoring strategy covers all of them.

The same principles — structured prompt libraries, separated metrics, consistent cadence — apply across platforms. The implementation differs because each model retrieves and presents information differently. Perplexity’s citation transparency makes it the easiest platform to track systematically, which is why it’s often the best starting point for teams new to AI visibility monitoring.

For platform-specific approaches, see how to check brand mentions in ChatGPT and track brand mentions in Gemini. For a unified view across all platforms, explore monitoring brand mentions in LLMs.

FAQ

How often should I check my brand mentions in Perplexity?

Run your highest-priority prompts weekly and a full audit monthly. Perplexity’s real-time retrieval means visibility can shift quickly, but weekly tracking gives you enough data points to spot trends without overwhelming your team. Add extra checks after major content publishes, PR coverage, or product launches.

Can I track Perplexity brand mentions in Google Search Console?

No. Google Search Console only tracks impressions and clicks within Google’s own search results. Perplexity runs an independent search engine and does not pass data to Google Search Console. You need either a manual tracking workflow or a dedicated AI brand monitoring tool to measure Perplexity visibility.

What’s the difference between a Perplexity mention and a citation?

A mention means your brand name appears in the answer text. A citation means your domain appears in the numbered reference list as a source. You can be mentioned without being cited — meaning Perplexity recognizes your brand but didn’t use your content to build its answer. Citations indicate content trust and are more valuable for driving traffic.

Which types of content get cited most by Perplexity?

Perplexity favors content with clear structure, direct answers, original data, and verifiable claims. Research reports, product comparisons with tables, pricing pages, methodology explainers, and well-organized FAQ sections are cited more frequently than generic blog posts. Content that reads like an authoritative reference tends to outperform content optimized purely for keyword rankings.

Does being mentioned in Perplexity help my Google rankings?

Not directly. Perplexity citations don’t function as backlinks in Google’s ranking algorithm. However, the content qualities that earn Perplexity citations — authority, clarity, structured data, original research — also strengthen traditional SEO performance. Building for AI citation-worthiness and building for Google visibility are increasingly the same discipline.

Your Next Steps

Perplexity monitoring doesn’t require expensive tools or complex infrastructure to start. It requires a systematic approach and consistent execution.

This week: Build a prompt library of 25–30 queries using real buyer questions from your sales team, PPC data, and support logs. Run your first tracking session in an incognito browser. Record mentions, citations, links, competitors, and accuracy scores in a structured spreadsheet.

This month: Establish a weekly cadence for your top 10 prompts and a monthly full audit. Calculate your mention rate, citation rate, and share of voice. Identify which domains Perplexity cites as authorities in your category.

Ongoing: Use your monitoring data to prioritize content creation. Build citation-worthy pages that address the queries where you’re invisible. Earn coverage on the sources Perplexity trusts. Measure the impact in your next tracking cycle.

For a current view of how your brand appears across Perplexity, ChatGPT, Gemini, and other AI platforms, see where your brand stands in AI search.