Your competitor shows up when a buyer asks ChatGPT for the best tool in your category. You don’t. The reason often sits inside G2 review data you’ve never read carefully. G2 AEO insights are the patterns inside G2’s category rankings, review language, and comparison pages that predict whether AI models will cite your brand when buyers ask for recommendations. Read them right and you find the exact gaps costing you citations. Read them wrong and you chase star ratings while your rivals own the answer.

What G2 AEO Insights Actually Mean

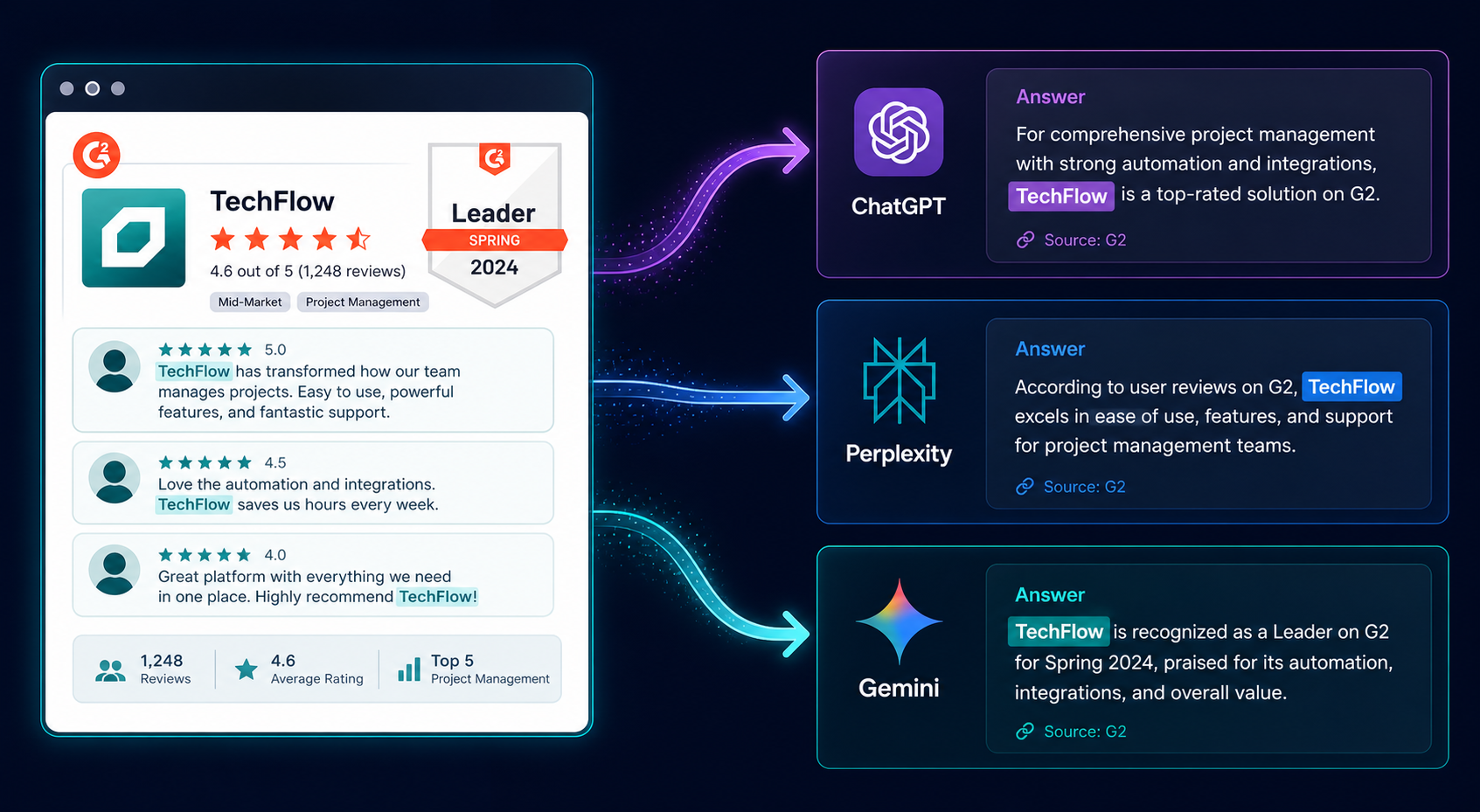

G2 is one of the most cited B2B review sources inside ChatGPT, Perplexity, and Gemini responses. When a buyer asks an AI model to compare answer engine optimization tools, the model leans on G2 category pages, badge winners, and reviewer phrasing to construct its answer.

That makes G2 a signal layer, not a destination.

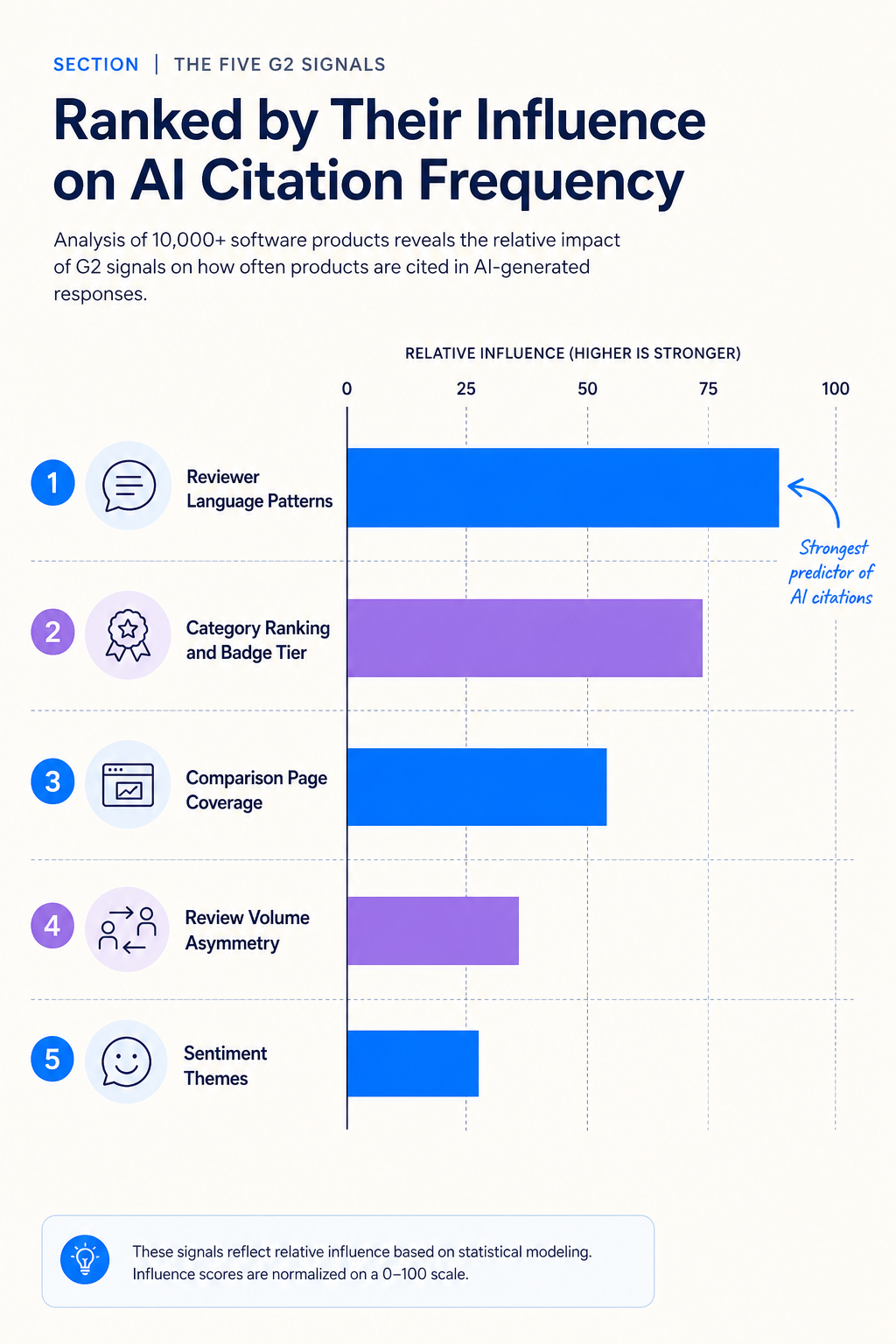

An AEO insight from G2 is any pattern in that signal layer you can act on. Five matter most:

- Category ranking position and badge status inside the answer engine optimization category

- Reviewer language that mirrors how buyers prompt AI tools

- Competitor comparison pages and how often your brand appears alongside others

- Review volume gaps between you and the category leader

- Sentiment themes that surface in AI-generated tool summaries

Each of these influences what an AI model says about you. None of them appear on a standard SEO dashboard.

Why G2 Sits So Close to the AI Answer Layer

AI models prefer sources buyers already trust. G2 carries millions of verified reviews, structured comparison pages, and category taxonomies that map cleanly onto buyer prompts. That structure is easy for a retrieval system to parse.

G2 launched its answer engine optimization category in late 2025. It now holds hundreds of listings. Buyers searching for AEO tools through AI assistants get answers shaped by who ranks well inside that category.

G2’s own 2026 buyer research found that 51% of B2B software buyers now start research with AI tools more often than Google. Review site citations were the most confidence-inspiring trust signal when those buyers evaluated an AI answer. G2’s 2026 AI search insight report documents the shift in detail.

So when an AI model recommends a tool in your category, two things have usually happened. The model retrieved a G2 page during answer construction. And the buyer mentally checked for review-site backing before trusting the recommendation.

Both happen invisibly. Both decide whether you get the click.

The Five G2 Signals AI Models Read Most Often

Most teams obsess over star ratings. Star ratings barely move AI citations. Five other signals do.

Category Ranking and Badge Tier

AI models cite Leaders and High Performers more than Contenders. When a model summarizes “the top AEO tools,” it reaches for Grid Leaders first. If your badge tier drops between quarterly reports, your AI mention frequency drops with it.

Check your placement on the live answer engine optimization category page. If you sit below the fold or in a lower tier, you’re competing with sources the model already pre-ranked above you.

Reviewer Language Patterns

Read the verbatim language reviewers use inside your top 20 G2 reviews. Then read the language used in your competitors’ top 20.

What you’re looking for: which phrases buyers actually type into ChatGPT when they search for tools in your category.

If reviewers describe your competitor as “the best tool for tracking brand mentions in ChatGPT” and reviewers describe you as “great customer support and easy onboarding,” the model will cite your competitor when someone asks about tracking AI mentions. Reviewer phrasing trains the answer.
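One rough way to surface that language gap is to count the short phrases that appear in a competitor's reviews but never in yours. The sketch below is illustrative only: the function names `ngrams` and `phrase_gap` are hypothetical, the three-word phrase length is an assumption, and it expects review text you have already copied out of G2 by hand.

```python
import re
from collections import Counter


def ngrams(text, n=3):
    """Split a review into lowercase words and return every run of n words."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]


def phrase_gap(your_reviews, competitor_reviews, n=3, top=5):
    """Return the most frequent competitor phrases that never appear in your reviews."""
    yours = Counter(p for review in your_reviews for p in ngrams(review, n))
    theirs = Counter(p for review in competitor_reviews for p in ngrams(review, n))
    # Keep only phrases that are absent from your own review text.
    gap = Counter({p: count for p, count in theirs.items() if p not in yours})
    return gap.most_common(top)
```

Raw phrase counts are noisy. Treat the output as a reading list of phrases to check against real buyer prompts, not as a score.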

Competitor Comparison Page Coverage

Every G2 comparison page (yours vs. a competitor) is an AI-citable asset for head-to-head prompts. If your competitor has 40 comparison pages and you have 8, the model has five times as much material for constructing comparison answers that exclude you.

List every comparison page where your brand appears. Then list every page where a peer in your category appears that doesn’t include you. That second list is your visibility gap.
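If you keep the comparison pages in a spreadsheet, the two lists reduce to a simple filter. This is a minimal sketch, assuming each page is recorded as a pair of brand names. The function name `comparison_gap` and the data shape are hypothetical, not anything G2 provides.

```python
from collections import Counter


def comparison_gap(your_brand, comparison_pages):
    """Split comparison pages into those that include your brand and those that don't.

    comparison_pages is a list of (brand_a, brand_b) tuples collected by hand.
    """
    including_you = [page for page in comparison_pages if your_brand in page]
    excluding_you = [page for page in comparison_pages if your_brand not in page]
    # Count how often each peer shows up on pages that leave you out.
    peer_counts = Counter(brand for page in excluding_you for brand in page)
    return including_you, excluding_you, peer_counts
```

The peers with the highest counts in the third return value are the ones building the most head-to-head material without you.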

Review Volume Asymmetry

Two tools can both be Leaders. One has 800 reviews. One has 80. The model sees both, but the 800-review tool carries more retrieval weight because it has more text for the system to ground against.

This isn’t about social proof for human readers. It’s about how much signal exists for a model to pull from.

Sentiment Themes That Surface in AI Summaries

When ChatGPT summarizes a tool, it pulls common sentiment themes from across reviews. “Easy to set up” and “powerful for tracking AI citations” and “lacks advanced reporting” all surface differently in answers.

If the dominant sentiment theme in your G2 reviews is “good support,” the AI model will summarize you as a support-strong tool, not a category-leading visibility tool. Theme drift in reviews becomes theme drift in AI citations.

How to Extract These Insights From Your G2 Profile

You don’t need a special tool to start. You need 90 minutes and a spreadsheet.

Pull the following data from G2 manually for your brand and your top three competitors:

- Current badge tier in the answer engine optimization category and any adjacent category you compete in

- Total review count and review velocity over the last 90 days

- Top 20 most recent reviews, copied verbatim, with sentiment tagged

- Every comparison page URL where your brand or a competitor’s brand appears

- The three most common phrases used across reviewer “What do you like best?” answers

Now compare row by row. The gaps surface within 20 minutes of reading.

One pattern shows up almost every time we run this exercise for a client. The brand with the highest star rating is rarely the brand AI cites most. The brand cited most is the one whose reviewer language matches buyer prompts.

What G2 AEO Insights Don’t Tell You

G2 data is one signal source. It’s not the whole picture.

G2 won’t tell you which AI surfaces are actually citing you right now. For that, you need a separate tracker that runs prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews and logs who gets mentioned.

G2 also won’t tell you about citations on external publications, podcasts, or Reddit threads, all of which AI models pull from. A great G2 profile with zero presence in industry editorial coverage still loses to a competitor with a moderate G2 profile and strong editorial citations across tier one and tier two publications.

And G2 won’t tell you whether your brand is mentioned correctly. Sentiment drift, factual errors, and outdated comparisons inside AI answers need their own audit. That’s a separate workflow tied to tracking brand mentions in AI search results.

Treat G2 insights as one input in a three-source view: review platforms, editorial citations, and live AI mention tracking. Any one source alone misleads.

Turning G2 Gaps Into AI Citation Lifts

Reading the data isn’t the win. Acting on it is.

Once you’ve mapped your gaps, three moves tend to compound fastest:

Run a Targeted Review Campaign Aimed at Buyer Prompt Language

Most review campaigns ask customers to leave a review. Better campaigns ask customers to describe a specific outcome in language buyers actually use when prompting AI.

If buyers prompt ChatGPT with “best tool for tracking brand mentions in AI,” you want reviews containing that exact framing. Send your most engaged customers a short prompt structure: “Describe the specific problem we solved and the result you got, in one or two sentences.” That phrasing produces review text that mirrors buyer search behavior.

Don’t fake reviews. Don’t script them. Coach the framing.

Build Comparison Page Coverage Strategically

G2 generates comparison pages based on review patterns and category co-occurrence. You can’t directly request a comparison page, but you can influence which competitors G2 pairs you with by encouraging reviewers to mention specific alternatives they evaluated.

Pick the three competitors you most want comparison page presence against. Then ask recent customers to note which alternatives they considered when leaving their review.

Layer Editorial Citations on Top of G2 Presence

G2 alone caps your AI citation rate. The brands cited most often pair G2 leadership with consistent presence in editorial publications AI models read during training and retrieval.

That’s where building a citation network across high-authority publications becomes the multiplier. G2 makes you defensible at the comparison stage. Editorial citations make you discoverable at the recommendation stage.

How Often to Re-Read Your G2 AEO Signals

Quarterly is the minimum. Monthly is better if you’re in an active category.

Three triggers should prompt an immediate re-read:

- A new G2 quarterly report drops in your category

- A competitor publishes a major product release or funding announcement

- Your AI citation tracker shows a sudden drop in mention frequency on one platform

The third trigger matters most. AI citation drops are rarely random. They usually trace back to a shift on a source the model trusts, and G2 is one of the first places to check.
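What counts as a sudden drop depends on your tracker, so the check below is only a sketch. It assumes you log a mention rate per platform for each tracking run, and it flags any platform whose latest rate falls well below its recent average. The function name `flag_drops`, the 30% threshold, and the four-run baseline are all hypothetical choices to tune.

```python
from statistics import mean


def flag_drops(mention_rates, drop_threshold=0.3, baseline_runs=4):
    """Flag platforms whose latest mention rate fell sharply against recent runs.

    mention_rates maps a platform name to a list of rates, oldest first.
    Returns a dict of platform -> relative change (for example, -0.6 for a 60% drop).
    """
    flagged = {}
    for platform, rates in mention_rates.items():
        if len(rates) < 2:
            continue  # Not enough history to compare against.
        *history, latest = rates
        baseline = mean(history[-baseline_runs:])
        if baseline == 0:
            continue  # No prior mentions, so there is nothing to drop from.
        change = (latest - baseline) / baseline
        if change <= -drop_threshold:
            flagged[platform] = round(change, 2)
    return flagged
```

A flagged platform is a prompt to re-read your G2 signals, not proof that G2 caused the change.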

Frequently Asked Questions

Do G2 reviews actually influence what ChatGPT recommends?

Yes, but indirectly. ChatGPT and other AI models retrieve G2 pages during answer construction for B2B software categories. Reviewer language, badge tier, and comparison page coverage all shape how the model summarizes a tool. The connection is observable when you run the same prompt before and after a major shift in your G2 presence.

How many G2 reviews do I need to show up in AI answers?

There’s no fixed threshold. What matters more is review velocity, reviewer language alignment with buyer prompts, and category badge tier. A tool with 80 well-phrased reviews and Leader status often outperforms a tool with 400 generic reviews and Contender status in AI citations.

Is G2 the only review source AI models cite for B2B software?

No. Capterra, TrustRadius, and Gartner Peer Insights all appear in AI answers, though G2 carries the most weight in most B2B SaaS categories. The AEO category specifically leans heavily on G2 because the category originated there.

Can I improve my G2 AEO presence without paying for G2 advertising?

Yes. Organic improvements come from review velocity, reviewer language coaching, and getting customers to mention specific competitors when evaluating you. Paid G2 placements help with category visibility but don’t change the underlying review signal AI models read.

What’s the fastest G2 insight I can act on this week?

Read your last 20 reviews and your top competitor’s last 20 reviews side by side. Find the three phrases your competitor’s reviewers use that yours don’t. Send a short outreach to five engaged customers asking them to describe, in their own words, the specific problem you solved and the result they got. Those new reviews start shifting your reviewer language within a quarter.

Where This Leaves Your Next 30 Days

Open G2 right now and ask ChatGPT to recommend the top tools in your category. Compare the answer to where you actually sit on the G2 category page. If those two views don’t match, you’ve found your starting point. The brands winning AI citations in 2026 aren’t the ones with the highest star ratings. They’re the ones whose review signal, comparison coverage, and editorial presence all tell the same story to a model that has to pick one answer.

See where your brand stands in AI search and get a free audit of your G2 signal alongside your live citation footprint across ChatGPT, Perplexity, and Gemini.
