Your SEO dashboard says rankings are up. Organic traffic is steady. CTR looks healthy. And your CEO just asked why a competitor keeps showing up when she asks ChatGPT for vendors in your category, and you don’t. That gap between “the dashboard looks fine” and “we’re invisible where buyers are actually researching” is the entire story of AI visibility vs SEO metrics. SEO metrics measure how Google’s index treats your pages. AI visibility metrics measure how language models treat your brand. They’re related, but they’re not the same thing, and tracking only one in 2026 means you’re flying half-blind.

This piece breaks down what each set of metrics actually measures, where they overlap, where they diverge, and the dashboard you should be running this quarter.

The Short Version

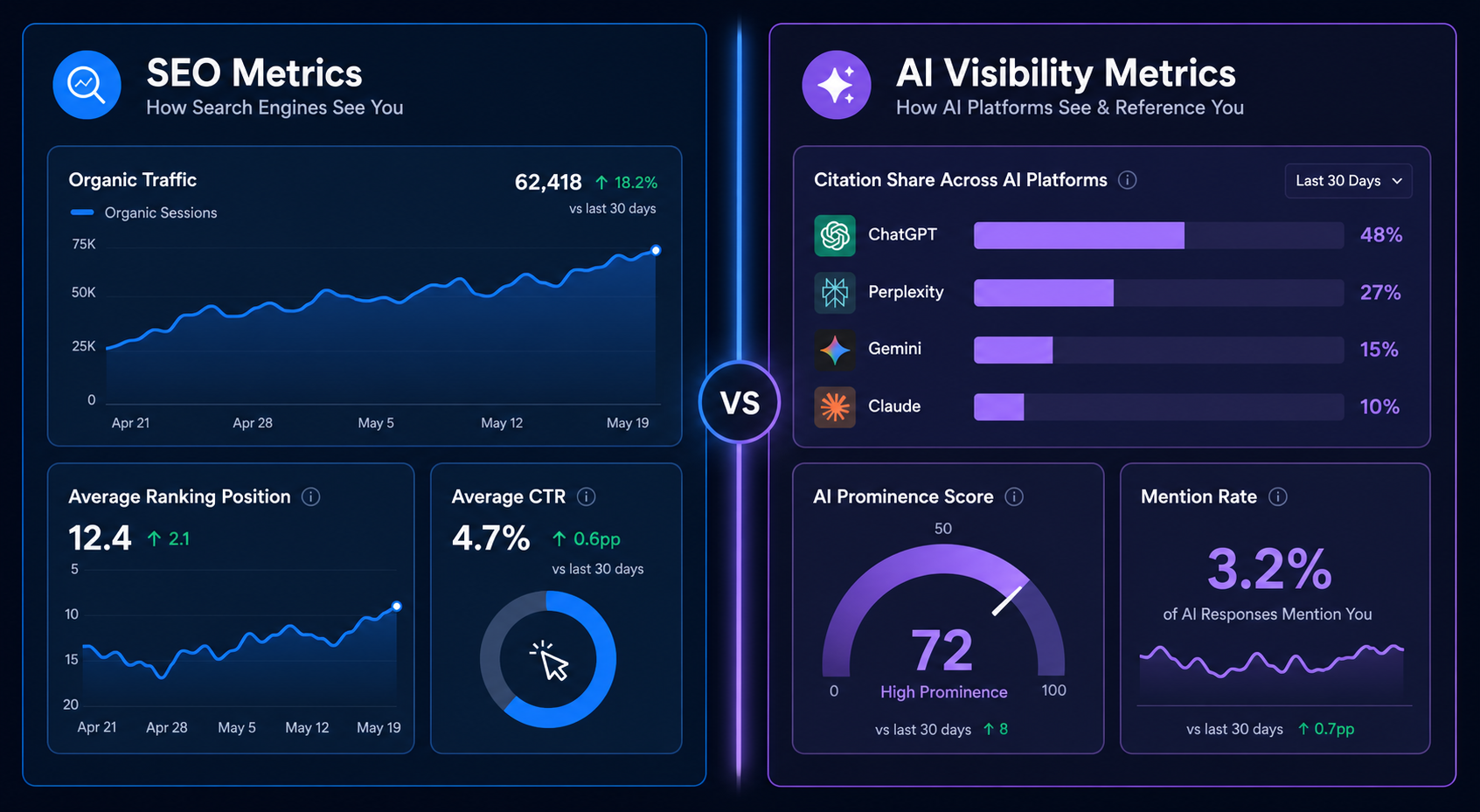

- SEO metrics measure page-level performance in Google’s index: rankings, impressions, clicks, organic traffic, CTR, backlinks.

- AI visibility metrics measure brand-level presence in AI-generated answers: citation share, mention rate, prominence, sentiment, share of voice across ChatGPT, Perplexity, Gemini, and Claude.

- The overlap is smaller than most teams assume. One study found only 17% agreement on brand recommendations across major AI platforms, and a separate analysis showed only ~12% of LLM-cited URLs appear in Google’s top 10.

- You need both. SEO still drives the majority of measurable traffic. AI visibility drives the new top of the funnel: the recommendations buyers see before they ever land on a SERP.

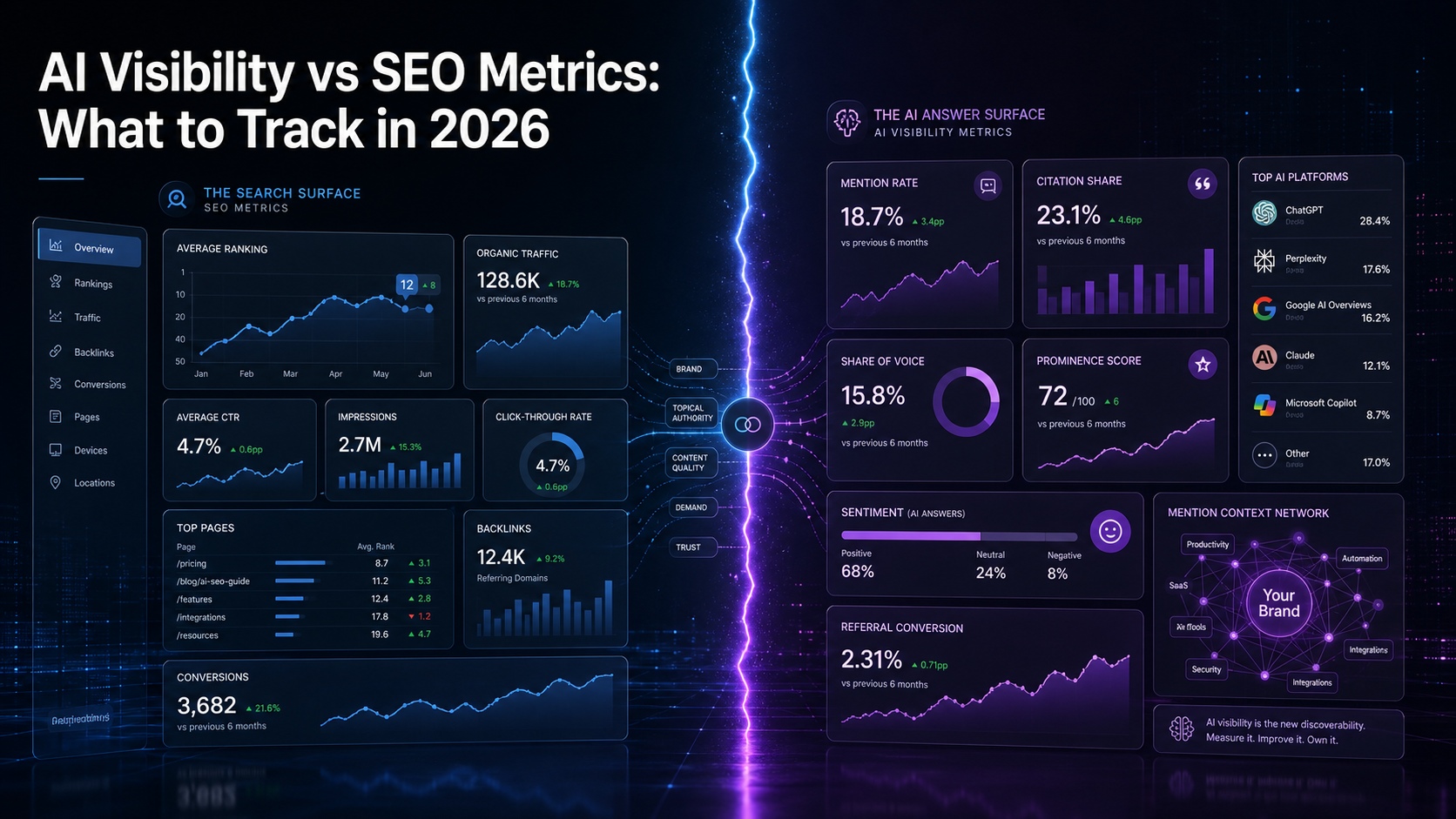

- The dashboard that works in 2026 pairs 4 SEO metrics with 6 AI visibility metrics, refreshed on different cadences.

What SEO Metrics Actually Measure

SEO metrics describe what Google’s index is doing with your pages. That’s it. They’re page-level, query-level, and link-level signals, refined over twenty years and well understood.

The core SEO metrics still worth tracking in 2026:

- Keyword rankings. Where each page sits in the SERP for a tracked query.

- Organic impressions and clicks. Reported in Google Search Console: how often pages surface in results and how often they’re clicked.

- Click-through rate (CTR). Clicks divided by impressions. Drops here often signal AI Overview cannibalization.

- Organic sessions. Sessions attributed to organic search in your analytics platform.

- Backlinks and referring domains. The link graph. Still meaningful, still imperfect.

- Indexed pages and crawl health. Whether Google can find, render, and index your content.

- Conversions from organic. Pipeline impact: the metric that actually matters to the CFO.

These are real, useful, and not going anywhere. The issue is that they describe a shrinking surface. Zero-click search rose from 56% to 69% between 2024 and 2025, and Gartner forecasts a 25% drop in search engine volume by 2026 as buyers shift to AI assistants. Your SEO metrics can hold steady while your category awareness erodes. That’s the gap AI visibility metrics fill.

What AI Visibility Metrics Actually Measure

AI visibility metrics describe how language models talk about your brand when buyers ask them questions. They’re brand-level, prompt-level, and platform-level, and they behave nothing like SEO metrics.

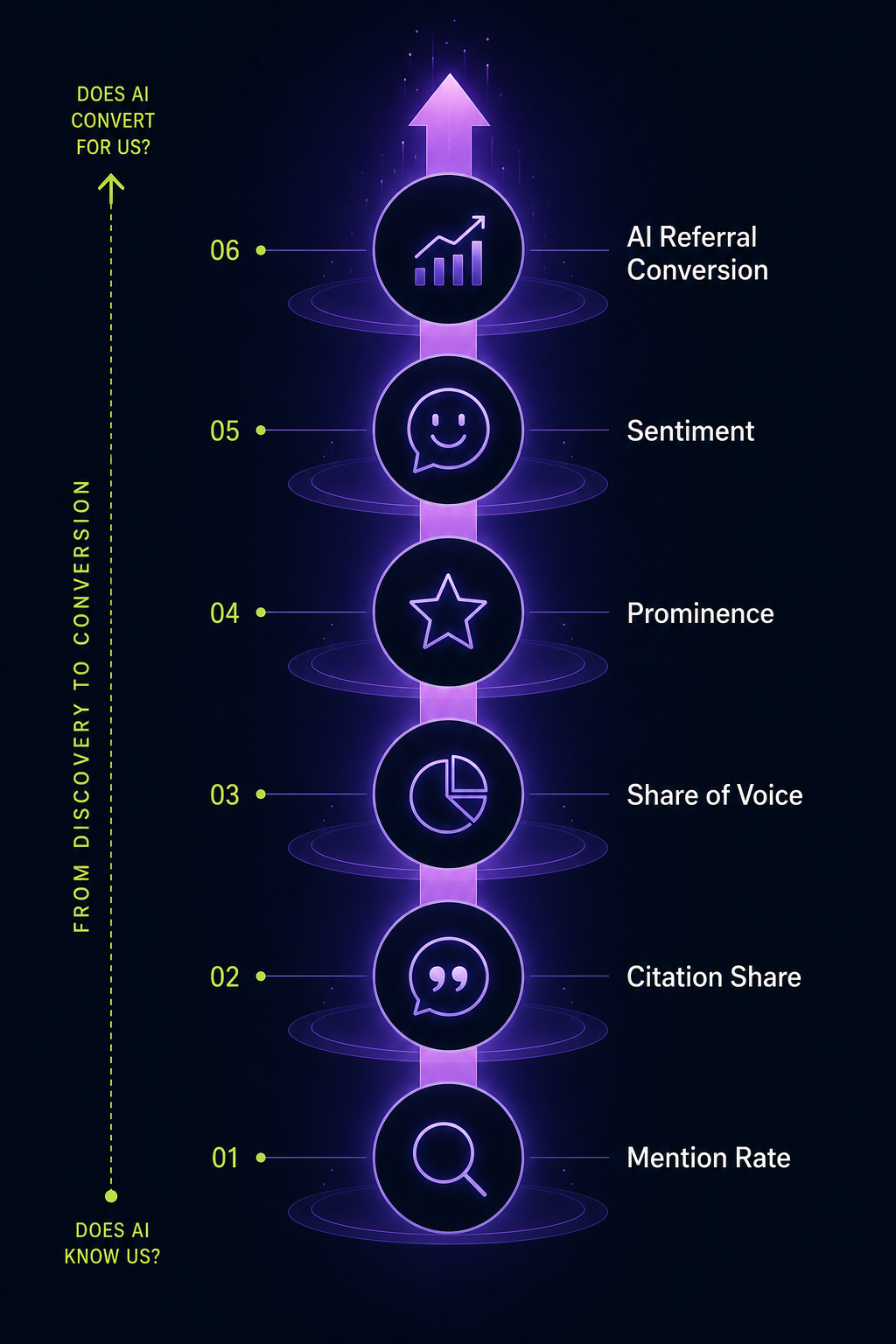

Six metrics matter most:

1. Mention Rate

The percentage of relevant prompts where your brand is named in the response. If you run 100 prompts about “best brand monitoring tools for B2B” across ChatGPT, Perplexity, Gemini, and Claude, and your brand appears in 23 of them, your mention rate is 23%. This is the closest equivalent to “rankings”, but it measures whether you’re named, not where you sit on a page.
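As a minimal sketch, assume you have already collected the raw response text for each prompt run. Mention rate is then a simple hit count. The brand names, responses, and helper names (`mentions_brand`, `mention_rate`) are hypothetical. Exact whole-word matching is a simplification: a real tracker would also need to handle aliases, product names, and misspellings.

```python
import re


def mentions_brand(response: str, brand: str) -> bool:
    """True if the brand name appears as a whole word (case-insensitive)."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def mention_rate(responses: list[str], brand: str) -> float:
    """Percentage of responses that name the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(mentions_brand(r, brand) for r in responses)
    return 100.0 * hits / len(responses)


# Hypothetical data: 100 responses, 23 of which name "Acme".
responses = (
    ["Top picks: Acme, Globex, and Initech."] * 23
    + ["Top picks: Globex and Initech."] * 77
)
print(mention_rate(responses, "Acme"))  # 23.0
```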

2. Citation Share

When AI responses include source links (Perplexity, Google AI Mode, ChatGPT with search), citation share measures the percentage of those source slots your domain occupies. This is where backlink thinking partially survives: domains AI engines treat as authoritative get cited more often. Research from SE Ranking found roughly 71% of pages cited by ChatGPT include structured data, suggesting that machine-readable content earns more slots.
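Here is a rough sketch of the citation-share arithmetic. It rests on two assumptions, neither of which is a standard definition: each cited URL in an answer’s source list counts as one slot, and subdomains of your domain count as yours. The URLs and helper names are hypothetical.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Lower-cased hostname with any leading 'www.' removed."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def citation_share(sources_per_answer: list[list[str]], domain: str) -> float:
    """Percentage of all source slots, across answers, that point at `domain`."""
    slots = [domain_of(url) for urls in sources_per_answer for url in urls]
    if not slots:
        return 0.0
    # Count the bare domain and any of its subdomains as "ours".
    ours = sum(1 for d in slots if d == domain or d.endswith("." + domain))
    return 100.0 * ours / len(slots)


# Hypothetical source lists from three answers: 10 slots, 2 on example.com.
sources = [
    ["https://www.example.com/guide", "https://g2.com/x", "https://reddit.com/r/y"],
    ["https://competitor.io/a", "https://blog.example.com/post",
     "https://g2.com/z", "https://news.example.org/n"],
    ["https://competitor.io/b", "https://reddit.com/r/q", "https://wiki.example.net/w"],
]
print(citation_share(sources, "example.com"))  # 20.0
```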

3. Share of Voice in AI Answers

How much of the answer your brand owns relative to competitors. If five brands get mentioned in a response and yours gets the longest explanation, your share of voice is higher than the mention count alone suggests. We cover the full measurement framework in our guide to share of voice in AI search.
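There is no single standard formula for this metric. The sketch below shows one possible approximation: it credits each sentence’s word count to every brand that sentence names, so a brand with a longer explanation earns a larger share. The weighting scheme, brand names, and `share_of_voice` helper are all hypothetical.

```python
import re


def share_of_voice(response: str, brands: list[str]) -> dict[str, float]:
    """Approximate each brand's share of an answer by words of coverage.

    Each sentence's word count is credited to every brand it names; a brand's
    share is its credited words divided by all credited words, as a percentage.
    """
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    credit = {brand: 0 for brand in brands}
    for sentence in sentences:
        n_words = len(sentence.split())
        for brand in brands:
            if re.search(r"\b" + re.escape(brand) + r"\b", sentence, flags=re.IGNORECASE):
                credit[brand] += n_words
    total = sum(credit.values())
    return {b: (100.0 * c / total if total else 0.0) for b, c in credit.items()}


answer = (
    "Acme is the strongest option for large teams and has deep integrations. "
    "Globex is cheaper. Initech is a solid alternative."
)
print(share_of_voice(answer, ["Acme", "Globex", "Initech"]))
# {'Acme': 60.0, 'Globex': 15.0, 'Initech': 25.0}
```

All three brands are mentioned once, but Acme’s longer description gives it the largest share. That is the gap between mention count and share of voice described above.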

4. Prominence

Where your brand appears within the response. The first slot in a list of five carries more weight than the fifth. One analysis from Position Digital found that 44.2% of LLM citations come from the first 30% of source text; position matters in what gets cited, much as it matters on a SERP.
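Here is a minimal sketch of scoring prominence from response text. It assumes mention order is set by where each brand name first appears in the text. The brand names and responses are hypothetical, and `first_mention_rate` and `mean_position` are illustrative helpers, not any tool’s API.

```python
import re
from typing import Optional


def mention_order(response: str, brands: list[str]) -> list[str]:
    """Brands named in the response, sorted by where each is first mentioned."""
    first_seen = {}
    for brand in brands:
        match = re.search(r"\b" + re.escape(brand) + r"\b", response, flags=re.IGNORECASE)
        if match:
            first_seen[brand] = match.start()
    return sorted(first_seen, key=first_seen.get)


def first_mention_rate(responses: list[str], brand: str, brands: list[str]) -> float:
    """Of the responses that name `brand`, the percentage that name it first."""
    orders = [mention_order(r, brands) for r in responses]
    named = [order for order in orders if brand in order]
    if not named:
        return 0.0
    return 100.0 * sum(order[0] == brand for order in named) / len(named)


def mean_position(responses: list[str], brand: str, brands: list[str]) -> Optional[float]:
    """Average 1-based mention position of `brand` in responses that name it."""
    positions = [
        order.index(brand) + 1
        for order in (mention_order(r, brands) for r in responses)
        if brand in order
    ]
    return sum(positions) / len(positions) if positions else None


brands = ["Acme", "Globex", "Initech"]
responses = [
    "Acme, Globex, Initech.",
    "Globex, then Acme.",
    "Initech and Globex.",
    "Acme leads; Initech follows.",
    "Globex, Initech, Acme.",
]
print(first_mention_rate(responses, "Acme", brands))  # 50.0
print(mean_position(responses, "Acme", brands))  # 1.75
```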

5. Sentiment

How the AI describes your brand. Neutral, positive, negative, or qualified (“X is strong for enterprise but expensive for small teams”). Sentiment shifts when third-party content shifts. Reddit threads, G2 reviews, and trade publications move this number more than your own site does.

6. AI Referral Traffic and Conversion

The traffic AI platforms send to your site, and how it converts. A widely cited Seer Interactive study showed ChatGPT referrals converting at 15.9% versus Google organic at 1.76%. Low volume, high intent. Worth tracking, but never the headline number: most AI influence happens in conversations the user never clicks out of.

Where SEO and AI Visibility Metrics Overlap

They overlap in inputs more than outputs. The things that improve both:

- Topical authority. Deep coverage of a subject helps Google rank you and helps AI engines treat your domain as a source.

- Structured data and clean technical setup. Schema, semantic HTML, and crawlable architecture help Google’s bots and help LLM crawlers extract content reliably.

- Backlinks from authoritative publications. Editorial mentions in trusted publications strengthen your link graph for SEO and your entity authority for AI engines.

- E-E-A-T signals. Author bylines, real expertise, original data. Google rewards these signals in rankings, and AI engines cite sources that show them more often.

But the outputs diverge fast. A page can rank #1 on Google and never appear in a single AI response. A brand can dominate ChatGPT recommendations while ranking on page two for its core terms. One independent analysis found only ~12% of URLs cited by LLMs appear in Google’s top 10. The overlap is real but partial.

Where the Metrics Actually Diverge

Five places the two systems part ways, and why each divergence matters.

Page vs. Brand as the Unit of Measurement

SEO measures pages. A specific URL ranks for a specific query. AI visibility measures the brand entity. ChatGPT doesn’t cite your blog post URL when it recommends a vendor; it names your company. This changes what you optimize. SEO rewards page-by-page craft. AI visibility rewards entity-wide consistency across every source AI models read.

Determinism vs. Probability

Google rankings are deterministic within a session: the same query from the same location returns the same SERP. AI responses are probabilistic. A SparkToro analysis of nearly 3,000 prompts found fewer than 1 in 100 runs produced the same brand list, and fewer than 1 in 1,000 produced the same list in the same order. You can’t “rank” in an AI response. You can only raise the probability of being named.
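To see this variability in your own data, one option is to run the same prompt several times and compare the brand lists. The sketch below is not SparkToro’s methodology. It is a hypothetical pairwise comparison, plus a per-brand mention probability, which is the quantity you can actually move. The run data and helper names are invented for illustration.

```python
from collections import Counter
from itertools import combinations


def pairwise_agreement(runs: list[list[str]]) -> tuple[float, float]:
    """Fraction of run pairs with the same brand set, and with the same ordered list."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        return 0.0, 0.0
    same_set = sum(set(a) == set(b) for a, b in pairs) / len(pairs)
    same_order = sum(a == b for a, b in pairs) / len(pairs)
    return same_set, same_order


def mention_probability(runs: list[list[str]]) -> dict[str, float]:
    """Share of runs in which each brand is named at least once."""
    counts = Counter(brand for run in runs for brand in set(run))
    return {brand: n / len(runs) for brand, n in counts.items()}


# Hypothetical brand lists from four runs of the same prompt.
runs = [
    ["Acme", "Globex", "Initech"],
    ["Globex", "Acme", "Initech"],
    ["Acme", "Globex", "Initech"],
    ["Acme", "Umbrella"],
]
print(pairwise_agreement(runs))  # (0.5, 0.16666666666666666)
probs = mention_probability(runs)
print(probs["Acme"], probs["Umbrella"])  # 1.0 0.25
```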

Crawl Cycles vs. Training and Retrieval

SEO operates on crawl-index cycles measured in days. AI operates on a mix of training data (refreshed every 6–18 months depending on the model) and real-time retrieval (live on every query, in tools like Perplexity and ChatGPT Search). A new page can rank on Google in a week. A new brand can take a full training cycle to enter a model’s recommendations, unless retrieval-based sources cite it first.

Backlinks vs. Mentions

SEO weights backlinks heavily. AI engines weight mentions, linked or unlinked. A brand named in 200 articles without a single link can earn AI citation share that no backlink strategy alone would produce. Our breakdown of brand mentions vs backlinks covers this shift in detail.

Click as the Goal vs. Recommendation as the Goal

SEO wins when someone clicks. AI visibility wins when someone is told you’re the answer, whether they click or not. This is the hardest mental shift. You can be the recommendation a buyer acts on without ever appearing in their browser history.

| Dimension | SEO Metrics | AI Visibility Metrics |
|---|---|---|
| Unit measured | Page / URL | Brand / entity |
| Behavior | Deterministic per session | Probabilistic across runs |
| Update cadence | Crawl cycles (days) | Training + retrieval (mixed) |
| Authority signal | Backlinks, domain authority | Mentions, entity authority, citations |
| Success outcome | Click and session | Mention, citation, or recommendation |
| Primary tools | GSC, Ahrefs, Semrush | Citation trackers, prompt monitors |

The Dashboard That Works in 2026

You don’t need to abandon SEO tracking. You need to add a parallel layer and refresh each on its own cadence.

The working dashboard pairs four SEO metrics with six AI visibility metrics. The SEO metrics (rankings, organic clicks, CTR, and conversions from organic) get refreshed weekly. The AI visibility metrics (mention rate, citation share, share of voice, prominence, sentiment, and AI referral conversion) get refreshed monthly because of platform volatility.

Weekly cadence (SEO)

- Tracked keyword rankings for priority queries

- Organic clicks and impressions by page

- CTR changes on AI-Overview-affected queries

- Conversions attributed to organic search

Monthly cadence (AI visibility)

- Mention rate across a fixed prompt set (50–200 prompts depending on category)

- Citation share on the platforms that show sources (Perplexity, AI Overviews, ChatGPT Search)

- Share of voice vs. your top 3 competitors

- Prominence: first-mention rate vs. later-mention rate

- Sentiment distribution across responses

- AI referral sessions and conversion rate, segmented by platform

Quarterly cadence (strategy review)

- Source-mix analysis: which third-party publications are AI engines pulling your brand from?

- Competitor source-mix gap: where do they get cited that you don’t?

- Prompt-set refresh: does the prompt set still reflect how buyers ask AI?

The Mistake Most Teams Are Making Right Now

Most marketing teams we talk to fall into one of two patterns:

Pattern A: Ignoring AI entirely. The dashboard is pure SEO. Rankings, traffic, conversions. The team knows AI is “a thing” but hasn’t built any visibility into it. Six months later, organic traffic is steady but new pipeline from category awareness has quietly dropped, and nobody can explain why because the dashboard doesn’t measure it.

Pattern B: Chasing AI metrics with SEO tactics. The team adds an AI visibility tool, sees mention rate is low, and responds by publishing more blog content. Six months later, blog output is up and mention rate hasn’t moved, because AI engines aren’t reading their blog; they’re reading G2, Reddit, trade publications, and the news. The inputs were wrong.

The fix isn’t more content. The fix is understanding that AI visibility is downstream of brand mentions across the sources AI engines actually learn from, not downstream of your editorial calendar. SEO content still belongs in your stack. It’s just not the lever that moves AI metrics.

What Each Metric Tells You to Do

Metrics that don’t drive action are reporting overhead. Here’s the action layer for each AI visibility metric:

- Low mention rate → You’re not in enough source content. Audit which publications, communities, and review sites AI engines pull from in your category. Build presence there.

- Low citation share but reasonable mention rate → AI knows you exist but isn’t linking to you. Tighten structured data (schema markup) and on-page extraction patterns. Make your pages easier to cite.

- Low prominence (always mentioned last) → Your brand is in the consideration set but not the lead recommendation. This is usually a category-authority gap: competitors are described as the default, and you’re described as an alternative. Fix it with strategic editorial placements.

- Negative or qualified sentiment → Third-party content is shaping the description. Audit Reddit threads, G2 reviews, and trade coverage. Sentiment shifts when source content shifts.

- High mention rate, low AI referral conversion → Your AI visibility is working at the awareness layer, but the on-site experience isn’t closing. It’s a standard CRO problem, just with upstream traffic from a new source.

- Low share of voice vs. competitors → Competitive citation gap. Use it to prioritize which sources to target next.

Tools That Cover Each Layer

You won’t get this from one tool. The stack splits cleanly:

- SEO layer: Google Search Console (free, mandatory), plus one of Ahrefs, Semrush, or Moz for rank tracking, backlinks, and competitor research.

- AI visibility layer: A dedicated tracker for mention rate, citation share, and prompt-level monitoring. We’ve compared the category in our review of AI visibility analytics tools and generative engine optimization tools.

- Brand mention layer: A monitoring tool that catches when and where your brand is mentioned across the web, which is the input layer for AI citations. Our roundup of brand monitoring tools tested for B2B in 2026 covers the options.

- Analytics layer: GA4 with referral source segmentation. Tag ChatGPT, Perplexity, Gemini, and Claude as distinct sources. Most teams haven’t done this, so their AI referral data is lost among generic referrals and “Direct.”
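Once AI referrals are separated out, the per-platform conversion rate is easy to compute. This sketch assumes you have exported sessions as (referrer URL, converted) pairs. The hostname-to-platform mapping is an assumption, so check it against your own referral reports. Sessions that arrive with no referrer at all cannot be attributed to a platform this way. The helper names are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Assumed referrer hostnames per platform; verify against your own GA4 referral data.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> str:
    """Map a referrer URL to an AI platform name, or 'Other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host, "Other")


def conversion_by_platform(sessions) -> dict[str, tuple[int, float]]:
    """sessions: iterable of (referrer_url, converted) pairs.

    Returns {platform: (session_count, conversion_rate_percent)}.
    """
    totals = defaultdict(int)
    conversions = defaultdict(int)
    for referrer_url, converted in sessions:
        platform = classify_referrer(referrer_url)
        totals[platform] += 1
        conversions[platform] += int(converted)
    return {p: (totals[p], 100.0 * conversions[p] / totals[p]) for p in totals}


# Hypothetical exported sessions.
sessions = [
    ("https://chatgpt.com/", True),
    ("https://chatgpt.com/", False),
    ("https://www.perplexity.ai/search", False),
    ("https://www.google.com/", False),
    ("", False),  # no referrer: cannot be attributed to any platform
]
print(conversion_by_platform(sessions))
# {'ChatGPT': (2, 50.0), 'Perplexity': (1, 0.0), 'Other': (2, 0.0)}
```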

A Note on Data Reliability

AI visibility metrics are directional, not exact. The same prompt run twice will return different responses. The same dashboard reading two weeks apart will show real shifts and noise mixed together. This is uncomfortable for teams trained on SEO’s relative precision, but it’s the reality of measuring probabilistic systems.

The right response is methodological discipline:

- Fix your prompt set. Don’t change it week to week or you can’t compare anything.

- Run each prompt multiple times per cycle (5–10 is a reasonable floor). Average the results.

- Track trends over 4–6 week windows, not week-to-week changes.

- Pair quantitative data with qualitative review: read the actual responses, not just the numbers.
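The averaging steps above can be sketched as follows. The sketch assumes a fixed prompt set, several runs per prompt, and a hit flag (brand named or not) for each run. Averaging within each prompt before averaging across prompts keeps a prompt with extra runs from dominating the result. The prompts, weekly readings, 4-week window, and helper names are hypothetical choices within the ranges given above.

```python
def cycle_mention_rate(results: dict[str, list[bool]]) -> float:
    """Mention rate for one cycle over a fixed prompt set.

    `results` maps each prompt to its per-run flags (True = brand named).
    Runs are averaged within each prompt first, then across prompts.
    """
    per_prompt = [sum(flags) / len(flags) for flags in results.values() if flags]
    if not per_prompt:
        return 0.0
    return 100.0 * sum(per_prompt) / len(per_prompt)


def trailing_average(readings: list[float], window: int) -> list[float]:
    """Trailing moving average; one value per full window of readings."""
    return [
        sum(readings[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(readings))
    ]


# Hypothetical cycle: three prompts, 10 runs each.
cycle = {
    "best brand monitoring tools for B2B": [True] * 5 + [False] * 5,
    "brand monitoring software for enterprise": [True] * 10,
    "cheapest brand monitoring tool": [False] * 10,
}
print(cycle_mention_rate(cycle))  # 50.0

# Hypothetical weekly readings, smoothed over a 4-week window.
weekly = [20.0, 30.0, 25.0, 35.0, 30.0, 40.0]
print(trailing_average(weekly, 4))  # [27.5, 30.0, 32.5]
```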

Treat the numbers as a thermometer, not a stopwatch. Trends matter. Single readings don’t.

Frequently Asked Questions

Are AI visibility metrics replacing SEO metrics?

No. They’re adding a parallel layer. SEO still drives the majority of measurable organic traffic and conversions for most B2B brands. AI visibility metrics measure a different surface: the recommendation layer that increasingly precedes a buyer’s first click. Track both.

What’s the single most important AI visibility metric to start with?

Mention rate. It’s the foundation: it answers “does AI know we exist in our category?” Once you have a baseline mention rate across your top 50–100 buyer prompts, you can layer in citation share, prominence, and sentiment. Starting with anything more advanced is premature optimization.

How often do AI visibility metrics change?

Daily, in small ways. Meaningfully, over weeks. Major shifts (a new training cycle, a platform algorithm change) happen every few months. Refresh your dashboard monthly. Don’t react to weekly noise; it’ll burn out your team and produce strategy changes based on false signals.

Does Google ranking help with AI visibility?

Partially. Research suggests roughly 12% of LLM-cited URLs appear in Google’s top 10: meaningful overlap, but not enough to assume one drives the other. Strong SEO helps with retrieval-based AI surfaces (ChatGPT Search, Perplexity) more than with training-based recall. It’s a partial input, not a sufficient one.

How do you measure AI visibility for a small brand with low mention volume?

Use a tighter prompt set: 30–50 high-intent buyer prompts instead of 200. Run each prompt 10 times instead of 5 to reduce noise. Track competitive context (who gets mentioned instead of you) so you have something to optimize toward even when your own mention rate is low. Small-brand AI visibility is a baseline-building exercise for the first 3–6 months.

What’s the relationship between backlinks and AI citations?

Correlated but not identical. Backlinks help SEO directly and help AI visibility indirectly (high-authority backlinks often come from publications AI engines also treat as sources). But AI engines weight unlinked mentions too: a brand named in 200 trade publications without a single backlink can outperform a brand with 50 backlinks from low-context sources.

Can you A/B test AI visibility changes?

Not cleanly. You can’t show one ChatGPT user a “variant A” response and another user “variant B.” What you can do is measure before-and-after on a fixed prompt set when you make a specific input change, such as a major editorial placement, a schema deployment, or a new source partnership. Hold the prompt set constant, vary the input, and measure the response shift over 30–60 days.
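Here is a minimal sketch of the before-and-after comparison. It assumes you count brand mentions over the same fixed prompt set and the same number of runs in each period. The two-proportion z-score is a standard way to ask whether a shift exceeds sampling noise; a rough rule of thumb is that |z| above about 2 is worth taking seriously. However, repeated runs of the same prompt are not fully independent, so treat the score as a rough check rather than a formal significance test. The counts and the `before_after` helper are hypothetical.

```python
from math import sqrt


def before_after(hits_before: int, n_before: int,
                 hits_after: int, n_after: int) -> tuple[float, float]:
    """Change in mention rate (percentage points) and a two-proportion z-score."""
    p1 = hits_before / n_before
    p2 = hits_after / n_after
    pooled = (hits_before + hits_after) / (n_before + n_after)
    se = sqrt(pooled * (1 - pooled) * (1 / n_before + 1 / n_after))
    z = (p2 - p1) / se if se > 0 else 0.0
    return 100.0 * (p2 - p1), z


# Hypothetical: 80 prompts x 5 runs = 400 responses in each 30-day period.
delta_pts, z = before_after(hits_before=40, n_before=400, hits_after=60, n_after=400)
print(f"{delta_pts:.1f} points, z = {z:.2f}")  # 5.0 points, z = 2.14
```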

Build the Dashboard That Sees Both Surfaces

The teams that win the next two years won’t be the ones with the best SEO dashboards or the best AI visibility dashboards. They’ll be the ones who built a single view that connects both, and who understood that page rankings and brand recommendations are two different games played at the same time. Start by adding three metrics to your existing SEO report this quarter: mention rate, citation share, and AI referral conversion. That’s the on-ramp. The rest builds from there.

Want to see how your brand currently performs across ChatGPT, Perplexity, Gemini, and Claude? Get a free AI visibility audit. We’ll benchmark your mention rate and citation share against your top three competitors and show you where the gaps are.