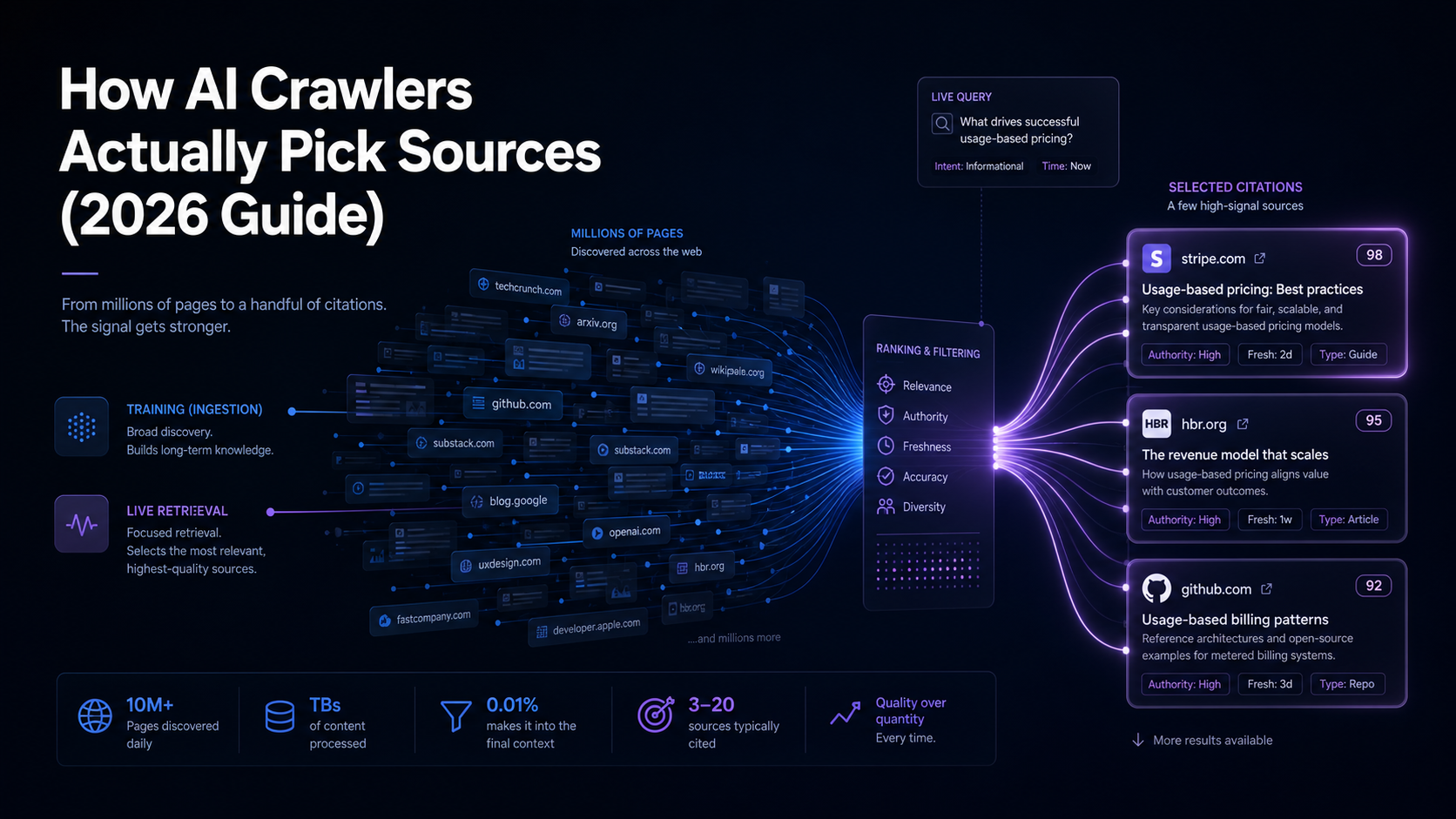

AI crawlers don’t pick sources the way Googlebot does. They run two separate jobs, training-time ingestion and live retrieval, and each one uses a different filter. The brands that show up in ChatGPT, Perplexity, Gemini, and Claude aren’t the ones publishing the most content. They’re the ones whose content survives both filters: clean to render, dense with claims, cited by trusted third parties, and present on the small set of domains these systems actually weight.

This guide breaks down the real selection logic, what each crawler reads, what it ignores, what makes a source eligible for citation, and what gets a page filtered out before the model ever sees it.

The Short Version

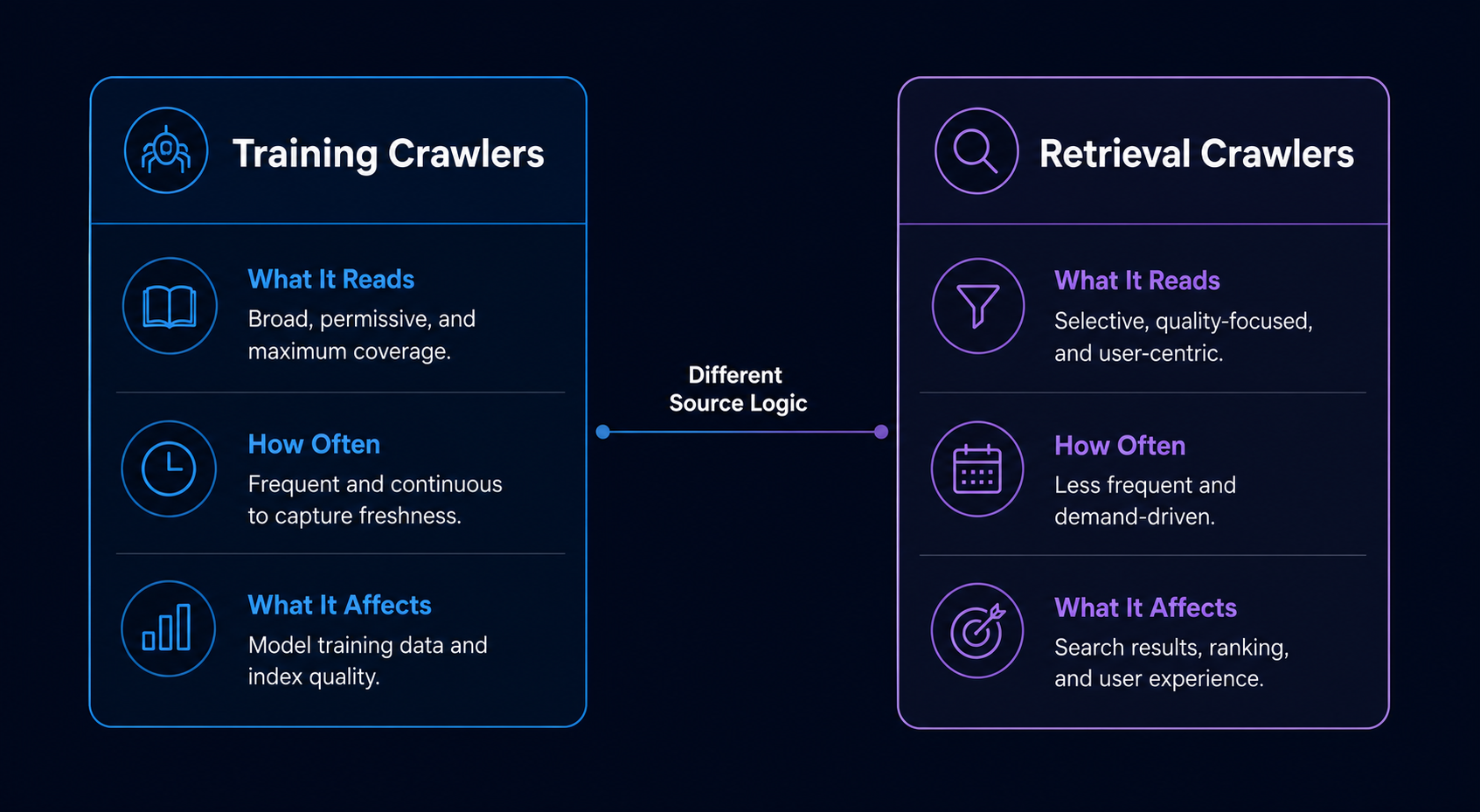

- AI crawlers split into two jobs: training crawlers (build the model) and retrieval crawlers (fetch live answers). Source-selection logic differs for each.

- Most AI crawlers strip the

<head>, convert pages to plain text, and weight body content over schema. JSON-LD helps Gemini more than ChatGPT. - Roughly half of major AI crawlers render JavaScript only briefly or not at all. JS-dependent content is often invisible.

- Retrieval systems pick sources based on freshness, authority, topical density, and whether the page directly answers the query in extractable form.

- Training systems weight Common Crawl, licensed datasets, and a narrow list of high-trust domains, most of the open web gets sampled, not absorbed.

- Brand citations cluster on roughly 200–400 domains across most B2B categories. Earning placement on that short list is the actual game.

Two Crawlers, Two Different Source Filters

The biggest misconception about AI crawlers is treating them as one thing. They aren’t. A training crawler harvesting text for the next model release behaves nothing like a retrieval crawler fetching a page to answer a question someone just typed into ChatGPT.

Training crawlers (GPTBot, ClaudeBot, Google-Extended, Meta-ExternalAgent) sweep the web for text to feed model pretraining. They prioritize scale, diversity, and content quality. They run on slow cycles, weeks to months, and the brand associations they build become baked into model weights for the life of that model version.

Retrieval crawlers (ChatGPT-User, Claude-User, PerplexityBot, OAI-SearchBot) fetch pages on demand to answer specific user queries. They prioritize freshness, relevance to the exact query, and extractability. Their output influences a single answer, not the model itself.

The source-selection logic is fundamentally different. A page can be invisible to one and dominant in the other. This is why brands that rank in Perplexity sometimes don’t appear in ChatGPT’s training-era answers, and vice versa. If you want to track which bots are hitting your site, our guide on how to track which AI bots crawl your site walks through the log analysis.

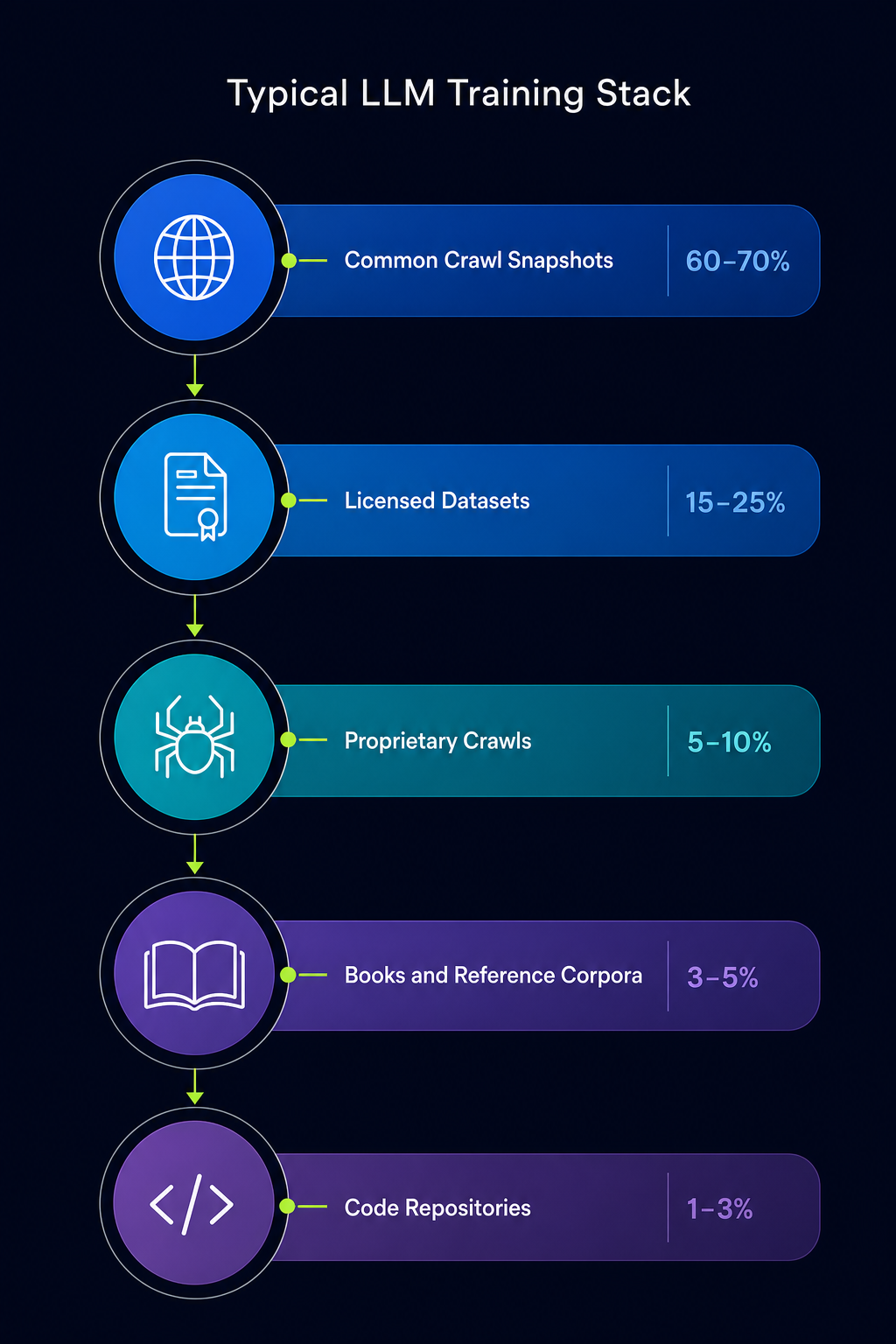

What Training Crawlers Actually Pull From

Training data for the major models doesn’t come from a fresh web crawl every time. It comes from a layered stack: Common Crawl snapshots, licensed dataset deals, proprietary scrapes, books, code repositories, and curated reference corpora like Wikipedia and academic archives.

Common Crawl is the single largest public source. Most major LLMs use filtered subsets of it, and the filters do the real work. Pages get scored on language quality, perplexity, duplicate content, toxicity, and domain authority. Low-quality pages get dropped before they ever influence the model. A page on a high-trust domain with clean prose, original claims, and few duplicates passes through. A thin SEO page on a low-trust domain doesn’t.

On top of Common Crawl, each AI company runs its own crawler (GPTBot, ClaudeBot, etc.) to fill gaps and refresh content. These crawlers also score sources, and the criteria are remarkably similar across vendors:

- Domain authority and trust signals, established publishers, .edu, .gov, established news outlets get heavier weights.

- Content uniqueness, pages with high text overlap with other indexed pages get downweighted.

- Language quality, perplexity scoring filters out keyword-stuffed or low-coherence content.

- Topical depth, pages that cover a topic with specificity outperform pages that skim it.

- Citation patterns, content that other trusted sources reference gets weighted up.

The practical implication: training crawlers don’t care how often you publish. They care whether your published content survives the quality filters and lives on a domain the filters trust. In our experience auditing B2B citation profiles, the single biggest predictor of training-era visibility is whether the brand appears on the 50–100 publications a model’s filtering pipeline trusts in that vertical, not the brand’s own content volume.

How Retrieval Crawlers Pick Sources for Live Answers

Retrieval is where most of the visibility action happens in 2026. When someone asks Perplexity or ChatGPT a question, the system does something close to this:

- Query rewriting. The system breaks the user’s question into one or more search queries, sometimes a dozen, using the model itself to expand and clarify intent.

- Search. Those queries hit a search index. Bing, Google, or a custom index, and pull a candidate pool of URLs.

- Fetch and render. A retrieval crawler grabs the top candidates, strips most of the markup, and converts each page to plain text or a structured chunk.

- Re-ranking. The system scores each chunk against the original query using a smaller, faster model. Chunks that directly answer the query in extractable form rank up.

- Generation with citation. The model writes the answer, citing the chunks it drew from.

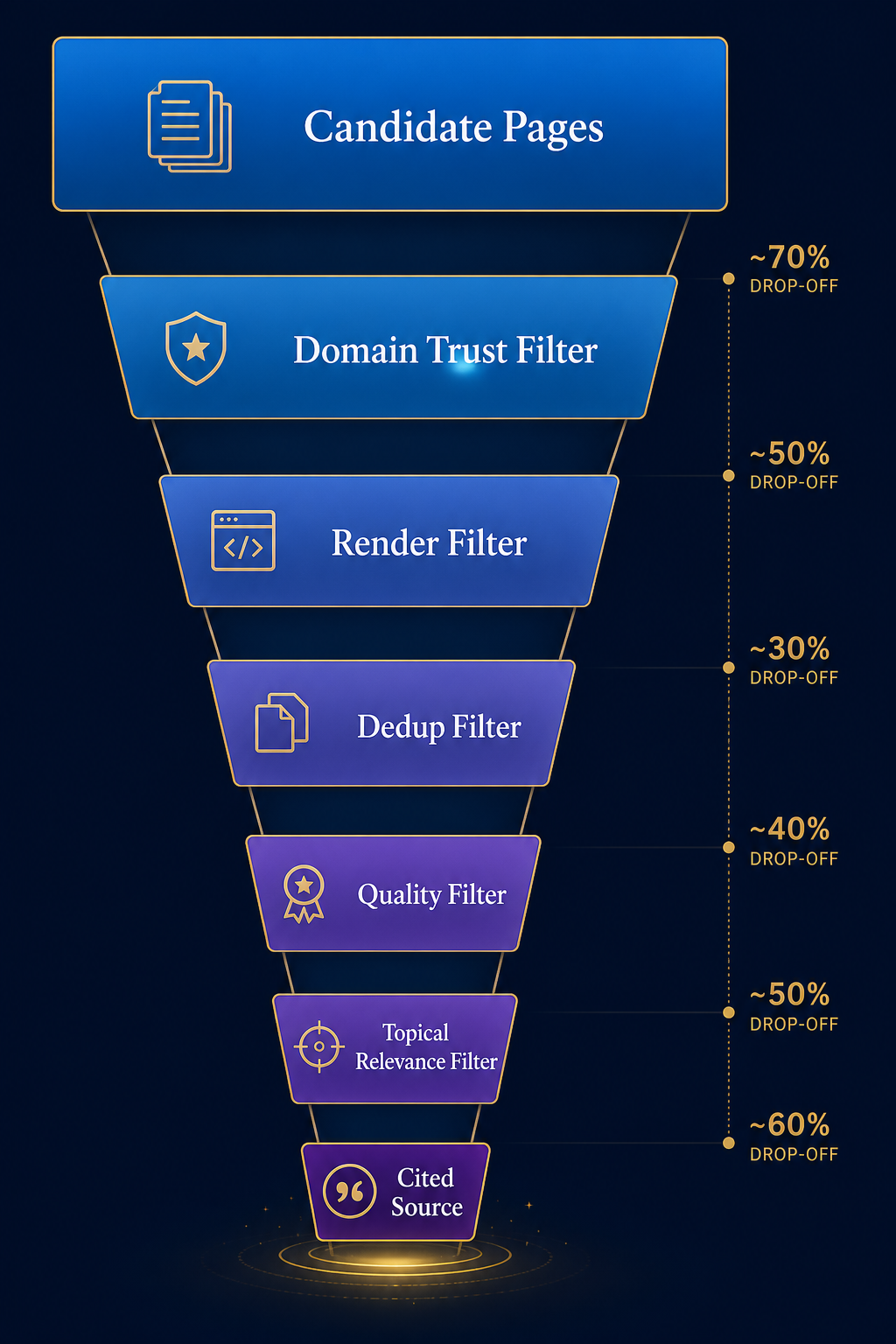

The source-selection filter at each step is brutal. A typical query starts with thousands of candidate pages and ends with two to six cited sources. The selection logic at the re-ranking step weights:

- Topical density, does this chunk answer the specific question, or does it dance around it?

- Authority signals, does the source domain have credibility signals the system trusts for this topic?

- Freshness, for time-sensitive queries, recent dates win. For evergreen queries, freshness matters less.

- Extractability, clean prose with clear claims outperforms heavy formatting or PDF-style layouts.

- Source diversity, most systems try to cite from different domains rather than stacking citations from one site.

Perplexity has been transparent about citing more sources per answer than competitors, typically four to ten, while ChatGPT and Gemini tend toward fewer. That difference matters: if you’re optimizing for Perplexity, the citation pool is wider and easier to enter. If you’re optimizing for ChatGPT, the bar is higher per query. For platform-by-platform tactics, our breakdown of what earns citations in Perplexity goes deeper.

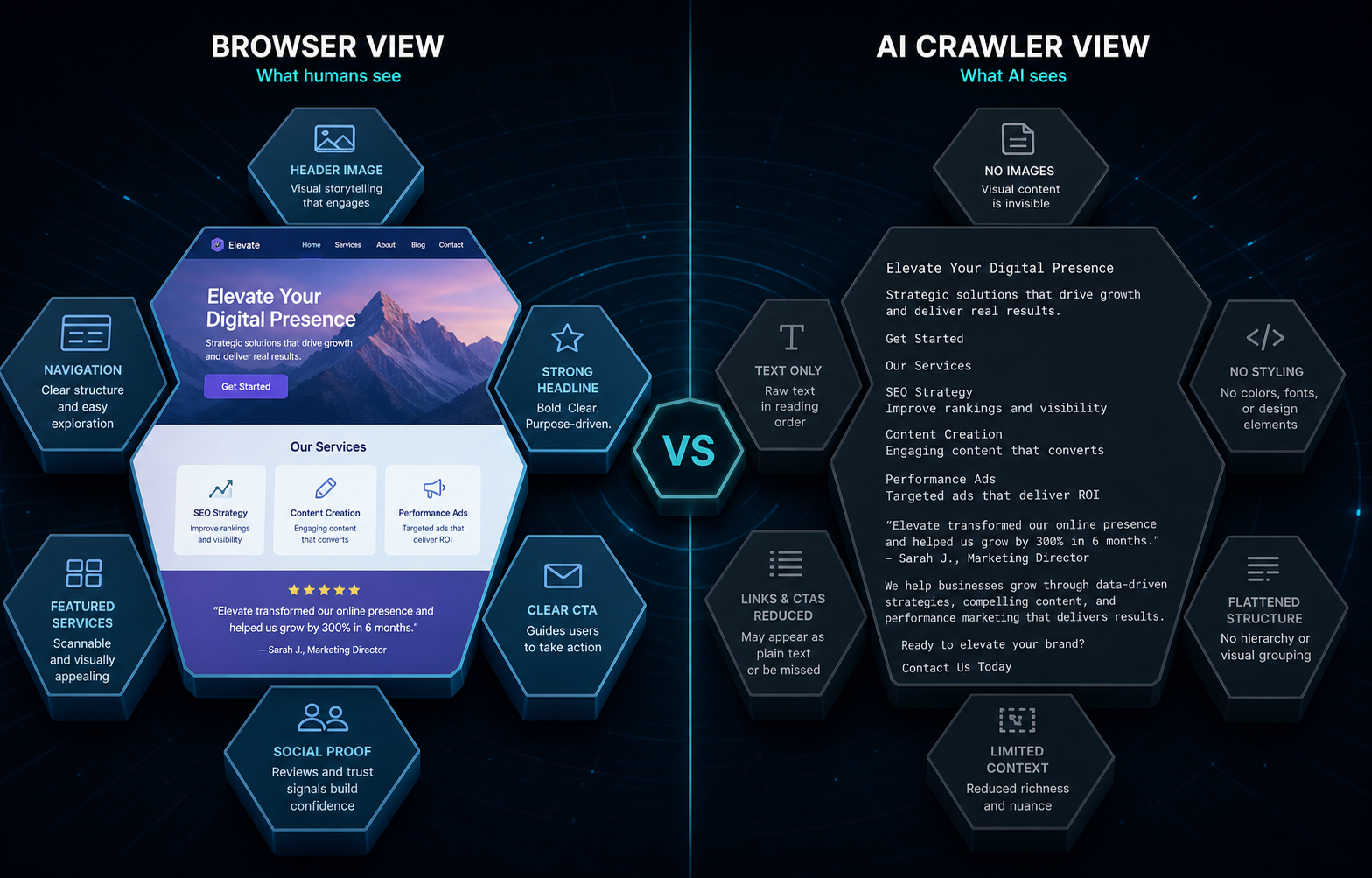

What Crawlers Actually Read on Your Page

This is where most SEO playbooks fail when applied to AI. Crawlers don’t read your page the way a browser renders it.

Most AI crawlers, both training and retrieval, strip the <head>, drop most non-content markup, and convert the body to plain text or a flat structured representation before the model touches it. Practical implications:

| Element | Read by Most AI Crawlers | Notes |

|---|---|---|

| Title tag | Yes | Consistently read across all major crawlers. |

| Body text (H1–H4, paragraphs, lists) | Yes | The primary input. Where the model actually learns and extracts. |

| Meta description | Inconsistent | Often dropped in training pipelines. Some retrieval crawlers use it. |

| JSON-LD structured data | Partial | Helps Gemini and Google AI Overviews. Mostly ignored by ChatGPT and Claude. |

| Open Graph / Twitter tags | Mostly no | Built for social previews, not AI. |

| JavaScript-rendered content | Inconsistent | Roughly half of major AI crawlers don’t execute JS or wait less than 3 seconds. |

| Images | Alt text only | Image content itself isn’t read by most text crawlers. |

| llms.txt | Limited adoption | John Mueller stated in 2026 that no AI systems were actively using it. Adoption has grown but remains inconsistent in 2026. |

The takeaway: your body content is doing almost all the work. Title tags help. Schema helps Gemini specifically. Everything else, meta tags, OG tags, fancy JS rendering, is largely invisible to the systems deciding whether to cite you.

If your site relies on client-side rendering, you have a real problem. A meaningful share of training and retrieval crawlers will see an empty page. Server-side rendering or static generation isn’t optional for AI visibility, it’s table stakes. For the technical side of structuring content for crawlers, see how to write llms.txt for AI search.

The Source Authority Filter That Most Brands Miss

Both training and retrieval crawlers apply domain-level authority filters before page-level signals matter. This is the part that confuses brands new to AI visibility.

You can write the perfect page, clean prose, dense claims, well-structured, server-rendered, and still get filtered out if your domain doesn’t clear the trust threshold for the topic. Conversely, a mediocre page on a high-trust domain often gets cited over a strong page on an unknown domain.

The trust signals these systems use overlap significantly with traditional SEO authority signals, but with critical differences:

- Citation graph position matters more than backlink count. A domain that established publishers reference gets weighted up.

- Topical concentration matters more than overall authority. A mid-tier domain that consistently publishes deep content on one topic often outperforms a generalist high-DA site on that topic.

- Editorial signals, bylines, expert authors, sourced citations, structured journalism, increase trust scores.

- Wikipedia presence for the entity (brand, person, product) is a strong amplifier for both training and retrieval.

This is why most B2B brands hit a wall. They publish consistently on their own domain, build technical SEO, and still don’t get cited. The model has nothing else to triangulate. The brand exists in its own content silo, not in the wider citation graph the crawlers actually weight.

The fix is structural: get mentioned on the publications that already clear the trust filter in your category. Our framework for tier-based publication hierarchy for AI citations walks through how to identify which domains AI systems weight in a given vertical.

Why Freshness Hits Differently for Training vs. Retrieval

Freshness is the most misunderstood signal in AI visibility.

For training crawlers, freshness is almost irrelevant in the way SEOs think about it. A page published in 2023 has the same chance of influencing a 2026 model as a page published in 2026, provided both pass the quality filters and live within the training cutoff. What matters is whether the content existed at scale during the training window.

For retrieval crawlers, freshness is a top-tier signal, but only for queries the system classifies as time-sensitive. “Best CRM 2026” gets ranked heavily on recency. “How does email authentication work” doesn’t. Most retrieval systems use query classifiers to decide whether to weight recent content or evergreen content.

The practical implication: publishing fresh content matters for retrieval visibility on time-sensitive queries. For evergreen topics, depth and authority matter more than recency. And for training-era visibility, the answers the model gives without retrieval, you need to be building content that persists in the corpus, not chasing a publishing treadmill.

What Gets a Source Filtered Out Before the Model Sees It

Source filtering happens at multiple stages, and most filtered-out pages never even reach the model. Common disqualifiers:

- Heavy JavaScript rendering, pages that require JS to display content often return empty to crawlers that don’t execute scripts or wait long enough.

- Login walls and paywalls, content behind authentication is invisible to crawlers without special arrangements.

- Duplicate or near-duplicate content, the dedup filters in training pipelines drop pages that overlap significantly with already-indexed pages.

- Low-quality language signals, keyword stuffing, broken grammar, AI-generated thin content, and machine-translated content get downweighted or dropped.

- Toxicity and safety filters, pages flagged for policy violations are excluded from training and re-ranking.

- Suspicious link patterns, sites with manipulative SEO signals get downweighted in trust scoring.

- robots.txt blocks, most major AI crawlers respect explicit disallows for their user agent.

- Domain-level distrust, sites with persistent quality issues get filtered at the domain level, not page-by-page.

The most overlooked filter is duplicate content. Many B2B sites publish thin variations of the same content across category pages, location pages, and competitor comparisons. Training pipelines deduplicate aggressively. If your “best CRM for healthcare” page is 80% the same as your “best CRM for fintech” page, neither one is going to carry weight in the model.

How Each Major AI Platform Picks Sources Differently

Source selection logic isn’t uniform across platforms. The differences matter when you’re prioritizing where to invest:

| Platform | Primary Source Stack | What It Weights Most |

|---|---|---|

| ChatGPT | Training corpus + Bing index + ChatGPT-User retrieval | Domain authority, citation graph position, training-era persistence. Fewer citations per answer. |

| Perplexity | Live web retrieval, multiple search APIs | Freshness, topical density, source diversity. Cites 4–10 sources per answer. |

| Gemini | Google’s index, knowledge graph, training corpus | Knowledge graph entities, structured data, Google E-E-A-T signals. |

| Claude | Training corpus + Claude-User retrieval | Editorial quality, depth of source, citation rigor. Conservative on citation count. |

| Google AI Overviews | Google’s index + training | Top-10 ranking pages, structured snippets, knowledge graph entities. |

| Copilot | Bing index + training | Similar to ChatGPT, leans on Bing’s authority signals. |

If your category lives heavily in Perplexity-style research queries, you’re optimizing for a wider citation pool with strong freshness signals. If your category lives in ChatGPT’s training-era answers, you need long-horizon investment in citation graph position. These aren’t the same playbook.

What This Means for Source Selection in Practice

The practical translation of all this for a brand trying to get cited:

Audit which sources AI is currently citing in your category. Run the questions your buyers ask through ChatGPT, Perplexity, Gemini, and Claude. Note which domains get cited repeatedly. That’s your target list. In most B2B categories, the list is shorter than people expect, usually 30–80 publications doing the bulk of the citation work.

Get your own content past the render filter. Server-side rendering, clean HTML, body text doing the work. Strip out JS dependencies for primary content. Make sure GPTBot, ClaudeBot, PerplexityBot, and Google-Extended aren’t blocked unless you have a deliberate reason.

Build placements on the trusted-source list. Editorial mentions, expert commentary, original data shared with publishers, anything that puts your brand inside content that the trust filter already approves. This is the work that moves training-era visibility. Our practitioner guide on how to increase brand mentions in AI search covers the placement strategy in depth.

Match content depth to the platforms you care about. For Perplexity, write content that survives chunk-level extraction, direct claims, dense answers, clear structure. For ChatGPT and Claude, the same plus long-form depth that signals editorial quality. For Gemini, structured data and knowledge graph alignment.

Track which crawlers actually hit you. Log analysis is the only way to know whether your robots.txt, render setup, and content are reaching the systems you care about. If GPTBot isn’t crawling, no amount of optimization fixes the gap.

Frequently Asked Questions

Do AI crawlers use Google’s index, or do they crawl independently?

Both. ChatGPT and Copilot use Bing’s index for live retrieval, while Gemini uses Google’s index. All major AI companies also run their own crawlers (GPTBot, ClaudeBot, PerplexityBot) to fill gaps and refresh content independently of search engines.

Does schema markup help AI crawlers pick my page?

It helps Gemini and Google AI Overviews significantly because they tie back to Google’s knowledge graph. JSON-LD has limited impact on ChatGPT, Claude, and Perplexity, which weight body text and source authority more than structured data.

How do AI crawlers decide which pages to cite from a domain?

They score individual pages on topical density, claim specificity, freshness when relevant, and extractability, but only after the domain itself clears a trust threshold. A high-trust domain gets more pages cited; a low-trust domain rarely gets cited regardless of page quality.

Will publishing more content increase my AI citation rate?

Not by itself. Training crawlers deduplicate aggressively and filter for quality, so volume without depth gets dropped. The bigger lever is earning placements on the small set of high-trust publications that AI systems already weight in your category.

Do AI crawlers respect robots.txt?

The major declared crawlers. GPTBot, ClaudeBot, PerplexityBot, Google-Extended, generally honor robots.txt directives for their specific user agents. Undeclared or spoofed crawlers don’t, and roughly 6% of traffic claiming to be AI crawlers is spoofed, according to a 2024 Human Security estimate.

How often do AI crawlers re-fetch a page?

Training crawlers operate on slow cycles measured in weeks or months. Retrieval crawlers fetch on demand whenever a user query triggers a search, so a single popular page might be fetched dozens of times per day during query bursts and ignored for weeks otherwise.

Does the title tag matter for AI source selection?

Yes. The title tag is consistently read across major AI crawlers and influences how the page is summarized and chunked. It’s one of the few <head> elements that reliably survives the markup-stripping step.

Are AI Overviews and AI chatbots picking sources the same way?

No. AI Overviews lean heavily on top-ranked Google results and knowledge graph entities. AI chatbots run their own retrieval and re-ranking pipelines that often surface sources outside the top-10 search results, especially Perplexity and Claude.

If you want to understand which publications are actually moving the needle for your brand in AI search, and which gaps are keeping you out of the citation graph, start with an audit of what AI says about your category today. Our AI visibility diagnostic framework walks through the full process. The brands getting cited in 2027 are doing this work now.