A Perplexity mentions tool is software that tracks when and how Perplexity AI references your brand in its answers. It captures citation URLs, mention frequency, sentiment, and competitive positioning across the prompts your buyers actually use. If you’re a B2B marketer trying to understand whether Perplexity recommends your brand or your competitor’s, this is the category of tool that closes that visibility gap.

As of 2026, Perplexity processes an estimated 1.2–1.5 billion queries per month. Each answer cites a handful of sources — sometimes just one or two. If your brand isn’t among them, you’re invisible during high-intent research moments. The challenge is that traditional SEO tools weren’t built for this. They track blue links and keyword positions. Perplexity mentions tools track whether AI answer engines include you in the conversation at all.

This article breaks down what a Perplexity mentions tool actually measures, how the best options compare, what to look for before committing budget, and how to turn monitoring data into content that earns more citations.

What a Perplexity Mentions Tool Actually Measures

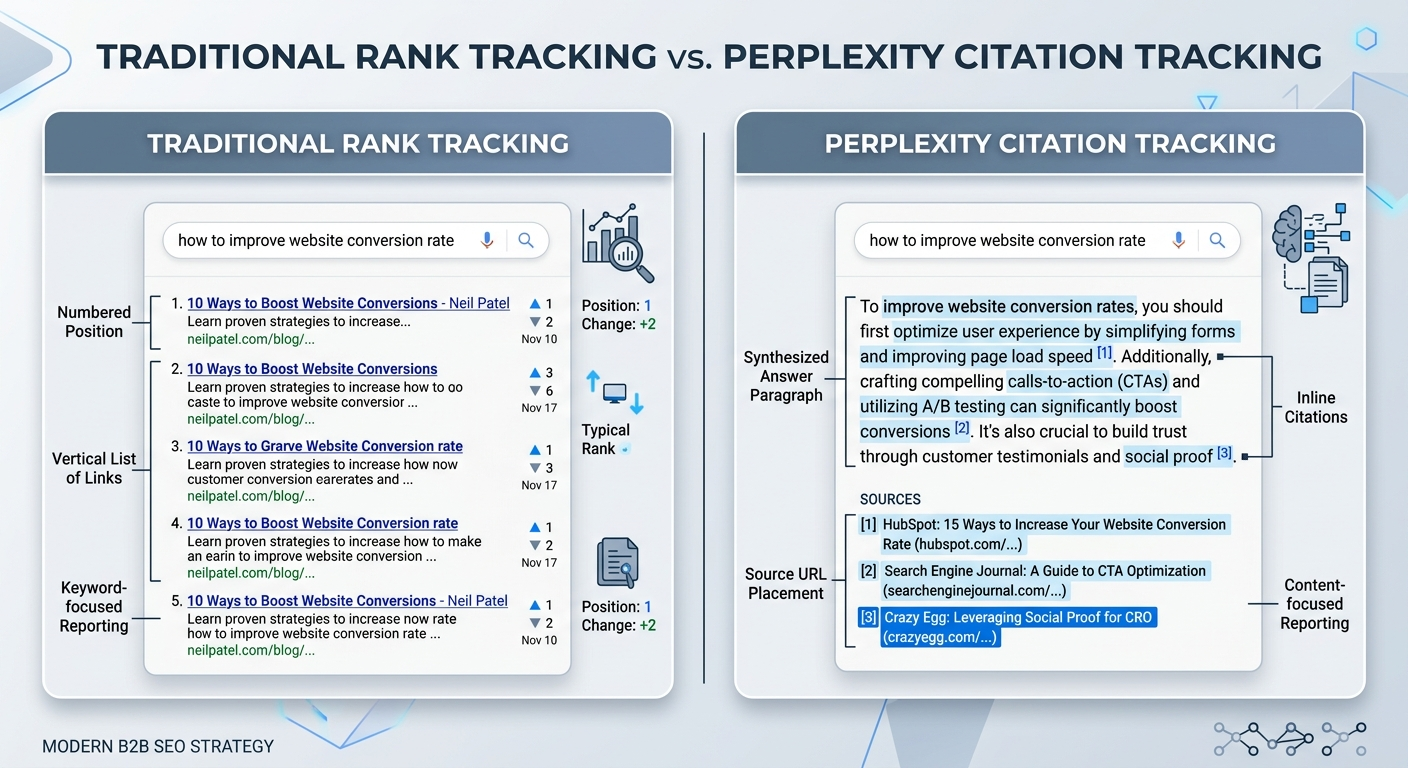

A Perplexity mentions tool monitors AI-generated answers, not search engine results pages. That distinction matters because Perplexity doesn’t rank URLs in a numbered list. It synthesizes responses from multiple sources and cites them inline. Your brand either appears in that synthesis or it doesn’t.

The core metrics these tools capture include:

- Brand mention frequency — how often Perplexity names your brand across a defined set of prompts

- Citation URLs — the specific pages Perplexity links to as sources within its answers

- Competitive share of voice — which rival brands appear alongside or instead of yours

- Sentiment and framing — whether Perplexity describes your brand positively, neutrally, or with outdated information

- Source attribution — which third-party domains (review sites, publishers, forums) influence your inclusion

- Trend movement — how mention rates and citation positions shift over days, weeks, and months

This is fundamentally different from tracking Google rankings. In Google, position three means your URL appears third. In Perplexity, position means nothing in a traditional sense — the AI might cite three sources or eight, and those citations shift based on timing, follow-up questions, and geographic context.
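To make the first and third metrics in the list above concrete, here is a minimal sketch of how mention frequency and competitive share of voice could be computed from captured answers. The `AnswerRecord` shape, the brand names, and the URLs are hypothetical; real tools define their own data models and may weight mentions differently.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One captured answer for one prompt (hypothetical shape, not a vendor format)."""
    prompt: str
    brands_mentioned: list[str]
    cited_urls: list[str] = field(default_factory=list)


def mention_frequency(records: list[AnswerRecord], brand: str) -> float:
    """Fraction of answers that name the brand at least once."""
    if not records:
        return 0.0
    hits = sum(1 for r in records if brand in r.brands_mentioned)
    return hits / len(records)


def share_of_voice(records: list[AnswerRecord]) -> dict[str, float]:
    """Each brand's share of all brand mentions, counting a brand once per answer."""
    counts = Counter(b for r in records for b in set(r.brands_mentioned))
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


# Illustrative data only; brands and prompts are made up.
records = [
    AnswerRecord("best crm for startups", ["Acme", "Globex"], ["https://example.com/a"]),
    AnswerRecord("top crm alternatives", ["Globex"]),
    AnswerRecord("crm for remote teams", ["Acme", "Globex", "Initech"]),
    AnswerRecord("crm with best api", ["Initech"]),
]

print(mention_frequency(records, "Acme"))  # 0.5 (named in 2 of 4 answers)
print(share_of_voice(records))
```

Counting a brand once per answer is a design choice in this sketch: it keeps one long answer that repeats a name from inflating that brand's share.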

Why this matters for B2B marketing teams

Perplexity users ask intent-rich questions: “best project management software for remote teams,” “top CRM alternatives to Salesforce,” “most reliable cybersecurity vendors for mid-market.” These are the exact moments when buying decisions form. A 2025 study by Profound found that 73% of AI search users take action within 24 hours of receiving an AI recommendation. Visitors arriving from AI answer engines convert at 4.4 times the rate of traditional organic search traffic.

If your competitor is cited during those moments and you are not, you’ve lost your place in the consideration set before a prospect ever visits your website. A Perplexity mentions tool makes that gap visible and measurable.

How Perplexity Selects Sources — and Why It Shapes Your Tool Choice

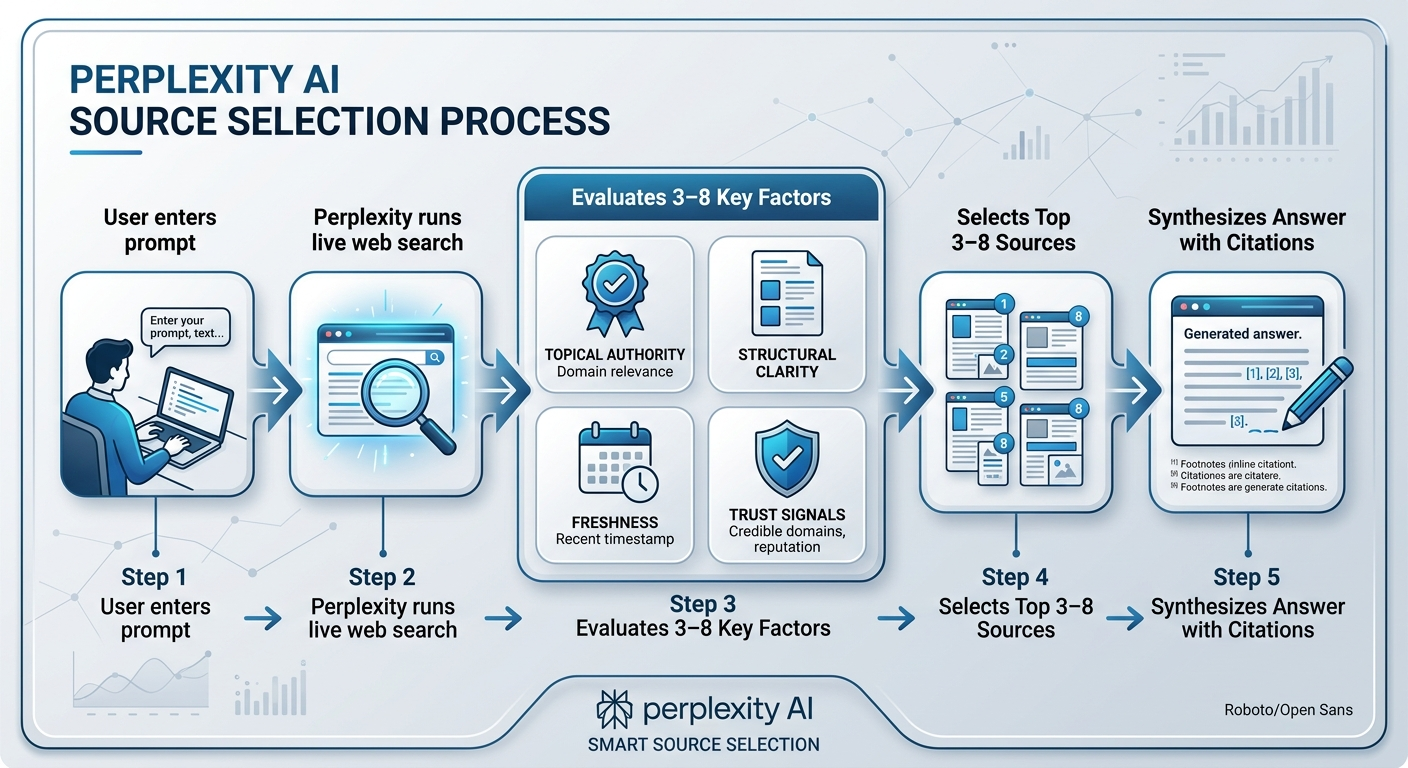

Perplexity conducts live web searches for every query. Unlike ChatGPT, which relies partially on training data, Perplexity always pulls fresh sources and cites them directly. That means the signals it uses to select sources are closer to real-time authority signals than static knowledge.

Based on observed citation patterns across campaigns and public research from the Allen Institute for AI (2024), Perplexity tends to favor:

- Topical authority — sites that cover a subject deeply across multiple pages, not just one article

- Structural clarity — content with clear headings, direct answers, FAQ structures, and schema markup

- Source trustworthiness — domains with strong backlink profiles, editorial standards, and third-party validation

- Content freshness — recently published or updated pages receive preference for time-sensitive queries

- Direct answer alignment — content that closely matches the phrasing and intent of the user’s question

This means the right Perplexity mentions tool should not only show you that you’re missing from answers. It should also help you understand why, by revealing which competitor URLs earned the citation and what structural patterns those pages share.

What Separates a Strong Perplexity Mentions Tool from a Basic Dashboard

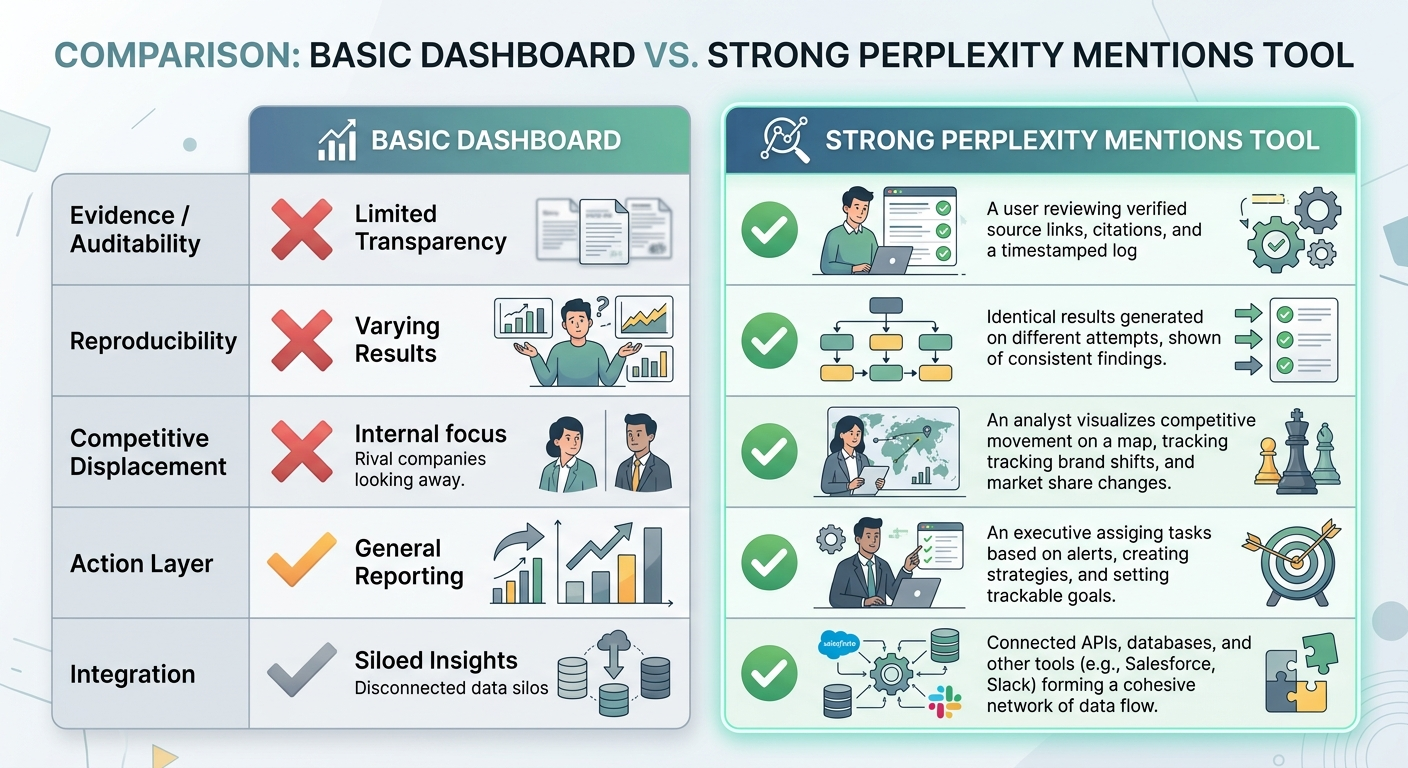

Most tools in this category can tell you whether your brand appeared in a Perplexity answer. That’s table stakes. The tools worth paying for go further — they show you what displaced you, which content pattern is winning citations, and what to change next.

Evidence and auditability

A credible tool provides downloadable proof that citations actually appeared. That means timestamped screenshots or logs, exportable citation URL lists, and retention policies that keep historical data accessible beyond 30 days. Without this, you’re trusting a dashboard number with no way to verify it during a strategy review or client report.

Reproducibility controls

Perplexity answers vary based on personalization, location, and session context. A reliable tool runs queries in standardized conditions — logged-out browser sessions, consistent geographic settings, and documented prompt templates. This ensures your tracking data reflects what your buyers see, not what your own browsing history generates.
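The evidence and reproducibility points above come down to storing each captured answer together with the conditions it was captured under. The sketch below is one way to structure such a record, not how any particular vendor does it. Every field name is an assumption, and the content hash is simply a convenient way to show that a stored record has not been altered since capture.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RunConditions:
    """Standardized query conditions (hypothetical fields)."""
    country: str           # e.g. "US"
    language: str          # e.g. "en"
    logged_in: bool        # False = logged-out session
    prompt_template: str   # the documented template the prompt came from


def evidence_record(conditions: RunConditions, prompt: str,
                    answer_text: str, cited_urls: list[str]) -> dict:
    """Build a timestamped, hash-stamped record of one captured answer."""
    payload = {
        "conditions": asdict(conditions),
        "prompt": prompt,
        "answer_text": answer_text,
        "cited_urls": list(cited_urls),
    }
    # Hash only the content, not the timestamp, so identical captures match.
    digest = hashlib.sha256(
        json.dumps(payload, sort_keys=True).encode("utf-8")
    ).hexdigest()
    return {
        **payload,
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "sha256": digest,
    }


conditions = RunConditions("US", "en", False, "best {category} tools")
record = evidence_record(
    conditions, "best crm tools", "Example answer text.", ["https://example.com/a"]
)
print(json.dumps(record, indent=2))
```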

Competitive displacement intelligence

Knowing your share of voice dropped from 40% to 25% is useful. Knowing that a specific competitor’s comparison page on a specific publisher domain replaced your citation is actionable. The best tools surface the exact rival URL that took your spot, so your team can analyze what that content does differently and respond.
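Displacement detection of this kind can be sketched as a comparison of two citation snapshots for the same prompts. The example below assumes each snapshot is a plain mapping from prompt to the set of cited URLs. The domains, and the rule that "displaced" means your domain was cited before and is not cited now, are illustrative assumptions.

```python
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Hostname of a URL with a leading 'www.' removed."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def find_displacements(before: dict[str, set[str]],
                       after: dict[str, set[str]],
                       own_domain: str) -> dict[str, list[str]]:
    """For prompts where own_domain lost its citation, list the newly cited URLs."""
    results = {}
    for prompt, old_urls in before.items():
        new_urls = after.get(prompt, set())
        had = any(domain(u) == own_domain for u in old_urls)
        has = any(domain(u) == own_domain for u in new_urls)
        if had and not has:
            results[prompt] = sorted(new_urls - old_urls)
    return results


# Illustrative snapshots; all domains are placeholders.
last_week = {
    "best crm for startups": {"https://www.acme.example/crm-guide",
                              "https://reviews.example/crm"},
    "crm with best api": {"https://acme.example/api"},
}
this_week = {
    "best crm for startups": {"https://reviews.example/crm",
                              "https://globex.example/crm-comparison"},
    "crm with best api": {"https://acme.example/api",
                          "https://initech.example/api"},
}

print(find_displacements(last_week, this_week, "acme.example"))
# {'best crm for startups': ['https://globex.example/crm-comparison']}
```

The second prompt is not reported because the brand kept its citation there; a new competitor URL appearing alongside yours is a different signal from losing the spot.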

An action layer

Monitoring without execution creates expensive awareness of problems you don’t fix. Some tools now generate content briefs, outline structures, or placement recommendations directly from citation data. If a Perplexity mentions tool only tells you what happened but never suggests what to do, your team still has to bridge the gap manually.

Integration and export flexibility

Your Perplexity visibility data needs to flow into existing reporting workflows. CSV exports, API endpoints, Looker Studio connectors, and Slack notifications ensure visibility metrics reach the right stakeholders without manual copy-paste cycles.

Comparing the Top Perplexity Mentions Tools in 2026

The market for AI visibility tracking has matured since 2024–2025, when most options were either manual workflows or bolt-on features inside traditional SEO suites. As of 2026, dedicated tools exist alongside integrated platforms. Your best choice depends on team size, budget, and whether you need monitoring alone or monitoring plus execution guidance.

Dedicated AI visibility platforms

Tools like Omnia, Peec AI, Scrunch AI, and Otterly AI are built specifically for tracking brand presence across AI answer engines including Perplexity. They typically offer daily monitoring, citation-level URL tracking, competitive benchmarking, and geographic segmentation. Pricing ranges from approximately $29/month for basic plans to $500+ for enterprise tiers.

Omnia stands out for teams that need an action layer — it converts monitoring data into prioritized content briefs and placement recommendations. Peec AI offers strong multi-language support across 115+ languages with unlimited user seats. Scrunch AI emphasizes enterprise security with SOC 2 compliance and crawler behavior analysis.

AI features inside traditional SEO suites

Ahrefs Brand Radar, Semrush AI Visibility Toolkit, and SE Ranking each offer Perplexity tracking as part of broader SEO platforms. The advantage is consolidated reporting — you can see Perplexity citations alongside backlink data and organic rankings. The trade-off is that Perplexity-specific features tend to be less granular, and pricing can escalate quickly when AI tracking is sold as an add-on.

Ahrefs Brand Radar, for example, tracks mentions across 150+ million monthly prompts but costs either $199/month per platform or $699/month for all AI platforms. Semrush sells AI tracking as a $99/month add-on to existing subscriptions.

Lightweight and free options

HubSpot’s AEO Grader provides free Perplexity visibility audits — useful for baseline snapshots but not continuous monitoring. Omnia’s free AI Visibility Checker runs 40 prompts across four engines in under five minutes. These work well for initial assessment but won’t sustain an ongoing monitoring program.

Key comparison factors

| Factor | What to evaluate |
|---|---|
| Refresh frequency | Daily tracking catches volatile citation shifts. Weekly or monthly creates blind spots. |
| Geographic coverage | Perplexity answers vary by location. Per-country tracking prevents averaged-out data. |
| Citation-level detail | Does the tool show the exact URLs cited, or only brand-name mentions? |
| Competitive displacement | Can you see which competitor URL replaced yours for a specific prompt? |
| Action recommendations | Does the tool suggest what content to create or update based on citation gaps? |
| Pricing transparency | Watch for per-country fees, prompt limits, and data retention restrictions that inflate costs. |
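To see how the pricing factor plays out, it can help to work the arithmetic for your own footprint before comparing vendors. The sketch below uses an entirely hypothetical pricing structure: the base price, included allowances, and surcharges are made-up numbers, not any vendor's actual rates.

```python
def effective_monthly_cost(base_price: float,
                           countries: int, included_countries: int,
                           per_country_fee: float,
                           prompts: int, included_prompts: int,
                           price_per_extra_prompt: float) -> float:
    """Advertised base price plus surcharges for extra countries and prompts."""
    extra_countries = max(0, countries - included_countries)
    extra_prompts = max(0, prompts - included_prompts)
    return (base_price
            + extra_countries * per_country_fee
            + extra_prompts * price_per_extra_prompt)


# Hypothetical plan: $99 base, 1 country and 100 prompts included,
# $30 per extra country, $0.50 per extra prompt.
cost = effective_monthly_cost(
    base_price=99.0,
    countries=4, included_countries=1, per_country_fee=30.0,
    prompts=250, included_prompts=100, price_per_extra_prompt=0.5,
)
print(cost)  # 264.0 = 99 + 3 * 30 + 150 * 0.5
```

In this made-up case the real monthly cost is well over twice the advertised base price, which is the kind of gap a trial with your actual prompt sets should surface.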

If you’re evaluating tools across multiple AI platforms — not just Perplexity — our overview of AI visibility analytics tools for brand mentions covers broader platform options.

How to Set Up Effective Perplexity Monitoring

Choosing a tool is step one. Configuring it to generate actionable data is where most teams either succeed or waste budget. Here’s a practical setup workflow.

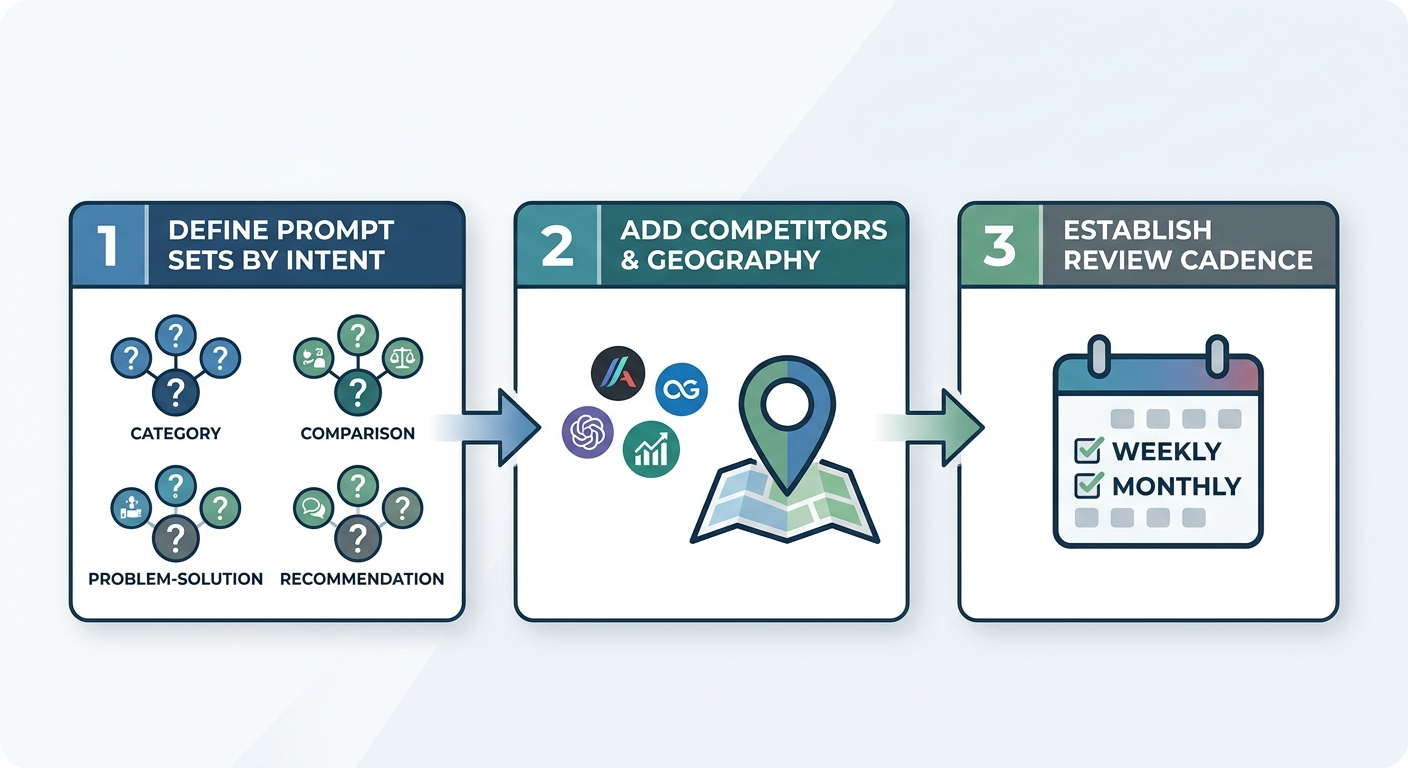

Step 1: Define your prompt sets by intent

Start with the questions your buyers actually ask during research — not the keywords your SEO team already tracks. These are different. Perplexity users type natural-language questions like “best compliance software for healthcare startups” or “alternatives to [competitor] with better API documentation.”

Group prompts into clusters:

- Category discovery — “best [category] tools,” “top [category] vendors”

- Comparison — “[your brand] vs. [competitor],” “alternatives to [competitor]”

- Problem-solution — “how to solve [pain point],” “tools for [specific use case]”

- Recommendation — “what [type of company] should use for [function]”

Track both branded prompts (where users mention your name) and non-branded prompts (where they describe a need). Non-branded discovery is where new pipeline starts.
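The clusters above can be expanded into a concrete prompt set with simple templates. This is a minimal sketch: the template strings follow the patterns listed above, while the fill-in values (brand, competitors, category, and so on) are placeholders you would replace with your own.

```python
from itertools import product
from string import Formatter

TEMPLATES = {
    "category_discovery": ["best {category} tools", "top {category} vendors"],
    "comparison": ["{brand} vs. {competitor}", "alternatives to {competitor}"],
    "problem_solution": ["how to solve {pain_point}", "tools for {use_case}"],
    "recommendation": ["what {company_type} should use for {function}"],
}

# Placeholder values; swap in your own brand, rivals, and buyer language.
VALUES = {
    "brand": ["Acme"],
    "competitor": ["Globex", "Initech"],
    "category": ["compliance software"],
    "pain_point": ["audit backlog"],
    "use_case": ["healthcare startups"],
    "company_type": ["a mid-market SaaS company"],
    "function": ["compliance reporting"],
}


def expand(template: str, values: dict[str, list[str]]) -> list[str]:
    """Fill a template with every combination of its placeholder values."""
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    fields = list(dict.fromkeys(fields))  # de-duplicate, keep order
    combos = product(*(values[f] for f in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]


def build_prompt_sets(templates: dict[str, list[str]],
                      values: dict[str, list[str]]) -> dict[str, list[str]]:
    """Expand every template in every cluster."""
    return {
        cluster: [p for t in cluster_templates for p in expand(t, values)]
        for cluster, cluster_templates in templates.items()
    }


def is_branded(prompt: str, brand: str) -> bool:
    """A prompt is branded if it names your brand."""
    return brand.lower() in prompt.lower()


prompt_sets = build_prompt_sets(TEMPLATES, VALUES)
for cluster, prompts in prompt_sets.items():
    for p in prompts:
        label = "branded" if is_branded(p, "Acme") else "non-branded"
        print(f"{cluster:20} {label:12} {p}")
```

Tagging each prompt as branded or non-branded at generation time makes it easy to check later that the set is weighted toward non-branded discovery.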

Step 2: Add competitors and set geographic parameters

Include your top three to five competitors for every prompt cluster. This turns raw mention data into competitive intelligence. Set geographic parameters to match your actual markets — Perplexity answers vary by region because it pulls from live web search results that differ by location.

Step 3: Establish a review cadence

Weekly reviews work for most B2B teams. Check for citation gains and losses, new competitors entering your prompt clusters, and sentiment shifts. Monthly reviews should connect visibility trends to content and PR activity — did that guest post on a high-authority publication increase Perplexity citations? Did a competitor’s product launch shift share of voice?

For teams building a broader monitoring system, our guide on how to track brand mentions across AI search platforms walks through multi-engine setup.

Turning Perplexity Monitoring Data into More Citations

Tracking is only valuable if it changes what you do. Here’s how to convert monitoring insights into content and placement decisions that improve your citation rate.

Identify your citation gaps

Look for prompts where competitors are cited but you’re not. These are your highest-priority content opportunities. Categorize gaps by type:

- Missing content — you don’t have a page that directly addresses the prompt topic

- Weak content — you have a page, but it lacks the structural clarity, depth, or freshness Perplexity rewards

- Missing third-party validation — your owned content exists, but no external publishers reference it

Each gap type requires a different response. Missing content means you need to create. Weak content means you need to restructure and update. Missing validation means you need editorial placements on publications that Perplexity already cites in your category.
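The triage described above can be written down as a small decision rule. The sketch below is one possible encoding. The input fields are hypothetical, and `page_is_strong` in particular stands in for a human judgment about structure, depth, and freshness rather than anything a tool measures directly.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Gap(Enum):
    MISSING_CONTENT = "create a page that addresses the prompt"
    WEAK_CONTENT = "restructure and update the existing page"
    MISSING_VALIDATION = "earn editorial placements on cited publications"


@dataclass
class PromptStatus:
    """What you know about one tracked prompt (hypothetical fields)."""
    prompt: str
    competitor_cited: bool
    we_are_cited: bool
    have_page: bool
    page_is_strong: bool       # editorial judgment, not a measured value
    external_references: int   # third-party pages that reference your content


def classify_gap(s: PromptStatus) -> Optional[Gap]:
    """Return the gap type, or None if there is no gap or it fits no category."""
    if s.we_are_cited or not s.competitor_cited:
        return None  # not a citation gap
    if not s.have_page:
        return Gap.MISSING_CONTENT
    if not s.page_is_strong:
        return Gap.WEAK_CONTENT
    if s.external_references == 0:
        return Gap.MISSING_VALIDATION
    return None  # a gap exists, but none of the three categories explains it


status = PromptStatus("best compliance software for healthcare startups",
                      competitor_cited=True, we_are_cited=False,
                      have_page=True, page_is_strong=True,
                      external_references=0)
gap = classify_gap(status)
print(gap.name, "->", gap.value)
# MISSING_VALIDATION -> earn editorial placements on cited publications
```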

Analyze what citation-winning content looks like

When a competitor earns a citation you don’t, study the actual page Perplexity linked to. Common patterns among cited pages include:

- Direct, clear answers to the query within the first 100 words

- Structured headings that mirror how users phrase questions

- Specific data points, comparisons, or original research

- Recent publication or update dates

- FAQ schema or HowTo schema markup

This analysis turns competitor citations into a content blueprint. You’re not copying their page — you’re understanding the structural and authority signals that earned the citation and building something better.

Strengthen your third-party footprint

Perplexity frequently cites third-party sources — publisher articles, review roundups, analyst reports, and community discussions. If your brand appears primarily through owned content, your citation surface area is limited.

Agencies like BrandMentions address this by placing contextual brand mentions across 140+ high-authority publications that AI models actively reference. In campaigns across 67+ B2B companies, brands with consistent editorial mentions achieved AI recommendation rates 89% higher than those relying solely on owned-domain content.

Building this third-party layer is especially important for Perplexity because it conducts live web searches — meaning fresh editorial coverage can influence citations within days, not months. For a deeper look at how brand mentions on external sites influence AI visibility, see brand mentions for SEO.

Update and re-optimize existing pages

If your monitoring data shows a page that used to earn Perplexity citations but stopped, that’s a freshness or relevance signal. Common fixes include:

- Updating statistics and data to reflect 2026 numbers

- Adding structured FAQ sections that match current prompt language

- Improving the first paragraph to deliver a direct answer before context

- Adding schema markup (FAQ, HowTo, Product) to increase parseability

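Track the impact of these changes in your next monitoring cycle. If citations return within one to two weeks, that’s a strong sign the optimization worked. Citations also shift for reasons outside your control, so confirm the gain holds over the following cycles.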
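For the schema markup fix in the list above, FAQ markup is typically published as a schema.org `FAQPage` object in JSON-LD. Here is a minimal sketch that builds one from question-and-answer pairs. The helper name `faq_jsonld` is made up for this example, and the sample pair is taken from this article's own FAQ.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    (
        "Can I track Perplexity mentions manually without a tool?",
        "You can spot-check a few queries manually, but AI answers change "
        "frequently based on timing, location, and follow-up context.",
    ),
])

# Paste the printed JSON into a <script type="application/ld+json"> tag on the page.
print(json.dumps(markup, indent=2))
```

The questions in the markup should match the FAQ text visible on the page, and ideally the phrasing of the prompts you track.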

Common Mistakes When Using a Perplexity Mentions Tool

Monitoring tools create value only when used correctly. These are the patterns that waste budget or produce misleading data.

Tracking only branded prompts

If you only monitor prompts that include your brand name, you’ll see high mention rates and conclude everything is fine. The real story lives in non-branded prompts — the category and problem-oriented questions where new buyers first encounter potential vendors. Always weight your prompt sets toward non-branded discovery queries.

Ignoring geographic variation

Perplexity answers differ by region because its live web search pulls location-influenced results. A brand that’s well-cited for U.S. prompts may be invisible in European or APAC markets. If you sell internationally, track each market separately.

Treating snapshots as trends

A single check tells you almost nothing. Perplexity citations shift based on content freshness, competitor activity, and query refinement. Minimum viable monitoring means collecting weekly data for at least four weeks, and ideally six, before drawing strategic conclusions.
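One way to enforce that discipline is to refuse to compute a trend until enough weekly data points exist. The sketch below compares the average mention rate in the later half of the window with the earlier half. That is a deliberately simple method chosen for illustration, not a statistical test, and the four-week minimum mirrors the guidance above.

```python
from statistics import mean
from typing import Optional

MIN_WEEKS = 4  # below this, treat the data as snapshots, not a trend


def mention_rate_trend(weekly_rates: list[float],
                       min_weeks: int = MIN_WEEKS) -> Optional[float]:
    """Change in average mention rate, later half minus earlier half.

    Returns None when there are too few weeks to call it a trend.
    """
    if len(weekly_rates) < min_weeks:
        return None
    half = len(weekly_rates) // 2
    return mean(weekly_rates[half:]) - mean(weekly_rates[:half])


# Illustrative weekly mention rates (share of tracked prompts naming the brand).
print(mention_rate_trend([0.40]))                    # None: a single snapshot
print(mention_rate_trend([0.20, 0.25, 0.30, 0.35]))  # roughly +0.10
```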

Monitoring without an execution plan

The most common failure mode: teams invest in a tool, review dashboards regularly, and never change their content or placement strategy based on what they learn. Assign specific owners for citation gap responses and tie monitoring reviews to content calendar decisions.

How Perplexity Mentions Tracking Fits into a Broader AI Visibility Strategy

Perplexity is one AI answer engine among several that shape brand discovery. ChatGPT, Google AI Overviews, Gemini, Claude, and Copilot each have different source selection behaviors, different update cadences, and different user bases.

A mature AI visibility strategy tracks mentions across all platforms where your buyers conduct research. Some tools — like those covered in our roundup of AI rank trackers for brand mentions — support multi-engine monitoring from a single dashboard. Others specialize in one platform and need to be combined.

The strategic approach:

- Start with Perplexity and ChatGPT — these two cover the largest share of AI-driven research behavior in 2026

- Add Google AI Overviews — because they appear directly within Google search results, influencing traditional organic visibility. Our guide on AI Overviews mentions tools covers setup specifics.

- Layer in Gemini and Claude — as your monitoring program matures and you need category-level coverage across all major models

BrandMentions tracks when major AI models update their training data and times placements to maximize inclusion in each knowledge refresh cycle. This cross-platform approach ensures editorial placements don’t just improve Perplexity citations — they compound visibility across every AI surface where your buyers look for recommendations.

For a foundational understanding of how brand mentions work across all AI search environments, see brand mentions in AI.

Choosing the Right Tool for Your Team

The right Perplexity mentions tool depends on your team’s size, technical sophistication, and what you plan to do with the data.

- Small teams with limited budget — start with a free baseline audit (HubSpot AEO Grader or Omnia’s free checker), then graduate to a focused tool like Peec AI or Otterly AI for continuous monitoring under $200/month

- Growth-stage B2B companies — prioritize tools with an action layer that converts monitoring into content briefs and placement recommendations. Omnia fits this profile well.

- Enterprise teams already invested in SEO platforms — evaluate whether Ahrefs Brand Radar or Semrush AI Visibility Toolkit provides sufficient Perplexity granularity as an add-on before purchasing a standalone tool

- Agencies managing multiple clients — look for workspace isolation, white-label reporting, and per-client prompt management. Rankability and Otterly AI offer strong agency workflows.

Regardless of which tool you choose, the evaluation should always answer: does this platform tell me what happened, why it happened, and what to do about it?

Pro Insight: Before committing to annual pricing, run a two-week trial with your actual prompt sets — not the vendor’s demo queries. Citation rates, competitive coverage, and data granularity often look different when applied to your specific category and market.

FAQ

What is the difference between a Perplexity mentions tool and a traditional rank tracker?

A traditional rank tracker monitors your URL’s position in a numbered list of search results. A Perplexity mentions tool tracks whether your brand appears in AI-synthesized answers, which sources Perplexity cites, and how your mention rate compares to competitors. Perplexity doesn’t produce ranked lists. It produces answers with inline citations, which requires a fundamentally different measurement approach.

Can I track Perplexity mentions manually without a tool?

You can spot-check a few queries manually, but AI answers change frequently based on timing, location, and follow-up context. Manual checks miss trends, competitive shifts, and citation volatility. A dedicated tool automates prompt monitoring on a consistent schedule, providing the historical data needed for strategic decisions.

How quickly can content changes affect Perplexity citations?

Because Perplexity runs live web searches, content updates can influence citations within days — significantly faster than traditional SEO changes affect Google rankings. Teams that update page structure, add FAQ schema, or publish fresh editorial content often see citation movement within one to two weekly monitoring cycles.

Does tracking Perplexity mentions also help with other AI search engines?

The content improvements that earn Perplexity citations — clear structure, direct answers, strong third-party validation — also improve visibility in ChatGPT, Gemini, Google AI Overviews, and Claude. The signals differ slightly by platform, but the foundational strategy overlaps. For a broader look at cross-platform tracking, see our guide on monitoring brand mentions in LLMs.

How much does a Perplexity mentions tool typically cost?

Pricing ranges from free (baseline audits) to $700+/month for enterprise multi-platform coverage. Most mid-market B2B teams can get effective Perplexity monitoring for $100–$300/month. Watch for hidden costs: per-country surcharges, prompt limits, data retention caps, and export restrictions can inflate the real price significantly beyond advertised rates.

What to Do This Week

AI answer engines are already shaping how your buyers research, compare, and shortlist vendors. Perplexity is one of the largest and fastest-growing surfaces for that activity. Every week you don’t monitor it is a week where competitors may be earning citations — and mindshare — you can’t see.

Start with a baseline audit. Run your top 10 category prompts through Perplexity manually or with a free tool. Note which brands appear, which URLs are cited, and where you’re absent. That exercise alone will reveal whether this is a visibility gap worth closing.

If the gap is real, as it is for most B2B brands in competitive categories, invest in a Perplexity mentions tool that matches your team’s needs. Then connect monitoring to action: content creation, page optimization, and third-party placements that build citation-worthy authority across the sources Perplexity trusts.

If you want to understand where your brand stands across AI search right now — including Perplexity, ChatGPT, and Gemini — see where your brand stands in AI search.