Tracking brand mentions in AI search results means systematically monitoring when and how ChatGPT, Perplexity, Gemini, Google AI Overviews, Claude, and Copilot name, describe, or cite your brand in their generated answers. Unlike traditional SEO rank tracking, this requires querying AI platforms directly — because your Google position tells you nothing about whether AI recommends you to buyers.

As of 2026, AI search platforms collectively process billions of queries every week. ChatGPT alone has surpassed 800 million weekly active users, and Google AI Overviews reach 1.5 billion users monthly across 200+ countries. Your buyers are asking these systems for recommendations right now. If you don’t have a structured way to see what AI says about you and your competitors, you’re operating blind in the fastest-growing discovery channel in B2B.

This article walks through the specific metrics, tools, prompt strategies, and workflows you need to track AI brand mentions accurately and turn that data into a competitive advantage.

What You’ll Learn

- Why traditional SEO tools and web analytics miss AI brand mentions entirely

- The difference between AI mentions and AI citations — and why both matter

- Which metrics to track weekly: inclusion rate, citation coverage, share of voice, and placement scoring

- How to build a buyer-intent prompt set that reflects real customer research behavior

- A comparison of dedicated AI visibility tracking platforms available in 2026

- How to close visibility gaps when competitors get recommended and you don’t

- The workflow for connecting AI mention tracking to pipeline and revenue outcomes

Why Traditional Tracking Tools Miss AI Brand Mentions

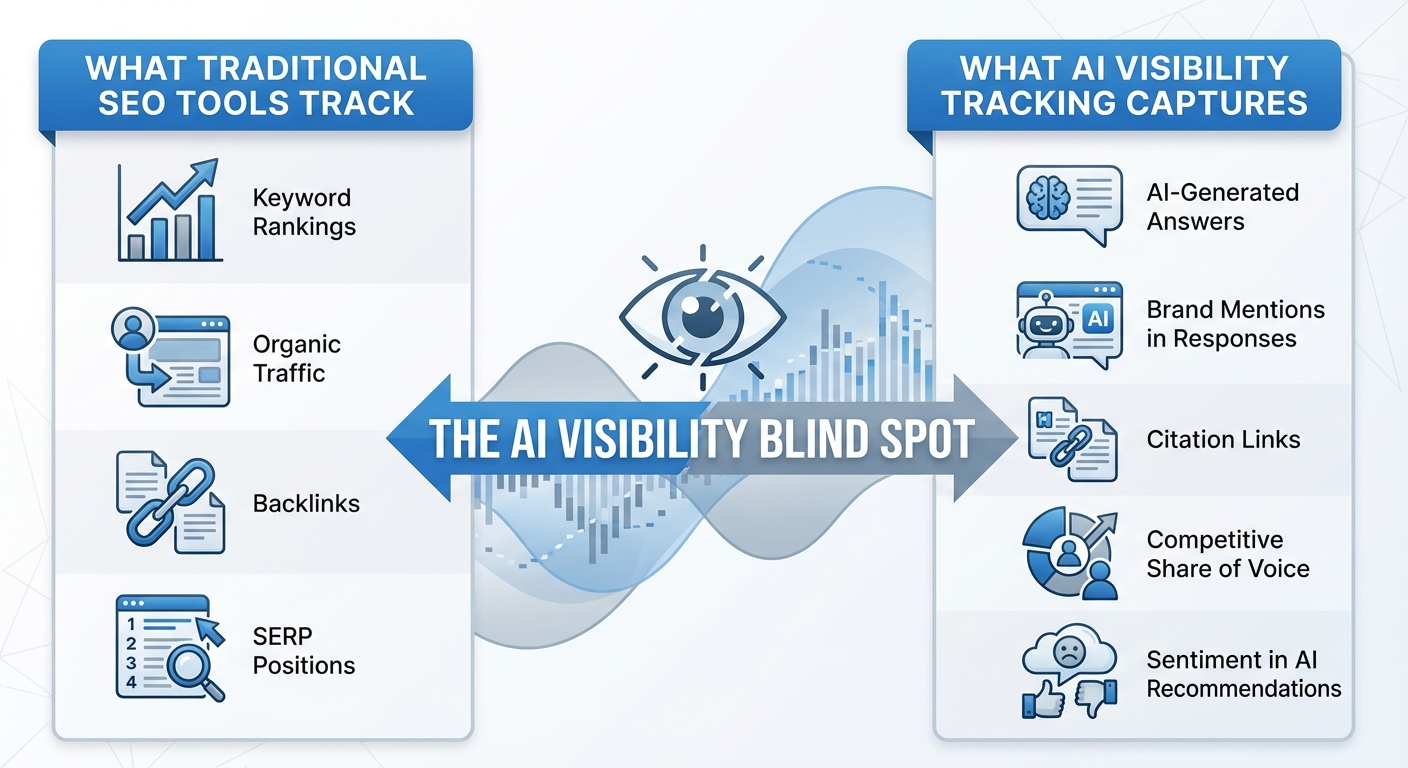

Google Search Console, GA4, Ahrefs, and Semrush were built for a world of blue links and click-based discovery. They track rankings, impressions, clicks, and referral traffic. None of them capture what happens inside an AI-generated answer.

When a VP of Engineering asks Perplexity “What are the best observability platforms for Kubernetes?” the AI synthesizes information from dozens of sources and generates a curated answer. It might name five tools, describe their strengths, and link to two or three sources. Your brand either appears in that answer or it doesn’t.

Here’s the problem: that interaction generates zero data in your traditional analytics unless the user clicks through — and research from Ahrefs found that AI Overviews reduce clicks by 34.5% when present. Many AI platforms don’t pass consistent referral data at all.

This creates a dangerous blind spot. A brand can hold strong Google rankings while being completely absent from AI recommendations. According to Passionfruit research, 26% of brands have zero mentions in Google AI Overviews — including some market leaders with dominant traditional SEO profiles.

The takeaway is straightforward: you need purpose-built tracking for AI search, running alongside your existing SEO stack — not instead of it.

Mentions vs. Citations: Two Distinct Metrics That Require Separate Tracking

A brand mention is any instance where an AI platform names your brand in a generated response — with or without a link. A citation is when the AI attributes a source link to your domain as evidence supporting its answer.

These are fundamentally different signals, and conflating them leads to poor decisions.

Why the Distinction Matters

Research published by BrightEdge in 2025 found that citation behavior varies dramatically across AI platforms. In ChatGPT, only 2 in 10 mentions include citation links. Perplexity averages over 5 citations per answer but mentions brands less frequently — only about 1 in 5 answers include brand references. Google AI Overviews sit between these extremes, blending brand recall with source attribution.

This means a high mention count on ChatGPT might come with almost no direct traffic. A low mention count on Perplexity might still drive significant referral visits because of its aggressive citation behavior.

| Metric | What It Measures | Business Impact |
|---|---|---|
| Brand Mention | AI names your brand in its answer | Buyer awareness, shortlist inclusion, brand recall |
| Citation | AI links to your domain as a source | Referral traffic, trust signal, conversion opportunity |

Track both per query and per platform. A brand that gets mentioned frequently but never cited has a trust or content-structure problem. A brand that gets cited but rarely mentioned may have strong content assets but weak category association.

For a deeper look at tracking mentions specifically in ChatGPT, see how agencies monitor brand mentions in ChatGPT using structured prompt workflows.

The Five Metrics That Actually Matter for AI Brand Tracking

Dashboards bloated with vanity metrics create noise. Focus on these five, measured weekly across each AI platform that matters to your audience.

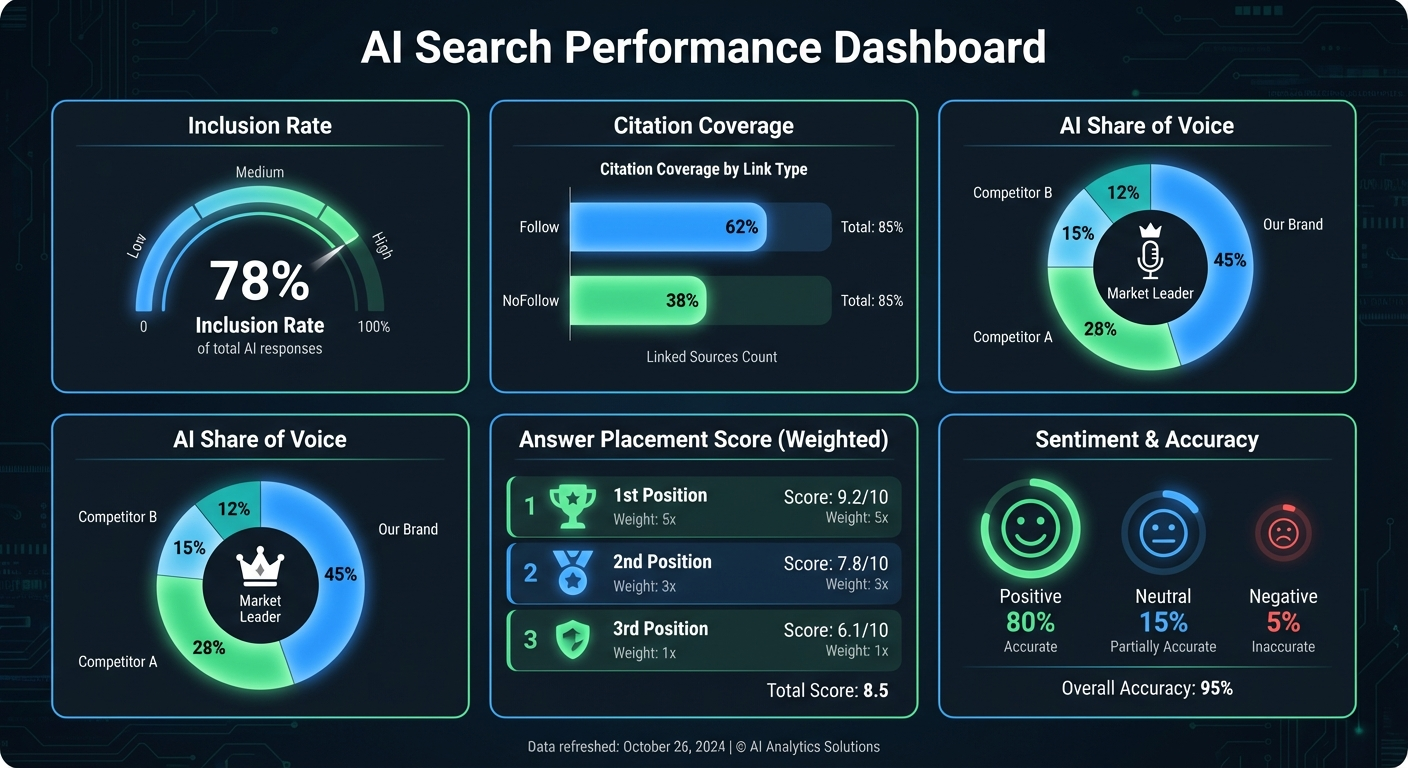

1. Inclusion Rate

Inclusion rate is the percentage of relevant prompts where your brand appears — mentioned or cited — in the AI’s response. This is your core visibility metric.

Calculate it by dividing the number of prompts where your brand appears by the total prompts tested, segmented by AI platform and intent type (category, comparison, problem-solving).

An inclusion rate of 40–60% on high-intent commercial queries typically indicates strong AI visibility. Below 20% signals a significant gap.
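The calculation above can be sketched in a few lines of Python. This is a minimal illustration, assuming your tracking log can be reduced to rows of platform, intent type, and whether the brand appeared; the sample rows and the `inclusion_rates` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical tracking rows: (platform, intent, brand_appeared)
results = [
    ("chatgpt", "category", True),
    ("chatgpt", "category", False),
    ("chatgpt", "comparison", True),
    ("perplexity", "category", False),
    ("perplexity", "comparison", False),
]

def inclusion_rates(rows):
    """Return {(platform, intent): share of prompts where the brand appeared}."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for platform, intent, appeared in rows:
        totals[(platform, intent)] += 1
        hits[(platform, intent)] += int(appeared)
    return {key: hits[key] / totals[key] for key in totals}

rates = inclusion_rates(results)
for (platform, intent), rate in sorted(rates.items()):
    print(f"{platform:<11} {intent:<11} {rate:.0%}")
```

Keeping the platform and intent segments separate, rather than reporting one blended number, makes it easier to see which segment is dragging the average down.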

2. Citation Coverage

Citation coverage measures the percentage of your appearances that include a clickable link to your domain. Break this down further by link type: homepage, product page, blog post, or third-party source mentioning you.

Low citation coverage with high mention frequency usually means AI “knows” your brand but doesn’t trust your content enough to link to it directly.

3. AI Share of Voice

AI share of voice (SOV) compares your mention frequency against competitors for the same set of prompts. If five brands appear in a category query and you’re one of them, your raw SOV is 20%. Weight this by placement position — being mentioned first carries more influence than appearing last in a list.

4. Answer Placement Score

Not all mentions are equal. A brand recommended first in an AI answer gets more attention and higher perceived endorsement than one listed fifth. Answer placement score normalizes your position within each response — first mention scores highest, later mentions score progressively lower.
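The article does not prescribe a scoring formula, so the sketch below assumes a simple reciprocal-rank score (first mention = 1.0, second = 0.5, and so on) and uses it to weight share of voice as well. Brand names, prompts, and both helper functions are hypothetical.

```python
from collections import defaultdict

# Hypothetical data: for each prompt, brands in order of appearance in the answer.
answers = {
    "best observability platforms for kubernetes": ["Acme", "Borealis", "Cobalt"],
    "top APM tools for startups": ["Borealis", "Acme"],
    "alternatives to Cobalt": ["Borealis", "Dynamo"],
}

def placement_score(position):
    """Assumed reciprocal-rank scoring: 1st = 1.0, 2nd = 0.5, 3rd = 0.33, ..."""
    return 1.0 / position

def share_of_voice(answers, weighted=False):
    """Return {brand: share of all mentions}, optionally weighted by placement."""
    points = defaultdict(float)
    for brands in answers.values():
        for position, brand in enumerate(brands, start=1):
            points[brand] += placement_score(position) if weighted else 1.0
    total = sum(points.values())
    return {brand: value / total for brand, value in points.items()}

raw = share_of_voice(answers)
weighted = share_of_voice(answers, weighted=True)
for brand in sorted(raw):
    print(f"{brand:<9} raw {raw[brand]:.0%}  weighted {weighted[brand]:.0%}")
```

In this sample, the brand that is usually named first gains share once placement is weighted, while brands that only appear late in lists lose share. Other decay curves (linear, logarithmic) would work too; the point is to pick one and apply it consistently.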

5. Sentiment and Accuracy

AI models don’t just name brands — they characterize them. Monitor whether the AI describes your brand accurately (correct pricing, features, use cases) and with what sentiment (positive, neutral, negative, or comparative). An inaccurate positive mention can be worse than no mention at all if it sets wrong expectations for buyers.

Pro Insight: Track these metrics separately for each AI platform. Your inclusion rate on ChatGPT might be 55% while Gemini shows 12%. Platform-specific data reveals where to focus optimization efforts — and which platforms your buyers actually use.

How to Build a Buyer-Intent Prompt Set

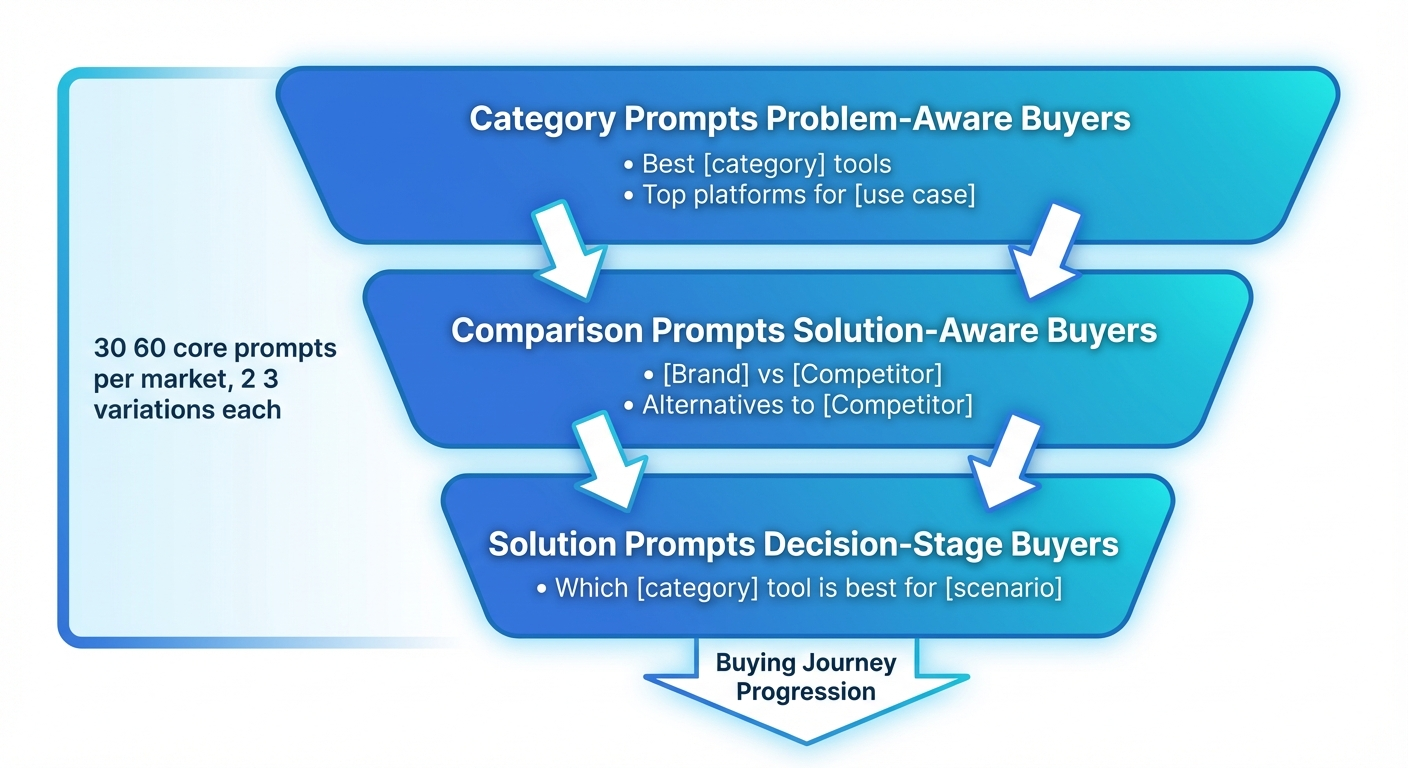

The quality of your tracking depends entirely on the prompts you test. Generic prompts produce generic data. Buyer-intent prompts reveal whether AI recommends you at the moments that influence pipeline.

Map Prompts to the Buyer Journey

Organize your prompt set into three clusters that mirror how B2B buyers actually research solutions:

- Category prompts (problem-aware stage): “What are the best tools for [your category]?” / “Top [your category] platforms for mid-market companies”

- Comparison prompts (solution-aware stage): “[Your brand] vs. [Competitor]” / “Alternatives to [Competitor]” / “How does [Your brand] compare to [Competitor] for [use case]?”

- Solution prompts (decision stage): “How to solve [specific problem your product addresses]” / “Which [category] tool is best for [specific scenario]?”

Pull phrasing from actual buyer language — sales call transcripts, support tickets, community forums, and People Also Ask data. A prompt like “best SIEM tools for SOC 2 compliance” reflects real buyer intent far better than “top cybersecurity software.”

How Many Prompts Do You Need?

Start with 30–50 core prompts per market. That’s enough for statistically meaningful signal without creating an unmanageable testing burden. For each core prompt, create 2–3 natural variations (synonym rewrites like “best/top/recommended” and specificity variants like adding “for startups” or “for enterprise”).

Add geographic and language variants if you sell internationally. AI answers shift significantly based on locale settings.
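If you keep core prompts as templates, the synonym and specificity variants can be generated rather than typed by hand. The following is a rough sketch; the template syntax, the qualifier and segment lists, and the `expand_prompt` helper are all illustrative assumptions.

```python
from itertools import product

def expand_prompt(template, category,
                  qualifiers=("best", "top", "recommended"),
                  segments=("", "for startups", "for enterprise")):
    """Generate synonym and specificity variants from one core prompt template."""
    variants = []
    for qualifier, segment in product(qualifiers, segments):
        text = template.format(qualifier=qualifier, category=category, segment=segment)
        # Collapse the extra whitespace left behind by an empty segment.
        variants.append(" ".join(text.split()))
    return variants

prompts = expand_prompt("{qualifier} {category} tools {segment}", "SIEM")
for prompt in prompts:
    print(prompt)
```

This produces every combination, which is more than the 2–3 variations you need per core prompt. Treat the output as a candidate list and keep only the phrasings that match how your buyers actually talk.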

Assign Business Value

Not every prompt matters equally. Assign a revenue-weight to each prompt based on the deal size and conversion potential of the buyer intent behind it. A prompt like “best enterprise CRM for healthcare” drives higher-value pipeline than “free CRM tools” — prioritize tracking and optimization accordingly.
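One way to apply revenue weights is to compute a weighted inclusion rate next to the plain one. The sketch below assumes each prompt carries a numeric weight you have assigned yourself (for example, relative deal size); the data and the `weighted_inclusion_rate` helper are hypothetical.

```python
# Hypothetical prompts with an assumed revenue weight (e.g. relative deal size).
prompts = [
    {"text": "best enterprise CRM for healthcare", "weight": 5.0, "included": False},
    {"text": "CRM alternatives to CompetitorX", "weight": 3.0, "included": True},
    {"text": "free CRM tools", "weight": 1.0, "included": True},
]

def weighted_inclusion_rate(rows):
    """Inclusion rate where each prompt counts in proportion to its revenue weight."""
    total = sum(row["weight"] for row in rows)
    won = sum(row["weight"] for row in rows if row["included"])
    return won / total if total else 0.0

unweighted = sum(row["included"] for row in prompts) / len(prompts)
weighted = weighted_inclusion_rate(prompts)
print(f"unweighted {unweighted:.0%}, revenue-weighted {weighted:.0%}")
```

Here the brand appears in two of three prompts, but the weighted rate is noticeably lower because the one miss is the highest-value query. That gap between the two numbers is a quick signal of where to prioritize.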

The best ways to track brand mentions in AI search all start with this kind of structured prompt mapping — not random querying.

Capture Front-End Answers, Not API Responses

A common mistake in AI visibility tracking: relying on API responses rather than the actual front-end UI that users see.

API outputs can diverge from front-end answers. Formatting, citation placement, and even which brands appear can differ between what the model returns via API and what renders in the user interface. If you’re making decisions based on API data alone, your tracking may not reflect real user experience.

For each tracking run, capture:

- The complete answer text as rendered in the front-end UI

- All brands named, in order of appearance

- All visible citation links, normalized (strip UTMs, resolve redirects)

- Model version, browsing/retrieval mode, country, and language settings

- Timestamp for longitudinal comparison

Store weekly snapshots in a single repository. This gives you the historical data needed to calculate week-over-week volatility, track time-to-inclusion after content updates, and explain trend shifts to stakeholders.
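The capture checklist above maps naturally onto a single record per answer. Below is a minimal sketch of such a record, serialized as one JSON line so weekly runs can be appended to the same file; the field names and the `AnswerSnapshot` class are illustrative, not a standard schema.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone

@dataclass
class AnswerSnapshot:
    """One captured AI answer. Field names are illustrative, not a standard."""
    prompt: str
    platform: str
    answer_text: str
    brands_in_order: list
    citation_urls: list
    model_version: str
    retrieval_mode: str
    country: str
    language: str
    captured_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

def to_jsonl_line(snapshot):
    """Serialize a snapshot as one JSON line for an append-only weekly log."""
    return json.dumps(asdict(snapshot), ensure_ascii=False)

snapshot = AnswerSnapshot(
    prompt="best SIEM tools for SOC 2 compliance",
    platform="perplexity",
    answer_text="(full answer text as rendered in the UI)",
    brands_in_order=["Acme", "Borealis"],
    citation_urls=["https://example.com/siem-guide"],
    model_version="unknown",
    retrieval_mode="web",
    country="US",
    language="en",
)
line = to_jsonl_line(snapshot)
print(line)
```

Storing the settings (model version, retrieval mode, locale) with every row is what lets you later separate a real visibility change from a change in how the test was run.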

Warning: AI answers are volatile. Ahrefs research from late 2025 found that AI Overview content changes 70% of the time for the same query, with 45.5% of citations getting replaced in each new answer. A single snapshot is unreliable — weekly cadence is the minimum for meaningful data.

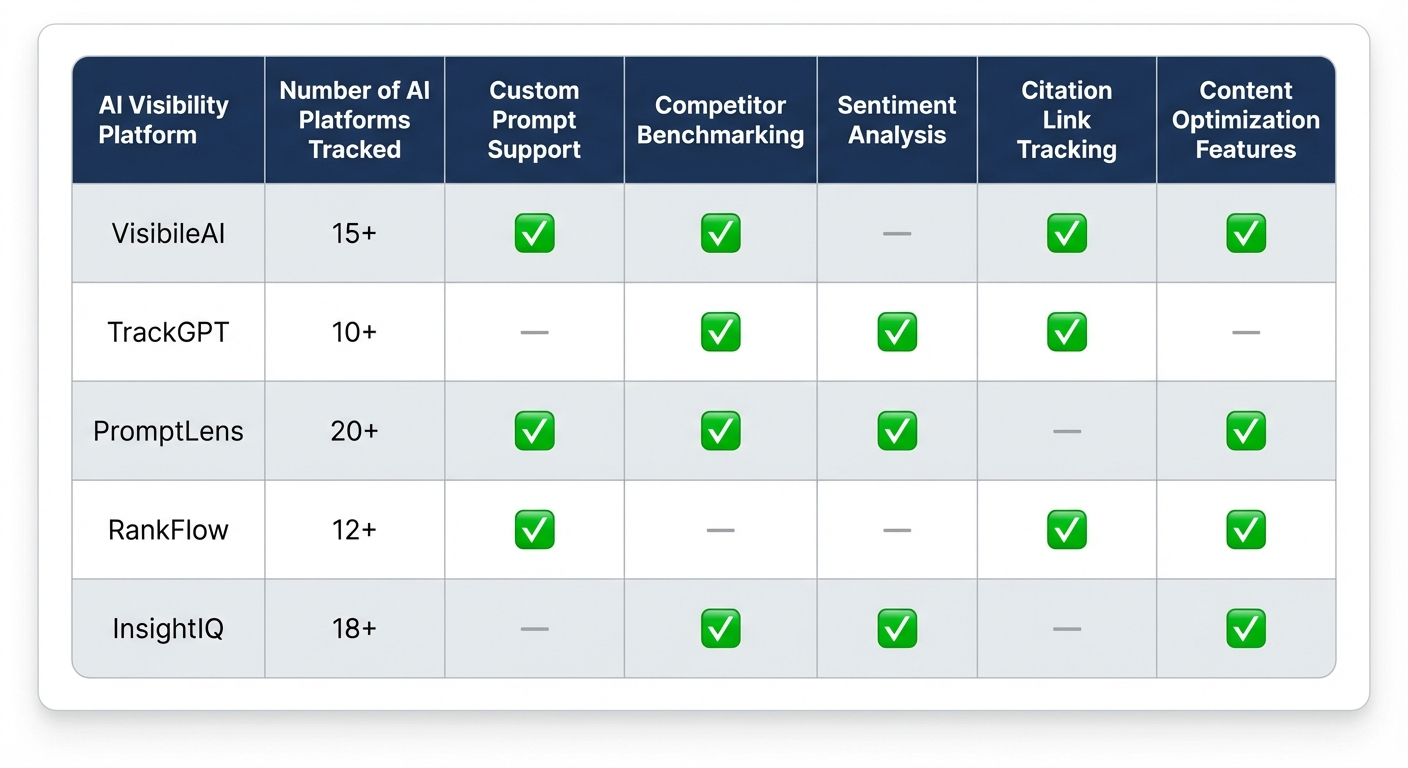

AI Visibility Tracking Tools: What’s Available in 2026

The AI visibility tracking market has matured rapidly since 2024. Here’s a practical breakdown of the platforms available to marketing teams, grouped by approach.

Dedicated AI Visibility Platforms

These tools are built specifically to track brand presence inside AI-generated answers:

| Platform | AI Engines Covered | Key Strength | Starting Price |
|---|---|---|---|
| Ahrefs Brand Radar | ChatGPT, Perplexity, Gemini, Copilot, AI Overviews, AI Mode | 327M+ monthly prompts from real search data, unlimited projects | Standalone or included with Ahrefs plans |
| Semrush AI Visibility Toolkit | ChatGPT, Perplexity, Gemini, AI Overviews | Connects AI visibility to traditional SEO data | $99/month |
| Otterly.AI | ChatGPT, Perplexity, Gemini, AI Overviews, Copilot | Affordable entry point for smaller teams | $29/month |
| Peec AI | ChatGPT, Perplexity, Gemini, AI Overviews | Sentiment tracking and contextual narrative analysis | €89/month |
| GrackerAI | ChatGPT, Perplexity, Claude, Gemini, AI Overviews, Copilot | Monitoring plus automated content optimization | Free tier available |

Traditional SEO Platforms With AI Tracking Features

Ahrefs and Semrush now include AI visibility modules alongside their traditional keyword and backlink tools. These work well if your team already uses these platforms and wants a unified view. The limitation: their AI tracking often covers fewer platforms and updates less frequently than dedicated tools.

Manual Tracking

For teams with limited budgets, a structured spreadsheet still works. Run 10–20 core prompts across 2–3 AI platforms weekly. Record: prompt text, brand mentioned (Y/N), position, citation link (Y/N), competitor names, AI platform, and date. It’s labor-intensive but produces usable baseline data.

The range of AI rank trackers built for brand mention monitoring has expanded significantly since early 2025, so it is worth comparing options before you commit to one.

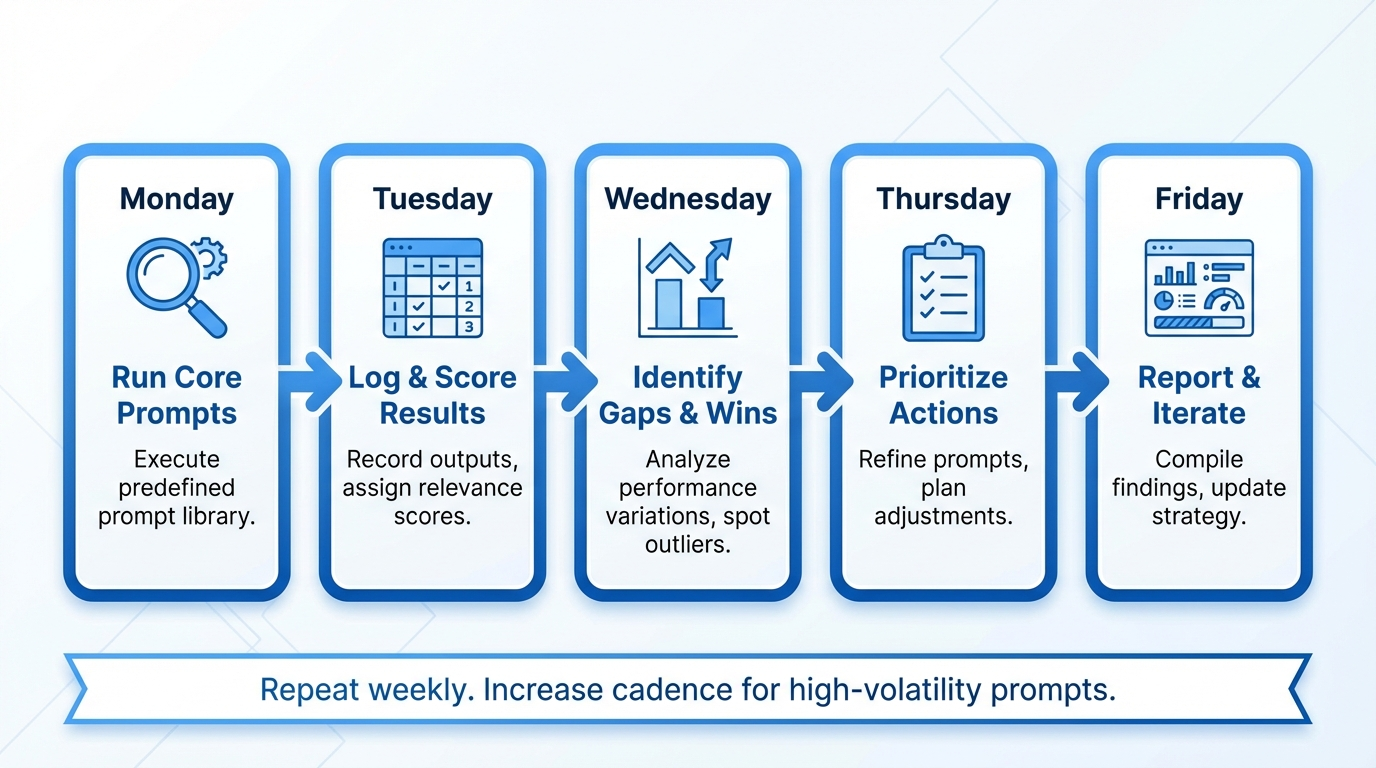

A Weekly AI Visibility Tracking Workflow

Consistent tracking beats sporadic audits. Here’s a practical weekly workflow that produces actionable data without consuming your entire team’s bandwidth.

Step 1: Run Your Core Prompt Set (Monday)

Execute your 30–50 core prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Use consistent settings: same country, language, and browsing mode for each run. If using a dedicated platform, this is automated. If manual, allocate 60–90 minutes.

Step 2: Log Results and Score (Tuesday)

For each prompt, record: inclusion (Y/N), citation (Y/N), placement position, sentiment, accuracy flag, and competitors present. Calculate your weekly inclusion rate, citation coverage, SOV, and placement scores. Compare against the previous week to spot gains and losses.
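The week-over-week comparison is simple arithmetic, but scripting it keeps the report consistent. A minimal sketch follows, assuming each week's metrics are stored as fractions in a dictionary; the metric names and values are made up for illustration.

```python
def week_over_week(previous, current):
    """Return {metric: (previous, current, change)} for metrics present in both weeks."""
    return {
        name: (previous[name], current[name], round(current[name] - previous[name], 4))
        for name in current
        if name in previous
    }

# Hypothetical weekly summaries (rates as fractions).
last_week = {"inclusion_rate": 0.38, "citation_coverage": 0.22, "share_of_voice": 0.18}
this_week = {"inclusion_rate": 0.44, "citation_coverage": 0.20, "share_of_voice": 0.21}

changes = week_over_week(last_week, this_week)
for metric, (before, after, delta) in changes.items():
    print(f"{metric:<18} {before:.0%} -> {after:.0%} ({delta:+.1%})")
```

Because AI answers are volatile, a single week's delta is weak evidence on its own; the same function can be pointed at a four-week average to smooth out noise.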

Step 3: Identify Gaps and Wins (Wednesday)

Flag the highest-impact gaps — prompts where competitors appear and you don’t, especially in high-value buyer-intent categories. Also flag wins: prompts where you gained visibility this week. Investigate what changed (new content, PR coverage, competitor content removed).
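Gap detection can be expressed as a filter over the week's results: keep the prompts where a tracked competitor appears and you do not, then sort by value. The sketch below assumes each result row lists the brands named and carries a revenue weight; the data and the `find_gaps` helper are hypothetical.

```python
def find_gaps(results, brand, competitors):
    """Prompts where at least one competitor appears and `brand` does not,
    sorted by an assumed per-prompt revenue weight (highest first)."""
    gaps = []
    for row in results:
        present = set(row["brands"])
        rivals = sorted(present & set(competitors))
        if brand not in present and rivals:
            gaps.append({"prompt": row["prompt"], "weight": row["weight"], "rivals": rivals})
    return sorted(gaps, key=lambda gap: gap["weight"], reverse=True)

# Hypothetical weekly results.
results = [
    {"prompt": "best enterprise CRM for healthcare", "weight": 5, "brands": ["Borealis", "Cobalt"]},
    {"prompt": "free CRM tools", "weight": 1, "brands": ["Cobalt"]},
    {"prompt": "CRM for mid-market SaaS", "weight": 3, "brands": ["Acme", "Borealis"]},
]

gaps = find_gaps(results, brand="Acme", competitors=["Borealis", "Cobalt"])
for gap in gaps:
    print(f'{gap["weight"]:>2}  {gap["prompt"]}  (present: {", ".join(gap["rivals"])})')
```

Running the same function with last week's results and diffing the two lists gives you the wins: prompts that were gaps a week ago and no longer are.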

Step 4: Prioritize Action Items (Thursday)

Convert gaps into specific tasks: create a comparison page, publish a structured guide targeting a missing query, request a correction for inaccurate AI descriptions, or pitch a guest post to a source that AI platforms frequently cite.

Step 5: Report and Iterate (Friday)

Produce a one-page summary: inclusion rate trend, SOV change, top 3 gaps, top 3 wins, and actions in progress. Share with marketing leadership. Over time, this creates a clear record of how AI visibility improvements correlate with pipeline activity.

What to Do When AI Ignores Your Brand

If your tracking reveals low inclusion rates, the problem usually falls into one of three categories: a proof gap, an entity gap, or a source authority gap. Each requires a different fix.

Close the Proof Gap

AI platforms cite content that directly, clearly, and authoritatively answers the user’s question. If your website lacks structured, citation-worthy content for the queries your buyers ask, AI has nothing to pull from.

Create evidence pages: detailed comparison matrices, step-by-step guides, FAQ-format content targeting specific buyer questions, and data-backed analysis. Structure these with clear H2/H3 hierarchies, bullet points, tables, and direct answer sentences that AI can extract cleanly.

Close the Entity Gap

AI systems need to understand what your brand is, what it does, and who it serves. If your structured data is thin or inconsistent, models struggle to associate your brand with the right category.

Implement Organization, Product, FAQPage, and HowTo schema markup. Use sameAs properties to link to your Wikipedia page, LinkedIn company profile, and Crunchbase listing. Keep brand name, descriptions, and category associations consistent across every property.
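As an illustration of the Organization markup with `sameAs` links, the sketch below builds a JSON-LD object in Python and wraps it in a script tag. The property names (`name`, `url`, `description`, `sameAs`) come from schema.org; the brand name and all URLs are placeholders to replace with your own.

```python
import json

# Placeholder values; swap in your real brand name, domain, and profile URLs.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Acme Observability",
    "url": "https://www.example.com",
    "description": "Observability platform for Kubernetes workloads.",
    "sameAs": [
        "https://en.wikipedia.org/wiki/Example",
        "https://www.linkedin.com/company/example",
        "https://www.crunchbase.com/organization/example",
    ],
}

json_ld = json.dumps(organization, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Generating the markup from one source of truth makes it easier to keep the brand name and description identical across every page and property, which is the consistency this section calls for.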

Close the Source Authority Gap

AI platforms heavily weight third-party validation. According to AirOps data published in late 2025, brands are 6.5x more likely to be cited through third-party sources than their own domains. If the publications and platforms that AI models trust don’t mention you, your own content alone may not be enough.

Build presence on the specific domains AI engines already cite in your category. Analyze which sources appear in AI answers for your target prompts, and focus digital PR, guest contributions, and community engagement on those exact outlets.

Agencies like BrandMentions approach this systematically — placing contextual brand mentions on 140+ high-authority publications that AI models actively reference when generating category recommendations.

Connecting AI Visibility Tracking to Revenue

Tracking AI mentions only matters if you can connect that data to business outcomes. Here’s how to bridge the attribution gap.

Segment AI Referral Traffic in GA4

Create custom channel groupings in GA4 to isolate traffic from AI sources: ChatGPT (referrals from chatgpt.com, plus the legacy chat.openai.com host), Perplexity (perplexity.ai), and others. This only captures click-through traffic, not the larger influence of zero-click AI mentions, but it provides a measurable baseline.
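GA4 channel groups are configured in the GA4 interface, but the same matching logic is useful when you work with exported referrer data. The sketch below classifies referrer URLs by hostname; the hostname map is an assumption based on the public domains of each product, so verify it against the sources that actually show up in your own referral reports.

```python
from urllib.parse import urlparse

# Assumed hostname-to-source map; verify against your own referral reports.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}

def classify_referrer(referrer_url):
    """Return the AI source name for a referrer URL, or None if it is not a known AI host."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)

for url in ["https://chatgpt.com/", "https://www.perplexity.ai/search?q=siem", "https://www.google.com/"]:
    print(url, "->", classify_referrer(url))
```

Exact hostname matching is deliberately strict here: it avoids counting ordinary Google search traffic as Gemini traffic just because both share a parent domain.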

Track Branded Search Volume as a Proxy

AI visibility drives downstream behavior. Buyers who see your brand recommended by ChatGPT often search for you directly afterward. Monitor branded search volume in Google Search Console and Ahrefs. A consistent increase in branded queries correlating with improved AI mention rates suggests AI is influencing awareness.

Samsung, for example, attributed 28% of its direct brand searches to increased zero-click exposure in AI and featured snippet results, according to research cited by The HOTH in 2025.

Use Time-to-Inclusion as an Optimization KPI

After publishing new content or earning a new third-party mention, track how long it takes for AI platforms to reflect that change in their answers. This time-to-inclusion (TTI) metric shows you which content types and publication channels get absorbed by AI models fastest — so you can double down on what works.
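Time-to-inclusion falls out of the weekly log directly: it is the gap between the publication date and the first tracking run in which the brand appears. A minimal sketch, with hypothetical dates and a hypothetical `time_to_inclusion` helper:

```python
from datetime import date

def time_to_inclusion(published_on, weekly_checks):
    """Days from publication to the first tracking run where the brand appeared.

    `weekly_checks` is a list of (run_date, included) tuples. Returns None if
    the brand has not appeared in any run on or after the publication date.
    """
    for run_date, included in sorted(weekly_checks):
        if included and run_date >= published_on:
            return (run_date - published_on).days
    return None

# Hypothetical Monday tracking runs after a comparison page went live on 2 January.
checks = [
    (date(2026, 1, 5), False),
    (date(2026, 1, 12), False),
    (date(2026, 1, 19), True),
    (date(2026, 1, 26), True),
]

tti_days = time_to_inclusion(date(2026, 1, 2), checks)
print(f"time to inclusion: {tti_days} days")
```

With weekly runs, TTI is only accurate to within about seven days, so compare content types using averages over several launches rather than single values.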

Report AI Visibility Alongside Pipeline Metrics

Present AI visibility data on the same dashboard as MQL volume, pipeline generation, and close rates. Over two to three quarters, patterns emerge. In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions achieved AI recommendation rates 89% higher than those relying solely on traditional SEO — and those recommendations correlated with measurable increases in inbound demo requests.

For SaaS companies specifically, the SaaS brand mentions approach connects AI visibility improvements directly to pipeline metrics that growth teams care about.

How Often Should You Retest AI Answers?

AI answers are inherently volatile. The same prompt asked on Monday and Friday can produce different brand recommendations because models pull from updated sources, adjust retrieval weights, and respond to changes in the underlying web content.

Here’s a practical cadence:

- Weekly: Core buyer-intent prompts (your top 30–50). This is the minimum for meaningful trend data.

- Bi-weekly: Extended prompt sets covering long-tail variations and secondary queries.

- After major changes: Increase frequency temporarily after publishing significant content, earning high-authority PR placements, or noticing competitor content shifts.

- Monthly: Full audit of prompt set relevance — add new buyer queries surfacing in sales conversations and prune prompts that no longer match active buyer behavior.

Tip: Flag high-volatility prompts — queries where the set of recommended brands changes significantly week over week. These prompts represent opportunities. Volatile answers mean AI hasn’t “settled” on a preferred recommendation, which makes it easier to earn your way in with strong, fresh content.
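One hedged way to quantify "changes significantly week over week" is the average turnover in the set of recommended brands, measured as one minus the Jaccard similarity between consecutive weeks. The metric choice, the 0.5 threshold, and the sample data below are all assumptions to tune against your own tracking history.

```python
def brand_set_volatility(weekly_brand_sets):
    """Average week-over-week turnover (1 - Jaccard similarity) of recommended brands."""
    changes = []
    for previous, current in zip(weekly_brand_sets, weekly_brand_sets[1:]):
        union = previous | current
        overlap = len(previous & current) / len(union) if union else 1.0
        changes.append(1.0 - overlap)
    return sum(changes) / len(changes) if changes else 0.0

# Hypothetical four weeks of brands recommended for two prompts.
stable = [{"Acme", "Borealis"}] * 4
volatile = [{"Acme", "Borealis"}, {"Cobalt", "Borealis"}, {"Dynamo"}, {"Acme", "Dynamo"}]

VOLATILITY_THRESHOLD = 0.5  # assumed cut-off; tune against your own data
flagged = brand_set_volatility(volatile) > VOLATILITY_THRESHOLD
print(f"stable {brand_set_volatility(stable):.2f}, volatile {brand_set_volatility(volatile):.2f}")
```

A score near 0 means the same brands are recommended every week; a score near 1 means the list is almost entirely replaced each time.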

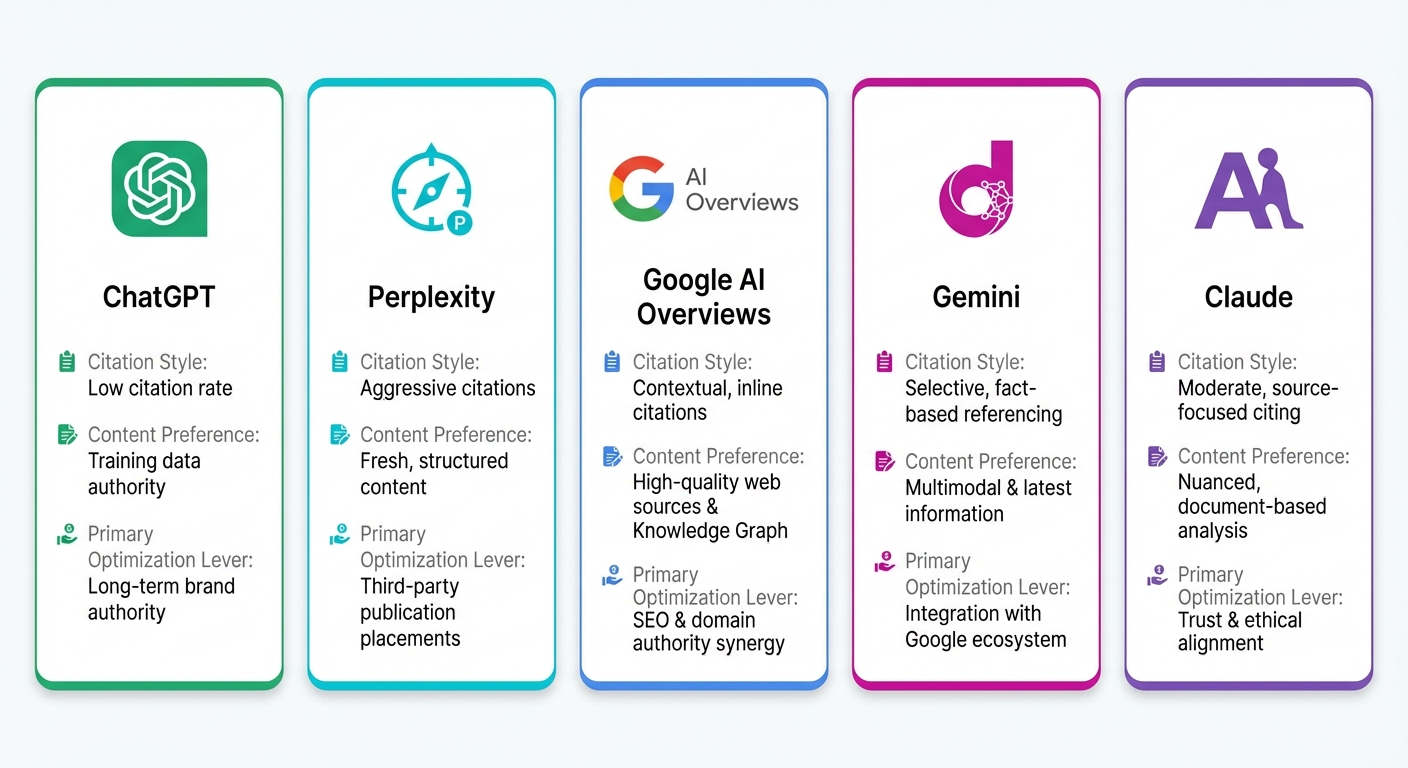

How AI Platforms Differ, and Why Platform-Specific Monitoring Matters

Each AI platform has its own retrieval behavior, citation style, and source preferences. A brand that dominates ChatGPT answers might be absent from Perplexity. Tracking aggregate numbers across all platforms masks critical differences.

ChatGPT

Relies heavily on training data for brand associations, supplemented by real-time web browsing when enabled. Low citation rate (roughly 2 in 10 mentions include links). Favors brands with strong category association in its training corpus — which means long-term content authority and consistent web presence matter most.

Perplexity

Aggressively cites sources — averaging 5+ citations per answer. But mentions brands less frequently (about 1 in 5 answers include specific brand references). Perplexity rewards structured, recently published content from authoritative domains. If your content appears on the sources it indexes, citation coverage tends to be high.

Google AI Overviews and AI Mode

Heavily influenced by traditional Google ranking signals: domain authority, topical relevance, backlink profile, and structured data. Brands that rank well organically have an advantage, but AI Overviews also pull from sources not in the top 10 organic results — making third-party citations a significant factor.

Gemini and Claude

Both lean toward niche expertise and well-structured educational content. Gemini integrates deeply with Google’s Knowledge Graph, rewarding brands with strong entity definitions. Claude tends to favor nuanced, balanced content — comparison pages and thorough analysis perform well.

Track each platform independently. Your optimization strategy for ChatGPT (building long-term brand authority) may differ entirely from your Perplexity strategy (publishing fresh, well-cited content on high-authority domains).

Common Mistakes That Undermine AI Brand Tracking

Even teams that invest in AI visibility tracking often make errors that degrade data quality or lead to wrong conclusions.

- Testing only your own brand name. You learn nothing about competitive positioning if you don’t track how competitors appear for the same prompts. Always include your top 3–5 competitors in every tracking run.

- Counting redirect variants as separate citations. If your domain uses UTM parameters, trailing slashes, or HTTP-to-HTTPS redirects, normalize URLs before counting. Otherwise, one citation looks like three.

- Ignoring locale and language settings. AI answers shift based on the user’s country and language. A brand visible in US-English ChatGPT might be absent in UK-English or German queries. Set consistent locale parameters — and test international markets separately if you sell globally.

- Treating a single snapshot as truth. One prompt, tested once, on one platform, tells you almost nothing. Trends emerge from weekly repetition. Build your conclusions on multi-week data, not individual observations.

- Relying on API outputs instead of front-end capture. As discussed earlier, API and front-end responses can diverge. Always validate against the actual user experience.
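The URL normalization mistake in the list above is easy to avoid with a small helper. The sketch below uses only the Python standard library and makes several stated assumptions: http and https are treated as the same page, a leading "www.", any port, and a trailing slash are ignored, and `utm_*` parameters are dropped. It does not follow redirects, which would need a separate HTTP step.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def normalize_citation_url(url):
    """Canonicalize a cited URL so tracking variants count as one citation.

    Assumptions: scheme is forced to https, "www." and ports are dropped,
    trailing slashes are ignored, utm_* parameters and fragments are removed.
    Redirects are not followed here.
    """
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    query = urlencode(
        [(key, value)
         for key, value in parse_qsl(parts.query, keep_blank_values=True)
         if not key.lower().startswith("utm_")]
    )
    return urlunsplit(("https", host, path, query, ""))

variants = [
    "http://example.com/guide/",
    "https://www.example.com/guide?utm_source=chatgpt.com",
    "https://example.com/guide",
]
unique = {normalize_citation_url(u) for u in variants}
print(unique)
```

All three variants collapse to a single URL, so the citation is counted once. Non-tracking query parameters are kept, since they can point to genuinely different pages.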

FAQ

How is tracking AI brand mentions different from tracking Google rankings?

Google rankings measure your website’s position in a list of links. AI brand mentions measure whether an AI platform names or recommends your brand inside a generated answer — a synthesized response that may or may not link to you. The two metrics don’t correlate reliably, which is why they require separate tracking systems and distinct optimization strategies.

Can I track AI brand mentions for free?

Yes. A structured spreadsheet with 10–20 prompts tested weekly across 2–3 AI platforms provides usable baseline data at no cost beyond your time. Google Alerts also catches some of the web mentions that feed AI training data. For scalable, automated tracking across all major platforms, dedicated tools range from $29 to $500+ per month depending on features and coverage.

How quickly can I improve my AI visibility after I start tracking?

Early gains typically appear within 4–6 weeks of targeted content creation and structured data optimization. Significant improvements in citation frequency and competitive share of voice usually take 2–3 months. Building durable AI brand authority — where models consistently recommend you across diverse queries — is a 6–12 month process that compounds over time.

Which AI platform should I prioritize for tracking?

Start with the platforms your buyers use most. For B2B SaaS, ChatGPT and Google AI Overviews typically have the highest volume. Add Perplexity — its aggressive citation behavior makes it a strong source of AI referral traffic. Expand to Gemini, Claude, and Copilot once your core tracking workflow is established.

Does strong traditional SEO automatically mean strong AI visibility?

Not necessarily. Research from Passionfruit found that 80% of sources cited by AI platforms don’t appear in Google’s traditional top 10 results. Strong SEO creates a foundation, but AI visibility also depends on third-party mentions, structured data quality, content freshness, and cross-source consistency — factors that go beyond traditional ranking signals.

What’s the minimum prompt set I need for meaningful tracking?

Start with 30–50 prompts per market, split across category, comparison, and solution queries. Include 2–3 natural variations per prompt. This gives you enough data points to calculate statistically meaningful inclusion rates and share of voice — without creating an unmanageable weekly workload.

Turn AI Tracking Into Competitive Advantage

Tracking brand mentions in AI search results is no longer optional for B2B marketing teams. It’s the difference between knowing where your brand stands in the fastest-growing buyer research channel — and guessing.

The workflow is clear: build a buyer-intent prompt set, track inclusion and citation metrics weekly across each AI platform, identify competitive gaps, and close them with structured content, entity optimization, and strategic third-party placements.

What separates brands that grow AI visibility from those that stagnate is consistency. Weekly tracking creates compounding insight. Each cycle sharpens your understanding of which content moves AI recommendations, which platforms your buyers trust most, and where your competitors are vulnerable.

If you want to see where your brand stands across ChatGPT, Perplexity, Gemini, and AI Overviews right now — before your next planning cycle — get a free AI visibility audit and find out exactly what AI says about your brand, and your competitors.