Most marketing teams have no reliable way to know whether Perplexity mentions their brand — or how often it recommends a competitor instead. Tracking brand mentions in Perplexity requires a prompt-based monitoring workflow because Perplexity has no analytics dashboard, no search console, and no native reporting for brands. You have to query it systematically, record what it returns, and measure changes over time.

This is a different discipline from traditional SEO monitoring. Perplexity uses retrieval-augmented generation (RAG): it pulls live web sources in real time and synthesizes them into cited answers. Your content earns a mention, a citation, both, or nothing. And unlike Google rankings, the results shift based on prompt phrasing, model selection, and source freshness.

As of 2026, Perplexity processes over 400 million queries per month, according to the company’s own reporting. That volume makes it a meaningful discovery channel — especially for B2B buyers who use it for vendor research, product comparisons, and shortlisting.

Below, you’ll find a practical system for tracking your brand’s Perplexity visibility — from building a prompt library to defining the right KPIs to choosing between manual and automated workflows.

What You’ll Learn

- The difference between mentions, citations, and links in Perplexity — and why you need to track all three separately

- How to build a repeatable prompt library anchored in real buyer queries

- Which KPIs actually measure Perplexity visibility (and which vanity metrics to ignore)

- A step-by-step manual tracking workflow you can launch in 30 minutes

- When manual tracking breaks down and automation becomes necessary

- How to interpret patterns and turn tracking data into content improvements

- Common mistakes that corrupt your data — and how to avoid them

Why Perplexity Tracking Requires a Different Approach Than Google

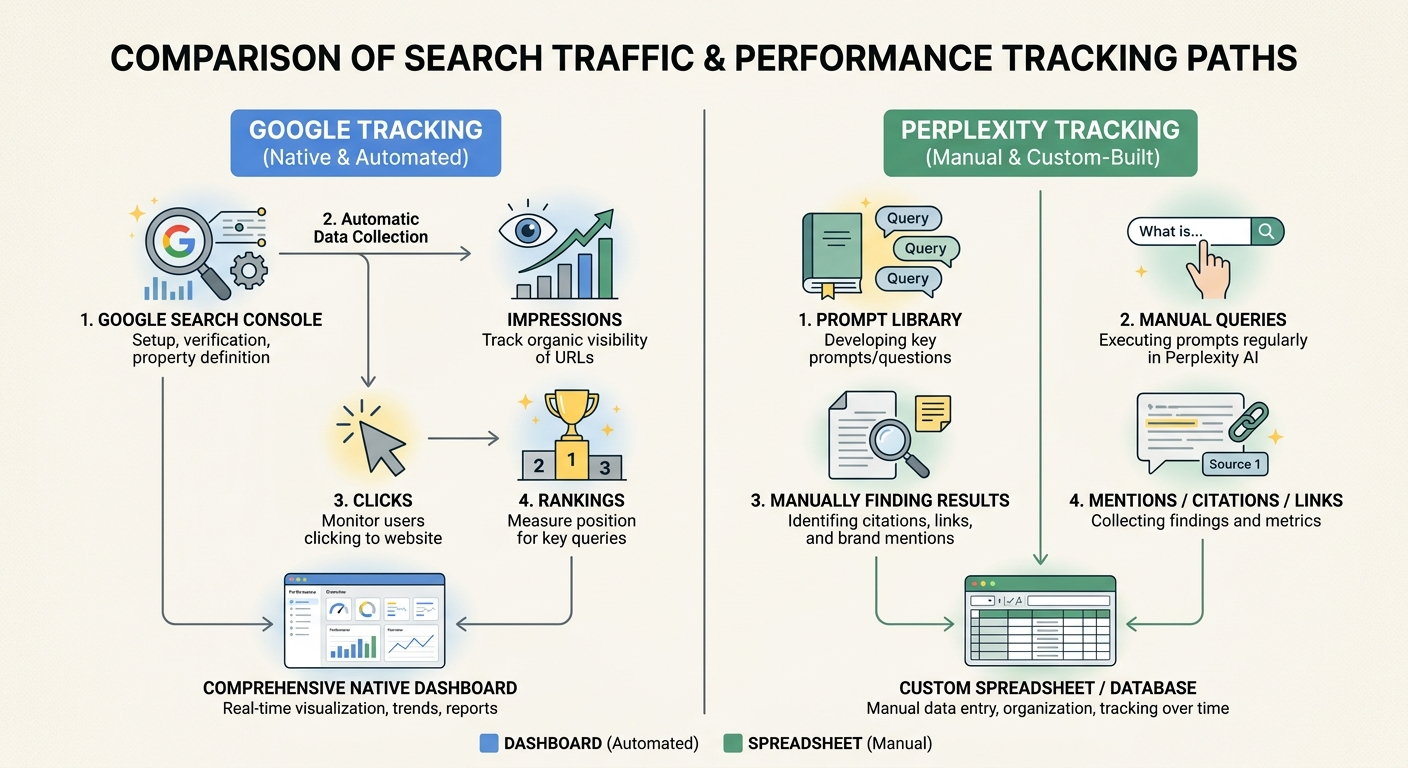

Google Search Console gives you impressions, clicks, and average position for every query. Perplexity gives you nothing. There is no brand dashboard, no analytics API for publishers, and no way to see how often your domain appears in answers.

This creates a measurement gap that many marketing teams underestimate. You can rank well in Google organic results while being completely invisible in Perplexity — because Perplexity selects sources based on different signals.

Perplexity’s RAG architecture means it performs a fresh web search for every query, evaluates source quality in real time, and synthesizes an answer with inline citations. The sources it selects depend on:

- Content clarity — direct answers, structured formatting, clear claims

- Source authority — domain trust, editorial quality, backlink profile

- Freshness — recently published or updated content surfaces faster

- Topical depth — consistent coverage across related subtopics

A page that ranks #1 in Google may never appear in Perplexity if it’s poorly structured, lacks clear claims, or competes against a more authoritative source for that specific query. That’s why you need a dedicated tracking workflow — not just a column added to your existing rank tracker.

Mentions vs. Citations vs. Links: Three Metrics, Three Signals

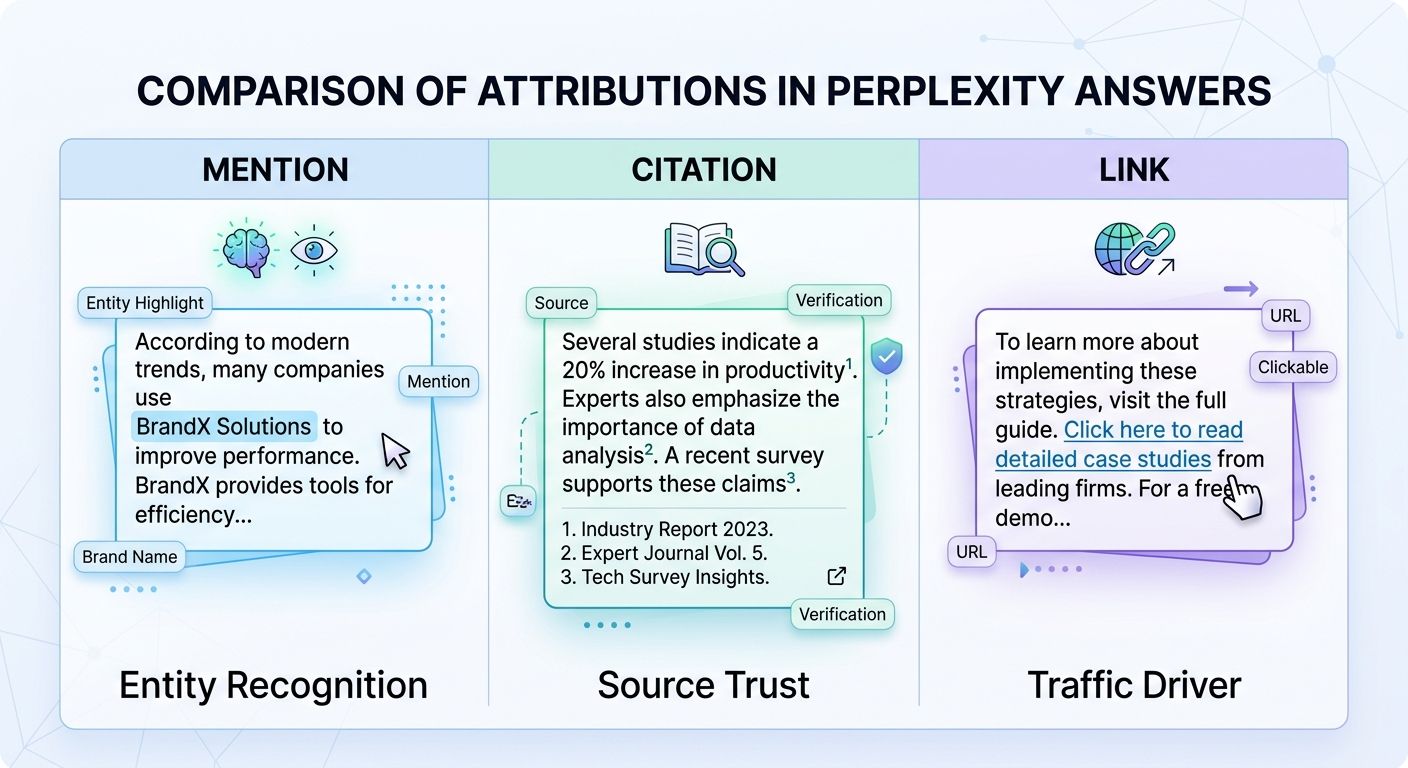

A brand mention in Perplexity is any instance where your company or product name appears in the answer text. A citation is when your domain appears in the numbered reference list at the bottom of the answer. A link is a clickable URL that sends users directly to your site.

These are three distinct outcomes, and they tell you different things about your visibility:

- Mention without citation: Perplexity recognizes your brand as an entity but doesn’t use your own content as a source. This signals a content problem, not an entity recognition problem.

- Citation without mention: your content is used as a source, but your brand name doesn’t appear in the answer text. Perplexity treats your page as informational, not as a brand recommendation.

- Mention with citation and link: the strongest signal. Perplexity both recommends your brand and directs users to your content.

If you blend these three metrics into a single “visibility score,” you lose the diagnostic power. A brand with high mentions but low citations needs different fixes than a brand with zero mentions entirely. Separate tracking columns for each metric let you diagnose the specific gap.

For context on how brand mentions function across AI platforms beyond Perplexity, the overview at brand mentions in AI breaks down the broader landscape.

How to Build a Prompt Library That Mirrors Real Buyer Queries

Your tracking is only as useful as your prompt list. Random questions produce random data. A structured prompt library — anchored in actual buyer language — produces actionable visibility intelligence.

Start with seed keywords from real demand signals

Pull seed terms from sources that reflect how your buyers actually search:

- PPC search term reports (especially high-converting queries)

- CRM call logs and sales conversation transcripts

- On-site search data

- Support ticket themes and common pre-sale questions

- Keyword research tools filtered by commercial and informational intent

Avoid inventing prompts based on what you think buyers ask. Ground every prompt in evidence of real demand.

Transform seeds into natural-language prompts

Perplexity users type conversational queries — not keyword strings. Transform each seed into 3–5 natural-language prompts that mirror how someone would actually ask the question.

Seed keyword: “AI visibility tracking tools”

Prompts:

- “What are the best tools for tracking brand visibility in AI search?”

- “How do I monitor my brand mentions in AI-generated answers?”

- “Which platforms track whether my brand appears in Perplexity and ChatGPT?”

Keep prompts neutral. Never include your own brand name in the prompt unless you’re specifically testing branded awareness. Stuffing your brand into the query biases the result and defeats the purpose of measurement.

Organize prompts by intent category

Cluster your prompts into three categories so you can compare performance across buyer journey stages:

- Informational — “How does AI search visibility work?” (awareness stage)

- Commercial — “Best AI brand monitoring tools for SaaS companies” (consideration stage)

- Comparison — “[Competitor] vs. [Competitor] for tracking AI mentions” (decision stage)

This structure reveals whether Perplexity associates your brand with early-stage education, active vendor evaluation, or neither. Many brands discover they’re missing entirely from commercial-intent prompts, which is where revenue impact concentrates.
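If you keep the library in code rather than a document, one possible shape is sketched below. It is a minimal illustration, not a required format: the `Prompt` class, the `INTENTS` labels, and the `contains_brand` and `group_by_intent` helpers are hypothetical names chosen for this example.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical labels for the three intent categories described above.
INTENTS = ("informational", "commercial", "comparison")


@dataclass(frozen=True)
class Prompt:
    seed: str    # seed keyword the prompt was derived from
    text: str    # exact wording typed into Perplexity
    intent: str  # one of INTENTS

    def __post_init__(self):
        if self.intent not in INTENTS:
            raise ValueError(f"unknown intent: {self.intent}")


def contains_brand(prompt: Prompt, brand_names: list[str]) -> bool:
    """Flag prompts that include your own brand name, since they bias results."""
    lowered = prompt.text.lower()
    return any(name.lower() in lowered for name in brand_names)


def group_by_intent(prompts: list[Prompt]) -> dict[str, list[Prompt]]:
    """Cluster prompts so results can be compared per buyer journey stage."""
    groups = defaultdict(list)
    for p in prompts:
        groups[p.intent].append(p)
    return dict(groups)


library = [
    Prompt("AI visibility tracking tools",
           "What are the best tools for tracking brand visibility in AI search?",
           "commercial"),
    Prompt("AI visibility tracking tools",
           "How do I monitor my brand mentions in AI-generated answers?",
           "informational"),
]
```

Running `contains_brand` over the library before each tracking session is a cheap way to catch branded prompts that slipped into a set meant to be neutral.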

How many prompts to start with

Begin with 25–50 prompts. This is enough to establish a baseline across your core topic clusters without creating an unmanageable manual workload. Expand to 100–200 once you’ve validated your tracking cadence and need stable trendlines.

Prioritize prompts with revenue intent first. Backfill informational prompts later — they matter for building the citation graph that feeds commercial answers, but they’re not where you start measuring ROI.

Setting Up a Manual Tracking Workflow

Manual tracking works well for initial baselines and small prompt libraries (under 50 queries). Here’s how to set it up so your data is reliable and comparable week over week.

Step 1: Create a controlled testing environment

Open Perplexity in an incognito browser window or a dedicated browser profile. This reduces personalization noise. Before your first run, document your baseline environment:

- Device and browser

- Logged-in or logged-out state

- Region (or VPN endpoint if testing multiple locations)

- Perplexity model selection (Sonar, Sonar Pro, etc.)

Keep these variables consistent across every tracking run. If Perplexity changes its default model between runs, note it explicitly — model changes can shift outputs even when nothing else changes.

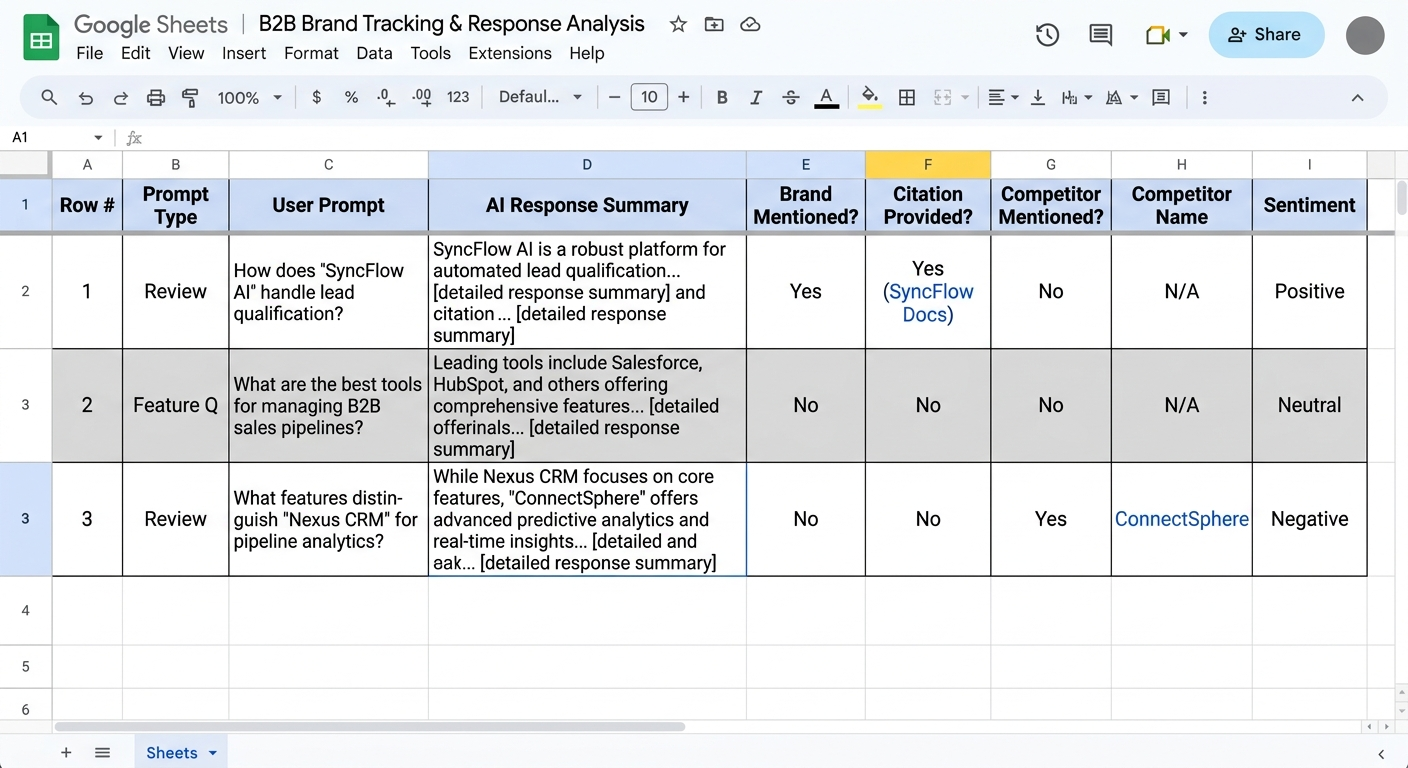

Step 2: Build your tracking spreadsheet

Use Google Sheets, Airtable, or Notion. Create columns for:

- Date and time

- Exact prompt text

- Perplexity model used

- Mentioned? (Yes/No)

- Cited? (Yes/No, with URL if applicable)

- Linked? (Yes/No, with URL if applicable)

- Competitors mentioned (list all brand names)

- Source URLs in references

- Accuracy score (1–5: does the description match your actual positioning?)

- Notes (entity errors, outdated info, wrong service area, etc.)

Save the full answer text or a screenshot for each prompt. You’ll need it for trend analysis and for diagnosing why visibility changed.
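For teams that prefer to generate the sheet from a script, the columns above could be modeled roughly as follows. This is a sketch under assumptions: the `TrackingRow` field names and the `rows_to_csv` helper are made up for illustration, and multi-value columns are stored as `;`-separated strings purely for simplicity.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class TrackingRow:
    run_at: str            # date and time of the run
    prompt: str            # exact prompt text
    model: str             # Perplexity model used
    mentioned: bool
    cited: bool
    linked: bool
    cited_url: str = ""
    linked_url: str = ""
    competitors: str = ""  # brand names separated by ";"
    source_urls: str = ""  # reference URLs separated by ";"
    accuracy: int = 0      # 1-5 score; 0 means not scored yet
    notes: str = ""


def rows_to_csv(rows: list[TrackingRow]) -> str:
    """Serialize rows to CSV text that can be imported into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(TrackingRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()
```

Keeping mentioned, cited, and linked as three separate boolean fields preserves the distinction between the three signals at the data level.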

Step 3: Run prompts in weekly batches

Pick a fixed day and time each week. Run your full prompt library (or a prioritized subset if you have 100+). Record every data point for every prompt — even when the results look the same as last week.

Annotate your sheet with any external events that could affect results: new content published, a PR hit, a schema update, a product launch, or a directory listing improvement. Without these annotations, you’ll struggle to explain what caused a visibility change.

Step 4: Define your baseline KPIs

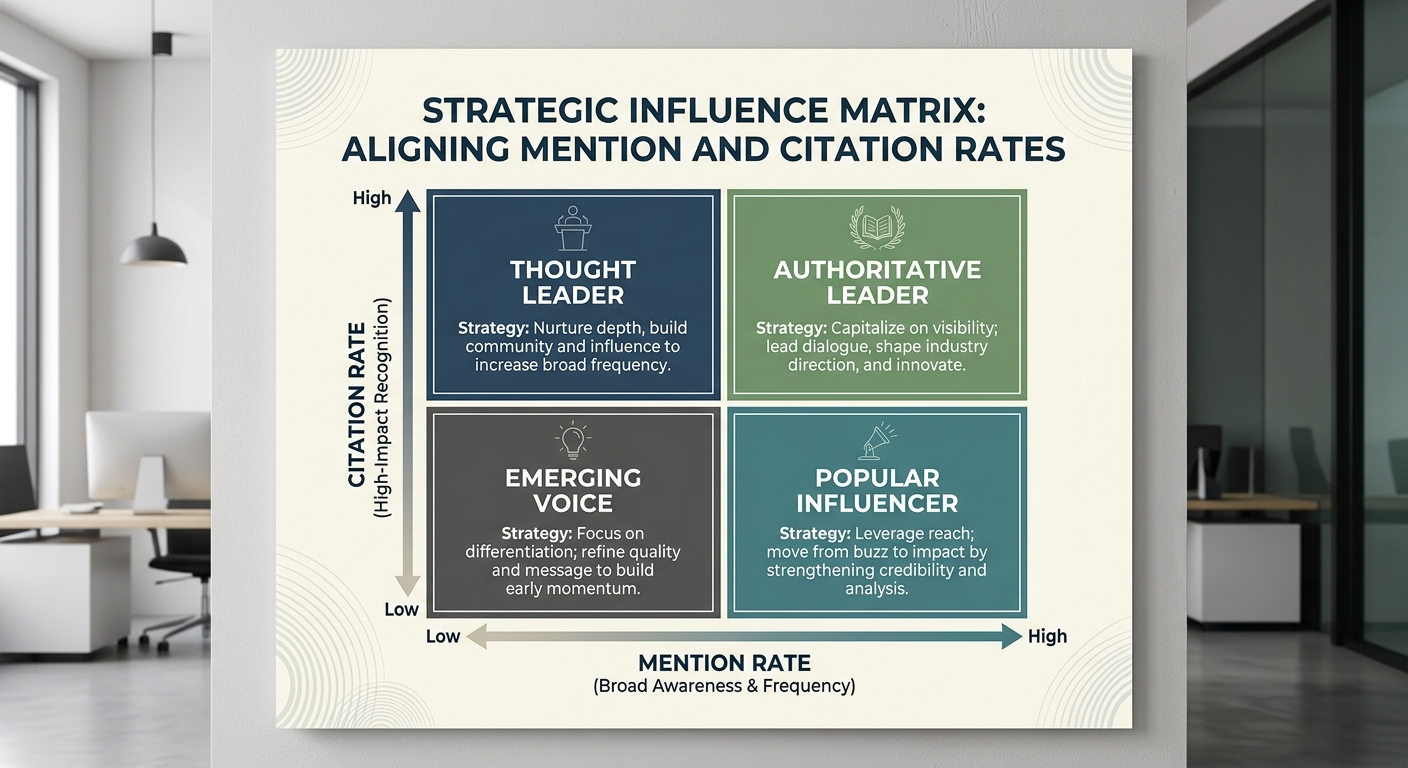

Two primary metrics anchor your tracking:

Visibility rate — the percentage of prompts where your brand is mentioned in the answer text. Calculate: (prompts with mention ÷ total prompts) × 100.

Citation rate — the percentage of mentions where your domain appears in the reference list. Calculate: (mentions with citation ÷ total mentions) × 100.

A secondary metric worth tracking: share of voice — your mentions compared to competitor mentions across the same prompt set. This tells you whether you’re gaining or losing ground relative to your category.
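The three calculations can be sketched in a few lines of Python. The record layout (`mentioned`, `cited`, `competitors` keys) is an assumption for this example, and the share-of-voice formula shown here (your mentions divided by all brand mentions, yours plus competitors) is only one reasonable way to define it.

```python
def visibility_rate(results: list[dict]) -> float:
    """Percent of prompts where the brand is mentioned in the answer text."""
    if not results:
        return 0.0
    mentioned = sum(1 for r in results if r["mentioned"])
    return mentioned / len(results) * 100


def citation_rate(results: list[dict]) -> float:
    """Percent of mentions where your domain also appears in the references."""
    mentions = [r for r in results if r["mentioned"]]
    if not mentions:
        return 0.0
    cited = sum(1 for r in mentions if r["cited"])
    return cited / len(mentions) * 100


def share_of_voice(results: list[dict]) -> float:
    """Your mentions as a percent of all brand mentions (assumed definition)."""
    own = sum(1 for r in results if r["mentioned"])
    rivals = sum(len(r["competitors"]) for r in results)
    total = own + rivals
    return own / total * 100 if total else 0.0


# Hypothetical results for four prompts from one weekly run.
results = [
    {"mentioned": True, "cited": True, "competitors": ["A"]},
    {"mentioned": True, "cited": False, "competitors": ["A", "B"]},
    {"mentioned": False, "cited": False, "competitors": ["A"]},
    {"mentioned": False, "cited": False, "competitors": []},
]
```

Note that citation rate uses mentions, not total prompts, as its denominator, so it stays meaningful even when visibility rate is low.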

For a broader view of tracking across multiple AI platforms, the guide on how to track brand mentions across AI search platforms covers the cross-platform workflow.

When Manual Tracking Breaks Down (and What to Do Next)

Manual tracking is viable for 25–50 prompts with weekly cadence. Beyond that, three problems emerge:

- Time cost compounds. Running 100 prompts manually takes 2–3 hours per session. At weekly cadence, that’s roughly 104–156 hours per year (2–3 hours × 52 weeks) on data collection alone, before any analysis.

- Consistency degrades. Human error creeps in: skipped prompts, inconsistent screenshots, forgotten annotations. Data quality drops, and trend analysis becomes unreliable.

- Multi-location tracking becomes impractical. If you operate in multiple regions, you’d need separate VPN sessions for each location — multiplying the workload.

At this point, automated monitoring tools become the practical choice. Several platforms now offer Perplexity-specific tracking — including Keyword.com, SE Ranking, LLM Pulse, and others. These tools run your prompt library on a schedule, capture mentions and citations, and store historical data for trend analysis.

If you’re evaluating tools specifically for Perplexity, the Perplexity mentions tool comparison covers the current options in detail.

Pro Insight: Don’t skip manual tracking entirely just because automation exists. Run a manual baseline first to understand what the data looks like and which prompts matter most. Then migrate your validated prompt library into an automated tool. This prevents the common mistake of tracking 200 prompts that don’t map to real buyer behavior.

How to Read Perplexity Tracking Data Without Overthinking It

Raw data becomes useful only when you map it to specific actions. Here are the four patterns you’ll encounter most often — and what each one means.

Pattern 1: High mention rate, high citation rate

Perplexity recognizes your brand and trusts your content enough to source it. This is the strongest signal. Your priority: maintain momentum by keeping cited pages fresh and expanding into adjacent topic clusters.

Pattern 2: High mention rate, low citation rate

Perplexity knows your brand exists but isn’t using your content as the source. It’s pulling the information from third-party coverage — directories, reviews, news articles — rather than your own pages. Your priority: improve on-site content structure, add original data, and build pages that are easier for RAG systems to extract from.

Pattern 3: Low mention rate across all prompts

Perplexity doesn’t associate your brand with the topics you care about. This is usually an entity authority problem, not a content volume problem. Your priority: build consistent editorial mentions across high-authority publications that AI models draw from, and ensure entity consistency (brand name, positioning, category) across the web.

Pattern 4: Competitor dominates your category prompts

A specific competitor consistently appears where you don’t. Examine which source URLs Perplexity cites for that competitor. Often, the competitor has stronger coverage on the exact sites Perplexity trusts for your category — industry directories, niche publications, or comparison pages. Your priority: earn coverage on those same sources and create content that directly answers the prompts where you’re missing.
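The four patterns can be turned into a rough triage function. The sketch below is illustrative only: the 50% cut-off for "high" and the rule that a competitor "dominates" at twice your visibility rate are assumptions, not thresholds from any benchmark, and you should tune them to your own baseline.

```python
def classify_pattern(visibility: float, citation: float,
                     top_competitor_visibility: float = 0.0,
                     high: float = 50.0) -> str:
    """Map KPI values (in percent) to one of the four patterns above.

    The default cut-off and the 2x dominance rule are illustrative assumptions.
    """
    if (top_competitor_visibility >= high
            and top_competitor_visibility >= 2 * visibility):
        return "Pattern 4: competitor dominates"
    if visibility < high:
        return "Pattern 3: low mention rate"
    if citation >= high:
        return "Pattern 1: high mention, high citation"
    return "Pattern 2: high mention, low citation"
```

The competitor check runs first because a dominant competitor changes the priority even when your own rates look acceptable.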

The Source URLs That Matter Most

Every Perplexity answer includes numbered references. Those reference URLs are the most valuable data in your entire tracking workflow — more valuable than the mention itself.

Here’s why: the source URLs reveal Perplexity’s citation supply chain. If Perplexity consistently cites a particular review site, industry directory, or publication for your category, that source is a “citation gatekeeper.” Getting your brand mentioned or reviewed on that source directly influences whether Perplexity includes you in future answers.

Track source URLs across all your prompts and look for patterns:

- Which domains appear most frequently as references?

- Are there sources your competitors appear on that you don’t?

- Which of your own pages get cited — and which never do?

This analysis gives you a concrete content and PR roadmap. Instead of guessing which publications to target, you’re working from evidence of what Perplexity already trusts.
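A small script can answer the first two questions from your recorded reference URLs. This is a sketch with assumed inputs: each row is a dict with a `mentioned` flag and a `source_urls` list, the example domains are placeholders, and `gap_domains` is only a rough proxy, since it compares answers that mention you with answers that don’t rather than inspecting the cited pages themselves.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the host of a full URL (scheme included), without 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def domain_counts(source_urls: list[str]) -> Counter:
    """Count how often each domain appears across all recorded references."""
    return Counter(domain_of(u) for u in source_urls if u)


def gap_domains(rows: list[dict]) -> list[str]:
    """Domains cited only in answers where your brand was NOT mentioned."""
    with_you, without_you = set(), set()
    for row in rows:
        domains = {domain_of(u) for u in row["source_urls"]}
        (with_you if row["mentioned"] else without_you).update(domains)
    return sorted(without_you - with_you)


# Placeholder data: two prompts with their reference lists.
rows = [
    {"mentioned": True, "source_urls": ["https://www.reviews.example/a"]},
    {"mentioned": False, "source_urls": ["https://reviews.example/b",
                                         "https://directory.example/x"]},
]
all_urls = [u for row in rows for u in row["source_urls"]]
```

Domains that rank high in `domain_counts(all_urls)` and also show up in `gap_domains(rows)` are reasonable candidates for the "citation gatekeeper" list.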

Agencies like BrandMentions approach this systematically — placing contextual brand mentions on 140+ high-authority publications that AI models actively draw from during source retrieval. The goal isn’t volume; it’s earning presence on the specific sources that feed Perplexity’s citation graph for your category.

Understanding how these brand mentions in Perplexity function at the source level gives you a clearer picture of what drives inclusion versus exclusion.

Five Mistakes That Corrupt Your Tracking Data

Bad data leads to bad decisions. These are the most common errors teams make when tracking Perplexity visibility — and each one is avoidable.

1. Changing multiple variables between runs

If you change the prompt phrasing, model selection, and location in the same week, you can’t attribute any visibility change to a specific cause. Change one variable at a time. If Perplexity updates its default model, note it and keep everything else constant.

2. Only tracking “best of” prompts

Shortlist queries (“best CRM for startups”) get all the attention, but informational queries (“how does CRM integration work”) often determine which sources Perplexity trusts when it later answers commercial questions. Track both.

3. Not saving source URLs

Screenshots alone aren’t enough. Record the full list of cited source URLs for every prompt. These URLs are the foundation of your content improvement and PR targeting strategy.

4. Treating one check as a trend

A single data point is a snapshot, not a signal. You need 4–6 weeks of consistent data before you can identify meaningful patterns. Resist the urge to react to a single week’s results.

5. Blending mentions, citations, and links into one metric

As covered earlier, these three outcomes require separate tracking columns. A composite “visibility score” obscures the specific problem you need to fix.
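The first mistake in this list is the easiest to guard against mechanically. As a minimal sketch, assuming each run’s environment is recorded as a dict, a check like the one below flags runs where more than one controlled variable changed. The key names in `ENV_KEYS` are hypothetical and should mirror whatever you documented in your baseline environment.

```python
# Hypothetical set of controlled variables recorded for every tracking run.
ENV_KEYS = ("prompt", "model", "region", "logged_in", "browser")


def changed_variables(previous: dict, current: dict) -> list[str]:
    """List the controlled variables that differ between two runs."""
    return [k for k in ENV_KEYS if previous.get(k) != current.get(k)]


def is_comparable(previous: dict, current: dict) -> bool:
    """Treat two runs as comparable when at most one variable changed."""
    return len(changed_variables(previous, current)) <= 1
```

When `is_comparable` returns `False`, note the changed variables in your sheet and avoid attributing that week’s visibility shift to any single cause.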

How to Improve Your Brand’s Perplexity Visibility Based on Tracking Data

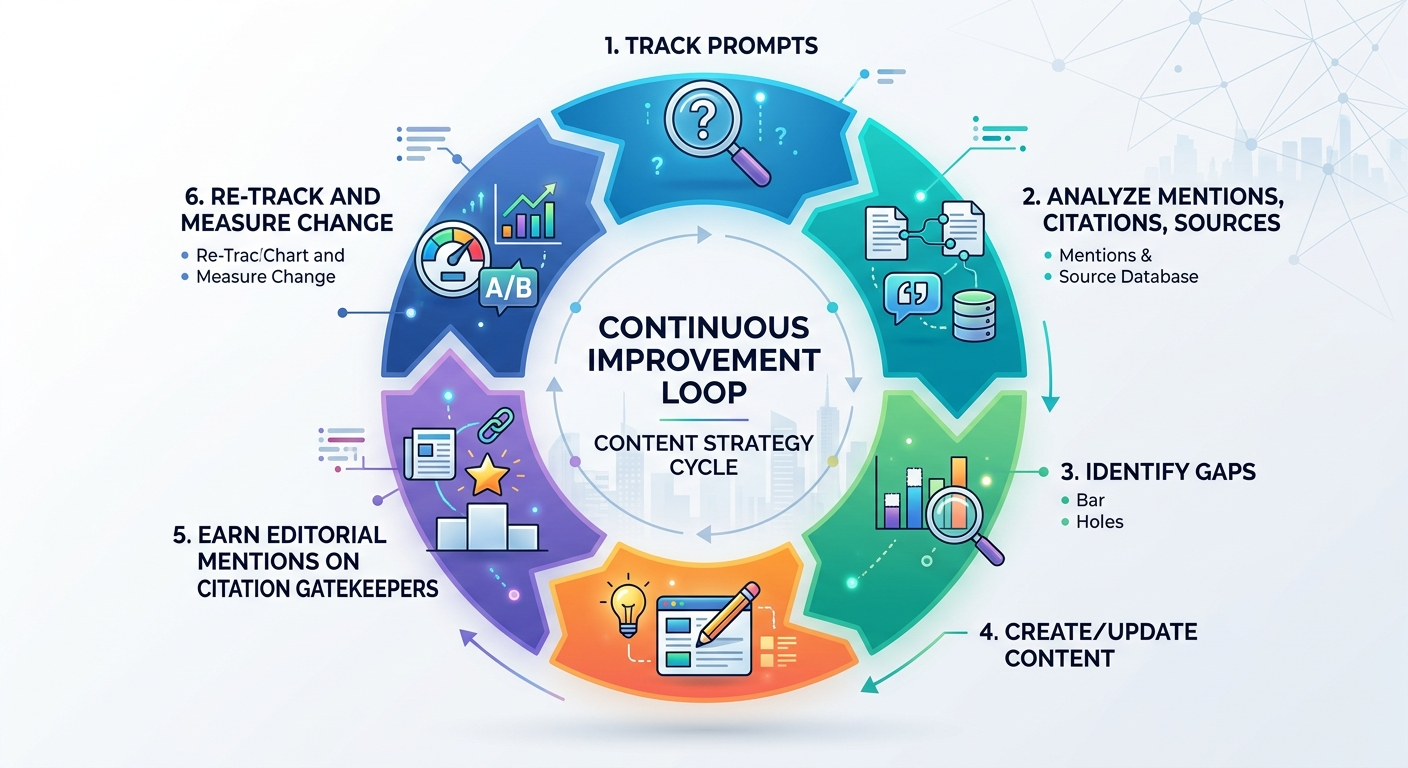

Tracking without action is just record-keeping. Here’s how to close the loop between measurement and improvement.

Create content structured for RAG extraction

Perplexity’s RAG system pulls from pages that make it easy to extract clear, verifiable claims. Structure your content with:

- Question-style headings that match how users phrase Perplexity queries

- Lead paragraphs that directly answer the heading’s question in 1–2 sentences

- Specific, sourced claims rather than vague generalities

- Tables, numbered lists, and comparison matrices that organize information cleanly

Pages designed this way are more likely to be selected as citation sources, because the RAG system can extract a clear, self-contained answer without needing to interpret dense paragraphs.

Build entity authority through consistent editorial mentions

If Perplexity doesn’t mention your brand at all, the issue is usually entity authority — the AI doesn’t have enough signals to associate your brand with a specific category or solution.

Entity authority builds when your brand appears consistently across high-authority editorial content, with the same name, positioning, and category associations. This is where brand mentions for SEO and AI visibility converge — the same editorial placements that strengthen your backlink profile also feed the citation graph that AI models rely on.

In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions on authoritative publications achieved AI recommendation rates 89% higher than those relying solely on traditional SEO.

Target the citation gatekeepers your tracking data reveals

Your source URL analysis (from the earlier section on source URLs) tells you exactly which publications and directories Perplexity trusts for your category. Prioritize earning coverage on those specific sources. A single mention on a site Perplexity already cites for your topic can shift your visibility faster than publishing five new blog posts on your own domain.

Keep cited pages fresh

Perplexity favors recently updated content. If your tracking shows a page was cited last month but dropped off this month, check whether a competitor published fresher content on the same topic. Update your page with current data, new examples, and a visible “last updated” date.

For a deeper look at the ongoing monitoring workflow, how to monitor Perplexity brand mentions covers the process in more detail.

Tracking Perplexity Alongside Other AI Platforms

Perplexity is one surface in a multi-platform AI search ecosystem. As of 2026, buyers use ChatGPT, Google Gemini, Claude, and Copilot alongside Perplexity — often comparing answers across platforms before making decisions.

Your tracking workflow should extend beyond a single platform when your resources allow. The prompts you build for Perplexity can be reused across ChatGPT and Gemini with minor adjustments. The metrics (mention rate, citation rate, share of voice) apply universally.

Key differences to account for:

- Perplexity cites sources with numbered references on every query. Citations are transparent and measurable.

- ChatGPT draws primarily from training data, with web browsing as a supplement. Mentions are conversational, not citation-linked. See how to check brand mentions in ChatGPT for platform-specific tracking.

- Google Gemini uses a hybrid of training data and live search. Citation behavior varies by query type. The Gemini tracking guide covers its nuances.

A cross-platform view reveals whether your brand’s AI visibility is consistent or fragmented. A brand that appears in Perplexity but not ChatGPT likely has strong web presence but weak training-data signals. A brand visible in ChatGPT but absent from Perplexity may have historical authority but lacks fresh, structured content. The AI visibility analytics tools overview covers platforms that consolidate multi-model tracking into a single workflow.

FAQ

Does Perplexity provide any native analytics for brands?

No. As of 2026, Perplexity does not offer a publisher dashboard, brand analytics, or any reporting on how frequently a domain appears in answers. All tracking must be done externally — either through manual prompt testing or third-party monitoring tools. This is a fundamental difference from Google, which provides Search Console data.

How often should I run Perplexity tracking?

Weekly cadence works well for most brands. This is frequent enough to detect trends without creating an unsustainable time commitment. If you’re running a major campaign or content push, increase to twice weekly for the 2–3 weeks following launch. Monthly tracking is too infrequent to catch fast-moving changes — Perplexity pulls live web data, so visibility can shift within days.

Can I track Perplexity visibility without any paid tools?

Yes. Manual tracking with a spreadsheet, incognito browser, and a structured prompt library costs nothing but your time. This approach works for up to 50 prompts at weekly cadence. Beyond that scale, the time investment typically justifies moving to an automated tool.

What makes a page more likely to be cited by Perplexity?

Perplexity favors pages with clear, direct answers to specific questions, structured formatting (headings, lists, tables), verifiable claims with source attribution, and recent publication or update dates. Domain authority and backlink quality also influence which sources Perplexity selects during its real-time retrieval process.

Is Perplexity tracking relevant for B2B brands specifically?

Particularly relevant. Perplexity’s user base skews toward researchers, technical professionals, and informed buyers — exactly the audience B2B brands need to reach during the vendor evaluation process. When a buyer asks Perplexity “best [category] tools for enterprise” and your brand doesn’t appear, you’ve lost a discovery opportunity that no amount of Google ranking can recover.

Your Next Step

Start with a focused prompt library of 25 queries mapped to your most revenue-relevant buyer questions. Run your first manual tracking session this week — incognito browser, structured spreadsheet, all three metrics (mentions, citations, links) recorded separately. After four weeks of consistent data, you’ll have a clear picture of where your brand stands in Perplexity and what to improve first.

If you want to understand where your brand currently appears — or doesn’t — across Perplexity and other AI platforms, get a free AI visibility audit to see the gaps and opportunities.