Your hospital system buyer just asked ChatGPT which remote patient monitoring vendors fit a 200-bed community hospital. Three names came back. Yours wasn’t one of them. Your competitor (smaller, less funded, with a worse product) was named first. That’s the AI visibility gap, and for healthtech companies it’s already shaping pipeline decisions before a single sales call happens. This playbook covers what works in 2026: how to earn citations from the publications AI models trust, how to stay inside HIPAA and FDA boundaries while doing it, and how to measure whether any of it is moving your numbers.

What You’ll Learn

- Why healthtech buyers (hospital systems, payers, investors) now use AI assistants before vendor calls

- The three publication tiers AI models actually pull from for healthcare recommendations

- How to build a compliance-safe claim matrix that protects you across FDA, HIPAA, and state regulators

- A 90-day execution plan with specific milestones for citation density and AI mention rate

- The metrics that connect AI visibility to qualified pipeline (and the ones that don’t)

Why Healthtech Buyers Reach for AI Before They Reach for You

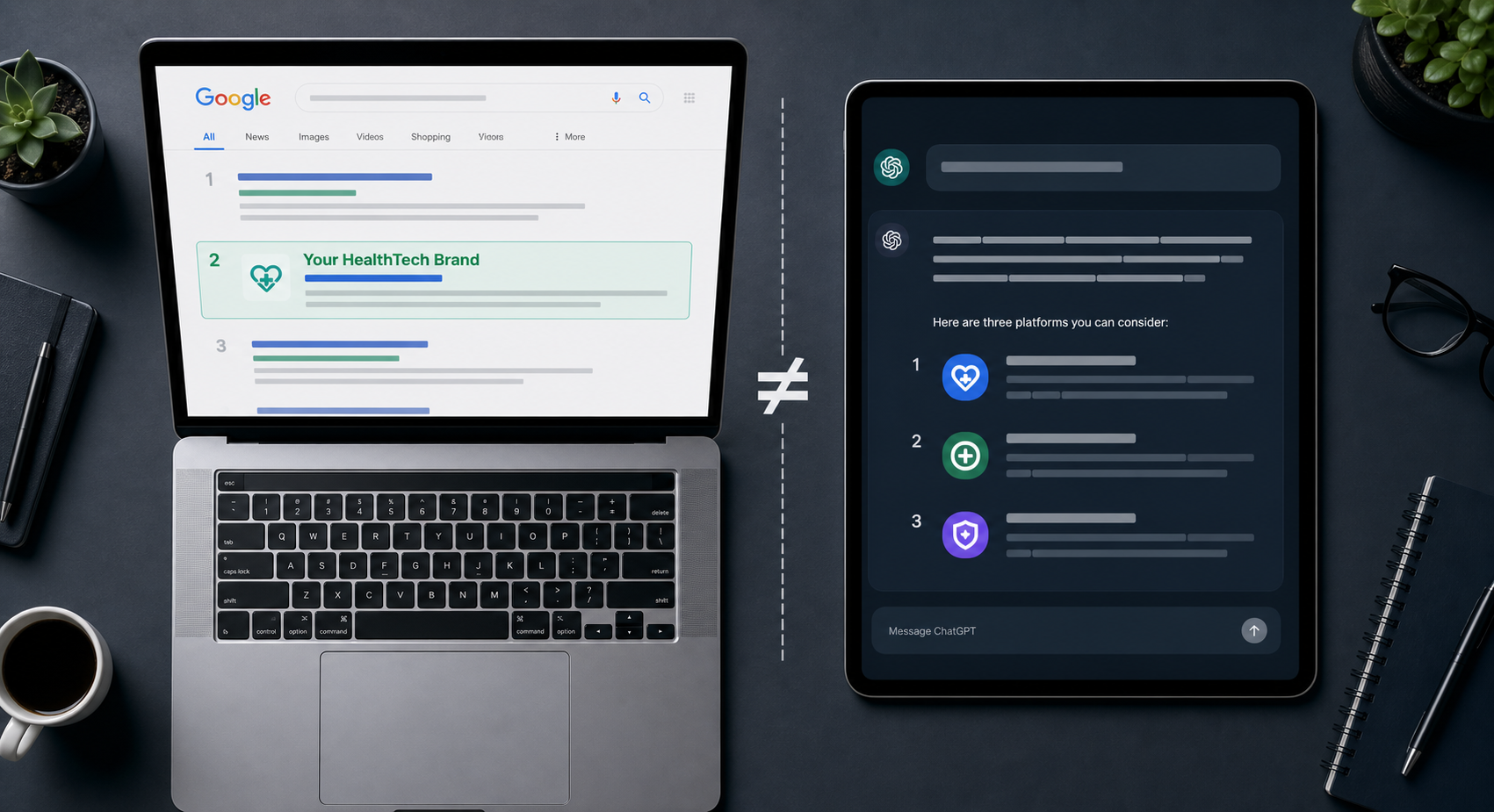

Hospital procurement teams aren’t searching Google the way they did in 2023. A VP of clinical operations evaluating remote monitoring vendors will ask Perplexity for a shortlist, cross-check Claude on integration risk with Epic, then send the names that survive both passes to their CIO. By the time a vendor lands a discovery call, the AI has already shaped the consideration set.

This shift hits healthtech harder than other categories for three reasons. Sales cycles are long, so any influence at the awareness stage compounds across months of consideration. Buyers are risk-averse, so anything that signals credibility, or its absence, gets disproportionate weight. And category language is technical, so AI models have to work harder to disambiguate vendors, which makes citation signals decisive.

Healthtech companies that show up early in AI responses get a structural advantage that’s hard to claw back later. The ones that don’t appear at all aren’t being rejected; they’re being filtered out before the buyer ever sees them.

What Changed Between 2024 and 2026

Two years ago, getting cited by ChatGPT was a curiosity metric. Today it’s a leading indicator of pipeline. AI assistants now handle a meaningful share of vendor research in B2B healthcare, and AI Overviews sit at the top of clinical and operational queries on Google. The buyers most likely to use AI tools first (younger clinical leaders, digital-native procurement teams, growth-stage health system VPs) are also the ones writing the next wave of vendor contracts.

If your visibility strategy is still organized around keyword rankings and gated whitepapers, you’re optimizing for a layer of the funnel that fewer buyers touch each quarter.

How AI Models Actually Decide Which Healthtech Brands to Name

AI assistants don’t pick vendors randomly. They lean on patterns from training data and real-time retrieval, and in healthcare those patterns favor entities with three traits: clear category positioning across multiple credible sources, consistent naming across the web, and absence of trust-damaging signals like FDA warning letters or unresolved data breach coverage.

The mechanic matters because it tells you what to fix. A healthtech company invisible in AI responses usually has one of these problems:

- Citation thinness: the brand appears in its own marketing content but rarely in third-party editorial coverage

- Entity fragmentation: multiple product names, an acquisition that changed the parent company, or a domain migration that scrambled the brand graph

- Category ambiguity: the company describes itself in language no buyer or analyst uses, so AI can’t confidently slot it into a recommendation

- Trust gaps: old negative coverage that AI still surfaces, or a complete absence of credibility signals like clinical study citations and SOC 2 verification

Fix the inputs and citations follow. The healthtech brands ChatGPT names confidently in 2026 are almost always brands that built consistent third-party presence months earlier.

The Three Publication Tiers That Drive Healthtech Citations

Not every publication is equal in AI training data, and in healthcare the weighting is sharper than in other verticals. Generic high-DA business sites help, but they don’t carry the same signal as a specialty trade publication or a peer-reviewed clinical outlet. Your earned media plan should hit three lanes deliberately.

Tier 1: Healthcare-Native Trade Publications

This is the highest-leverage tier for healthtech specifically. Outlets like Fierce Healthcare, MedCity News, Healthcare IT News, STAT News, Modern Healthcare, and Becker’s Hospital Review carry disproportionate weight because AI models have learned to associate them with credible healthcare commentary. A single thoughtful contributed piece in MedCity News on RPM reimbursement trends will move citation density faster than five business-press features.

Pitch angles that work: category analysis, regulatory commentary, integration architecture explainers, market sizing pieces grounded in real data. Pitch angles that don’t: product announcements, funding press releases without strategic context, generic “AI in healthcare” thought leadership.

Tier 2: Digital Health and Healthtech-Adjacent Outlets

Rock Health, Second Opinion, Bessemer’s State of Healthtech, and category-specific newsletters cover the operational and investment side of healthtech. These outlets reach the buyers and capital allocators most likely to be using AI tools for early diligence. Coverage here builds the layer of context AI models need to confidently slot you into a recommendation.

Tier 3: General Business and Tech Press

Forbes, Fast Company, TechCrunch, Bloomberg, and the Wall Street Journal still matter, but for healthtech they’re amplifiers, not primary signals. A Forbes feature without supporting coverage in healthcare-native outlets reads as PR-driven and gets weighted accordingly. Treat Tier 3 as a layer that compounds the work done in Tiers 1 and 2.

| Tier | Example Outlets | Citation Weight | Best For |
|---|---|---|---|
| Tier 1: Healthcare Trade | Fierce Healthcare, MedCity News, STAT, Becker’s | Highest | Category authority, regulatory framing |
| Tier 2: Digital Health | Rock Health, Second Opinion, Bessemer reports | High | Buyer and investor visibility |
| Tier 3: General Business | Forbes, TechCrunch, Bloomberg, WSJ | Medium (as amplifier) | Cross-domain credibility |

The companies winning AI visibility in healthtech aren’t just chasing logos. They’re building presence across all three lanes on a quarterly rhythm so AI models keep encountering them in different contexts.

Building a Compliance-Safe Claim Matrix

Here’s the problem most healthtech communications teams hit: the messaging that wins citations is often the same messaging that gets you a regulatory letter. Aggressive clinical claims, outcome guarantees, or anything that drifts toward “this device cures X” creates exposure under FDA promotional rules and HIPAA, and with state attorneys general. Compliance-safe doesn’t mean boring; it means defensible.

Build a claim matrix before you pitch a single publication. The matrix is a single source of truth that legal, clinical, marketing, and external PR all work from. Every external statement maps to a row.

What Goes in the Matrix

- Approved claim: the exact language signed off by legal and clinical

- Evidence: the study, dataset, or operational metric backing the claim

- Boundary: what the claim explicitly does not say (e.g., “improves workflow efficiency” not “improves clinical outcomes”)

- Use cases: which channels and audiences the claim is approved for

- Owner: who can approve variants
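If the matrix lives in a spreadsheet, the same five fields can also be kept as structured records so a draft pitch can be checked against approved language before it goes out. The following is a minimal sketch, not a compliance tool: the `ClaimRow` and `find_approved` names, the channel labels, and the sample row are all hypothetical, and exact-match lookup is an assumption (real review of claim variants still goes through the row's owner).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClaimRow:
    """One row of the claim matrix. Field names are illustrative."""
    approved_claim: str    # exact language signed off by legal and clinical
    evidence: str          # study, dataset, or operational metric behind it
    boundary: str          # what the claim explicitly does not say
    use_cases: frozenset   # channels and audiences the claim is approved for
    owner: str             # who can approve variants


def _normalize(text):
    # Case-insensitive comparison with collapsed whitespace.
    return " ".join(text.lower().split())


def find_approved(matrix, statement, channel):
    """Return the matching row if the statement is approved for the channel, else None."""
    wanted = _normalize(statement)
    for row in matrix:
        if _normalize(row.approved_claim) == wanted and channel in row.use_cases:
            return row
    return None


# Hypothetical sample row for illustration only.
matrix = [
    ClaimRow(
        approved_claim="Improves workflow efficiency for nursing teams",
        evidence="Hypothetical internal time-and-motion dataset",
        boundary="Does not claim improved clinical outcomes",
        use_cases=frozenset({"trade_press", "website"}),
        owner="Hypothetical regulatory lead",
    ),
]

hit = find_approved(matrix, "improves workflow  efficiency for nursing teams", "trade_press")
miss = find_approved(matrix, "Improves clinical outcomes", "trade_press")
print(hit is not None, miss is None)
```

A statement that is not in the matrix, or is approved only for a different channel, returns `None`, which is the cue to route it to the owner rather than to publish it.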

Frame messaging around workflow, infrastructure, market dynamics, and integration architecture. These categories carry editorial value, get picked up by Tier 1 healthcare trade outlets, and stay clear of FDA promotional boundaries. Save clinical outcome claims for peer-reviewed publication and regulatory submissions where the proof bar is met properly.

The Sub-Vertical Problem

Compliance reality varies sharply across healthtech sub-verticals. A claim matrix for a clinical decision support vendor selling into hospital systems looks nothing like one for a wellness platform selling direct to consumers. Map your matrix to your actual regulatory profile:

- SaMD and medical devices: FDA promotional rules, predetermined change control plans for AI/ML

- Provider-facing SaaS: HIPAA, BAAs, security disclosure boundaries

- Payer technology: state insurance regulator language, anti-discrimination compliance

- Direct-to-consumer wellness: FTC substantiation rules, state consumer protection

- Clinical research and pharma-adjacent: promotional review, off-label discussion boundaries

The 90-Day Healthtech AI Visibility Plan

Citations compound. That’s the good news and the hard news. You can’t shortcut the timeline, but you can make every week count by sequencing the work correctly. Here’s the plan that’s worked for healthtech companies moving from invisible to consistently cited.

Days 1–30: Foundation

The first month is unglamorous and decisive. Skip it and the next 60 days don’t compound.

- Audit current AI visibility: run 30 buyer-shaped prompts across ChatGPT, Perplexity, Gemini, and Claude. Document where you appear, where competitors appear, and which sources are being cited

- Resolve entity fragmentation: one canonical company name, consistent product naming, clean Wikidata and Crunchbase entries, redirected legacy domains

- Build the claim matrix: get legal and clinical signed off before any external pitch goes out

- Map your tier-by-tier publication target list: 8 Tier 1 outlets, 5 Tier 2, 3 Tier 3 for the quarter

- Identify three subject-matter experts internally who can be credibly bylined

Days 31–60: Activation

Month two is when external work goes live. Pace matters more than volume.

- Publish or place 4–6 pieces across the three tiers, weighted toward Tier 1

- Pitch contributed commentary on a regulatory or category development happening in your space

- Submit to 2–3 industry awards or rankings that AI models actively reference (KLAS, HIMSS, Rock Health lists)

- Update your owned content layer: make sure your category positioning and proof points are crawlable, structured, and consistent with what you’re saying externally

- Re-run the prompt audit at day 45: most teams see the first detectable shifts in citation patterns by week 6

Days 61–90: Compound

Month three separates the teams who get results from the teams who quit at month two. Citations rarely move dramatically in 30 days. They move meaningfully across 90.

- Sustain Tier 1 placement cadence: at least 2 strong pieces per month

- Layer in research-driven content: a small original dataset, even 50 surveyed buyers, creates citation-worthy material

- Land a podcast or video appearance on a healthcare-native show

- Run the full prompt audit again: measure mention rate change, sentiment shift, and citation source overlap with competitors

- Build the next 90-day plan based on what’s converting and what isn’t

How to Measure Whether Any of This Is Working

The measurement problem in AI visibility is real. You can’t pull a clean attribution report from ChatGPT, and Perplexity’s citation logs don’t tie to your CRM. But that doesn’t mean the work is unmeasurable. It means you measure leading indicators rigorously and connect them to lagging pipeline metrics over a longer window.

Leading Indicators (Track Weekly)

- Mention rate: across a fixed prompt set of 30+ buyer-shaped queries, how often does your brand appear?

- Citation source overlap: which publications are AI models pulling from when answering category queries, and how many of those sources mention you?

- Position: when named, are you first, second, or last in the list?

- Sentiment: is the AI describing you accurately, or repeating outdated framing?

- Competitor delta: are you closing or widening the gap with the brands AI cites most often?
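Most of these indicators reduce to simple arithmetic once each audit run is logged in a consistent shape. Here is a rough sketch of that arithmetic, assuming you record, per prompt, the ordered list of brands the assistant named and the sources it cited. The record layout, the function names, and the sample data ("OurBrand", "RivalCo") are assumptions for illustration; sentiment is left out because it needs human review.

```python
from statistics import mean

# One record per prompt in the fixed prompt set. Made-up illustration data.
audit = [
    {"prompt": "p1", "brands": ["RivalCo", "OurBrand"], "sources": ["medcitynews.com"]},
    {"prompt": "p2", "brands": ["RivalCo"], "sources": ["fiercehealthcare.com"]},
    {"prompt": "p3", "brands": ["OurBrand", "RivalCo"], "sources": ["medcitynews.com", "statnews.com"]},
    {"prompt": "p4", "brands": [], "sources": []},
]


def mention_rate(records, brand):
    """Share of prompts in which the brand was named at all."""
    return sum(brand in r["brands"] for r in records) / len(records)


def average_position(records, brand):
    """Mean 1-based list position over prompts where the brand appears, or None."""
    positions = [r["brands"].index(brand) + 1 for r in records if brand in r["brands"]]
    return mean(positions) if positions else None


def source_overlap(records, sources_mentioning_brand):
    """Fraction of distinct cited sources that also mention the brand."""
    cited = {s for r in records for s in r["sources"]}
    return len(cited & set(sources_mentioning_brand)) / len(cited) if cited else 0.0


def competitor_delta(records, brand, competitor):
    """Brand mention rate minus competitor mention rate (negative means you trail)."""
    return mention_rate(records, brand) - mention_rate(records, competitor)


print(mention_rate(audit, "OurBrand"))                   # 0.5
print(average_position(audit, "OurBrand"))               # 1.5
print(competitor_delta(audit, "OurBrand", "RivalCo"))    # -0.25
print(round(source_overlap(audit, {"medcitynews.com"}), 2))  # 0.33
```

Keeping the prompt set fixed between runs is what makes week-over-week numbers comparable; changing prompts mid-quarter resets the baseline.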

Lagging Indicators (Track Monthly)

- Branded search volume: buyers who first encountered you through AI often come back via direct branded search

- Inbound qualified pipeline tagged “AI-influenced”: add a single question to demo request forms: “Where did you first hear about us?”

- Sales call mentions: track how often prospects say “ChatGPT” or “Perplexity” unprompted in discovery calls

- Citation density on your owned brand graph: how many high-quality third-party mentions of your brand exist now, versus three months ago?
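The “AI-influenced” tag can start as a simple keyword match over free-text form answers or call notes. The sketch below assumes a short list of assistant names and plain-string inputs; the `AI_TOOLS` list, function names, and sample responses are hypothetical. It will produce false positives (a colleague named Claude, for instance), so treat the output as a first pass to spot-check, not a final count.

```python
import re

# Assistant names to look for; an assumed starting list, extend as needed.
AI_TOOLS = ("chatgpt", "perplexity", "gemini", "claude")
_PATTERN = re.compile(r"\b(" + "|".join(AI_TOOLS) + r")\b", re.IGNORECASE)


def is_ai_influenced(answer):
    """True if the free-text answer names one of the listed assistants."""
    return bool(_PATTERN.search(answer))


def ai_influenced_share(answers):
    """Fraction of answers that mention an AI assistant (0.0 for an empty list)."""
    if not answers:
        return 0.0
    return sum(is_ai_influenced(a) for a in answers) / len(answers)


# Made-up answers to "Where did you first hear about us?"
responses = [
    "Asked ChatGPT for RPM vendors",
    "Colleague referral",
    "Saw you in a Perplexity answer",
    "Conference booth",
]

print(ai_influenced_share(responses))  # 0.5
```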

The honest version: AI visibility is a brand investment with a delayed conversion signature. The teams that report cleanly on it are the ones that decided in advance which leading indicators they trust, then watched lagging indicators move in the same direction over a quarter or two. If you wait for a perfect attribution model before investing, your competitors will lock in citation positions you’ll spend a year trying to dislodge.

The Mistakes Healthtech Teams Keep Making

A few patterns show up often enough that they’re worth flagging directly.

Treating AI visibility as a content marketing problem. It isn’t. Content marketing builds owned-media depth. AI visibility is mostly an earned-media and entity-clarity problem. Publishing more on your own blog won’t change what ChatGPT says about you.

Pitching the same product story to every tier. The pitch that lands in Forbes won’t land in MedCity News. Tier 1 healthcare outlets want category insight, not founder profiles. Customize per tier or skip the pitch.

Quitting at week 8. The single most common failure mode. Citation patterns shift on a 60–90 day lag. Teams that pull the plug at the end of month two never see the curve they were paying for.

Ignoring entity hygiene. If your acquisition history, product naming, or domain structure confuses AI models, all the earned media in the world won’t help. Fix the entity layer first.

Letting compliance become an excuse. “We can’t say anything externally” is rarely true once a real claim matrix exists. The teams that say it usually haven’t built one.

Frequently Asked Questions

How long does it take to see AI visibility results for a healthtech company?

Detectable shifts in mention rate typically show up between weeks 6 and 10. Meaningful, sustained citation presence usually takes 90 to 180 days, depending on how thin your starting baseline was. Healthtech moves slightly slower than other B2B verticals because AI models weight healthcare-trade publications heavily, and those outlets have longer editorial cycles.

Can healthtech companies do AI visibility work without compliance risk?

Yes, with a working claim matrix in place. The risk isn’t AI visibility itself; it’s making promotional claims that drift outside legal, clinical, or regulatory boundaries. Frame messaging around workflow, infrastructure, market dynamics, and category insight rather than clinical outcomes, and the work stays defensible.

Which AI assistants matter most for healthtech buyers?

ChatGPT and Perplexity dominate early-stage vendor research in B2B healthcare. Gemini matters for queries that surface in Google AI Overviews. Claude is gaining ground with technical and clinical leaders evaluating integration risk. Track all four: the signals diverge enough that any single platform misses real movement.

What’s the difference between AI visibility and SEO for healthtech?

SEO optimizes pages to rank in search results. AI visibility optimizes the entity and citation graph so AI models confidently name your brand in generated answers. The work overlaps (both reward credible third-party coverage), but the tactics diverge. SEO rewards on-page optimization and link velocity. AI visibility rewards consistent presence in the publications AI models were trained on.

Do small healthtech startups have any chance against incumbents in AI visibility?

Yes, and often more than they have in traditional SEO. Incumbent visibility in AI is anchored to specific citation sources, not domain authority alone. A focused 90-day campaign in healthcare-native trade publications can shift a startup’s mention rate dramatically because the citation graph in healthtech is narrower and more discoverable than the broader B2B SaaS landscape.

How does HIPAA affect AI visibility work?

HIPAA doesn’t restrict your ability to publish category commentary, market analysis, or product positioning. It restricts how protected health information (PHI) is used and disclosed, so the exposure sits in patient-identifying details (case studies, testimonials) and in what you say about work covered by a BAA. Earned media built around workflow, integration architecture, and operational metrics stays well clear of HIPAA boundaries. The teams that struggle here usually conflate HIPAA with general communications caution.

What’s the right budget for healthtech AI visibility in 2026?

Most healthtech companies serious about this commit between $8K and $25K monthly across PR, content, and earned media work, depending on category competitiveness and the cost of in-house clinical SME time. Lower than that, and you can’t sustain the cadence required for citation patterns to compound. Higher than that usually means the team is buying broader brand strategy work rather than AI visibility specifically.

The Citation Gap Closes Faster Than You Think

The healthtech companies that AI assistants confidently recommend in 2026 aren’t the ones with the biggest marketing budgets. They’re the ones that recognized 18 months ago that AI search would reshape vendor consideration, built the entity and citation foundation early, and kept showing up across the publications AI models actually trust. That window is still open. It won’t be in 12 months.

Run the prompt audit this week. Ask ChatGPT, Perplexity, and Gemini the five most important questions a hospital VP, payer executive, or health system CIO would ask when shortlisting vendors in your category. Document which brands get named, which sources get cited, and where you sit. That single hour tells you whether the rest of this playbook is urgent or routine for your team. For most healthtech companies, the answer is urgent.

If you want a deeper read on related territory, the AI Visibility for B2B SaaS playbook covers the entity and citation mechanics that apply across categories, and the fintech version shows how regulated-industry teams handle the compliance-meets-visibility tension. For tactical work on tracking mentions across AI surfaces, see our guide on tracking brand mentions across AI search platforms.