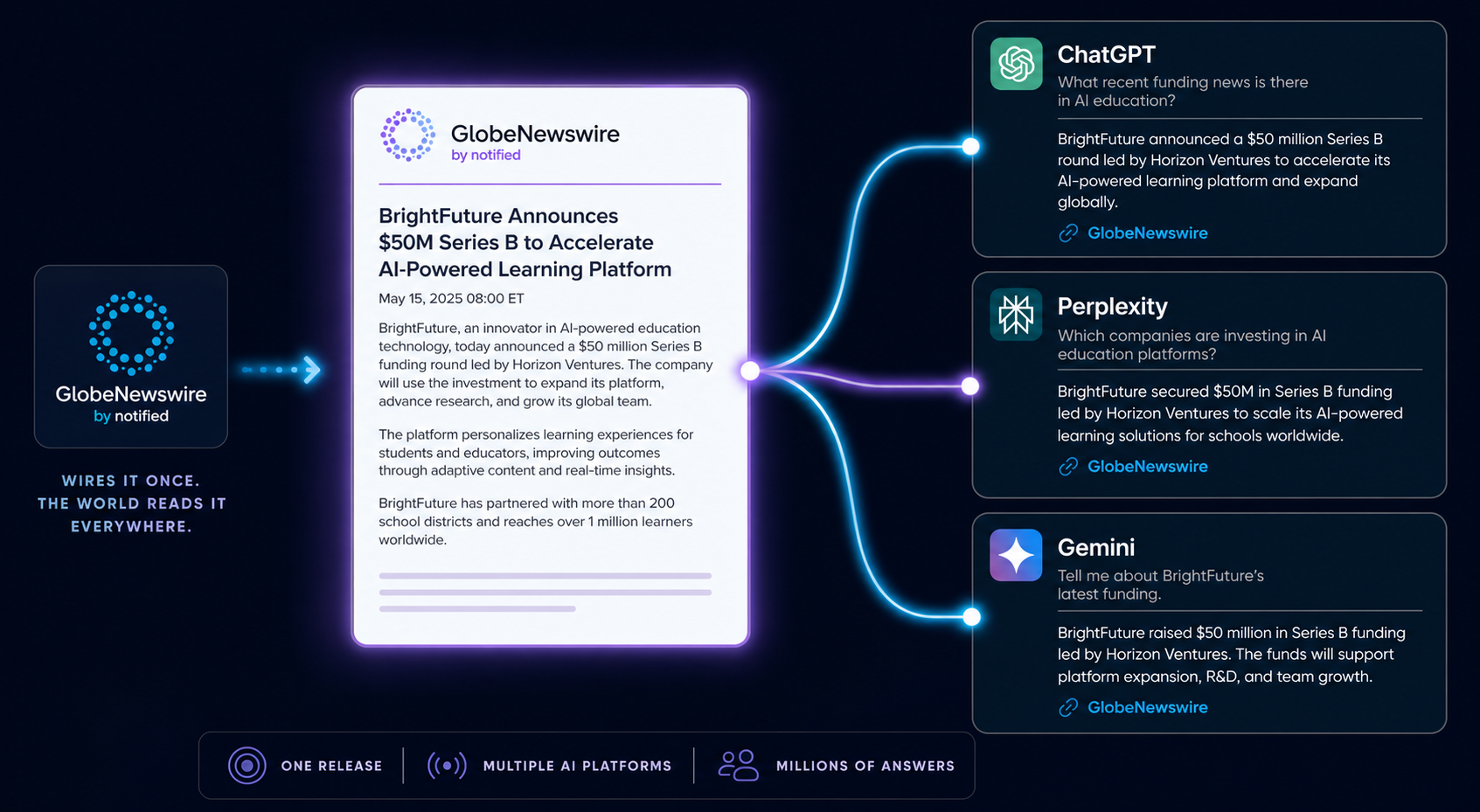

Quick answer: Most PR teams are still writing press releases for journalists who stopped reading them years ago. Meanwhile, ChatGPT, Perplexity, and Gemini are quietly pulling brand recommendations from wire content every single day, and the brands that figured this out are racking up citations while everyone else fights for the same three Forbes contributor slots. A press release strategy built for AI citations isn’t a rewrite of your existing template. It’s a different distribution model, a different writing standard, and a different definition of success.

Press release strategy for AI citations means writing, distributing, and structuring releases so large language models extract and cite them in generated answers, prioritizing wire services LLMs actively crawl, entity-rich opening paragraphs, verifiable data, and structured metadata over traditional journalist pickup metrics. The shift is real: Muck Rack’s Generative Pulse Report found that PR-driven content accounts for the overwhelming majority of citations across major AI engines, and wire syndication patterns directly correlate with which brands get pulled into AI answers.

What You’ll Learn

- Why press releases now earn AI citations faster than blog content or earned media in many B2B categories

- The five wire services LLMs actually index, and the ones AI engines mostly ignore

- A 9-element release structure designed for entity extraction, not journalist sentiment

- How to write the first 75–100 words so AI models pull your framing instead of paraphrasing it away

- A 12-week distribution cadence that compounds citation share across ChatGPT, Perplexity, and Gemini

- What to measure when “pickup” no longer means anything

Why Press Releases Quietly Became the Highest-ROI AI Citation Asset

Blog content takes 4–6 months to earn AI citations because LLMs need to encounter it, index it, and develop confidence in the source. Press releases distributed through major wire services skip most of that. Wire content gets syndicated to hundreds of newsrooms and aggregators within minutes, most of which AI crawlers already trust as canonical sources for company news, executive quotes, product launches, and verifiable data.

The result: a well-built release can appear in AI answers within weeks, not quarters. That’s not theoretical. In our work building citation profiles for B2B SaaS clients, releases distributed through GlobeNewswire and Business Wire consistently surface in Perplexity answers faster than equivalent blog content on the same topic, sometimes within 10 days of distribution.

Why? Three things AI models care about: source diversity (wire syndication creates dozens of canonical URLs), entity density (releases are built around named people, companies, dates, and figures), and structural predictability (LLMs know how to parse the inverted pyramid).

What Changed Since 2024

Two things. First, AI engines began weighting structured, machine-readable content far more heavily than long-form opinion pieces, and press releases are inherently more structured than blog posts. Second, the major wire services started shipping AI-readable metadata: schema markup, entity tagging, and clean newsroom URLs that crawlers can ingest without parsing through ad scripts and cookie banners.

The brands winning AI citations in 2026 noticed both shifts early. The ones still measuring releases by journalist pickup are watching their share of voice erode.

The Wire Services That LLMs Actually Index

Not all distribution is equal. AI models pull from wire services they can crawl, parse, and trust, and the gap between the top tier and the bottom tier is enormous. According to Muck Rack’s 2025 analysis of AI citation sources, GlobeNewswire, PR Newswire, and Business Wire account for the vast majority of wire-sourced citations across ChatGPT, Perplexity, and Gemini combined.

| Wire Service | AI Citation Strength | Best For |

|---|---|---|

| GlobeNewswire | Highest across Perplexity and ChatGPT | B2B SaaS, enterprise tech, public companies |

| PR Newswire (Cision) | Strong across all engines | Consumer brands, financial announcements |

| Business Wire | Strong, especially for financial filings | Earnings, M&A, regulated industries |

| EIN Presswire | Moderate, narrower syndication | SMB and regional reach |

| Free/cheap distribution sites | Negligible, often ignored or penalized | Skip these for AI visibility |

The pattern is clear. Pay for distribution that gets syndicated to outlets AI engines already trust. Cheap distribution buys you nothing, it can actually hurt by associating your brand with low-quality source clusters that AI models discount.

The SEO Hierarchy Doesn’t Map Cleanly Here

A wire service with a Domain Authority of 92 isn’t automatically a better AI citation source than one with a DA of 88. AI models weight different signals: how often the source appears in their training corpus, how structured the content is, and whether the syndication network includes outlets the model treats as authoritative for your category. For a fuller breakdown of how AI engines rank source authority, our guide to the tier-based publication hierarchy for AI citations covers the exact weighting model we use.

The 9-Element Release Structure Built for AI Extraction

The traditional inverted pyramid still works, but AI engines extract differently than journalists scan. They pull from specific structural positions: the opening paragraph, the first attributed quote, the boilerplate, and any clearly labeled data block. Build the release around what gets extracted.

Element 1: Entity-Rich Headline

Lead with the named entity, your brand, followed by the specific action and the measurable outcome. “Acme Inc. Launches AI Citation Tracker That Reduces Reporting Time 60%” extracts cleanly. “Game-Changing New Tool Revolutionizes Industry” doesn’t extract at all because there’s nothing for the model to grab.

Element 2: Dateline and Geographic Anchor

Standard wire dateline format. AI models use this to disambiguate company entities, particularly important if your brand name overlaps with other companies in different geographies.

Element 3: The First 75–100 Words (The AI Extraction Zone)

This is the most important real estate in the entire release. Most AI engines pull their framing of your announcement from these words. They should contain: the company name, the action, the specific outcome or data point, the timeframe, and the category context. Write it as if it’s the only paragraph that will ever be read, because for most AI citations, it is.

Element 4: Named-Executive Quote

Attribute every quote to a specific named person with a specific title. “John Chen, VP of Product at Acme” extracts as an entity. “A company spokesperson” extracts as nothing. AI models build executive thought-leadership entity profiles from attributed quotes, make sure yours are doing that work.

Element 5: Verifiable Data Block

One block of clearly labeled, specific numbers. Customers served, dollars raised, percentage improvements, dates. AI models weight verifiable claims far more heavily than promotional language. “Reduces processing time by 47%” is citable. “Dramatically improves efficiency” is not.

Element 6: Context Paragraph

Explain why this announcement matters within the broader category. This is where AI models pick up your category positioning, and it’s the section most teams skip or fill with corporate filler. Use it to plant the entity-category association you want LLMs to learn.

Element 7: Second Quote (External or Customer)

A second attributed quote from an analyst, customer, or partner. Adds source diversity within the release itself and gives AI engines a second named entity to associate with your story.

Element 8: Boilerplate

Standard company description. This is high-value extraction territory because AI models pull it for follow-up “what is [company]” queries. Update it quarterly. Include specific products, customer count, founding year, and headquarters, every concrete entity helps.

Element 9: Structured Metadata

Schema markup at the newsroom URL: NewsArticle schema, Organization schema, Person schema for executives quoted. Most wire services apply this automatically. If yours doesn’t, push for it or self-host the canonical version with proper markup.

How to Write the First 75 Words So AI Pulls Your Framing

The opening paragraph is the difference between AI citing your release the way you wrote it and AI paraphrasing it into something unrecognizable. Five rules:

- Lead with the named entity. “Acme Inc., a B2B citation tracking platform…” not “Today, a leading provider in the citation tracking space…”

- State the action in active voice. “Launched,” “released,” “raised,” “acquired.” Not “is pleased to announce.”

- Include one specific number in the first sentence. Dollars, percentages, dates, customer counts. Specific numbers anchor AI extraction.

- Name the category explicitly. “AI citation tracking,” “marketing analytics software,” “B2B SaaS.” Don’t make the model guess what category you’re in.

- Skip the adjectives. “Innovative,” “leading,” “modern,” “next-generation.” AI models discount promotional language and may strip your release of its framing entirely.

Here’s the difference in practice. Most releases open like this: “Acme is pleased to announce a groundbreaking new product that revolutionizes the way businesses approach AI visibility.” That extracts to nothing because there’s nothing extractable.

Rewritten for AI extraction: “Acme Inc., a B2B AI visibility platform, launched Citation Tracker on November 14, 2026, a tool that monitors brand mentions across ChatGPT, Perplexity, and Gemini and has reduced reporting time by 60% for early customers including three Fortune 500 SaaS companies.”

Same announcement. The second version contains nine extractable entities and three verifiable claims. The first contains zero of either.

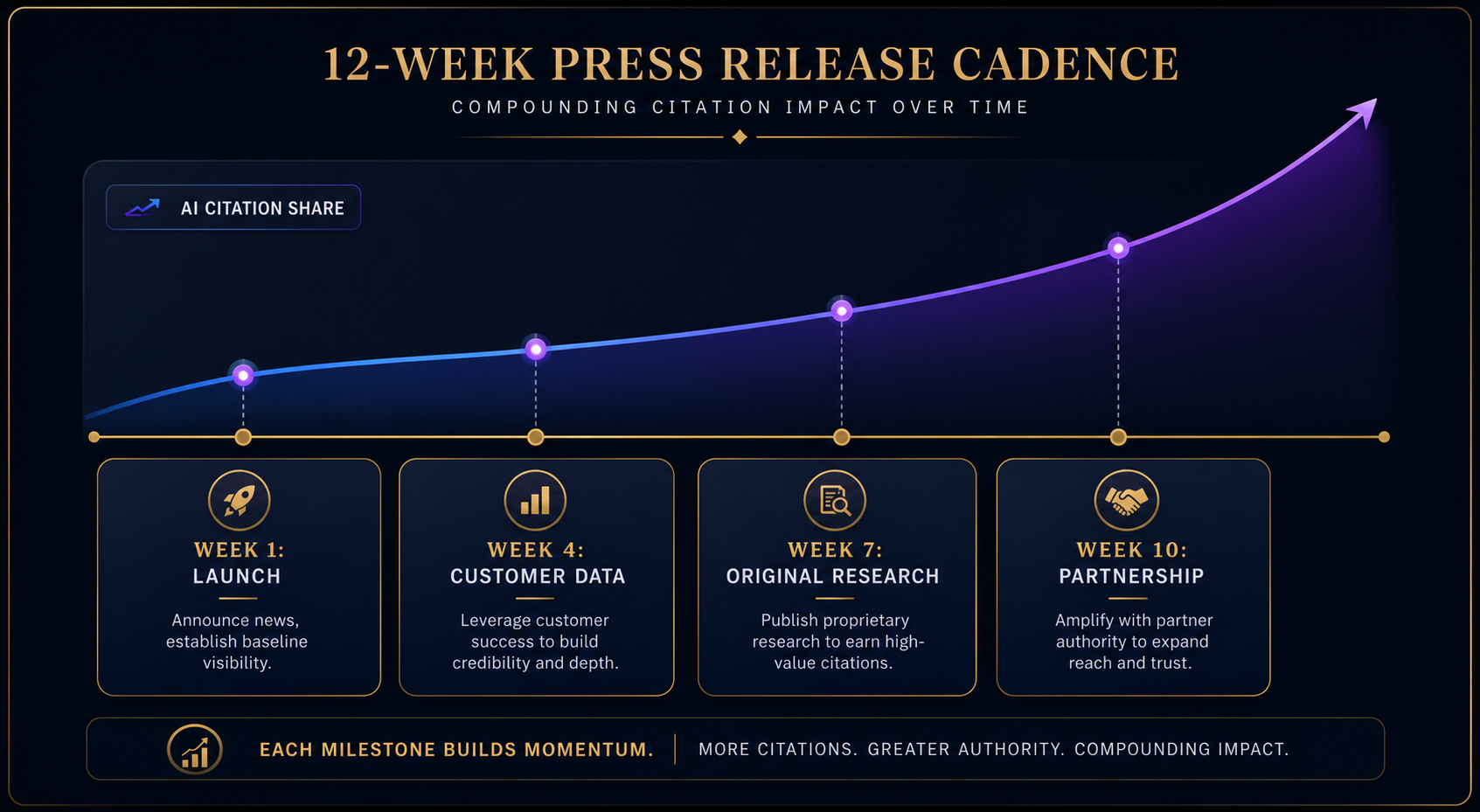

The 12-Week Distribution Cadence That Compounds

One press release won’t move AI citation share. A pattern of releases will. AI models build category associations through repeated exposure, the more often your brand appears alongside the right category and the right adjacent entities, the more likely you become the default recommendation.

The cadence we use with B2B clients runs in 12-week cycles, with four release types staggered for source diversity:

| Week | Release Type | What It Builds |

|---|---|---|

| Week 1 | Product or feature launch | Category-action association |

| Week 4 | Customer data or case study release | Verifiable outcome claims |

| Week 7 | Original research or industry data | Authority and citation density |

| Week 10 | Partnership, integration, or milestone | Adjacent-entity associations |

Four releases a quarter. Each one entity-rich, data-anchored, and distributed through a tier-one wire. By month four, you’ll start seeing the brand appear in Perplexity answers it didn’t appear in before. By month seven, ChatGPT begins surfacing the brand for category queries. By month twelve, you’ve built compound citation share that competitors can’t match without their own 12-month head start.

The Mistake That Kills the Cadence

Skipping the research release. Most teams default to product launches and customer wins, both useful, both common. Original research is what differentiates. A short data report based on your own customer base, your own platform metrics, or a small survey gives AI models something to cite that no competitor can claim. It’s also the release most likely to get picked up by trade publications, which compounds the AI signal further.

What to Measure When “Pickup” Doesn’t Matter Anymore

Journalist pickup was always a flawed metric. For AI citation strategy, it’s the wrong metric entirely. Here’s what to measure instead.

AI Citation Frequency by Engine

Track how often your brand appears in generated answers across ChatGPT, Perplexity, Gemini, and Google AI Overviews, for both branded queries and category queries. Branded queries tell you whether the model knows your brand. Category queries tell you whether you’re a default recommendation. The gap between the two is your real opportunity. For deeper measurement methodology, see our guide on how to track brand mentions in AI search results.

Syndication Depth

How many unique URLs does each release generate across the wire’s syndication network? GlobeNewswire’s full network can produce 200+ canonical URLs from one release. Each one is a separate signal AI crawlers can encounter.

Entity Co-Occurrence

Which adjacent entities is your brand appearing alongside in AI answers? Competitors, categories, technologies, executives. This tells you what category associations the models are building, and whether they match what you’re trying to build.

Citation Quality

When AI engines cite your release, do they pull your framing or paraphrase it into something generic? Quality citations preserve specific numbers, named executives, and category positioning. Low-quality citations strip everything down to “Acme is a company that does things.” If you’re seeing the second pattern, the first 75 words of your releases need rewriting.

Time-to-Citation

How long between distribution and first AI citation? Six weeks is healthy. Twelve weeks suggests distribution problems. Two weeks suggests your releases are doing exactly what they should.

Where Most PR Teams Go Wrong

The pattern we see most often isn’t strategic failure, it’s tactical drift. Teams that started with a sound AI citation strategy gradually slip back into journalist-pickup habits. The release gets longer. The lead gets fluffier. The distribution gets cheaper. The cadence gets inconsistent. Within two quarters, the citation gains evaporate.

Three guardrails keep the strategy intact:

- Hard rule on the first 75 words. Every release passes the extraction test before it ships. If a teammate can’t list five concrete entities and one verifiable claim from the opening paragraph, the release isn’t ready.

- Distribution discipline. Premium wire only, every time. The cost savings from downgrading to cheap distribution always exceed the citation losses by a wide margin.

- Quarterly research release is non-negotiable. Skip it once and the compound effect breaks. Even a small data report, 200 customers surveyed, 50 internal benchmarks, works.

The teams that hold these three lines see AI citation share grow quarter over quarter. The teams that don’t end up wondering why their competitor with worse content shows up in ChatGPT and they don’t.

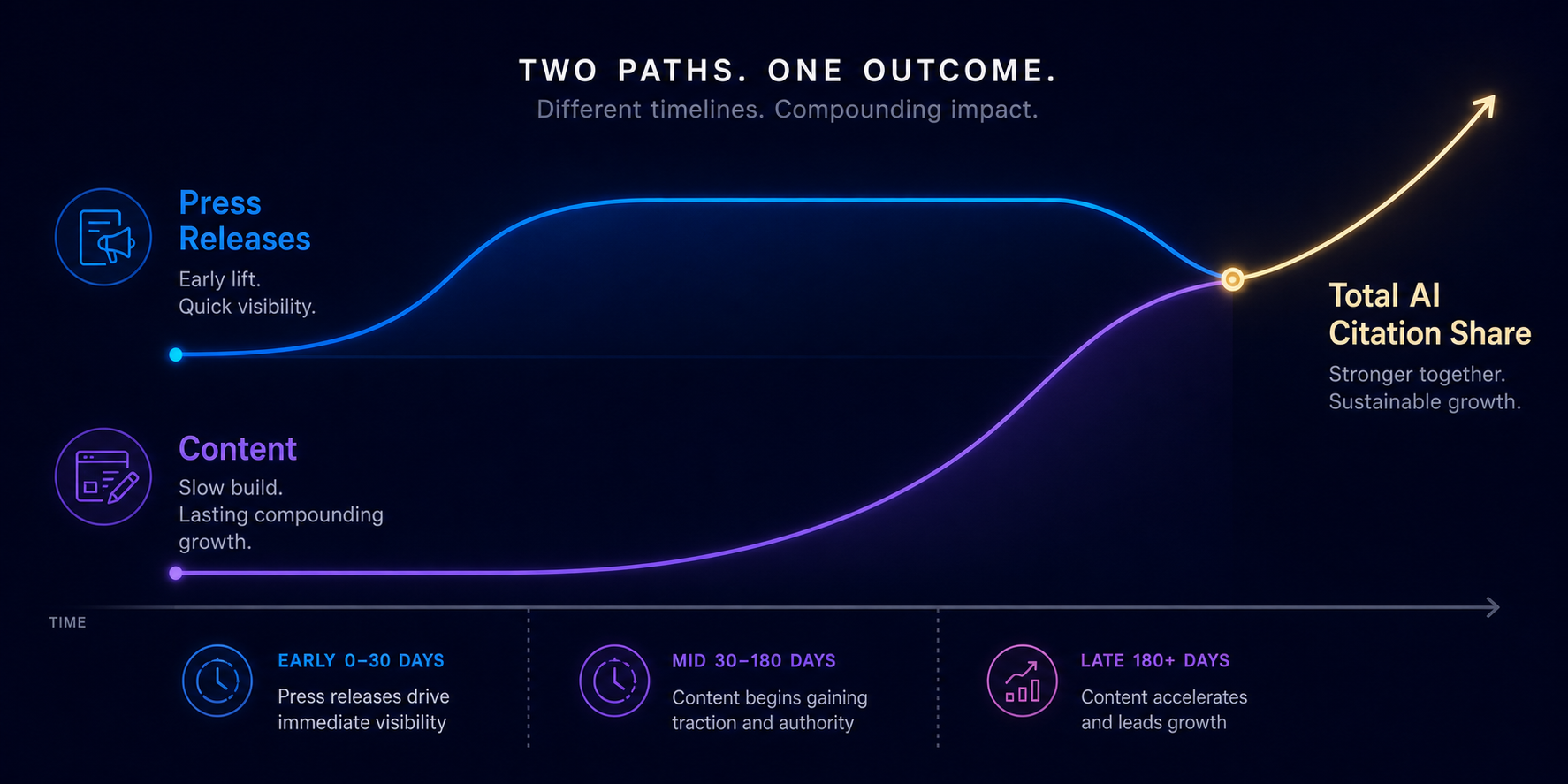

Press Release Strategy vs. Content Strategy for AI Citations

These aren’t substitutes. They’re complements that move on different timelines. Press releases drive citation share fast, within weeks of distribution. Content drives durable category authority over months and quarters. The brands winning AI visibility in 2026 are running both: a 12-week wire-distribution cadence for momentum, and a parallel content engine building topical depth.

If you’re starting from zero, lead with releases. They produce the fastest signal AI engines can detect, and they create the entity scaffolding that makes your blog content more citable when it’s eventually indexed. If you have an active content engine but no PR cadence, you’re leaving the fastest channel on the table. For a broader view of how content and PR fit together, our take on how to increase brand mentions in AI search covers the full visibility stack.

Frequently Asked Questions

How often do AI engines actually cite press releases?

Frequently, and increasingly. Muck Rack’s 2025 Generative Pulse Report found that PR-driven content accounts for the overwhelming majority of citations across major AI engines. The pattern is most pronounced for B2B company queries, executive thought leadership, and category-specific recommendations, where wire content provides the structured, verifiable claims AI models prefer to cite.

Which wire service is best for AI citation visibility?

GlobeNewswire consistently shows the strongest AI citation share across Perplexity and ChatGPT, with PR Newswire and Business Wire close behind. The exact best choice depends on your category, financial announcements favor Business Wire, consumer brands often see better results from PR Newswire, and B2B tech tends to perform strongest on GlobeNewswire. Cheap or free distribution sites produce negligible AI citation lift and shouldn’t be part of an AI-focused strategy.

How long until a press release shows up in AI answers?

Two to six weeks for the fastest engines, typically Perplexity, which actively retrieves recent content. ChatGPT and Gemini are slower because they rely more heavily on training data cycles, though both have added retrieval layers that pull recent wire content. If you haven’t seen any citation movement 12 weeks after distribution, the issue is usually structural: weak opening paragraph, low-quality wire service, or insufficient entity density in the release.

Do I need to write different press releases for AI than for journalists?

Not entirely, but the priorities shift. A release optimized for AI extraction is more entity-dense, more numerically specific, and less reliant on narrative framing than a release optimized for journalist pickup. The good news: AI-optimized releases tend to perform better with journalists too, because journalists also want specific names, specific numbers, and clear category positioning. The release that extracts well for ChatGPT usually reads well for a reporter on deadline.

Can press release strategy alone build AI visibility, or do I also need content?

Press releases alone can produce measurable AI citation share within a quarter, but durable category authority requires both releases and content. Releases create the entity scaffolding, the names, dates, numbers, and category associations AI models latch onto. Content builds the topical depth that makes your brand the default recommendation for broader category queries. Run both for the strongest results.

What’s the minimum budget to make this strategy work?

One premium wire distribution per quarter, typically $1,000–$2,500 per release through GlobeNewswire or PR Newswire at the geographic and category targeting levels that matter. Four releases a year through a tier-one wire is the floor for meaningful AI citation lift. Below that, you can’t generate enough source diversity or entity repetition for AI models to build strong category associations.

How do I measure if this is actually working?

Track AI citation frequency by engine for both branded and category queries, syndication depth per release, entity co-occurrence patterns in generated answers, and time-to-first-citation after distribution. The most diagnostic metric is the gap between branded citation rate (which tells you the model knows your brand) and category citation rate (which tells you the model recommends your brand). Closing that gap is the goal.

Build the Cadence Before Competitors Notice the Channel

Press releases became the highest-ROI AI citation channel almost by accident, the format that was already structured, entity-rich, and wire-syndicated happened to be exactly what LLMs prefer to extract from. Most PR teams haven’t caught up yet. That window won’t stay open. The brands locking in 12-week cadences through premium wires in 2026 will own category citation share that takes competitors years to displace. Start with one release built around the 9-element structure, distribute it through a tier-one wire, and measure citation lift across ChatGPT and Perplexity over the next 6 weeks. The signal will tell you whether to scale.

Want a deeper look at where AI engines pull citations from beyond the wire? Our guide on how AI crawlers actually pick sources breaks down the full source-weighting model.