Most brands chasing AI citations are pitching the wrong publications. They’re going after high-DA generalist sites because that’s what traditional SEO taught them, and they’re getting ignored by ChatGPT, Perplexity, and Gemini anyway. The publications that actually drive AI citations sit in a hierarchy, and most B2B teams have zero presence on the tiers that matter. A tier-based publication hierarchy for AI citations ranks publications by how likely AI models are to pull from them, so you can stop wasting cycles on outlets that don’t move the needle.

This guide breaks down the four-tier model we use to prioritize earned media for AI visibility, what each tier does, how to qualify a publication into a tier, and where to start if your brand has zero AI citations today.

What You’ll Learn

- The four-tier publication model and what each tier contributes to AI citation likelihood

- Why domain authority alone is a weak predictor, and what to use instead

- How to qualify a publication into a tier in under 10 minutes

- Which tiers ChatGPT, Perplexity, Gemini, and Google AI Overviews pull from most

- A sequencing playbook for brands starting from zero

Why Domain Authority Stopped Predicting AI Citations

For 15 years, SEO teams ranked publications by domain authority. Higher DA, better link, done. That math broke the moment AI models started selecting sources based on training data composition, retrieval indexes, and citation patterns, not link equity.

A 2025 Ahrefs analysis found that 38% of AI Overview citations come from pages ranking in Google’s top 10, meaning 62% come from somewhere else entirely: Reddit threads, niche trade publications, documentation pages, community wikis. These are pages that traditional SEO scoring would deprioritize.

The reason: AI models build their citation behavior from three overlapping signals: what was in their training corpus, what their retrieval index surfaces in real time, and what their grounding layer treats as authoritative for a given query type. Domain authority influences one of those signals weakly. Topical relevance and entity association influence all three.

So the question isn’t “what’s the DA of this publication?” It’s “does this publication appear in the source pool AI models draw from for queries in my category?” That question requires a different framework: a hierarchy built around AI behavior, not link metrics.

The Four-Tier Model

The hierarchy groups publications into four tiers based on how AI models treat them as sources. Each tier plays a distinct role. You don’t pick one and skip the others. You build presence across the stack, weighted toward the tiers that match your category.

Tier 1: Reference and Wire

Wikipedia, major reference databases, wire services (Reuters, AP, Bloomberg), and structured data sources (Crunchbase, Wikidata, official registries). These are the publications AI models treat as ground truth. When a model needs to verify that a company exists, what it does, who founded it, and what category it’s in, this tier supplies the answer.

Tier 1 isn’t where you pitch product features. It’s where your entity gets defined. A Wikipedia page with proper categorization, a Crunchbase profile with accurate funding and category data, a Bloomberg or Reuters mention that anchors your company description: these are the load-bearing references that downstream AI citations build on.

Most B2B brands don’t qualify for Wikipedia on day one. That’s fine. The work starts with Crunchbase accuracy, Wikidata entity creation, and earning wire-service coverage that gets syndicated into reference databases.

Tier 2: Editorial Authority Outlets

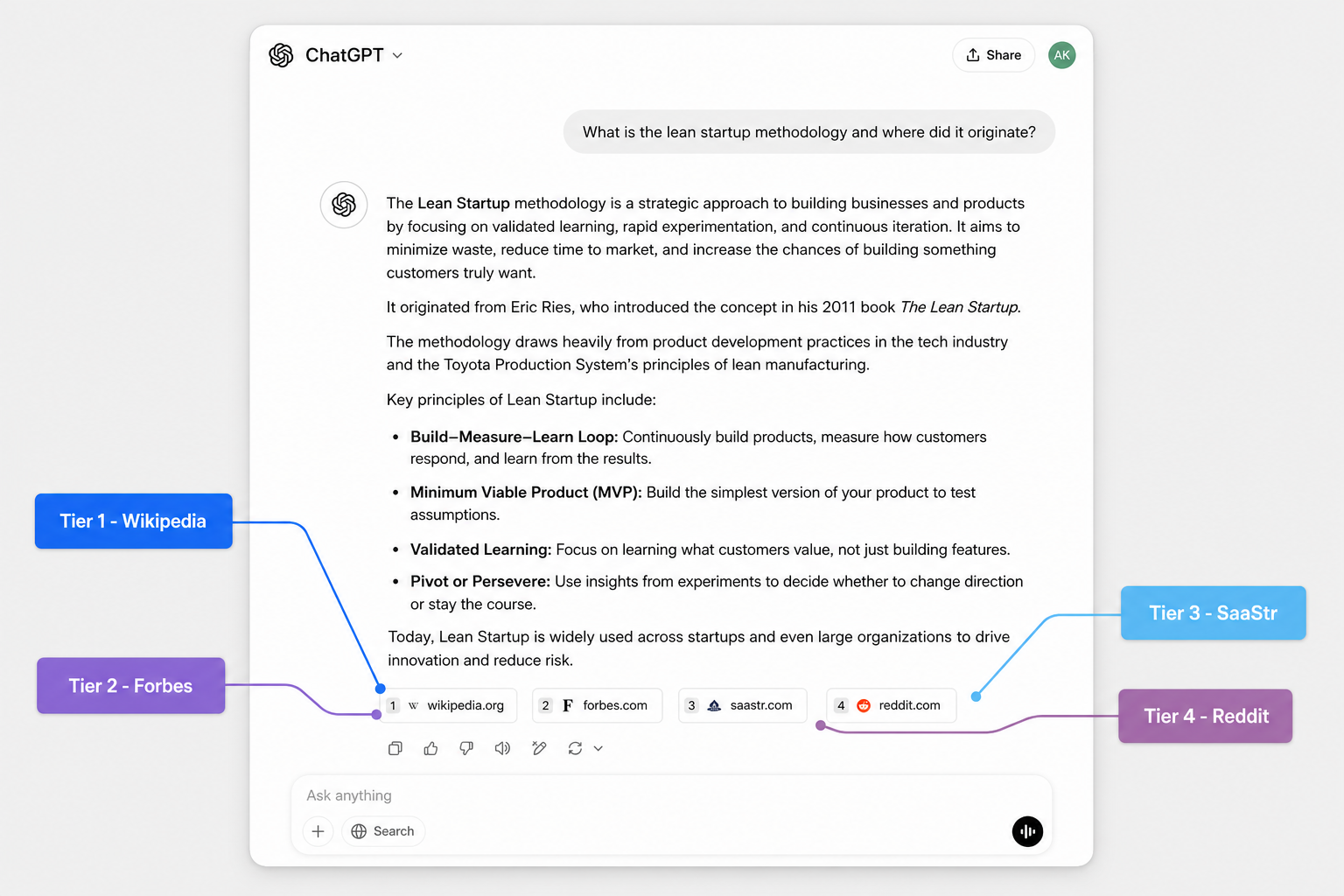

Forbes, Inc., Fast Company, HBR, MIT Sloan, TechCrunch, The Verge, Wired, Ars Technica, and the leading editorial outlets adjacent to your category. These publications carry enough trust signal that AI models weight them heavily in retrieval, especially ChatGPT and Google AI Mode, which lean on established media for grounding.

Tier 2 is where opinion gets formed. When ChatGPT generates a recommendation in your category, it often pulls a framing sentence or a brand association from a Tier 2 article. Get cited here with substantive editorial coverage, not a quote drop in a roundup, and you start showing up in the answer.

Tier 3: Vertical Trade Publications

The trade press for your specific category. SaaStr, MarketingProfs, Search Engine Land, CMSWire, Information Week, healthcare-specific outlets, fintech-specific outlets, devtool-specific publications. Niche audience, deep topical relevance, and, critically, high topical density on the queries your buyers ask AI assistants.

Tier 3 is where AI citation patterns compound fastest for B2B. A SaaS company cited three times in SaaStr on different topics builds a stronger AI association in the “SaaS tools” category than the same company cited once in Forbes. Topical density beats generalist authority every time at this tier.

This is also the tier most brands underinvest in. The pitch hit rate is higher, the editorial standards are real but reachable, and the topical alignment with AI-search queries is the strongest of any tier.

Tier 4: Community and UGC

Reddit, Quora, Hacker News, Stack Overflow, GitHub discussions, niche Discord and Slack archives that get indexed, and the long tail of community-generated content. Visual Capitalist data shows Reddit alone accounts for roughly 40% of AI search citations across major platforms. Wikipedia trails at 26%.

Tier 4 is where Perplexity lives. It’s where ChatGPT pulls “real user perspective” framing. It’s where Gemini grounds questions that don’t have clean editorial answers. Skip this tier and you lose half the AI citation surface.

Tier 4 isn’t paid placement. It’s community presence: founders and operators participating in threads where buyers ask category questions, leaving substantive comments that get upvoted, and building accounts with credibility signals. The work looks like community management, not PR.

How to Qualify a Publication Into a Tier

Don’t guess. Run a 10-minute qualification check before you pitch:

- Check AI citation presence directly. Open ChatGPT, Perplexity, and Google AI Mode. Ask 5–10 category-relevant questions a buyer would ask. Note which publications show up as cited sources. If a publication appears repeatedly across your category’s queries, it belongs in your hierarchy.

- Check topical density. Site-search the publication for your category’s core terms. A publication with 200+ articles on your topic is more likely to surface in AI retrieval than a publication with 5 articles, even if the second one has higher DA.

- Check indexation in known training sources. Common Crawl, C4, and Wikipedia’s external link graph are the bones of most major training datasets. Publications well-represented in these corpora carry more weight in foundational training data.

- Check editorial substance. A publication that publishes original reporting and analysis gets weighted higher than one that republishes press releases. AI models learn to discount the latter.

- Assign the tier. Reference/wire = Tier 1. Established editorial brand = Tier 2. Vertical trade = Tier 3. Community/UGC = Tier 4.

Skip publications that don’t qualify into any tier. They’re not high-DA prizes worth chasing. They’re noise.
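If you run this check across dozens of outlets, it helps to record the results in a consistent shape. The sketch below is one way to do that, not a standard method: the `Publication` fields, the `qualify` function, and the thresholds (`min_citations`, `min_articles`) are all hypothetical and should be tuned to your category. It leaves out the training-corpus check, which is hard to reduce to a single number, and it assumes the density and substance checks apply only to editorial and trade outlets.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Publication:
    name: str
    kind: str                  # "reference", "editorial", "trade", or "community"
    cited_in_ai_answers: int   # times it showed up as a cited source in your test prompts
    topic_articles: int        # site-search hits for your category's core terms
    original_reporting: bool   # False if it mostly republishes press releases


# Mapping from the final step of the checklist.
TIER_BY_KIND = {"reference": 1, "editorial": 2, "trade": 3, "community": 4}


def qualify(pub: Publication, min_citations: int = 2, min_articles: int = 20) -> Optional[int]:
    """Return the tier (1-4), or None if the publication should be skipped.

    The thresholds are illustrative assumptions, not values from the article.
    """
    if pub.kind not in TIER_BY_KIND:
        return None
    # Step 1: the publication must actually appear in AI answers for your category.
    if pub.cited_in_ai_answers < min_citations:
        return None
    # Steps 2 and 4 (assumption: only applied to editorial and trade outlets).
    if pub.kind in ("editorial", "trade"):
        if pub.topic_articles < min_articles or not pub.original_reporting:
            return None
    return TIER_BY_KIND[pub.kind]


# Hypothetical outlet: cited 4 times across test prompts, 210 on-topic articles.
trade = Publication("Example Trade Weekly", "trade", 4, 210, True)
print(trade.name, "-> tier", qualify(trade))
```

A `None` result is the "skip it" outcome: the outlet either never shows up in AI answers for your category or fails the density and substance checks.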

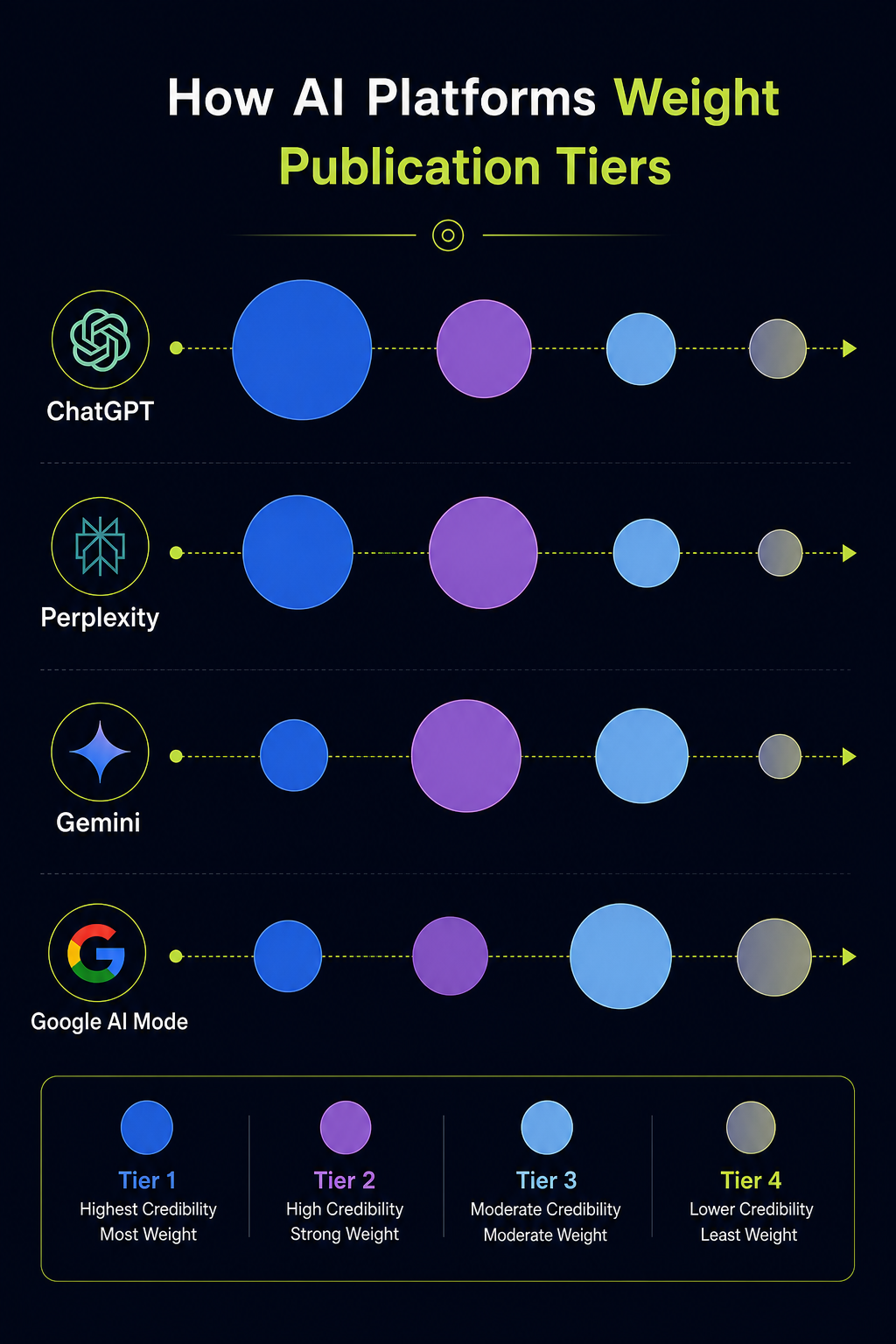

Which Tiers Each AI Platform Pulls From

Platform behavior isn’t uniform. Build for the platforms your buyers actually use.

| Platform | Primary Source Bias | Where to Focus |
|---|---|---|
| ChatGPT | Tier 2 editorial + Tier 1 reference, with Tier 4 for “user perspective” framing | Forbes/Inc./TechCrunch class outlets + Wikipedia accuracy |
| Perplexity | Tier 3 + Tier 4 heavily; query-time reranking favors fresh community signal | Trade publications + active Reddit and forum presence |
| Gemini | Tier 1 reference (knowledge graph) + Tier 2 editorial | Wikidata entity, Wikipedia, established media coverage |
| Google AI Mode | Pages ranking in top 10 organic + Tier 1/2 grounding sources | Whatever ranks already, plus reference-tier presence |

If your buyers research vendors in ChatGPT and Gemini, weight Tiers 1 and 2. If they live in Perplexity or use AI Mode for fresh comparisons, Tier 3 and 4 work harder. Most B2B teams need all four.
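To turn the table into a rough priority order, you can weight each tier by how many of your buyers use the platforms that draw on it. This is a minimal sketch under stated assumptions: `PLATFORM_TIERS` transcribes the table’s source biases, while `tier_priorities` and the usage shares are hypothetical inputs you would replace with your own buyer research.

```python
from typing import Dict

# Tiers each platform draws on, transcribed from the table above.
PLATFORM_TIERS = {
    "ChatGPT": {1, 2, 4},
    "Perplexity": {3, 4},
    "Gemini": {1, 2},
    "Google AI Mode": {1, 2},
}


def tier_priorities(usage: Dict[str, float]) -> Dict[int, float]:
    """For each tier, sum the usage share of every platform that draws on it."""
    scores = {tier: 0.0 for tier in (1, 2, 3, 4)}
    for platform, share in usage.items():
        # Platforms not in the table contribute nothing.
        for tier in PLATFORM_TIERS.get(platform, set()):
            scores[tier] += share
    return scores


# Hypothetical shares of buyers using each assistant (made-up numbers).
usage = {"ChatGPT": 0.5, "Perplexity": 0.3, "Gemini": 0.2}
for tier, score in sorted(tier_priorities(usage).items(), key=lambda kv: -kv[1]):
    print(f"Tier {tier}: {score:.2f}")
```

The scores are only a ranking aid. A tier with a low score still matters if it is the one where you have zero presence.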

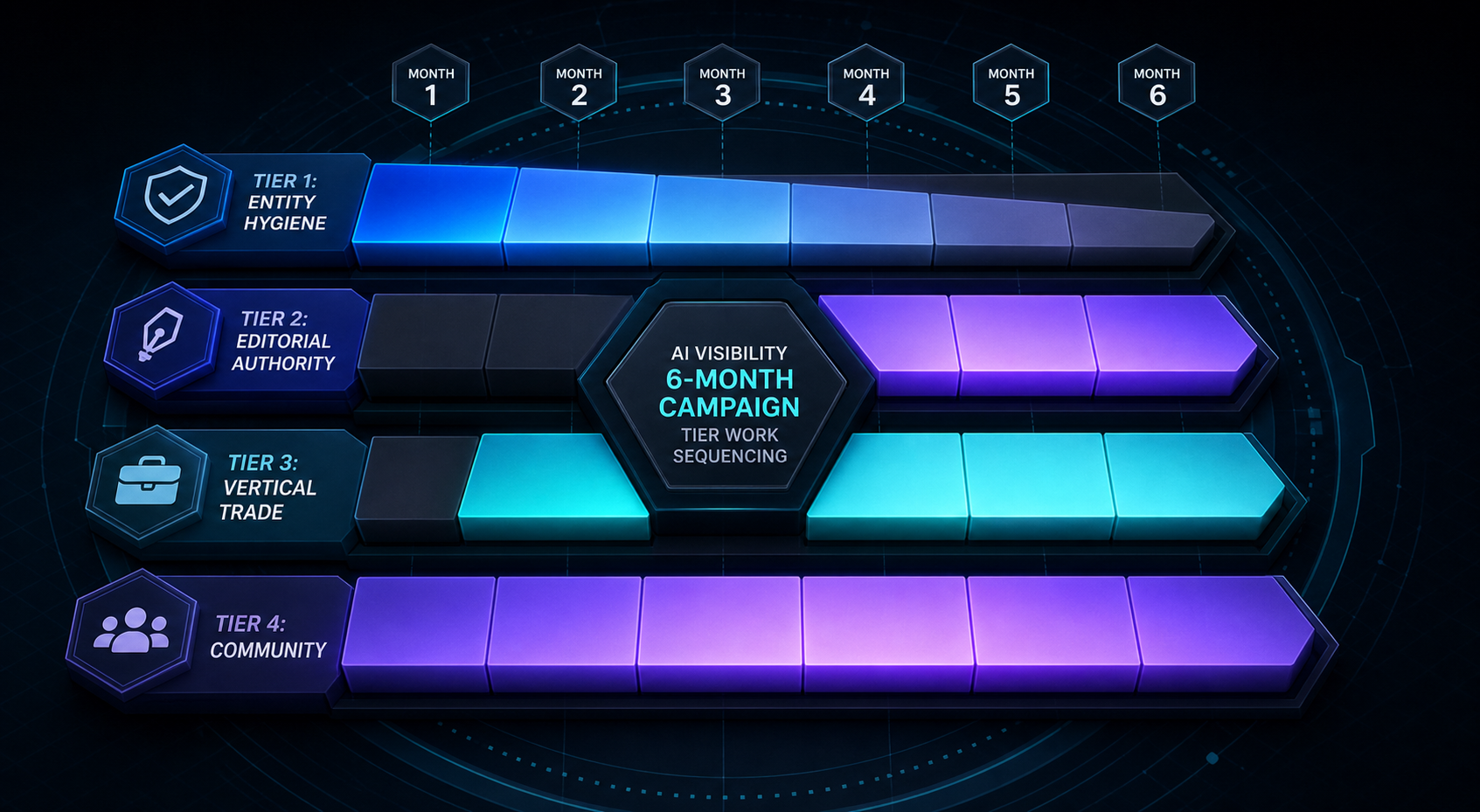

The Sequencing Playbook for Brands Starting From Zero

You don’t pitch all four tiers at once. The hierarchy compounds: earlier-tier work makes later-tier pitches more credible.

Start with Tier 1 entity hygiene. Fix or create your Crunchbase, Wikidata, and (where appropriate) Wikipedia entries. Make sure your company’s category, founders, and description are consistent across every reference database. This is the foundation AI models check first.

Then move to Tier 3. Vertical trade publications have the highest hit rate, the strongest topical relevance to AI-search queries, and the fastest compound effect. Aim for 4–6 substantive placements over your first quarter: bylined articles, expert commentary, or original-data features. Not press release drops.

With Tier 3 momentum, Tier 2 pitches become viable. Editorial outlets respond to brands that already have credible trade coverage. The pitch becomes “here’s our point of view, here’s where else it’s been published, here’s the data.” Aim for 2–3 Tier 2 placements per quarter once the trade base is in place.

Tier 4 runs in parallel from day one. Community presence isn’t a campaign; it’s an operating posture. Your founders, your engineering leads, your product people show up in the threads where buyers ask questions. They answer well, they don’t pitch, they build account credibility over months.

After two to three quarters of consistent work across this sequence, AI citation patterns start shifting. Brands that hold the pace through six months are the ones showing up in ChatGPT and Perplexity recommendations by the end of the year.

The Mistakes That Stall AI Citation Growth

Three patterns kill momentum:

Chasing DA over topical fit. A guest post on a DA 90 generalist site teaches AI models nothing about your category position. A bylined piece on a DA 55 trade publication that AI assistants pull from every week teaches them exactly what you want them to know.

Skipping Tier 1 entity work. Brands invest months in editorial coverage while their Crunchbase says they’re in the wrong category and they have no Wikidata entity. AI models can’t categorize you correctly if the reference layer is broken. Fix the foundation first.

Treating Tier 4 like spam ground. Founders who spray promotional Reddit comments get downranked by the community and ignored by AI retrieval. The bar on Tier 4 is the same as everywhere else: substantive contribution, real expertise, no pitching.

You can publish content every day and still get ignored by ChatGPT. Why? Because you’re building for Google’s index while AI models are learning from an entirely different set of sources. Fix the inputs, fix the output.

How to Track What’s Working

You can’t optimize a hierarchy you can’t measure. Track three things:

- Citation rate by platform. Run category-relevant prompts across ChatGPT, Perplexity, Gemini, and Google AI Mode on a weekly cadence. Log which sources get cited and whether your brand appears. AI visibility analytics tools automate this at scale.

- Tier coverage map. Maintain a simple matrix of your placements by tier. Most teams discover they have heavy Tier 2 coverage and zero Tier 1 or Tier 3, which is why nothing’s compounding.

- Branded query share of voice. When buyers ask AI assistants about your category, how often does your brand appear versus competitors? This is the metric that maps to pipeline. Our guide on share of voice in AI search walks through the measurement methodology.
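All three metrics fall out of a simple log of prompt runs and placements. The sketch below assumes you record each run by hand or export it from a tracking tool. The `PromptRun` record, the function names, and the brand names “Acme” and “Rival” are hypothetical, and share of voice is computed here as each brand’s share of all brand mentions, which is one reasonable definition among several.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PromptRun:
    platform: str                 # e.g. "ChatGPT"
    prompt: str                   # the category question you asked
    brands_mentioned: List[str]   # brands that appeared in the answer


def citation_rate_by_platform(runs: List[PromptRun], brand: str) -> Dict[str, float]:
    """Fraction of logged runs per platform in which the brand appears."""
    total, hits = Counter(), Counter()
    for run in runs:
        total[run.platform] += 1
        if brand in run.brands_mentioned:
            hits[run.platform] += 1
    return {platform: hits[platform] / total[platform] for platform in total}


def share_of_voice(runs: List[PromptRun]) -> Dict[str, float]:
    """Each brand's share of all brand mentions (counted once per run)."""
    mentions = Counter(b for run in runs for b in set(run.brands_mentioned))
    all_mentions = sum(mentions.values())
    if all_mentions == 0:
        return {}
    return {brand: n / all_mentions for brand, n in mentions.items()}


def tier_coverage(placement_tiers: List[int]) -> Dict[int, int]:
    """Count placements per tier; a zero marks a gap in the hierarchy."""
    counts = Counter(placement_tiers)
    return {tier: counts.get(tier, 0) for tier in (1, 2, 3, 4)}


# Hypothetical weekly log.
runs = [
    PromptRun("ChatGPT", "best tools for X", ["Acme", "Rival"]),
    PromptRun("ChatGPT", "X vendor comparison", ["Rival"]),
    PromptRun("Perplexity", "best tools for X", ["Acme"]),
]
print(citation_rate_by_platform(runs, "Acme"))
print(share_of_voice(runs))
print(tier_coverage([2, 2, 2, 4]))
```

Keeping the prompts fixed from week to week matters more than the tooling, because the trend is only comparable if the questions stay the same.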

If you’re building citation strategy for the first time, the AI visibility diagnostic framework gives you a starting audit to see where the gaps are before you spend a dollar on outreach.

Frequently Asked Questions

What is a tier-based publication hierarchy for AI citations?

A tier-based publication hierarchy for AI citations ranks publications into four tiers (reference/wire, editorial authority, vertical trade, and community/UGC) based on how AI models like ChatGPT, Perplexity, and Gemini treat them as sources. The hierarchy helps brands prioritize earned media for AI visibility rather than chasing domain authority alone.

Does domain authority still matter for AI citations?

It matters weakly. AI models select sources based on training data composition, retrieval indexes, and topical relevance, not link equity. A trade publication with deep topical density in your category will outperform a higher-DA generalist outlet for AI citation likelihood almost every time.

Which tier should B2B brands start with?

Start with Tier 1 entity hygiene (Crunchbase, Wikidata, and Wikipedia where applicable), then move to Tier 3 vertical trade publications. Tier 1 establishes how AI models categorize you. Tier 3 has the highest hit rate and the strongest topical relevance to AI-search queries.

How long until tier-based work shows up in AI citations?

Most brands see citation pattern shifts after two to three quarters of consistent work across the hierarchy. Brands that hold the pace through six months are typically the ones showing up in ChatGPT and Perplexity recommendations by the end of the year. Compound visibility isn’t fast, but it sticks.

Is Reddit really a citation tier worth building?

Yes. Reddit accounts for roughly 40% of AI search citations across major platforms, more than Wikipedia. Skipping Tier 4 means losing half the AI citation surface, especially on Perplexity. Build presence through substantive contribution from founders and operators, not promotional posts.

How do I know if a publication belongs in a specific tier?

Run a 10-minute qualification check: confirm AI citation presence by asking category-relevant questions in ChatGPT and Perplexity, check topical density via site-search, verify indexation in training corpora, and assess editorial substance. If a publication doesn’t qualify into any tier, skip it.

Should every B2B brand build for all four tiers?

Most should. Platform behavior varies: ChatGPT and Gemini lean on Tiers 1 and 2, while Perplexity favors Tiers 3 and 4, so brands whose buyers use multiple AI assistants need coverage across the stack. Single-tier strategies leave citation surface on the table.

Building Your Hierarchy

The brands getting cited by AI models in 2026 aren’t the ones with the biggest PR budgets. They’re the ones who figured out which publications AI models actually pull from and built presence there systematically. Audit your current coverage against the four tiers this week. Find the tier with zero presence. That’s where the next quarter’s work goes.