Predictive AI alerts for brand mentions track shifts in how AI platforms reference your brand — before those shifts affect pipeline, reputation, or competitive positioning. As of 2026, monitoring what ChatGPT, Perplexity, Gemini, and Google AI Overviews say about your company has moved from experimental to essential. But most teams still rely on reactive tools that tell them what already happened. Predictive alerts change the equation by flagging emerging patterns — sentiment changes, competitor surges, factual errors gaining traction — while you still have time to act.

This article breaks down how predictive AI alerts work for brand mentions, what separates them from standard monitoring, and how to build a system that catches reputation risks and visibility opportunities early.

Key Takeaways

- Predictive AI alerts detect emerging changes in brand mentions across AI platforms before they compound into visibility loss or reputation damage.

- Standard monitoring tools report what already happened — predictive systems identify patterns that signal what’s about to happen.

- Effective predictive alerts require baselines, threshold calibration, and clear ownership to avoid alert fatigue.

- Sentiment drift, competitor mention surges, and factual error propagation are the three highest-value alert triggers for B2B brands.

- Building entity authority across high-authority publications directly improves both the accuracy and favorability of AI-generated brand mentions over time.

- Teams that act on predictive signals — rather than waiting for quarterly reports — close the gap between insight and intervention.

What Are Predictive AI Alerts for Brand Mentions?

A predictive AI alert for brand mentions is an automated notification triggered when monitoring data indicates an emerging shift in how AI platforms reference your brand — before that shift becomes visible in traffic, leads, or public reputation.

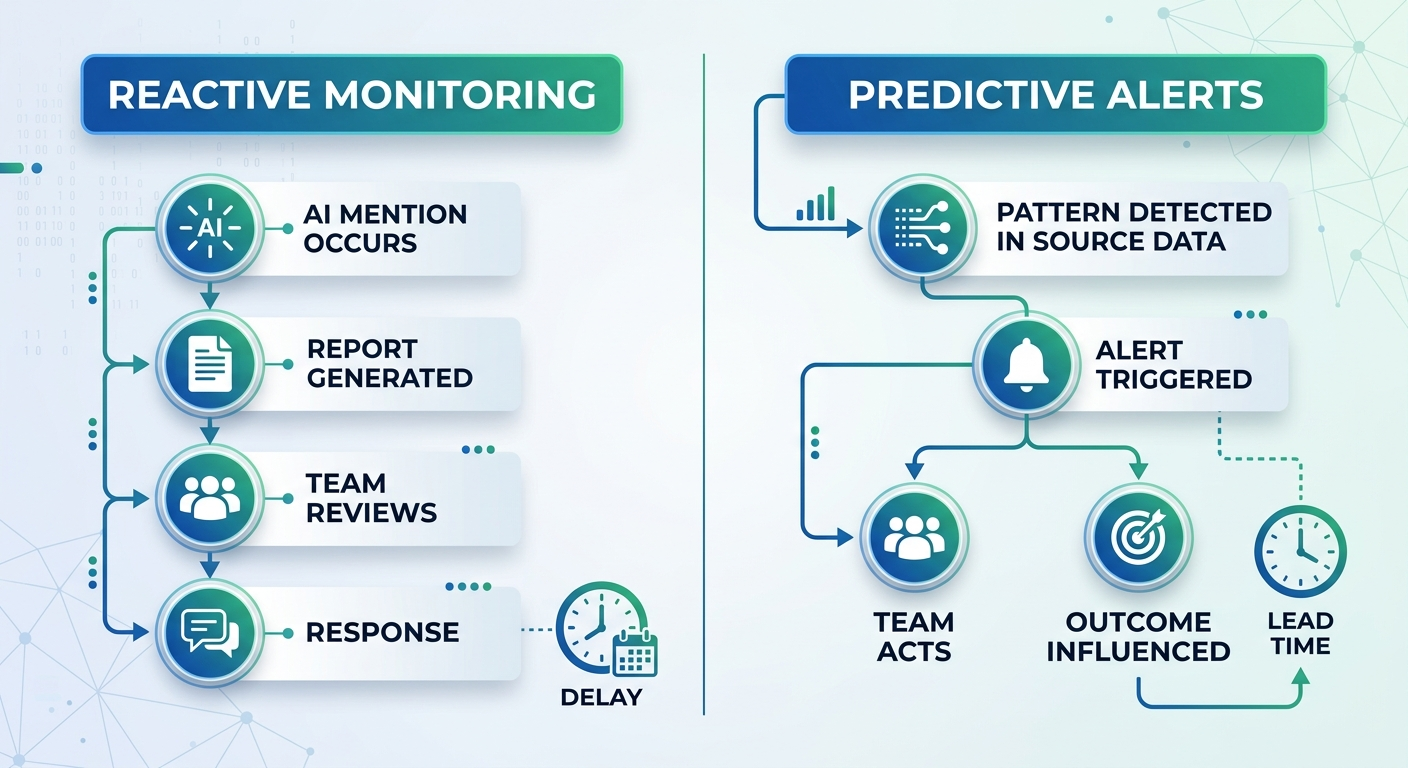

Traditional brand monitoring works like a rearview mirror. It tells you that ChatGPT mentioned your competitor five times last week in response to category queries. Useful, but late.

Predictive alerts work differently. They analyze patterns across mention frequency, sentiment trajectory, source authority, and competitive positioning to flag changes that are forming — not changes that already landed.

Three examples of what predictive alerts catch:

- Sentiment drift: Your brand’s average sentiment score in AI responses dropped 12% over seven days, driven by a negative Reddit thread gaining traction in AI training sources.

- Competitor surge: A competitor’s mention frequency in Perplexity responses for your primary category queries increased 40% after a wave of new editorial coverage.

- Factual error propagation: Outdated pricing information from a 2024 blog post is now appearing in ChatGPT responses to “how much does [your product] cost” queries.

Each of these patterns is detectable before it solidifies — but only if your system is calibrated to look for them.

How Predictive Alerts Differ from Standard AI Brand Monitoring

Most AI brand monitoring tools track mentions after they appear in responses. They answer the question: “What did AI say about us?” Predictive alerts answer a different question: “What is AI about to start saying about us — and why?”

The difference is structural, not cosmetic. Here’s how each approach handles the same scenario.

Standard monitoring response

Your weekly report shows that ChatGPT stopped recommending your brand for “best project management tools for remote teams” queries. You investigate. You find that three new competitor articles published two weeks ago now dominate the sources AI models reference. You’ve already lost two weeks of visibility.

Predictive alert response

Your system detects that competitor editorial coverage for “remote project management” increased 60% over five days across publications indexed by major AI models. An alert fires. You review the signal, identify the content gap, and begin publishing targeted responses within 48 hours — before the AI models fully absorb and weight the new competitor content.

The core difference: predictive alerts track the inputs that shape AI responses, not just the outputs.

According to a 2024 Gartner forecast, traditional search engine volume was expected to drop 25% by 2026 as AI search alternatives gained adoption. That shift has accelerated. For brands relying on AI-mediated discovery, the window between a visibility change forming and that change reaching users is shrinking. Predictive alerts protect that window.

Why B2B Brands Need Predictive Alerts in 2026

AI-generated answers now influence purchase decisions at a scale that makes reactive monitoring insufficient. When a VP of Engineering asks Claude to compare infrastructure vendors, the response carries weight. It reads like an authoritative summary. It shapes the shortlist before your sales team ever gets a call.

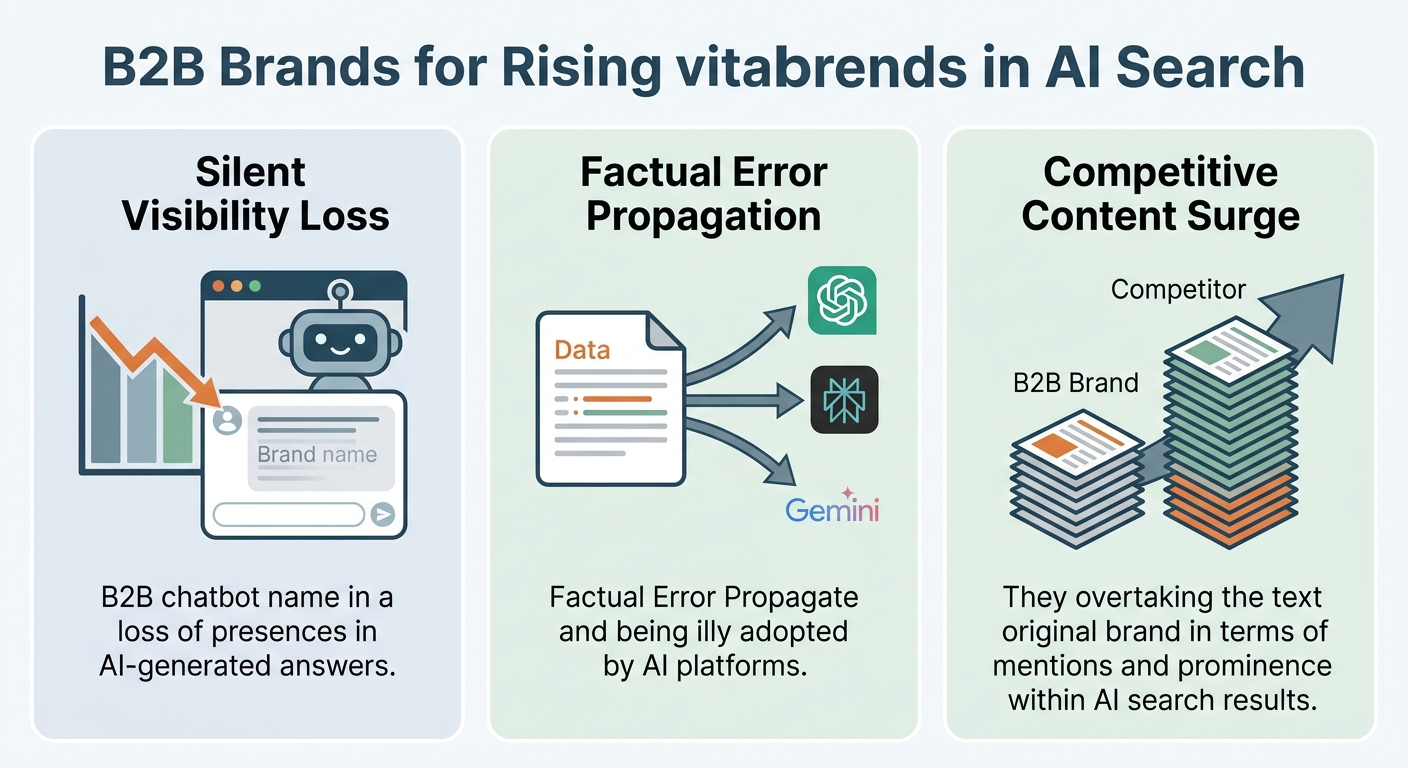

Three dynamics make predictive alerts critical for B2B brands as of 2026:

AI responses change faster than quarterly reviews can track

AI platforms refresh their retrieval sources and response patterns on cycles measured in days and weeks, and their training data periodically, none of which aligns with a quarterly review schedule. A brand that dominates AI recommendations in January can lose that position by March if competitor coverage shifts and goes unmonitored. According to SparkToro research from 2025, the majority of web searches now result in zero clicks, with AI-generated answers increasingly replacing traditional blue-link results. Brands that wait for periodic reports miss the inflection points that matter.

Factual errors compound silently

When an AI model absorbs incorrect information about your brand — wrong pricing, discontinued features described as current, outdated competitive positioning — that error gets repeated to every user who asks a relevant question. Unlike a negative review you can respond to publicly, AI factual errors spread invisibly. Predictive alerts that monitor source accuracy across indexed publications catch these errors early, before they become the default response.

Competitive positioning shifts happen in the content layer, not the product layer

Your competitor didn’t ship a better product last month. They published twelve articles on authoritative sites that AI models now reference when users ask category questions. Brand mentions in generative AI depend heavily on the volume, recency, and authority of content sources. Predictive alerts flag when a competitor’s content footprint expands in ways that directly threaten your AI visibility.

The Three Highest-Value Predictive Alert Triggers

Not every data point warrants an alert. Alert fatigue kills monitoring programs faster than missing data does. Focus your predictive system on these three triggers, which consistently deliver the highest signal-to-noise ratio for B2B brands tracking AI visibility.

1. Sentiment trajectory shifts

A single negative mention doesn’t warrant panic. But when your average sentiment score across AI-generated responses drops steadily over five to seven days, something is feeding that decline. The cause might be a critical blog post gaining traction, a customer complaint thread on a high-authority forum, or a competitor publishing comparison content that positions you unfavorably.

Alert threshold: Configure alerts for sustained sentiment declines (not single-response dips). A 10–15% drop sustained over five or more days across multiple AI platforms typically indicates a meaningful shift worth investigating.
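
As a rough illustration of that threshold, here is a minimal Python sketch. It assumes you already record one average sentiment score per day per platform on a positive scale (for example 0 to 1). The function names `sustained_decline` and `should_alert` and their default parameters are hypothetical, not part of any monitoring tool.

```python
from typing import Mapping, Sequence


def sustained_decline(daily_scores: Sequence[float],
                      window_days: int = 5,
                      min_drop: float = 0.10) -> bool:
    """True when sentiment fell by at least `min_drop` (relative to the day
    before the window) and stayed below that starting level all window."""
    if len(daily_scores) < window_days + 1:
        return False  # not enough history to judge
    start = daily_scores[-(window_days + 1)]
    window = daily_scores[-window_days:]
    if start <= 0:
        return False  # relative drop is undefined for a non-positive start
    relative_drop = (start - window[-1]) / start
    stayed_below = all(score < start for score in window)
    return relative_drop >= min_drop and stayed_below


def should_alert(scores_by_platform: Mapping[str, Sequence[float]],
                 min_platforms: int = 2) -> bool:
    """Fire only when the decline shows up on several platforms at once."""
    declining = [name for name, scores in scores_by_platform.items()
                 if sustained_decline(scores)]
    return len(declining) >= min_platforms
```

A single bad day followed by a recovery does not trip this check, because the latest score has to sit at least 10% below the starting level. Requiring two platforms by default is one reading of "multiple AI platforms"; adjust it to the number of platforms you monitor.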

2. Competitive mention share changes

Track the ratio of your brand mentions to competitor mentions across a defined set of category queries. When a competitor’s share increases by 20% or more within a two-week window, investigate the source. The cause is almost always traceable to new editorial coverage, updated product documentation, or a targeted content campaign.

Alert threshold: Monitor weekly mention share ratios. Flag any competitor gaining 20%+ share on queries where you previously held a strong position.
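
The share comparison can be sketched in a few lines of Python. This example assumes each weekly sample is a flat list of brand names, one entry per mention observed across your query set, and it reads "20%+" as a relative increase in share rather than percentage points. The helper names and the brands in the usage note are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable


def mention_share(mentions: Iterable[str]) -> Dict[str, float]:
    """Fraction of all observed mentions that went to each brand."""
    counts = Counter(mentions)
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}


def share_gainers(previous_week: Iterable[str],
                  current_week: Iterable[str],
                  own_brand: str,
                  min_relative_gain: float = 0.20) -> Dict[str, float]:
    """Competitors whose share grew by at least `min_relative_gain`."""
    before = mention_share(previous_week)
    after = mention_share(current_week)
    flagged = {}
    for brand, share in after.items():
        # Skip our own brand and new entrants that have no prior share.
        if brand == own_brand or brand not in before:
            continue
        gain = (share - before[brand]) / before[brand]
        if gain >= min_relative_gain:
            flagged[brand] = gain
    return flagged
```

For example, if a competitor moves from 4 of 10 mentions to 5 of 10, its share rises from 40% to 50%, a relative gain of 25%, so it is flagged. Brands that appear for the first time are skipped here and would need their own rule.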

3. Factual accuracy degradation

This is the most damaging and least visible risk. Configure monitoring to compare AI-generated statements about your brand against verified source data — pricing, feature lists, executive names, company descriptions. When discrepancies appear and persist across multiple queries, you need to trace the source and correct it.

Alert threshold: Any factual error detected in AI responses that persists across two or more platforms or appears in response to high-volume queries should trigger an immediate alert.
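
A minimal sketch of the cross-platform persistence rule follows. It assumes the claims have already been extracted from AI responses into (platform, field, value) tuples and that you maintain a dictionary of verified facts. Both structures, the field names, and the function `persistent_errors` are illustrative assumptions. The sketch covers only the "two or more platforms" condition, not the high-volume query condition.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def persistent_errors(verified: Dict[str, str],
                      observed: List[Tuple[str, str, str]],
                      min_platforms: int = 2) -> Dict[str, Set[str]]:
    """Map each field to the platforms stating a wrong value for it,
    keeping only fields that are wrong on at least `min_platforms`."""
    wrong: Dict[str, Set[str]] = defaultdict(set)
    for platform, field, value in observed:
        expected = verified.get(field)
        if expected is None:
            continue  # no verified value to compare against
        # Case-insensitive exact match; real claims need fuzzier matching.
        if value.strip().lower() != expected.strip().lower():
            wrong[field].add(platform)
    return {f: p for f, p in wrong.items() if len(p) >= min_platforms}
```

Exact string comparison is the simplest possible check. Prices, feature lists, and company descriptions usually need normalization or human review before a mismatch counts as an error.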

Pro Insight: The most effective predictive alert systems combine automated detection with human review. Automated systems flag the pattern. A human determines whether the pattern represents a real risk or routine noise. Skipping the human review step leads to alert fatigue. Skipping the automated detection step means you miss patterns entirely.

How to Build a Predictive AI Alert System for Brand Mentions

Building a predictive alert system doesn’t require custom machine learning infrastructure. It requires disciplined setup, clear baselines, and consistent calibration. Here’s a practical process that works for B2B marketing teams managing AI visibility.

Step 1: Establish your monitoring baseline

Before you can detect changes, you need to know what normal looks like. Run a structured audit of your current AI brand visibility across ChatGPT, Perplexity, Gemini, and Google AI Overviews.

Document the following for each platform:

- Which category queries mention your brand

- Your position within AI responses (first mention, middle, end, absent)

- Which competitors appear alongside you

- The factual accuracy of statements about your brand

- The overall sentiment and framing of mentions

This baseline becomes your reference point. If you haven’t tracked visibility across AI platforms before, tracking brand mentions across AI search platforms is the logical starting point.
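
One way to keep the audit consistent is to record every platform and query pair in the same shape. The sketch below is one possible layout, not a required schema. The class names, field names, and the `visibility_rate` helper are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Position(Enum):
    FIRST = "first"
    MIDDLE = "middle"
    END = "end"
    ABSENT = "absent"


@dataclass
class BaselineEntry:
    platform: str                      # e.g. "perplexity"
    query: str                         # the category query that was run
    position: Position                 # where the brand appeared, if at all
    competitors: List[str] = field(default_factory=list)
    facts_accurate: Optional[bool] = None  # None when the brand is absent
    sentiment: Optional[float] = None      # None when the brand is absent


def visibility_rate(entries: List[BaselineEntry], platform: str) -> float:
    """Share of audited queries on a platform that mention the brand."""
    rows = [e for e in entries if e.platform == platform]
    if not rows:
        return 0.0
    present = sum(e.position is not Position.ABSENT for e in rows)
    return present / len(rows)
```

Storing absences explicitly matters: later alerts compare against this baseline, and a query where you were never mentioned is a different signal from one where you dropped out.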

Step 2: Define your query set

Build a list of 20–50 queries that represent how your target buyers research solutions in your category. Include:

- Category comparison queries (“best [category] for [use case]”)

- Brand-specific queries (“is [your brand] good for [scenario]”)

- Competitor comparison queries (“[your brand] vs [competitor]”)

- Problem-driven queries (“[pain point] solution for [industry]”)

These queries are the prompts you’ll monitor continuously. The more precisely they reflect real buyer language, the more useful your alerts become.
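
The four query patterns above can be expanded into a concrete list with a small helper. This is a sketch under the assumption that you supply your own brand, category, use cases, competitors, pain points, and industries. The template keys and the `build_query_set` function are illustrative.

```python
from itertools import product
from typing import List, Sequence

TEMPLATES = {
    "category": "best {category} for {use_case}",
    "brand": "is {brand} good for {use_case}",
    "versus": "{brand} vs {competitor}",
    "problem": "{pain_point} solution for {industry}",
}


def build_query_set(brand: str, category: str,
                    use_cases: Sequence[str],
                    competitors: Sequence[str],
                    pain_points: Sequence[str],
                    industries: Sequence[str]) -> List[str]:
    """Expand the four templates into a de-duplicated, ordered query list."""
    queries = []
    for use_case in use_cases:
        queries.append(TEMPLATES["category"].format(category=category,
                                                    use_case=use_case))
        queries.append(TEMPLATES["brand"].format(brand=brand,
                                                 use_case=use_case))
    for competitor in competitors:
        queries.append(TEMPLATES["versus"].format(brand=brand,
                                                  competitor=competitor))
    for pain_point, industry in product(pain_points, industries):
        queries.append(TEMPLATES["problem"].format(pain_point=pain_point,
                                                   industry=industry))
    return list(dict.fromkeys(queries))  # drop duplicates, keep order
```

Generated queries are a starting point only. Review the list by hand and replace stiff phrasings with the wording buyers actually use, then trim it to the 20–50 range.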

Step 3: Set alert thresholds and assign ownership

For each of the three trigger types — sentiment, competitive share, and factual accuracy — define what constitutes an actionable change versus normal fluctuation.

Assign a specific person to triage each alert type. This person doesn’t need to fix every issue. They need the authority and context to determine whether a signal requires immediate action, deeper investigation, or no action at all.

Common ownership structure:

- Sentiment alerts: Owned by brand or communications lead

- Competitive share alerts: Owned by content strategy or growth lead

- Factual accuracy alerts: Owned by product marketing or documentation lead
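
The ownership structure can be written down as a small routing table so that every alert lands with a named owner and one of the three triage outcomes. The role labels, outcome names, and the `triage` function below are hypothetical placeholders for your own team structure.

```python
from dataclasses import dataclass

# Alert type -> owning role, mirroring the ownership list above.
OWNERS = {
    "sentiment": "brand_or_communications_lead",
    "competitive_share": "content_strategy_or_growth_lead",
    "factual_accuracy": "product_marketing_or_documentation_lead",
}

# The three decisions a triage owner can make.
OUTCOMES = {"act_now", "investigate", "no_action"}


@dataclass(frozen=True)
class TriageDecision:
    alert_type: str
    owner: str
    outcome: str


def triage(alert_type: str, outcome: str) -> TriageDecision:
    """Attach the responsible owner to an alert and record the decision."""
    if alert_type not in OWNERS:
        raise ValueError(f"unknown alert type: {alert_type}")
    if outcome not in OUTCOMES:
        raise ValueError(f"unknown outcome: {outcome}")
    return TriageDecision(alert_type, OWNERS[alert_type], outcome)
```

Rejecting unknown alert types is deliberate: an alert with no owner is the case this step is meant to prevent.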

Step 4: Calibrate over your first 30 days

Your initial thresholds will be imperfect. Plan to adjust them during the first month based on actual alert volume and relevance.

If you’re getting more than five actionable alerts per week, your thresholds are too sensitive. If you’re getting zero alerts for two consecutive weeks, your thresholds may be too loose, or your baseline position is stable enough that monitoring cadence can shift to biweekly deep reviews.

The goal is a system that surfaces two to four meaningful signals per week that your team can act on.
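
The calibration rule can be expressed as a simple weekly check. This sketch takes five actionable alerts per week as the upper bound and two consecutive silent weeks as the lower bound. The function name, the return labels, and the default values are assumptions to adapt to your own capacity.

```python
from typing import Sequence


def calibration_status(weekly_actionable_alerts: Sequence[int],
                       max_per_week: int = 5,
                       silent_weeks: int = 2) -> str:
    """Classify thresholds from a history of weekly actionable-alert counts.

    Returns "too_sensitive", "too_loose_or_stable", "ok", or "no_data".
    """
    if not weekly_actionable_alerts:
        return "no_data"
    if weekly_actionable_alerts[-1] > max_per_week:
        return "too_sensitive"
    recent = weekly_actionable_alerts[-silent_weeks:]
    if len(recent) == silent_weeks and all(count == 0 for count in recent):
        # Either thresholds rarely fire or the baseline is simply stable.
        return "too_loose_or_stable"
    return "ok"
```

The "too_loose_or_stable" result cannot distinguish a quiet market from thresholds that never fire. A manual spot check of a few queries is the simplest way to tell the two apart.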

What Predictive Alerts Cannot Do

Predictive alerts detect emerging patterns. They do not guarantee outcomes, and they don’t replace the strategic work of building AI visibility in the first place.

Three honest limitations to understand:

- Alerts don’t fix the underlying problem. If your brand lacks editorial coverage across high-authority publications, alerts will consistently show competitors outperforming you — but the fix is building that coverage, not adjusting alert settings.

- AI model behavior isn’t fully predictable. Large language models change response patterns for reasons that aren’t always traceable to specific content changes. Sometimes a model update shifts outputs in ways no monitoring system can fully anticipate.

- Alerts require action to create value. A well-calibrated alert system that nobody acts on is worse than no system at all, because it creates a false sense of security.

The teams that extract the most value from predictive alerts treat them as intelligence inputs to an active content and citation strategy — not as a standalone solution.

Connecting Predictive Alerts to AI Visibility Strategy

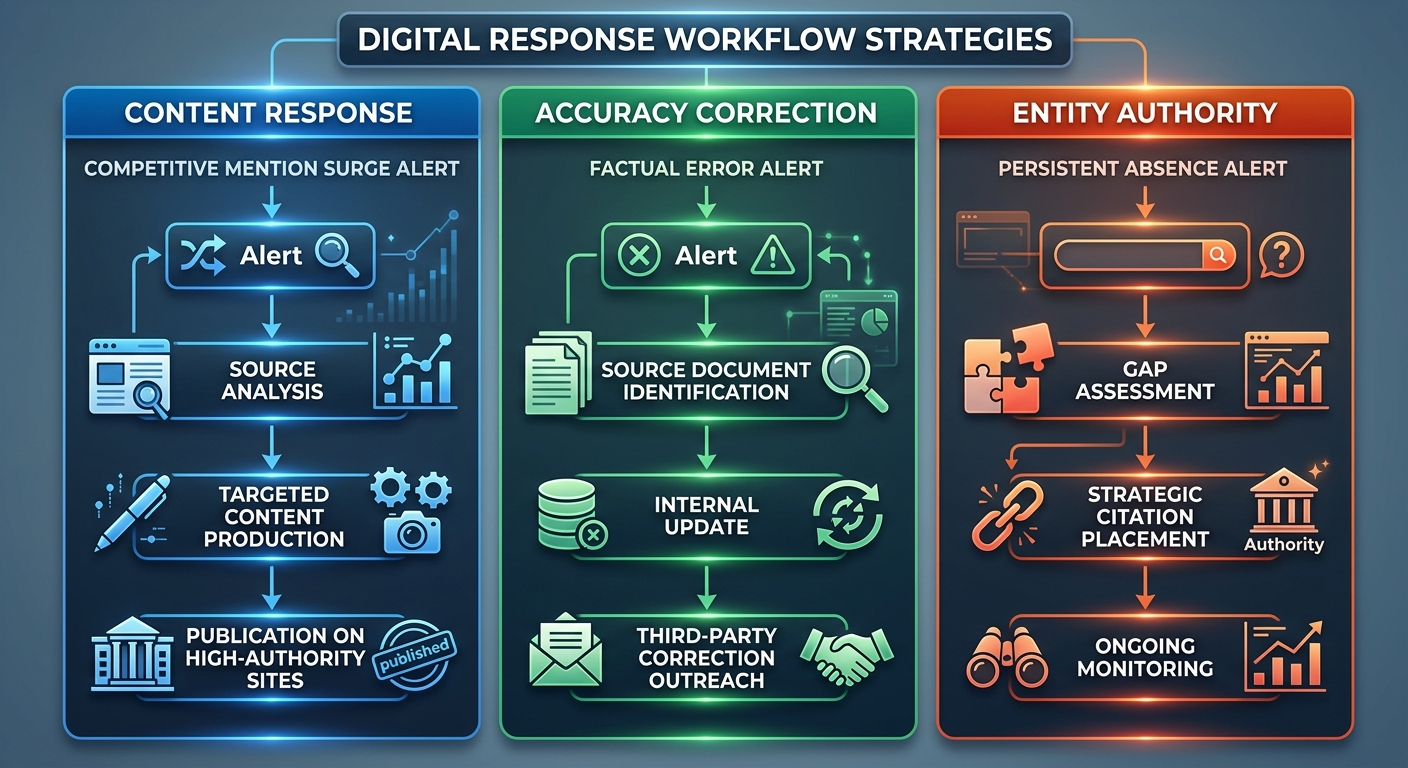

Detecting a competitive surge or sentiment shift is only useful if your team has a playbook for responding. Predictive alerts create the most value when connected to three response workflows.

Content response workflow

When alerts indicate a competitor gaining mention share, trace the signal to its source. Identify the publications, articles, or content formats driving the shift. Then produce targeted content that addresses the same queries with equal or greater depth, published on sites with comparable authority.

For B2B brands, this often means strategic AI brand mentions placed on editorial publications that AI models actively reference. The goal isn’t to match competitor volume overnight. It’s to close the content gap that’s driving the visibility shift.

Accuracy correction workflow

When alerts flag factual errors in AI responses, work backward through the information supply chain. Identify which source documents contain the incorrect information. Update your own published materials first — pricing pages, product documentation, help centers, comparison pages. Then pursue corrections on third-party sources where possible.

AI models learn from the web. Correcting source documents is the most reliable path to correcting AI outputs over time, as models refresh their training data and retrieval indexes.

Entity authority workflow

When alerts consistently show your brand absent from category queries — not declining, but never present — the issue isn’t a sudden shift. It’s a structural gap in brand visibility within AI systems. Predictive alerts surface this gap when competitors’ positions strengthen while yours remains flat.

The response is building entity authority through consistent, contextual brand mentions across the publications and platforms AI models trust. In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions achieved AI recommendation rates 89% higher than those relying solely on traditional SEO.

How AI Platforms Use Source Data — and Why It Matters for Alerts

Predictive alerts are only as useful as your understanding of how AI platforms select and weight information. A brief overview of the mechanics helps calibrate your monitoring strategy.

ChatGPT relies on a combination of its training data (periodically updated) and, in its browsing-enabled modes, real-time web retrieval. Mentions in its training data carry long-term weight. Mentions surfaced through retrieval can change with each query. Both matter, but they require different monitoring approaches.

Perplexity is retrieval-heavy. It pulls from current web sources and cites them directly. Your presence in Perplexity responses depends heavily on whether your brand appears in the sources Perplexity indexes for each query. Monitoring brand mentions in Perplexity requires tracking both direct brand pages and third-party content that references your brand.

Google AI Overviews and AI Mode draw from Google’s search index and knowledge graph. Your entity authority within Google’s ecosystem, built through structured data, authoritative citations, and consistent NAP (name, address, phone number) information, directly influences whether AI Overviews include your brand.

Gemini operates across Google’s infrastructure and draws from similar sources as AI Overviews, with additional emphasis on recent, authoritative content. Brand visibility in Gemini correlates strongly with the recency and authority of citations across Google-indexed publications.

Understanding these mechanics helps you interpret alerts correctly. A sentiment drop in ChatGPT responses may trace to training data — a slower, harder-to-correct issue. The same drop in Perplexity may trace to a single high-ranking negative article — a faster, more actionable issue.

Measuring Whether Your Predictive Alert System Works

A predictive alert system should produce measurable improvements in three areas within its first 90 days.

Response time to visibility changes

Measure the time between a meaningful AI visibility shift and your team’s first corrective action. Before predictive alerts, most teams discover changes through quarterly audits — a lag measured in weeks or months. With calibrated alerts, response time should drop to 48–72 hours for high-priority signals.
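
Response time is straightforward to compute once you log two timestamps per incident: when the shift was detected and when the first corrective action started. The sketch below assumes that log exists. The function names and the 72-hour default target are illustrative.

```python
from datetime import datetime
from statistics import median
from typing import List, Tuple


def response_hours(detected_at: datetime, first_action_at: datetime) -> float:
    """Hours between detecting a shift and the first corrective action."""
    return (first_action_at - detected_at).total_seconds() / 3600


def median_response_hours(events: List[Tuple[datetime, datetime]]) -> float:
    """Median response time across (detected_at, first_action_at) pairs."""
    return median([response_hours(d, a) for d, a in events])


def within_target(events: List[Tuple[datetime, datetime]],
                  target_hours: float = 72.0) -> bool:
    """True when the median response time meets the target."""
    return median_response_hours(events) <= target_hours
```

The median is used rather than the mean so that one slow incident does not mask an otherwise fast team. `median_response_hours` raises `statistics.StatisticsError` on an empty list, which is a reasonable signal that nothing has been logged yet.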

Factual accuracy rate

Track the percentage of AI-generated statements about your brand that are factually correct across monitored platforms and queries. This metric should trend upward as your team corrects source documents and builds authoritative content. A starting accuracy rate below 80% is common; brands with active correction workflows typically reach 90%+ within six months.
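
The accuracy rate itself is a simple ratio. This sketch assumes each AI-generated statement about your brand has already been reviewed and marked correct or incorrect. The function name and the sample numbers in the usage note are hypothetical.

```python
from typing import Sequence


def accuracy_rate(statement_checks: Sequence[bool]) -> float:
    """Percentage of reviewed statements that were factually correct."""
    if not statement_checks:
        raise ValueError("no statements were reviewed")
    return 100.0 * sum(statement_checks) / len(statement_checks)
```

For instance, 8 correct statements out of 10 reviewed gives a rate of 80%. Compute it per platform as well as overall, since a blended number can hide one platform that is consistently wrong.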

Competitive mention share stability

Monitor your brand’s share of mentions across category queries relative to key competitors. The goal isn’t necessarily to dominate every query. It’s to maintain stable or growing share, without sudden drops going undetected. Alert-driven response workflows should reduce the frequency and duration of competitive share losses.

Tip: Track these metrics monthly in a simple dashboard. Combine them with your existing content and SEO performance data to show how AI visibility trends connect to pipeline and brand health. This makes the business case for continued investment in predictive monitoring clear to leadership.

Frequently Asked Questions

How are predictive AI alerts different from social listening tools?

Social listening tools monitor public conversations on social media platforms and forums. Predictive AI alerts specifically track how AI-generated responses — from ChatGPT, Perplexity, Gemini, and similar platforms — reference your brand. They analyze patterns in AI outputs and the source content AI models learn from, flagging emerging shifts before they solidify into the default response users see.

How many queries should I monitor for predictive alerts?

Start with 20–50 queries that represent your core category, key buyer questions, and competitive comparisons. Expand the set based on results from your first 30 days of monitoring. Quality of query selection matters more than quantity — queries that closely match real buyer language produce the most actionable alerts.

Can predictive alerts guarantee my brand appears in AI recommendations?

No. Predictive alerts detect and flag emerging changes. They do not control AI model behavior. Acting on alerts — by producing authoritative content, correcting factual errors, and building entity authority — improves your chances of favorable AI representation, but no tool or process can guarantee specific AI outputs.

What’s the minimum team size needed to manage predictive AI alerts?

A single marketing professional with authority to triage alerts and coordinate responses across content, product marketing, and communications can manage the system for a mid-sized B2B brand. Larger organizations may assign dedicated owners per alert type. The critical requirement is clear ownership, not headcount.

How do I know if my alert thresholds are calibrated correctly?

If you’re receiving more than five actionable alerts per week, thresholds are likely too sensitive. If you receive zero alerts for two consecutive weeks with no known changes in your AI visibility, they may be too loose. Plan to recalibrate thresholds monthly during the first quarter, then quarterly once patterns stabilize.

From Detection to Action: Making Predictive Alerts Count

Predictive AI alerts for brand mentions give your team something reactive monitoring can’t: time. Time to correct errors before they spread. Time to respond to competitive surges before you lose positioning. Time to identify opportunities while they’re still forming.

But the alerts themselves aren’t the outcome. The outcome is what your team does with the intelligence. The brands building durable AI visibility in 2026 treat predictive alerts as one layer of a broader strategy that includes consistent editorial presence, strategic brand mentions across high-authority publications, and ongoing monitoring of how AI platforms represent their brand.

If you’re not sure where your brand stands in AI search today, that’s the first gap to close. See where your brand appears — and where it’s missing — across the AI platforms your buyers use.