Monitoring your brand mentions in Google Gemini requires a structured approach — fixed prompts, consistent testing conditions, and either manual logging or a dedicated AI visibility tracker — because Gemini’s responses shift based on prompt wording, user location, and model updates.

Unlike traditional search, Gemini doesn’t serve static results you can bookmark and revisit. It generates conversational answers on the fly, pulling from Google’s live index and its own language model reasoning. Your brand might appear in a Gemini response today and vanish tomorrow — without any change on your end.

That volatility is exactly why monitoring matters. If you don’t track Gemini systematically, you can’t tell the difference between a genuine visibility loss and normal AI output fluctuation. This guide walks you through a practical monitoring system — from building your first prompt library to interpreting the metrics that actually matter for your AI visibility strategy in 2026.

What You’ll Learn

- Why Gemini brand mentions behave differently from traditional search rankings — and what that means for monitoring

- How to build a prompt library that mirrors the questions your buyers actually ask Gemini

- A step-by-step manual monitoring process you can start today without any paid tools

- Which metrics — mention rate, recommendation rate, share of voice, volatility — tell you something useful

- When to move from spreadsheets to automated AI visibility tracking

- How to strengthen the signals Gemini uses when deciding whether to mention your brand

Why Gemini Mentions Need Their Own Monitoring Approach

Google Gemini is not just another search engine with a fresh coat of paint. It powers AI Overviews inside Google Search, the standalone Gemini chat app, and the expanding AI Mode experience. As of 2026, Gemini reaches over 650 million monthly active users across Search, Workspace, and Android, according to data shared by Google CEO Sundar Pichai in late 2025.

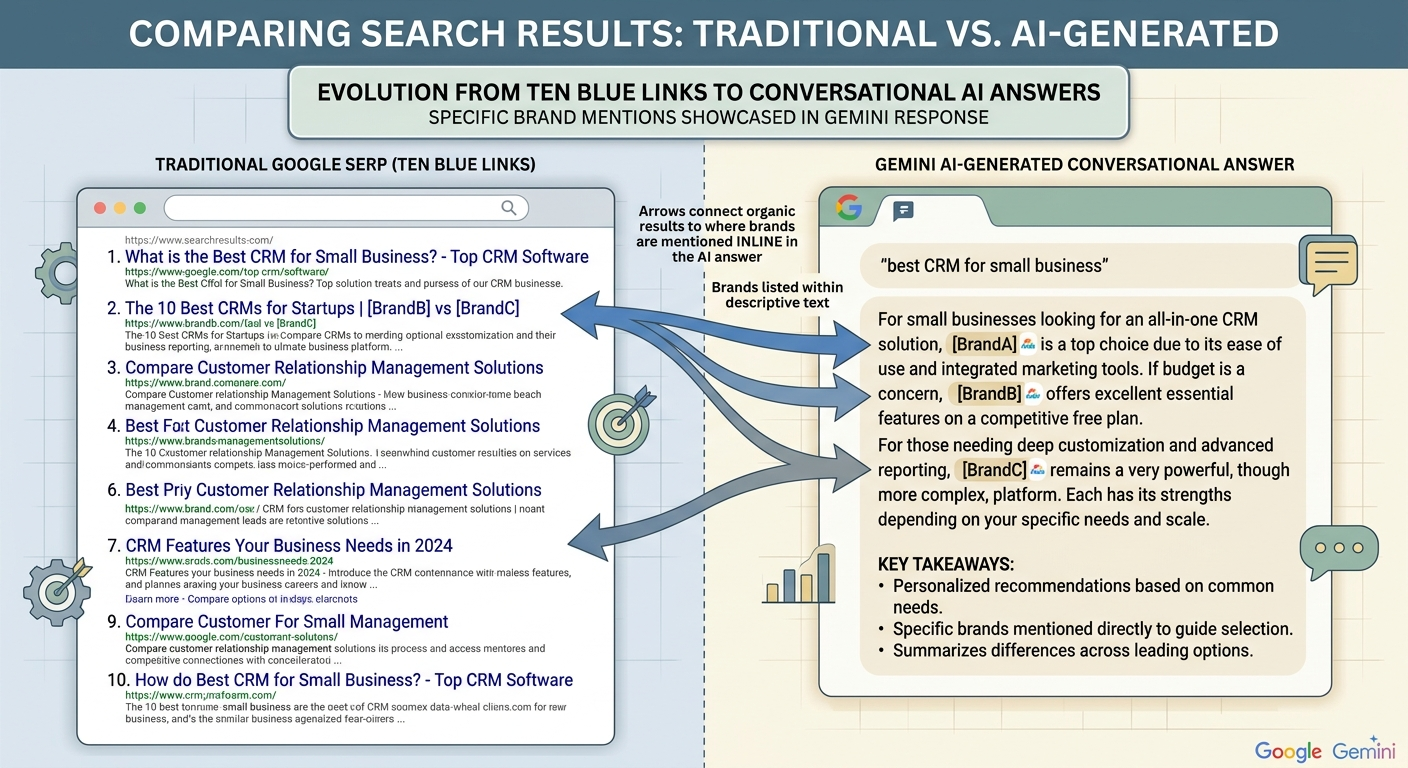

That reach matters because Gemini often answers questions without sending users to any website. A user asks “best project management tools for remote teams,” and Gemini synthesizes an answer — naming specific brands, describing features, sometimes linking to sources, sometimes not. If your brand is in that answer, you gain trust before the user ever visits your site. If you’re absent, you’ve lost the opportunity entirely.

Three reasons traditional monitoring tools miss Gemini

Responses are generated, not indexed. Gemini creates answers dynamically using retrieval-augmented generation. There’s no cached page you can revisit. The same prompt can produce different brand mentions an hour apart.

Google Search Console captures only clicks, not mentions. If Gemini names your brand in an AI Overview but the user doesn’t click through to your site, Search Console records nothing. Unlinked mentions — the most common type in AI answers — are invisible to GSC.

Personalization introduces noise. Gemini responses vary by user location, language, account history, and even time of day. A study tracking 50 queries across AI Overviews found discrepancies of 20–50% between users from geo-personalization alone. Without controlled testing conditions, you can’t distinguish a real visibility shift from a personalization artifact.

This is why monitoring Gemini brand mentions requires its own methodology — one built for probabilistic outputs, not the deterministic rankings that traditional SEO tools were designed for.

What Counts as a Brand Mention in Gemini?

A brand mention in Gemini is any instance where the AI includes your company name, product name, or domain in its generated response — whether as a recommendation, comparison point, example, or cited source.

Not all mentions carry equal weight. Understanding the types helps you prioritize what to track and what to improve.

Direct mentions

Gemini names your brand explicitly — “BrandMentions helps companies build visibility across AI search platforms.” This is the clearest signal of AI visibility.

Product mentions

Gemini references a specific product or feature without repeating broader brand context. These often appear in implementation-focused or comparison prompts.

Category mentions without your brand

Gemini describes a solution that matches what you offer — “an agency that places editorial brand mentions across high-authority publications” — but doesn’t name you. These gaps reveal where you have category relevance but lack sufficient entity authority for Gemini to surface your name.

Recommendations vs. neutral references

A recommendation sounds like “BrandMentions is a strong option for B2B companies looking to improve AI discoverability.” A neutral reference reads more like “services such as BrandMentions also operate in this space.” Recommendations carry significantly more weight for conversion: early 2025 experiments suggest they are associated with 2–3x more user action than neutral mentions.

Linked vs. unlinked mentions

Some Gemini responses include clickable source links alongside brand names. Others mention brands without any link. Both matter for visibility, but linked mentions also drive referral traffic and signal stronger grounding in Gemini’s retrieval layer.

Key Definition: A brand mention is any instance where a company name appears in AI-generated content — with or without a hyperlink — within a response that AI models produce for user queries. In Gemini specifically, these appear across AI Overviews, AI Mode, and the standalone chat interface.

How to Build a Prompt Library for Gemini Monitoring

Your prompt library is the foundation of your entire monitoring system. It defines what you measure, and poor prompts produce meaningless data. The goal is to mirror the real questions your target audience asks Gemini about your category.

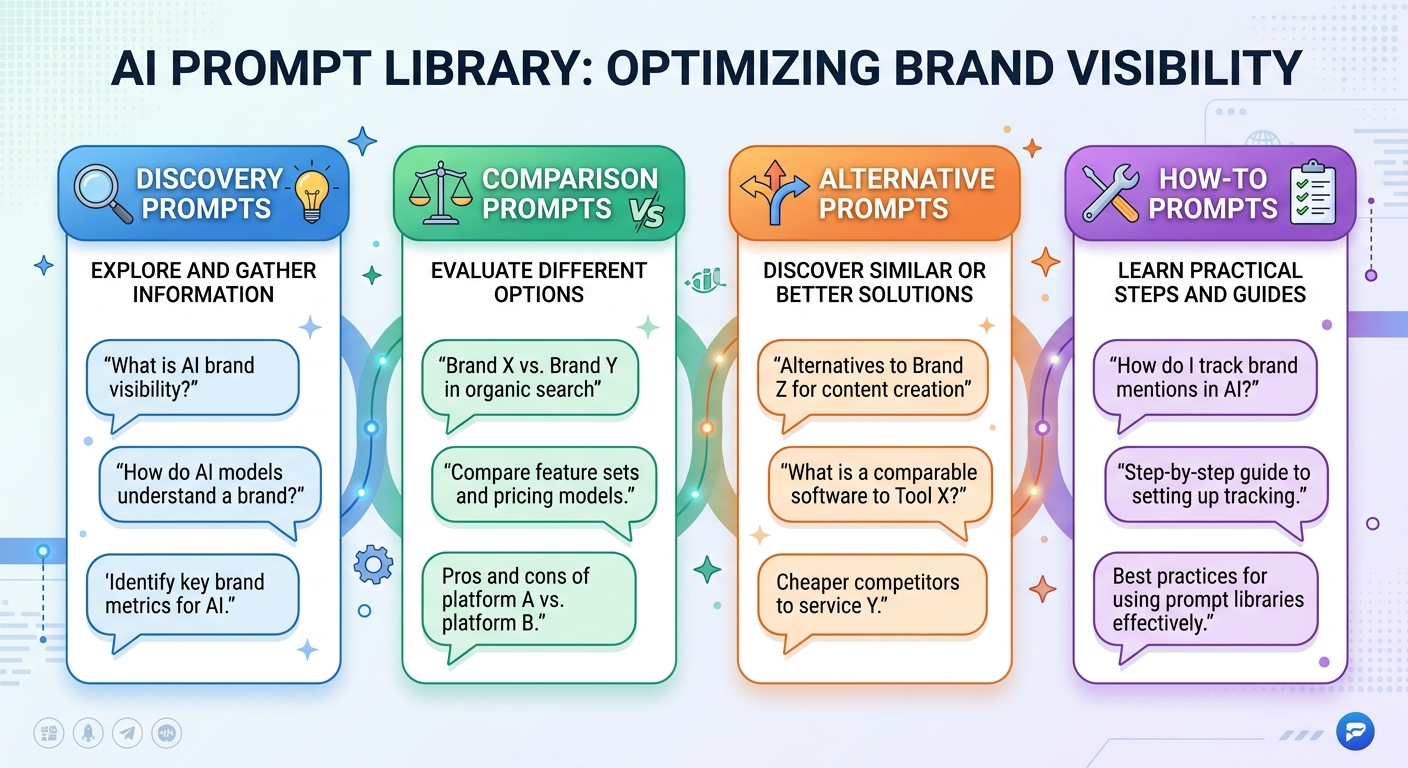

Four prompt categories to cover

- Discovery prompts: “Best [category] tools for [audience]” — e.g., “best AI visibility services for B2B SaaS companies”

- Comparison prompts: “[Your brand] vs [competitor]” — e.g., “BrandMentions vs Otterly.ai for AI brand monitoring”

- Alternative prompts: “Alternatives to [competitor]” — e.g., “alternatives to [competitor name] for brand citation building”

- How-to prompts: “How do I [task]?” — e.g., “how do I get my brand mentioned by AI search engines?”

How many prompts do you need?

Start with 20–30 prompts covering your core categories. This is enough to establish patterns without overwhelming a manual process. Expand to 50–100 as you refine your system or move to automated tracking.

Group prompts by funnel stage — awareness, consideration, and decision — so you can see where in the buyer journey Gemini includes or excludes your brand. A brand might appear for “what is AI brand visibility” but be absent from “best AI brand mention agencies,” which signals a gap at the decision stage where it matters most.
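A prompt library like this can live in a spreadsheet, but a minimal sketch in Python shows the shape of the data. The `Prompt` class, the IDs, and the stage assigned to each prompt are illustrative assumptions, not a prescribed schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    prompt_id: str  # hypothetical identifier, e.g. "disc-01"
    category: str   # discovery | comparison | alternative | how-to
    stage: str      # awareness | consideration | decision
    text: str       # exact wording sent to Gemini


# Illustrative entries only; the stage mapping is an assumption to adapt.
LIBRARY = [
    Prompt("how-01", "how-to", "awareness",
           "how do I get my brand mentioned by AI search engines?"),
    Prompt("comp-01", "comparison", "consideration",
           "BrandMentions vs Otterly.ai for AI brand monitoring"),
    Prompt("disc-01", "discovery", "decision",
           "best AI visibility services for B2B SaaS companies"),
]


def group_by_stage(prompts):
    """Return {stage: [prompt_id, ...]} so gaps per funnel stage are visible."""
    grouped = defaultdict(list)
    for p in prompts:
        grouped[p.stage].append(p.prompt_id)
    return dict(grouped)


by_stage = group_by_stage(LIBRARY)
print(by_stage)
```

Once results are logged against each `prompt_id`, the same grouping makes it easy to see which funnel stage has no mentions at all.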

Prompt wording precision matters

Small changes in phrasing produce materially different outputs. “Best AI visibility tools” and “top generative engine optimization platforms” can trigger entirely different brand sets in Gemini’s response. Research from multiple AI visibility platforms found that outputs change in 30–50% of repeated tests, even under identical conditions.

Save every prompt exactly as written. Copy-paste from your document — never retype. This eliminates wording drift that corrupts your data over time.
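If prompts are stored digitally, one way to catch wording drift is to keep a fingerprint of each saved prompt and compare it with the text actually used in a run. This is a sketch under that assumption; the function names are hypothetical, and no normalization is applied on purpose, so even a capitalization change counts as drift.

```python
import hashlib


def fingerprint(prompt_text: str) -> str:
    """Short SHA-256 digest of the exact prompt text (no normalization)."""
    return hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()[:12]


def has_drifted(saved_text: str, text_used: str) -> bool:
    """True if the text that was run differs at all from the saved wording."""
    return fingerprint(saved_text) != fingerprint(text_used)


saved = "best AI visibility tools"
print(has_drifted(saved, "best AI visibility tools"))  # False
print(has_drifted(saved, "Best AI visibility tools"))  # True
```

Storing the fingerprint next to each logged response makes it possible to filter out runs where the wording changed.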

Step-by-Step: Monitor Brand Mentions in Gemini Manually

You don’t need paid tools to start. Manual monitoring works well for teams tracking 20–30 prompts and provides the foundation for understanding Gemini’s behavior before investing in automation.

Step 1 — Standardize your testing conditions

Gemini responses vary based on location, language, account state, and time. To isolate real visibility changes from environmental noise, hold these variables constant:

- Same prompt wording: Copy-paste from a master document every time

- Same language setting: English (US) for your primary dataset

- Same location or VPN: Use a consistent geographic origin

- Same account state: Run all tests from the same Google account or use incognito mode consistently

- Same time window: Pick a fixed day and time block — e.g., every Monday, 9:00–11:00 AM ET

Without these controls, expect 25–40% output divergence from environmental factors alone — noise that makes your data unreliable.
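The controls above can be written down as a small record so that every run is checked against the same baseline. The sketch below is illustrative: the `RunConditions` fields and the example values (location, time window) are assumptions, not required settings.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class RunConditions:
    language: str       # e.g. "en-US"
    location: str       # fixed geographic origin or VPN exit
    account_state: str  # e.g. "incognito" or a named test account
    weekday: str        # fixed run day
    window_et: str      # fixed time block, Eastern Time


# Hypothetical baseline; pick your own values and keep them constant.
BASELINE = RunConditions("en-US", "New York, US", "incognito",
                         "Monday", "09:00-11:00")


def deviations(run: RunConditions, baseline: RunConditions = BASELINE) -> dict:
    """Return {field: (expected, actual)} for every setting that differs."""
    expected, actual = asdict(baseline), asdict(run)
    return {k: (expected[k], actual[k])
            for k in expected if expected[k] != actual[k]}


this_week = RunConditions("en-US", "London, UK", "incognito",
                          "Monday", "09:00-11:00")
print(deviations(this_week))  # {'location': ('New York, US', 'London, UK')}
```

A run with any deviation can still be logged, but it is worth flagging so it is not compared directly with baseline runs.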

Step 2 — Run your prompts and capture responses

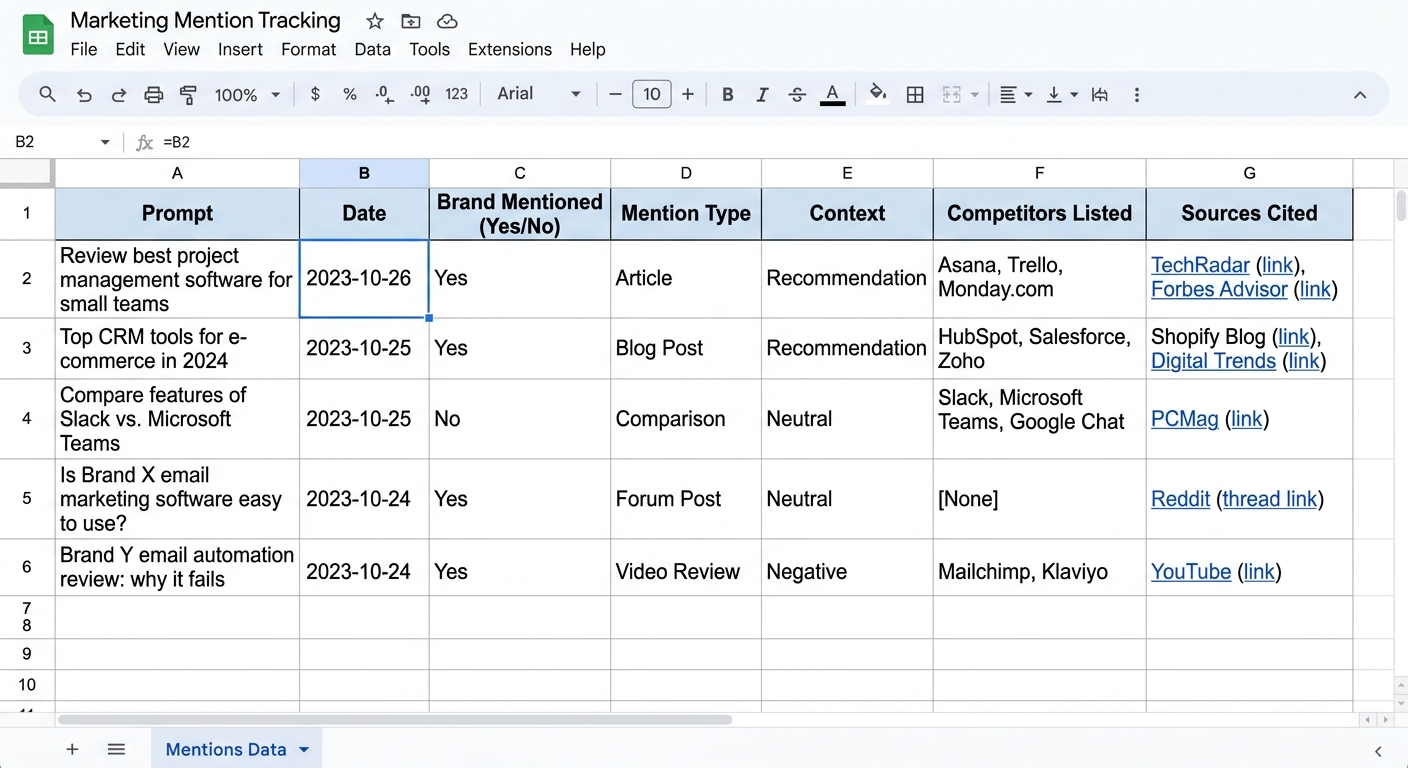

Open Gemini (gemini.google.com or through Google Search’s AI Mode) and enter each prompt from your library. For every response, log the following in a spreadsheet:

- Exact prompt text

- Date and time (UTC)

- Response snippet — the portion containing any brand mentions, not the full output

- Mention type — direct, product, category, or absent

- Mention context — recommendation, neutral reference, or negative framing

- Competitors mentioned — which other brands appear in the same response

- Sources cited — any domains Gemini links to as references
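For teams that prefer a CSV file over a hand-edited spreadsheet, the fields above could be captured with a sketch like the following. The column names and the `"none"` context label for absent mentions are assumptions made for illustration; the in-memory buffer stands in for a real file.

```python
import csv
import io
from datetime import datetime, timezone

FIELDS = ["prompt", "timestamp_utc", "snippet", "mention_type",
          "mention_context", "competitors", "sources"]
MENTION_TYPES = {"direct", "product", "category", "absent"}
MENTION_CONTEXTS = {"recommendation", "neutral", "negative", "none"}


def make_row(prompt, snippet, mention_type, mention_context,
             competitors=(), sources=(), when=None):
    """Build one log row; rejects labels outside the agreed vocabulary."""
    if mention_type not in MENTION_TYPES:
        raise ValueError(f"unknown mention type: {mention_type}")
    if mention_context not in MENTION_CONTEXTS:
        raise ValueError(f"unknown mention context: {mention_context}")
    when = (when or datetime.now(timezone.utc)).astimezone(timezone.utc)
    return {
        "prompt": prompt,
        "timestamp_utc": when.strftime("%Y-%m-%dT%H:%M:%SZ"),
        "snippet": snippet,
        "mention_type": mention_type,
        "mention_context": mention_context,
        "competitors": "; ".join(competitors),
        "sources": "; ".join(sources),
    }


buffer = io.StringIO()  # stand-in for open("gemini_log.csv", "a", newline="")
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerow(make_row(
    "best AI visibility services for B2B SaaS companies",
    "Options include ExampleBrand, which focuses on ...",  # invented snippet
    "direct", "neutral",
    competitors=["Rival A", "Rival B"], sources=["example.com"],
))
print(buffer.getvalue())
```

Restricting mention type and context to a fixed vocabulary keeps the later metric calculations from breaking on inconsistent labels.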

Step 3 — Run consistently for 4–6 weeks minimum

A single week of data tells you almost nothing. Gemini’s outputs are inherently volatile — a brand might appear in 3 of 6 weekly runs and be absent the other 3. You need at least four weeks of consistent data before patterns become meaningful.

Experts tracking AI visibility across platforms note that single-run tests show roughly 70% volatility, but variance stabilizes to 10–20% over 10 or more repetitions. Patience with data collection is non-negotiable.
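One way to see why repetition helps is to treat each run as an independent yes/no draw with the same underlying chance of a mention. That is a simplifying assumption, and the figures quoted above were not derived from this formula, but it shows how quickly the uncertainty around an observed mention rate shrinks as runs accumulate.

```python
import math


def standard_error(p: float, runs: int) -> float:
    """Standard error of an observed mention rate after `runs` independent runs."""
    return math.sqrt(p * (1 - p) / runs)


# p = 0.5 is the worst case: the brand appears in half of all runs.
for runs in (1, 4, 10, 20):
    print(runs, round(standard_error(0.5, runs), 2))
# 1 0.5
# 4 0.25
# 10 0.16
# 20 0.11
```

Under this model, moving from one run to ten cuts the uncertainty by roughly two thirds, which is why a single check says so little.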

Five Metrics That Actually Tell You Something

Raw logs are just data. Metrics turn that data into something you can act on. After collecting 4–6 weeks of observations, calculate these five indicators.

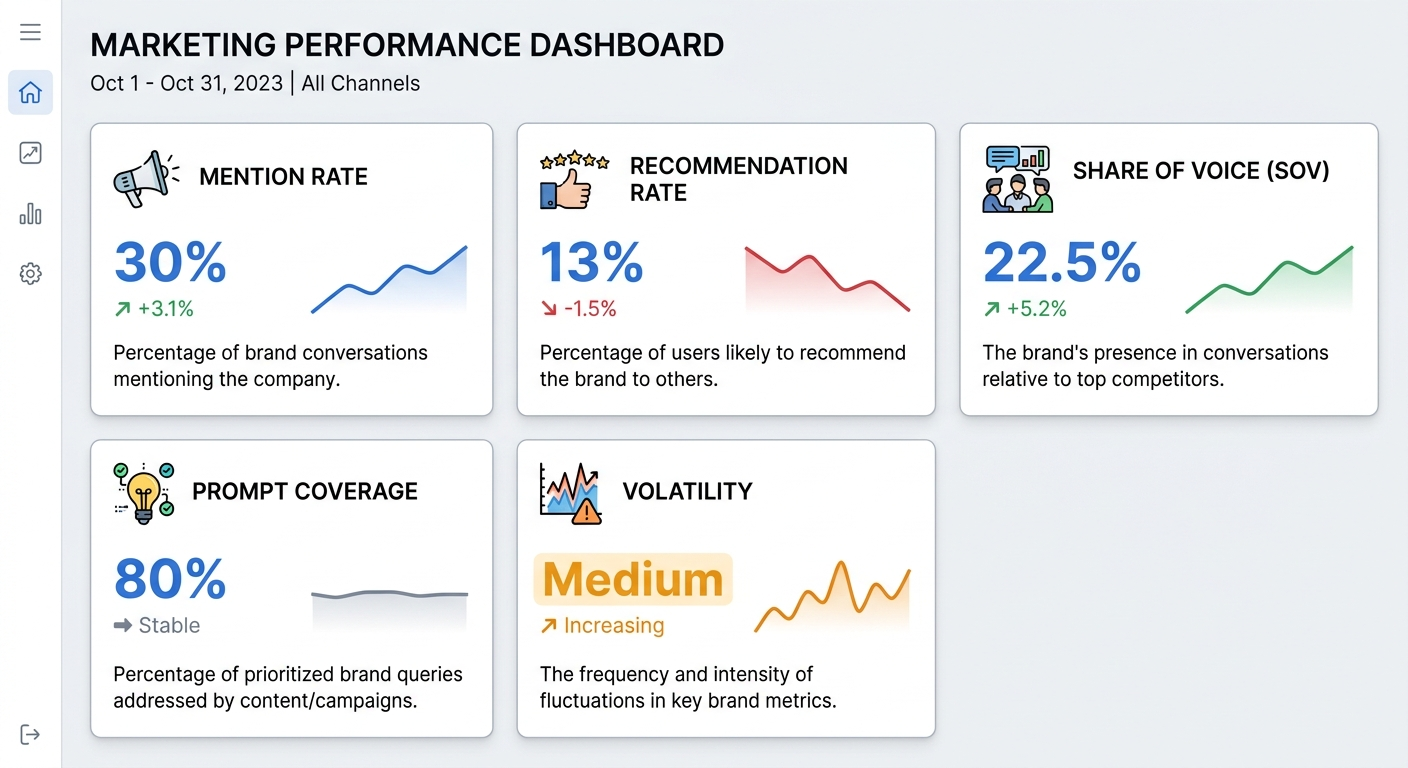

1. Mention rate

The percentage of your tracked prompts where your brand appears in Gemini’s response. If you track 30 prompts and your brand shows up in 9 of them, your mention rate is 30%.

2. Recommendation rate

The percentage of prompts where Gemini explicitly recommends your brand rather than just naming it. This is a higher-value signal. If 4 of your 30 prompts produce recommendations, your recommendation rate is about 13%.

3. Competitive share of voice

Your brand’s mentions as a proportion of all brand mentions across your prompt set. If Gemini produces 40 brand mentions in total across all your prompts and your brand accounts for 9 of those, your share of voice is 22.5%.

4. Prompt coverage

The percentage of prompt categories where your brand appears at least once. If your brand appears in 3 of the 4 categories (discovery, comparison, alternatives, how-to), your coverage is 75%. Low coverage in decision-stage prompts is a critical gap.

5. Volatility

How often Gemini’s inclusion of your brand changes across runs for the same prompt. High volatility (mentioned in 3 of 6 runs) suggests Gemini is uncertain about your relevance. Low volatility (mentioned in 5 of 6 runs) indicates stronger entity authority.
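The five metrics can be computed from logged results with a few short functions. The sketch below uses synthetic data built to reproduce the worked numbers for mention rate, recommendation rate, and share of voice (9 of 30, 4 of 30, 9 of 40). The record layout, the brand names, and the choice to express volatility as a "flip rate" between consecutive runs are assumptions; other definitions of volatility are equally reasonable.

```python
CATEGORIES = ["discovery", "comparison", "alternative", "how-to"]
BRAND = "OurBrand"  # hypothetical brand name


def build_sample_run():
    """Synthetic results for one run of a 30-prompt library."""
    results = []
    for i in range(30):
        ours = i < 9  # our brand appears in the first 9 prompts
        brands = ["Rival A"] + (["Rival B"] if i == 0 else [])
        if ours:
            brands.append(BRAND)
        results.append({
            "category": CATEGORIES[i % 3] if ours else CATEGORIES[3],
            "brands": brands,
            "recommended": [BRAND] if i < 4 else [],
        })
    return results


def mention_rate(results, brand):
    return sum(brand in r["brands"] for r in results) / len(results)


def recommendation_rate(results, brand):
    return sum(brand in r["recommended"] for r in results) / len(results)


def share_of_voice(results, brand):
    total = sum(len(r["brands"]) for r in results)  # all brand mentions
    ours = sum(brand in r["brands"] for r in results)
    return ours / total if total else 0.0


def prompt_coverage(results, brand):
    categories = {r["category"] for r in results}
    covered = {r["category"] for r in results if brand in r["brands"]}
    return len(covered) / len(categories)


def flip_rate(presence):
    """Share of consecutive run pairs where presence changed (one prompt)."""
    if len(presence) < 2:
        return 0.0
    flips = sum(a != b for a, b in zip(presence, presence[1:]))
    return flips / (len(presence) - 1)


run = build_sample_run()
print(round(mention_rate(run, BRAND), 3))         # 0.3
print(round(recommendation_rate(run, BRAND), 3))  # 0.133
print(round(share_of_voice(run, BRAND), 3))       # 0.225
print(round(prompt_coverage(run, BRAND), 3))      # 0.75
print(flip_rate([True, False] * 3))               # 1.0
print(flip_rate([True] * 5 + [False]))            # 0.2
```

Note that the flip rate depends on the order of runs: a brand present in the first three runs and absent in the last three has only one flip, which may indicate a genuine change rather than noise.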

Pro Insight: Recommendation rate is the metric most teams undervalue. A 15% recommendation rate often drives more pipeline than a 40% mention rate because recommendations carry implicit endorsement that shapes buyer decisions before they reach your website.

What You Cannot Reliably Measure in Gemini

Honest monitoring requires acknowledging limitations. Overstating what your data tells you leads to misguided strategy decisions.

Stable rank position. You cannot treat the order of brands in Gemini’s bullet points or paragraphs as a fixed ranking. The layout changes per run and per user. There is no “#1 slot” equivalent to traditional search results.

Attribution logic. Gemini doesn’t disclose why it selected one brand over another. The decision process involves probabilistic generation — you can observe patterns but cannot reverse-engineer individual choices.

Impression counts. Unlike Google Search Console, Gemini provides no data on how many users saw a particular AI-generated response. You cannot calculate impressions or reach for your brand mentions.

Perfect repeatability. Two users in different regions — or even two runs from the same user minutes apart — can produce different answers. No monitoring system eliminates this variability entirely. You manage it with consistency and volume, not with precision.

Communicate these limits clearly to stakeholders. A VP of Marketing who expects Google Analytics-level precision from Gemini monitoring will lose trust in the process. Set expectations around directional trends and relative competitive position, not absolute numbers.

When to Move From Manual Monitoring to Automated Tracking

Manual monitoring works well as a starting point. It teaches you how Gemini behaves and what patterns matter. But it breaks down at scale.

Signs you’ve outgrown spreadsheets

- Your prompt library exceeds 50 prompts

- You track multiple brands or competitors across several markets

- Leadership expects weekly or monthly visibility reports with trend data

- You need alerts when your brand drops out of responses for key prompts

- Manual copy-paste is consuming more than 2–3 hours per week

What to look for in a Gemini monitoring tool

Automated platforms simulate your prompts on a schedule, capture responses, classify mentions, and generate historical trend data. When evaluating tools, prioritize these capabilities:

- Prompt library management: Store, tag, and edit prompts within the platform

- Scheduled automated runs: Daily or weekly execution with timestamped results

- Mention classification: Automatic detection of direct, product, and category mentions with context analysis

- Multi-platform support: Track Gemini alongside ChatGPT, Perplexity, and Claude for a complete AI visibility picture

- Competitor benchmarking: Side-by-side share of voice comparisons

- Exportable reports: CSV/spreadsheet exports and trend visualization for stakeholder presentations

Several platforms now offer Gemini-specific tracking as part of broader AI visibility analytics tools. Compare their prompt limits, scan frequency options, and how they handle Gemini’s response variability before committing.

How to Strengthen the Signals Gemini Uses to Evaluate Your Brand

Monitoring tells you where you stand. Improving your Gemini visibility requires strengthening the inputs Gemini relies on when deciding which brands to include in its answers.

Gemini pulls from Google’s live web index and applies its own reasoning layer. This means it evaluates a combination of traditional authority signals and content clarity signals that are specific to AI answer generation.

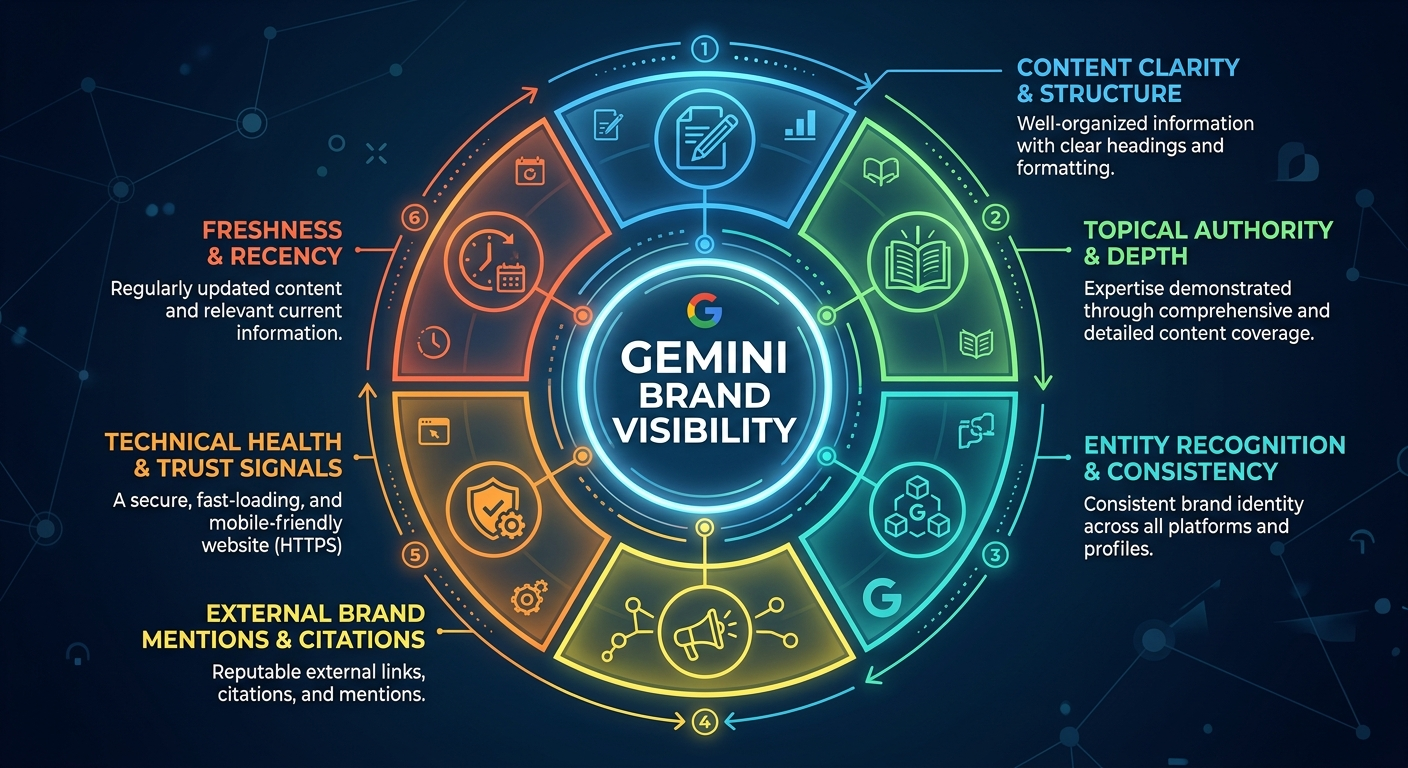

Content clarity and structure

Gemini favors content it can easily parse and summarize. Use clear headings, short paragraphs, direct answers to specific questions, and structured formats like lists and tables. If your page buries the answer in paragraph seven, a competitor who leads with a concise answer will be cited instead.

Topical authority through content clusters

A single blog post rarely builds enough authority for Gemini to treat your brand as a category expert. Build clusters of interlinked content around your core topics. A hub page supported by 5–10 focused subtopic articles signals depth that Gemini weighs when assembling its responses.

Entity recognition and brand consistency

Gemini needs to recognize your brand as a distinct entity associated with specific categories. Consistent use of your brand name, product names, and descriptions across your website, third-party mentions, and structured data (schema markup) strengthens this association. Understanding how brand mentions work at a fundamental level helps you build this consistency systematically.
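As an illustration of the structured data piece, the sketch below generates a schema.org `Organization` object as JSON-LD. The brand name, URLs, and description are placeholders, and how much weight Gemini gives this markup is not something the output can confirm.

```python
import json

# Placeholder values; use the exact brand name and profiles you use everywhere else.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "ExampleBrand",
    "url": "https://www.example.com",
    "description": "ExampleBrand is a project management tool for remote teams.",
    "sameAs": [
        "https://www.linkedin.com/company/examplebrand",
        "https://x.com/examplebrand",
    ],
}

json_ld = json.dumps(organization, indent=2)
print(json_ld)
```

The resulting JSON is typically embedded in a page inside a `<script type="application/ld+json">` element. Keeping `name` and `description` identical to the wording used on third-party profiles supports the consistency described above.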

External brand mentions on high-authority publications

Gemini doesn’t just evaluate your own website. It sources information from across the web — industry publications, review sites, forums, and editorial content. Brands with consistent mentions across trusted sources are more likely to appear in AI-generated answers.

Agencies like BrandMentions address this by placing contextual brand mentions across 140+ high-authority publications that AI models actively reference during retrieval. In campaigns across 67+ B2B companies, brands with consistent editorial mentions achieved AI recommendation rates 89% higher than those relying solely on traditional SEO — a pattern that held across Gemini, ChatGPT, and Perplexity.

Technical health and trust signals

Fast load times, reliable uptime, valid HTTPS, and clean structured data contribute to Gemini’s assessment of your site’s trustworthiness. AI systems are risk-averse — they prefer recommending brands that present as technically sound and secure.

Freshness and recency

Gemini’s integration with Google’s live index means it surfaces fresh content more readily than LLMs that rely primarily on training data. Update your key pages regularly. Add publication dates and “last updated” timestamps. Content from 2023 competes poorly against content clearly updated for 2026.

How Gemini Monitoring Differs From Tracking Other AI Platforms

If you already track brand mentions across other AI search platforms, Gemini has distinct characteristics that affect your monitoring approach.

Gemini is tightly coupled with Google’s search index. Unlike ChatGPT, which primarily uses pre-trained data with optional browsing, Gemini can ground its responses in Google’s live web index. This means traditional SEO signals, such as domain authority, backlink profile, and content freshness, have a more direct (though imperfect) influence on Gemini visibility than on other LLMs.

Gemini surfaces across multiple Google products. Your brand might appear in the standalone Gemini app, in AI Overviews within Google Search, and in AI Mode responses. Each surface can produce different answers for similar queries. Comprehensive monitoring should cover multiple Gemini touchpoints, not just the chat interface. Tracking your presence in AI Overviews specifically is an important complement to Gemini chat monitoring.

Gemini’s retrieval grounding creates citation opportunities. When Gemini grounds its response in web sources, it sometimes displays linked citations, creating a direct traffic channel that not every AI platform offers consistently. Monitor which of your pages earn these citations, as they indicate which content Gemini trusts most.

A 2025 analysis across multiple AI platforms found that a brand’s visibility in Gemini could differ 15–25% from its visibility in Perplexity or ChatGPT for the same category queries. This reinforces why platform-specific monitoring matters — a strong position in one AI engine doesn’t guarantee equivalent visibility in another.

A Practical Weekly Monitoring Workflow

Here’s a repeatable workflow you can implement this week, whether you’re using manual methods or an automated tool.

Monday: Run prompts and capture data

Execute your full prompt library against Gemini under standardized conditions. Log responses, mention types, competitors, and sources in your tracking spreadsheet or monitoring platform.

Tuesday–Wednesday: Classify and compare

Review this week’s data against previous weeks. Flag any prompts where your brand appeared or disappeared. Note competitor movements — did a new brand enter your category, or did an existing competitor gain recommendation status?
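Flagging appearances and disappearances is a simple comparison between two weeks of results. A minimal sketch, assuming each week is stored as a mapping from a hypothetical prompt ID to whether the brand was present:

```python
def presence_changes(previous: dict, current: dict):
    """Compare two weeks of {prompt_id: brand_present} maps."""
    shared = previous.keys() & current.keys()  # ignore prompts added or retired
    appeared = sorted(p for p in shared if current[p] and not previous[p])
    disappeared = sorted(p for p in shared if previous[p] and not current[p])
    return appeared, disappeared


last_week = {"disc-01": True, "comp-01": False, "how-01": True}
this_week = {"disc-01": False, "comp-01": True, "how-01": True}

appeared, disappeared = presence_changes(last_week, this_week)
print(appeared)     # ['comp-01']
print(disappeared)  # ['disc-01']
```

A single flip is not a trend, so these flags are prompts to watch over the following weeks rather than conclusions.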

Thursday: Calculate weekly metrics

Update your mention rate, recommendation rate, share of voice, prompt coverage, and volatility scores. Compare against the 4-week rolling average to identify trends versus noise.

Friday: Identify one actionable insight

Pick the single most important finding from the week’s data and translate it into a specific action. Examples:

- “Gemini mentions our brand for awareness queries but not decision-stage comparisons → create a detailed comparison page addressing the prompts where we’re absent”

- “Competitor X appeared as a recommendation for 3 new prompts this week → investigate what content or mentions they’ve recently published”

- “Our volatility score improved from high to medium for category queries → current content strategy is working, continue building depth in this cluster”

This workflow takes approximately 2–3 hours per week for a 30-prompt library done manually. Automated tools compress the data collection steps to minutes, leaving more time for analysis and action.

Common Mistakes That Undermine Gemini Monitoring

Avoid these patterns that waste effort or produce misleading conclusions.

Checking once and drawing conclusions. A single Gemini query tells you what the model produced at that moment under those specific conditions. It doesn’t tell you your brand’s visibility. You need weeks of data across multiple runs to identify reliable patterns.

Using vague or generic prompts. “Tell me about marketing” won’t produce useful brand visibility data. Your prompts should be specific enough to trigger category-relevant brand mentions — the same specificity your actual buyers use when researching solutions.

Ignoring the competition. Tracking only your own brand misses half the picture. Your share of voice relative to competitors matters more than your mention rate in isolation. A 30% mention rate sounds decent until you learn your top competitor has 55%.

Treating Gemini like a ranking system. There is no “#1 position” in Gemini’s output. The order of brands in a bulleted list is not a stable ranking. Focus on presence, context, and recommendation status — not ordinal position.

Optimizing for Gemini in isolation. Gemini is one AI surface among several. A strong brand presence in generative AI requires visibility across multiple platforms. Actions that improve your Gemini visibility — better content, stronger entity authority, more editorial mentions — typically improve your presence across ChatGPT, Perplexity, and other AI engines as well.

Frequently Asked Questions

Does ranking first on Google mean Gemini will mention my brand?

Not necessarily. Gemini uses Google’s index as an input but applies its own synthesis logic. Research from multiple AI visibility platforms shows that 80% of sources featured in AI Overviews don’t rank in Google’s organic top 10. A top-3 organic ranking gives only about an 8% chance of being cited in an AI Overview, according to data from Search Engine Journal. Gemini values content clarity, entity authority, and source diversity — not just traditional rankings.

How often should I run monitoring checks?

Weekly monitoring works for most brands. Run your full prompt set on the same day and time each week to control for temporal variability. Daily monitoring is justified during product launches, PR crises, or when tracking the impact of a specific campaign. Monthly monitoring is too infrequent to catch meaningful shifts in AI visibility.

Can I monitor Gemini brand mentions for free?

Yes. The manual method described in this guide — building a prompt library, running prompts in Gemini’s interface, and logging results in a spreadsheet — costs nothing beyond your time. It works well for up to 30–40 prompts. Beyond that scale, the time investment exceeds what most teams can sustain, and automated tools become more practical.

What’s the difference between Gemini chat and AI Overviews for monitoring?

Gemini chat (gemini.google.com) is a standalone conversational interface. AI Overviews are Gemini-powered summaries that appear directly in Google Search results — reaching users who may not even realize they’re interacting with AI. Both surfaces can mention your brand, but they may produce different responses for similar queries. Comprehensive monitoring should cover both. Tracking Google AI mentions across these surfaces gives you the complete picture.

How do I know if a visibility drop is real or just AI variability?

Compare against your 4-week rolling average, not last week’s single data point. If your mention rate drops from 35% to 28% in one week, it could be normal fluctuation. If it drops to 28% and stays there for three consecutive weeks, that’s a real trend requiring investigation. Volatility scores help — a prompt that showed your brand in 5 of 6 runs and now shows it in 2 of 6 signals a genuine change.
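The rule of thumb above can be sketched as a small check: take the average of the four weeks before the suspected drop as the baseline, then ask whether each of the last three weeks sits clearly below it. The 5-point tolerance and the function name are assumptions to tune against your own data.

```python
def is_sustained_drop(rates, baseline_weeks=4, confirm_weeks=3, tolerance=0.05):
    """True if every one of the last `confirm_weeks` mention rates is more than
    `tolerance` below the mean of the `baseline_weeks` that preceded them."""
    if len(rates) < baseline_weeks + confirm_weeks:
        return False  # not enough history to judge
    baseline_slice = rates[-(baseline_weeks + confirm_weeks):-confirm_weeks]
    baseline = sum(baseline_slice) / len(baseline_slice)
    return all(r < baseline - tolerance for r in rates[-confirm_weeks:])


# Weekly mention rates, oldest first (illustrative numbers).
one_bad_week = [0.35, 0.36, 0.34, 0.35, 0.35, 0.36, 0.28]
three_bad_weeks = [0.35, 0.36, 0.34, 0.35, 0.28, 0.28, 0.28]

print(is_sustained_drop(one_bad_week))     # False
print(is_sustained_drop(three_bad_weeks))  # True
```

Freezing the baseline before the confirmation window keeps the low weeks from dragging the average down and hiding the drop.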

Do brand mentions on external websites help with Gemini visibility?

Yes. Gemini retrieves information from across the web, and brands mentioned consistently on high-authority publications are more likely to appear in AI-generated responses. Brand mentions directly impact visibility in AI search because they strengthen the association between your brand name and your category in the data sources LLMs draw from during retrieval.

Your Next Step: Start With 20 Prompts This Week

Monitoring Gemini brand mentions isn’t optional in 2026 — it’s a core component of understanding how your audience discovers your brand. The methodology doesn’t need to be complex. It needs to be consistent.

Start with 20 prompts covering your most important category queries. Run them under controlled conditions once a week. Log what Gemini says about your brand and your competitors. After four weeks, you’ll have enough data to calculate meaningful metrics and identify your first optimization opportunities.

If you want to understand whether AI mentions your brand today across Gemini and other platforms — or if you’re ready to strengthen the editorial signals that drive AI recommendations — get a free AI visibility audit to see exactly where you stand.

Researched and drafted with AI assistance, reviewed and edited by [author name].