How to Track Brand Mentions in AI Search — A Practical System for 2026

Tracking brand mentions in AI search means systematically monitoring when and how ChatGPT, Gemini, Perplexity, Claude, and Google AI Overviews name, cite, or recommend your brand in their generated responses. Unlike traditional SEO rank tracking, AI search monitoring requires a fundamentally different approach — one built around prompts, context analysis, and cross-platform measurement rather than keyword positions on a results page.

As of 2026, AI-powered search platforms process billions of queries monthly. According to a 2025 Capgemini study, 58% of consumers have replaced traditional search engines with generative AI tools for product recommendations. If your brand isn’t showing up in those AI-generated answers, you’re invisible at a critical moment in the buyer journey.

This article walks you through a practical, repeatable system for tracking your brand’s presence across every major AI search platform — including the metrics that matter, the tools that work, and the actions that turn monitoring data into measurable visibility gains.

Key Takeaways

- AI brand mention tracking requires monitoring generated responses, not search result positions — two people asking the same question can receive different brand recommendations.

- Mentions, citations, and recommendations are three distinct outcomes you need to measure separately.

- Front-end capture (what users actually see) matters more than API outputs, which can diverge from real responses.

- A minimum viable prompt set of 25–50 high-intent questions gives you a reliable baseline across ChatGPT, Gemini, Claude, and Perplexity.

- Weekly tracking cadence catches volatility that monthly snapshots miss entirely.

- Entity disambiguation prevents false positives that inflate your dashboards and mislead stakeholders.

- Schema markup, evidence pages, and editorial brand mentions on high-authority publications are the three levers that move AI visibility fastest.

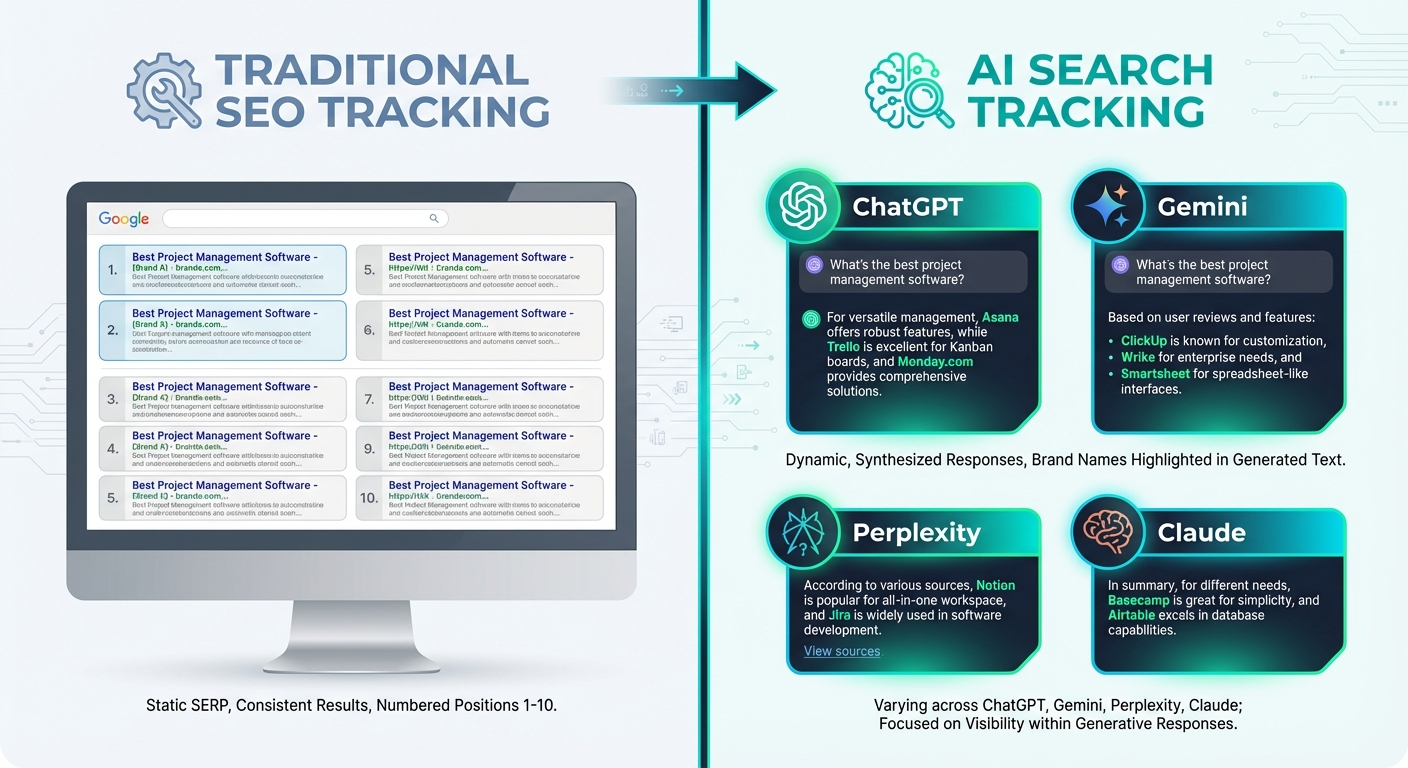

Why Traditional SEO Tracking Fails for AI Search

Traditional rank tracking tools monitor your position on a static results page — a list of ten blue links that stays relatively consistent for a given keyword. AI search operates on a completely different model.

When someone asks ChatGPT “What’s the best project management tool for remote teams?” or Perplexity “Which CRM has the best reviews?”, the AI generates a unique, synthesized answer. There are no fixed “rankings.” Different users may receive different brand recommendations for the same query, depending on phrasing, context, and model updates.

This creates three specific tracking challenges:

- No stable positions to monitor. AI answers are generated dynamically. A brand mentioned today may be absent tomorrow after a model update.

- Answers vary by platform. ChatGPT, Gemini, Claude, and Perplexity each pull from different data sources and use different retrieval methods. Your brand may appear in one and be absent from another.

- Citations behave inconsistently. According to a 2025 BrightEdge analysis, only 2 in 10 ChatGPT mentions include citation links, while Perplexity averages over 5 citations per answer but mentions brands less frequently — only 1 in 5 answers include brand references.

The implication is clear: you need a purpose-built monitoring approach that treats AI search as its own channel with its own metrics. Trying to adapt traditional SEO dashboards to AI visibility creates blind spots that grow more expensive over time.

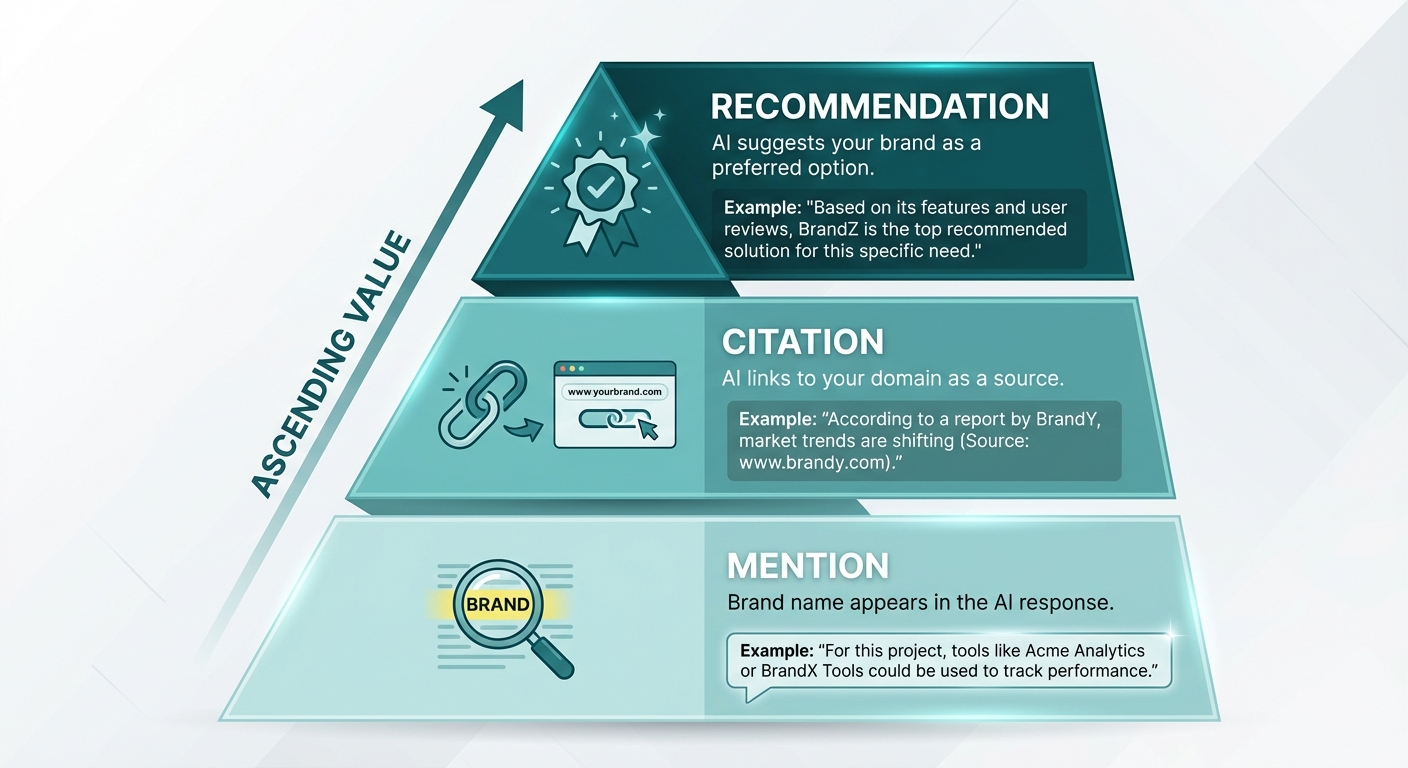

What Exactly Are You Tracking? Mentions vs. Citations vs. Recommendations

Before setting up any monitoring system, you need clarity on what you’re measuring. AI search produces three distinct outcomes that require separate tracking:

Brand mention — your brand name appears somewhere in the AI-generated answer, with or without a link. This is the broadest measure of presence. A mention in a warning (“some users report issues with BrandX”) counts the same as a positive mention if you’re only tracking raw frequency.

Citation — the AI answer links to your domain as a source or attribution. Citations drive traffic and signal that the AI engine considers your content authoritative enough to reference. Not all mentions include citations.

Recommendation — the AI answer frames your brand as a suggested option, often with language like “best for,” “top pick,” or “recommended.” This is the highest-value outcome because it directly influences purchase decisions.

Pro Insight: If you only track raw mentions, you’ll overstate your AI visibility. A mention buried in a list of ten alternatives with no endorsement is fundamentally different from being the first brand recommended. Tag every tracked result with presence (mentioned or not), attribution (cited with a link or not), and intent framing (recommended, neutral, or negative).

This three-field classification gives you reporting that stakeholders trust. For example: “We’re mentioned in 42% of prompts but cited in only 8% — we need stronger source coverage” tells a much more useful story than “our mention rate is 42%.”
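As a rough illustration, the three-field tagging can be modeled as a small record plus a rate helper. This is a minimal sketch, not a prescribed schema: the `TaggedResult` class, its field names, and the sample rows are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TaggedResult:
    prompt: str
    platform: str
    mentioned: bool   # presence: brand name appears in the answer
    cited: bool       # attribution: answer links to your domain
    framing: str      # "recommended", "neutral", "negative", or "" if absent


def rate(results, predicate):
    """Percentage (0-100) of results for which predicate is true."""
    if not results:
        return 0.0
    return 100.0 * sum(1 for r in results if predicate(r)) / len(results)


# Hypothetical sample data for illustration only.
results = [
    TaggedResult("best crm for startups", "chatgpt", True, False, "neutral"),
    TaggedResult("best crm for startups", "perplexity", True, True, "recommended"),
    TaggedResult("crm with best reviews", "chatgpt", False, False, ""),
    TaggedResult("crm with best reviews", "gemini", True, False, "negative"),
]

mention_rate = rate(results, lambda r: r.mentioned)
citation_rate = rate(results, lambda r: r.cited)
recommendation_rate = rate(results, lambda r: r.framing == "recommended")

print(f"mentioned {mention_rate:.0f}%, cited {citation_rate:.0f}%, "
      f"recommended {recommendation_rate:.0f}%")
```

Keeping the three fields separate means one set of tagged rows can answer all three questions without re-running any prompts.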

How to Build Your AI Search Monitoring System Step by Step

Step 1: Map your buyer-intent prompt library

Start from how your customers actually search in AI tools. Unlike keyword research for traditional SEO, AI search monitoring requires you to build a prompt library — a structured set of natural-language questions grouped by buyer intent.

Create prompts across three intent clusters:

- Category prompts (problem-aware users): “What are the best tools for tracking AI brand mentions?” / “Best AI visibility platforms for B2B companies”

- Comparison prompts (solution-aware users): “BrandX vs BrandY for AI search monitoring” / “Alternatives to [competitor]”

- Solution prompts (evaluating users): “How to get my brand mentioned in ChatGPT” / “How to improve AI search visibility for a SaaS company”

Pull phrasing from real sources: sales call transcripts, customer support tickets, Reddit threads, and “People Also Ask” boxes in Google. Aim for 25–50 canonical prompts as a starting baseline. For each prompt, create 2–3 synonym variations (“best” vs. “top” vs. “recommended”) because small wording changes can produce different AI responses.

Assign each prompt a business value score based on revenue potential, funnel stage, and target market. This keeps your monitoring focused on the queries that matter most — not just the easiest to track.
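One way to keep the prompt library structured is sketched below. The `Prompt` class, the 1 to 5 value scale, and the simple word-swap approach to synonym variations are assumptions made for illustration; real variations usually deserve a human review.

```python
from dataclasses import dataclass, field

SYNONYMS = ["best", "top", "recommended"]


@dataclass
class Prompt:
    text: str
    cluster: str          # "category", "comparison", or "solution"
    business_value: int   # hypothetical 1-5 score (revenue, funnel stage, market)
    variants: list = field(default_factory=list)


def make_variants(text, synonyms=SYNONYMS):
    """Swap the first synonym found in the prompt for each of the others."""
    words = text.split()
    for i, word in enumerate(words):
        if word.lower() in synonyms:
            variants = []
            for alt in synonyms:
                if alt == word.lower():
                    continue
                # Preserve the capitalization of the word being replaced.
                replacement = alt.capitalize() if word[0].isupper() else alt
                variants.append(" ".join(words[:i] + [replacement] + words[i + 1:]))
            return variants
    return []


library = [
    Prompt("Best AI visibility platforms for B2B companies", "category", 5),
    Prompt("How to get my brand mentioned in ChatGPT", "solution", 3),
]
for p in library:
    p.variants = make_variants(p.text)

# Highest-value prompts first, so they get monitored first.
library.sort(key=lambda p: p.business_value, reverse=True)
for p in library:
    print(p.business_value, p.text, p.variants)
```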

Step 2: Choose which AI platforms to monitor

As of 2026, four AI search platforms require monitoring at minimum:

- ChatGPT — the dominant conversational AI with over 800 million weekly active users, according to OpenAI’s 2025 reporting.

- Google AI Overviews — appearing on billions of Google searches, often the first thing users see. A 2025 BrightEdge study found AI Overviews appearing in over 11% of queries, with a 22% increase since launch.

- Perplexity — growing rapidly for research-oriented queries, with approximately 22 million monthly active users as of late 2025.

- Claude — gaining traction through integrations with Safari and enterprise partnerships.

Add Gemini and Microsoft Copilot if your audience uses Google Workspace or Microsoft 365 heavily. Each platform pulls from different data sources, so your brand’s visibility can vary dramatically across them.

Key Definition: AI search monitoring is the practice of systematically running a defined set of natural-language prompts across AI platforms (ChatGPT, Gemini, Perplexity, Claude, Google AI Overviews) on a recurring schedule and logging which brands are mentioned, cited, or recommended in each response.

Step 3: Capture front-end responses, not API outputs

Relying on API outputs is a mistake many teams make early. API responses from AI models can diverge from what users actually see in the front-end interface. The browsing features, retrieval-augmented generation (RAG) layers, and UI-specific formatting of consumer-facing AI tools produce different outputs than raw API calls.

For each monitoring run, capture:

- The full answer text as rendered in the user interface

- Every brand named in the response

- All visible links (normalized — strip UTM parameters, resolve redirects)

- Placement order (first mentioned, middle, end)

- Timestamp, model version, country, and language settings

Store weekly snapshots. This historical data lets you compute volatility over time, measure how quickly your content changes translate into AI visibility gains, and explain trend shifts to stakeholders.
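The link normalization and snapshot fields listed above could look roughly like this. It is a sketch using only Python's standard library: the function names and record keys are hypothetical, and redirect resolution is deliberately left out because it requires network requests.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize_url(url):
    """Drop utm_* query parameters and the fragment; lowercase the host."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith("utm_")
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc.lower(), parts.path, urlencode(query), "")
    )


def make_snapshot(prompt, platform, answer_text, brands_in_order, links,
                  model_version, country, language):
    """Bundle one captured front-end response into a storable record."""
    return {
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "platform": platform,
        "answer_text": answer_text,
        "brands_in_order": list(brands_in_order),  # keeps placement order
        "links": [normalize_url(u) for u in links],
        "model_version": model_version,
        "country": country,
        "language": language,
    }


print(normalize_url("https://Example.com/pricing?utm_source=chatgpt&plan=pro#top"))
```

Storing brands as an ordered list rather than a set preserves the placement information you need later for position-weighted scoring.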

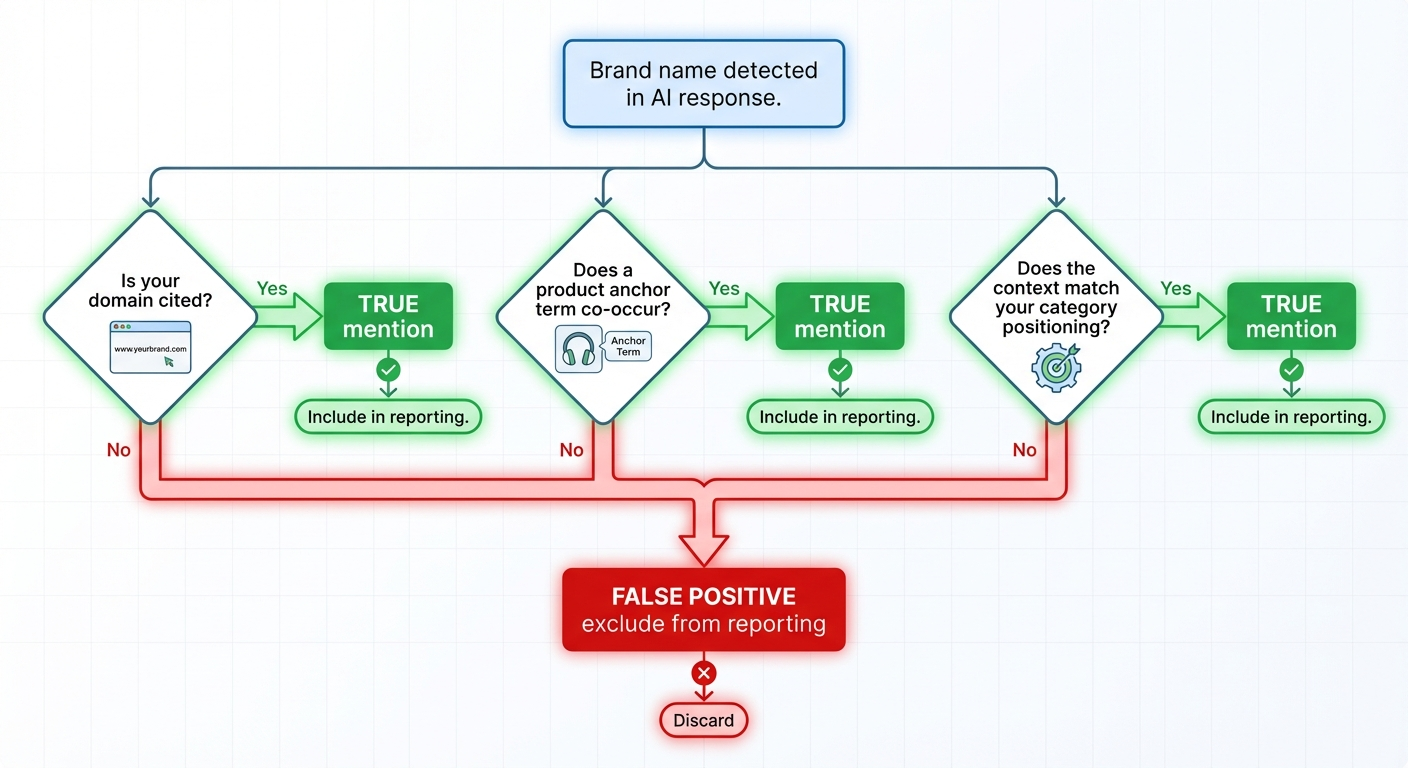

Step 4: Eliminate false positives with entity disambiguation

False positives are the silent credibility killer in AI mention tracking. Three common types inflate dashboards and mislead decision-making:

- Name collision — your brand name matches a person, feature, location, or different company.

- Category confusion — your brand name overlaps with a generic product category term (e.g., “Pulse,” “Beacon,” “Scout”).

- Prompted recall — your prompt includes your brand name (“Is BrandX good?”), so the AI mentions it by definition. That’s not organic visibility.

Build an entity definition for your brand that includes name variants, canonical domain, 2–5 anchor product names, and a competitor list. A mention only counts as “true” if at least one of these conditions is met:

- Your domain is cited in the response

- An anchor product name co-occurs with your brand name in the same paragraph

- The answer clearly references your company’s specific category positioning

Run a 5–10% human QA sample on every weekly batch to catch entity resolution errors that automated systems miss.
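The first two conditions, plus the prompted-recall exclusion, lend themselves to a simple automated check. The sketch below assumes a hypothetical brand called "Beacon" with made-up product names and domain. The third condition (category positioning) is not implemented, since it typically needs human or model judgment.

```python
import re
from urllib.parse import urlsplit

# Hypothetical entity definition for illustration only.
ENTITY = {
    "name_variants": ["Beacon", "Beacon Analytics"],
    "domain": "beacon-analytics.example",
    "anchor_products": ["InsightBoard", "SignalTrack"],
}


def contains_term(text, term):
    """Case-insensitive whole-word match."""
    pattern = r"\b" + re.escape(term) + r"\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def is_true_mention(prompt, answer_text, cited_urls, entity=ENTITY):
    names = entity["name_variants"]
    # Prompted recall: the prompt itself names the brand, so it is not organic.
    if any(contains_term(prompt, n) for n in names):
        return False
    # Condition 1: the brand's domain (or a subdomain) is cited.
    for url in cited_urls:
        host = urlsplit(url).netloc.lower()
        if host == entity["domain"] or host.endswith("." + entity["domain"]):
            return True
    # Condition 2: brand name and an anchor product share a paragraph.
    for paragraph in answer_text.split("\n\n"):
        has_name = any(contains_term(paragraph, n) for n in names)
        has_product = any(contains_term(paragraph, p) for p in entity["anchor_products"])
        if has_name and has_product:
            return True
    return False


print(is_true_mention("best hiking gear", "Carry a rescue beacon on long hikes.", []))
```

Anything this check rejects is a good candidate for the human QA sample, since the unimplemented third condition may still apply.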

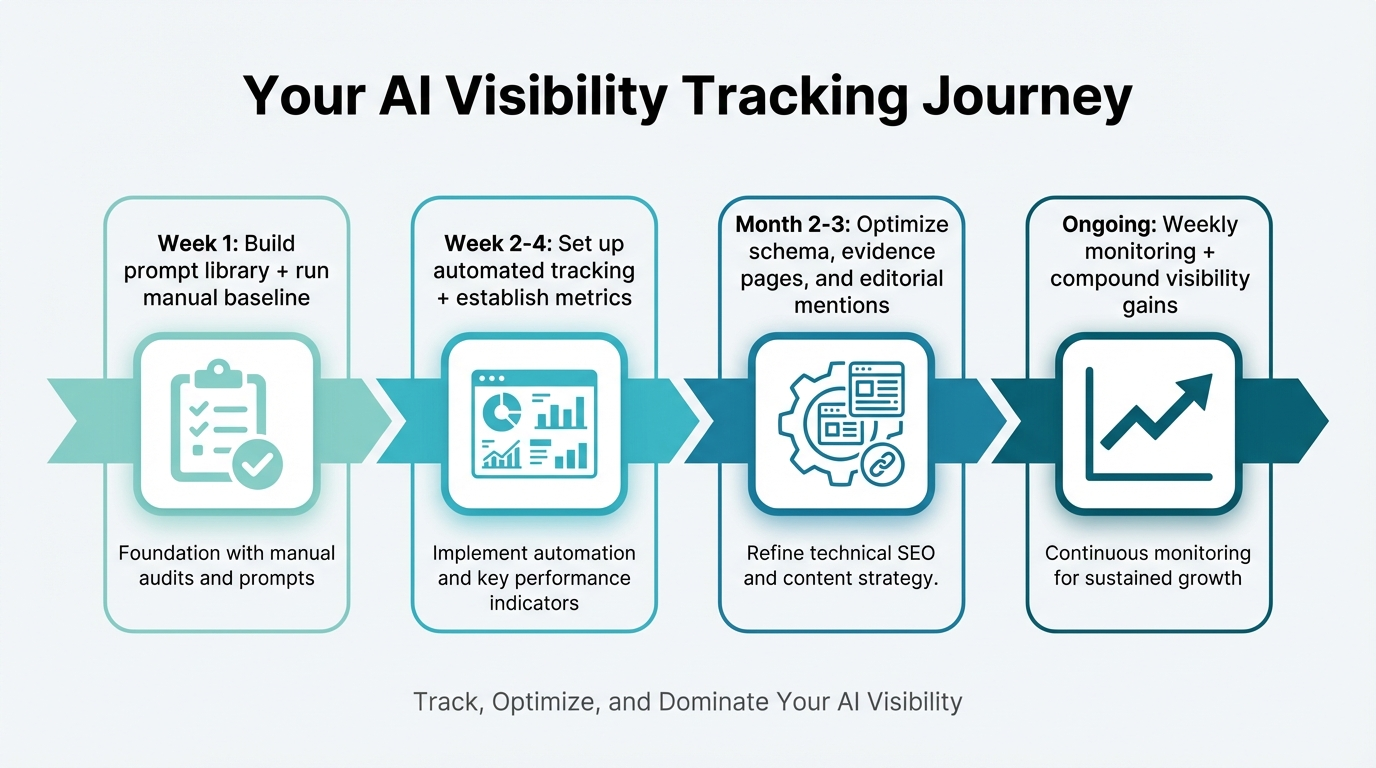

Step 5: Set your tracking cadence

AI responses change more frequently than traditional search rankings. A single monthly check gives you a snapshot — not a trend. Set your cadence based on prompt importance:

- Weekly: Core prompts (your 25–50 highest-value queries). This catches model updates, competitive displacement, and the impact of recent content changes.

- Every two weeks: Extended prompt sets (100+ queries covering broader intent clusters).

- Monthly: Long-tail and experimental prompts you’re testing for new opportunities.

This tiered approach balances monitoring depth with resource constraints. Increase cadence on any prompt cluster showing high volatility — defined as significant week-over-week changes in which brands appear.
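The tiers above can be expressed as a small scheduling rule. In this sketch the tier names, the 30-day stand-in for "monthly," and the choice to halve the interval for volatile prompts are all assumptions, not fixed recommendations.

```python
from datetime import date, timedelta

# Days between runs for each tier (hypothetical tier names).
CADENCE_DAYS = {"core": 7, "extended": 14, "experimental": 30}


def is_due(tier, last_run, today, high_volatility=False):
    """Return True when a prompt in this tier should be run again."""
    interval = CADENCE_DAYS[tier]
    if high_volatility:
        # Assumption: volatile prompt clusters are checked twice as often.
        interval = max(1, interval // 2)
    return (today - last_run) >= timedelta(days=interval)


today = date(2026, 1, 12)
print(is_due("core", date(2026, 1, 5), today))
print(is_due("extended", date(2026, 1, 5), today))
print(is_due("extended", date(2026, 1, 5), today, high_volatility=True))
```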

The Four Metrics That Actually Matter

Once your monitoring system is running, focus on four metrics that translate AI tracking data into actionable intelligence:

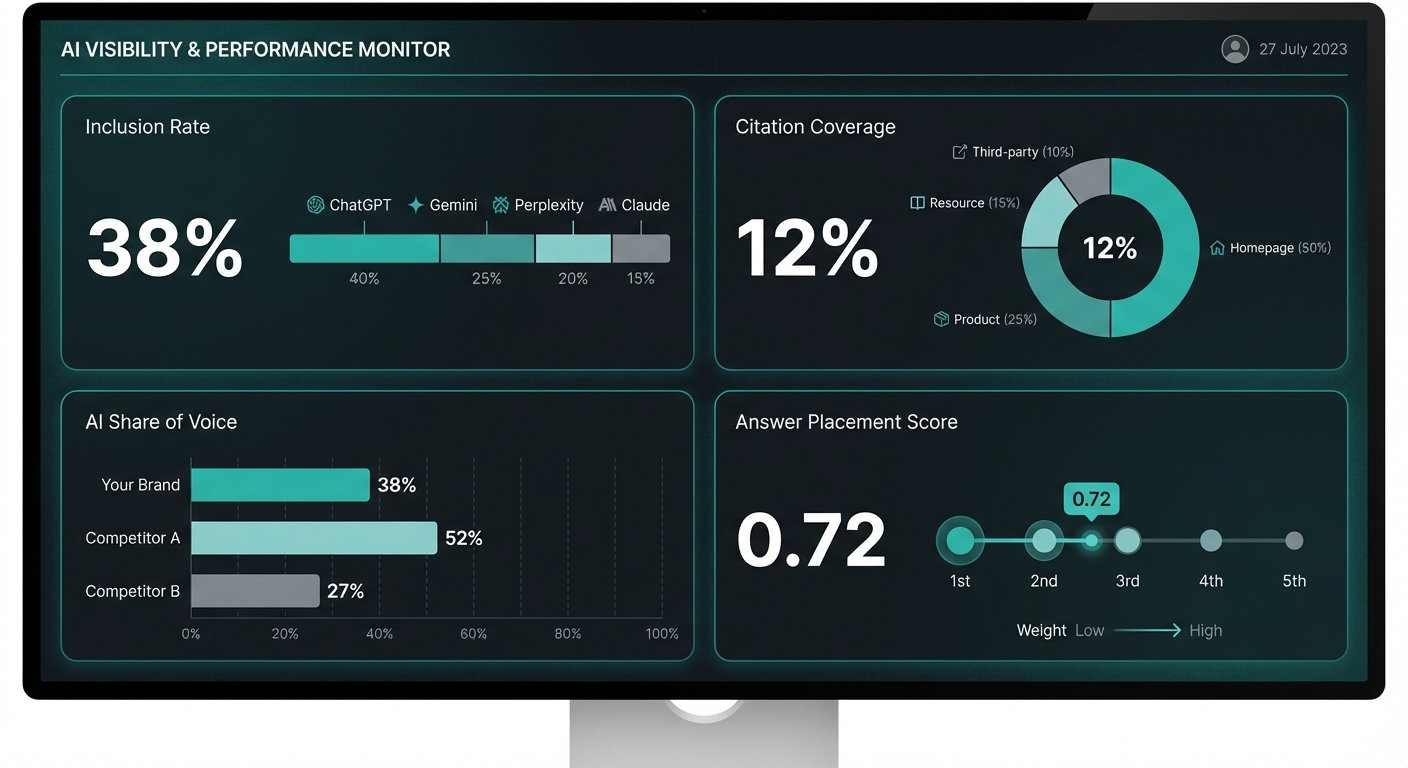

Inclusion Rate (IR)

The percentage of your tracked prompts where your brand is mentioned or cited. Segment by AI platform and intent cluster. A brand mentioned in 3 out of 10 relevant queries has a 30% Inclusion Rate — that’s your baseline to improve.
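A segmented Inclusion Rate might be computed along these lines. The row format (`platform`, `cluster`, `included`) is a hypothetical logging structure, and the sample rows are invented.

```python
from collections import defaultdict


def inclusion_rate(rows, key):
    """Percent of rows where the brand was included, grouped by row[key]."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in rows:
        totals[row[key]] += 1
        hits[row[key]] += int(row["included"])
    return {segment: 100.0 * hits[segment] / totals[segment] for segment in totals}


rows = [
    {"platform": "chatgpt", "cluster": "category", "included": True},
    {"platform": "chatgpt", "cluster": "comparison", "included": False},
    {"platform": "perplexity", "cluster": "category", "included": True},
    {"platform": "perplexity", "cluster": "comparison", "included": True},
]

by_platform = inclusion_rate(rows, "platform")
by_cluster = inclusion_rate(rows, "cluster")
print(by_platform)
print(by_cluster)
```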

Citation Coverage (CC)

The percentage of your appearances that include a clickable link to your domain. Break this down by link type: homepage, product page, resource page, or third-party reference. Low citation coverage with decent mention rates signals that AI engines recognize your brand but don’t consider your content authoritative enough to link to.
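Citation Coverage and its link-type breakdown can be sketched as follows. It assumes each appearance record carries a hypothetical `link_type` field, set to `None` when the answer showed no link to your domain.

```python
from collections import Counter


def citation_coverage(appearances):
    """Return (percent of appearances with a link, count per link type)."""
    if not appearances:
        return 0.0, {}
    linked = [a["link_type"] for a in appearances if a["link_type"] is not None]
    coverage = 100.0 * len(linked) / len(appearances)
    return coverage, dict(Counter(linked))


# Invented sample: five answers where the brand appeared.
appearances = [
    {"prompt": "best crm", "link_type": None},
    {"prompt": "crm reviews", "link_type": "product"},
    {"prompt": "crm for startups", "link_type": None},
    {"prompt": "crm pricing", "link_type": "resource"},
    {"prompt": "crm alternatives", "link_type": "product"},
]

coverage, breakdown = citation_coverage(appearances)
print(coverage, breakdown)
```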

AI Share of Voice (SOV)

Your brand’s mention rate compared to competitors for the same prompt set. If a competitor appears in 60% of relevant responses and you appear in 15%, that gap represents lost discovery opportunities. Track SOV by platform and intent cluster — you may dominate comparison queries but disappear on category-level prompts.
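Computed over the same prompt set, Share of Voice reduces to a per-brand mention rate. This is a minimal sketch in which each response is represented as a set of brand names; the brand names and data are placeholders.

```python
def share_of_voice(responses, brands):
    """Percent of responses in which each brand appears."""
    total = len(responses)
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {
        brand: 100.0 * sum(1 for named in responses if brand in named) / total
        for brand in brands
    }


# Invented sample: brands named in five responses to the same prompt set.
responses = [
    {"BrandX", "BrandY"},
    {"BrandY"},
    {"BrandY", "BrandZ"},
    {"BrandZ"},
    set(),
]

sov = share_of_voice(responses, ["BrandX", "BrandY"])
gap = sov["BrandY"] - sov["BrandX"]
print(sov, gap)
```

Running the same function on rows filtered by platform or intent cluster gives the segmented view described above.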

Answer Placement Score (APS)

A weighted score based on where your brand appears within each AI response. First-named brands carry more weight than those buried at the end of a list. Assign weights (e.g., first mentioned = 1.0, middle = 0.6, end = 0.3) and compute a normalized score across your prompt set.
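Using the example weights above, one possible implementation is shown here. It assumes "first" means the first brand named, "end" means the last brand named, and an absent brand scores zero; normalization is a simple average across prompts. Other definitions are equally defensible.

```python
WEIGHTS = {"first": 1.0, "middle": 0.6, "end": 0.3}


def placement(brand, brands_in_order):
    """Classify where the brand sits in an answer's ordered brand list."""
    if brand not in brands_in_order:
        return None
    index = brands_in_order.index(brand)
    if index == 0:
        return "first"
    if index == len(brands_in_order) - 1:
        return "end"
    return "middle"


def answer_placement_score(brand, answers):
    """Average placement weight across all answers (absent counts as 0)."""
    if not answers:
        return 0.0
    total = sum(WEIGHTS.get(placement(brand, a), 0.0) for a in answers)
    return total / len(answers)


# Invented sample: ordered brand lists from four answers.
answers = [
    ["BrandX", "BrandY", "BrandZ"],   # first
    ["BrandY", "BrandX", "BrandZ"],   # middle
    ["BrandY", "BrandZ", "BrandX"],   # end
    ["BrandY", "BrandZ"],             # absent
]

aps = answer_placement_score("BrandX", answers)
print(round(aps, 3))
```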

Report these four metrics weekly. Add a Volatility Index (week-over-week change in which brands appear per prompt) to flag unstable prompts that need more frequent monitoring. Add Time to Inclusion (TTI) — the number of days between a content update and the first time your brand appears for a related prompt — to measure how quickly your optimization efforts translate into visibility.
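The article does not fix a formula for the Volatility Index, so the sketch below makes an assumption: it uses the Jaccard distance between the sets of brands named in two consecutive weeks (0 means identical, 1 means completely different). Time to Inclusion is a plain date difference. Function names are hypothetical.

```python
from datetime import date


def volatility(previous_brands, current_brands):
    """Jaccard distance between two weeks' brand sets for one prompt."""
    previous, current = set(previous_brands), set(current_brands)
    union = previous | current
    if not union:
        return 0.0
    return 1.0 - len(previous & current) / len(union)


def time_to_inclusion(content_updated, first_seen):
    """Days from a content update to first appearance, or None if not yet seen."""
    if first_seen is None:
        return None
    return (first_seen - content_updated).days


week_1 = ["BrandX", "BrandY", "BrandZ"]
week_2 = ["BrandX", "BrandY", "BrandW"]
print(volatility(week_1, week_2))
print(time_to_inclusion(date(2026, 1, 5), date(2026, 2, 2)))
```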

Tools That Track Brand Mentions in AI Search

The AI visibility monitoring category has matured significantly since 2024. Here’s how the current landscape breaks down by use case — not by vendor marketing claims.

Dedicated AI visibility platforms

Tools like Peec AI, Profound, and OtterlyAI run structured prompt sets across multiple AI engines automatically. They provide dashboards showing mention rates, citation tracking, competitive benchmarking, and historical trends. These are purpose-built for the problem and provide the deepest AI-specific insights.

Dedicated platforms typically cost between $29 and $500+ per month depending on prompt volume, platform coverage, and reporting depth. They’re the strongest choice for teams committed to treating AI visibility as an ongoing program.

SEO platforms with AI tracking layers

Semrush and Ahrefs have both expanded their toolkits to include AI visibility metrics. Semrush’s AI Visibility Toolkit tracks brand presence across ChatGPT and Google AI Overviews starting at $99/month. Ahrefs monitors how brands get cited in AI-generated results with context, sentiment, and source attribution.

These are strong choices if your team already uses these platforms for traditional SEO and wants to consolidate AI monitoring into the same workflow. The tradeoff: they typically offer less depth on AI-specific metrics than dedicated platforms.

Manual monitoring (free, start today)

You don’t need any tools to begin. Run your 25 highest-value prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews manually. Log results in a spreadsheet: platform, brand mentioned (yes/no), sentiment (positive/neutral/negative), competitors mentioned, sources cited.

Manual monitoring is time-consuming and doesn’t scale, but it costs nothing and gives you real data within an hour. Use it as a baseline before investing in tooling.
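If you would rather keep the manual log as a CSV file than a spreadsheet, a few lines of Python are enough. The column names below mirror the fields listed above but are otherwise an assumption, and the sample row is invented. The example writes to an in-memory buffer; for a real file you would pass `open("ai_mentions.csv", "w", newline="")` instead.

```python
import csv
import io

FIELDS = ["date", "platform", "prompt", "brand_mentioned",
          "sentiment", "competitors", "sources"]


def write_log(rows, stream):
    """Write monitoring rows as CSV with a header line."""
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        writer.writerow(row)


rows = [
    {
        "date": "2026-01-12",
        "platform": "perplexity",
        "prompt": "Which CRM has the best reviews?",
        "brand_mentioned": "yes",
        "sentiment": "neutral",
        "competitors": "BrandY; BrandZ",
        "sources": "https://example.com/crm-reviews",
    },
]

buffer = io.StringIO()
write_log(rows, buffer)
print(buffer.getvalue())
```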

Tip: When monitoring manually, use incognito mode or a fresh account. AI responses can be personalized based on conversation history, which skews results if you’re logged into an account with extensive prior usage.

For a deeper comparison of how specific tools handle ChatGPT visibility tracking, see our analysis of the best tools to track brand mentions on ChatGPT.

Platform comparison at a glance

| Approach | Best for | Starting cost | Key strength | Key limitation |
|---|---|---|---|---|
| Dedicated AI visibility platforms | Teams treating AI visibility as a core program | $29–$500+/month | Deepest AI-specific metrics and prompt-based tracking | Separate tool to manage alongside existing stack |
| SEO platforms with AI features | Teams consolidating SEO + AI monitoring | $99+/month | Unified workflow with traditional SEO data | Less depth on AI-specific metrics |
| Manual monitoring | Getting started with zero budget | Free | Immediate baseline data, no tool dependency | Time-consuming, small sample size, doesn’t scale |

How to Turn Tracking Data Into Improved Visibility

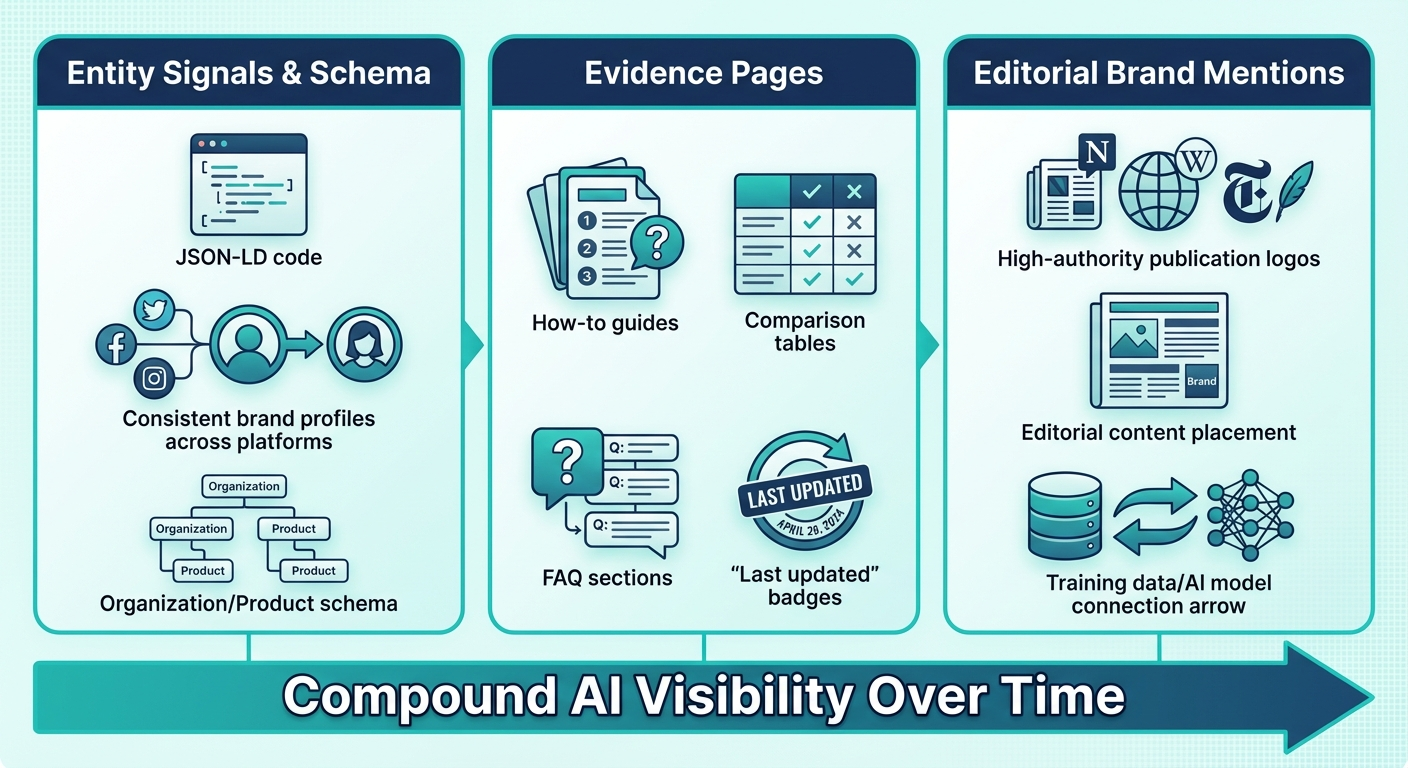

Monitoring without action is expensive observation. Every insight from your tracking system should connect to a concrete next step. Here are the three levers that move AI visibility fastest, based on what the data consistently shows.

Strengthen your entity signals and schema coverage

AI models need to clearly identify who you are before they can recommend you. Inconsistent brand information across your website, directory listings, and third-party profiles confuses AI engines and leads to missed mentions or inaccurate descriptions.

Implement structured data (JSON-LD) across your key pages:

- Organization schema — name, description, URL, logo, sameAs links to Wikipedia, LinkedIn, Crunchbase

- Product schema — product name, description, brand, offers, aggregate ratings

- FAQPage and HowTo schema — structured answers AI engines can extract directly

Keep your brand name, description, and positioning consistent across every platform AI models are likely to crawl. Research from Princeton University, Georgia Tech, and the Allen Institute for AI, published in 2024, suggested that adding citations, quotations, and statistics to content boosted visibility in generative engine responses by up to 40%.
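For the Organization schema, a JSON-LD block can be generated from a plain dictionary. The sketch below uses real schema.org property names (`name`, `description`, `url`, `logo`, `sameAs`), but the brand, URLs, and description are placeholders you would replace with your own.

```python
import json

# Placeholder values for illustration; substitute your real brand details.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "BrandX",
    "description": "AI search monitoring platform for B2B marketing teams.",
    "url": "https://www.brandx.example",
    "logo": "https://www.brandx.example/logo.png",
    "sameAs": [
        "https://www.linkedin.com/company/brandx-example",
        "https://www.crunchbase.com/organization/brandx-example",
    ],
}

json_ld = json.dumps(organization, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Generating the markup from one source dictionary makes it easier to keep the name and description identical everywhere they are published.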

Build evidence pages that AI engines want to cite

AI platforms prefer content that is structured for extraction: clear headings, data-backed claims, step-by-step processes, comparison tables, and FAQ sections. These are your evidence pages — the assets most likely to earn citations.

Prioritize creating:

- Definitive how-to guides that answer high-intent prompts in your category

- Comparison pages with transparent evaluation criteria and structured tables

- Original research with specific data points, methodologies, and findings

- FAQ content that matches the exact questions your prompt library targets

Update these pages regularly with visible “last updated” dates. AI engines weigh freshness when selecting sources to cite. A page updated in 2026 with current data will outperform a 2024 page with identical structure.

For a deeper look at how content strategy connects to LLM visibility, explore how structured editorial placements influence which brands AI models learn to reference.

Earn editorial brand mentions on high-authority publications

This is where the strongest AI visibility gains compound over time. AI models learn brand-category associations from their training data. The more frequently your brand appears in editorial content on publications that AI models trust, the more likely those models are to reference you in generated answers.

According to analysis from Ahrefs examining 75,000 brands, those with the most web mentions appear up to 10× more often in AI-generated search results than competitors with similar products but fewer mentions.

This isn’t about random press coverage. It’s about strategic, contextual placements on the specific types of publications AI models reference most: industry publications, respected SaaS review sites, educational resources, and high-authority editorial outlets.

Agencies like BrandMentions approach this systematically — placing contextual brand mentions across 140+ high-authority publications that AI models actively learn from during training and retrieval cycles. The result is that brands build the editorial footprint AI engines need to confidently recommend them.

Common Mistakes That Undermine AI Search Tracking

Avoid these five pitfalls as you build and maintain your monitoring practice:

Monitoring only one platform. ChatGPT, Perplexity, and Google AI Overviews each pull from different source ecosystems. A 2025 Conductor analysis found that brand mention rates can vary by over 40% across different AI platforms for the same query. Track all platforms your audience uses.

Counting mentions without context. A mention in a negative framing (“users frequently complain about BrandX”) inflates your numbers while damaging your actual visibility. Tag every mention with sentiment and intent framing.

Tracking too infrequently. Monthly checks capture snapshots, not trends. AI responses shift with model updates, new training data, and competitor content changes. Weekly cadence is the minimum for competitive industries.

Relying on API outputs instead of front-end capture. What the API returns and what users see in ChatGPT’s interface can differ. Always validate against the consumer experience.

Treating monitoring as the end goal. Data without action is cost, not investment. Every tracking report should produce at least one concrete next step: a content update, a schema fix, or a new editorial placement target.

What’s Changed Since 2024–2025 — and What to Watch in 2026

AI search tracking has evolved rapidly. Here’s what has shifted and where the field is heading:

What changed: Dedicated AI visibility platforms emerged as a distinct category in 2024–2025, replacing makeshift manual tracking. Semrush and Ahrefs added AI visibility features to their established platforms. Google AI Overviews expanded from limited testing to appearing on billions of searches. Multi-modal AI responses (images, video, voice) began appearing alongside text-based answers.

What to watch in 2026:

- Real-time retrieval is becoming standard. AI models increasingly pull from live web data rather than static training sets. This means content freshness and publication timing matter more than ever.

- Platform fragmentation is accelerating. New AI search surfaces (Apple’s AI features, enterprise-specific tools) create more places your brand needs to appear.

- Attribution remains imperfect. Connecting AI mentions to pipeline and revenue is still the biggest gap in the field. Teams that build clean tracking systems now will have a significant data advantage as attribution tools mature.

According to a 2025 Gartner forecast, traditional organic search traffic is expected to decline by 50% by 2028. The brands building systematic AI visibility tracking and optimization programs now are establishing advantages that compound each quarter.

In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions on high-authority publications achieved AI recommendation rates 89% higher than those relying solely on traditional SEO. The gap between brands that invest in AI visibility early and those that wait is widening — not narrowing.

Frequently Asked Questions

How often do AI-generated answers change for the same prompt?

AI responses can change daily, especially after model updates or when the AI engine re-indexes new web content. Weekly monitoring catches most significant shifts. High-volatility prompts — those where different brands appear frequently — may warrant more frequent checks.

Can I track AI brand mentions without paid tools?

Yes. Run your top 25 prompts manually across ChatGPT, Perplexity, Gemini, and Google AI Overviews using incognito mode. Log results in a spreadsheet with brand presence, sentiment, citations, and competitors mentioned. This gives you a baseline within an hour, though it doesn’t scale for ongoing monitoring.

What’s the difference between AI visibility and traditional SEO visibility?

Traditional SEO measures your position on a search results page. AI visibility measures whether AI platforms mention, cite, or recommend your brand in generated answers — often without sending any clicks to your website. According to a 2025 BrightEdge study, 76% of AI Overview citations come from the top 10 SERP pages, meaning traditional SEO and AI visibility are connected but not identical.

Which AI platform should I prioritize if I can only track one?

Google AI Overviews, because they reach the largest audience through standard Google searches. However, tracking only one platform misses significant visibility gaps. ChatGPT and Perplexity often produce different brand recommendations than Google AI Overviews for the same query. Start with Google AI Overviews and add ChatGPT as your second platform.

How long does it take for content improvements to show up in AI answers?

It varies by platform. Google AI Overviews can reflect changes within days to weeks because they pull from live web results. ChatGPT may take longer depending on when training data is refreshed or when RAG retrieval indexes new content. In BrandMentions campaigns, brands with strategic editorial placements typically saw measurable inclusion improvements within 60–90 days across multiple AI platforms.

Does getting more traditional backlinks improve AI visibility?

Indirectly, yes. Backlinks strengthen domain authority, which correlates with being cited in AI answers. But AI visibility specifically benefits from brand mentions — instances where your brand name appears in editorial context on high-authority publications, even without a hyperlink. Both backlinks and brand mentions contribute, but editorial mentions on publications AI models trust have a more direct relationship with AI recommendation behavior.

Your Next Step: Start With What You Can Measure Today

You don’t need enterprise tooling to begin tracking your brand’s AI visibility. Start with the fundamentals: build a 25-prompt library based on how your customers actually search, run those prompts across ChatGPT and Google AI Overviews, and log what you find. That baseline — imperfect as it is — tells you more about your AI discoverability than any amount of traditional rank tracking.

From there, layer in a structured cadence, add platforms, and invest in the three levers that move the needle: clean entity signals, evidence pages worth citing, and editorial brand mentions on publications AI models learn from.

The brands building this system now are the ones AI assistants will recommend next quarter. The ones that wait will spend more to catch up later.
