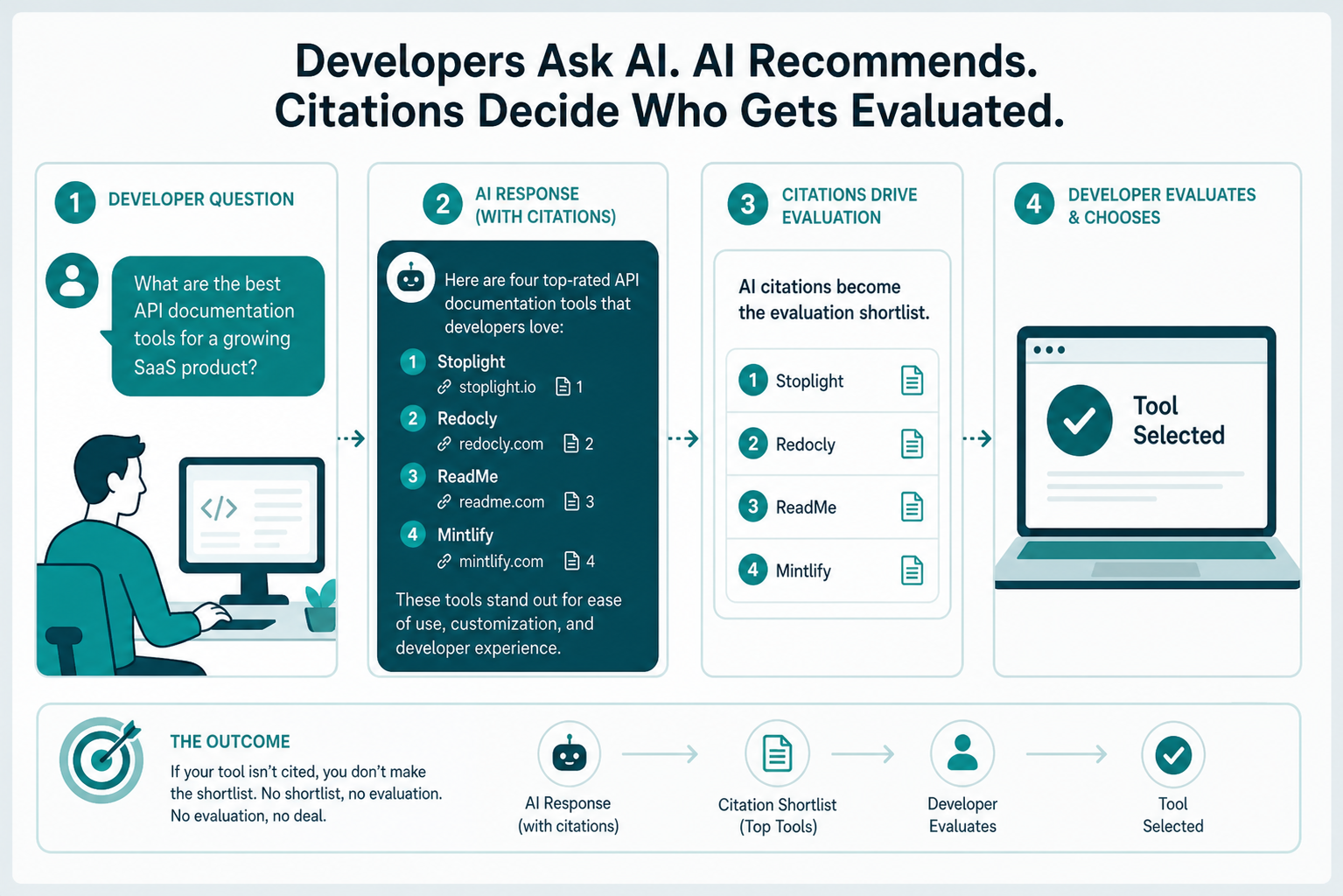

Developers stopped Googling for tools two years ago. They ask Claude which auth library to use, ask Perplexity for the best vector database, ask ChatGPT to compare observability platforms. If your devtool isn’t in those answers, you’re not in the consideration set, and you’ll never know it happened.

AI visibility for devtools is the practice of earning citations inside AI assistants when developers ask for tooling recommendations, comparisons, or implementation help. It runs on different signals than B2B SaaS visibility: GitHub presence, technical documentation depth, package registry authority, and Stack Overflow answers carry more weight than press mentions or thought leadership posts. Most generic AI visibility playbooks miss this entirely.

This is the playbook we’d hand a devtool founder or DevRel lead who wants to be cited by name when developers ask AI for recommendations in their category.

What “AI Visibility” Actually Means for Developer Tools

For B2B SaaS, AI visibility usually means showing up when a marketer asks ChatGPT for “best CRM for startups.” For devtools, the queries are sharper, more technical, and tied to specific stacks:

- “What’s the best library for streaming LLM responses in Node?”

- “Compare Resend vs. Postmark for transactional email”

- “How do I add OAuth to a Next.js app, what should I use?”

- “Which feature flag tool works best with Go microservices?”

These aren’t marketing questions. They’re implementation questions, and the AI is being asked to make a technical recommendation a developer will act on within minutes. The citation pool AI pulls from is also different. Generic AI visibility tools track mentions in Forbes, TechCrunch, and industry blogs. None of that moves the needle for devtools.

What does move it: GitHub README files, official docs, Stack Overflow accepted answers, dev.to posts, Hacker News threads, package registry pages (npm, PyPI, crates.io), and engineering blogs from teams the developer audience already trusts. That’s the source list. If your tool isn’t represented there, you don’t exist in AI answers.

Why Devtool AI Visibility Runs on Different Signals

Three things separate devtool AI visibility from generic B2B AI visibility, and getting these right is most of the work.

Documentation Is Your Highest-Leverage Asset

For a marketing tool, the website’s blog and pricing page do the heavy lifting. For a devtool, the docs are the product surface AI models ingest most aggressively. Well-structured technical documentation, with code samples, API references, and clear use-case framing, gets pulled into AI responses constantly. Marketing copy doesn’t.

The docs that get cited share a few traits: they answer specific implementation questions in their headings, they include working code samples with multiple languages, and they explain *why* certain patterns are used, not just *how*. AI models prefer documentation that reads like a senior engineer explaining a concept to a peer.

GitHub and Package Registries Carry Authority Weight

A devtool with 800 GitHub stars, an active issue tracker, and weekly npm downloads in the tens of thousands is a different entity to an AI model than one with a polished landing page and no traction signals. AI assistants weigh adoption signals heavily when ranking technical recommendations, partly because widely adopted tools are discussed far more often in their training data, partly because RAG systems pulling current data lean on these signals too.

If your GitHub presence is a thin mirror repo with no community, the AI sees a tool nobody uses. That filters into answers.

Developer Communities Are Citation Engines

Stack Overflow, Reddit (specifically subs like r/programming, r/webdev, r/golang, and language-specific communities), Hacker News, and dev.to function as citation amplifiers. When a developer answers a question with “we use [Tool X] for this, here’s why,” and that answer gets upvoted, AI models register that signal. Repeat that across hundreds of organic mentions, and your tool becomes the default recommendation.

The reverse is also true. If your tool has visible community drama, unresolved bugs in active threads, or a pattern of negative comparisons, AI assistants surface that too, sometimes in the same response that recommends you.

The Five Signal Categories That Earn Devtool Citations

Across the devtool campaigns we’ve worked on, five signal categories consistently move citation rates. Skip any of them and the strategy underperforms.

| Signal Category | Why It Matters | Where to Build It |
|---|---|---|
| Technical Documentation | Highest-density source for implementation queries | Your docs site, with structured headings and runnable code |
| Open Source Footprint | Adoption signals AI weighs in ranking | GitHub repos, issues, discussions, package registries |
| Community Discourse | Organic mentions that compound over time | Stack Overflow, Reddit, Hacker News, dev.to |
| Editorial Authority | Trusted technical publications AI indexes deeply | InfoQ, The New Stack, IEEE Spectrum, language-specific blogs |
| Structured Metadata | Helps AI parse what your tool is and does | Schema markup, llms.txt, OpenAPI specs, README structure |

Each one needs a deliberate program. Treating them as marketing tactics (write a blog post, run a campaign) misses the point. These are infrastructure investments. They compound.
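To make the structured metadata row concrete, here is a minimal sketch of schema markup generated as JSON-LD. It assumes the schema.org `SoftwareApplication` type fits your tool; the helper name `software_jsonld`, the product name, and the example.com URL are placeholders we made up for illustration, not part of any standard tooling.

```python
import json


def software_jsonld(name: str, description: str, url: str,
                    category: str = "DeveloperApplication") -> dict:
    """Build a schema.org SoftwareApplication object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "description": description,
        "url": url,
        "applicationCategory": category,
    }


# Hypothetical product details, used only to show the output shape.
markup = software_jsonld(
    name="AcmeFlags",
    description="Feature flag service with SDKs for Go and Node.",
    url="https://example.com",
)

# Embed the result in a page's <head> as a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{json.dumps(markup, indent=2)}\n</script>')
```

Generating the markup from one dict keeps the name and description consistent wherever you embed it, which is the point of structured metadata in the first place.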

How to Build a Devtool AI Visibility Program

The right sequence matters. Most teams jump to community marketing without fixing their documentation, and they wonder why citations don’t follow. Build the foundation first.

Step 1: Audit Your Current AI Presence

Run 30–50 queries across ChatGPT, Perplexity, Claude, and Gemini that a developer in your category would actually ask. Don’t ask “what is [your tool]”; that’s a vanity query. Ask the recommendation queries:

- “Best [tool category] for [specific stack]”

- “Compare [Competitor A] vs. [Competitor B]”

- “How do I [specific implementation task]”

- “Open source alternatives to [Competitor]”

Log which tools get cited, in what order, and what sources the AI references. This is your baseline. If you appear in fewer than 20% of relevant queries, you have a visibility problem regardless of how good your product is. Tracking brand mentions across AI search platforms systematically beats spot-checking by hand.
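A spreadsheet is enough for the log, but a small script makes the baseline repeatable. The sketch below is one possible shape, not a prescribed tool: the `QueryResult` fields, the tool names, and the sample entries are all hypothetical. Only the 20% threshold comes from the paragraph above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class QueryResult:
    """One logged audit query. Field names are illustrative assumptions."""
    assistant: str                                          # e.g. "ChatGPT"
    query: str                                              # the question asked
    cited_tools: list[str] = field(default_factory=list)    # tools, in answer order
    sources: list[str] = field(default_factory=list)        # domains referenced


def citation_rate(results: list[QueryResult], tool: str) -> float:
    """Fraction of logged queries whose answer names `tool` (case-insensitive)."""
    if not results:
        return 0.0
    wanted = tool.lower()
    hits = sum(1 for r in results if wanted in [t.lower() for t in r.cited_tools])
    return hits / len(results)


def average_position(results: list[QueryResult], tool: str) -> float | None:
    """Mean 1-based position of `tool` among answers that cite it, else None."""
    wanted = tool.lower()
    positions = []
    for r in results:
        names = [t.lower() for t in r.cited_tools]
        if wanted in names:
            positions.append(names.index(wanted) + 1)
    return sum(positions) / len(positions) if positions else None


# Made-up log entries; "AcmeFlags", "FlagCo", and "ToggleKit" are not real tools.
log = [
    QueryResult("ChatGPT", "Best feature flag tool for Go microservices",
                ["FlagCo", "AcmeFlags"], ["github.com", "stackoverflow.com"]),
    QueryResult("Claude", "Open source alternatives to FlagCo",
                ["ToggleKit"], ["news.ycombinator.com"]),
    QueryResult("Perplexity", "Compare FlagCo vs. ToggleKit",
                ["FlagCo", "ToggleKit"], ["dev.to"]),
    QueryResult("Gemini", "How do I roll out a flag gradually in Go",
                ["AcmeFlags", "FlagCo"], ["github.com"]),
]

rate = citation_rate(log, "AcmeFlags")
print(f"citation rate: {rate:.0%}")
print(f"below the 20% threshold: {rate < 0.20}")
print(f"average position when cited: {average_position(log, 'AcmeFlags')}")
```

Keeping the `sources` field per query matters later: it tells you which of the source types discussed earlier the assistants are actually drawing on in your category.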

Step 2: Fix Your Documentation for AI Extraction

This is the highest-ROI work you can do, and most teams skip it. The fix isn’t rewriting your docs from scratch. It’s restructuring them so AI models can extract clean answers.

- Use question-style headings for common implementation tasks (“How do I authenticate API requests?” not “Authentication”).

- Open every section with a 1–2 sentence direct answer before going into detail.

- Include working code samples in the languages your audience uses, with comments that explain the *why*.

- Add a comparison page that honestly shows when your tool fits and when it doesn’t. AI cites these heavily.

- Publish an llms.txt file at your root domain pointing AI crawlers to your most important documentation. Writing llms.txt for AI search takes a couple of hours and pays off for years.
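For the llms.txt item in the list above, here is a minimal sketch of generating the file. It assumes the layout described in the public llms.txt proposal as we understand it: an H1 title, a blockquote summary, then H2 sections of annotated links. The function name `render_llms_txt`, the product name, and every example.com URL are hypothetical.

```python
def render_llms_txt(name: str, summary: str,
                    sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt-style Markdown file.

    `sections` maps a section heading to (link title, URL, short note) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content; swap in your own pages.
llms_txt = render_llms_txt(
    name="AcmeFlags",
    summary="Feature flag service with SDKs for Go and Node.",
    sections={
        "Docs": [
            ("Quick start", "https://example.com/docs/quick-start",
             "Install the SDK and evaluate a first flag"),
            ("How do I authenticate API requests?",
             "https://example.com/docs/auth", "API keys and token rotation"),
        ],
        "Optional": [
            ("Changelog", "https://example.com/changelog", "Release history"),
        ],
    },
)

print(llms_txt)
```

Write the output to `llms.txt` at your root domain. Note that the link titles reuse the question-style headings from your docs, so the file and the pages it points to describe the same tasks in the same words.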

Step 3: Build Strategic GitHub and Package Presence

If your tool is open source, this is mostly hygiene: clear README with installation, quick start, and use cases at the top; active issue triage; published changelogs; tagged releases. If your tool is closed source but has SDKs, the SDKs are your GitHub presence, so treat them with the same care.

For closed-source tools without SDKs, build a public examples repo. Real working examples for the top 10 use cases of your product, in the languages your audience uses. AI models index these aggressively because they’re exactly the kind of code-with-context that answers implementation queries.
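The README hygiene described above is easy to check mechanically. The sketch below is a rough illustration under our own assumptions: the required section names mirror the list in this step, the function name and sample README are made up, and the heading match is deliberately simple (it would also count `#` lines inside fenced code blocks).

```python
import re

# Sections this step says belong at the top of the README.
REQUIRED_SECTIONS = ("installation", "quick start", "use cases")

# Matches ATX-style Markdown headings such as "## Installation".
HEADING_RE = re.compile(r"^#{1,6}[ \t]+(.+?)[ \t]*#*$", re.MULTILINE)


def missing_readme_sections(readme_text: str,
                            required: tuple[str, ...] = REQUIRED_SECTIONS) -> list[str]:
    """Return the required sections that no README heading mentions."""
    headings = [
        m.group(1).strip().lower().replace("quickstart", "quick start")
        for m in HEADING_RE.finditer(readme_text)
    ]
    return [s for s in required if not any(s in h for h in headings)]


# Hypothetical README for an SDK repo.
sample_readme = """\
# acme-flags-go

## Installation
go get example.com/acme-flags-go

## Quickstart
Create a client and evaluate a flag.

## Changelog
See the tagged releases.
"""

print(missing_readme_sections(sample_readme))
```

Run it across every SDK and examples repo you maintain. A missing section in one of them is usually a faster fix than any new content.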

Step 4: Earn Mentions on Sources AI Actually Indexes

This is where most devtool marketing programs break down. Teams pitch TechCrunch and Forbes when AI models care more about a thoughtful post on dev.to from an engineer with 5,000 followers. The hierarchy for devtool citations roughly looks like this:

- Stack Overflow accepted answers mentioning your tool in context

- Hacker News front-page discussions (organic, not promotional)

- Engineering blogs from companies developers respect

- Dev community publications (dev.to, Hashnode, Lobsters)

- Technical publications (InfoQ, The New Stack, IEEE Spectrum)

- Language or framework-specific newsletters and blogs

- Conference talks with published transcripts or videos

Pitching the wrong publications wastes months. Community-mention services that understand developer audiences operate very differently from generic PR firms, and the difference shows up directly in citation rates within 60–90 days.

Step 5: Track, Iterate, Compound

Re-run your baseline queries every 30 days. Track citation rate, position in cited lists, and which sources AI is pulling from to mention you. The pattern you’ll see: citation rate moves slowly for the first 60 days, then accelerates as the signals you’ve planted start reinforcing each other. Most teams quit at day 45. The ones who push through see consistent recommendations by month 4.
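To see whether you are in the slow phase or the accelerating one, compare the change between consecutive monthly runs. This is a minimal sketch: the function names are ours, and the monthly percentages are made-up values chosen to show the shape described above, not measured results.

```python
def month_over_month(rates_pct: list[int]) -> list[int]:
    """Change in citation rate between consecutive runs, in percentage points."""
    return [later - earlier for earlier, later in zip(rates_pct, rates_pct[1:])]


def is_accelerating(rates_pct: list[int]) -> bool:
    """True when the latest monthly gain is larger than the one before it."""
    deltas = month_over_month(rates_pct)
    return len(deltas) >= 2 and deltas[-1] > deltas[-2]


# Illustrative only: citation rate (%) at baseline and after each 30-day re-run.
monthly_rates = [10, 12, 14, 22, 34]

print(month_over_month(monthly_rates))
print(is_accelerating(monthly_rates))
print(is_accelerating(monthly_rates[:3]))
```

Judging the program on the deltas rather than the raw rate is what keeps a flat-looking second month from being read as failure.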

Where Generic AI Visibility Tools Fall Short

If you’ve evaluated tools like Profound, Otterly, or Peec AI, they’re solid for B2B SaaS visibility but thin on devtool-specific signals. They track mentions in marketing publications well. They miss GitHub adoption signals, package registry authority, Stack Overflow answer dynamics, and the specific community sources where developer recommendations form.

DevTune is the most purpose-built option for the developer tool category right now. It tracks community discourse on GitHub, Hacker News, Stack Overflow, and dev.to alongside standard AI citation tracking. Worth evaluating if developer audiences are your entire focus.

For most devtool teams, the better move is a hybrid: a citation tracking tool that covers AI assistants broadly, paired with manual monitoring of the developer-specific sources that matter most for your category. Comparing AI visibility analytics tools against your specific signal needs is worth the afternoon it takes.

The Mistakes That Kill Devtool AI Visibility Programs

Five failure modes show up in nearly every devtool team that struggles with AI visibility:

- Treating it as marketing instead of infrastructure. AI visibility for devtools is a cross-functional program. DevRel, docs, engineering, and marketing all own pieces. If marketing runs it alone, the technical signals don’t get built.

- Optimizing for the wrong sources. Pitching mainstream tech press while ignoring Stack Overflow, dev.to, and language-specific communities. The press placements feel important but barely move citation rates.

- Skipping the documentation work. Docs are the highest-leverage asset and the most consistently neglected. A weekend of restructuring beats a month of content marketing.

- Faking community presence. Astroturfed Reddit comments, fake Stack Overflow answers, paid Hacker News posts. AI models and human readers both detect these, and the brand damage outlasts the short-term lift.

- Quitting too early. Citation rates compound, but the compounding kicks in around months 3–4. Programs killed at month 2 never see the return.

Frequently Asked Questions

How long does it take to see AI citations for a devtool?

Most devtools see early citation movement within 60-90 days of focused work, with consistent recommendations emerging around month 4. Tools with strong existing GitHub presence and documentation move faster, sometimes seeing citations within 30 days of an llms.txt and docs restructure. Net-new tools with no community presence take longer because adoption signals have to be built from zero.

Do GitHub stars actually influence AI recommendations?

Yes, indirectly but meaningfully. AI models don’t read star counts directly, but star counts correlate with the volume of community discussion, blog posts, and accepted Stack Overflow answers about a tool, and those are the sources that get cited. A tool with 5,000 stars typically has 50-100x the discussion footprint of a tool with 50 stars, and that footprint is what AI assistants pull from.

Is llms.txt worth implementing for devtools?

Worth implementing, not worth obsessing over. An llms.txt file pointing AI crawlers to your most important documentation pages takes a couple of hours to write and is a clear positive signal. It won’t transform your visibility on its own, because the underlying documentation has to be good, but combined with strong docs it accelerates AI extraction. Put it off only if more basic documentation fixes have to come first.

Should we build SDKs even if our product is API-only?

If developer audience is core to your business, yes. SDKs give you a GitHub presence, get indexed by package registries, and create natural citation surfaces in code samples across the web. An API with no SDK is invisible to most of the citation pool that drives AI recommendations. Even thin client libraries in the top 3-4 languages your audience uses are worth the maintenance cost.

How do we track AI citations across multiple platforms efficiently?

Run a fixed query set monthly across ChatGPT, Perplexity, Claude, and Gemini: 30–50 queries that cover your category, comparisons, and implementation use cases. Log results in a spreadsheet or use a citation tracking tool that monitors AI assistants automatically. Spot-checking by hand works for early-stage teams; teams scaling beyond Series A usually need automated tracking to spot trends and competitor movement.

Does writing for Hacker News still work in 2026?

Writing *to* Hacker News rarely works. Writing things engineers want to share that happen to surface on Hacker News works extremely well. The pattern that gets cited: technical deep-dives, postmortems, novel benchmarks, and contrarian takes from credible engineers. Promotional posts get flagged and buried, and that signal sticks to your domain.

Where Devtool Visibility Programs Pay Off

The devtools that win the next five years won’t be the ones with the biggest marketing budgets. They’ll be the ones AI assistants recommend by default when a developer asks for tooling help, because their docs are extractable, their GitHub presence is real, their community signals are organic, and the editorial mentions they’ve earned come from publications AI actually trusts. That’s a different game than B2B SaaS marketing, and it’s not optional anymore.

Start with the audit. Run the queries, see where you stand, and pick the one signal category that’s weakest. Fix that first. The compounding starts the day you do.

Want help mapping your devtool’s AI citation gaps and the publications AI models pull from in your category? Get a free AI visibility audit built specifically for developer tool companies.