AI visibility analytics tools for brand mentions help you measure how often — and how accurately — AI platforms like ChatGPT, Perplexity, and Gemini reference your brand when users ask questions about your category. As of 2026, these tools have become essential for B2B marketing teams because AI-generated answers now influence a significant share of buyer research. Without the right analytics, you cannot tell whether AI search is helping your pipeline or silently sending prospects to competitors.

This article breaks down what AI visibility analytics tools actually measure, how they differ from traditional SEO monitoring, which capabilities matter most for tracking brand mentions across AI platforms, and how to build a measurement system that connects AI visibility data to business outcomes.

- How AI visibility analytics differs from traditional brand monitoring — and why the distinction matters for pipeline

- The five core metrics these tools track and which ones actually correlate with revenue

- How to evaluate whether a tool measures mention accuracy, not just mention frequency

- A practical framework for connecting AI brand mention data to marketing attribution

- Where the analytics category is headed in 2026 — and what’s still missing

- How to avoid common measurement mistakes that inflate dashboards but miss real problems

What AI Visibility Analytics Tools Actually Measure

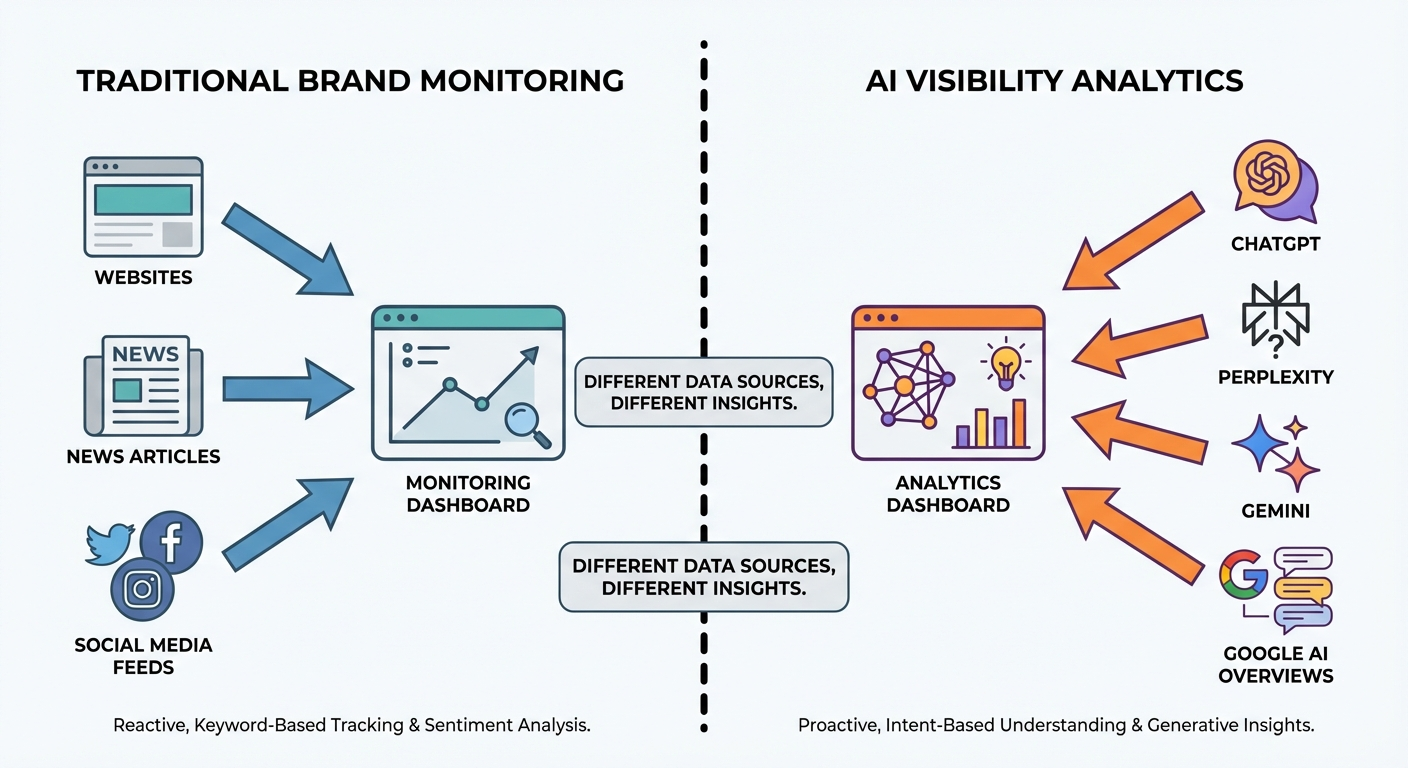

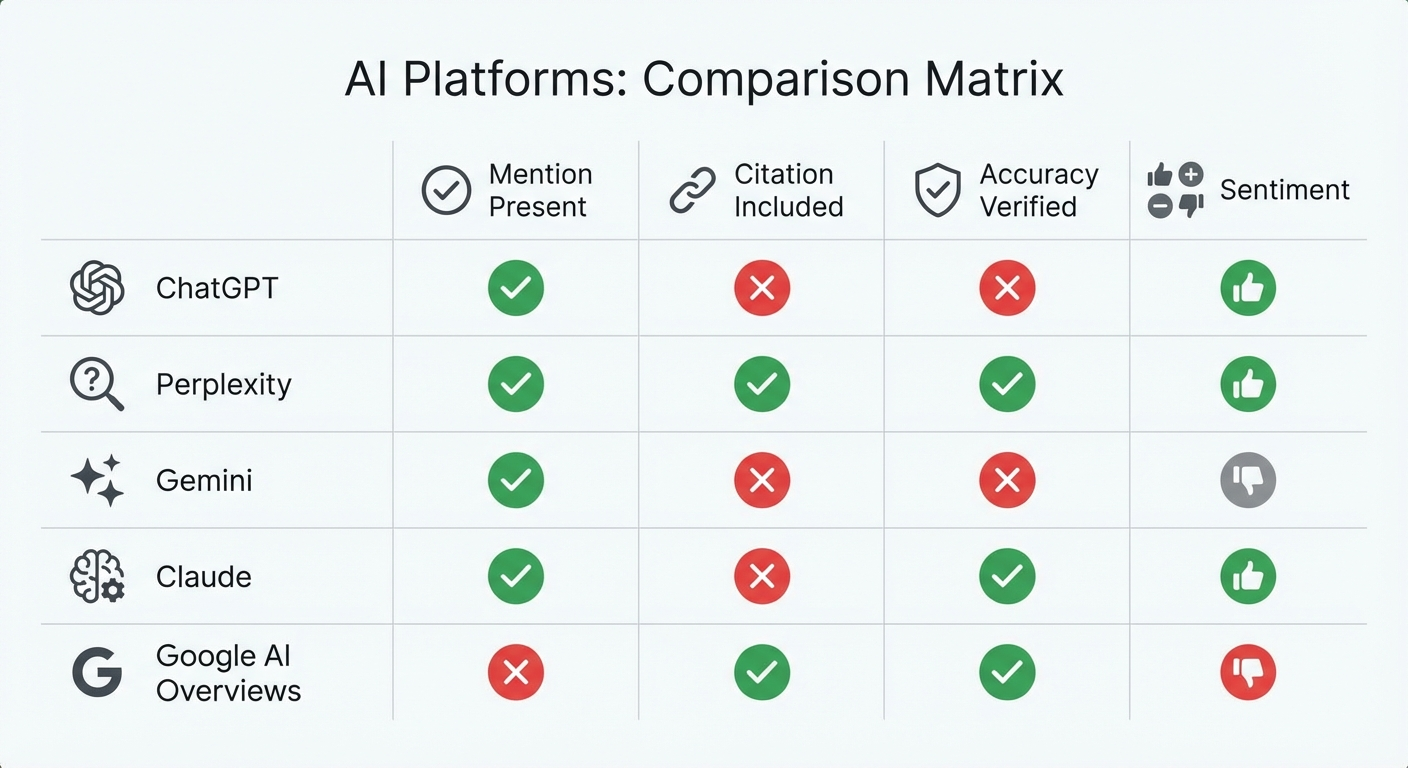

AI visibility analytics tools monitor the outputs of large language models and AI answer engines to determine how your brand appears when users ask questions relevant to your product category. Unlike traditional media monitoring, these tools query AI platforms directly — running prompts through ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews — and analyzing the responses for brand presence, positioning, and accuracy.

The core difference from traditional brand monitoring: these tools don’t scrape the web for mentions on websites. They analyze what AI says about you when asked.

Five Core Data Points These Tools Capture

Most AI visibility analytics platforms track some combination of these metrics:

- Mention frequency — How often your brand name appears in AI-generated responses for tracked prompts

- Citation rate — Whether AI links to your domain as a source, a third-party source, or no source at all

- Sentiment and framing — Whether the mention is a recommendation, a neutral listing, or a warning

- Competitive share of answer — What percentage of relevant prompts include your brand versus competitors

- Mention accuracy — Whether the AI response correctly represents your pricing, features, and positioning

That last metric — accuracy — is the one most tools still handle poorly, and it’s arguably the most important. Being mentioned with incorrect information can damage conversion rates more than not being mentioned at all.
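To make the five data points concrete, here is a minimal sketch of how a single tracked response could be recorded. The schema, field names, and brand names are illustrative assumptions, not the data model of any particular tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Framing(Enum):
    RECOMMENDED = "recommended"
    NEUTRAL = "neutral"
    WARNING = "warning"


class CitationSource(Enum):
    OWN_DOMAIN = "own_domain"
    THIRD_PARTY = "third_party"
    NONE = "none"


@dataclass
class ResponseObservation:
    """One AI response to one tracked prompt (hypothetical schema)."""
    prompt: str
    engine: str
    brands_mentioned: list[str]        # feeds mention frequency and share of answer
    citation: CitationSource           # feeds citation rate
    framing: Optional[Framing] = None  # None when your brand is absent
    accurate: Optional[bool] = None    # None until someone has fact-checked it

    def mentions(self, brand: str) -> bool:
        """Case-insensitive check for a brand in this response."""
        return brand.lower() in (b.lower() for b in self.brands_mentioned)


# Example record: recommended, but with a factual error (say, outdated pricing).
obs = ResponseObservation(
    prompt="Best CRM for a 50-person B2B SaaS company",
    engine="perplexity",
    brands_mentioned=["ExampleCRM", "OtherCRM"],
    citation=CitationSource.THIRD_PARTY,
    framing=Framing.RECOMMENDED,
    accurate=False,
)
print(obs.mentions("examplecrm"), obs.framing.value, obs.accurate)
```

Keeping accuracy as its own field, separate from framing, is the point of the sketch: a response can be a recommendation and still be wrong.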

Why Traditional SEO Tools Fall Short for AI Brand Mentions

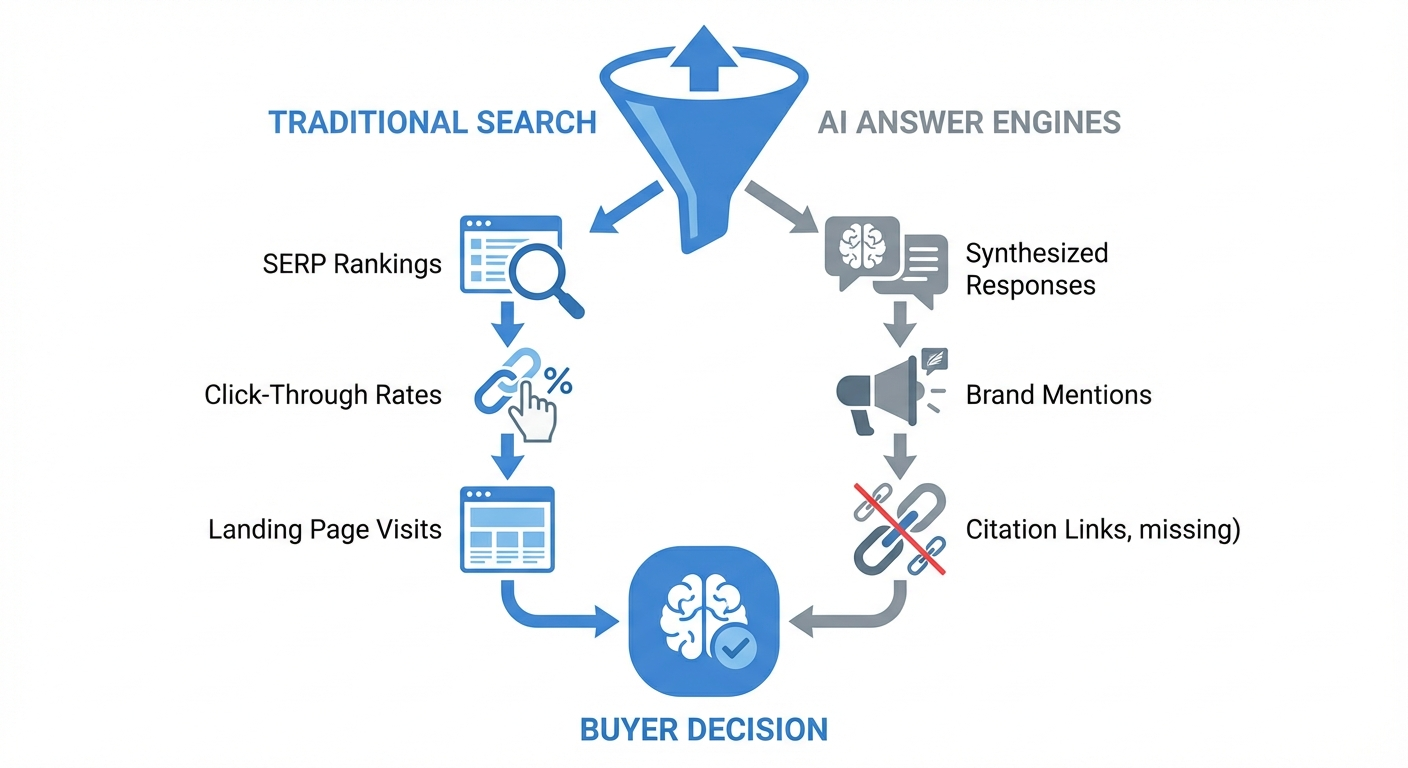

Traditional SEO platforms like Ahrefs, Semrush, and Moz were built to track where your pages rank in search engine results. They tell you which keywords drive clicks. They do not tell you what AI platforms say about your brand in synthesized answers.

The gap exists because of a fundamental architectural difference. Google Search returns a list of links. AI answer engines return a single synthesized response — sometimes citing sources, sometimes not. A brand that ranks on page one for a keyword might be completely absent from ChatGPT’s answer to the same question.

Where the Data Models Diverge

SEO tools index URLs and track their position in search results. AI visibility analytics tools index model outputs — the actual text AI generates in response to prompts. This means:

- No stable rankings — AI responses can change with every query, even for identical prompts. The same question asked twice may produce different brand mentions.

- No click-through data — Many AI answers don’t include clickable links. Users get the answer and move on without visiting your website.

- No keyword-to-page mapping — AI doesn’t rank your “pricing page” for “best CRM pricing.” It synthesizes an answer from multiple sources, sometimes citing none of them.

In 2024, Gartner predicted that traditional search engine volume would decline 25% by 2026 as users shift to AI-powered search. Since that forecast was published, the trend has accelerated. As of 2026, teams that only monitor traditional SERP rankings are measuring an increasingly incomplete picture of how buyers discover brands.

This doesn’t mean SEO tools are obsolete. Strong organic search performance still feeds AI models with source material. But measuring AI visibility requires a separate analytics layer — one built specifically for how brand mentions in generative AI actually work.

How AI Visibility Analytics Connects to Revenue

The most common objection marketing leaders raise about AI visibility tools: “How does this connect to pipeline?”

It’s a fair question. And as of 2026, the honest answer is that attribution is still imperfect — but improving rapidly. Here’s what the data shows and where the gaps remain.

What Can Be Measured Today

Referral traffic from AI platforms. Google Analytics and most modern analytics tools can identify sessions originating from ChatGPT, Perplexity, and other AI platforms. According to SparkToro’s 2025 research on zero-click searches, direct referral traffic from AI answer engines grew significantly year-over-year, though it still represents a fraction of total traffic for most B2B sites.
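If you export session referrers from your analytics tool, a small classifier can tag the AI-originated ones. This is a rough sketch: the hostname list is an assumption that you should check against the referrers you actually see, and the sample URLs are made up.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics export.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def classify_ai_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if it is not one."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


# Hypothetical referrers as they might appear in a session export.
session_referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
    "https://chatgpt.com/",
    "",  # direct visit, no referrer
]

ai_sessions = Counter(
    platform
    for platform in map(classify_ai_referrer, session_referrers)
    if platform
)
print(ai_sessions)
```

Sessions with no referrer stay unclassified, which is exactly the blind spot described later in this section.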

Correlation between mention frequency and branded search volume. Teams tracking AI visibility alongside Google Search Console data often see a pattern: as AI mentions increase, branded search queries rise — a signal that AI recommendations drive users to search for brands directly.
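One simple way to check for this pattern is a Pearson correlation between weekly AI mention counts and weekly branded search clicks. The sketch below uses invented numbers purely for illustration, and a high coefficient only shows that the two series move together, not that one causes the other.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient for two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Hypothetical weekly data: AI mentions across tracked prompts,
# and branded search clicks from Google Search Console.
weekly_ai_mentions = [12, 15, 14, 20, 23, 25]
weekly_branded_clicks = [310, 340, 335, 400, 420, 455]

r = pearson(weekly_ai_mentions, weekly_branded_clicks)
print(f"correlation: {r:.2f}")
```

With only a handful of weekly points, treat the result as a prompt for further investigation rather than evidence.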

Pipeline influence tracking. Some organizations now ask prospects during sales calls or in forms, “Where did you first hear about us?” AI platforms increasingly appear in these responses.

What Remains Difficult to Measure

Most AI-influenced buyer journeys leave no direct click trail. A prospect asks Perplexity, “What’s the best project management tool for remote teams?” — gets an answer mentioning your brand — then later Googles your brand name directly. The AI influence is invisible in standard attribution models.

This is similar to how brand advertising and word-of-mouth have always been difficult to attribute. The difference: AI visibility analytics tools give you leading indicators — mention frequency, share of answer, sentiment — that traditional brand awareness channels never provided.

Five Capabilities That Separate Strong Tools from Weak Ones

The AI visibility analytics market in 2026 includes dozens of platforms. Most share a similar surface-level promise: “See how AI talks about your brand.” The differences that matter emerge in how they handle five specific capabilities.

1. Mention Accuracy Validation

The most valuable — and rarest — capability. Does the tool simply report that your brand was mentioned, or does it flag when AI describes your product incorrectly?

A mention marked “positive” that contains outdated pricing or fabricated features is worse than no mention. Look for tools that compare AI-generated claims against your actual product data — pricing tiers, feature sets, integration lists, supported use cases.

Most tools still categorize this as “sentiment analysis,” which catches tone (positive/negative) but misses factual errors entirely. A response can be enthusiastically positive and completely wrong.
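As a rough illustration of the difference between tone and accuracy, the sketch below checks the prices quoted in an AI response against a source-of-truth price list. The plan names, prices, and response text are hypothetical, and the regular expression is deliberately simple (it ignores thousands separators and non-dollar currencies).

```python
import re

# Hypothetical source-of-truth pricing for your product (USD per month).
ACTUAL_PRICES_USD = {"Starter": 29, "Pro": 99}

# Matches amounts like $29 or $29.99; a simplification for this sketch.
PRICE_PATTERN = re.compile(r"\$(\d+(?:\.\d{2})?)")


def find_price_errors(response_text: str, actual_prices: dict[str, float]) -> list[float]:
    """Return dollar amounts quoted in the response that match no real plan price."""
    valid = {float(price) for price in actual_prices.values()}
    quoted = [float(amount) for amount in PRICE_PATTERN.findall(response_text)]
    return [amount for amount in quoted if amount not in valid]


response = (
    "ExampleCRM is an excellent choice for growing teams. "
    "Its Pro plan costs $79 per month, and Starter is $29."
)

errors = find_price_errors(response, ACTUAL_PRICES_USD)
print(errors)  # amounts that do not correspond to any actual plan
```

A sentiment classifier would score that response as positive. The price check flags it anyway, which is the behavior to look for when you evaluate a tool.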

2. Multi-Engine Coverage

AI answers vary significantly across platforms. ChatGPT, Perplexity, Gemini, Claude, Google AI Overviews, and Copilot each pull from different data sources and apply different synthesis methods. A brand mentioned consistently in Perplexity may be absent from Claude.

Strong analytics tools track across at least four major AI engines. If you’re only monitoring one platform, you’re seeing a narrow slice of your actual AI visibility and potentially missing the platforms your buyers use most. For a deeper look at platform-specific differences, compare how brand mentions appear in Perplexity with how Gemini handles citations.

3. Prompt Discovery and Management

AI visibility tracking is only as good as the prompts you monitor. Tools that require you to manually create every prompt leave gaps — you’ll miss the questions buyers actually ask.

Stronger platforms offer automated prompt discovery: they surface questions users ask AI about your category, your competitors, and your use cases. This matters because the way people phrase AI queries differs significantly from how they type Google searches.

For example, a Google search might be “best CRM software.” The same buyer asks ChatGPT: “I run a 50-person B2B SaaS company and need a CRM that integrates with HubSpot and handles complex deal stages. What should I use?” The prompt is more specific, more contextual, and harder to predict.

4. Competitive Benchmarking Depth

Knowing your own mention rate is useful. Knowing it relative to competitors is actionable.

Basic tools show whether competitors were mentioned in the same response. Advanced tools show why — which attributes AI associates with each brand, which prompts competitors dominate, and which gaps in your content or authority allow competitors to take your place.

Effective competitive analytics answers: “For buyer-intent prompts in our category, we appear in 34% of AI responses, Competitor A appears in 61%, and Competitor B appears in 48%. Competitor A dominates prompts related to enterprise features because they have stronger coverage on review sites and technical publications.”
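The percentages in a statement like that come from a simple count. Here is a minimal sketch of a share-of-answer calculation across a competitor set, assuming you already have the set of brands mentioned in each response. The brand names and sample data are placeholders.

```python
from collections import Counter


def share_of_answer(responses: list[set[str]], brands: list[str]) -> dict[str, float]:
    """Percentage of responses that mention each brand.

    `responses` holds one set of mentioned brand names per AI response.
    """
    if not responses:
        return {brand: 0.0 for brand in brands}
    counts = Counter()
    for mentioned in responses:
        for brand in brands:
            if brand in mentioned:
                counts[brand] += 1
    return {brand: 100 * counts[brand] / len(responses) for brand in brands}


# Hypothetical results for five buyer-intent prompts.
responses = [
    {"YourBrand", "CompetitorA"},
    {"CompetitorA", "CompetitorB"},
    {"CompetitorA"},
    {"YourBrand", "CompetitorB"},
    {"CompetitorB"},
]

shares = share_of_answer(responses, ["YourBrand", "CompetitorA", "CompetitorB"])
for brand, pct in shares.items():
    print(f"{brand}: {pct:.0f}%")
```

The shares do not sum to 100% because one response can mention several brands. The "why" behind each number still requires looking at the sources and attributes in the responses themselves.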

5. Actionable Recommendations vs. Raw Data

A dashboard showing mention counts is a starting point. What marketing teams actually need is guidance: What do I do with this data?

The most useful tools connect visibility gaps to specific actions: create a comparison page for this competitor pairing, strengthen schema markup on your pricing page, build authority on these specific publications that AI models cite frequently. Tools that stop at “you’re not mentioned for this prompt” leave teams guessing about next steps.

For teams building an action plan from AI visibility data, practical approaches to tracking brand mentions in AI search can help bridge the gap between measurement and execution.

A Practical Framework for Evaluating AI Visibility Analytics Tools

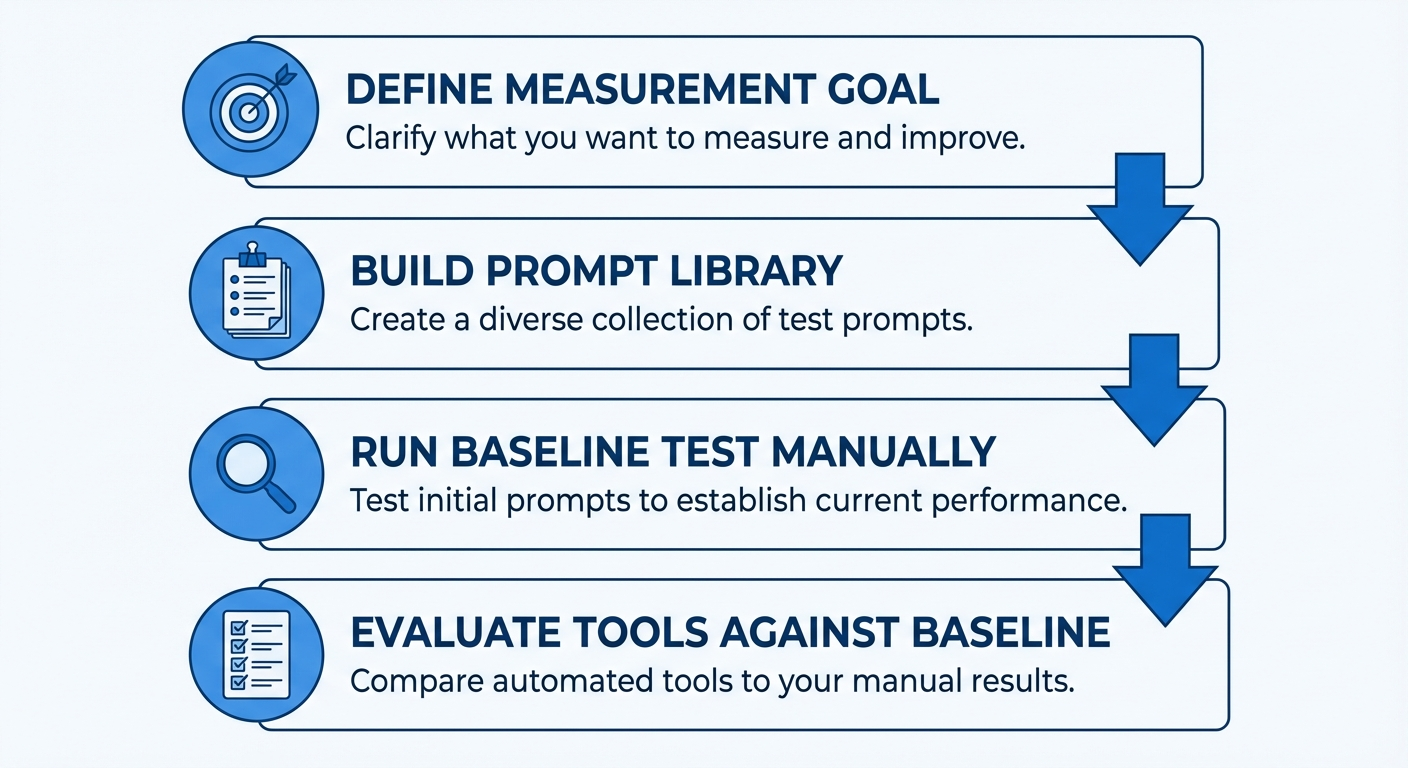

Rather than ranking specific tools — the market changes too fast for static rankings to stay accurate — here’s a decision framework you can apply to any platform as of 2026.

Step 1: Define What “Visibility” Means for Your Business

Before choosing a tool, clarify your measurement goal:

- Awareness stage: “Are we mentioned at all when buyers ask about our category?” → Focus on mention frequency and share of answer.

- Consideration stage: “Are we recommended as a viable option?” → Focus on sentiment, positioning within responses, and recommendation framing.

- Decision stage: “Is the information AI shares about us accurate enough to support a purchase decision?” → Focus on accuracy validation and citation tracking.

Most B2B teams need all three. But knowing your primary gap helps you prioritize which tool capabilities matter most.

Step 2: Map Your Prompt Universe

Build a prompt library before evaluating tools. This gives you a consistent test set.

Create 25–50 prompts across these clusters:

- Category discovery: “Best [category] tools for [use case]”

- Competitive alternatives: “Alternatives to [competitor name]”

- Direct comparison: “Compare [your brand] vs [competitor]”

- Feature-specific: “Which [category] tools support [specific feature]?”

- Trust and validation: “[Your brand] reviews,” “[Your brand] pricing 2026”

Run these prompts manually across ChatGPT, Perplexity, and Gemini before buying any tool. This 30-minute exercise establishes your baseline and helps you evaluate whether a tool’s data matches reality.
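A prompt library like this can be generated from templates so that every tool you trial gets the same test set. The sketch below mirrors the five clusters above; the brand, competitor, and use-case values are placeholders to replace with your own.

```python
from itertools import product
from string import Formatter

# One template per cluster, following the list above.
TEMPLATES = {
    "category_discovery": "Best {category} tools for {use_case}",
    "competitive_alternatives": "Alternatives to {competitor}",
    "direct_comparison": "Compare {brand} vs {competitor}",
    "feature_specific": "Which {category} tools support {feature}?",
    "trust_and_validation": "{brand} reviews",
}

# Placeholder values; substitute your own brand and market.
VALUES = {
    "brand": ["ExampleCRM"],
    "category": ["CRM"],
    "use_case": ["remote sales teams", "B2B SaaS startups"],
    "competitor": ["CompetitorA", "CompetitorB"],
    "feature": ["HubSpot integration"],
}


def expand(template: str, values: dict[str, list[str]]) -> list[str]:
    """Fill a template with every combination of the values its fields need."""
    fields = [name for _, name, _, _ in Formatter().parse(template) if name]
    combos = product(*(values[field] for field in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]


library = [
    {"cluster": cluster, "prompt": prompt}
    for cluster, template in TEMPLATES.items()
    for prompt in expand(template, VALUES)
]

for entry in library:
    print(f"{entry['cluster']}: {entry['prompt']}")
```

Adding a few more use cases, competitors, and features to `VALUES` quickly gets you into the 25–50 prompt range, and tagging each prompt with its cluster makes later reporting by buyer intent straightforward.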

Step 3: Test With Your Own Data

Every tool looks good in demos. The real test: does it produce accurate, actionable results for your brand and category?

During trial periods, verify:

- Do the tool’s mention counts match what you see when you manually run the same prompts?

- Does it correctly distinguish your brand from similarly named entities?

- Does it catch inaccuracies in AI responses, or only report tone?

- Can you export data and build reports your leadership team will understand?

- Does it suggest specific actions, or only show dashboards?

Step 4: Evaluate Total Cost Against Insight Value

AI visibility tools range from free tiers with limited prompts to enterprise platforms costing several thousand dollars per month. The right budget depends on your prompt volume, number of competitors tracked, AI engines covered, and how much manual validation work the tool eliminates.

A cheaper tool that requires 10 hours per month of manual verification may cost more than a premium tool that handles validation automatically — especially when you factor in the salary cost of the person doing the checking.
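The comparison is simple arithmetic once you put a rate on the checking time. All figures below are hypothetical, including the hourly rate.

```python
def monthly_total_cost(subscription_usd: float, manual_hours: float, hourly_rate_usd: float) -> float:
    """Subscription price plus the cost of staff time spent verifying results."""
    return subscription_usd + manual_hours * hourly_rate_usd


HOURLY_RATE_USD = 60  # assumed loaded cost of the person doing the checking

# Hypothetical tools: a cheap one needing heavy manual verification,
# and a premium one that validates most results automatically.
budget_tool = monthly_total_cost(subscription_usd=99, manual_hours=10, hourly_rate_usd=HOURLY_RATE_USD)
premium_tool = monthly_total_cost(subscription_usd=500, manual_hours=1, hourly_rate_usd=HOURLY_RATE_USD)

print(f"budget tool:  ${budget_tool:.0f}/month")
print(f"premium tool: ${premium_tool:.0f}/month")
```

Under these assumptions the budget tool costs $699 per month against $560 for the premium one. The result flips if verification takes only a few hours, so plug in your own numbers.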

Common Measurement Mistakes That Inflate AI Visibility Dashboards

After reviewing how dozens of B2B teams use AI visibility analytics, several recurring errors stand out. These mistakes don’t just waste budget — they create false confidence that delays real improvements.

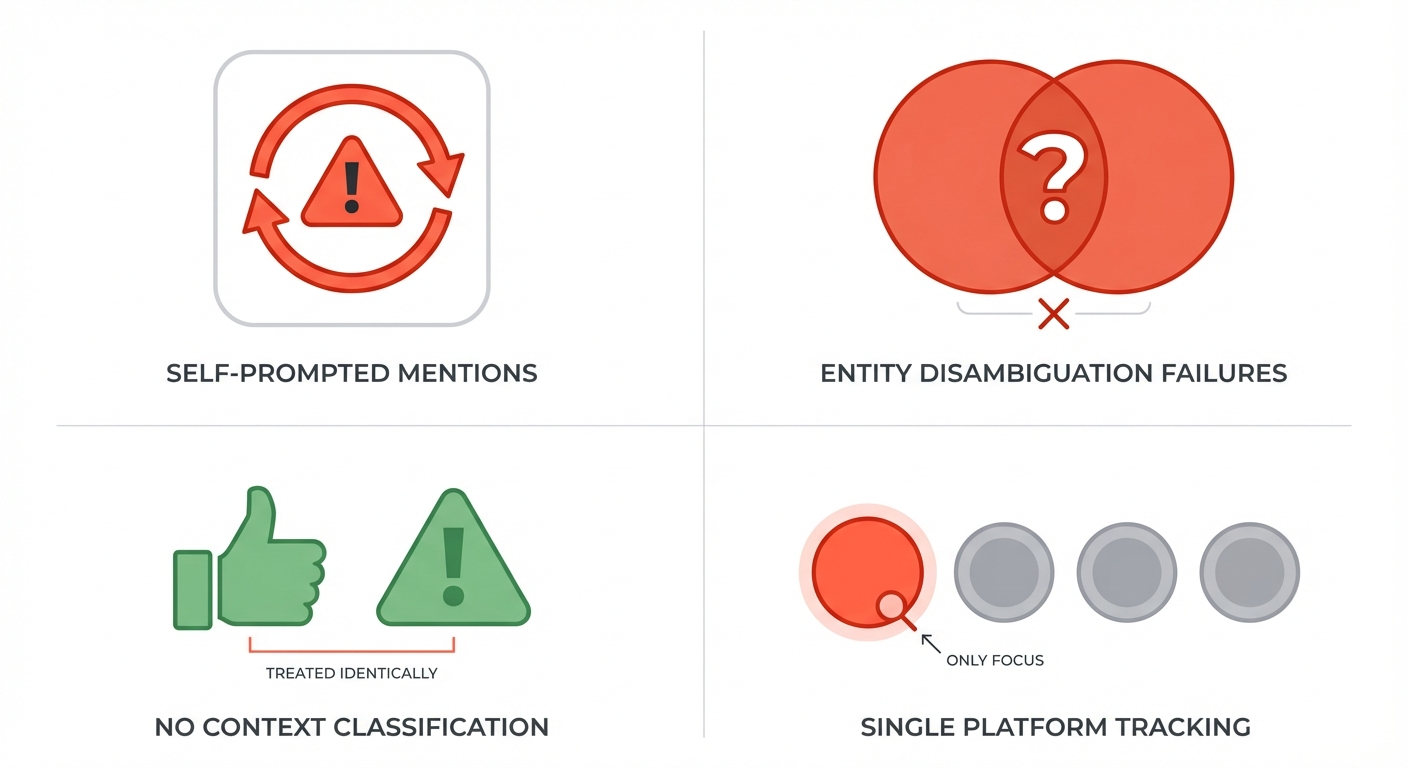

Counting Self-Prompted Mentions

If your tracking prompt includes your brand name — “Is [Your Brand] good for enterprise?” — you’ll almost always get a mention. That’s prompted recall, not organic visibility. It tells you nothing about whether AI recommends you when buyers ask open-ended category questions.

Separate your prompts into two sets: branded prompts (where your name appears in the question) and unbranded prompts (category and use-case questions). Only unbranded prompts measure real AI visibility.
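Splitting the two sets can be automated with a whole-word match on your brand terms. This is a minimal sketch; the brand terms and prompts are placeholders.

```python
import re


def is_branded(prompt: str, brand_terms: list[str]) -> bool:
    """True if the prompt contains any brand term as a whole word (case-insensitive)."""
    return any(
        re.search(rf"\b{re.escape(term)}\b", prompt, re.IGNORECASE)
        for term in brand_terms
    )


def split_prompts(prompts: list[str], brand_terms: list[str]) -> tuple[list[str], list[str]]:
    """Return (branded, unbranded) prompt lists."""
    branded = [p for p in prompts if is_branded(p, brand_terms)]
    unbranded = [p for p in prompts if not is_branded(p, brand_terms)]
    return branded, unbranded


# Placeholder brand terms and prompts.
brand_terms = ["ExampleCRM", "Example CRM"]
prompts = [
    "Is ExampleCRM good for enterprise?",
    "Best CRM for a 50-person B2B SaaS company",
    "example crm pricing 2026",
    "Alternatives to CompetitorA",
]

branded, unbranded = split_prompts(prompts, brand_terms)
print("branded:", branded)
print("unbranded:", unbranded)
```

Report the two sets separately, and compute share of answer on the unbranded set only.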

Ignoring Entity Disambiguation

If your brand name is a common word, such as “Pulse,” “Beacon,” or “Signal,” AI visibility tools may count mentions that refer to something entirely different. A tool that matches the bare string “Pulse” will flag AI responses that mention pulse rates, pulse surveys, or pulse checks.

Reliable tracking requires entity rules: the mention only counts if it co-occurs with your domain, product category, or specific product feature. Tools vary widely in how well they handle this. For more on tracking accuracy challenges, see how to monitor brand mentions in LLMs effectively.
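A co-occurrence rule of this kind can be sketched in a few lines. The brand, domain, and context terms below are hypothetical, and real tools likely use more sophisticated entity resolution than simple substring checks.

```python
import re


def counts_as_brand_mention(text: str, brand: str, context_terms: list[str]) -> bool:
    """Count a mention only if the brand name co-occurs with a context term.

    Context terms are things like your domain, product category,
    or a distinctive product feature.
    """
    lowered = text.lower()
    if not re.search(rf"\b{re.escape(brand.lower())}\b", lowered):
        return False
    return any(term.lower() in lowered for term in context_terms)


# Hypothetical brand "Pulse" that sells project management software.
CONTEXT_TERMS = ["pulse.example.com", "project management", "sprint planning"]

false_positive = "Check your pulse rate before and after exercise."
real_mention = "Pulse is a project management tool with strong sprint planning."

print(counts_as_brand_mention(false_positive, "Pulse", CONTEXT_TERMS))
print(counts_as_brand_mention(real_mention, "Pulse", CONTEXT_TERMS))
```

The rule trades recall for precision: a genuine mention that lacks any context term is dropped, so review a sample of rejected responses when you tune the term list.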

Treating All Mentions as Positive

A mention in a “tools to avoid” list or a factually incorrect recommendation both count as mentions in most dashboards. Without context classification — recommended, neutral, negative, inaccurate — raw mention counts are unreliable signals.

Measuring Only One AI Platform

Teams often start with ChatGPT tracking because it has the largest user base. But buyer behavior varies. Technical audiences may prefer Claude or Perplexity. Google AI Overviews influences users who never leave Google’s ecosystem. Tracking a single platform gives you a distorted view.

At minimum, track the three platforms where your target buyers are most active. Most cross-platform brand mention tracking approaches recommend covering ChatGPT, Perplexity, and Google AI Overviews as a baseline.

How AI Visibility Analytics Differs From Brand Mention Services

An important distinction for marketing leaders: AI visibility analytics tools measure how your brand appears in AI responses. Brand mention services build the editorial presence that influences those responses.

Analytics tools answer: “Where do we stand today?”

Brand mention services answer: “How do we improve where we stand?”

Both are necessary. Analytics without action produces dashboards that gather dust. Action without analytics means you’re building visibility blind — unable to measure what’s working or identify where gaps persist.

Agencies like BrandMentions bridge this gap by placing contextual brand mentions on 140+ high-authority publications that AI models actively reference during training and retrieval — while tracking the measurable impact those placements create across AI platforms.

The most effective AI visibility programs combine analytics tools for ongoing measurement with strategic placement services that compound authority over time. Neither alone is sufficient.

What’s Changed in AI Visibility Analytics Since 2024–2025

This category has evolved rapidly. If you evaluated tools in 2024 or early 2025, the landscape looks meaningfully different now.

Platform Coverage Has Expanded

Early tools tracked only ChatGPT. As of 2026, the standard expectation is coverage across ChatGPT, Perplexity, Gemini, Claude, Google AI Overviews, and Copilot. Some platforms also track Meta AI, Grok, and DeepSeek. Tools that still only cover one or two engines are behind.

Accuracy Detection Is Emerging

Throughout 2024, nearly every tool treated mention tracking as a binary — mentioned or not mentioned. In 2025, a few platforms began adding accuracy validation layers. As of 2026, this capability is still rare but increasingly recognized as essential. Expect accuracy detection to become a baseline feature within the next 12 months.

Integration With Broader Marketing Stacks Is Improving

Early AI visibility tools were standalone dashboards. The 2026 generation increasingly offers API connections, Looker Studio integrations, and connections to Google Analytics 4 — making it easier to correlate AI visibility data with traffic and conversion metrics.

Prompt Databases Are Growing

Some platforms now maintain databases of millions of real user prompts, moving beyond manual prompt creation toward automated discovery. This shift is significant because it reduces the risk of tracking only prompts you thought to create — rather than the ones buyers actually use.

For teams that evaluated AI visibility tools in 2024 or 2025, revisiting the category is worthwhile. The capabilities available now are substantially more mature, and pricing has become more transparent across most platforms. Teams exploring advanced GEO tools for AI-generated brand mentions will find options that didn’t exist even six months ago.

Building an AI Visibility Measurement Program That Scales

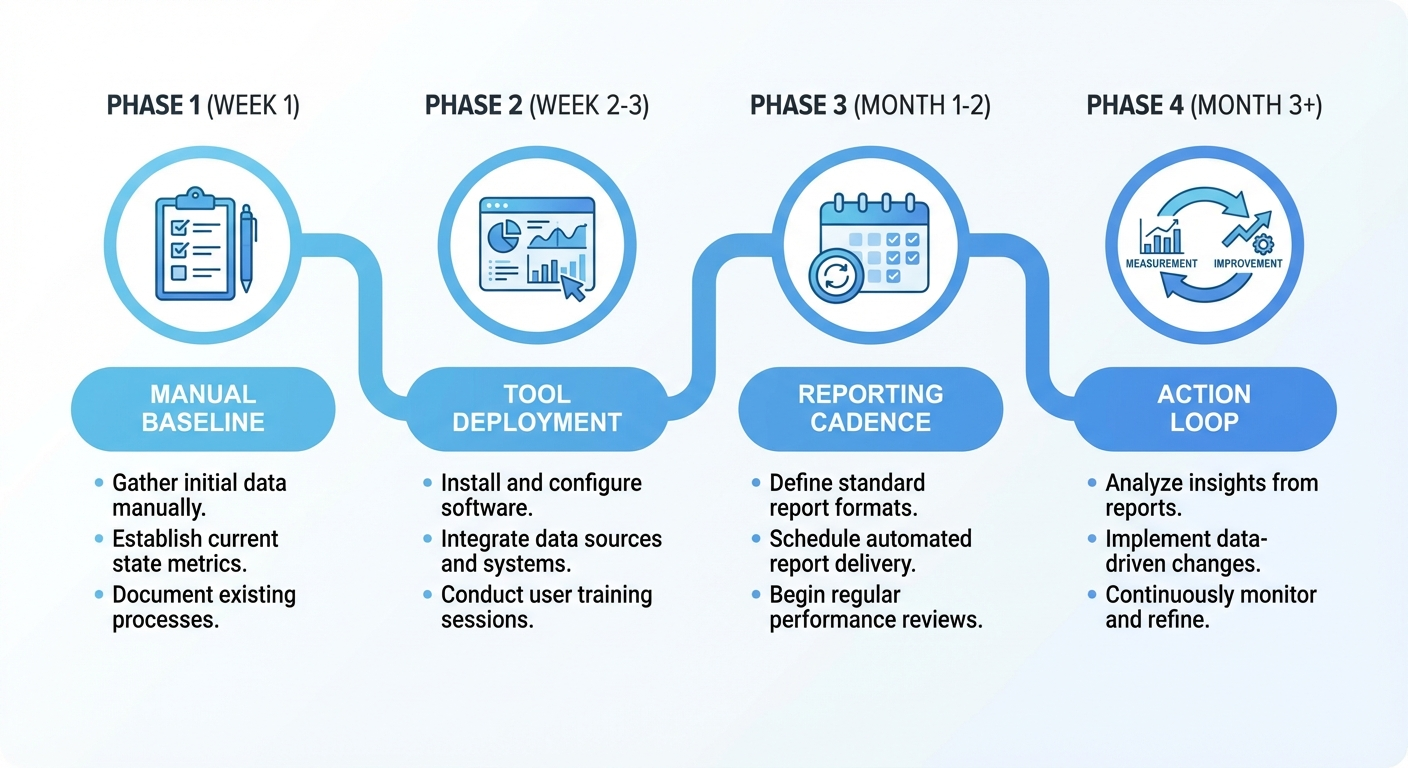

Choosing a tool is one step. Building a sustainable measurement program requires a system — prompts, cadence, reporting structure, and connection to action.

Week 1: Establish Your Baseline

Run your 25–50 prompt library manually across three AI platforms. Document which prompts mention your brand, which mention competitors, which details are accurate, and which are wrong. This manual baseline becomes your calibration reference when evaluating tool accuracy.

Week 2–3: Deploy Your Analytics Tool

Set up your selected platform with the same prompt library. Compare the tool’s results against your manual baseline. If discrepancies exceed 15%, investigate — the tool may have entity disambiguation problems or limited coverage for your category.
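One way to quantify the comparison is the share of prompts where the tool and your manual run disagree about whether your brand was mentioned. That reading of "discrepancy" is an assumption of this sketch, and the data is invented. Because AI responses vary between runs, some disagreement is expected even from a well-behaved tool.

```python
DISCREPANCY_THRESHOLD = 0.15  # the 15% guideline from the text


def discrepancy_rate(manual: dict[str, bool], tool: dict[str, bool]) -> float:
    """Fraction of shared prompts where manual and tool results disagree.

    Both arguments map a prompt to whether the brand was mentioned.
    """
    shared = manual.keys() & tool.keys()
    if not shared:
        raise ValueError("no prompts in common between baseline and tool")
    disagreements = sum(manual[p] != tool[p] for p in shared)
    return disagreements / len(shared)


# Hypothetical baseline: ten prompts, brand mentioned in every other one.
manual_baseline = {f"prompt {i}": i % 2 == 0 for i in range(10)}

# Hypothetical tool output that disagrees on two prompts.
tool_results = dict(manual_baseline)
tool_results["prompt 1"] = True
tool_results["prompt 4"] = False

rate = discrepancy_rate(manual_baseline, tool_results)
print(f"discrepancy: {rate:.0%}")
if rate > DISCREPANCY_THRESHOLD:
    print("Investigate: check entity disambiguation and category coverage.")
```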

Month 1–2: Build Reporting Cadence

Establish a weekly or biweekly review cycle. Track four metrics:

- Share of Answer — percentage of unbranded prompts where your brand appears

- Recommendation Rate — percentage of mentions framed as recommendations (not just neutral listings)

- Accuracy Score — percentage of mentions with factually correct information

- Competitive Gap — difference between your share of answer and your top competitor’s
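The four metrics can be computed from the same set of tracked responses. The sketch below assumes each response to an unbranded prompt has already been labeled for framing and accuracy. The `Result` structure, brand names, and sample data are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Result:
    """One labeled response to an unbranded prompt (hypothetical structure)."""
    brands: set[str]           # brands mentioned in the response
    recommended: bool = False  # your mention is framed as a recommendation
    accurate: bool = False     # your mention is factually correct


def pct(part: int, whole: int) -> float:
    return 100 * part / whole if whole else 0.0


def program_metrics(results: list[Result], brand: str, top_competitor: str) -> dict[str, float]:
    ours = [r for r in results if brand in r.brands]
    share = pct(len(ours), len(results))
    competitor_share = pct(sum(top_competitor in r.brands for r in results), len(results))
    return {
        "share_of_answer": share,
        "recommendation_rate": pct(sum(r.recommended for r in ours), len(ours)),
        "accuracy_score": pct(sum(r.accurate for r in ours), len(ours)),
        "competitive_gap": share - competitor_share,  # negative means you trail
    }


# Hypothetical week of results for eight unbranded prompts.
results = [
    Result({"YourBrand", "CompetitorA"}, recommended=True, accurate=True),
    Result({"YourBrand", "CompetitorA"}, recommended=True, accurate=True),
    Result({"YourBrand"}, recommended=False, accurate=True),
    Result({"YourBrand", "CompetitorB"}, recommended=False, accurate=False),
    Result({"CompetitorA"}),
    Result({"CompetitorA", "CompetitorB"}),
    Result({"CompetitorA"}),
    Result({"CompetitorA"}),
]

metrics = program_metrics(results, "YourBrand", "CompetitorA")
for name, value in metrics.items():
    print(f"{name}: {value:.0f}")
```

Recommendation rate and accuracy score use your own mentions as the denominator, not all prompts, so they stay meaningful even while share of answer is low.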

Month 3+: Connect Measurement to Action

Every visibility gap should map to a specific action:

- Not mentioned for category prompts? → Build category authority through editorial placements on publications AI models reference. Strategic AI brand mention campaigns directly address this gap.

- Mentioned but not recommended? → Strengthen differentiation signals — comparison content, use-case pages, third-party validation.

- Mentioned with inaccurate information? → Update your owned content with clear, structured data (pricing pages, feature matrices, FAQ schemas) and build corrective mentions on authoritative external sources.

- Losing to a specific competitor on certain prompts? → Analyze which sources AI cites for that competitor and develop presence on those same publications.

What’s Still Missing From AI Visibility Analytics in 2026

Despite rapid progress, the category has real limitations worth acknowledging.

Attribution remains imperfect. No tool can yet draw a clean line from “AI mentioned your brand” to “that mention generated $X in pipeline.” The best available approach combines AI visibility data with branded search trends, referral traffic, and self-reported attribution — but it’s an approximation.

Non-deterministic outputs create noise. AI models can generate different answers to identical prompts. A brand mentioned in Monday’s response might be absent from Tuesday’s. Tools handle this through repeated sampling, but variability means you need trend data over weeks, not snapshots from single runs.
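Repeated sampling can be sketched as follows: ask the same prompt many times and report a mention rate with a standard error instead of a single yes or no. The `ask` callable stands in for whatever client you use to query an AI platform. Here it is a stub cycling through canned responses, so nothing in this example calls a real API.

```python
import math
from itertools import cycle
from typing import Callable, Tuple


def sampled_mention_rate(
    ask: Callable[[str], str], prompt: str, brand: str, runs: int = 20
) -> Tuple[float, float]:
    """Ask the same prompt `runs` times; return (mention rate, standard error)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    hits = sum(brand.lower() in ask(prompt).lower() for _ in range(runs))
    rate = hits / runs
    std_error = math.sqrt(rate * (1 - rate) / runs)
    return rate, std_error


# Stub standing in for a real AI platform client (hypothetical responses).
_canned = cycle([
    "Try ExampleCRM or OtherCRM.",
    "OtherCRM is a solid pick.",
    "ExampleCRM fits remote teams well.",
    "Consider OtherCRM.",
])


def fake_ask(prompt: str) -> str:
    return next(_canned)


rate, std_error = sampled_mention_rate(fake_ask, "Best CRM for remote teams?", "ExampleCRM")
print(f"mention rate: {rate:.0%} (standard error {std_error:.0%})")
```

The standard error shrinks with the square root of the number of runs, which is why a single run tells you little and why week-over-week changes smaller than the error are probably noise.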

Training data influence is opaque. We know that content on high-authority publications influences what AI models include in their knowledge. We don’t have precise visibility into which publications carry the most weight for specific models. BrandMentions tracks when major AI models update their training data and times placements to maximize inclusion in each knowledge refresh cycle — but even the most sophisticated approaches work with incomplete information about model internals.

Prompt coverage will always be incomplete. Even tools with databases of millions of prompts can’t capture every question buyers ask. Your tracking is a statistical sample, not a census. Build prompt libraries that represent your most important buyer intents, and accept that peripheral coverage will have gaps.

Acknowledging these limitations isn’t pessimism — it’s the basis for realistic expectations. AI visibility analytics in 2026 provides directional intelligence that’s far better than operating blind, even if it doesn’t yet offer the precision of mature SEO analytics.

Frequently Asked Questions

Do AI visibility analytics tools replace traditional SEO monitoring?

No. AI visibility analytics and SEO monitoring measure different things. SEO tools track where your pages rank in search results. AI visibility tools track what AI platforms say about your brand in synthesized answers. Strong organic search performance feeds AI models with source material, so both measurement layers work together. Most B2B marketing teams in 2026 run both.

How often should I check AI visibility data?

Weekly reviews are sufficient for most B2B teams. Individual AI responses vary from run to run, but the underlying trends shift gradually rather than daily. A weekly cadence gives you enough data points to spot those trends without reacting to natural response variability. After major content updates or publication placements, you may want to check more frequently to measure impact.

Can I track AI visibility for free?

You can manually run prompts through ChatGPT, Perplexity, and Gemini at no cost. This works for establishing a baseline but doesn’t scale. Free tiers from some analytics platforms offer limited prompt tracking — useful for testing whether AI visibility matters for your brand before investing in paid tools. For ongoing measurement across multiple platforms and competitors, paid tools save significant time.

What’s the difference between a brand mention and a brand citation in AI responses?

A brand mention means your brand name appears in the AI-generated text. A citation means the AI response links to or attributes information to a specific source, sometimes your website and sometimes a third party. Mentions indicate awareness. Citations indicate source authority. Both matter, but citations are stronger signals because they show which sources the answer drew on and give readers a path to click through. Learn more about how brand mentions function across AI platforms.

How long does it take to improve AI visibility after starting a measurement program?

Based on data from campaigns across B2B companies, initial improvements typically become measurable within 8–12 weeks of consistent effort — editorial placements on high-authority publications, content optimization for AI readability, and structured data improvements. Significant competitive gains usually require 4–6 months of sustained activity. AI visibility compounds over time as brand-category associations strengthen across model training cycles.

Moving From Measurement to Momentum

AI visibility analytics tools give you something B2B marketing teams never had before: direct insight into how AI platforms represent your brand during buyer research. That insight is valuable. But insight without action is just an expensive dashboard.

The teams seeing real results in 2026 follow a clear pattern: measure their current AI visibility, identify the specific gaps costing them pipeline, build authority through strategic placements on publications AI models trust, and track the impact over time. The analytics layer and the action layer reinforce each other.

If you haven’t yet measured where your brand stands in AI search, start with a manual baseline — 25 prompts, three platforms, 30 minutes. That exercise alone will reveal whether AI is helping your brand or silently redirecting buyers to competitors.

For teams ready to move faster, see where your brand stands in AI search — and what it takes to strengthen your position.