Your next client is asking ChatGPT for a lawyer recommendation right now. They’re not scrolling Google’s page two. They’re reading a short AI-generated answer that names three firms, and your firm either shows up or it doesn’t. AI search optimization for law firms is the work of becoming one of the firms that shows up, built through earned citations in legal publications, structured entity signals, and content that AI models can extract cleanly. This isn’t SEO with a new name. The ranking factors are different, the sources that matter are different, and the ethical guardrails (advertising rules, confidentiality, truthfulness) are different too.

The Short Version

- AI assistants recommend law firms based on citations from legal publications, bar associations, and authoritative directories, not domain authority alone.

- Bar advertising rules (ABA Model Rule 7.1, state variants) still apply to AI-generated mentions. You can’t claim specialist status an AI attributed to you incorrectly.

- Entity clarity (consistent firm name, practice areas, jurisdictions, and attorney bios across the web) is the single biggest lever most firms ignore.

- Practice-area content written for lawyer-level specificity outperforms general “what is [legal topic]” content in AI extractions.

- Tracking AI visibility is different from tracking SERP rankings. You need query-level monitoring across ChatGPT, Perplexity, Gemini, and Google AI Overviews.

Why Law Firms Are Losing the AI Citation Race

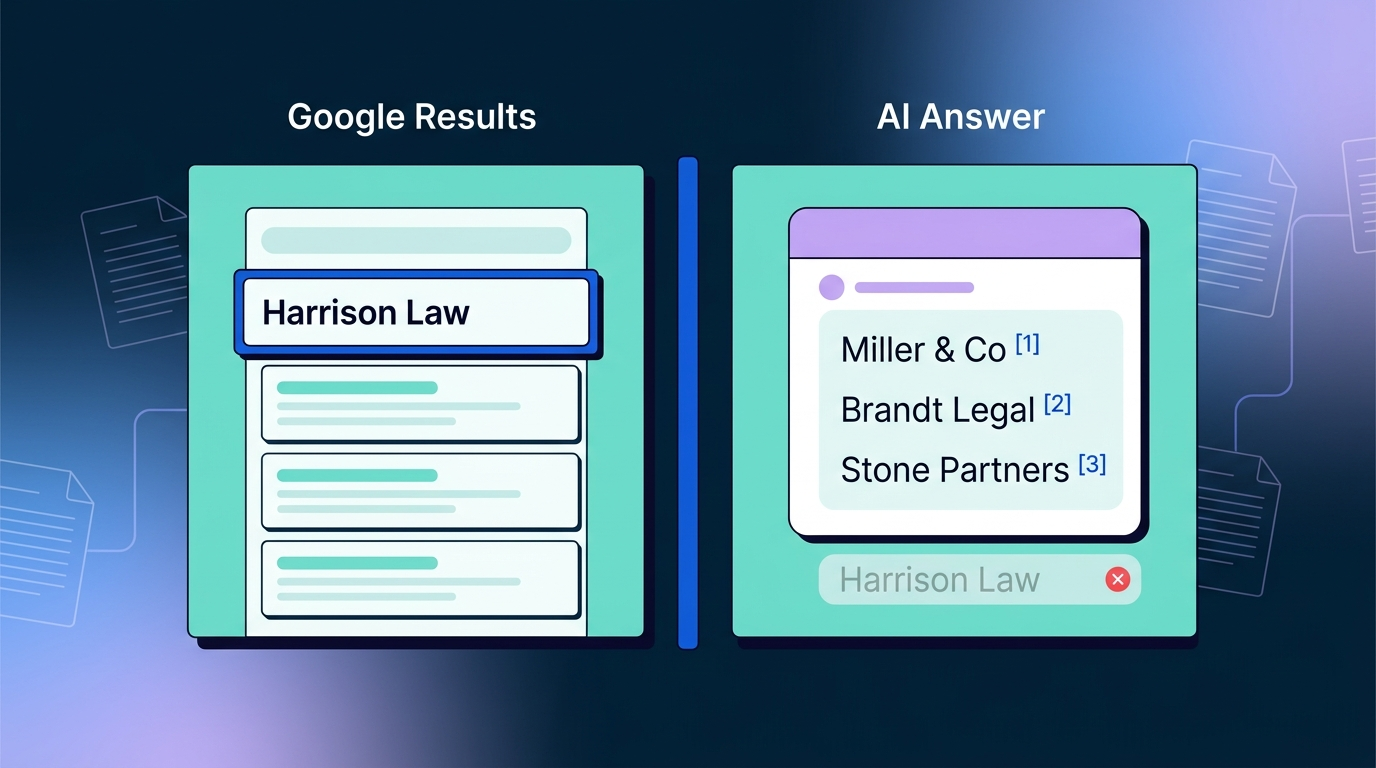

Most firms built their digital presence for one surface: Google organic search. Blog posts targeted keywords. The site had a services page, attorney bios, and maybe a few press mentions. That playbook got firms to page one for “personal injury lawyer [city]” for a decade.

AI assistants don’t read the web the same way. When someone asks Perplexity “who’s the best estate planning attorney in Denver for blended families,” the model isn’t ranking 10 blue links. It’s composing an answer from a handful of sources it trusts, usually a mix of legal publications (Law360, ABA Journal, state bar magazines), directories with editorial review (Super Lawyers, Best Lawyers, Chambers), and long-form content that directly answers the specific scenario.

If your firm’s name doesn’t appear in that source pool, you’re invisible in the answer. It doesn’t matter that you rank #3 on Google.

Here’s the uncomfortable part: the firms winning AI citations today aren’t necessarily the ones with the biggest budgets. They’re the ones with the clearest entity signals, the most earned coverage in legal publications, and content that AI models can extract without hallucinating.

The Five Signals AI Models Use to Pick Law Firms

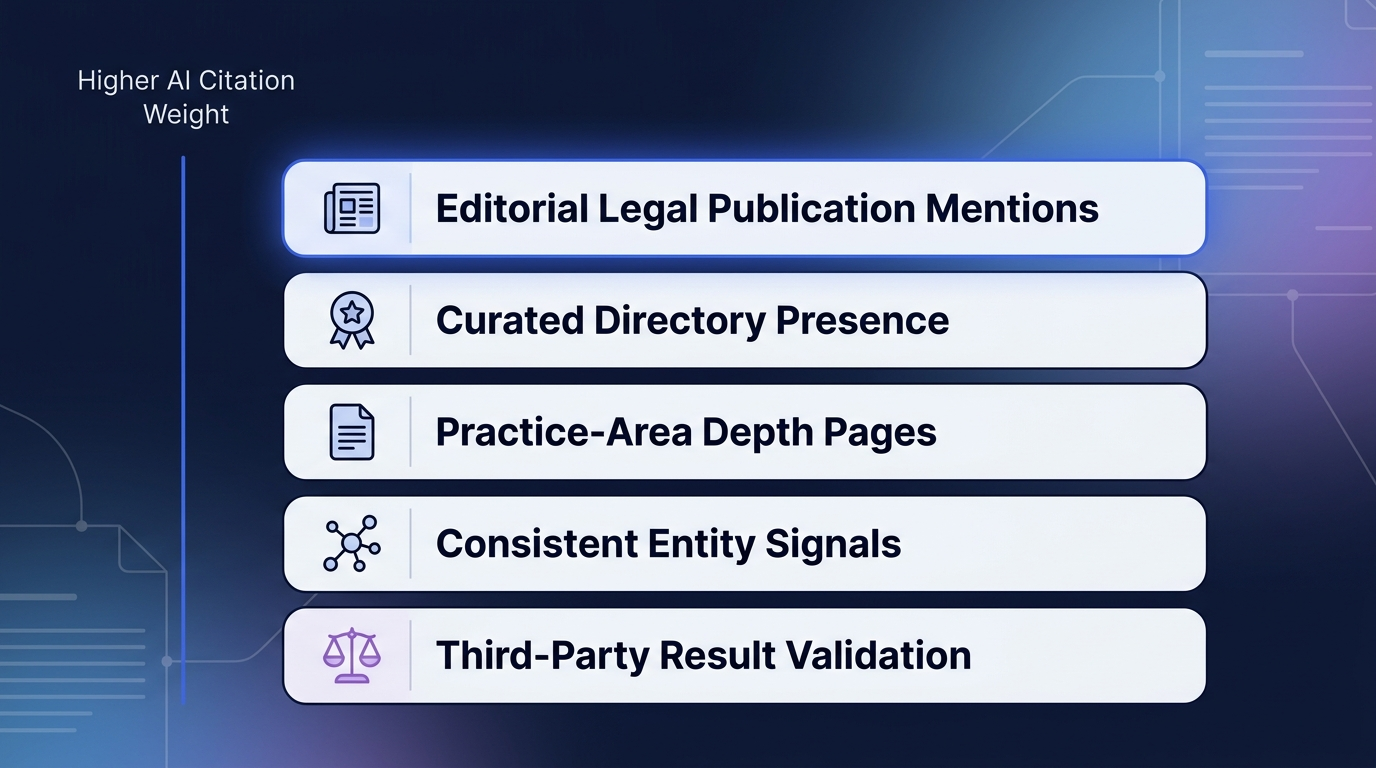

After reviewing how ChatGPT, Perplexity, Gemini, and Google AI Overviews cite firms in actual queries (family law, personal injury, M&A, immigration, IP), a pattern holds. Five signals do most of the work.

1. Editorial Mentions in Legal Publications

Law360, Bloomberg Law, ABA Journal, American Lawyer, state bar magazines, Above the Law, JD Supra, and the legal sections of Reuters and the WSJ. These aren’t just PR targets; they’re training-data sources for every major LLM. A firm quoted as a practice-area source in three of these publications will outperform a firm with 50 blog posts optimized for Google.

2. Directory Presence With Editorial Review

Not every directory matters. The ones AI models weight heavily are the ones with editorial selection: Chambers USA, Best Lawyers, Super Lawyers, Martindale-Hubbell (AV Preeminent specifically), and Benchmark Litigation. Avvo and Justia carry less weight in AI answers but still contribute to entity confirmation.

3. Practice-Area Depth on Your Own Site

AI models extract specific answers. A page titled “Business Litigation” that lists five bullet points loses to a page titled “Breach of Fiduciary Duty Claims in Delaware Chancery Court” that walks through the actual elements, recent rulings, and procedural posture. Specificity is the moat.

4. Consistent Entity Signals Across the Web

Your firm name, attorney names, bar admissions, office addresses, and practice areas should match, exactly, across your site, Google Business Profile, legal directories, bar association listings, and editorial mentions. AI models build entity graphs. Inconsistency (Smith & Jones LLP vs. Smith Jones LLP vs. The Smith Jones Firm) fragments your graph and weakens citation confidence.
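A quick way to spot fragmentation is to collect the firm name exactly as it appears on each listing and group the spellings. The sketch below is a rough heuristic, not how any AI model resolves entities: the stop-word list, the `normalize_firm_name` helper, and the sample listings are all illustrative assumptions.

```python
import re
from collections import defaultdict

# Words ignored when comparing names. Illustrative list; adjust for your firm.
STOP_WORDS = {"the", "and", "llp", "llc", "pllc", "pc", "pa", "firm", "law", "group", "offices", "of"}

def normalize_firm_name(name: str) -> str:
    """Reduce a firm name to a rough comparison key."""
    key = name.lower().replace("&", " and ")
    key = re.sub(r"[^a-z0-9 ]", " ", key)  # drop punctuation
    return " ".join(t for t in key.split() if t not in STOP_WORDS)

def find_name_variants(listings: dict[str, str]) -> dict[str, dict[str, list[str]]]:
    """Map each normalized key to {exact spelling: [sources]}, keeping only
    keys that appear under more than one spelling."""
    groups = defaultdict(lambda: defaultdict(list))
    for source, name in listings.items():
        groups[normalize_firm_name(name)][name].append(source)
    return {key: dict(spellings) for key, spellings in groups.items() if len(spellings) > 1}

# Hypothetical audit data: source -> firm name exactly as listed there.
listings = {
    "firm website": "Smith & Jones LLP",
    "Google Business Profile": "Smith Jones LLP",
    "state bar listing": "The Smith Jones Firm",
    "directory profile": "Smith & Jones LLP",
}

variants = find_name_variants(listings)
for key, spellings in variants.items():
    print(f"{key!r} appears under {len(spellings)} spellings:")
    for spelling, sources in spellings.items():
        print(f"  {spelling}: {', '.join(sources)}")
```

Any key that comes back with more than one spelling is a cleanup task: pick the canonical form and update the other listings to match it.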

5. Third-Party Validation of Results

Verdict reports, settlement announcements, and case commentary published by someone other than you. A $4.2M verdict written up in the Daily Journal carries more weight than the same verdict written up in your firm’s newsroom. AI models are trained to discount self-reported claims.

What to Build on Your Own Site First

Before you earn a single new mention, fix what’s yours. This is where most firms leak the most citation opportunity.

Practice-Area Pages Written for Specific Scenarios

Your “Family Law” page is doing nothing for AI visibility. Break it into the actual questions clients ask: “High-Asset Divorce in [State],” “Relocation Disputes After Custody Orders,” “Modifying Spousal Support After Job Loss.” Each page should open with a direct 40–80 word answer to the specific question, then expand into the legal framework, procedural reality, and what typically happens next. AI models extract these opening paragraphs cleanly.
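If you want to check drafts against the 40–80 word target before they go live, a small script is enough. This is a minimal sketch that assumes the page text has already been stripped of its heading and that paragraphs are separated by blank lines; the function names are made up for illustration.

```python
def opening_paragraph(page_text: str) -> str:
    """Return the first non-empty paragraph (paragraphs split on blank lines)."""
    for paragraph in page_text.split("\n\n"):
        if paragraph.strip():
            return paragraph.strip()
    return ""

def opening_word_count(page_text: str) -> int:
    """Count whitespace-separated words in the opening paragraph."""
    return len(opening_paragraph(page_text).split())

def opening_in_target_range(page_text: str, low: int = 40, high: int = 80) -> bool:
    """True when the opening paragraph falls inside the target word range."""
    return low <= opening_word_count(page_text) <= high

# Hypothetical draft whose opening is too short to answer anything.
draft = "Short intro that never answers the question.\n\nLonger body text follows here."
print(opening_word_count(draft), opening_in_target_range(draft))
```

Word count only tells you the opening is the right size. Whether it actually answers the question still needs an attorney’s read.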

Attorney Bios With Structured Entity Data

Name, bar admissions (with years), law school, practice areas, notable matters, publications, speaking engagements, LinkedIn URL. Structured. Consistent. Every attorney. This is the single cheapest lift with the highest entity-graph payoff.
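One way to keep every bio consistent is to store the fields once and generate the structured data from them. The sketch below emits schema.org JSON-LD for an individual attorney. It models the attorney as a `Person`, because schema.org’s `Attorney` type sits under `LegalService` and describes a practice rather than an individual. The `AttorneyBio` class, the property choices, and the sample attorney are assumptions for illustration, so validate the output before publishing.

```python
import json
from dataclasses import dataclass, field

@dataclass
class AttorneyBio:
    """Hypothetical record holding the bio fields listed above."""
    name: str
    firm: str
    law_school: str
    bar_admissions: list[tuple[str, int]]  # (jurisdiction, year admitted)
    practice_areas: list[str]
    profile_urls: list[str] = field(default_factory=list)

def bio_to_jsonld(bio: AttorneyBio) -> dict:
    """Build a schema.org Person description from one bio record."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": bio.name,
        "jobTitle": "Attorney",
        "worksFor": {"@type": "LegalService", "name": bio.firm},
        "alumniOf": {"@type": "CollegeOrUniversity", "name": bio.law_school},
        "knowsAbout": bio.practice_areas,
        "hasCredential": [
            {
                "@type": "EducationalOccupationalCredential",
                "credentialCategory": "Bar admission",
                "name": f"{jurisdiction} bar admission ({year})",
            }
            for jurisdiction, year in bio.bar_admissions
        ],
        "sameAs": bio.profile_urls,
    }

# Made-up attorney used only to show the output shape.
example_bio = AttorneyBio(
    name="Jane Doe",
    firm="Smith & Jones LLP",
    law_school="Example University School of Law",
    bar_admissions=[("Colorado", 2012)],
    practice_areas=["Estate planning", "Trust administration"],
    profile_urls=["https://www.linkedin.com/in/example"],
)

bio_jsonld = bio_to_jsonld(example_bio)
print(json.dumps(bio_jsonld, indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the bio page. Generating it from one record per attorney means the name, firm, and admissions can’t drift between pages.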

Real FAQ Content for Jurisdictional Queries

“How long does a personal injury case take in New York?” “What’s the statute of limitations for medical malpractice in Texas?” These are the exact queries people type into AI assistants. Answer them with lawyer-level accuracy on your site, and AI models will cite you when they appear.
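For FAQ pages, the matching structured data is schema.org’s `FAQPage` type. Here is a minimal sketch that turns question-and-answer pairs into JSON-LD. The `faq_jsonld` helper is hypothetical, and the answer text is a placeholder on purpose: the real answer should be written and reviewed by an attorney licensed in that jurisdiction.

```python
import json

def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }

# Placeholder answer text; replace with attorney-reviewed content.
faq_pairs = [
    (
        "How long does a personal injury case take in New York?",
        "[Attorney-reviewed answer goes here]",
    ),
]

faq_data = faq_jsonld(faq_pairs)
print(json.dumps(faq_data, indent=2))
```

Keep the markup text identical to the visible answer on the page, so the structured data never says something the page doesn’t.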

Schema Markup That Lawyers Actually Implement

LegalService schema, Attorney schema, FAQPage schema on FAQ pages, HowTo schema on procedural guides, and Article schema on your commentary pieces. In campaigns we’ve run with law firm clients, adding proper Attorney schema to bio pages correlated with the firm’s attorneys appearing in AI answers about specialist areas within 6–10 weeks. Schema alone doesn’t make you citable, but without it, you’re invisible to the parsers that build AI knowledge graphs.

An llms.txt File

A simple llms.txt at your root tells AI crawlers which pages matter for your firm’s entity. List your practice-area pages, attorney bios, and key resources. It’s a low-effort signal that’s becoming a default expectation.
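The llms.txt proposal describes a plain markdown file: a title line, a short summary in a blockquote, then sections of links. A sketch of generating one from a list of pages follows. The `build_llms_txt` helper, the section names, and the example.com URLs are placeholders, so check the current proposal before relying on the exact layout.

```python
def build_llms_txt(firm_name: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link sections.

    `sections` maps a heading to (link title, URL, short note) tuples.
    """
    lines = [f"# {firm_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"

# Hypothetical firm and URLs, for illustration only.
llms_txt = build_llms_txt(
    firm_name="Smith & Jones LLP",
    summary="Family law and estate planning firm admitted in Colorado.",
    sections={
        "Practice areas": [
            ("High-Asset Divorce", "https://example.com/family-law/high-asset-divorce",
             "Scenario page for high-asset divorce matters"),
        ],
        "Attorneys": [
            ("Jane Doe", "https://example.com/attorneys/jane-doe",
             "Bio with bar admissions and publications"),
        ],
    },
)
print(llms_txt)
```

Save the output as `llms.txt` at the site root and regenerate it whenever you add a practice-area page or attorney bio.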

Earning Citations From Publications AI Models Actually Read

This is the hardest part and the part that compounds. You’re not writing guest posts on marketing blogs. You’re becoming a cited source in legal journalism and authoritative legal publishing.

Three channels do most of the work:

Being a Source for Legal Journalists

Law360, Reuters Legal, Bloomberg Law, and the WSJ law section quote practicing attorneys for practice-area commentary. Getting on their source lists requires a simple, unsexy practice: responding fast, having a clear point of view, and being quotable without being self-promotional. Sign up for journalist-query services such as HARO, Qwoted, and Connectively, after checking which are currently accepting sign-ups. Track which reporters cover your practice area. Build relationships by responding to their queries with actual analysis, not pitches.

Publishing Commentary on JD Supra and Law.com Affiliates

JD Supra is the single most-indexed legal commentary platform for AI training data. Firms publishing substantive practice-area analysis there (real analysis of new rulings or regulatory changes, not thin blog posts) get cited in AI answers disproportionately. The ALM network (Law.com state affiliates, Daily Business Review, National Law Journal) plays a similar role.

Bar Association and CLE Authority Signals

Speaking at state bar conferences, chairing committees, authoring CLE materials, and contributing to bar journal articles. These carry weight in AI citations because bar association domains are treated as authoritative by every major LLM. An article in your state bar journal often outperforms a Forbes mention for AI visibility in your practice area.

The Ethics Layer Most AI Guides Skip

Everything above has to pass the bar. Every state has advertising rules, and ABA Model Rule 7.1 prohibits false or misleading communications about a lawyer or their services. When you optimize for AI visibility, you take on new risks that generic SEO guides don’t address.

| Scenario | Risk | What to Do |
|---|---|---|
| AI assistant calls your attorney a “specialist” in an area where your state bar prohibits that claim | Rule 7.4 violation if you share/amplify the output | Don’t screenshot or promote AI outputs that use prohibited language. Correct your source content if the AI pulled the claim from your site. |
| AI hallucinates case outcomes or client results attributed to your firm | False/misleading communication under Rule 7.1 | Monitor AI mentions. Document inaccuracies. Don’t let fabricated results stay uncorrected in sources you control. |
| AI pulls confidential client information into an answer about your firm | Rule 1.6 confidentiality breach | Audit every public case study, verdict report, and testimonial for consent and privilege before publishing. |
| AI recommendation appears in a jurisdiction where the attorney isn’t admitted | Unauthorized practice / Rule 5.5 concern | Make jurisdictional limits explicit on every practice-area page. AI models extract these disclaimers. |

You can’t control what ChatGPT says about your firm. You can control the inputs it learns from, and you can monitor the outputs and act when something crosses the line.
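Monitoring outputs can start as a simple text scan over answers you’ve saved. The sketch below flags sentences that name the firm and also use a watch-list term. The term list is a made-up starting point, not a statement of what any bar prohibits, and a flag only means “have someone who knows your state’s rules read this.”

```python
import re

# Hypothetical watch list; replace with language your state's rules restrict.
WATCH_TERMS = ["specialist", "specializes", "expert", "guaranteed"]

def flag_restricted_language(ai_answer: str, firm_aliases: list[str],
                             terms: list[str] = WATCH_TERMS) -> list[str]:
    """Return sentences that mention the firm and contain a watch-list term."""
    # Naive sentence split on ., !, or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", ai_answer.strip())
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, terms)) + r")\b", re.IGNORECASE)
    flagged = []
    for sentence in sentences:
        mentions_firm = any(alias.lower() in sentence.lower() for alias in firm_aliases)
        if mentions_firm and pattern.search(sentence):
            flagged.append(sentence)
    return flagged

# Made-up assistant answer, pasted in by hand.
saved_answer = (
    "Smith & Jones LLP is a certified specialist in estate planning. "
    "Another firm handles probate. "
    "Smith & Jones LLP has offices in Denver."
)

flagged = flag_restricted_language(saved_answer, ["Smith & Jones LLP", "Smith Jones"])
for sentence in flagged:
    print("REVIEW:", sentence)
```

Keyword matching will miss paraphrases and won’t catch fabricated case results at all, so treat it as a first filter on top of a human read.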

Tracking AI Visibility Without Losing Your Weekends

SERP rank tracking won’t tell you anything useful about AI visibility. You need query-level monitoring: a defined set of questions a potential client would ask, run regularly across ChatGPT, Perplexity, Gemini, and Google AI Overviews, with the firm’s appearance (or absence) logged over time.

Build your query set around:

- Practice-area + jurisdiction queries: “best M&A lawyer in Boston,” “top immigration attorney San Diego”

- Scenario queries: “who do I hire for a will contest in Florida,” “lawyer for workplace harassment after retaliation”

- Comparative queries: “[your firm] vs [competitor]” (these reveal how AI positions you)

- Branded queries: “is [your firm] good for [practice area]” (these reveal citation gaps)

Run the set monthly at minimum. Log the firms cited, the sources cited, and the language used. Patterns emerge within 60–90 days: which practice areas you own, which you’re losing, and which sources you need to get into.
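The log itself doesn’t need special tooling. Here is a minimal sketch of one way to structure it, assuming you paste each answer in by hand or from an export. It doesn’t call any assistant’s API, and the `QueryRun` fields and sample answers are illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date

@dataclass
class QueryRun:
    """One query run against one assistant (hypothetical log record)."""
    run_date: date
    assistant: str        # e.g. "ChatGPT", "Perplexity"
    query: str
    answer_text: str      # pasted or exported answer
    sources: list[str]    # domains the answer cited, if shown

def firm_cited(run: QueryRun, firm_aliases: list[str]) -> bool:
    """True if any known spelling of the firm name appears in the answer."""
    text = run.answer_text.lower()
    return any(alias.lower() in text for alias in firm_aliases)

def citation_rate_by_assistant(runs: list[QueryRun], firm_aliases: list[str]) -> dict[str, float]:
    """Share of logged queries per assistant in which the firm appeared."""
    hits, totals = defaultdict(int), defaultdict(int)
    for run in runs:
        totals[run.assistant] += 1
        hits[run.assistant] += int(firm_cited(run, firm_aliases))
    return {assistant: hits[assistant] / totals[assistant] for assistant in totals}

# Made-up log entries for illustration.
runs = [
    QueryRun(date(2025, 1, 6), "ChatGPT", "best M&A lawyer in Boston",
             "Firms often mentioned include Smith & Jones LLP.", ["example.com"]),
    QueryRun(date(2025, 1, 6), "ChatGPT", "top immigration attorney San Diego",
             "Several other firms are commonly recommended.", []),
    QueryRun(date(2025, 1, 6), "Perplexity", "best M&A lawyer in Boston",
             "Smith Jones LLP is cited by one directory.", ["example.org"]),
]

rates = citation_rate_by_assistant(runs, ["Smith & Jones LLP", "Smith Jones LLP"])
print(rates)
```

Passing every known spelling of the firm name as an alias matters here: if assistants use a variant you didn’t list, the log will undercount you.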

A Realistic 90-Day Plan

Days 1–30: Foundation

Audit your current AI visibility across 25–50 practice-area queries. Fix entity inconsistencies across your site, Google Business Profile, and top five legal directories. Break general practice-area pages into scenario-specific pages. Add LegalService, Attorney, and FAQPage schema. Publish or update attorney bios with full structured data.

Days 31–60: Authority Building

Register for HARO, Qwoted, and Connectively. Identify 10 legal journalists covering your practice area and start responding to their queries with substantive analysis. Publish two in-depth commentary pieces on JD Supra on recent rulings or regulatory changes in your area. Submit for Super Lawyers and Best Lawyers if eligible. Create or update your llms.txt file.

Days 61–90: Compounding

Pitch a bar journal article or CLE presentation. Land a second round of legal publication mentions through journalist relationships built in month two. Re-run your query set monitoring and compare to baseline. Identify the two practice areas showing the most citation growth and double down there.
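Comparing the re-run to baseline can be as simple as subtracting counts per practice area. A small sketch, assuming you’ve tallied how many queries in each practice area mentioned the firm in each round; the numbers are invented.

```python
def top_growth_areas(baseline: dict[str, int], latest: dict[str, int], n: int = 2) -> list[tuple[str, int]]:
    """Rank practice areas by change in citation count, largest gain first.

    Areas missing from one round count as zero; ties break alphabetically.
    """
    areas = set(baseline) | set(latest)
    growth = {area: latest.get(area, 0) - baseline.get(area, 0) for area in areas}
    return sorted(growth.items(), key=lambda item: (-item[1], item[0]))[:n]

# Invented counts: queries (out of the same fixed set) that cited the firm.
baseline_counts = {"estate planning": 2, "family law": 5, "business litigation": 1}
day_90_counts = {"estate planning": 7, "family law": 6, "business litigation": 5}

focus_areas = top_growth_areas(baseline_counts, day_90_counts)
print(focus_areas)
```

This only works if both rounds use the same query set. Change the queries and you’re measuring the new questions, not your progress.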

Results aren’t linear. You might see nothing for 8 weeks and then a noticeable citation jump as AI models refresh. That’s normal. The firms that quit at week 6 are the firms that stay invisible.

Where Most Firms Waste Their First Six Months

A few patterns we see repeatedly:

Publishing thin blog posts hoping volume solves it. Twenty 800-word posts on general legal topics do less than one 2,500-word analysis of a recent appellate decision in your practice area. Depth wins. Volume loses.

Chasing high-DA placements that AI models don’t index. A link on a generalist SaaS blog does nothing for AI legal visibility. A quote in ABA Journal does. Know the difference before you spend.

Ignoring attorney bios. Bios are the most citation-rich pages on most firm sites, and most firms treat them like LinkedIn summaries. They should read like a cited expert’s CV: bar admissions, notable matters, publications, speaking, committees.

Treating AI visibility as a marketing line item. It isn’t. It’s a firm-wide discipline that touches PR, content, bios, website architecture, and ethics compliance. Firms that silo it into the marketing team and expect results at 90 days are the ones still invisible at month twelve.

Frequently Asked Questions

How is AI search optimization for law firms different from traditional legal SEO?

Traditional legal SEO targets Google rankings through keywords, backlinks, and on-page optimization. AI search optimization targets being cited as a source in AI-generated answers from ChatGPT, Perplexity, Gemini, and Google AI Overviews. The tactics overlap (quality content, authoritative mentions, technical cleanliness), but the source pool that matters is different. AI models weight legal publications, curated directories, and editorial mentions more heavily than domain authority alone.

Do state bar advertising rules apply to AI-generated mentions of my firm?

Yes. If an AI assistant describes your firm in a way that would violate ABA Model Rule 7.1 or your state’s equivalent, and you amplify or endorse that output (by sharing it, republishing it, or linking to it), you can be held responsible. You’re also responsible for correcting source content on your own site or in materials you control that feed into those AI outputs. Consult your state bar’s guidance on AI-generated advertising; several states have issued formal opinions since 2024.

How long does AI visibility for a law firm actually take?

First signals typically appear between 8 and 14 weeks after coordinated work begins, assuming the firm is publishing substantive commentary, earning legal publication mentions, and fixing entity signals. Meaningful, sustained citation presence usually takes 6–9 months. AI models refresh their training and retrieval layers on different cycles, which is why results arrive in jumps rather than a straight line.

Which AI assistant matters most for law firms?

Perplexity and ChatGPT drive the most referral behavior for consumer-facing legal queries today, while Google AI Overviews influence the largest total search volume. For B2B legal work (corporate counsel searching for outside counsel), ChatGPT and Perplexity dominate. Track all four. Don’t optimize for one at the expense of the others; the sources that earn citations on one usually earn them on the others too.

Can I pay for AI visibility the way I pay for Google Ads?

No, and this is a trap worth naming. There is no sponsored placement in ChatGPT or Perplexity answers. Anyone selling “guaranteed AI citations” is either gaming short-term prompts in ways that will get penalized or misrepresenting what they actually do. Real AI visibility comes from earned citations, entity clarity, and substantive content, not ad spend.

The Work Starts With the Sources

If you take one thing from this, take this: AI assistants recommend firms they can trace back to credible sources. The firms winning citations aren’t the loudest, the flashiest, or the ones with the biggest marketing budgets. They’re the ones legal journalists quote, bar associations feature, curated directories include, and specific clients can find through specific scenarios.

Pick one practice area. Run 20 queries across ChatGPT, Perplexity, and Google AI Overviews this week. See who’s cited. That’s your gap, and that’s your roadmap. Want to go deeper on the tactics that earn citations? Start with our guide on generative engine optimization, then work through entity SEO and editorial link building to connect the whole system.