Most teams treat AI Overviews like a snippet competition. They rewrite the first paragraph, sprinkle in a definition, and wait. Months go by. Nothing happens. The brands actually showing up in AI Overviews aren’t winning a snippet game. They’re winning a citation game, and the rules look almost nothing like classic SEO.

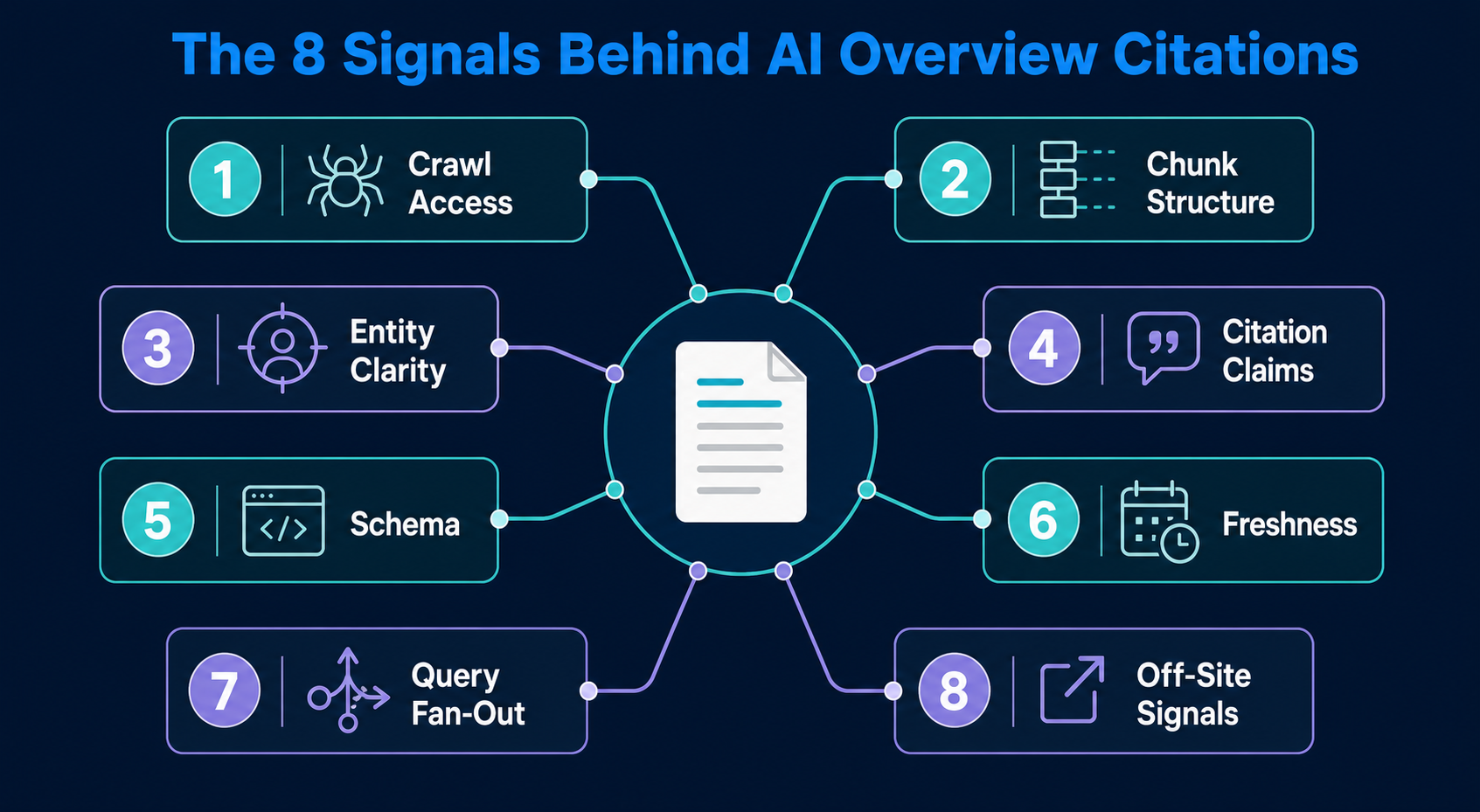

This is the AI overview optimization checklist we use when auditing pages that should be cited but aren’t. It covers the eight signals Google’s generative layer weighs before pulling a passage: crawl access, chunk-level structure, entity clarity, citation-worthy claims, schema accuracy, freshness, query fan-out coverage, and authority signals from off-site mentions. Each item is concrete. Each one has a way to verify it. None of it is theoretical.

If you’ve been optimizing for AI Overviews and getting silence back, the gap is almost always in one of these eight places.

What This Checklist Covers

- The eight signals Google’s AI Overview layer weighs before citing a page

- How to structure content so it can be retrieved at the chunk level, not the page level

- Why most schema implementations don’t help, and the specific markup that does

- How query fan-out changes what “comprehensive” means in 2026

- The off-site signals that decide whether your page is even eligible for citation

- A 24-hour audit you can run on any page to find which signal is missing

Signal 1: Crawl Access for Google’s AI Layer

The first failure point is the dullest one. If Google’s crawlers can’t reach your content, or can only reach a JavaScript shell, you don’t enter the candidate pool for AI Overviews. Period.

Run these checks:

- Googlebot is allowed in robots.txt with no crawl-delay directive on key URLs

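- Google-Extended is allowed. This checklist treats the token as controlling AI training use and AI Overview eligibility; Google’s own documentation describes it as a control for Gemini training and grounding, so confirm the effect on your own pages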

- Critical content renders server-side. View source and search for your H2 text. If it’s not in the raw HTML, AI retrieval treats the page as empty

- No noindex tags on pages you want cited

- Canonical tags point to themselves, not to a parent or homepage
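The raw-HTML items in this list (server-side rendering, noindex, self-referencing canonical) can be checked together. The sketch below uses only Python's standard `html.parser`. The class and function names, and the two sample pages, are hypothetical illustrations and not part of any Google tooling. It assumes you have already saved the page source as a string.

```python
from html.parser import HTMLParser


class RawHtmlAudit(HTMLParser):
    """Collects H2 text, robots meta directives, and the canonical URL."""

    def __init__(self):
        super().__init__()
        self.h2_texts = []
        self.noindex = False
        self.canonical = None
        self._in_h2 = False

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "h2":
            self._in_h2 = True
            self.h2_texts.append("")
        elif tag == "meta" and attr.get("name", "").lower() == "robots":
            if "noindex" in attr.get("content", "").lower():
                self.noindex = True
        elif tag == "link" and attr.get("rel", "").lower() == "canonical":
            self.canonical = attr.get("href")

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_h2 = False

    def handle_data(self, data):
        # Only text inside an <h2> counts; text inside <script> is ignored.
        if self._in_h2:
            self.h2_texts[-1] += data


def audit_raw_html(raw_html, page_url, expected_h2):
    parser = RawHtmlAudit()
    parser.feed(raw_html)
    return {
        "h2_in_raw_html": any(expected_h2.lower() in h.lower() for h in parser.h2_texts),
        "noindex": parser.noindex,
        "self_canonical": parser.canonical == page_url,
    }


# Hypothetical samples: a JavaScript shell and a server-rendered page.
JS_SHELL = (
    '<html><head><link rel="canonical" href="https://example.com/"></head>'
    '<body><div id="root"></div><script>render("Chunk-Level Structure")</script></body></html>'
)
SSR_PAGE = (
    '<html><head><link rel="canonical" href="https://example.com/guide"></head>'
    "<body><h2>Signal 2: Chunk-Level Structure</h2></body></html>"
)

print(audit_raw_html(JS_SHELL, "https://example.com/guide", "Chunk-Level Structure"))
print(audit_raw_html(SSR_PAGE, "https://example.com/guide", "Chunk-Level Structure"))
```

The shell page fails two checks: the heading exists only inside a script, and the canonical points at the homepage. The check reads raw source, not the rendered DOM.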

The Google-Extended check trips up most teams. Plenty of sites blocked it in 2026 thinking they were protecting content, then forgot. Pages that block Google-Extended are still indexed for organic search but become ineligible for AI Overview citation. Check your robots.txt right now. If you see `User-agent: Google-Extended` followed by `Disallow: /`, that’s why your page never gets cited.

In our citation audits, this single line of robots.txt is the most common blocker we find on pages that rank organically but never appear in AI Overviews.
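A quick way to run the robots.txt check is Python's standard `urllib.robotparser`. This is a minimal sketch: the `blocked_agents` helper and the sample file are hypothetical, and it parses a robots.txt string you have already downloaded. It only reports which tokens are disallowed for a URL. Whether a Google-Extended block affects AI Overview citation is this checklist's working assumption, not something the parser can confirm.

```python
from urllib.robotparser import RobotFileParser


def blocked_agents(robots_txt, url, agents=("Googlebot", "Google-Extended")):
    """Return the user-agent tokens that robots_txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt with the block described above.
SAMPLE_ROBOTS = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_agents(SAMPLE_ROBOTS, "https://example.com/guide"))
```

An empty list means neither token is blocked for that URL. Run it against each key URL, since rules can differ by path.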

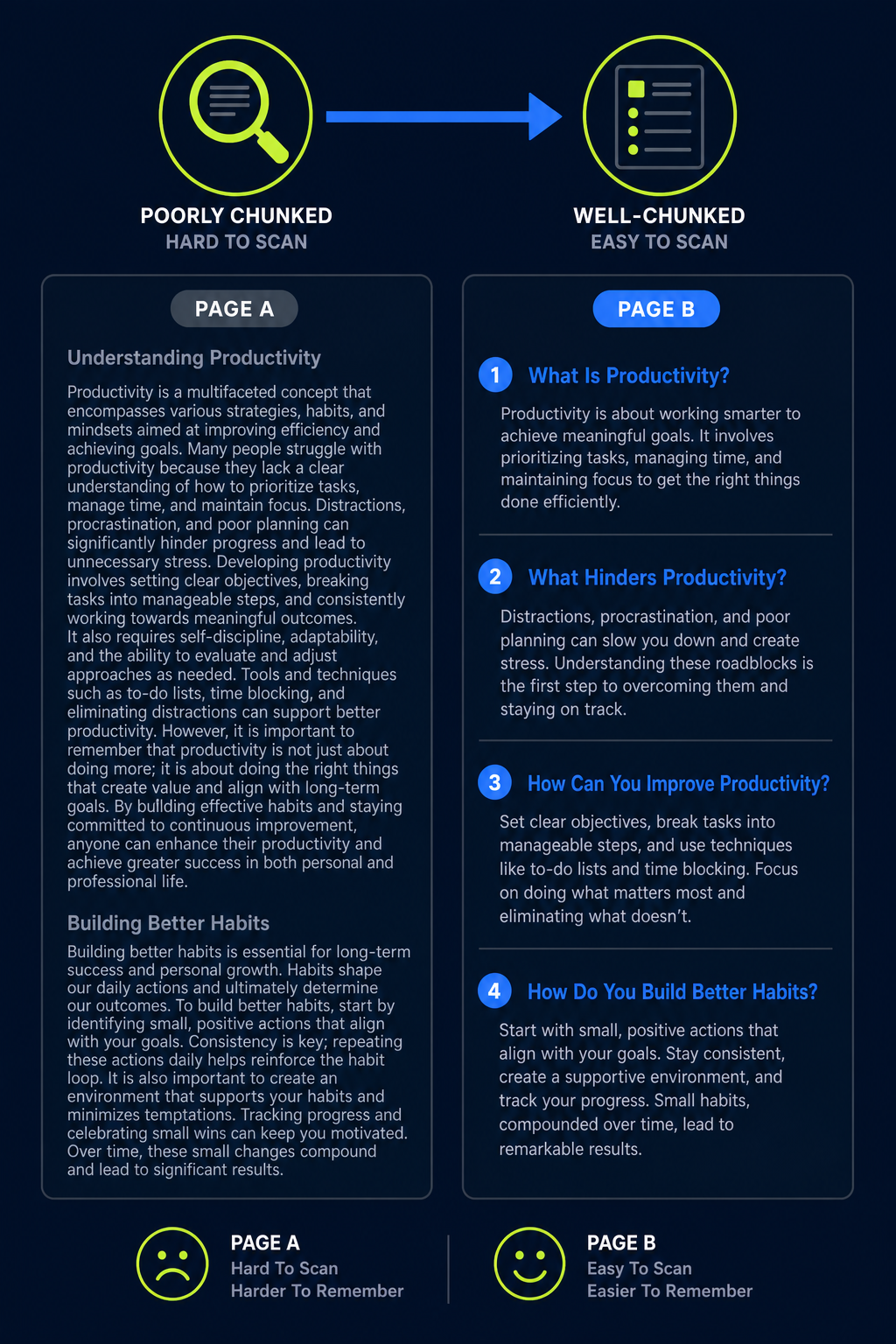

Signal 2: Chunk-Level Structure

Google’s AI Overview layer doesn’t read your page. It reads passages: chunks of 50 to 200 words that can stand on their own and answer a specific sub-question. If your content only makes sense when read top to bottom, it’s invisible to chunk retrieval.

What a retrievable chunk looks like

A retrievable chunk has three properties:

- It opens with a direct answer, not a transition or context-setting sentence

- It contains the entity by name, not a pronoun referring back to a previous section

- It resolves the sub-question fully: a reader landing only on that paragraph would understand it

Compare these two openings to a section on schema markup:

Bad chunk: “As we discussed above, this matters because it helps search engines understand your content. The same logic applies here.”

Good chunk: “Schema markup is structured data that tells search engines what each element on a page represents: a product, an article, a person, a question. AI Overviews use schema to verify that the visible content matches what the page claims to be about.”

The second one survives extraction. The first one collapses the moment it leaves its surrounding context.
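The three properties can be turned into a rough lint for a single passage. The sketch below is a heuristic, not a model of how Google extracts passages: the `chunk_issues` function, the back-reference phrase list, and the issue labels are all hypothetical, and the 50 to 200 word range is taken from the description above.

```python
import re

# Hypothetical phrases that signal the passage leans on earlier context.
BACK_REFERENCES = ("as we discussed", "as mentioned", "as noted", "the same logic")


def chunk_issues(chunk, entity, min_words=50, max_words=200):
    """Return labels for the retrievability properties the chunk fails."""
    issues = []
    word_count = len(chunk.split())
    if not min_words <= word_count <= max_words:
        issues.append("length")
    if entity.lower() not in chunk.lower():
        issues.append("entity_missing")
    # Inspect only the opening sentence for back-references.
    first_sentence = re.split(r"(?<=[.!?])\s+", chunk.strip(), maxsplit=1)[0].lower()
    if any(phrase in first_sentence for phrase in BACK_REFERENCES):
        issues.append("back_reference")
    return issues


BAD_CHUNK = (
    "As we discussed above, this matters because it helps search engines "
    "understand your content. The same logic applies here."
)
GOOD_CHUNK = (
    "Schema markup is structured data that tells search engines what each "
    "element on a page represents: a product, an article, a person, a question. "
    "AI Overviews use schema to verify that the visible content matches what "
    "the page claims to be about."
)

print(chunk_issues(BAD_CHUNK, "schema markup"))
print(chunk_issues(GOOD_CHUNK, "schema markup", min_words=30))
```

The length bounds are parameters because a short quoted example can be a sound opening yet still fall under the 50-word floor until the rest of the section is attached.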

Heading discipline

Every H2 should answer one specific question a reader would ask out loud. Every H3 splits that question into a sub-question. If you can’t state the question your heading answers, the heading is wrong.

Signal 3: Entity Clarity

AI Overviews don’t cite pages; they cite entities: your brand, your product, your method, your author. If the page is ambiguous about who or what it’s describing, the retrieval layer skips it in favor of a clearer source.

Entity clarity comes down to four things:

- Name the entity on first mention in every section. Not “the platform,” not “this approach,” but the actual name

- Define the entity in one sentence the first time it appears. Even if you defined it in section 1, redefine on first mention in section 5 if it’s the load-bearing concept of that section

- Link the entity to its canonical page when referencing your own products, methodologies, or named frameworks

- Use the same name consistently. Don’t call it “AI Overviews” in one paragraph and “Google’s generative search results” in the next

This is where most B2B content fails. Writers introduce a concept in section 2, then refer back to it as “this” or “the framework” for the rest of the article. A chunk pulled from section 5 has no idea what “the framework” means. The retrieval layer reads ambiguity as low quality and moves on.
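One way to spot this pattern is to count named mentions against vague stand-ins in each section. The sketch below is an illustration only: the `reference_counts` helper and the list of vague phrases are hypothetical, and you would extend the list with whatever stand-ins your own writers use.

```python
import re

# Hypothetical stand-ins that lose their meaning once a chunk is extracted.
VAGUE_REFERENCES = ("the platform", "this approach", "the framework", "the tool")


def reference_counts(section, entity, vague=VAGUE_REFERENCES):
    """Return (named_mentions, vague_mentions) for one section of text."""
    text = section.lower()
    named = len(re.findall(re.escape(entity.lower()), text))
    vague_hits = sum(
        len(re.findall(r"\b" + re.escape(phrase) + r"\b", text)) for phrase in vague
    )
    return named, vague_hits


section = (
    "The framework scores each page. The framework also ranks passages. "
    "AI Overviews then cite the strongest one."
)
named, vague_hits = reference_counts(section, "AI Overviews")
print(f"named={named}, vague={vague_hits}")
```

If the vague count exceeds the named count, the section probably needs the entity restated by name.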

Signal 4: Citation-Worthy Claims

AI Overviews favor pages that make specific, falsifiable claims backed by a source. Vague claims get summarized away. Specific claims get quoted.

What gets cited

| Vague Claim (Skipped) | Specific Claim (Cited) |
|---|---|
| “AI Overviews appear in many searches” | “AI Overviews appeared in roughly 25% of US searches by mid-2025, according to Semrush data” |
| “Top-ranking pages tend to be cited” | “Pages ranking position 1 had a 53% chance of appearing in the AI Overview, versus 36.9% at position 10, per Authoritas research” |
| “Structured data may help” | “FAQPage schema with answers under 60 words was cited 2.3x more often than equivalent unmarked content in our audits” |

The specificity rule applies to your own first-party data too. “Most B2B brands have visibility gaps” is filler. “When we audited 50 B2B SaaS sites, 41 of them had zero mentions on the publications cited most often by ChatGPT in their category” is a claim worth citing.
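A crude first pass at sorting vague claims from specific ones is to check each sentence for a number and an attribution phrase. This sketch is a heuristic under stated assumptions: the `claim_signals` function and the attribution pattern are hypothetical, and a sentence can pass both checks and still be a weak claim.

```python
import re

# Hypothetical attribution markers drawn from the example claims above.
ATTRIBUTION = re.compile(r"\b(according to|per|in our audits|when we audited)\b")


def claim_signals(claim):
    """Report whether a claim contains a number and an attribution phrase."""
    text = claim.lower()
    return {
        "has_number": bool(re.search(r"\d", text)),
        "has_attribution": bool(ATTRIBUTION.search(text)),
    }


vague = "AI Overviews appear in many searches"
specific = (
    "AI Overviews appeared in roughly 25% of US searches by mid-2025, "
    "according to Semrush data"
)
print(claim_signals(vague))
print(claim_signals(specific))
```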

The 3-citation cap

Don’t bury your page in citations to look authoritative. Three strong, specific citations beat ten generic ones. Each citation should change how the reader understands the claim. If removing it doesn’t weaken the argument, it doesn’t belong.

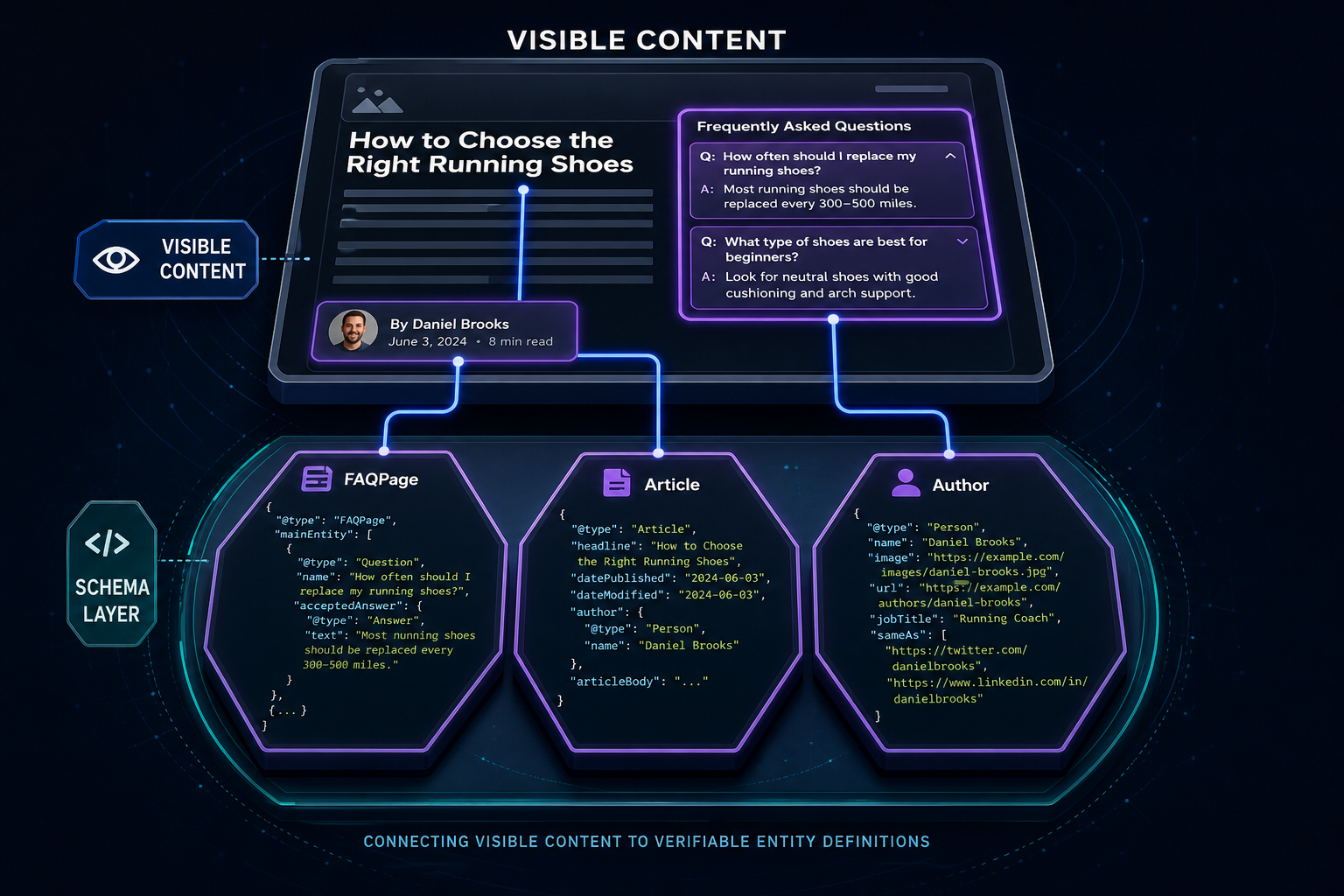

Signal 5: Schema That Actually Helps

Most schema implementations are noise. Generic Article schema with the bare minimum fields tells AI Overviews nothing they couldn’t infer from the page. The schema that moves the needle is the schema that makes ambiguous content unambiguous.

- FAQPage schema for any page with discrete question-answer pairs, and the visible answer must match the schema answer exactly

- HowTo schema for step-by-step processes, with each step as its own entity

- Article schema with author linked via `sameAs` to LinkedIn and authoritative profiles, so the author becomes a verifiable entity

- Organization schema with `sameAs` linking to your verified social and Wikidata profiles, if you have one

- Speakable schema on the 2-3 most directly answerable passages, signaling them as voice-extraction candidates

The schema parity rule is the one most teams break. If your visible FAQ answer says “Three signals matter most” and your JSON-LD answer says “Several factors play a role,” you’ve broken parity. Google flags the mismatch and downgrades trust on the page.
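The parity rule is easy to test mechanically. The sketch below compares the answers in a FAQPage JSON-LD block (using schema.org's `mainEntity`, `name`, and `acceptedAnswer.text` properties) with the answers shown on the page. The `parity_mismatches` helper and the sample data are hypothetical, and it assumes you have already extracted the visible answers into a dictionary keyed by question.

```python
import json


def normalize(text):
    """Collapse whitespace so line wrapping does not count as drift."""
    return " ".join(text.split())


def parity_mismatches(json_ld, visible_answers):
    """Return the questions whose JSON-LD answer differs from the visible answer."""
    data = json.loads(json_ld)
    mismatches = []
    for item in data.get("mainEntity", []):
        question = item["name"]
        schema_answer = normalize(item["acceptedAnswer"]["text"])
        if schema_answer != normalize(visible_answers.get(question, "")):
            mismatches.append(question)
    return mismatches


# Hypothetical FAQPage markup with one answer that has drifted.
FAQ_JSON_LD = json.dumps({
    "@context": "https://schema.org",
    "@type": "FAQPage",
    "mainEntity": [
        {
            "@type": "Question",
            "name": "Which signals matter?",
            "acceptedAnswer": {"@type": "Answer", "text": "Several factors play a role."},
        },
        {
            "@type": "Question",
            "name": "Does FAQ schema still work?",
            "acceptedAnswer": {"@type": "Answer", "text": "Yes, when implemented with parity."},
        },
    ],
})
VISIBLE = {
    "Which signals matter?": "Three signals matter most.",
    "Does FAQ schema still work?": "Yes, when implemented  with parity.",
}

print(parity_mismatches(FAQ_JSON_LD, VISIBLE))
```

Whitespace is normalized before comparing, so only real wording differences are reported.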

Signal 6: Freshness Without Cosmetic Updates

AI Overviews weight freshness, especially for queries with shifting answers: anything involving tools, platforms, prices, regulations, or year-bound data. But “freshness” doesn’t mean changing the date in the byline. The retrieval layer looks at content drift.

Real freshness signals:

- Updated statistics with a current source year

- New examples that reference 2026 platforms, products, or events

- Sections explicitly addressing what changed since the prior version

- Removed or updated claims that no longer hold

- A `dateModified` field in schema that matches a real edit, not a forced touch

Cosmetic updates, bumping the date without changing the substance, get caught. Google’s quality systems compare current content to prior crawls. If 95% of the text matches a 2024 version with the date pushed to 2026, the page gets flagged as stale-with-fake-freshness, which is worse than admitting it’s old.
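You can estimate your own content drift before publishing an update by comparing the old and new text. The sketch below uses the standard library's `difflib.SequenceMatcher` at the word level. The function names are hypothetical, the 0.95 threshold simply mirrors the 95% figure above, and this is not a claim about how Google measures similarity.

```python
from difflib import SequenceMatcher


def word_similarity(old_text, new_text):
    """Ratio in [0, 1] of how much of the word sequence is unchanged."""
    matcher = SequenceMatcher(None, old_text.split(), new_text.split(), autojunk=False)
    return matcher.ratio()


def looks_cosmetic(old_text, new_text, threshold=0.95):
    """True when the update leaves almost all of the text untouched."""
    return word_similarity(old_text, new_text) >= threshold


# Hypothetical versions: only the year changes in the first update.
body = " ".join(f"word{i}" for i in range(100))
old_version = "2024 " + body
date_bump = "2026 " + body
real_rewrite = "2026 " + " ".join(f"fresh{i}" for i in range(100))

print(looks_cosmetic(old_version, date_bump))
print(looks_cosmetic(old_version, real_rewrite))
```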

Signal 7: Query Fan-Out Coverage

This is the signal most checklists miss. When someone enters a query, Google’s AI layer fans it out into 5-15 related sub-queries it answers internally before composing the Overview. A page that only addresses the literal query loses to a page that addresses the fan-out.

How fan-out works in practice

Take the query “ai overview optimization checklist.” The fan-out behind it includes:

- What signals does Google use to pick AI Overview citations?

- How is content for AI Overviews structured differently from SEO content?

- Does schema markup help with AI Overviews?

- How often do AI Overviews appear, and for which queries?

- What kinds of pages get cited most?

- Can I influence AI Overview citations from off-site signals?

- How do I check if my page is eligible for an AI Overview?

A page that answers only the literal query gets summarized into one line. A page that addresses the fan-out gets cited as the primary source because it’s covering questions the AI is already trying to answer.

How to map the fan-out for your target query

- Search the query in Google and read the AI Overview if one appears; every sentence in it implies a sub-query

- Pull “People also ask” entries; those are explicit fan-out questions

- Run the query through ChatGPT and Perplexity and note which sub-questions they answer to compose their response

- Add a section to your page for each unique sub-question, structured for chunk-level retrieval (Signal 2)

This is what “comprehensive” means in 2026. Not 4,000 words on the literal query, but 1,500 words on the fan-out, with each section a clean answer to a real sub-question.
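Once the sub-questions are listed, a rough coverage check compares them with the page's headings. The sketch below is a keyword-overlap heuristic, not how Google generates or matches fan-out queries. The function names, stopword list, and sample headings are hypothetical, and the `ignore` parameter drops topic words that appear in every question and would otherwise cause false matches.

```python
import re

STOPWORDS = {
    "a", "an", "the", "do", "does", "how", "what", "which", "is", "are", "for",
    "to", "of", "and", "i", "my", "if", "with", "from", "can", "in", "it", "be",
}


def keywords(text, ignore=()):
    """Lowercased content words, minus stopwords and ignored topic terms."""
    skip = STOPWORDS | {word.lower() for word in ignore}
    return {word for word in re.findall(r"[a-z0-9]+", text.lower()) if word not in skip}


def fan_out_coverage(sub_questions, headings, ignore=(), min_shared=2):
    """Map each sub-question to True if some heading shares enough keywords."""
    heading_keys = [keywords(heading, ignore) for heading in headings]
    return {
        question: any(len(keywords(question, ignore) & keys) >= min_shared for keys in heading_keys)
        for question in sub_questions
    }


sub_questions = [
    "Does schema markup help with AI Overviews?",
    "How often do AI Overviews appear, and for which queries?",
]
headings = ["Schema markup for AI Overviews", "Chunk-level structure"]

coverage = fan_out_coverage(sub_questions, headings, ignore=("AI", "Overviews"))
print(coverage)
print(f"{sum(coverage.values())} of {len(coverage)} sub-questions covered")
```

Treat uncovered questions as candidates for new sections, then confirm by reading the page. Headings phrased differently from the question will be missed.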

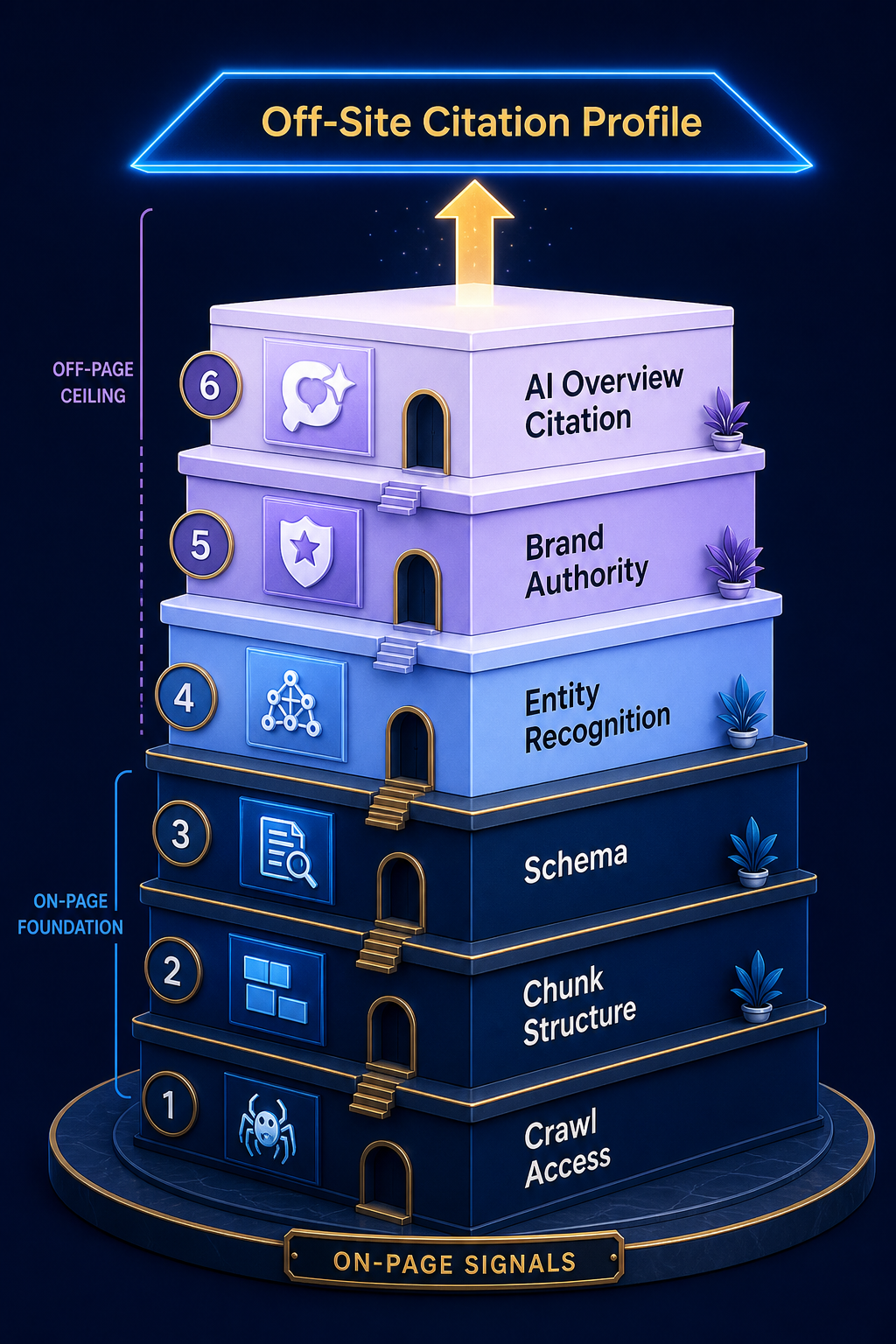

Signal 8: Off-Site Signals and the Citation Profile

This is the signal most on-page checklists ignore. AI Overview eligibility isn’t just about your page; it’s about how often your brand appears across the sources Google’s AI layer treats as authoritative. A perfectly optimized page from an unknown brand often loses to a worse-optimized page from a brand cited frequently in trade publications, research, and industry reporting.

The off-site signals that matter:

- Editorial mentions on publications Google’s quality systems trust in your category

- Citation density: how often your brand co-occurs with category terms across high-trust sources

- Wikidata or Wikipedia presence, even at the entity-stub level

- Author entity signals: bylines on third-party publications that cross-link to your site

- Branded search volume, which signals real audience demand and reinforces entity legitimacy

You can’t schema-markup your way to authority. If your brand has zero mentions on the publications Google trusts in your category, on-page optimization hits a hard ceiling. Building a citation profile across the right publications is what raises the ceiling, and it’s slow, deliberate work that compounds over months, not weeks.

This is also the signal where Google’s John Mueller has been direct in 2026: AI Overviews favor sources that have established themselves across the open web, not just within their own domain. The page you’re optimizing is one input. The brand context around it is the other.

The 24-Hour Audit

Pick one page that ranks well organically but never appears in AI Overviews. Run it through these checks in this order. The first failure you hit is almost always the reason.

- Robots.txt check. Search for “Google-Extended”: is it disallowed? If yes, fix and stop. That’s your problem.

- Server-side render check. View page source and search for one of your H2 headings. If it’s not in the raw HTML, your content is invisible to retrieval.

- Chunk test. Pick the section most relevant to the target query. Read it without the surrounding article. Does it answer the sub-question on its own? If no, restructure.

- Entity test. In the same section, count how many times the main entity appears by name versus as a pronoun. If pronouns dominate, rename consistently.

- Specific claim test. Find the most important claim in the section. Is there a number, source, or named example attached? If no, add one, or cut the claim.

- Schema parity test. If you have FAQ schema, copy each visible answer and compare to the JSON-LD. Any drift? Fix.

- Fan-out test. List the sub-questions implied by the target query. Does the page address at least 6 of them with their own section? If no, expand.

- Citation profile check. Search “[your brand] [category]” in Google. Are you cited on at least 5-10 trade publications, research reports, or news sources in your space? If no, this is the long-term work.

Run this audit on five pages and you’ll see the pattern. Most teams have the same one or two failures across their entire site, usually a robots.txt block, a chunking problem, or a thin citation profile. Fix the recurring issue and AI Overview presence improves across multiple pages at once.
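The "first failure wins" logic of the audit can be sketched as an ordered list of checks that stops at the first one that fails. The `first_failure` helper and the stubbed results below are hypothetical. In practice each lambda would call a real check for the page under audit.

```python
def first_failure(checks):
    """Run (name, check) pairs in order and return the first failing name."""
    for name, check in checks:
        if not check():
            return name
    return None  # every check passed


# Stubbed results for one hypothetical page; replace each lambda with a real check.
page_checks = [
    ("robots.txt", lambda: True),
    ("server-side render", lambda: True),
    ("chunk test", lambda: False),
    ("entity test", lambda: False),
    ("specific claim test", lambda: True),
    ("schema parity test", lambda: True),
    ("fan-out test", lambda: True),
    ("citation profile", lambda: False),
]

print(first_failure(page_checks))
```

Later checks are never evaluated once one fails, which matches the instruction to fix the first failure before looking further.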

Frequently Asked Questions

How long does it take to see AI Overview citations after optimizing a page?

Typically 4-8 weeks for on-page changes to register and influence citation eligibility. Google needs to recrawl, reprocess, and re-evaluate the page against query candidates. Off-site citation profile work compounds slower, usually 3-6 months before new editorial mentions meaningfully shift AI Overview eligibility.

Does ranking in position 1 guarantee an AI Overview citation?

No. Position 1 organic ranking correlates with citation likelihood (Authoritas data put it around 53%), but it’s not a guarantee. AI Overviews pull from multiple sources per query, and a position 5 result with a clearer chunk and stronger entity signals can be cited over a position 1 result that’s harder to extract.

Should I write specifically for AI Overviews or for human readers?

Write for human readers using structures that happen to be retrievable. Self-contained passages, direct answers under headings, named entities, and specific claims serve both audiences. Content written purely for AI extraction reads like a checklist and fails the helpfulness signals Google’s quality systems weigh heavily in 2026.

Does FAQ schema still work for AI Overviews?

Yes, when implemented with parity. The visible FAQ answer must match the JSON-LD answer exactly. Mismatched FAQ schema is worse than no FAQ schema: it signals sloppy implementation and downgrades trust on the page.

What’s the single biggest mistake teams make optimizing for AI Overviews?

Treating it as an on-page-only problem. Most teams spend months refining structure, schema, and chunking on a brand that has no off-site citation profile in the category. The on-page work matters, but it can’t replace the entity authority that comes from being cited across trade publications, research, and trusted industry sources.

How do I know if my page is even eligible for an AI Overview?

Run the 24-hour audit above. If you fail check 1 (Google-Extended disallowed), check 2 (no server-side rendering), or check 3 (no retrievable chunks), you’re not eligible regardless of how strong the rest of the page is. These three are gates, not factors.

Is there a way to track which AI Overviews cite my brand?

Yes. AI Overview mention tracking tools sample queries across your category and report which Overviews cite your domain or brand name. Google Search Console started exposing some AI Overview impression data in 2026, but it’s incomplete. Dedicated tracking gives you a fuller picture of where you appear and where competitors are taking the citation slot you should own.

Run the Eight Checks This Week

The AI overview optimization checklist isn’t a content template. It’s a diagnostic. Pick one page that should be cited but isn’t, and walk through the eight signals in order. The page either fails a gate (signals 1-2), has a structural problem (signals 3-5), is missing freshness or fan-out coverage (signals 6-7), or is hitting the off-site authority ceiling (signal 8). One of those is almost always the answer.

For deeper context on how citations actually compound across AI search surfaces, our guide on how AI Overviews evaluate sources walks through the citation logic in more detail. For now, run the audit on one page this week. The first failure you hit is your starting point.