AI detectors work by scoring how predictable your writing is. They run text through a language model, measure how closely each word matches what a machine would have picked, and flag passages that look too statistically clean to be human. That’s the whole trick. No hidden watermark, no secret signature, just probability math wearing a confidence score. Which is why they’re useful, fallible, and frequently wrong about the same paragraph twice.

If you’re a content lead deciding whether to trust a 87% AI score on a freelancer’s draft, you need to know what that number actually measures before you act on it.

The Short Version

- AI detectors score text on predictability (perplexity) and rhythm variation (burstiness), then run it through a classifier trained on human and machine samples.

- Their accuracy claims (often “99%”) come from controlled benchmarks. Real-world false positive rates run higher, especially on edited AI text and non-native English writing.

- Modern models like GPT-5 and Claude 4 produce text with more variation, which collapses the perplexity signal detectors built their reputation on.

- Detectors are a signal, not a verdict. Treat the score like a smoke alarm: useful, occasionally hysterical, never the only evidence you need.

[FEATURED_IMAGE: This image teaches that AI detectors score predictability rather than identify hidden markers.

CONCEPT: A reader sees a paragraph of text passing through a scoring meter that measures predictability and rhythm, with a probability percentage on the right.

PROMPT: Horizontal 16:9 editorial infographic on a soft light-gray background. On the left, a clean document card shows a paragraph of placeholder text with blue accent lines. In the center, a horizontal scoring meter labeled “Predictability Score” runs from low to high with a small needle pointing toward the middle. On the right, a deep dark navy result card shows a large percentage with the label “AI Probability” below it. A subtle blue-to-purple gradient appears only on the result card. Thin connector arrows between elements. Rounded cards, soft shadows, large mobile-readable type, strong visual hierarchy, minimal clutter. No people, no faces, no neon.

Alt text: “ai-detector-predictability-scoring-flow-diagram”

Placement: featured

Caption: “Detection is a probability score, not a verdict on authorship.”]

What an AI Detector Actually Measures

An AI detector is a classifier. You feed it text, it returns a probability that the text came from a language model. That probability is built on a small set of signals, and once you understand them, the whole category stops feeling magical.

The two signals doing most of the work are perplexity and burstiness. Everything else (embeddings, stylometry, ensemble scoring) is a refinement layered on top.

Perplexity: How Surprised the Model Is

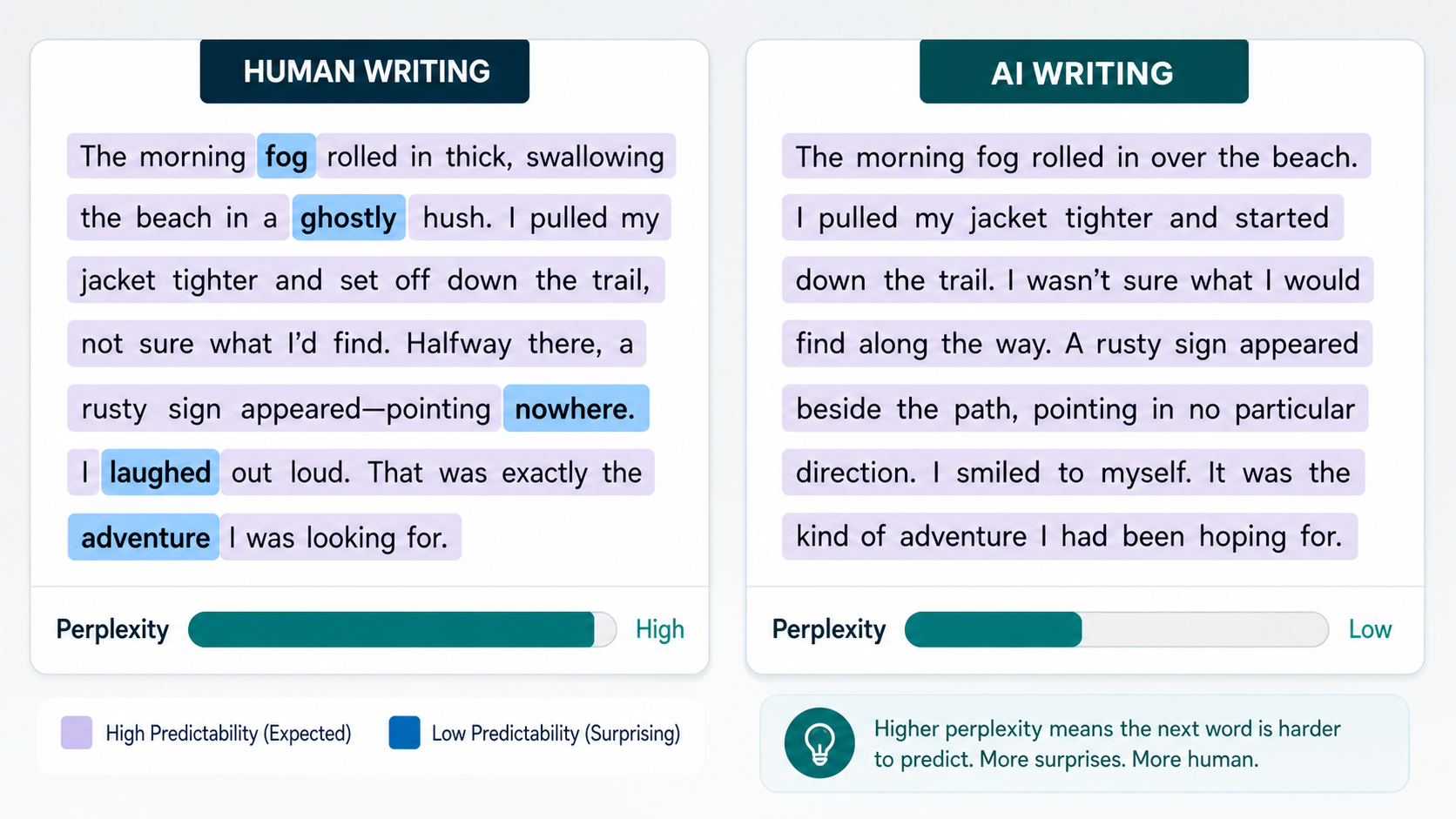

Perplexity measures how unexpected each word is, given the words before it. A reference language model reads your text and predicts the next word at every position. If the actual next word matches its top guesses, perplexity is low. If the word is one the model didn’t see coming, perplexity is high.

Human writing tends to be high-perplexity. We pick odd phrasings, double back, abandon a sentence halfway and start again. Machine-generated text tends to be low-perplexity, because the model writing it is the same kind of model doing the predicting. They agree on what should come next. That agreement is the fingerprint.

So when a detector says your text is “likely AI,” what it often means is: a reference model wasn’t surprised by any of your word choices.

Burstiness: The Rhythm of How You Write

Burstiness measures variation in sentence length and complexity. Humans write in bursts. A long sentence packed with clauses, then a short one. Then a fragment. Then back to a 25-word build. Machines, especially older ones, smooth that out. Their sentences cluster around a similar length and a similar grammatical shape.

A detector that sees twelve consecutive sentences all between 18 and 22 words, all subject-verb-object, all hedged in the same way, raises a flag. Not because that’s proof, but because it’s a pattern humans rarely produce when writing naturally.

The Machine Learning Underneath

Perplexity and burstiness give a detector raw signal. The classifier turns that signal into a verdict.

Most production detectors are supervised classifiers trained on labeled corpora: human-written samples on one side, machine-generated samples on the other. The model learns the features that separate the two and outputs a probability score for new text. That’s the architecture behind GPTZero, Originality.ai, Copyleaks, Turnitin’s AI indicator, and most of the field.

The training data is where the real differences live. A detector trained mostly on GPT-3.5 output from 2023 will struggle on Claude 4 or GPT-5 output from 2026, because the newer models write differently. A detector trained on academic essays will misfire on marketing copy. The classifier is only as current as the samples it learned from.

This is why the same paragraph can score 12% AI on one tool and 91% on another. They’re not measuring the same thing against the same baseline. They’re each running their own classifier against their own training distribution.

Embeddings and Stylometric Layers

The better detectors add embedding analysis on top of perplexity. Embeddings turn text into a vector (a long list of numbers) that captures meaning, structure, and style. The detector compares your text’s vector to clusters of known human and AI vectors. If your text sits inside the AI cluster, that adds to the score.

Stylometric analysis goes further. It looks at function-word frequency, punctuation patterns, sentence-opener variety, and clause structure. Forensic linguists used these techniques on disputed authorship cases long before AI detection existed. They’ve been quietly absorbed into the modern detector stack.

Why Detectors Get It Wrong So Often

Every AI visibility client I work with has been burned by a false positive at least once. Usually it’s a senior writer’s draft flagged at 78% AI when the writer can’t even spell ChatGPT. The reason is structural, not a bug.

Polished Writing Looks Like AI

If you write tight, edited prose with consistent sentence rhythm and clean grammar, you produce low-perplexity text. The detector can’t tell whether you’re a careful writer or a careful machine. Both look the same on the meter.

This is why journalism graduates, technical writers, and people who edit their own work obsessively get flagged more than chaotic first-draft writers. Polish is a fingerprint detectors mistake for synthesis.

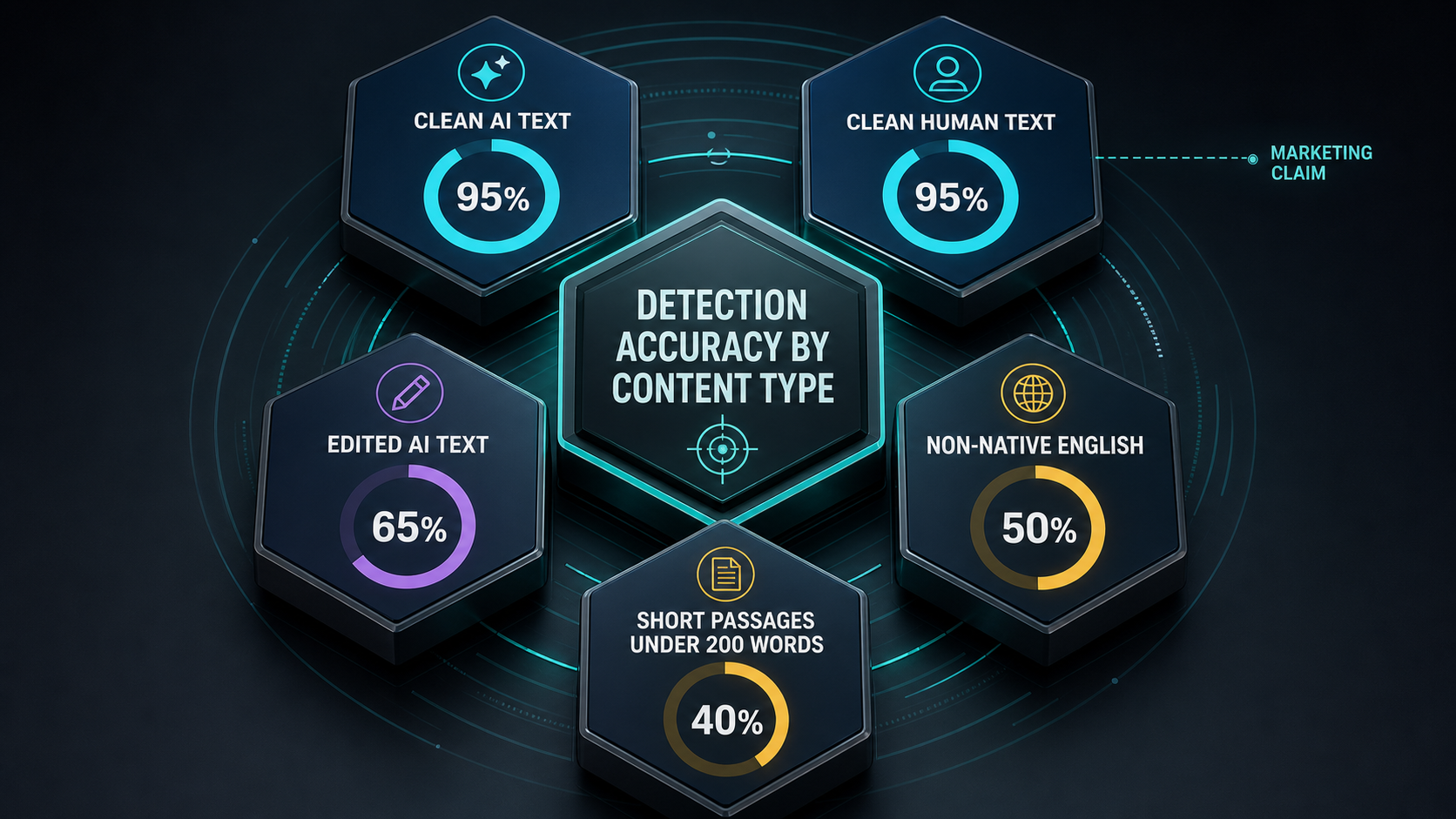

Non-Native English Triggers False Positives

A 2023 Stanford study found that detectors flagged non-native English essays as AI-generated at rates above 60%, while flagging native essays at single-digit rates. The mechanism is the same: non-native writers tend to use a smaller vocabulary and more predictable sentence structures, which the detector reads as machine-like.

Three years later, the bias has been documented but not fixed. If your editorial team includes ESL writers, a raw detector score is not just unreliable, it’s actively unfair.

Edited AI Text Slips Through

Take a ChatGPT draft, rewrite 30% of the sentences, swap in some idioms, vary the lengths, and most detectors drop their score below 20%. The signal they rely on (uniform predictability) gets disrupted by light human editing.

This is the open secret of the AI content economy in 2026. The companies producing AI content at scale aren’t trying to fool the detectors. They’re hiring editors to do a pass. The pass breaks the signal.

Short Text Has Nothing to Measure

Perplexity and burstiness need volume. Under 200 words, the statistical signal is too thin to be reliable. Most detectors will still produce a score, but it’s closer to a coin flip than a measurement. Treat any score on a short passage as advisory at best.

What “99% Accuracy” Really Means

Every major detector claims 98 to 99% accuracy. The numbers are real, and they’re also misleading.

Those accuracy claims come from controlled benchmarks: a set of clearly labeled human texts and clearly labeled AI texts, run through the detector, scored on whether each verdict was right. Under those conditions, the top tools do hit 95%+ accuracy.

The benchmark RAID (Robust AI Detection), maintained by researchers at the University of Pennsylvania, evaluates detectors against 11 domains, 12 language models, and 12 adversarial attack types. Top performers cluster around 95% on clean text and drop to 60 to 80% under adversarial conditions like paraphrasing, character substitution, or light editing.

In production, “accuracy” splits into two numbers that matter more than the headline:

- False positive rate: how often human writing gets flagged as AI. A 1% false positive rate sounds small until you realize you’d flag 50 innocent drafts out of 5,000.

- False negative rate: how often AI writing gets through. Higher on recent models, higher on edited text.

A detector that’s 99% accurate overall can still be 30% accurate on the specific kind of text you’re checking. Ask vendors for the breakdown by content type, not the marquee number.

Watermarking: The Approach That Might Actually Work

The most promising long-term answer isn’t smarter detection. It’s text that comes labeled.

Watermarking embeds a statistical pattern into AI-generated text at the moment of generation. The model is steered toward a specific subset of words at certain positions, in a way humans can’t see but a detector with the watermark key can verify with high confidence.

Google’s DeepMind released SynthID for text in 2024, and OpenAI has been sitting on a watermarking system for ChatGPT output for over two years. The reason watermarks haven’t taken over: they’re trivially defeated by paraphrasing tools, and the labs are cautious about deploying systems that can be reverse-engineered to evade.

If watermarking becomes standard across major model providers, detection becomes a key-verification problem instead of a probability-guessing problem. We’re not there yet.

How to Actually Use a Detector Without Embarrassing Yourself

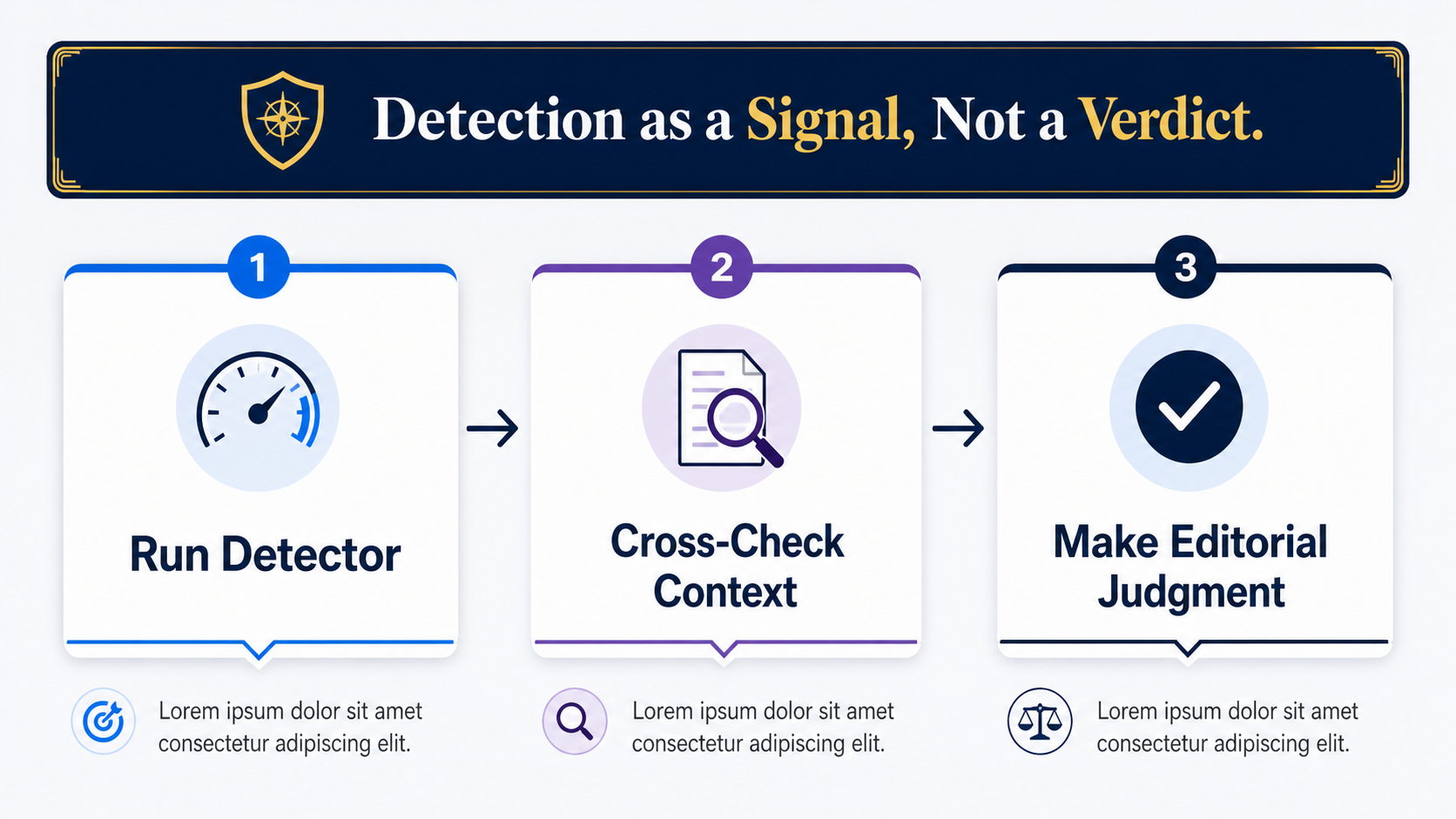

Detectors are tools, not judges. Use them inside a process that accounts for their failure modes.

For Editorial Teams

Run the detector as one signal in a review, not a gate. If a draft scores high, that’s a prompt to look closer, not a verdict. Check for the things detectors can’t see: does the writer have version history? Can they explain their sources? Does the voice match their previous work?

I’ve worked with content programs where a single 80%+ score automatically rejected a draft. Every one of those programs eventually lost a good writer to a false positive and had to walk back the policy. Make the score a flag, not a kill switch.

For Academic and Compliance Use

Don’t act on a detector score alone. Pair it with process artifacts: drafts, revision history, source notes, an oral conversation about the work. Detectors should support a judgment, not make it.

OpenAI shut down its own AI text classifier in 2023, citing low accuracy. That was an unusually honest move from a company that had every commercial incentive to keep the tool alive. The signal was loud: even the people building these models don’t trust detection as a standalone verdict.

For Your Own Content

If you’re publishing under your name and want to check whether your work would trip a detector, run it through two or three different tools. If they disagree wildly, the result is noise. If they agree, the issue is usually rhythm: your sentences are too uniform. Vary the lengths, break a pattern, add a fragment. The score drops.

That’s also a sign your writing might benefit from the variation regardless of what any detector says.

Where This Connects to AI Visibility

The detection conversation matters for one more reason most marketing teams miss: the same signals detectors look for (predictability, low burstiness, generic phrasing) are the signals AI search models use when deciding which content to ignore.

Content that reads as machine-generated doesn’t get cited by ChatGPT, Perplexity, or Google’s AI Mode. The models can recognize their own house style and they actively avoid grounding their answers in it. If you want your brand to surface in AI search results, the same writing discipline that beats detectors also earns citations: real opinions, specific numbers, varied rhythm, and points of view a generic model wouldn’t produce.

That’s the deeper bet behind every AI search optimization strategy worth running. Detection isn’t just about catching AI content. It’s about understanding what authentic writing actually looks like to a machine, and producing more of it.

Frequently Asked Questions

Can AI detectors tell which model generated the text?

Some claim to, but the accuracy drops sharply compared to the basic human-or-AI verdict. Detectors trained to identify GPT-4 specifically might confuse it with Claude or Gemini, especially as the models converge stylistically. Treat model-attribution claims with more skepticism than the base score.

Why does the same text score differently on different detectors?

Each detector uses its own training data, its own reference model for perplexity, and its own classifier weights. They’re measuring related but distinct things. A 20-point gap between two tools on the same passage is normal, not a sign that one is broken.

Do AI detectors work on languages other than English?

Most are English-trained and degrade significantly on other languages. Some support a handful of major languages, but performance drops 10 to 30 points compared to English. For Spanish, French, German, and Mandarin, results are usable but noisy. For lower-resource languages, the score is closer to a guess.

Can I make AI writing undetectable?

Yes, with enough editing. Vary sentence length, swap predictable word choices for unexpected ones, add a personal anecdote, break a grammatical convention occasionally. The signal collapses. Whether you should is a different question, especially in academic or compliance contexts where the rule is about disclosure, not detection.

How accurate is Turnitin’s AI detector?

Turnitin reports around 98% accuracy on clean GPT-generated text with a 1% false positive rate, based on its internal benchmarks. Independent testing has found higher false positive rates on student writing, especially edited drafts and non-native English. Use it as a signal, not a finding.

Will AI detection get better or worse over time?

Both. Detectors will improve their classifiers and add new signals. The underlying language models will also keep getting better at producing varied, human-like text. The gap stays open. Watermarking might close it, but only if major model providers all agree to deploy it, which they currently haven’t.

The Honest Take

AI detectors are useful when you treat them as probability scores from an imperfect classifier. They’re dangerous when you treat them as verdicts. The teams getting value from them have built workflows that use the score as one input among several. The teams getting burned by them gave the score the final word.

If you’re trying to figure out whether AI content is hurting your brand’s visibility in ChatGPT, Perplexity, or Google AI Mode, that’s a different question with a different answer. Get your free AI visibility audit and we’ll show you what AI search actually says about your brand.

Here is the publication-ready HTML for “How Do AI Detectors Work? The Mechanics, Honestly.”