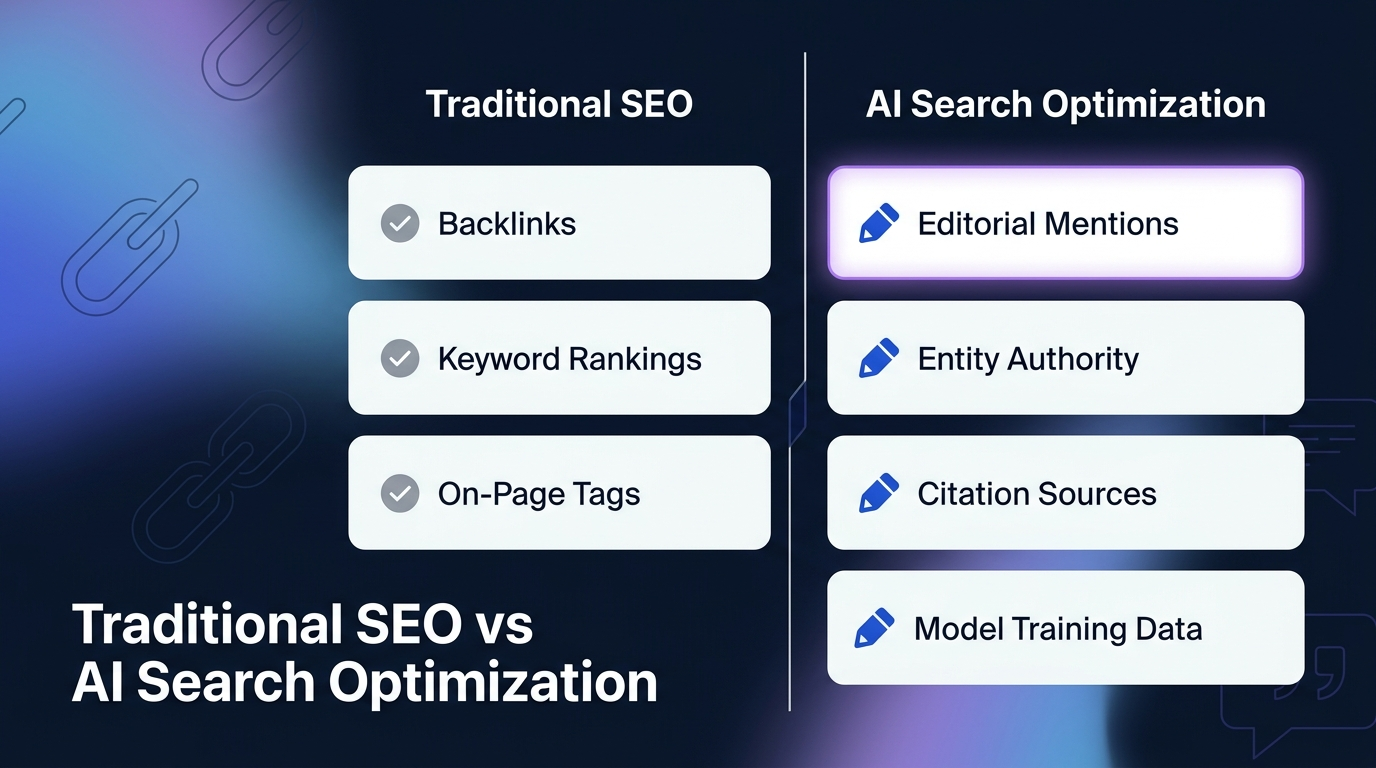

Most teams treat AI search optimization like SEO with a new label. It isn’t. The brands that show up when someone asks ChatGPT, Perplexity, or Gemini for a recommendation built that presence through a different set of signals, editorial mentions, entity authority, and citation patterns that traditional SEO tooling doesn’t track. AI search optimization is the practice of making your brand discoverable, citable, and recommended inside AI-generated answers across LLMs and AI-powered search surfaces, and it depends less on ranking pages and more on being the entity models associate with your category. This guide walks through what actually moves the needle in 2026, where teams waste time, and how to build a system that compounds.

What You’ll Learn

- Why AI search optimization is a distinct discipline, not rebranded SEO

- The five signals AI answer engines actually weight when selecting sources

- How ChatGPT, Perplexity, Gemini, and Google AI Overviews differ in how they pick citations

- A practical prioritization framework, what to do in month 1 vs month 6

- How to measure AI visibility when traditional rankings stop mattering

- The three mistakes that quietly destroy AI visibility campaigns

AI Search Optimization, Defined Without the Buzzwords

AI search optimization (sometimes called AEO or GEO, depending on who’s selling it) is the work of getting your brand selected, cited, and recommended inside AI-generated answers. That includes ChatGPT, Perplexity, Claude, Gemini, Microsoft Copilot, Grok, and Google’s AI Overviews, surfaces where the user gets an answer instead of ten blue links.

Here’s the shift that matters: in traditional search, you compete for a ranking slot. In AI search, you compete to be part of the model’s answer, either pulled live from a citation source or already embedded in the model’s understanding of your category. Those are two different games. Ranking #3 for “best CRM for startups” means something. Being the brand Perplexity names when asked the same question means something different, and the signals that produce each outcome only partially overlap.

What It’s Not

AI search optimization isn’t keyword stuffing prompts. It isn’t schema markup by itself. It isn’t publishing one more blog post per week. And it definitely isn’t “gaming” ChatGPT, the models retrain, the retrieval layer changes, and anything hacky evaporates within a few update cycles.

The Five Signals AI Answer Engines Actually Weight

After watching hundreds of brand citation profiles develop across B2B categories, the pattern is consistent. AI answer engines, whether they pull live from a retrieval layer or lean on pretrained knowledge, weight roughly the same five signals when deciding which brands to mention and which sources to cite.

1. Entity Authority, Does the Model Know You Exist?

Before a model can recommend you, it has to recognize you as an entity in your category. That recognition comes from consistent, editorial mentions of your brand across the sources the model learned from, Wikipedia, high-authority publications, industry databases, structured knowledge sources, and Reddit. A brand with zero presence in those sources is functionally invisible, no matter how much content it publishes on its own site.

2. Category Association, What Problem Do You Solve?

The model needs to associate your brand with a specific category or problem. If your name appears in editorial contexts alongside “revenue operations platforms” or “AI transcription tools,” the model builds that association. If your name only appears on your own website, the association is thin. Category association is why “Notion” gets recommended for collaborative docs and why a functionally identical unknown tool doesn’t, even if the unknown tool has better SEO.

3. Citation Sources, Are You On Pages That AI Systems Retrieve?

For surfaces with live retrieval (Perplexity, ChatGPT with browsing, AI Overviews, Copilot), the question becomes: when the model pulls sources to answer a query in your category, do any of them mention you? This is where editorial placements on trusted industry publications compound. Being quoted in a TechCrunch piece on AI workflow tools matters differently than being ranked #4 on Google for a long-tail keyword.

4. Structural Readability, Can the Model Extract Your Content Cleanly?

When your own pages are part of the retrieval set, structure decides whether the model can lift the answer out. Clear H2/H3 hierarchy, direct-answer paragraphs beneath headings, tables for comparisons, lists for processes, and definitions on first mention, these aren’t aesthetic choices. They’re the difference between a page that gets summarized into an answer and a page that gets skipped for one that’s easier to parse.

5. Trust Signals, E-E-A-T, But For Machines

Named authors with verifiable expertise, cited data with real sources, updated publication dates, and brand mentions on trusted third-party sites all reduce the model’s uncertainty about your content. Lower uncertainty = higher chance of inclusion. Perplexity in particular leans heavily on source quality; anecdotally, pages with named expert authors and real citations get pulled at dramatically higher rates than pages with generic bylines and stock claims.

How Each AI Surface Actually Picks Sources

Treating “AI search” as one monolithic thing is where most strategies go wrong. The surfaces behave differently. Here’s how the major ones actually decide what to surface, based on what we’ve observed tracking brand citations across them.

| Surface | Primary Mechanism | What Gets Cited | Where to Focus |

|---|---|---|---|

| ChatGPT (default) | Pretrained knowledge + optional live browsing | Brands with strong training-data presence | Wikipedia, major publications, Reddit, industry databases |

| ChatGPT (with browsing / SearchGPT) | Live retrieval weighted toward Bing index | Recent, well-structured pages with clear answers | Bing indexation + answer-first content structure |

| Perplexity | Live retrieval with multi-source synthesis | Expert-authored pages, named sources, structured data | Editorial mentions + on-page E-E-A-T signals |

| Gemini / Google AI Overviews | Google index + knowledge graph + retrieval | Pages ranking well + entity-graph brands | Traditional SEO + entity authority |

| Claude | Pretrained knowledge (limited live retrieval) | Brands deeply embedded in training corpus | Long-tail editorial presence on trusted sites |

| Microsoft Copilot | Bing retrieval + GPT reasoning | Bing-indexed pages with clear answer structure | Bing Webmaster Tools + structured answers |

Two practical implications. First, a campaign targeting only one surface misses most of the audience, buyers toggle between tools freely. Second, the signals for retrieval-based surfaces (Perplexity, AI Overviews, Copilot) and pretrained-weight surfaces (default ChatGPT, Claude) require different investments. Retrieval-based surfaces respond to content work within weeks. Pretrained surfaces only shift when the model retrains, and that shift comes from editorial presence built over months, not from a new blog post.

A Prioritization Framework: What to Do First

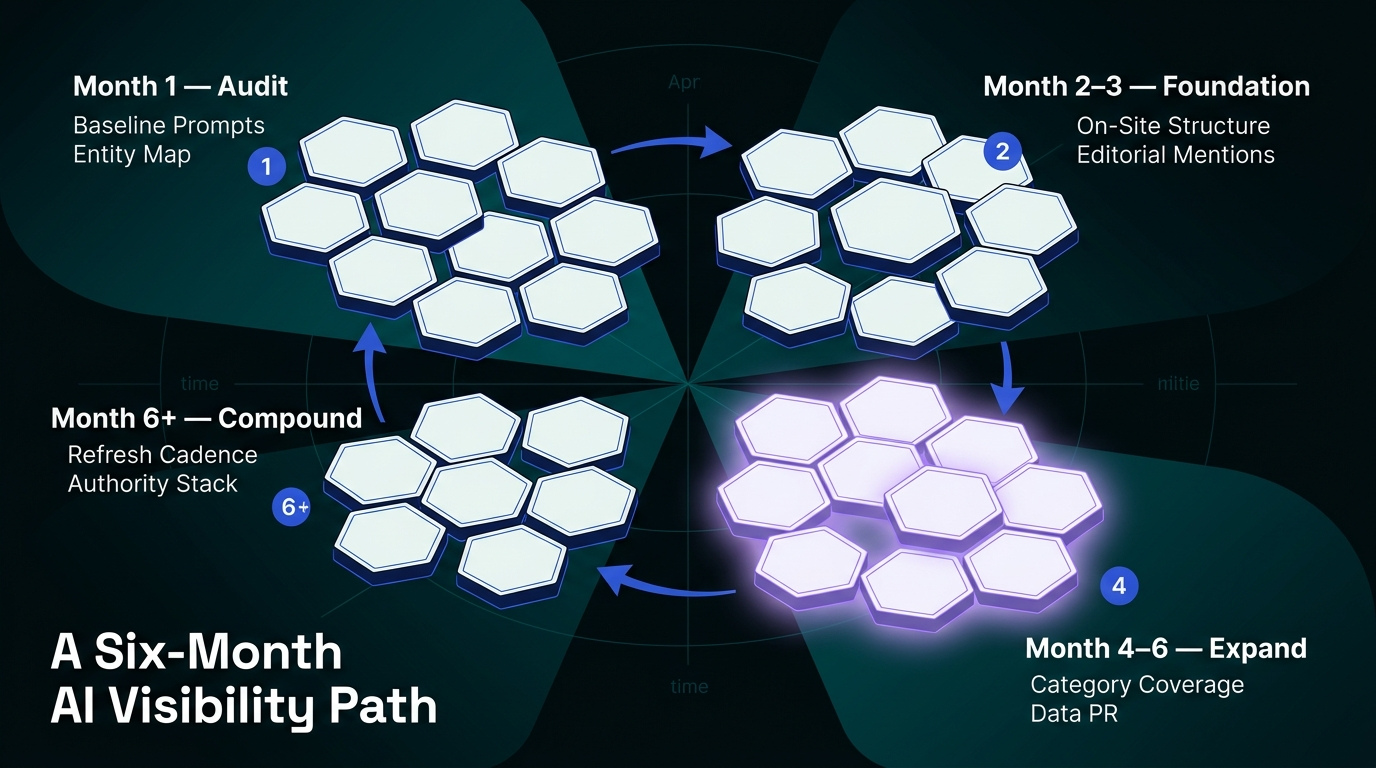

Most AI search optimization advice reads like a 30-item checklist with no guidance on sequence. In practice, the work stacks. Here’s the order that consistently produces results.

Month 1: Audit and Baseline

Before changing anything, measure where you actually stand. Run the same 20–30 category prompts across ChatGPT, Perplexity, Gemini, and Claude. Record which brands get named, which sources get cited, and where you appear (or don’t). This baseline is the single most important artifact of the entire program, without it, you’ll spend six months guessing whether your work moved anything.

At the same time, audit your existing editorial footprint. Search your brand name on major industry publications. Pull your Wikipedia presence (or lack of it). Check whether your brand appears in category Reddit threads, G2 / Capterra, industry wikis, and any structured data sources. This gives you the entity map the model is working with.

Month 2–3: Fix the Foundation

Two workstreams run in parallel.

On-site: Restructure the pages you want cited. Answer-first paragraphs under H2 headings. Direct definitions on first mention of any entity. Comparison tables where comparisons exist. Schema markup where it genuinely applies (Article, FAQ, HowTo, Organization). Don’t block GPTBot, ClaudeBot, PerplexityBot, or Google-Extended in robots.txt, that’s an unforced error that removes you from consideration entirely.

Off-site: Start building editorial presence on the publications AI models actually learn from. This isn’t link building. It’s mention building, commentary in industry articles, expert quotes, inclusion in category round-ups, presence on the sites that feed training data. One editorial mention on the right publication outperforms fifty unlinked mentions on low-authority blogs.

Month 4–6: Expand Category Coverage

Once the foundation is in place, broaden the editorial footprint. Get your brand into category comparisons, best-of lists, how-to pieces that mention your category, and expert round-ups. Publish original data, proprietary research gets cited far more than opinion content, because models favor claims with evidence. If you’ve internal usage data, benchmark data, or survey data from your customer base, turn it into a report and pitch it to industry publications.

Month 6+: Compound and Maintain

Refresh high-performing content on a 90-day cadence. Add new editorial placements every month. Rerun your baseline prompt set quarterly and track citation share over time. This is where the real leverage is, the brands that keep publishing and earning mentions in month 9 and month 12 pull ahead of competitors who ran a campaign for a quarter and stopped.

How to Actually Measure AI Search Optimization

For the per-platform walkthroughs behind the measurement surface, see how to check brand mentions in ChatGPT and how to track brand mentions in Perplexity, and monitoring brand mentions in LLMs covers the cross-platform cadence that pairs with the prioritization framework described above.

Traditional rank tracking tells you nothing here. The measurement stack for AI visibility is different.

Citation share: Across a defined set of category prompts, what percentage of responses mention your brand? Track this by surface (ChatGPT, Perplexity, Gemini, Claude) and over time. This is the closest equivalent to “ranking” in AI search.

Source inclusion rate: When AI surfaces with live retrieval answer category queries, what percentage of the citations are from your owned pages? This tells you whether your on-page work is converting into actual inclusion.

Sentiment in AI answers: When AI surfaces mention your brand, how do they describe it? Positive category framing, neutral listing, or critical comparison? This is the AI-era version of brand sentiment analysis, and it shifts as your editorial footprint changes.

Referral traffic from AI: Check analytics for traffic sourced from chat.openai.com, perplexity.ai, gemini.google.com, and similar domains. It’s small but growing, and it’s the cleanest signal that AI citations are converting to actual visits.

Prompt-level visibility movement: For any specific high-value prompt (“best [category] for [segment]”), track whether you move from invisible → mentioned → primary recommendation over time. This is the metric that actually correlates with pipeline.

For teams tracking this systematically, a dedicated LLM brand monitoring workflow matters more than a traditional rank tracker at this point. The two aren’t substitutes, one is history, one is the present.

Three Mistakes That Quietly Destroy AI Visibility Campaigns

The AI-search-optimization mistake we see most often in visibility audits is a team running the SEO keyword-volume playbook on AI surfaces and wondering why citation rates stay flat. AI retrievers weight entity clarity and third-party corroboration, not the long-tail keyword coverage that moved the old rank reports. Reframing the target from “ranking for queries” to “becoming the clearest entity in the category’s top retrieval surfaces” is the change that actually moves ChatGPT and Perplexity output.

These are the failure patterns we see most often. Each one feels reasonable in the moment and costs months of compounding progress.

Mistake 1: Treating AI Search Like SEO With New Headings

Teams inherit an SEO playbook, add “FAQ schema” and “conversational keywords” to it, and call it AI search optimization. The problem: this approach only touches the on-site layer. It ignores entity authority, category association, and editorial presence, which together drive more variance in AI citations than any on-site change. The fix is recognizing that off-site work (editorial mentions, expert commentary, industry presence) isn’t optional. It’s the primary lever.

Mistake 2: Measuring Too Early and Quitting

Most AI visibility work has a lag. Retrieval-based surfaces shift within weeks, but pretrained-weight surfaces (default ChatGPT, Claude) only reflect your editorial work after the next model update. Teams that run a campaign for 8 weeks, see modest movement, and pivot back to SEO never capture the real payoff. The honest version: expect meaningful citation-share movement at month 4. Expect category-level dominance at month 9+. Anything faster is noise.

Mistake 3: Publishing More Instead of Publishing Better

Volume doesn’t compound here the way it did in 2015 SEO. What compounds is credibility per page. One deeply researched piece with original data, a named expert author, clear structure, and placements in third-party publications outperforms ten thin pages. If your content team is measured on output count, the program will underperform. Measure them on citations earned, editorial placements secured, and prompt-level visibility gained.

Where AI Search Optimization Fits Alongside SEO

SEO isn’t dead. It feeds AI visibility, Gemini and AI Overviews lean on Google’s index, and ChatGPT with browsing weights Bing heavily. A page that ranks well is a page more likely to be retrieved. The relationship is additive, not replacement.

But the inverse isn’t true. A brand that dominates SEO and has no editorial footprint still loses in AI citations, we’ve seen category leaders with strong rankings get passed over in AI answers for smaller competitors with better editorial presence. The lesson: keep investing in SEO, and layer AI search optimization on top. The two programs share technical foundations and diverge at the top of the stack.

Frequently Asked Questions

Is AI search optimization the same as AEO or GEO?

Mostly yes, the labels overlap. AEO (answer engine optimization) usually emphasizes being the direct answer pulled by a retrieval layer. GEO (generative engine optimization) usually emphasizes being cited by generative systems. In practice, the work is the same: earn entity authority, build editorial presence, structure content for extraction, and measure citation share. The terminology is less settled than the discipline.

How long does AI search optimization take to show results?

Retrieval-based surfaces (Perplexity, AI Overviews, Copilot) can show movement within 4–8 weeks of coordinated on-site and editorial work. Pretrained-weight surfaces (default ChatGPT, Claude) typically show meaningful shifts at month 4+ as your editorial footprint reaches the sources models learn from during retraining cycles. Plan for a 6-month minimum to judge the program.

Do I need to block or allow AI crawlers?

Allow them. Blocking GPTBot, ClaudeBot, PerplexityBot, and Google-Extended in robots.txt removes you from consideration for retrieval and future training. The risk of being “scraped” is minor compared to the cost of invisibility. Exceptions exist for content you genuinely need to keep proprietary, but those should be targeted, not site-wide.

Does schema markup actually matter for AI search?

Yes, but less than most SEO guides claim. Schema helps AI systems disambiguate entities and parse structured content like FAQs and products. It’s table stakes, not a differentiator. A page with perfect schema and no editorial authority won’t outperform a page with modest schema and strong third-party citations.

How is AI search optimization different for B2B vs B2C?

B2B categories have smaller prompt volumes but higher decision value per citation, being recommended for “best revenue operations platform” may be worth more than ranking #1 on Google for the same query. B2C categories have higher prompt volume and more competition for retrieval slots, so structural work and freshness matter more. The underlying signals are the same; the emphasis shifts.

What’s the single highest-leverage action for most brands?

Audit your editorial footprint across the publications AI models learn from, find the category-defining publications where you’re absent, and start earning mentions there. This single workstream moves more AI citation share than any on-site change we’ve seen, because it addresses the root cause: models don’t recommend brands they don’t recognize.

What to Do This Week

Open ChatGPT, Perplexity, and Gemini. Ask each one the same three questions a buyer in your category would ask. Write down which brands they mention and which sources they cite. If your brand doesn’t appear, that’s your starting position, and it’s more useful than any report you could buy. The path from invisible to recommended is long, but it’s knowable. The brands that start now will own the citation slots in 2027. The ones waiting for the category to settle will be playing catch-up to the teams that didn’t.

For a deeper look at how citations actually get built in specific AI surfaces, our guide on increasing brand mentions in AI search walks through the editorial and on-site mechanics in detail.