Quick answer: Most brands trying to fix their AI visibility are guessing. They publish more content, rewrite a few pages, add schema, and wait. Three months later, ChatGPT still recommends their competitors. The problem isn’t effort, it’s diagnosis. An AI visibility diagnostic framework tells you exactly which failure mode is keeping you out of AI answers: entity conflicts, structural gaps, weak citation surface, or contradictory signals across the web. Once you know the cause, the fix is straightforward. Without a diagnosis, you’re guessing in a system that doesn’t reward guessing.

This guide gives you a working framework you can apply this week. Six diagnostic layers. A scoring rubric. Specific fixes per failure mode. And the honest order of operations, because most teams fix the wrong layer first and waste a quarter.

What You’ll Learn

- The six diagnostic layers that decide whether AI cites you, and which one to test first

- How to score each layer in under an hour using prompts, source checks, and entity audits

- The five failure modes behind almost every “we’re invisible in ChatGPT” problem

- What to fix in week 1, month 1, and quarter 1, in the right order

- Why structural fixes compound and content-only fixes plateau

Why a Diagnostic Framework Beats a Checklist

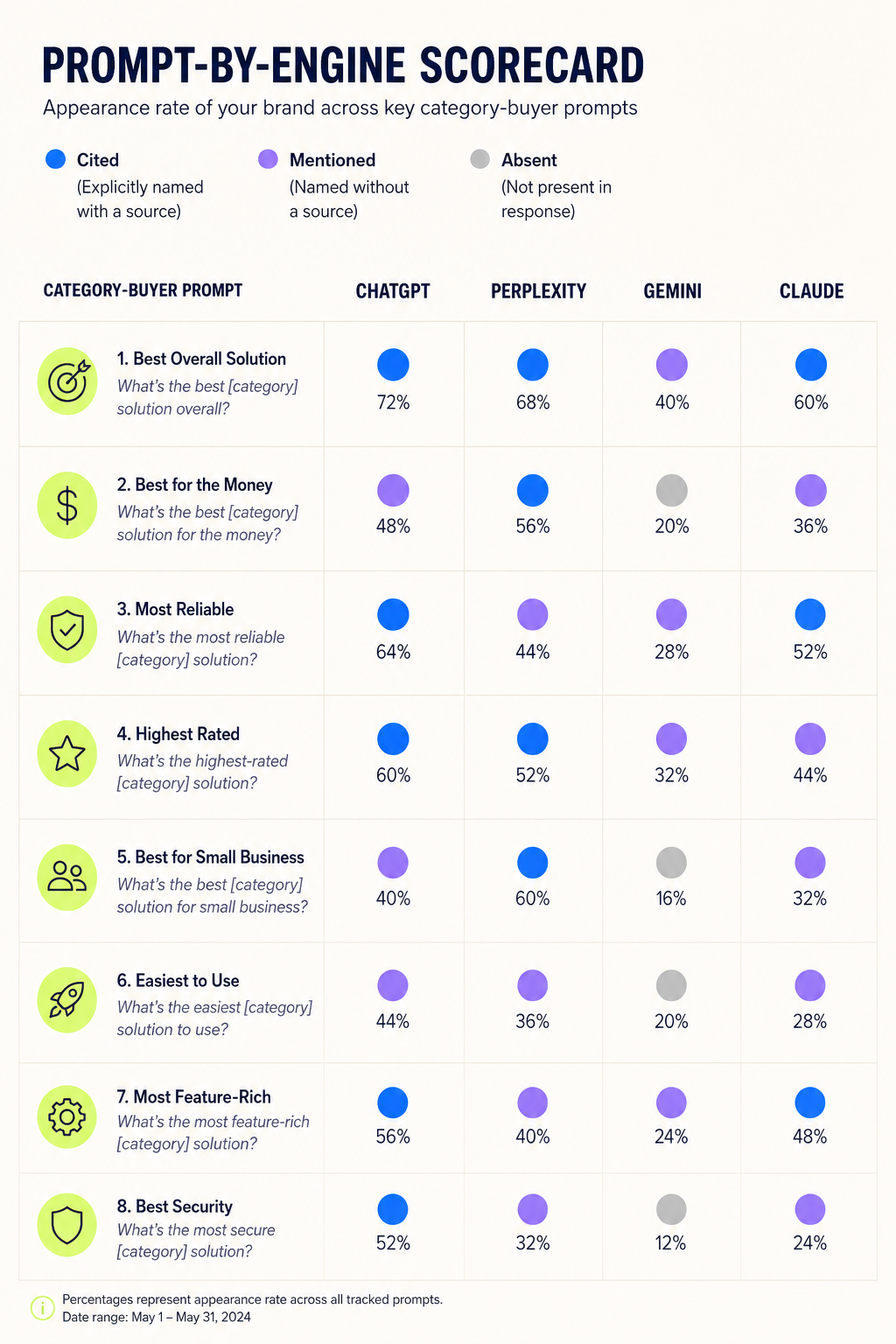

AI visibility is not a single metric. It’s the output of six independent systems behaving differently across engines. ChatGPT might cite you for one query and ignore you for the next. Perplexity might pull you from a Reddit thread. Gemini might surface a competitor because Google’s Knowledge Graph treats them as the canonical entity in your category.

A checklist treats this like a content problem. It isn’t. It’s a diagnosis problem. The same symptom, “AI doesn’t recommend us”, has at least five distinct causes, and the fix for each is different. Publishing more content fixes one of them. The other four get worse if you publish into a broken foundation.

The framework below isolates each cause. Run it once and you’ll know whether you have an entity problem, a structure problem, a content problem, a citation problem, or an engine-disagreement problem. Then you can fix the right thing.

The Symptom-to-Cause Gap

Here’s what makes this work different from SEO auditing. In SEO, the symptom (low ranking) usually points to a small set of well-understood causes: weak backlinks, thin content, technical issues, intent mismatch. In AI visibility, the symptom is identical across causes. “We don’t show up in ChatGPT” can mean any of these:

- Your brand entity is ambiguous. AI doesn’t know which company you are

- Your content exists but isn’t structured in a way AI can extract

- Your content is structured but lives on surfaces AI doesn’t index well

- You’re cited by AI, but the engines disagree about what you do

- You have all of the above working, but a competitor has stronger third-party validation

Each of these requires a different fix. The framework tells you which one you have.

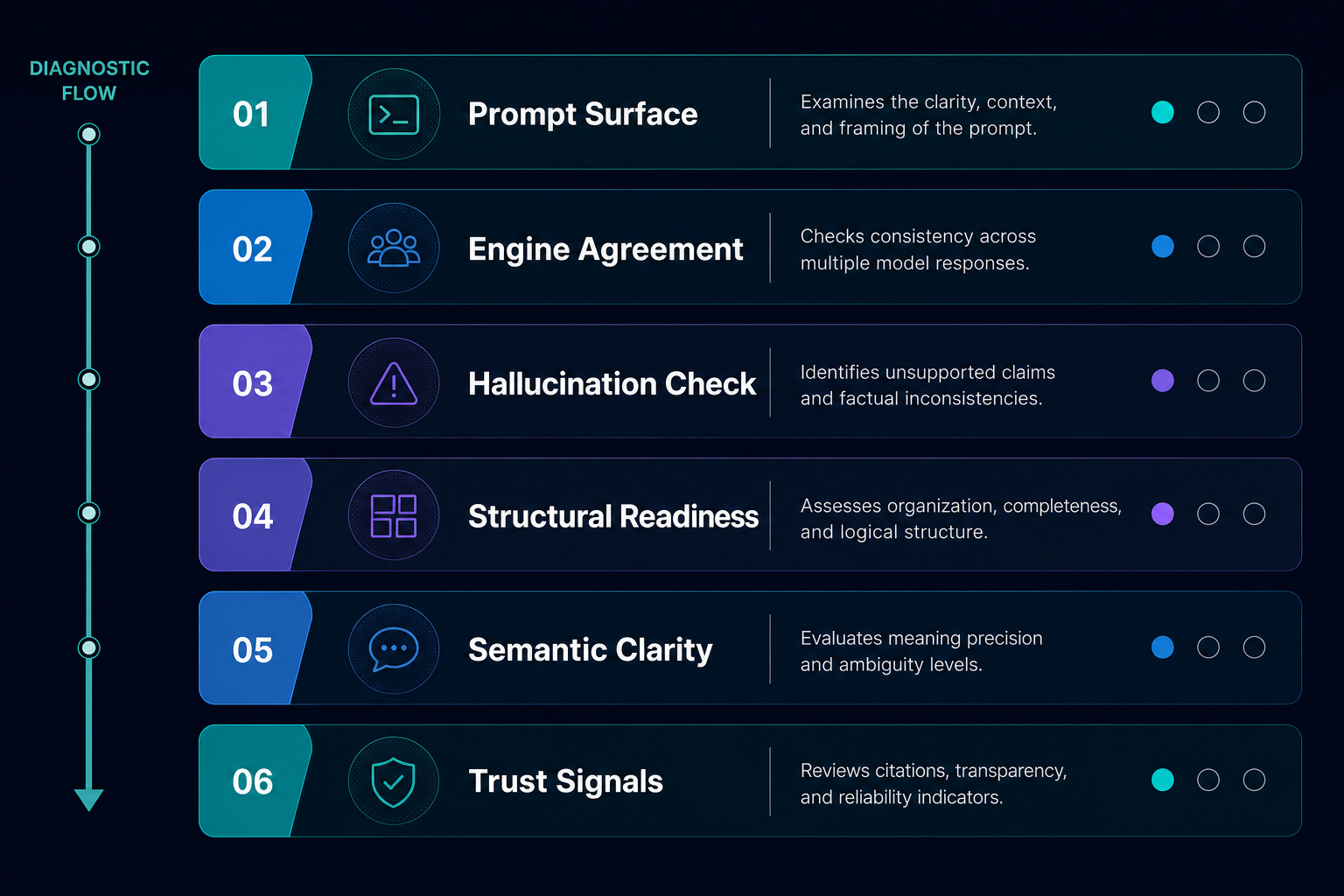

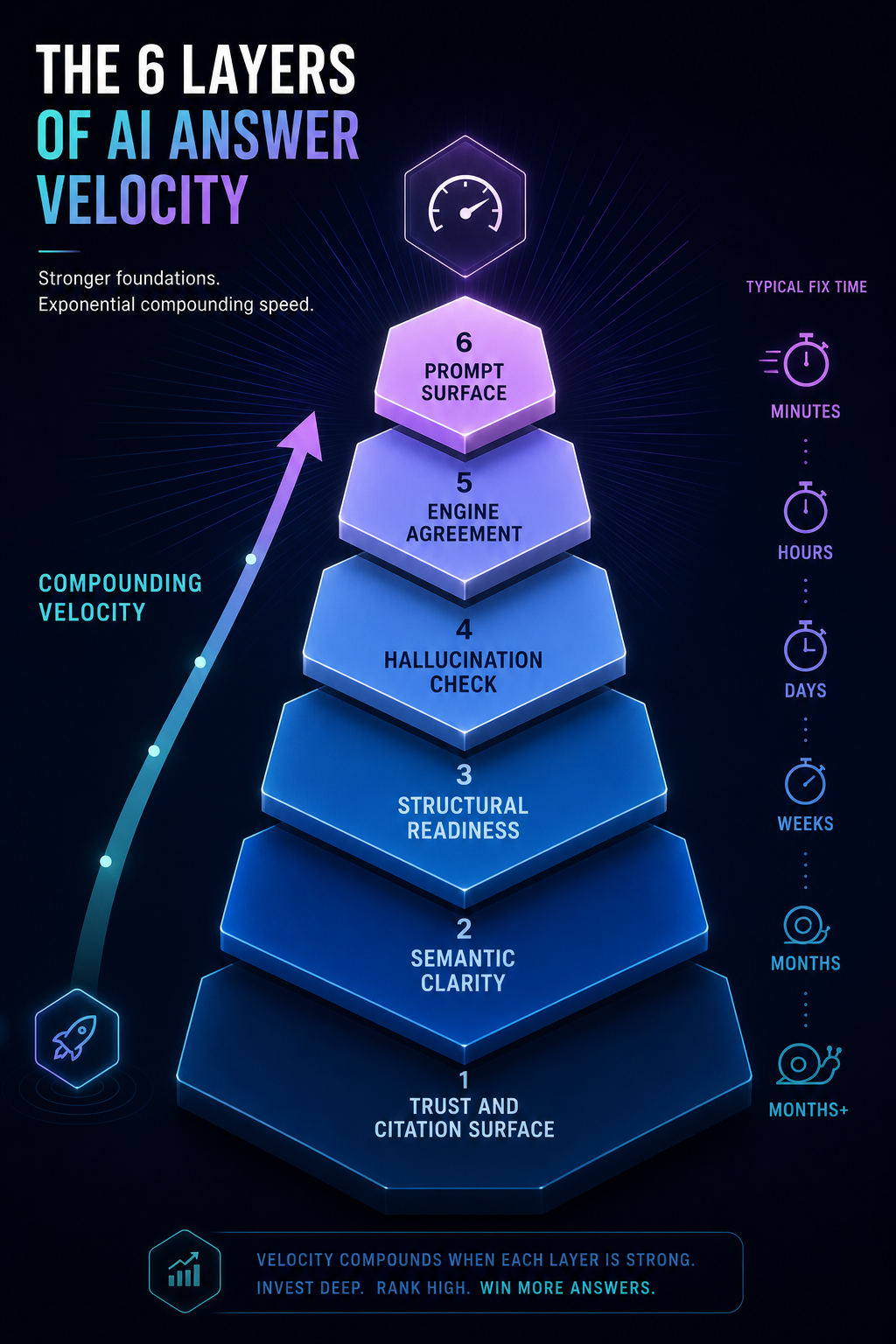

The Six Diagnostic Layers

Run these in order. The order matters, fixes at lower layers compound, fixes at higher layers don’t compound if the lower layers are broken.

Layer 1: Prompt Surface. What AI Actually Says About You

Start with the symptom. Open ChatGPT, Perplexity, Gemini, and Claude. Run 15–25 prompts a buyer in your category would actually ask. Not branded queries. Category queries.

For a project management SaaS, that’s prompts like: “What’s the best project management tool for marketing agencies?” / “Compare Asana and ClickUp for small teams” / “Which PM tool integrates best with Slack and HubSpot?”

Record three things for each prompt: Do you appear? In what position? Are you described accurately? Run the same prompt three times. AI responses vary. If you appear once out of three runs, that’s not visibility. That’s noise.

Score this layer on appearance rate, not just presence. Appearing in 80% of relevant prompts across engines is the bar. Most B2B brands score under 15%.

Layer 2: Engine Agreement. Do AI Systems Tell the Same Story?

For every prompt where you appear, capture how each engine describes you. Then compare. If ChatGPT calls you “a project management tool for enterprises,” Perplexity calls you “a task tracker for freelancers,” and Gemini doesn’t recognize you as a PM tool at all, you have an engine disagreement problem.

Engine disagreement happens when your entity signals are inconsistent across sources. One section of your site positions you for enterprise. Your G2 listing categorizes you as small business. Your founder’s LinkedIn says “for creative teams.” AI models pull from all of it and produce three different summaries.

This is the single most overlooked diagnostic. Brands obsess over getting cited and never check whether the citation tells the right story.

Layer 3: Hallucination and Guessing Check

When AI doesn’t have enough signal about your brand, it guesses. Sometimes the guess is close. Often it’s wrong, wrong pricing, wrong customer segment, wrong features, wrong founding year.

Hallucination is a structural symptom, not an AI failure. It means the model couldn’t find authoritative content fast enough and reached for adjacent patterns. Fix the underlying gap and the hallucination stops.

Test this directly: ask each engine “What does [your company] do?” / “Who are [your company]’s customers?” / “How does [your company] price?” If the answers vary or invent details, log every hallucinated claim. Each one points to a missing or weak authoritative source.

Layer 4: Structural Readiness

Now you’re checking your own site. The question: can AI crawlers and retrievers actually read and extract from your content?

Check these specifically:

- Crawl access: Are GPTBot, ClaudeBot, PerplexityBot, and Google-Extended allowed in robots.txt? Many companies block them by default and don’t know it.

- Content surface: Is your expertise locked inside PDFs, gated downloads, or JavaScript-rendered pages? AI crawlers struggle with all three.

- Schema: Organization, Product, FAQPage, and Article schema present and accurate on relevant pages?

- llms.txt: Do you have one? If not, you’re leaving extraction guidance on the table. Our guide on how to write llms.txt for AI search walks through the format.

- Answer-first formatting: Do your key pages lead with a direct, extractable answer in the first 100 words, or do they warm up for three paragraphs?

This layer is where most teams have the fastest wins. Structural fixes don’t take months, they take a week. And they unlock everything above.

Layer 5: Semantic Clarity and Entity Resolution

Does the open web know who you are as an entity? This is where entity SEO meets AI visibility.

Check these signals:

- Wikipedia or Wikidata entry, if your category supports one

- Consistent company name, founding year, and category across G2, Crunchbase, LinkedIn, and your own About page

- A clear, repeated category descriptor across third-party sources (“marketing analytics platform”, not “platform” on one site, “tool” on another, “software” on a third)

- Founder and key executive profiles linked to the company entity

If three different sources describe you three different ways, AI will pick whichever description is most reinforced, which is almost never the one you want.

Layer 6: Trust and Citation Surface

This is the foundation. AI engines weight sources by perceived authority and topical relevance. Your citation surface is the set of third-party publications, communities, and references where your brand appears in context.

Audit specifically:

- Editorial mentions in publications AI models actually index (industry trade publications, established business media, vertical authority sites)

- Reddit threads, Quora answers, and community discussions where your brand is referenced. Perplexity weights these heavily

- Comparison content on third-party sites (review platforms, “best of” roundups, alternatives pages)

- Conference talks, podcast appearances, and named author bylines on authoritative sites

This layer compounds slowest and matters most. Brands with strong citation surfaces survive engine updates. Brands without them get displaced every time the underlying training data shifts.

Scoring the Framework

Each layer gets scored 0–10. The composite score tells you where you stand. The individual scores tell you where to start.

| Layer | What 0 Looks Like | What 10 Looks Like | Fix Speed |

|---|---|---|---|

| Prompt Surface | Zero appearances in 25 category prompts | 80%+ appearance rate across 4 engines | Symptom only, fix below |

| Engine Agreement | Each engine describes you differently | Consistent positioning across all engines | 4–8 weeks |

| Hallucination Check | Wrong facts in 50%+ of responses | Accurate facts in 90%+ of responses | 2–6 weeks |

| Structural Readiness | Blocked crawlers, no schema, PDF-locked content | Full crawl access, complete schema, answer-first content | 1–2 weeks |

| Semantic Clarity | Inconsistent descriptions across third-party sources | One clear category descriptor everywhere | 6–12 weeks |

| Trust Surface | Zero editorial mentions on indexed publications | Consistent mentions across 20+ relevant publications | 3–6 months |

A score under 30 means you’re effectively invisible. 30–50 means you appear inconsistently. 50–70 means you’re competitive in some queries. Above 70 is rare and defensible.

The Five Failure Modes

Almost every diagnostic result maps to one of these patterns. Identify yours before you fix anything.

1. The Entity Conflict

Symptom: AI confuses you with another company, mislabels your category, or invents details that match a competitor.

Cause: Inconsistent or sparse entity signals across the open web. Often combined with a generic company name or a recent rebrand.

Fix: Layer 5 first. Reconcile your category descriptor across all third-party listings. Build out Wikidata if eligible. Make sure your About page, schema, and primary listings all use the same exact category language.

2. The Structural Lock

Symptom: You have great content but AI never cites it. Competitors with thinner content outrank you in AI responses.

Cause: Your best content is gated, PDF-locked, or buried behind JavaScript. Or you’re blocking AI crawlers without realizing it.

Fix: Layer 4. Audit robots.txt. Extract PDF expertise into HTML pages. Restructure flagship content to lead with extractable answers. This is the fastest-compounding fix in the framework.

3. The Citation Desert

Symptom: AI knows what you do but never recommends you. Competitors with similar features get cited regularly.

Cause: Weak third-party citation surface. AI engines need external validation to surface a brand confidently, your own site isn’t enough.

Fix: Layer 6. Build a real AI citation surface through editorial placements on publications AI models index. This is slow. Six months minimum. It’s also the most defensible result.

4. The Engine Split

Symptom: You appear in ChatGPT but not Perplexity, or in Gemini but not Claude. Each engine tells a different story.

Cause: Different engines weight different sources. ChatGPT leans on training data and a narrow set of high-trust sources. Perplexity leans on real-time web and community sources. Gemini leans on Google’s Knowledge Graph.

Fix: Diversify your surface. If you’re strong on owned content but weak on Reddit and Quora, Perplexity will miss you. If you don’t have a Wikidata or strong Google entity, Gemini will misclassify you. Our breakdown of how brand mentions work in AI search covers engine-specific weighting.

5. The Hallucination Pattern

Symptom: AI mentions you but invents details, wrong pricing, wrong features, wrong customers.

Cause: The model couldn’t find authoritative content for the specific question and filled the gap with adjacent patterns from competitors.

Fix: Identify the hallucinated claim. Find or create authoritative content that directly answers it. Make sure that content is structurally extractable (Layer 4) and reinforced by third-party citations (Layer 6).

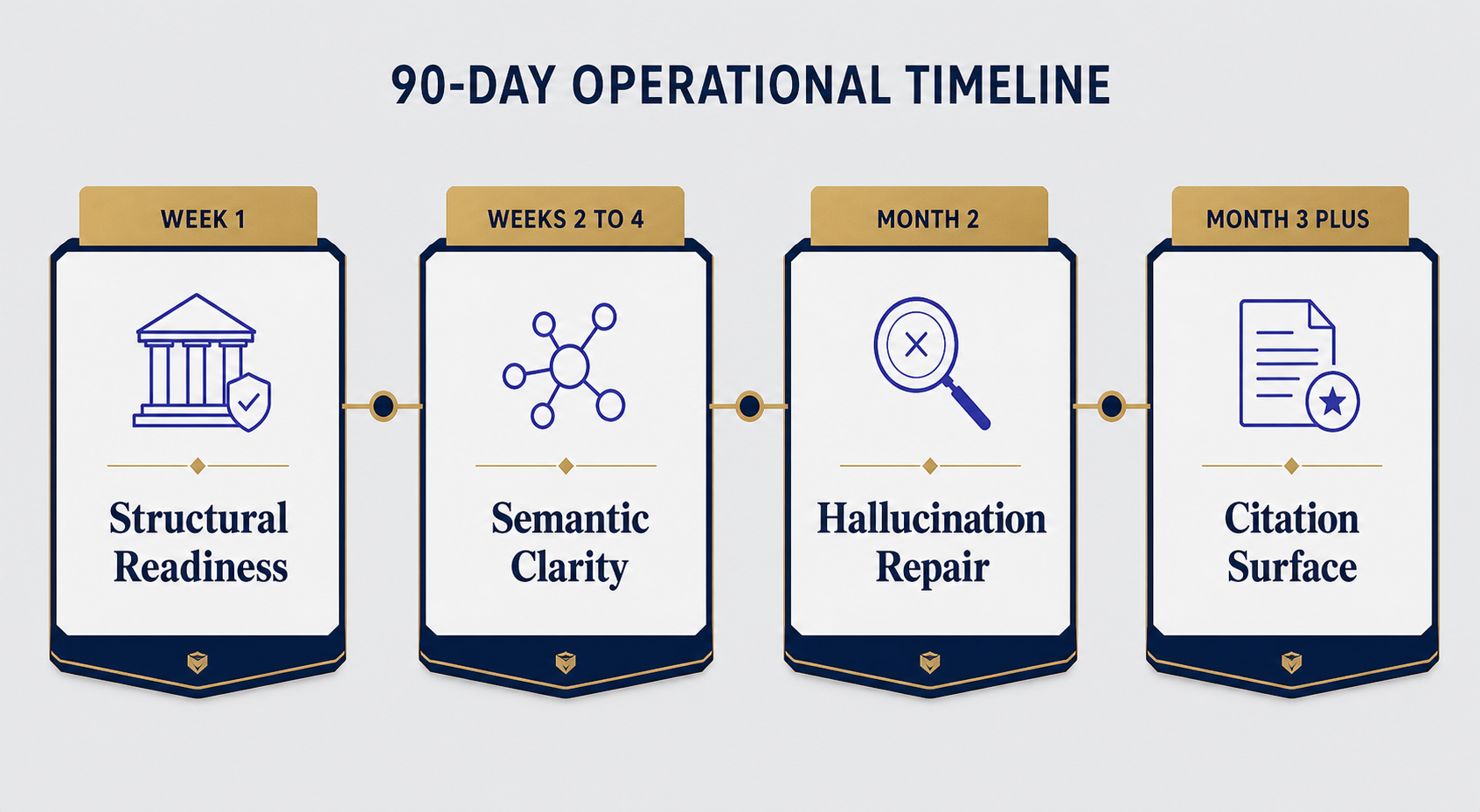

The Right Order of Operations

This is where most diagnostic frameworks fail in practice. They tell you what’s wrong but not what to fix first. Here’s the order that compounds.

Week 1: Structural Readiness

Unblock crawlers. Add or fix schema. Extract PDF-locked content into HTML. Rewrite key landing pages with answer-first formatting in the first 100 words. Publish llms.txt.

This is the fastest layer to fix and it unlocks everything else. Skip it and every later fix underperforms.

Weeks 2–4: Semantic Clarity

Audit how third-party sources describe you. Pick one canonical category descriptor. Update G2, Crunchbase, LinkedIn, your About page, your schema. Reconcile founder and exec profiles. If you qualify for Wikidata, file it.

Track entity consistency across at least 10 high-authority third-party sources.

Month 2: Hallucination Repair

Take every hallucinated claim from Layer 3. For each, create one authoritative page that answers the question directly, factually, and extractably. Reinforce with at least one third-party reference where possible.

Re-run Layer 3 prompts every two weeks. Hallucination rates drop within 30–60 days when the underlying content gap is filled.

Month 3+: Citation Surface

Now build the trust layer. This is the longest fix and the one most teams want to do first. Don’t. Build it last. If you build citations into a broken foundation, the citations don’t compound, they get diluted by inconsistent signals at every other layer.

Target editorial placements on publications AI models actually index. Audit the Reddit and Quora surfaces in your category. Pursue named bylines and podcast appearances that reinforce your category positioning consistently.

What Most Teams Get Wrong

Three patterns keep showing up in audits.

They start with content. They publish 30 new pages while their crawlers are blocked and their entity signals contradict. Result: nothing changes.

They optimize one engine. They get cited in ChatGPT and assume the problem is solved. Then Perplexity drives a major buying decision and they’re nowhere to be found because they never built community-surface signals.

They confuse mentions with citations. A passing mention in a low-authority blog isn’t the same as a contextual citation in a publication AI models weight heavily. The difference shows up in your appearance rate, not your mention count.

The diagnostic framework catches all three. If you scored Structural Readiness at 4 and you’re hiring a content team, you’re solving the wrong problem.

How This Differs From an SEO Audit

SEO audits measure crawlability, rankings, backlinks, and on-page signals against Google. AI visibility diagnostics measure something different: whether your entity is legible, your content is extractable, your story is consistent across engines, and your citation surface is dense enough to survive engine updates.

You can pass an SEO audit and fail an AI visibility diagnostic. We see it constantly. A site with 80+ domain authority, great rankings, clean schema, and zero appearances in ChatGPT. The cause is almost always Layer 5 or Layer 6, strong owned signals, weak third-party reinforcement.

The reverse is also true. A site with mid-tier SEO metrics can dominate AI responses if its entity is sharp, its content is extractable, and its citation surface is dense in the right places.

If you’re auditing both, run them separately. Combining them produces a checklist that fixes neither problem well.

Frequently Asked Questions

How long does the full diagnostic take to run?

A first-pass diagnostic across all six layers takes 4–6 hours of focused work. Layer 1 (prompt testing) is the slowest because you need to run prompts across multiple engines and multiple times to control for variance. The rest, structural audit, entity check, citation surface review, moves faster once you know what to look for.

How often should I re-run the diagnostic?

Re-run Layers 1–3 monthly. They’re symptom-level and drift quickly with engine updates. Re-run Layers 4–6 quarterly. They change slowly and require deeper work to move. If you do a major rebrand, repositioning, or product launch, run the full diagnostic immediately afterward.

Which AI engine should I prioritize?

Prioritize by where your buyers actually research. For most B2B categories, that’s ChatGPT and Perplexity. For visual or local categories, Gemini matters more. For technical and developer audiences, Claude usage is growing fast. Don’t optimize for one engine, optimize for the engines your buyers use, then verify with the others.

Can I run this diagnostic on a competitor?

Yes, and you should. Run Layers 1–3 on your top three competitors. You’ll learn which engines they’re winning, how they’re being described, and where their hallucination patterns are. That tells you exactly where your positioning has room to win, especially in share of voice across AI surfaces.

What if my appearance rate is zero?

Zero appearance rate almost always points to Layer 5 or Layer 6. Your entity is either invisible or unrecognizable to the engines. Start with Layer 4 to make sure nothing is structurally blocking AI crawlers, then move to Layer 5 to reconcile your entity signals. Citation surface (Layer 6) is the long fix, but without Layers 4 and 5 in place first, citations don’t compound.

Does this framework work for local businesses?

The structure works, but Layer 6 looks different. For local businesses, citation surface includes Google Business Profile consistency, local directory signals, and review platform presence. The diagnostic logic is identical, only the source set changes.

How does this connect to traditional brand monitoring?

Traditional brand monitoring tools track where your brand appears across the web. The diagnostic framework tells you whether those appearances translate into AI visibility. They’re complementary, monitoring tells you what’s happening, diagnostics tells you why and what to fix.

Run the Diagnostic This Week

Open ChatGPT today. Run 10 category prompts. Note where you appear, where you don’t, and what AI says about you when you do. That’s your Layer 1 baseline. The rest of the framework gives you the path from “we’re invisible” to “we’re cited consistently”, but it starts with knowing what’s actually broken.

The brands that will own AI search in 2027 aren’t the ones publishing the most content right now. They’re the ones running diagnostics, fixing the right layer first, and compounding their citation surface while everyone else is still guessing.

Want the full diagnostic worksheet with prompts, scoring rubric, and fix priorities? Get your free AI visibility audit and we’ll run the framework against your brand and your top competitors.

Nice blog

Right, this is good blog

Informative blog