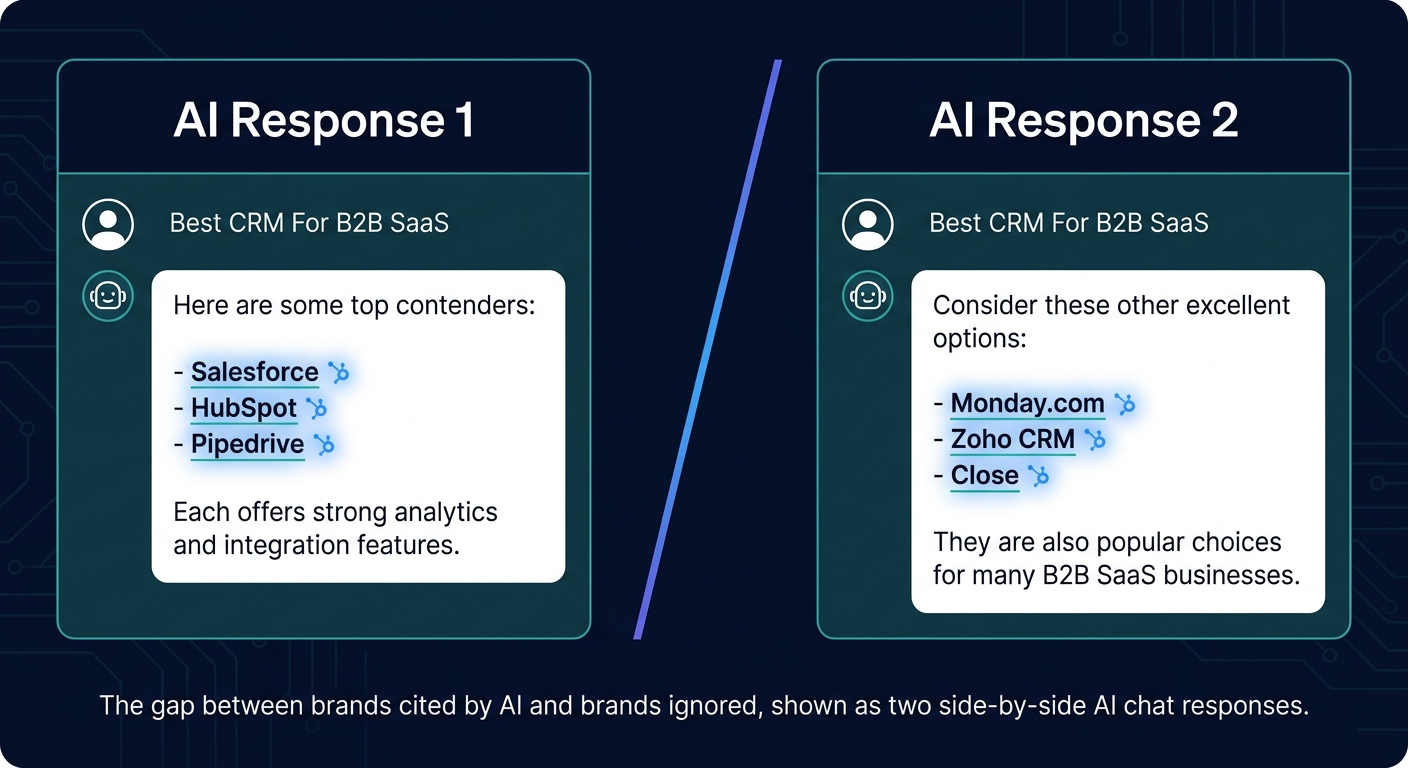

Your brand doesn’t show up when a buyer asks ChatGPT for recommendations in your category. Your competitor does. That gap didn’t appear overnight. It compounded over months while AI models quietly trained on editorial sources that mentioned them and ignored you. An AI citation service exists to close that gap: it places your brand inside the editorial content that large language models actually learn from, so you get surfaced when AI answers the questions your buyers are asking.

An AI citation service is a specialist agency or platform that earns brand mentions on the high-authority publications LLMs use as training data, so your brand gets cited, recommended, and surfaced in AI-generated answers from ChatGPT, Perplexity, Gemini, and Claude.

What You’ll Learn

- What an AI citation service actually does, and what separates real ones from rebranded SEO agencies

- Why AI models cite some brands and skip others (it’s not the brands with the most backlinks)

- The 5 criteria a citation service should qualify every publication against

- Realistic timelines: when you’ll see your first AI citation, and when results compound

- How to evaluate an AI citation service before you sign anything

- When you need one, and when you don’t

What an AI Citation Service Actually Does

Strip away the marketing language, and an AI citation service does one thing: it earns editorial mentions of your brand on publications that LLMs crawl, index, and cite when generating answers. Not backlinks. Not press releases. Not guest posts on random blogs. Specifically the publications that make it into the training data and real-time retrieval systems of ChatGPT, Perplexity, Gemini, and Claude.

That distinction matters more than most teams realize. A backlink on a high-DA site may help your Google rankings. It may do nothing for your AI visibility if the source isn’t part of how AI models build brand-category associations. The two goals overlap, but they’re not the same, and treating them as identical is the single biggest reason brands pour money into “AI SEO” services and see zero movement in LLM citations.

The Three Jobs of a Real Citation Service

A legitimate AI citation service handles three jobs that sit outside what traditional SEO or PR agencies do well:

- Source qualification. Identifying which publications LLMs actually pull from for your category, not just high-DA sites, but topically relevant ones that show up in AI response sources.

- Editorial placement. Earning natural, contextually relevant mentions inside editorial content (not sponsored sections, not author boxes) on those qualified publications.

- Citation tracking. Measuring whether your brand’s presence in AI answers actually changes, across ChatGPT, Perplexity, Gemini, and Claude, over the weeks and months following placement.

If a service offers you placements without qualifying sources against AI citation behavior, you’re buying link building with a new label. If they can’t show you how they track AI citations post-placement, you have no way to know if the work is compounding.

Why LLMs Cite Some Brands and Ignore Others

For the per-platform walkthroughs that make a citation program measurable, see how to check brand mentions in ChatGPT and how to track brand mentions in Perplexity. Monitoring brand mentions in LLMs covers the cross-platform cadence any citation service should support from day one.

AI models don’t cite brands because those brands have good websites. They cite brands because the brands appear, repeatedly, in context, across credible editorial sources, inside the data the model learned from or retrieves from in real time.

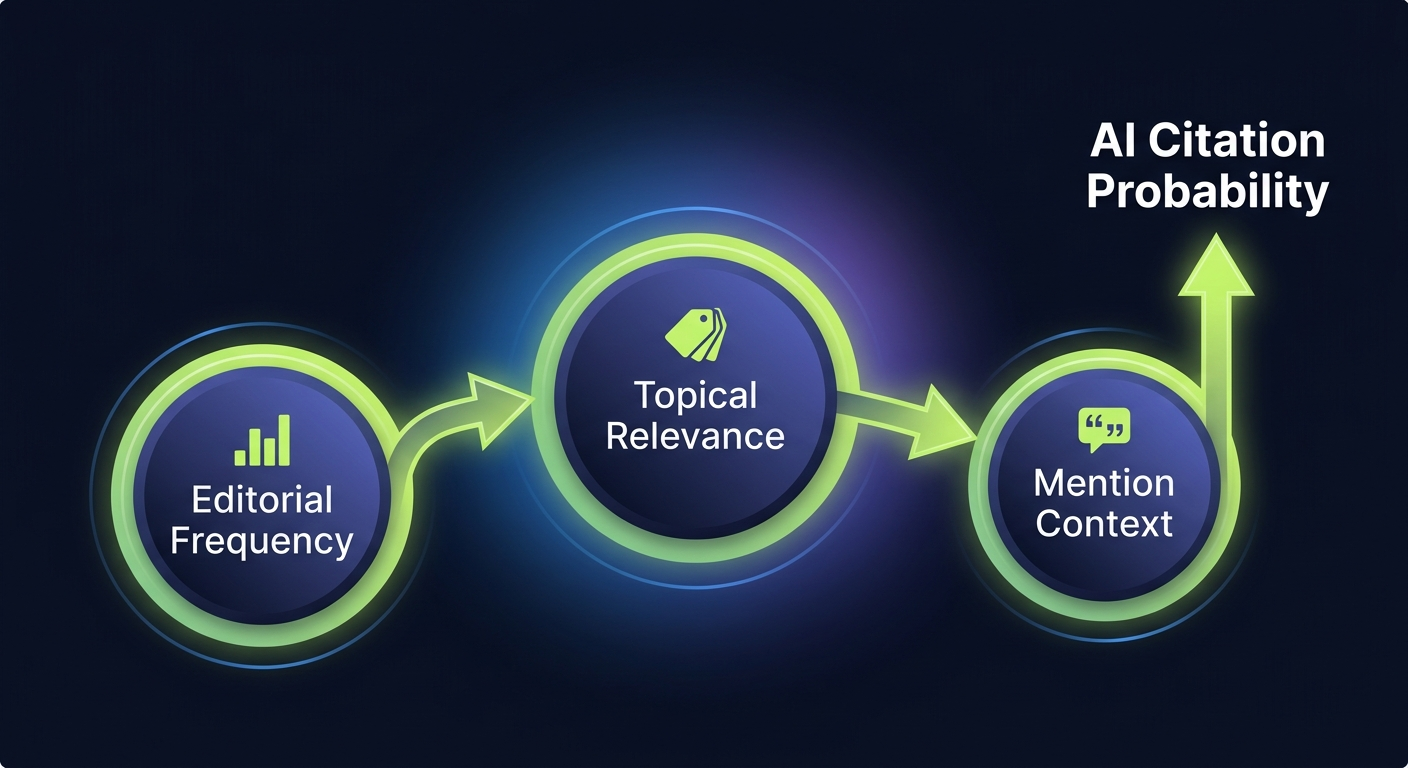

Three signals drive whether an LLM associates your brand with a category:

1. Editorial Frequency Across Trusted Sources

A brand mentioned once on Forbes won’t become a go-to AI recommendation. A brand mentioned 30 times across 15 different trusted publications in the same category will. LLMs weight repeated co-occurrence between your brand and the category vocabulary buyers use. One high-profile mention is a spike. Thirty contextual mentions is a pattern, and patterns are what models learn.

2. Topical Relevance of the Source

A mention on a SaaS-focused industry publication beats a mention on a general business site for a B2B SaaS brand, even if the general site has higher domain authority. AI models build category associations from sources that are already categorized as authoritative on that topic. Relevance wins over raw authority more often than teams expect.

3. Context of the Mention

“[Your Brand] is a leading CRM for mid-market B2B teams” teaches an AI model something specific. Your brand name appearing in a list of 50 companies without context teaches it almost nothing. Editorial context, where the mention sits inside an argument, comparison, or recommendation, shapes how much weight the model gives it.

The 5 Criteria a Citation Service Should Qualify Against

Before a single placement gets pitched, a serious AI citation service qualifies every candidate publication against a specific checklist. If the service you’re evaluating can’t articulate its qualification criteria, that’s your answer.

Here’s what qualification should actually look like:

| Criterion | What It Measures | Why It Matters |
|---|---|---|
| LLM retrieval presence | Does this publication show up in AI response source citations for your category? | If AI models don’t cite the source, your mention there won’t reach AI-generated answers. |
| Topical alignment | Is the publication categorized as authoritative on your specific topic? | Topical relevance outweighs raw DA in how AI builds category associations. |
| Editorial standards | Does the publication produce original, editorially reviewed content? | AI models weight editorial content higher than syndicated or sponsored material. |
| Indexing behavior | Is the site regularly crawled and indexed by major AI crawlers (GPTBot, ClaudeBot, PerplexityBot)? | If AI crawlers don’t see the site, your mention won’t enter the retrieval pool. |
| Mention placement quality | Can the mention appear in editorial body content, not author bylines, sidebars, or ad units? | Body-content mentions carry meaningful context. Byline or sidebar mentions often don’t. |

A citation service that skips any of these is giving you placements, not strategic citations. The difference shows up three months later when you’re still invisible in AI answers and wondering why.
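To make the checklist concrete, here is a minimal Python sketch that records the five criteria as pass/fail flags for one candidate publication. The class name, field names, and example publications are hypothetical, and real qualification would rest on evidence gathered for each criterion rather than a hand-set boolean.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PublicationCheck:
    """One candidate publication scored against the five criteria (illustrative)."""
    name: str
    llm_retrieval_presence: bool     # shows up in AI response sources for the category
    topical_alignment: bool          # authoritative on the specific topic
    editorial_standards: bool        # original, editorially reviewed content
    indexing_behavior: bool          # reachable by the major AI crawlers
    mention_placement_quality: bool  # mention can sit in editorial body content


def failed_criteria(pub: PublicationCheck) -> list[str]:
    """Return the criteria this publication does not meet, in checklist order."""
    return [
        f.name
        for f in fields(pub)
        if isinstance(getattr(pub, f.name), bool) and not getattr(pub, f.name)
    ]


def qualifies(pub: PublicationCheck) -> bool:
    """A publication qualifies only when every criterion passes."""
    return not failed_criteria(pub)


# Hypothetical candidates, for illustration only.
niche = PublicationCheck("Example SaaS Weekly", True, True, True, True, True)
general = PublicationCheck("Example General Biz", True, False, True, False, True)

print(qualifies(niche))
print(failed_criteria(general))
```

Listing the failed criteria, rather than returning a single score, keeps the reason for a rejection visible when a publication is dropped from the pitch list.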

Realistic Timelines: What to Actually Expect

This is where most services oversell and most buyers get disappointed. AI citation work compounds. It doesn’t spike.

Month 1–2: Foundation

Initial placements go live. You’ll see a small number of brand mentions entering the editorial ecosystem. Don’t expect AI citations yet: most LLMs haven’t updated their retrieval with this new content, and training data updates happen on their own cadence.

Month 3–4: Early Signal

Perplexity and Google AI Overviews, both of which rely heavily on real-time retrieval, typically start surfacing the new mentions first. You may see your brand appearing as a cited source in Perplexity responses for niche, long-tail queries in your category.

Month 5–8: Compounding

As mention density reaches critical mass (usually 15–30 contextually relevant placements), AI models begin associating your brand with category vocabulary more consistently. ChatGPT and Gemini start including you in category recommendations. This is the inflection point.

Month 9–12: Category Presence

Consistent citation across multiple AI platforms for high-intent category queries. Your brand becomes part of the default recommendation set. This is what compound AI visibility actually looks like, and it’s why teams that quit at month 3 never see it.

Honestly? Most teams underestimate how long this takes and overestimate how fast SEO-style tactics work. AI citation building is slower than paid ads, but it reaches category dominance faster than organic SEO does. Plan for 6 months minimum before evaluating ROI.

How to Evaluate an AI Citation Service Before You Sign

The citation-service mistake we see most often in vendor audits is a team skipping the reference call and judging the partner from the sales deck and a handful of screenshots. A 30-minute conversation with a current client in the same category tells you more about whether the placements actually move category prompts than any capabilities document, and it surfaces the kind of month-four friction (editor churn, delayed drafts, citation decay) that rarely appears in a pitch.

Six questions separate real citation services from repackaged link builders. Ask every one of them before committing.

1. Can You Show Me Which AI Responses Cite Your Existing Clients?

A real citation service can pull up examples of specific AI queries where their clients appear as cited sources. If they can only show you “published mentions” without any connection to AI citation outcomes, they’re measuring the wrong thing.

2. How Do You Qualify Publications Before Pitching?

You want to hear a specific, repeatable process, not “we work with top-tier publications.” Ask them to walk through how they’d qualify three sources for your specific category. Vague answers mean no process.

3. What’s Your Placement-to-Citation Conversion Rate?

Good services track how many of their placed mentions actually end up surfaced in AI answers. It’s not 100%, no one’s is, but they should have a number. No number means no tracking.
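As a rough illustration of the metric, the rate is simply the number of placements that surface in AI answers divided by the number of placements that went live. The function name and the figures below are made up for the example and are not benchmarks from any service.

```python
def placement_to_citation_rate(placements_live: int, placements_cited: int) -> float:
    """Share of live placements that were later surfaced in at least one AI answer."""
    if placements_live <= 0:
        raise ValueError("placements_live must be positive")
    if not 0 <= placements_cited <= placements_live:
        raise ValueError("placements_cited must be between 0 and placements_live")
    return placements_cited / placements_live


# Hypothetical figures: 20 placements live, 7 of them seen as cited sources.
rate = placement_to_citation_rate(20, 7)
print(f"{rate:.0%}")
```

When a vendor quotes a rate, ask how they define "cited" (which platforms, which prompts, over what period), because the number is only comparable when the definition is fixed.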

4. How Do You Handle AI Crawler Access on Placement Sites?

This is a technical question that separates serious services from generalists. If a target publication blocks GPTBot or ClaudeBot in robots.txt, your mention there won’t enter the AI training pool. A real citation service audits this before pitching.
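One way such an audit could be sketched uses Python’s standard `urllib.robotparser` to test each AI crawler’s user agent against a publication’s robots.txt. The robots.txt text and the article URL below are invented for illustration. A live check would load the real file with `RobotFileParser.set_url()` and `read()`, and robots.txt rules are only one of several ways a site can restrict crawlers.

```python
from urllib.robotparser import RobotFileParser

# Crawler user agents named in the qualification criteria above.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_ai_crawlers(robots_txt: str, article_url: str) -> list[str]:
    """Return the AI crawlers that robots.txt disallows from fetching article_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, article_url)]


# Hypothetical robots.txt that blocks GPTBot and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/articles/crm-roundup")
print(blocked)
```

Parsing from text rather than fetching inside the function keeps the check easy to run against saved copies of robots.txt files, which is useful when auditing a long list of candidate publications.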

5. Do You Track Citations Across All Four Major LLMs?

ChatGPT, Perplexity, Gemini, and Claude behave differently. A service that only tracks ChatGPT is missing most of the visibility picture. Cross-platform tracking is table stakes in 2026.
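A cross-platform view can be as simple as a per-platform share of logged answers that mention the brand. The sketch below assumes you have already saved answer text from each platform for a fixed prompt set. The brand name "Acme", the sample answers, and the function names are all hypothetical, and plain text matching is a simplification that ignores linked sources and misspellings.

```python
import re
from collections import Counter

PLATFORMS = ("ChatGPT", "Perplexity", "Gemini", "Claude")


def mentions_brand(answer: str, brand: str) -> bool:
    """Case-insensitive whole-word match for the brand name in an answer."""
    pattern = rf"\b{re.escape(brand)}\b"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None


def citation_rate_by_platform(log, brand):
    """log: iterable of (platform, answer_text) pairs from saved prompt runs."""
    asked, cited = Counter(), Counter()
    for platform, answer in log:
        asked[platform] += 1
        if mentions_brand(answer, brand):
            cited[platform] += 1
    # None marks a platform with no logged prompts, which is different from 0%.
    return {p: (cited[p] / asked[p] if asked[p] else None) for p in PLATFORMS}


# Invented log entries for illustration only.
log = [
    ("ChatGPT", "Top CRMs include Acme and Globex."),
    ("ChatGPT", "Consider Globex for mid-market teams."),
    ("Perplexity", "acme is a popular option."),
    ("Gemini", "Globex and Initech lead the category."),
]
rates = citation_rate_by_platform(log, "Acme")
print(rates)
```

Returning `None` for an untracked platform makes the gap explicit: a service that reports only ChatGPT would show three platforms with no data rather than three zeros.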

6. What Happens in Month 2 If I See No Citations Yet?

The answer should be a clear explanation of the compounding timeline, not reassurances. If they tell you citations spike in week 2, walk away. That’s not how this works.

When You Need a Citation Service, and When You Don’t

Not every brand needs this. Spending money on AI citations before you have the fundamentals in place is like running paid ads before your landing page works.

You Need an AI Citation Service When:

- Your category is already being discussed in AI answers, and your competitors are getting cited while you’re not

- Your buyers are actively using AI assistants in the research phase (most B2B SaaS buyers are, as of 2026)

- You have a solid product and brand narrative but limited editorial presence on industry publications

- You’ve tried PR and traditional SEO and neither is moving your AI citation rate

- You’re playing a long game, 6 to 12 months of compounding, not a quick win

You Probably Don’t Need One Yet When:

- Your product isn’t ready for the buyers AI is sending you

- Your website can’t convert traffic from high-intent queries

- Your category isn’t mature enough yet for AI models to have strong category vocabulary (very early-stage categories)

- You don’t have budget for at least a 6-month engagement; anything shorter won’t show meaningful results

Be honest with yourself on that last point. The compounding timeline is real, and shorter engagements are a waste of money for both sides.

In-House vs. Citation Service: The Real Tradeoff

Teams often ask whether they can do this in-house. The answer is yes, if you have the right people and enough time. Most don’t.

| Factor | In-House | Citation Service |
|---|---|---|
| Publication relationships | Built from scratch, 6–12 months minimum | Existing relationships across qualified publications |
| Source qualification | Requires dedicated research time and AI citation tracking tools | Built into the service process |
| Editorial pitching | Time-intensive, typically 1 FTE minimum | Handled by the service team |
| Citation tracking | Requires purchasing or building tracking infrastructure | Included in reporting |
| Monthly cost | $8K–$15K (FTE + tools) with slower ramp | $5K–$20K depending on scope |
| Time to first citation | 6–9 months typical | 3–4 months typical |

The math tips toward a service when your team lacks existing editorial relationships in your category, which is true for most B2B SaaS companies under $50M ARR. If you have a PR veteran on staff with strong industry contacts, in-house becomes viable.
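For a back-of-the-envelope version of that math, multiply the monthly cost range by the time-to-first-citation range from the table. This sketch assumes flat monthly spend and counts only the months up to the first citation, which is a simplification: it ignores ramp-up, contract minimums, and everything that happens after the first citation. The function name is illustrative.

```python
def spend_to_first_citation(monthly_cost_range, months_range):
    """Return (low, high) cumulative spend up to the first citation.

    Both arguments are (low, high) tuples taken from the comparison table.
    """
    low = monthly_cost_range[0] * months_range[0]
    high = monthly_cost_range[1] * months_range[1]
    return low, high


# Ranges from the table: monthly cost in dollars, months to first citation.
in_house = spend_to_first_citation((8_000, 15_000), (6, 9))
service = spend_to_first_citation((5_000, 20_000), (3, 4))

print(f"In-house: ${in_house[0]:,} to ${in_house[1]:,}")
print(f"Service:  ${service[0]:,} to ${service[1]:,}")
```

Under these assumptions the in-house range works out to $48,000–$135,000 and the service range to $15,000–$80,000, so the ranges overlap: a top-scope service engagement can cost more than a lean in-house effort before either produces a citation.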

How BrandMentions Approaches AI Citation Work

A quick note on where BrandMentions fits in this picture, because the approach matters more than the label.

Across the AI visibility campaigns we’ve run, the brands that compound fastest share one trait: they treat citation building as a category-authority play, not a link-building play. Our process qualifies every target publication against the five criteria above before any pitch goes out. We track citation outcomes across ChatGPT, Perplexity, Gemini, and Claude, not just placement volume, because volume without citation surfacing is a vanity metric.

If you want a baseline before committing to a tool or process, request a quick AI visibility audit. We’ll run 25 category-relevant prompts across ChatGPT, Perplexity, Gemini, and Google AI Overviews so you can see exactly which sources each platform trusts for your category, and which competitors are capturing citations you’re not.

Frequently Asked Questions

What’s the difference between an AI citation service and an SEO agency?

An AI citation service earns brand mentions on publications that LLMs use as training data and retrieval sources, specifically to influence how your brand appears in AI-generated answers. An SEO agency optimizes your site and earns backlinks to influence Google rankings. The goals overlap, but the target sources, tactics, and success metrics are different.

How long until I see my brand cited by ChatGPT?

The typical timeline is 5 to 8 months for consistent ChatGPT citations. Perplexity and Google AI Overviews usually show results earlier, around month 3 to 4, because they rely more heavily on real-time retrieval. ChatGPT and Gemini take longer because their citation behavior depends on mention density reaching critical mass across multiple sources.

Do AI citations affect traditional SEO rankings?

Indirectly, yes. Many of the publications that drive AI citations are also high-authority sites that pass link equity and brand signals to Google. But the primary goal of an AI citation service is AI visibility; SEO improvements are a beneficial side effect, not the core outcome.

Can I just ask ChatGPT to mention my brand?

No. LLMs don’t add brands to their recommendations on request. Their outputs reflect the patterns they learned from training data and retrieve from indexed sources. The only way to change what ChatGPT says about your category is to change what the editorial web says about your category, which is what a citation service does.

How much should I budget for an AI citation service?

Serious AI citation services run between $5,000 and $20,000 per month depending on scope, publication tier, and citation tracking depth. Anything under $3,000 per month is almost certainly repackaged link building. Plan for a minimum 6-month engagement; shorter commitments don’t allow the compounding effect that drives real results.

Will AI citations matter more in 2027 than they do in 2026?

Yes. AI assistants are taking a larger share of the research and discovery phase of buying journeys every quarter. Brands that build citation density now will own those AI recommendation slots when the competition tries to catch up in 2027 and 2028. The compounding nature of editorial presence means late starters pay a significant catch-up tax.

A 90-Day Citation-Building Plan to Start This Quarter

Most brands will wait too long to take AI citations seriously. They’ll watch a competitor get cited by ChatGPT for a year, tell themselves it doesn’t matter yet, and then panic in month 14 when half their inbound research traffic has moved to AI assistants. By then, the competitor’s citation density is already compounding and the gap is expensive to close.

The brands that move now, even modestly, even cautiously, will own category recommendation slots that get harder to break into every month. AI citation building is slow. It’s also durable. That’s the tradeoff.
