AI rank trackers for brand mentions show you exactly how — and how often — your brand appears inside AI-generated answers from ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews. As of 2026, this category has exploded from a handful of beta tools to more than 30 competing platforms, each claiming to solve the same problem: visibility in AI search.

But most B2B marketing teams still pick the wrong tool — or track the wrong things entirely. The result is wasted budget, misleading dashboards, and zero strategic clarity on what actually drives AI recommendations.

This article breaks down what AI rank trackers actually measure, which capabilities matter for brand mention tracking specifically, and how to evaluate platforms based on what your team needs in 2026 — not what a vendor’s feature list promises.

Key Takeaways

- AI rank trackers and AI brand mention trackers solve different problems — most teams need both, but few tools do both well

- Prompt selection matters more than platform count; tracking 50 high-intent prompts beats 500 generic ones

- Citation source analysis reveals why AI mentions your competitors — not just that it does

- Sentiment and narrative framing in AI responses shape buyer perception before your sales team ever speaks to a prospect

- No tracker can guarantee AI recommendations; the data informs your content and citation strategy, but it cannot control what a model outputs

- Pricing ranges from free tiers to $3,000+/month; the best value depends on prompt volume, engine coverage, and whether you need optimization guidance or just monitoring

What AI rank trackers for brand mentions actually measure

An AI rank tracker monitors your brand’s position, frequency, and context inside AI-generated responses. Unlike traditional SEO rank trackers that report your website’s position on a search engine results page, AI rank trackers analyze the text output of large language models.

When someone asks ChatGPT “What’s the best CRM for mid-market SaaS companies?” the model generates a response that may or may not include your brand. An AI rank tracker captures that response, identifies whether your brand appears, notes its position relative to competitors, and records which sources the model cited.

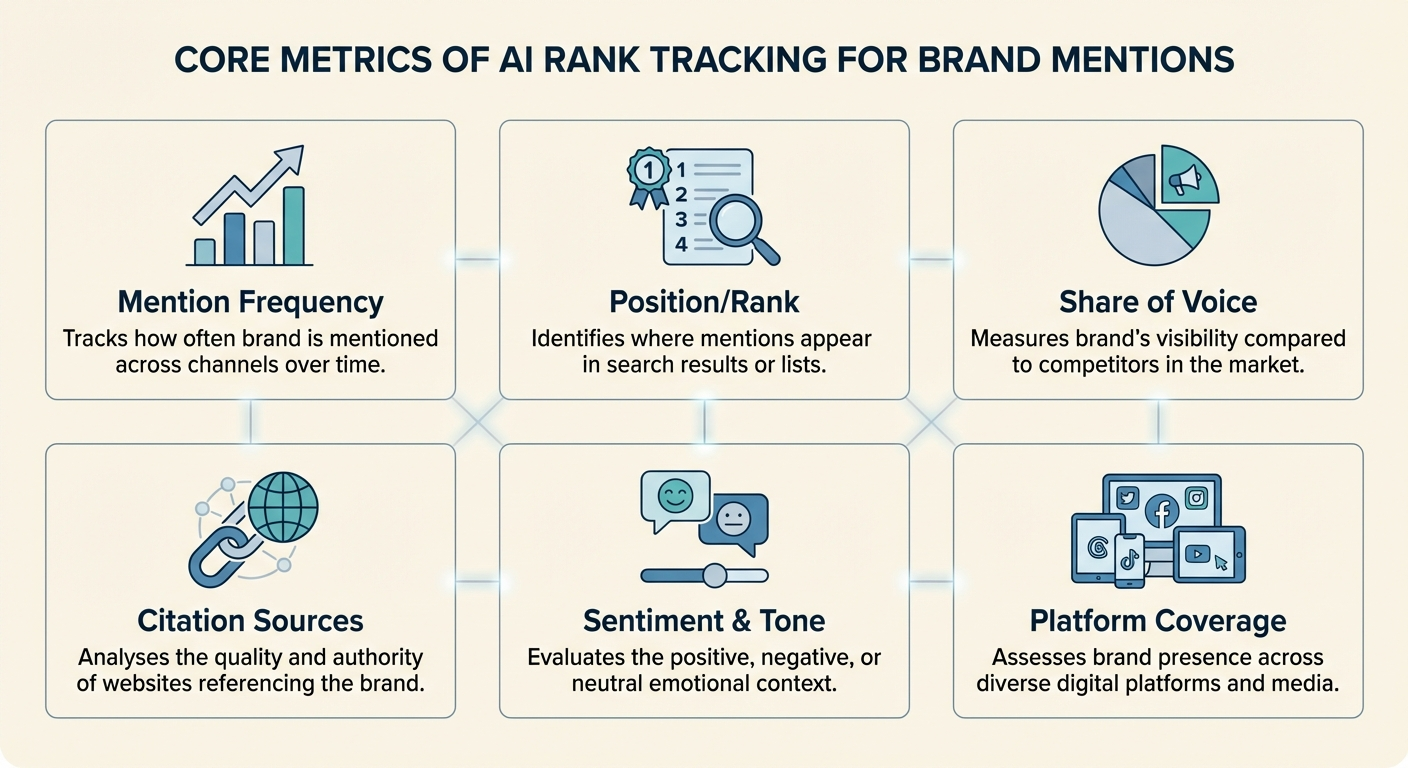

The core metrics these tools report include:

- Mention frequency — how often your brand name appears across tracked prompts

- Position or rank — where your brand falls in a list when the AI generates one

- Share of voice — your mention rate compared to competitors within the same prompt set

- Citation sources — the URLs or domains the AI model references when mentioning your brand

- Sentiment and tone — whether the AI describes your brand positively, neutrally, or with caveats

- Platform coverage — which AI engines (ChatGPT, Gemini, Claude, Perplexity, Copilot) include your brand
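To make the share-of-voice metric concrete, here is a minimal Python sketch. It assumes a "mention" is a case-insensitive substring match counted once per response, and that share of voice is each brand’s mentions divided by all brand mentions in the prompt set. Both are simplifying assumptions, not how any particular tracker computes it, and the brand names are hypothetical.

```python
from collections import Counter

def share_of_voice(responses, brands):
    """Share of all brand mentions captured by each brand.

    Assumption: a mention is a case-insensitive substring match,
    counted at most once per response.
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

# Hypothetical responses and brand names, for illustration only.
responses = [
    "Acme and Globex are solid picks.",
    "Globex leads the category.",
    "Consider Initech or Globex.",
]
print(share_of_voice(responses, ["Acme", "Globex", "Initech"]))
# {'Acme': 0.2, 'Globex': 0.6, 'Initech': 0.2}
```

Substring matching will miscount brands whose names are common words, so a production tool would likely need entity matching rather than this shortcut.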

The challenge is that AI responses are nondeterministic. Ask the same question twice and you may get different answers. Reputable trackers address this by running each prompt multiple times — typically three to five repetitions — and reporting averaged results rather than single snapshots.
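The averaging step can be sketched in a few lines of Python. This assumes each repetition of a prompt has already been reduced to an ordered list of the brands it recommended; that input shape and the brand names are hypothetical, chosen only to illustrate the idea.

```python
from statistics import mean

def summarize_runs(runs, brand):
    """Summarize repeated runs of one prompt for a single brand.

    runs: list of ranked brand lists, one list per repetition.
    """
    # 1-based position in every run where the brand appears at all.
    positions = [run.index(brand) + 1 for run in runs if brand in run]
    return {
        "mention_rate": len(positions) / len(runs) if runs else 0.0,
        "avg_position": mean(positions) if positions else None,
    }

# Five hypothetical repetitions of the same prompt.
runs = [
    ["Globex", "Acme", "Initech"],
    ["Globex", "Initech"],
    ["Acme", "Globex"],
    ["Globex", "Acme"],
    ["Initech", "Globex", "Acme"],
]
summary = summarize_runs(runs, "Acme")
# Acme appears in 4 of 5 runs (mention_rate 0.8) at an average position of 2.
print(summary)
```

Reporting the mention rate alongside the average position matters: a brand that ranks first in one run out of five is less visible than one that ranks third in all five.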

AI rank tracking vs. AI brand mention monitoring — what’s the difference?

These terms get used interchangeably, but they describe distinct capabilities. Confusing them leads to buying the wrong tool.

AI rank tracking focuses on position. It answers: “Where does my brand appear in the AI’s list of recommendations for this prompt?” Think of it as the AI equivalent of checking your Google ranking for a keyword.

AI brand mention monitoring focuses on narrative. It answers: “How does the AI describe my brand, what strengths does it highlight, what weaknesses does it flag, and how does it compare me to competitors?”

| Capability | AI Rank Tracking | AI Brand Mention Monitoring |
|---|---|---|
| Primary question | Am I included and where? | How am I described and framed? |
| Key metric | Position, visibility score | Narrative themes, sentiment, comparison patterns |
| Useful for | Tracking progress over time | Shaping content and PR strategy |
| Limitation | Doesn’t explain why you rank | Doesn’t quantify competitive position cleanly |

Most teams need both. A brand that ranks third for “best project management tools” but is consistently described as “expensive and complex” has a positioning problem that rank tracking alone won’t surface. Meanwhile, a brand with positive sentiment but zero mentions in commercial prompts has a visibility problem that narrative monitoring alone won’t quantify.

For a deeper look at monitoring specifically, see how to track brand mentions across AI search platforms.

Which features actually matter for tracking brand mentions in AI

Feature lists on AI tracker websites are long and growing. Not every feature carries equal weight. Based on what drives actionable decisions for B2B marketing teams, here’s what to prioritize.

Multi-engine coverage with per-engine breakdowns

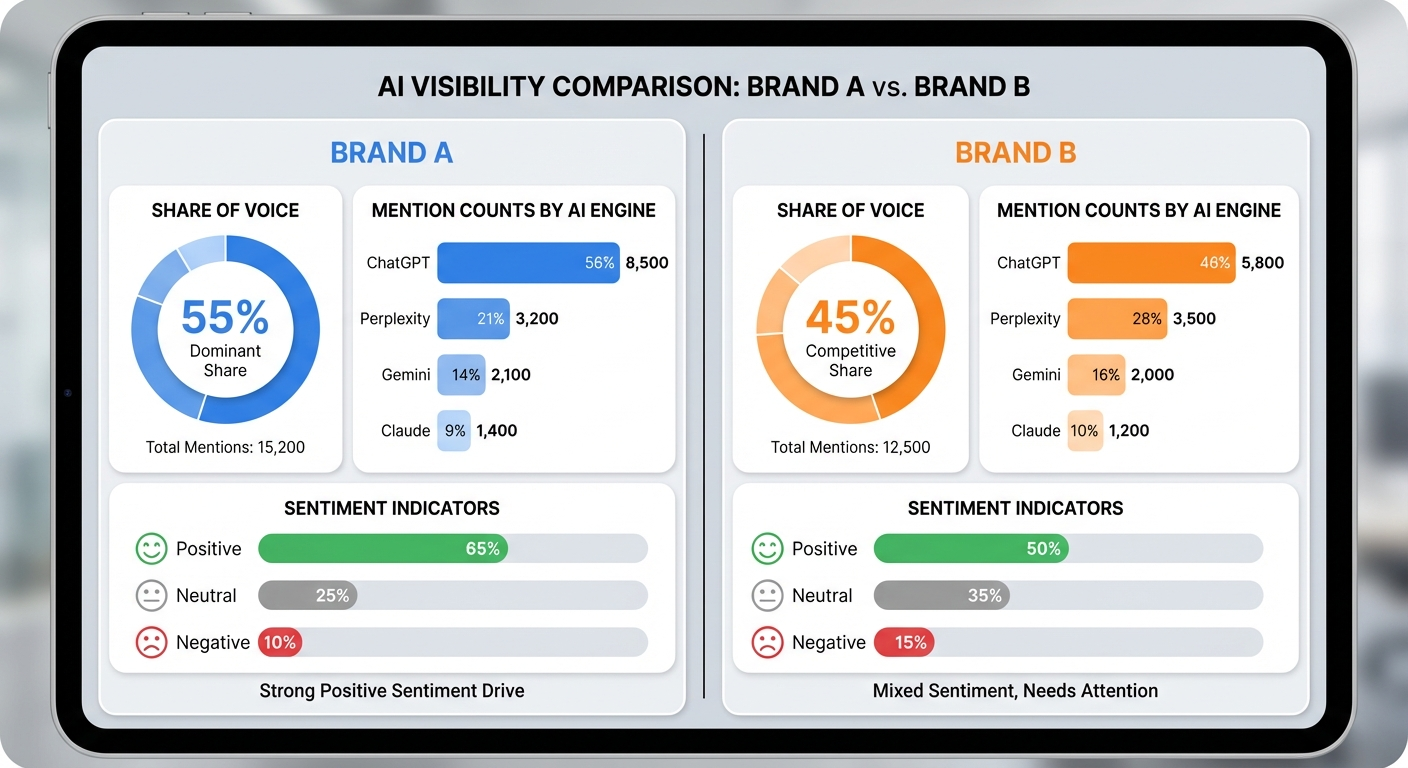

Your buyers don’t all use the same AI assistant. ChatGPT dominates consumer awareness, but Perplexity retrieves from the web in real time, Gemini draws from Google’s search index, and Claude has gained traction in enterprise and research contexts. A tracker that covers only ChatGPT shows you a fraction of the picture.

More importantly, each engine cites different sources and frames brands differently. A 2025 analysis by Authoritas found that the same brand could appear in the top three recommendations on Perplexity but be entirely absent from Claude’s response to an identical prompt. Per-engine breakdowns let you diagnose platform-specific gaps.
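A per-engine breakdown is essentially a grouped mention rate. The sketch below assumes you have exported raw responses as (engine, response text) pairs; the engine labels, brand names, and export shape are hypothetical.

```python
from collections import defaultdict

def mention_rate_by_engine(records, brand):
    """records: iterable of (engine, response_text) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    needle = brand.lower()
    for engine, text in records:
        totals[engine] += 1
        if needle in text.lower():
            hits[engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}

# Hypothetical export: the same prompt run twice on two engines.
records = [
    ("perplexity", "Top picks: Acme, Globex."),
    ("perplexity", "Acme leads for mid-market teams."),
    ("claude", "Globex and Initech are common choices."),
    ("claude", "Initech is a frequent recommendation."),
]
print(mention_rate_by_engine(records, "Acme"))
# {'perplexity': 1.0, 'claude': 0.0}
```

A gap like the one in this example points to an engine-specific problem, which is a different diagnosis from low visibility everywhere.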

Prompt-level tracking with custom prompt support

Pre-built prompt libraries are a starting point, but your highest-value insights come from prompts that mirror your actual buyer’s questions. A tool that only offers pre-set industry prompts limits your ability to track the specific commercial queries that drive pipeline.

Look for platforms that let you add custom prompts, organize them by topic or funnel stage, and track them on a recurring schedule (daily or weekly). The prompts you define should include:

- Category prompts: “What are the best [your category] tools in 2026?”

- Comparison prompts: “How does [your brand] compare to [competitor]?”

- Problem-solution prompts: “How do I solve [specific pain point your product addresses]?”

- Use-case prompts: “What tool should I use for [specific workflow]?”
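If you maintain prompts in a spreadsheet or script before loading them into a tracker, the four prompt types above can be generated from templates. This is a minimal sketch; the function name, the type labels, and the sample inputs are all hypothetical.

```python
# Templates mirror the four prompt types listed above.
TEMPLATES = {
    "category": "What are the best {category} tools in 2026?",
    "comparison": "How does {brand} compare to {competitor}?",
    "problem_solution": "How do I solve {pain_point}?",
    "use_case": "What tool should I use for {workflow}?",
}

def build_prompts(brand, category, competitors, pain_points, workflows):
    """Return (prompt_type, prompt_text) pairs ready to load into a tracker."""
    prompts = [("category", TEMPLATES["category"].format(category=category))]
    prompts += [
        ("comparison", TEMPLATES["comparison"].format(brand=brand, competitor=c))
        for c in competitors
    ]
    prompts += [
        ("problem_solution", TEMPLATES["problem_solution"].format(pain_point=p))
        for p in pain_points
    ]
    prompts += [
        ("use_case", TEMPLATES["use_case"].format(workflow=w)) for w in workflows
    ]
    return prompts

for kind, text in build_prompts(
    brand="Acme",
    category="CRM",
    competitors=["Globex", "Initech"],
    pain_points=["duplicate leads across regions"],
    workflows=["lead scoring"],
):
    print(f"{kind}: {text}")
```

Keeping the prompt type next to each prompt makes it easier to organize results by topic or funnel stage later.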

Citation source analysis

This is the most underrated feature in the category. Knowing that ChatGPT recommends your competitor is useful. Knowing which sources ChatGPT drew from to make that recommendation is actionable.

Citation analysis shows you the specific URLs, domains, and publications that AI models reference when generating responses about your category. This data feeds directly into your content and digital PR strategy — telling you exactly where you need to earn placements to influence future AI outputs.

Agencies like BrandMentions build their entire strategy around this insight, placing contextual brand mentions on the high-authority publications that AI models actively cite.
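If your tracker exports the URLs cited in each response, a rough version of citation source analysis is a domain count. The sketch below uses only the Python standard library; the URLs are placeholders, and stripping a leading `www.` is an assumption about how you want to group hosts.

```python
from collections import Counter
from urllib.parse import urlparse

def top_cited_domains(cited_urls, n=5):
    """Count how often each domain appears among cited URLs."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]  # group www and bare hosts together
        if host:
            domains.append(host)
    return Counter(domains).most_common(n)

# Placeholder URLs, for illustration only.
cited = [
    "https://www.example-reviews.com/best-crm",
    "https://example-reviews.com/crm-comparison",
    "https://blog.example-news.org/top-tools",
]
print(top_cited_domains(cited))
# [('example-reviews.com', 2), ('blog.example-news.org', 1)]
```

Run this on the responses that recommend competitors but not you, and the top of the list is a first draft of your placement targets.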

Sentiment and narrative tracking

A brand mention is not inherently valuable. Being mentioned as “a legacy option with a steep learning curve” actively hurts pipeline. Sentiment analysis tells you whether AI responses position your brand favorably, and narrative tracking identifies recurring themes across responses.

Watch for patterns like:

- Consistent praise for a specific feature

- Repeated caveats about pricing or complexity

- Competitor comparisons that frame you as secondary

- Outdated information being presented as current
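A crude way to surface recurring caveats is keyword tagging across stored responses. The sketch below is deliberately simple: the theme names and keyword lists are hypothetical, and real sentiment analysis would need more than substring checks.

```python
# Hypothetical themes and trigger phrases; tune these to your category.
THEMES = {
    "pricing_caveat": ["expensive", "pricey", "costly"],
    "complexity_caveat": ["steep learning curve", "complex"],
    "legacy_framing": ["legacy"],
}

def tag_themes(response_text):
    """Return the sorted list of themes whose keywords appear in the text."""
    lowered = response_text.lower()
    return sorted(
        theme
        for theme, keywords in THEMES.items()
        if any(keyword in lowered for keyword in keywords)
    )

print(tag_themes("Acme is a legacy option with a steep learning curve."))
# ['complexity_caveat', 'legacy_framing']
```

Counting how often each tag shows up across a prompt set tells you which caveat to address first in your content.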

Competitive benchmarking

Your AI visibility only matters relative to your competitors. Every tracker should let you monitor at least three to five competitor brands alongside your own, with share-of-voice comparisons across your tracked prompts.

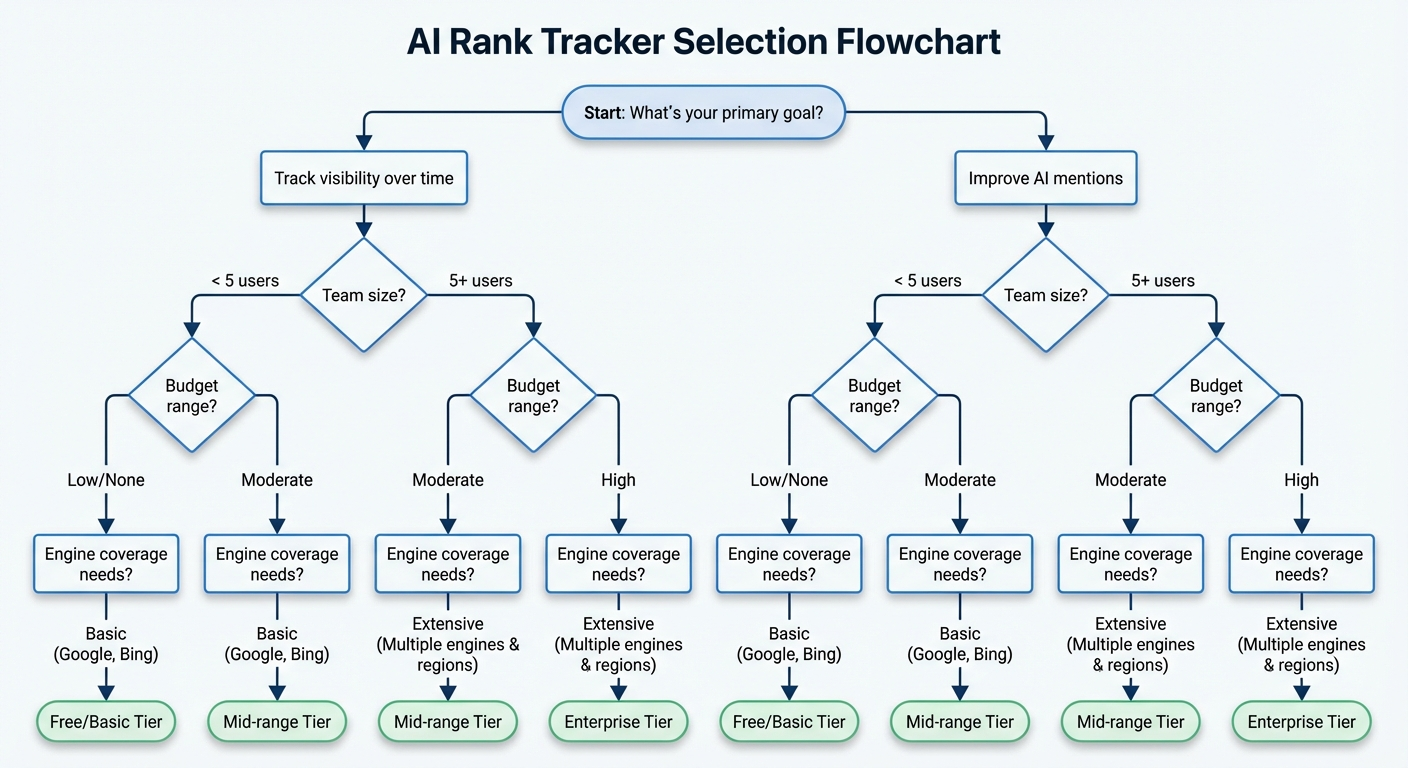

How to evaluate AI rank trackers — a practical framework

With 30+ tools on the market, a structured evaluation saves weeks of trial-and-error. Use this five-factor framework to narrow your options.

Factor 1: Engine coverage vs. your audience

Map your buyer’s AI usage patterns before comparing platforms. If your audience is enterprise procurement teams, Copilot and Gemini coverage matters more than consumer-focused engines. If your buyers are developers, Claude and Perplexity may carry disproportionate influence.

Most tools now cover ChatGPT, Gemini, and Perplexity. Differentiators include Claude, Copilot, DeepSeek, Grok, Mistral, and Google AI Mode / AI Overviews tracking.

Factor 2: Data methodology and sampling rigor

Ask every vendor: How many times do you run each prompt, and how do you handle response variability?

A single prompt run is unreliable. Tools that run each prompt several times (three to five is typical) and report averaged positions give you far more stable data. Tools that run once and report a snapshot give you noise.

Also ask about refresh frequency. Weekly updates are sufficient for strategic monitoring. Daily updates matter if you’re running active campaigns and need to measure impact quickly.

Factor 3: Actionability — tracking vs. optimization guidance

The most common frustration with AI trackers is “great data, no idea what to do with it.” Some platforms stop at dashboards and exports. Others include optimization recommendations, content gap analysis, and citation source mapping that feeds directly into your next strategic move.

If your team has a dedicated GEO strategist, raw data may be enough. If your team is stretched thin, look for platforms that translate data into specific actions.

Factor 4: Prompt volume and pricing structure

Most platforms price by tracked prompts. This is the hidden cost driver. A tool that looks affordable at 50 prompts may become expensive at 500.

Estimate your prompt needs before signing up:

- Single product, one market: 25–75 prompts

- Multi-product or multi-market: 100–300 prompts

- Agency managing multiple clients: 500+ prompts
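Two quick calculations help when comparing plans: how many individual AI queries your setup implies, and what a plan costs per tracked prompt. The sketch below is back-of-the-envelope arithmetic; the function names and sample figures are illustrative assumptions, not vendor pricing.

```python
def monthly_runs(prompts, engines, repetitions, refreshes_per_month):
    """Total individual AI queries implied by a tracking setup."""
    return prompts * engines * repetitions * refreshes_per_month

def cost_per_prompt(monthly_price, prompt_limit):
    """Effective monthly cost of one tracked prompt on a given plan."""
    return monthly_price / prompt_limit

# 50 prompts, 4 engines, 5 repetitions each, refreshed weekly (4x per month).
print(monthly_runs(50, 4, 5, 4))   # 4000
# A hypothetical $300 plan with a 500-prompt limit.
print(cost_per_prompt(300, 500))   # 0.6
```

The first number explains why prompt count drives pricing: every prompt you add is multiplied by engines, repetitions, and refresh frequency.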

Factor 5: Integration with your existing workflow

AI visibility data is most useful when it connects to your content, SEO, and PR workflows. Check whether the tool exports data cleanly, integrates with Looker Studio or other reporting platforms, and supports team collaboration with shared dashboards and alerts.

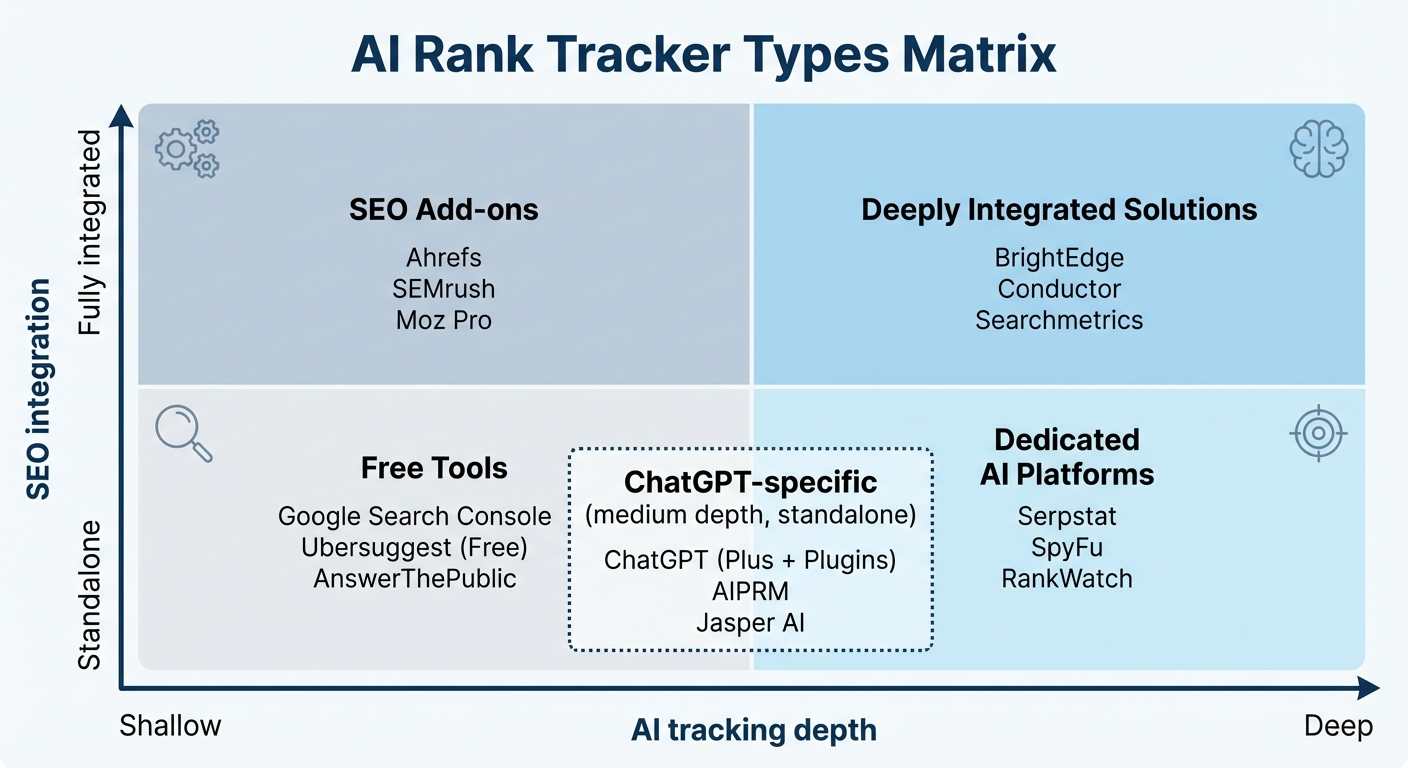

The current landscape: tool categories and where they fit

Rather than ranking individual tools (the market shifts monthly), it’s more useful to understand the categories and match them to your situation.

Dedicated AI visibility platforms

Built from the ground up for AI search tracking. Examples include Peec AI, Rankscale, Otterly, Scrunch AI, and Profound. These typically offer the deepest AI-specific features — prompt research, citation analysis, sentiment tracking — but lack traditional SEO integration.

Best for: Teams that already have an SEO tool stack and need a specialist layer for AI visibility.

SEO platforms with AI tracking add-ons

Established SEO tools like SE Ranking, Semrush (AI Toolkit), Ahrefs (Brand Radar), and Nightwatch have added AI visibility modules. These keep your traditional and AI tracking in one dashboard but often lack the depth of dedicated tools.

Best for: Teams that want a single platform for both SEO and AI monitoring, and can accept less granular AI data.

Free and freemium entry tools

Tools like Semrush’s free AI Visibility Checker, Mangools’ AI Search Watcher free tier, and Am I On AI provide quick visibility snapshots without cost. Useful for initial audits and executive presentations, but limited in ongoing tracking, prompt customization, and competitive depth.

Best for: First-time visibility checks, proving the case for investment, or supplementing a paid tool with periodic spot-checks.

ChatGPT-specific trackers

Platforms like Genrank focus specifically on ChatGPT. Useful if ChatGPT is your primary concern, but limiting as AI search diversifies across engines.

Best for: Brands with data showing that ChatGPT is their dominant AI referral channel.

For teams specifically interested in ChatGPT monitoring, see the breakdown of ChatGPT brand tracking tools.

What tracking data alone won’t fix

AI rank trackers diagnose the problem. They don’t solve it. The gap between “we know we’re not showing up” and “now we consistently appear in AI recommendations” requires a content and citation strategy that sits outside the tracker.

The citation source gap

When your tracker shows that a competitor appears in ChatGPT’s response to “best B2B marketing automation platforms” and you don’t, the natural question is: why?

The answer almost always traces back to sources. AI models learn brand-category associations from their training data and, in the case of engines with real-time retrieval (Perplexity, ChatGPT with browsing, Gemini), from the web pages they retrieve during response generation.

If your competitor has editorial mentions across industry publications, review sites, and comparison pages that the model trusts — and you don’t — no amount of tracking will close that gap. You need to earn placements on the sources that AI models draw from.

In campaigns across 67+ B2B companies, the BrandMentions team found that brands with consistent editorial mentions on high-authority publications achieved measurably higher AI recommendation rates than those relying solely on owned content and traditional SEO. The placement process targets the publications that AI models actively reference.

The content quality gap

Your tracker may reveal that AI mentions your brand but frames it negatively. This signals a content quality problem — not a tracking problem. AI models synthesize information from multiple sources. If the strongest sources describe your product with caveats, the AI will echo those caveats.

Fixing this requires updating your own content, earning positive third-party coverage, publishing case studies with measurable outcomes, and ensuring your product messaging is clear and current across every page that AI might index.

The prompt intent gap

Some teams track hundreds of prompts but miss the ones that drive revenue. A prompt like “What is project management software?” is informational. A prompt like “Which project management tool is best for remote teams with 50–200 employees?” is commercial and closer to purchase.

Your tracker should be weighted toward commercial and decision-stage prompts. These are the queries where an AI recommendation directly influences a sale.

Building an AI brand mention tracking workflow that compounds

Tracking becomes strategic when it connects to a feedback loop: monitor → analyze → act → measure. Here’s how to structure that workflow.

Step 1: Establish your prompt baseline

Define 30–50 prompts across categories, comparisons, problem-solution, and use-case queries. Run an initial tracking cycle to establish your baseline visibility score, share of voice, and sentiment across each AI engine.

Step 2: Identify gaps and prioritize

Sort your results by two dimensions: prompt importance (search volume and commercial intent) and current visibility (mentioned vs. absent vs. negatively framed). High-importance, low-visibility prompts are your priority.
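The two-dimension sort can be reduced to a single priority score. In the sketch below, importance is a 0 to 1 value you assign yourself, and the gap weights for each visibility state are hypothetical; adjust both to match how your team ranks prompts.

```python
# Hypothetical weights: how much opportunity each visibility state leaves.
VISIBILITY_GAP = {"absent": 1.0, "negative": 0.7, "mentioned": 0.0}

def prioritize(prompts):
    """Rank prompts by importance x visibility gap, highest first.

    prompts: dicts with 'text', 'importance' (0 to 1), and 'visibility'.
    Prompts with no gap (already mentioned favorably) are dropped.
    """
    scored = [
        (p["importance"] * VISIBILITY_GAP[p["visibility"]], p["text"])
        for p in prompts
    ]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [text for score, text in ranked if score > 0]

tracked = [
    {"text": "What is a CRM?", "importance": 0.2, "visibility": "absent"},
    {"text": "Best CRM for mid-market SaaS?", "importance": 0.9, "visibility": "absent"},
    {"text": "Acme vs Globex?", "importance": 0.7, "visibility": "mentioned"},
    {"text": "Best CRM for remote teams?", "importance": 0.8, "visibility": "negative"},
]
print(prioritize(tracked))
# ['Best CRM for mid-market SaaS?', 'Best CRM for remote teams?', 'What is a CRM?']
```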

Step 3: Map citation sources for priority prompts

For each priority prompt, analyze which sources the AI cited when recommending competitors. These are the publications, review sites, and content hubs where your brand needs to appear.

Step 4: Execute your citation and content strategy

Create or update content that directly answers priority prompts. Simultaneously, pursue editorial placements on the citation sources you identified. This is where strategic brand mention services add the most value — systematically placing your brand in the publications that AI models trust.

Step 5: Measure impact and iterate

After two to four weeks (depending on whether the AI engine uses real-time retrieval or periodic training updates), rerun your tracked prompts. Compare visibility scores, share of voice, and sentiment against your baseline. Double down on what moved the needle. Adjust prompt selections based on what you’ve learned.

Common mistakes teams make with AI rank trackers

Avoid these patterns. They waste budget and create false confidence.

Tracking too many low-intent prompts. Vanity metrics feel good. Tracking “What is [your category]?” generates mentions but rarely influences pipeline. Focus on commercial prompts.

Monitoring only one AI engine. ChatGPT gets the most attention, but Perplexity’s real-time web retrieval and Gemini’s Google integration create distinct visibility dynamics. Multi-engine tracking reveals where your strategy works and where it doesn’t.

Treating AI visibility scores as absolute metrics. AI responses fluctuate. A visibility score of 72 one week and 68 the next is noise, not a crisis. Track trends over months, not day-to-day swings.

Using tracker data in isolation. AI visibility data is most powerful when paired with web analytics, pipeline data, and content performance metrics. If your AI mentions increased but demo requests didn’t, that disconnect needs investigation, not celebration.

Ignoring the “why” behind the data. A tracker tells you what AI says. Citation analysis tells you why. Without the “why,” you’re reacting to symptoms instead of addressing root causes.
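One way to avoid overreacting to week-to-week swings in a visibility score is to compare multi-week averages instead of single readings. The sketch below is a minimal example; the four-week window and five-point threshold are assumptions you should tune to how noisy your own data is.

```python
from statistics import mean

def trend_signal(weekly_scores, window=4, threshold=5.0):
    """Compare the latest window's average with the window before it."""
    if len(weekly_scores) < 2 * window:
        return "insufficient data"
    recent = mean(weekly_scores[-window:])
    previous = mean(weekly_scores[-2 * window:-window])
    delta = recent - previous
    if delta >= threshold:
        return "improving"
    if delta <= -threshold:
        return "declining"
    return "stable"

# Scores drifting between 68 and 72 read as noise, not a trend.
print(trend_signal([70, 72, 68, 71, 72, 68, 70, 69]))  # stable
# A sustained jump across a full window reads as real movement.
print(trend_signal([50, 52, 51, 49, 60, 62, 61, 63]))  # improving
```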

How pricing works across the market in 2026

AI rank tracker pricing varies dramatically. Here’s a realistic breakdown by tier so you can budget accordingly.

| Tier | Monthly Cost Range | Typical Prompt Limit | What You Get |
|---|---|---|---|
| Free / Freemium | $0–$29 | 5–25 prompts | Basic visibility snapshots, limited engines, weekly updates |
| Mid-range | $79–$300 | 100–500 prompts | Multi-engine tracking, competitive analysis, citation data, sentiment |
| Enterprise | $499–$3,000+ | 1,000+ prompts | Full platform coverage, optimization guidance, API access, team features |

According to a 2026 analysis by Rankability, the industry average across tracked tools is approximately $337 per month. The best value comes from platforms in the $79–$300 range that include citation analysis and competitive benchmarking — features that directly inform strategy, not just reporting.

Pro Insight: Before committing to a paid tier, use free tools to run an initial audit. If your brand has zero AI visibility, you need a citation and content strategy before you need an advanced tracker. Investing in the strategy first — then using a tracker to measure its impact — delivers better ROI than paying for a dashboard that shows persistent zeroes.

What’s changed since 2024–2025 — and what to expect next

The AI visibility tracking category barely existed in early 2024. Here’s what shifted:

- Engine diversity increased. In 2024, most tracking focused on ChatGPT and Google AI Overviews. By 2026, serious tools cover six or more engines, reflecting how buyer behavior has fragmented across AI assistants.

- Citation analysis became standard. Early tools only reported mention counts. The most useful platforms now show which sources drive AI recommendations — making the data actionable for content and PR teams.

- Pricing compressed. Over $31 million in venture funding flowed into the category between 2024 and 2026, according to Rankability’s market analysis. Competition pushed mid-range pricing down while expanding feature sets.

- Traditional SEO tools entered the market. Semrush, Ahrefs, and SE Ranking all launched AI visibility features in 2025, signaling that AI tracking is becoming a standard component of search marketing — not a niche specialty.

Looking ahead, expect consolidation. The 30+ tools on the market today will narrow as traditional SEO platforms absorb AI tracking functionality and standalone tools differentiate through deeper optimization guidance, not just monitoring.

For marketing leaders tracking this space, the BrandMentions resource hub covers evolving best practices as the category matures.

Frequently Asked Questions

Can AI rank trackers guarantee that my brand will be recommended by AI?

No. AI rank trackers measure visibility — they do not control AI model outputs. No tool or service can guarantee an AI recommendation. What trackers provide is the data you need to build a strategy that increases the probability of being cited, by showing you where gaps exist and which sources influence AI responses.

How often should I check my AI rank tracking data?

Weekly reviews work for most B2B teams. AI responses fluctuate, so daily monitoring creates noise. Focus on trend analysis over four- to eight-week windows. If you’re running an active campaign (new content launch, digital PR push), increase to twice-weekly checks for two to three weeks to measure impact.

Do I need a separate tracker for each AI platform?

No. Most mid-range and enterprise trackers cover multiple AI engines within a single subscription. Using separate single-engine tools creates fragmented data and makes competitive benchmarking harder. Choose a platform with multi-engine coverage unless your audience overwhelmingly uses one specific AI assistant.

What’s the difference between AI rank trackers and traditional SEO rank trackers?

Traditional SEO rank trackers report your website’s position on a search engine results page for specific keywords. AI rank trackers analyze the text of AI-generated responses to determine whether your brand is mentioned, how it’s described, and which sources the AI cited. They measure different surfaces entirely, and most teams need both.

How do AI rank trackers handle the variability of AI responses?

Reputable tools run each prompt multiple times (typically three to five) and report averaged results. This smooths out the inherent variability in AI outputs. Always ask a vendor about their sampling methodology — a tool that runs prompts once and reports a single snapshot provides unreliable data.

Is it worth paying for an AI tracker if my brand has no AI visibility yet?

Start with a free tool to confirm your baseline. If your brand has zero or near-zero visibility, invest first in building the content and citation foundation that AI models learn from. Once your strategy is active, a paid tracker becomes essential for measuring progress and identifying new opportunities.