Most teams treating generative engine optimization like “SEO with a new coat of paint” are getting outranked by smaller brands who figured out the real difference. Ranking #1 on Google doesn’t guarantee a single citation in ChatGPT. Publishing 200 blog posts a year doesn’t either. Generative engine optimization is the practice of earning citations, mentions, and recommendations inside AI-generated answers, and it rewards a different playbook than traditional search. This guide covers what actually works in 2026, what’s wasting your time, and how to build AI visibility that compounds.

The Short Version

- Generative engine optimization focuses on citation and mention inside AI answers, not rank position.

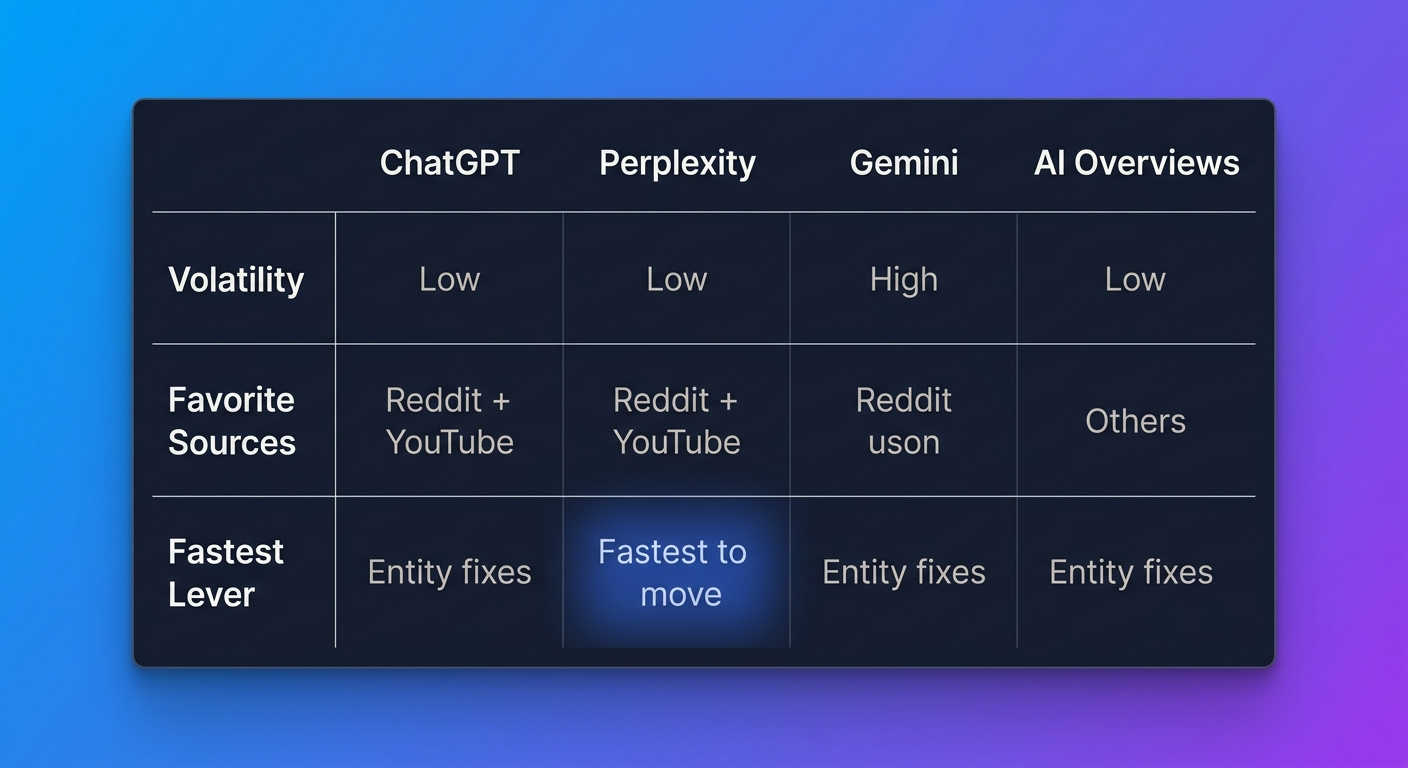

- ChatGPT, Perplexity, Gemini, and Google AI Overviews each select sources differently. One strategy won’t fit all four.

- Entity clarity, extractable content, and third-party authority outweigh backlinks for AI visibility.

- Pages with direct quotes and specific statistics see 30–40% higher inclusion in AI responses, per the arXiv GEO benchmark study.

- Track citations monthly, cited sources shift fast, and assuming stability will cost you visibility.

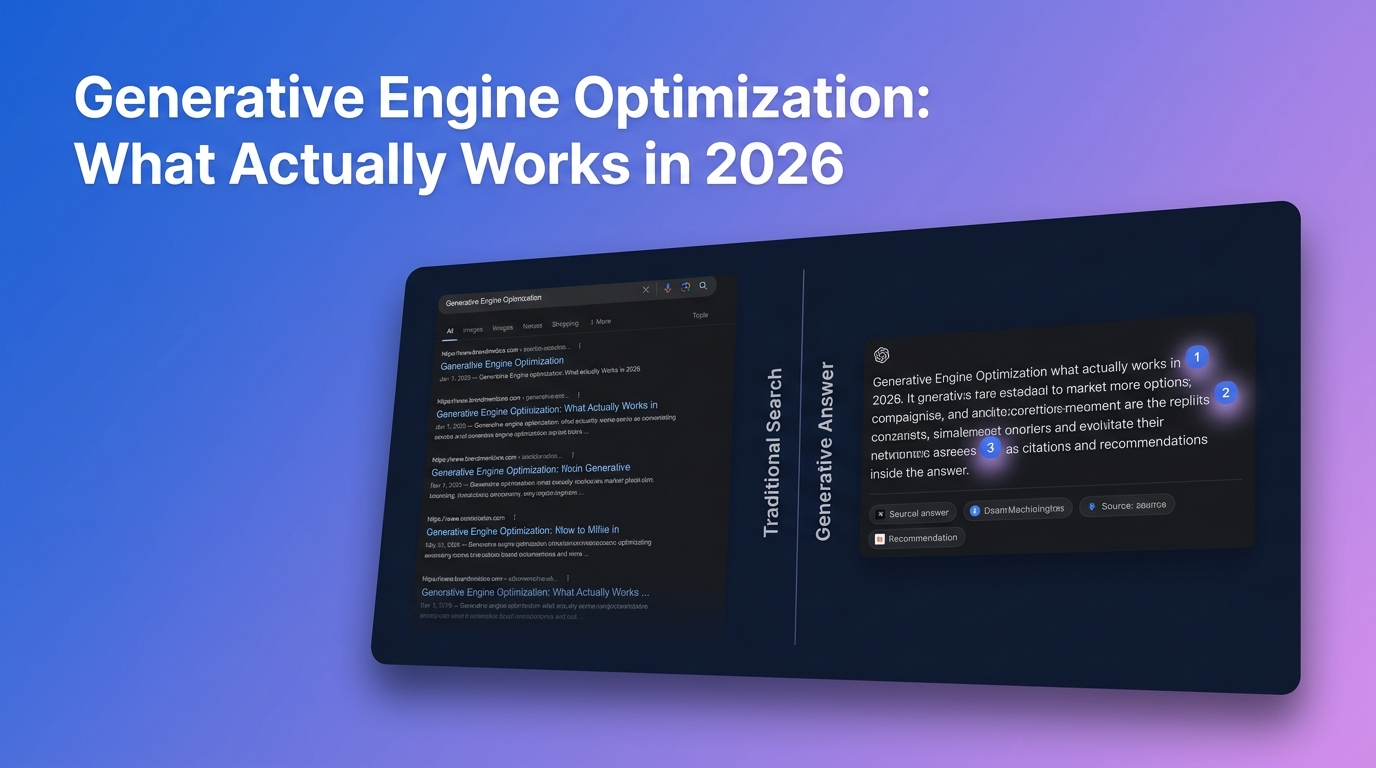

What Generative Engine Optimization Actually Means

Generative engine optimization (GEO) is the work of structuring your content, online presence, and brand entity so AI engines cite, quote, or recommend you when they answer a question. The “generative engines” in question are ChatGPT, Perplexity, Gemini, Claude, Google AI Overviews, Microsoft Copilot, and anything else that produces a synthesized answer instead of a list of blue links.

The goal shifts from “rank #1 for this keyword” to “be one of the 3–5 sources the model pulls from when it answers this question.” Those aren’t the same job. A brand can rank #1 on Google for a query and still get zero mentions when someone asks ChatGPT the same thing. We’ve watched it happen repeatedly across client audits: the correlation between SERP position and AI citation is weaker than most teams assume.

The practical implication: if your AI visibility strategy is “keep doing SEO,” you’re going to lose ground to competitors who treat GEO as its own discipline.

How GEO Differs From SEO (The Parts That Actually Matter)

Most GEO explainers list 20 differences. Three of them matter.

The unit of success changed

SEO measures success by rank and click. GEO measures it by citation, mention, and recommendation inside an AI-generated answer. You can get zero clicks and still win if your brand is the one the model names when a buyer asks for a recommendation.

The source pool is different

AI models don’t cite the web the way Google ranks it. Perplexity leans heavily on Reddit, YouTube, and niche industry publications. ChatGPT pulls from a mix of Wikipedia, well-cited editorial sites, and its training data. Google AI Overviews often favor sources already ranking in the top 10, but not always. One pattern to plan around: the top-cited domains in AI responses can shift 40–60% month over month, so assuming stability will get you ignored.
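To check that shift against your own tracking, one simple measure is the share of last month’s top-cited domains that dropped out of this month’s list. A minimal sketch, assuming you already record the top-cited domains per month; `domain_churn` is a hypothetical helper and the domain lists are placeholders. Other churn definitions (Jaccard distance, rank-weighted change) are equally defensible.

```python
# Hypothetical helper: share of last month's top-cited domains missing this month.
def domain_churn(previous: list[str], current: list[str]) -> float:
    """Return the fraction of last month's top-cited domains absent from this month's list."""
    prev_set = set(previous)
    if not prev_set:
        return 0.0  # nothing to compare against
    dropped = prev_set - set(current)
    return len(dropped) / len(prev_set)


# Placeholder top-cited domains for one prompt set in two consecutive months.
march = ["reddit.com", "youtube.com", "g2.com", "example-trade-pub.com", "wikipedia.org"]
april = ["reddit.com", "youtube.com", "capterra.com", "another-pub.example", "wikipedia.org"]

print(f"Churn: {domain_churn(march, april):.0%}")  # prints "Churn: 40%"
```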

Extractability beats optimization

Google rewards pages that cover a topic comprehensively. AI engines reward sentences that can be pulled out of a page and dropped into an answer without losing meaning. Dense, hedged, context-dependent prose gets skipped. Self-contained claims with a named source get cited.

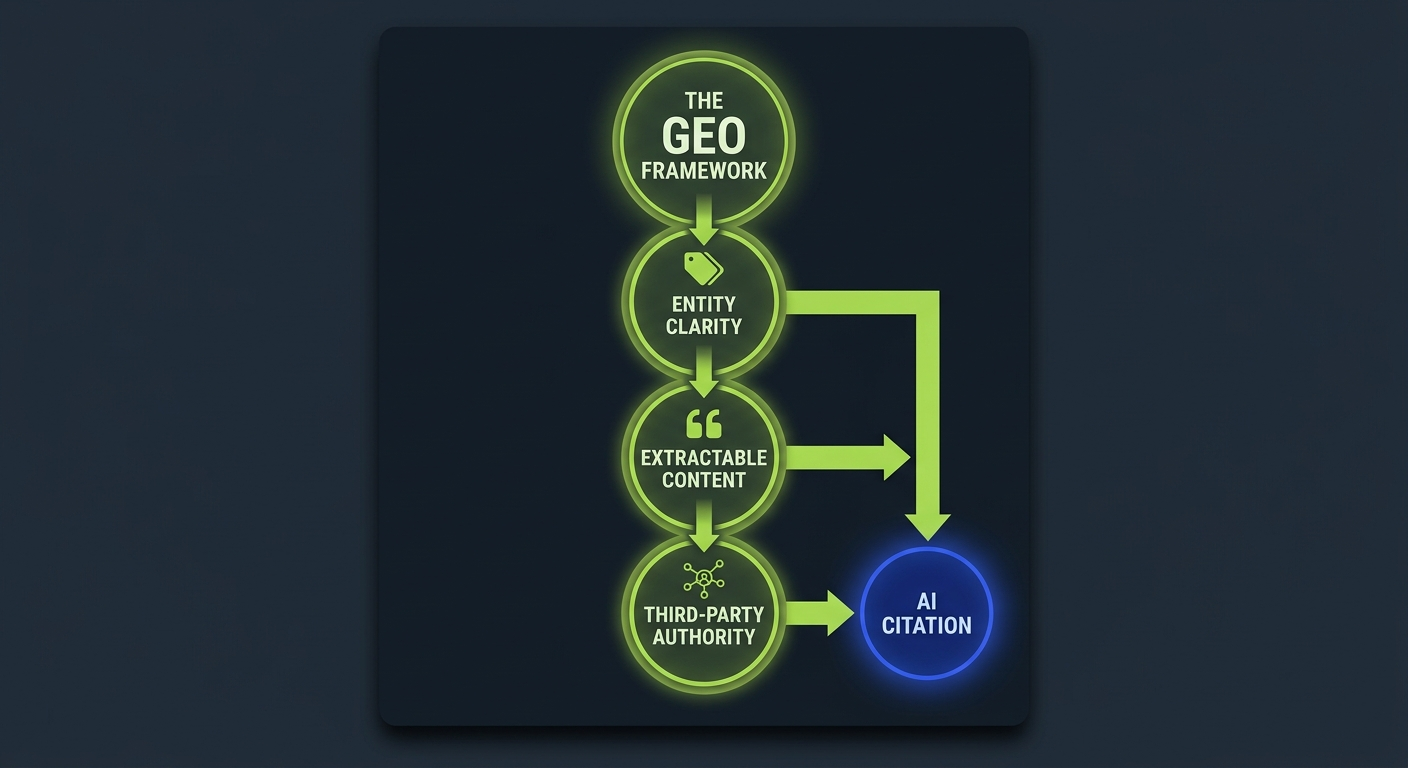

The Three Inputs That Drive AI Citation

Across our audits of brand visibility in ChatGPT, Perplexity, and Gemini for dozens of B2B companies, the same three inputs surface every time. Get these right and citation rates climb. Get any one wrong and the others can’t compensate.

1. Entity clarity: does the model know what you are?

AI engines build internal representations of brands that work much like a knowledge graph: they associate your brand name with a category, a set of attributes, and a typical use case. When that association is weak or inconsistent, the model has nothing to recommend.

Entity clarity means your brand is described the same way across your own site, Wikipedia-adjacent sources, review sites, industry publications, and third-party databases. If LinkedIn says you’re a “customer feedback platform,” your website says you’re an “experience analytics suite,” and G2 categorizes you as “survey software,” the model will either pick one at random or skip you entirely.

Fix this by publishing a clear, consistent one-sentence category description everywhere the brand appears. Use it on your homepage, your About page, your LinkedIn, your press releases, and every byline. Consistency compounds.

2. Extractable content: can the model pull a clean answer from your page?

Write for retrieval. A retrieval system grabs a passage, not a page. If the passage only makes sense after reading the three paragraphs above it, the model won’t use it.

What extractable content looks like in practice:

- Direct answers in the first sentence under every H2.

- Definitions that include the entity being defined (“A brand citation is…” not “This is…”).

- Statistics with the source and year inside the same sentence.

- Short, dense paragraphs with one idea each.

- Comparison tables with clear column headers.
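Parts of that checklist can be roughed out as a lint over your page copy. This is a heuristic sketch, not a model of how any engine retrieves passages: the pronoun list, the 80-word limit, and the function name `extractability_issues` are our assumptions, and it only checks that a percentage has a year in the same sentence, not that a source is named.

```python
import re

# Openers that usually lean on earlier context ("This is...", "It...").
CONTEXT_DEPENDENT_OPENERS = re.compile(r"^(This|That|These|Those|It|They)\b")
PERCENT = re.compile(r"\d+(?:\.\d+)?%")
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")
MAX_WORDS = 80  # assumed threshold for a "short" paragraph


def extractability_issues(paragraph: str) -> list[str]:
    """Return heuristic warnings for one paragraph of page copy."""
    issues = []
    text = paragraph.strip()
    if CONTEXT_DEPENDENT_OPENERS.match(text):
        issues.append("opens with a pronoun instead of naming the entity")
    # Split into rough sentences; each statistic should carry its year.
    for sentence in re.split(r"(?<=[.!?])\s+", text):
        if PERCENT.search(sentence) and not YEAR.search(sentence):
            issues.append(f"statistic without a year: {sentence!r}")
    if len(text.split()) > MAX_WORDS:
        issues.append(f"paragraph longer than {MAX_WORDS} words")
    return issues


print(extractability_issues("This improves retention by 25%."))
print(extractability_issues("A brand citation is a named reference to a brand inside an AI answer."))
```

The first call flags both the pronoun opener and the undated statistic; the second, a self-contained definition, returns an empty list.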

The Princeton-led GEO research tested 10,000 queries and found that adding quotations, statistics, and citations to content improved visibility in generative engine responses by up to 40%. That finding has held up consistently in our own client testing.

3. Third-party authority: where else does your brand appear?

AI models don’t rely only on your website. They cross-reference the web to decide whether your brand is legitimate, relevant, and worth recommending. Brands cited frequently in Reddit threads, YouTube tutorials, industry publications, and independent reviews get named by AI more often, even when their own site ranks lower.

This is the input most teams underinvest in. They pour resources into their blog and wonder why ChatGPT doesn’t mention them. Meanwhile, a smaller competitor with 15 solid editorial mentions across trusted publications gets cited every time.

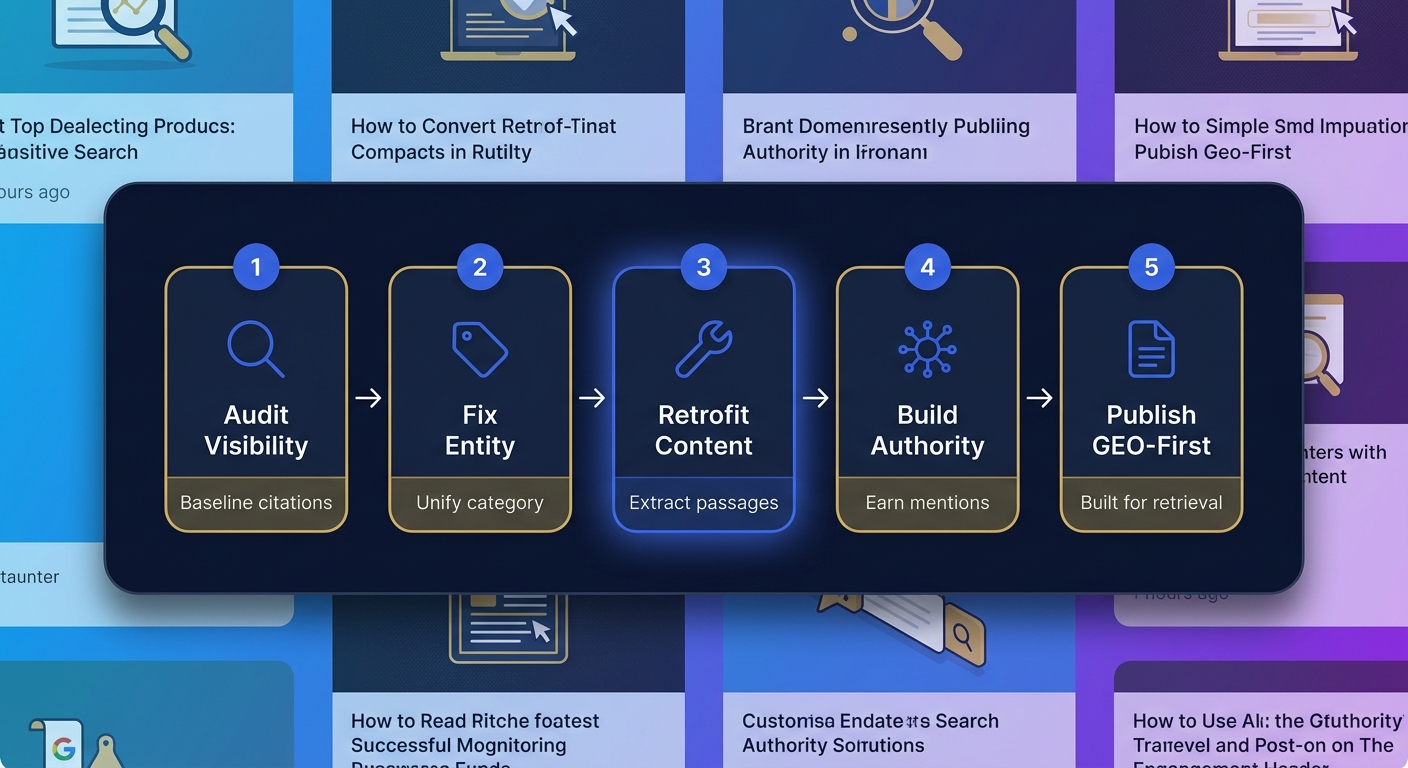

The Tactical GEO Playbook for 2026

Here’s the sequence that actually produces AI citations, in priority order. This is deliberately different from most GEO guides, which front-load content work. After the baseline audit, entity fixes come first because they unlock everything else.

Step 1: Audit your brand’s current AI visibility

Before changing anything, establish a baseline. Run 10–15 prompts a real buyer would use to ask for recommendations in your category, across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Document which brands get named, which get cited as sources, and where you appear (or don’t).

Repeat monthly. Without this baseline, you won’t know if anything you’re doing is working.
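If you want the baseline in a form you can compare month to month, a small script can turn pasted answers into a CSV. A minimal sketch under stated assumptions: answers are copied by hand from each engine (no AI engine APIs are called), the brand names are placeholders, and `AuditRow`, `audit_grid`, `brands_named`, and `write_baseline` are hypothetical helpers rather than part of any tool. It records only which brands each answer names; cited sources could go in an extra column the same way.

```python
import csv
import io
import re
from dataclasses import dataclass

# Hypothetical helpers for a manual audit; no AI engine APIs are called.
PLATFORMS = ["ChatGPT", "Perplexity", "Gemini", "Google AI Overviews"]


@dataclass
class AuditRow:
    month: str
    platform: str
    prompt: str
    response_text: str = ""  # pasted by hand from the engine's answer


def audit_grid(prompts: list[str], month: str) -> list[AuditRow]:
    """Create one empty row per prompt and platform, to be filled in by hand."""
    return [AuditRow(month, platform, prompt) for prompt in prompts for platform in PLATFORMS]


def brands_named(response_text: str, brands: list[str]) -> list[str]:
    """Return tracked brands that appear in a response (case-insensitive, whole words)."""
    return [
        brand
        for brand in brands
        if re.search(rf"\b{re.escape(brand)}\b", response_text, re.IGNORECASE)
    ]


def write_baseline(rows: list[AuditRow], brands: list[str], out) -> None:
    """Write one CSV line per prompt/platform pair with the brands each answer named."""
    writer = csv.writer(out)
    writer.writerow(["month", "platform", "prompt", "brands_named"])
    for row in rows:
        writer.writerow([row.month, row.platform, row.prompt,
                         ";".join(brands_named(row.response_text, brands))])


# Example with placeholder brands and a single filled-in answer.
rows = audit_grid(["best customer feedback platform for SaaS"], "2026-01")
rows[1].response_text = "Top picks: AcmeFeedback and SurveyCo."  # the Perplexity row
buffer = io.StringIO()
write_baseline(rows, ["AcmeFeedback", "SurveyCo", "YourBrand"], buffer)
print(buffer.getvalue())
```

The whole-word match assumes brand names made of letters and digits; a name ending in a symbol (for example a trailing “+”) would need a different pattern.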

Step 2: Fix your entity footprint

Before writing a single new blog post, audit how your brand is described across:

- Your own site (homepage, About, product pages)

- LinkedIn company page

- Crunchbase and similar databases

- Review sites (G2, Capterra, Gartner Peer Insights, TrustRadius)

- Wikipedia (if eligible)

- Every press release and byline from the past two years

Pick one clear category description. Unify every source to match. This isn’t glamorous work, but we’ve watched brands double their ChatGPT citation rate from this step alone, before touching a word of new content.
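Once the descriptions are collected, spotting the outliers is mechanical. A minimal sketch, assuming you paste each source’s category label into a dictionary by hand; `normalize` and `inconsistent_sources` are hypothetical helpers and the labels are placeholders. The comparison is deliberately strict (only case and punctuation are ignored), since the goal is identical wording rather than similar wording.

```python
import re


def normalize(description: str) -> str:
    """Lowercase and collapse punctuation/whitespace so trivial differences don't count."""
    return re.sub(r"[^a-z0-9]+", " ", description.lower()).strip()


def inconsistent_sources(canonical: str, descriptions: dict[str, str]) -> list[str]:
    """Return the sources whose category description differs from the canonical one."""
    target = normalize(canonical)
    return [source for source, text in descriptions.items() if normalize(text) != target]


# Placeholder labels gathered by hand from each source.
descriptions = {
    "Homepage": "Customer feedback platform",
    "LinkedIn": "customer feedback platform.",
    "G2": "Survey software",
    "Crunchbase": "Experience analytics suite",
}
print(inconsistent_sources("Customer feedback platform", descriptions))
# prints ['G2', 'Crunchbase']
```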

Step 3: Retrofit existing content for extractability

Your best existing pages, the ones already getting SEO traffic, are your fastest GEO wins. Retrofit them:

- Add a direct 1–2 sentence answer immediately under each H2.

- Add one self-contained statistic with source and year per major section.

- Convert long qualitative paragraphs into short comparison tables where appropriate.

- Define key entities on first mention with the entity name in the sentence.

- Add an FAQ section that answers the specific sub-questions real users ask AI engines.

This is usually 4–6 hours per top page. The payoff: pages that already rank start getting pulled into AI Overviews and Perplexity answers within weeks.

Step 4: Build third-party presence strategically

This is where most GEO strategies stall. Teams know they need external mentions but don’t know where to focus. The shortcut:

- Identify which sources AI engines actually cite in your category. Ask ChatGPT, “What sources do you draw on when recommending [category] tools?” Then check Perplexity citations for your top 20 queries. Patterns emerge fast.

- Prioritize Reddit, YouTube, and niche industry publications. These three categories punch well above their weight in Perplexity and ChatGPT citations.

- Earn mentions on the publications that already show up in AI answers for your category. Not high-DA generalist sites, but the specific publications that AI models treat as authoritative for your niche.
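Checking Perplexity citations across 20 queries goes faster if you count domains rather than eyeballing URLs. A minimal sketch, assuming you copy the citation URLs out of each answer by hand; `cited_domains` is a hypothetical helper and the URLs are placeholders. It strips a leading “www.” but otherwise treats subdomains (for example old.reddit.com) as separate domains.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(urls: list[str]) -> Counter:
    """Count citations per domain, ignoring a leading 'www.' and unparseable entries."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).hostname or ""  # hostname is lowercased and port-free
        if host.startswith("www."):
            host = host[4:]
        if host:
            counts[host] += 1
    return counts


# Placeholder citation URLs copied from Perplexity answers to a few category queries.
citations = [
    "https://www.reddit.com/r/SaaS/comments/abc123/",
    "https://www.youtube.com/watch?v=xyz",
    "https://reddit.com/r/startups/comments/def456/",
    "https://example-trade-pub.com/2026/best-feedback-tools",
]
for domain, n in cited_domains(citations).most_common(3):
    print(domain, n)
```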

One pattern worth sharing: the brands that get cited by AI consistently tend to have a handful of editorial mentions on category-specific publications, not fifty on random high-DA blogs. Specificity beats volume.

Step 5: Publish content designed for retrieval, not rank

New content should be built GEO-first from the start:

- Question-based H2 headings that mirror how people query AI assistants.

- One direct answer per section, readable as a standalone passage.

- Original data, statistics, or frameworks that competitors don’t have.

- Schema markup (Article, FAQ, HowTo) to support entity understanding.

- A consistent author byline tied to LinkedIn and industry profiles.
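For the schema bullet, FAQ markup is one of the easier types to generate from content you already have. A minimal sketch, assuming your FAQ is stored as question/answer pairs; `faq_page_jsonld` is a hypothetical helper, while `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` are schema.org vocabulary. Keep the marked-up text identical to the visible FAQ on the page, and treat markup as a way to describe the page, not a guarantee that any engine will cite it.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_page_jsonld([
    ("Which AI engine should I optimize for first?",
     "Perplexity. It is the most responsive to content and third-party signals."),
])
# Embed the output in the page's HTML inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```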

What Doesn’t Work (And Why Teams Keep Trying It)

The GEO mistake we see most often in visibility audits is a team treating generative engine optimization as a content-volume problem and running the same keyword playbook it used in 2018. More blog posts rarely move ChatGPT citation rates. They dilute the brand’s entity signal and crowd out the authoritative third-party coverage (review sites, editorial features, category round-ups) that the models actually retrieve and learn from.

Three tactics burn budget without moving AI citations. Skip them.

Stuffing content with brand mentions

Writing “Our company, [Brand], is the leading [category] solution” repeatedly across every page doesn’t fool the model. It reads as promotional and often hurts extraction. AI engines reward clean, authoritative language, not keyword density.

Publishing AI-generated content at volume

Dumping 50 AI-written articles into your blog every month doesn’t build entity authority. It fragments it. AI engines seem to down-weight sources whose content looks generic and templated, and they appear to be getting better at detecting it.

Chasing high-DA backlinks

Backlinks still matter for SEO. They correlate weakly with AI citation. A link from a DA-90 site that AI models don’t treat as a category authority won’t earn you mentions. A single editorial mention on a trusted niche publication often will.

How to Measure GEO Performance

For per-platform walkthroughs of the measurement side, see the guides on how to check brand mentions in ChatGPT and how to track brand mentions in Perplexity. The guide to monitoring brand mentions in LLMs covers the cross-platform cadence that pairs with the GEO playbook above.

Traditional SEO metrics don’t capture AI visibility. You need a different dashboard.

| Metric | What It Measures | How to Track |
|---|---|---|
| AI Citation Rate | How often your brand is cited as a source in AI answers for target queries | Manual prompt testing or AI rank tracking tools, monthly |
| Brand Mention Rate | How often your brand name appears in AI-generated answers for category queries | Prompt set of 20–50 category questions, run monthly across platforms |
| Share of AI Voice | Your mention share vs. competitors in AI responses | Competitor comparison across the same prompt set |
| AI Referral Traffic | Sessions originating from ChatGPT, Perplexity, and AI Overviews | GA4 with custom channel grouping for AI sources |
| Sentiment in AI Mentions | Whether your brand is described positively, neutrally, or negatively | Manual review of full AI responses, monthly |
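For the referral-traffic row, the core of a custom channel grouping is a pattern that recognizes AI assistant sources. A minimal sketch, assuming the hostnames listed below are how those assistants show up as a session source in your analytics; the list is our assumption and will need updating as engines change. The pattern is anchored at both ends so it behaves the same under full-match and partial-match regex rules, which makes it easier to adapt into a GA4 channel condition on source. Clicks from Google AI Overviews generally arrive as ordinary Google organic traffic, so a source pattern like this can’t separate them.

```python
import re

# Assumed AI assistant referrer hostnames; extend as new engines appear.
AI_SOURCE_PATTERN = re.compile(
    r"^(www\.)?(chatgpt\.com|chat\.openai\.com|perplexity\.ai|"
    r"gemini\.google\.com|copilot\.microsoft\.com|claude\.ai)$"
)


def is_ai_referral(source: str) -> bool:
    """True if a session source looks like an AI assistant rather than a search engine."""
    return AI_SOURCE_PATTERN.match(source.strip().lower()) is not None


sessions = ["chatgpt.com", "google", "perplexity.ai", "www.perplexity.ai", "bing"]
ai_sessions = [s for s in sessions if is_ai_referral(s)]
print(ai_sessions)  # prints ['chatgpt.com', 'perplexity.ai', 'www.perplexity.ai']
```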

Run the measurement cycle monthly, not quarterly. Cited sources shift fast: a 40–60% month-over-month change in top-cited domains isn’t unusual in 2026, so waiting a quarter to react means up to three months of invisibility you can’t recover.

Measure generative engine optimization using five metrics: AI citation rate, brand mention rate, share of AI voice, AI referral traffic, and sentiment inside AI mentions. Track monthly across ChatGPT, Perplexity, Gemini, and Google AI Overviews.
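The first three metrics reduce to simple ratios over your prompt results. A minimal sketch, assuming each result records the brands an answer named (each listed once) and the domains it cited; `PromptResult` and `geo_metrics` are hypothetical names, citation rate is approximated as “your domain appears among the cited sources,” and share of voice is your mentions divided by all tracked-brand mentions.

```python
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    platform: str
    brands_named: list[str]  # each brand listed at most once per answer
    cited_domains: list[str] = field(default_factory=list)


def geo_metrics(results: list[PromptResult], brand: str, domain: str) -> dict[str, float]:
    """Compute mention rate, citation rate, and share of AI voice for one brand."""
    total = len(results)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "share_of_voice": 0.0}
    mentioned = sum(brand in r.brands_named for r in results)
    cited = sum(domain in r.cited_domains for r in results)
    all_mentions = sum(len(r.brands_named) for r in results)
    return {
        "mention_rate": mentioned / total,
        "citation_rate": cited / total,
        "share_of_voice": mentioned / all_mentions if all_mentions else 0.0,
    }


# Placeholder results for one prompt across four platforms.
results = [
    PromptResult("ChatGPT", ["YourBrand", "CompetitorA"], ["yourbrand.com"]),
    PromptResult("Perplexity", ["CompetitorA", "CompetitorB"], ["reddit.com"]),
    PromptResult("Gemini", ["YourBrand"], []),
    PromptResult("Google AI Overviews", ["CompetitorB"], ["competitorb.com"]),
]
print(geo_metrics(results, "YourBrand", "yourbrand.com"))
```

For the sample data, mention rate is 0.5, citation rate is 0.25, and share of voice is about 0.33 (two of six brand mentions).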

Platform Differences That Change Your Tactics

Treating all AI engines the same is the fastest way to waste budget. Each one selects sources differently.

ChatGPT

Weights training data heavily for established queries and uses live search for fresher topics. Favors Wikipedia, well-cited editorial content, and sources that appear repeatedly across its training corpus. Hard to influence quickly: entity clarity and long-term authority matter more than recent content.

Perplexity

Most responsive to content changes. Cites multiple sources per answer, often 5 to 10. Leans heavily on Reddit, YouTube, Stack Overflow, and industry publications. If you want fast GEO wins, Perplexity is usually the first surface to move.

Gemini

Integrates with Google’s knowledge graph. Entity consistency across the web matters more here than almost anywhere else. Schema markup and Wikipedia-style entity descriptions carry real weight.

Google AI Overviews

Often pulls from sources already ranking in the top 10, but the overlap isn’t complete. Pages with direct answers, FAQ schema, and list-friendly structure get extracted most often.

Claude

Currently cites fewer sources than Perplexity or ChatGPT. Favors authoritative, well-written longform content. Less volatile, but also harder to break into.

A Realistic Timeline for GEO Results

Teams consistently underestimate how long GEO takes. Set expectations now:

- Weeks 1–4: Entity audit, baseline measurement, quick content retrofits. Some Perplexity movement possible within 30 days for retrofitted pages.

- Months 2–3: Entity consistency fixes start reflecting in Gemini and AI Overviews. Third-party mention work begins producing early citations.

- Months 4–6: ChatGPT citations start appearing for brands that have built real entity authority and multi-source presence.

- Months 6–12: Compound visibility. The brands that stayed consistent start showing up in AI answers where they weren’t on the radar before.

Most teams quit around month 2 because they expect SEO-speed results. The ones who push to month 6 are the ones seeing consistent citations.

Frequently Asked Questions

Is GEO replacing SEO?

No. GEO and SEO overlap heavily, and strong SEO fundamentals still support GEO performance. What’s changing is that rank alone no longer captures visibility. Brands now need both: ranking for traditional search and being cited inside AI answers. Treat GEO as an additional discipline, not a replacement.

How long does generative engine optimization take to work?

Perplexity citations can start moving within 30 days of content retrofits. Gemini and Google AI Overviews typically reflect entity fixes in 2–3 months, and ChatGPT usually takes 4–6 months because it weights training data and long-term entity signals. Compound results show up between months 6 and 12.

Do backlinks still matter for GEO?

Less than they matter for SEO. A single editorial mention on a category-specific publication that AI engines already cite often outperforms 10 generic high-DA backlinks. Prioritize sources AI models treat as authoritative for your niche, not raw domain authority.

Which AI engine should I optimize for first?

Perplexity. It’s the most responsive to content and third-party signals, cites multiple sources per answer, and moves fastest. If your retrofit work is going to show up anywhere first, it’ll show up there.

Can small brands compete with established ones in AI search?

Yes, more than in traditional SEO. AI engines frequently cite smaller, niche-authoritative brands alongside household names, especially in Perplexity and ChatGPT. A focused GEO strategy lets a small brand punch well above its weight, because entity clarity and third-party relevance matter more than brand size.

What’s the biggest mistake in generative engine optimization?

Treating GEO as a content volume play. The brands winning in AI answers aren’t the ones publishing the most. They publish the most extractable content, keep the cleanest entity footprint, and appear consistently on the sources AI engines actually cite. Volume without those three inputs produces nothing.

Start With the Audit, Not the Content

Open ChatGPT right now. Ask for recommendations in your category using five different phrasings a real buyer would use. Write down every brand that gets named. If yours isn’t on the list, that’s your starting point, not a reason to publish more content. The brands showing up didn’t get there by writing more. They got there by being the clearest, most extractable, most cross-referenced entity in their space. See the guide on how to check if AI mentions your brand to turn the audit into a working baseline.